2.2. Stereo vision-based pedestrian detection

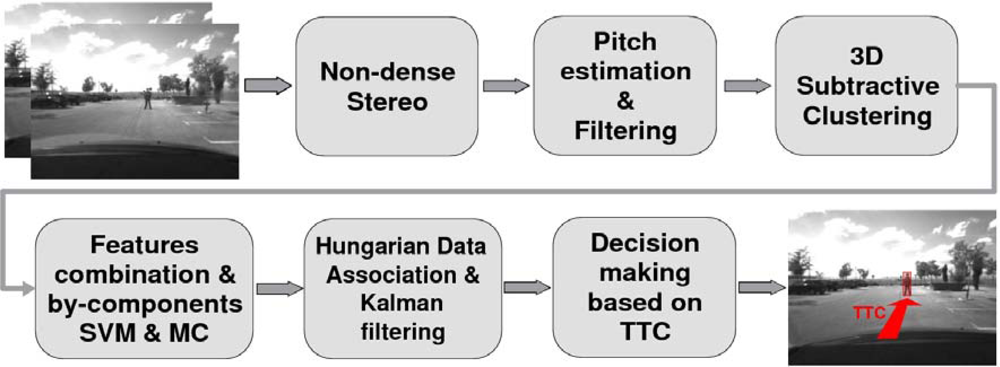

Pedestrian detection is carried out using the system described in [12,13] (Figure 2 depicts an overview of the pedestrian detection architecture). Non-dense 3D maps are computed using a robust correlation process that reduces the number of matching errors [14]. The camera pitch angle is dynamically estimated using the so-called virtual disparity map, which performs better than other representations such as the v-disparity map or the YOZ map [13]. Pitch compensation brings two main advantages. First, the accuracy of the time-to-collision estimation in car-to-pedestrian accidents is increased. Second, the separation between road points and obstacle points is improved, resulting in lower false-positive and false-negative detection rates [13].

3D maps are filtered assuming a planar road surface (an acceptable approximation in most cases): points below the actual road profile, and points above the road profile plus the maximum pedestrian height, are removed since they cannot correspond to obstacles (possible pedestrians). The resulting filtered 3D maps are used to obtain the regions of interest (ROIs).
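The height filter described above can be sketched as follows. This is a minimal illustration, not the system's implementation: the coordinate convention (Y measured upward from the road plane, in metres), the function name and the 2.0 m maximum pedestrian height are assumptions made for the example.

```python
from typing import List, Sequence, Tuple

# (X, Y, Z) in metres; Y is assumed to be the height above the road plane.
Point = Tuple[float, float, float]


def filter_obstacle_points(
    points: Sequence[Point],
    max_pedestrian_height_m: float = 2.0,  # hypothetical value
    road_height_m: float = 0.0,            # planar road assumption
) -> List[Point]:
    """Keep only points between the road surface and the road surface
    plus the maximum pedestrian height."""
    upper = road_height_m + max_pedestrian_height_m
    return [p for p in points if road_height_m <= p[1] <= upper]


if __name__ == "__main__":
    cloud = [(0.0, -0.2, 5.0), (0.0, 0.9, 5.0), (0.0, 2.5, 5.0)]
    print(filter_obstacle_points(cloud))
```

Points that lie exactly on the road plane are kept here; separating them from obstacles is left to the density-based ROI selection.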

Based on the idea that obstacles (including pedestrians) have a higher density of 3D points than the road surface, ROI selection can be carried out by determining those positions in 3D space where there is a high concentration of 3D points. A 3D subtractive clustering method is proposed to cope with the ROI selection stage using sparse data. The idea is to find high-density regions, which are roughly modelled by a single 3D Gaussian distribution, in the Euclidean space. The parameters of each Gaussian distribution are defined according to the minimum and maximum extent of pedestrians. Thus, whereas pedestrians are correctly selected, bigger obstacles such as vehicles or groups of pedestrians are usually split into two or more parts. To cope with the stereo accuracy, the method is adapted to the expected depth error [14].
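As an illustration of the density-peak idea, the following is a simplified sketch of generic subtractive clustering, not the adapted method of [14]. It uses a single isotropic radius instead of pedestrian-sized Gaussian parameters, ignores the depth-error adaptation, and uses one stopping ratio; the names `ra`, `rb` and `stop_ratio` and their default values are assumptions.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def _sq_dist(p: Point, q: Point) -> float:
    return sum((a - b) ** 2 for a, b in zip(p, q))


def subtractive_clustering(
    points: Sequence[Point],
    ra: float = 1.0,           # neighbourhood radius (hypothetical, metres)
    rb: float = 1.5,           # suppression radius (hypothetical, metres)
    stop_ratio: float = 0.15,  # stop when peak < stop_ratio * first peak
    max_clusters: int = 20,
) -> List[Point]:
    """Return cluster centres chosen among the input points."""
    if not points:
        return []
    alpha = 4.0 / (ra * ra)
    beta = 4.0 / (rb * rb)
    # Potential of each point: a Gaussian-weighted count of its neighbours.
    potential = [
        sum(math.exp(-alpha * _sq_dist(p, q)) for q in points) for p in points
    ]
    first_peak = max(potential)
    centres: List[Point] = []
    while len(centres) < max_clusters:
        k = max(range(len(points)), key=potential.__getitem__)
        peak = potential[k]
        if peak < stop_ratio * first_peak:
            break
        centre = points[k]
        centres.append(centre)
        # Subtract the influence of the new centre so that nearby points
        # are unlikely to be selected again.
        potential = [
            pot - peak * math.exp(-beta * _sq_dist(p, centre))
            for p, pot in zip(points, potential)
        ]
    return centres
```

Because each centre suppresses only its own neighbourhood, an obstacle wider than the suppression radius yields several centres, which matches the splitting behaviour described above for vehicles and groups.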

The 2D candidates are then obtained by projecting the 3D points of each resulting cluster and computing their bounding boxes. A Support Vector Machine (SVM) classifier is then applied using an optimal combination of feature-extraction methods and a by-components approach [12]. The RBF kernel provides better performance, although the linear kernel is the best solution from a computational point of view. Each candidate (possible pedestrian) is divided into six regions (head, left and right arms, left and right legs and a region between the legs). Each region is learnt independently using different features. The optimal combination is obtained using texture features (Texture Unit Number) for the head and the region between the legs, histograms of grey level differences for the arms, and Histograms of Oriented Gradients (HOG) for the legs [12]. The final classifier is trained with 67,650 samples (22,550 pedestrians and 45,100 non-pedestrians, including mirrored images).

Nonetheless, the 2D bounding box corresponding to a 3D candidate might not perfectly match the actual pedestrian appearance in the image plane. Multiple candidates are therefore generated around each original candidate. This so-called multi-candidate (MC) approach has been shown to increase the detection rate, the accuracy of depth measurements and the detection range [12]. The resulting pedestrians are tracked by means of a Kalman filter, and the data association problem is solved using the Hungarian method.

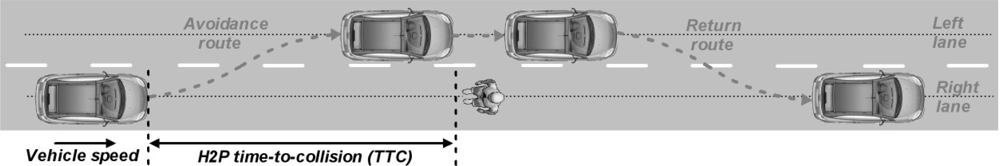

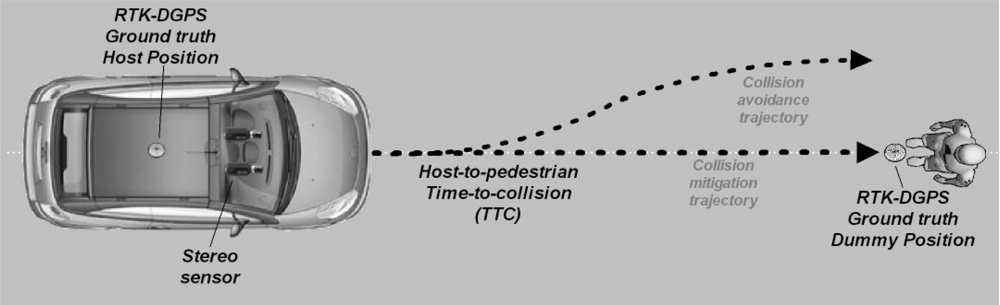

The last block of Figure 2 is based on the computation of the time-to-collision (TTC) between the host vehicle and the pedestrians ahead and is defined according to the application requirements. For example, in driving alert applications this stage triggers alarms to the driver depending on the host-to-pedestrian (H2P) TTC. In pedestrian collision mitigation applications [13] an activation signal is sent to a pedestrian protection airbag and/or an active hood system. For pedestrian collision avoidance applications this stage triggers the corresponding signals to the brake pedal and/or the steering wheel controllers.
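This section does not define how the TTC is computed. A minimal sketch, assuming a constant closing speed between the host vehicle and the pedestrian, could look as follows; the function names and the threshold passed to `should_trigger` are illustrative and not taken from the system.

```python
import math


def time_to_collision(distance_m: float, closing_speed_mps: float) -> float:
    """TTC in seconds under a constant closing speed.

    Returns infinity when the host vehicle is not approaching the pedestrian.
    """
    if closing_speed_mps <= 0.0:
        return math.inf
    return distance_m / closing_speed_mps


def should_trigger(ttc_s: float, threshold_s: float) -> bool:
    """Application-specific decision: act when the TTC falls below a
    threshold chosen for the application (alert, mitigation or avoidance)."""
    return ttc_s < threshold_s


if __name__ == "__main__":
    ttc = time_to_collision(distance_m=20.0, closing_speed_mps=10.0)
    print(ttc, should_trigger(ttc, threshold_s=2.5))  # hypothetical threshold
```

Since the TTC is proportional to the estimated distance, a relative error in the H2P distance carries over directly to the TTC under this assumption.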

The pedestrian detection system runs in real time (25 Hz) with 320 × 240 images and a baseline of approximately tx = 300 mm. The stereo vision-based pedestrian detection system has been tested in real collision-mitigation experiments by active hood triggering and in collision-avoidance tests by braking or decelerating [13]. In this paper, its performance is analyzed in real experiments in the context of pedestrian collision avoidance by steering, including analysis of the depth estimation errors. Note that although depth estimation uncertainties affect almost all blocks of Figure 2 (excluding block number 4, feature selection and classification), we will focus on the last stage by analyzing host-to-pedestrian distance estimation errors.

2.4. Stereo quantization error

There is a significant amount of published research on characterization of range estimation errors based on system parameters for stereo vision [7,8]. Here, our approach for computing the quantization error covariance for each point and the corresponding host-to-pedestrian distance estimation error is briefly described.

Given a calibrated rig of cameras and a correspondence between two points, one on the left camera (ul, vl) and another one on the right (ur, vr), the 3D position of the point in the world coordinate system is given by [11]:

where A is the matrix containing the equations for the 3D to 2D transformation for each one of the cameras and b is the independent term of the same equations. Matrices A and b are written as a function of the cameras' intrinsic parameters:

The intrinsic parameters of each camera [ML MR] are estimated using an off-line calibration process. The intrinsic parameters describe the 3D to 2D transformation for each one of the cameras according to the following equation:

To compute how the quantization errors in T = (ul vl ur vr) affect the 3D position, the partial derivatives of Equation (1) are computed:

Applying the product rule for matrices:

and writing C as:

the expression for how the inaccuracies in the pixel position affect the 3D reconstruction is obtained:

Finally, substituting the values of the intrinsic matrices from Equation (4):

Assuming T is a normally distributed random variable with mean 0 and variance:

where the four entries, one for each of ul, vl, ur and vr, are the uncertainties in pixels in the measurement of T, the final expression for the quantization error covariance is (note that the errors in the image coordinates are assumed to be independent, so the covariance matrix is diagonal):

As each pedestrian is roughly modelled by a high concentration of 3D points, the final host-to-pedestrian distance estimation error is defined as the mean of the Δzz values of all 3D points that lie within the pedestrians detected by the subtractive clustering algorithm.
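The averaging step can be illustrated with a simplified sketch. It does not implement the general-case covariance described in this section. Instead it assumes the parallel-axis geometry, where first-order propagation of a disparity uncertainty σ through Z = fx·tx/du gives a depth standard deviation of Z²·σ/(fx·tx). The function names, the focal length in pixels and the 1 px disparity uncertainty are assumptions.

```python
from statistics import fmean
from typing import Sequence


def depth_std_parallel(
    z_m: float, fx_px: float, baseline_m: float, sigma_du_px: float = 1.0
) -> float:
    """First-order depth standard deviation (metres) for a parallel stereo
    pair: |dZ/d(du)| * sigma_du, with Z = fx * tx / du."""
    return (z_m * z_m) / (fx_px * baseline_m) * sigma_du_px


def pedestrian_distance_error(
    cluster_depths_m: Sequence[float],
    fx_px: float,
    baseline_m: float,
    sigma_du_px: float = 1.0,
) -> float:
    """Mean per-point depth error over the 3D points of one detected cluster."""
    return fmean(
        [depth_std_parallel(z, fx_px, baseline_m, sigma_du_px)
         for z in cluster_depths_m]
    )


if __name__ == "__main__":
    # fx_px = 400 is a hypothetical focal length in pixels.
    print(pedestrian_distance_error([9.8, 10.0, 10.2], fx_px=400.0, baseline_m=0.3))
```

The sketch returns a standard deviation in metres; if a variance is needed, the per-point values would be squared before averaging.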

2.5. System parameters

Designing a stereo imaging system requires knowing how the various system parameters affect the depth estimation error, especially for automotive applications, given their safety implications. The design involves choosing three main parameters: the focal length of the cameras, the distance between the cameras (baseline) and the size of the images. The most important application requirements are the depth estimation error, the runtime and the distance of the frontal blind zone, although the range estimation error is usually the deciding factor, and the system parameters are chosen to meet an acceptable range error. A considerable number of statistical depth error analyses have been carried out on this topic [7,8], deriving quantitative expressions. Although these expressions describe the relationship between the range error and the system parameters, it is still difficult to obtain a fast method to define those parameters, especially when many parameters affect the depth estimation error.

In order to facilitate the choice of the system parameters we propose the use of pre-computed graphs covering different settings. Whereas the H2P distance estimation error is computed assuming the general stereo case (non-parallel optical axes), the graphs are computed using the ideal case. From the geometry of a parallel stereo pair (ideal case), i.e., two cameras with parallel optical axes, the same intrinsic parameters (fx, fy, u0, v0) and separated by a baseline tx, the depth value of a 3D point P = (X, Y, Z)^T can be defined as:

where f is the focal length of the cameras, xr and xl are the x-projections in metric coordinates on the right and left image planes respectively, dx is the length of a pixel along the x-axis (fx = f / dx), ur and ul are the x-projections in pixel coordinates on the right and left image planes respectively, and du represents the disparity value along the x-axis of the image in pixels. Given the baseline tx, the focal length in pixels along the x-axis fx and the image size (W, H), the stereo error can be computed from the maximum disparity duMAX = W − 1 (minimum depth) to the minimum disparity duMIN = 1 in steps of 1 pixel as follows:

where the maximum theoretical range is given by

ZMAX =

fxtx.

Equation (14) describes the relationship between the depth accuracy and the product

fxtx, as well as the image size (the higher the image size (

W,

H) the higher the disparity range

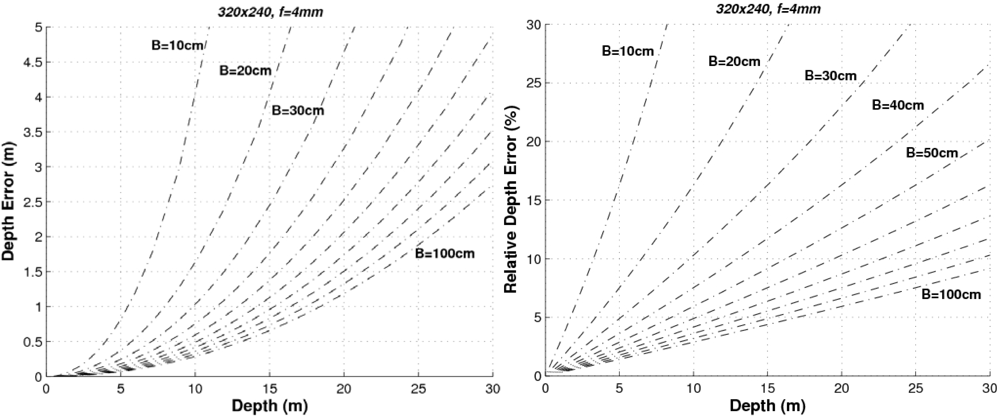

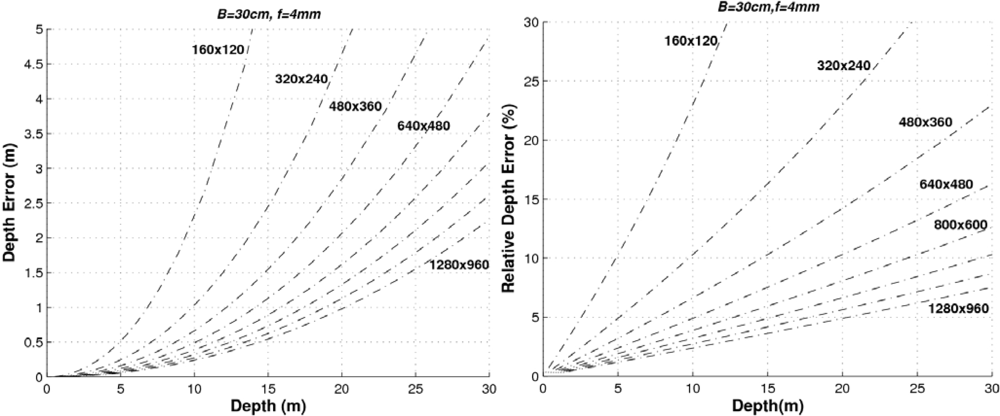

duMAX). In order to provide a graphical representation of the range error we have to fix some parameters. For example,

Figure 4 depicts the range error and the relative range error (Δ

Z /

Z) for different baselines using images of 320 × 240 pixels and a focal length of 4 mm.
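The kind of curve shown in these graphs can be tabulated with a short sketch. It assumes the standard parallel-stereo relation Z = fx·tx/du and takes the range error at each disparity to be the depth gap to the next lower disparity (the next farther depth). That error definition is an assumption about the form of Equation (14), and the focal length in pixels used in the example is hypothetical. The loop stops at du = 2 because du = 1 (ZMAX) has no farther neighbour.

```python
from typing import List, Tuple

# (disparity in px, depth in m, range error in m, relative range error)
Row = Tuple[int, float, float, float]


def range_error_table(fx_px: float, baseline_m: float, image_width_px: int) -> List[Row]:
    """Tabulate depth and quantization range error for every integer
    disparity from W - 1 down to 2, in steps of 1 pixel."""
    z_max = fx_px * baseline_m  # depth at a disparity of 1 px
    rows: List[Row] = []
    for du in range(image_width_px - 1, 1, -1):
        z = z_max / du
        dz = z_max / (du - 1) - z  # gap to the next farther depth
        rows.append((du, z, dz, dz / z))
    return rows


if __name__ == "__main__":
    # fx_px = 400 is a hypothetical value; tx = 0.3 m and W = 320 follow the text.
    for du, z, dz, rel in range_error_table(400.0, 0.3, 320)[-10:]:
        print(f"du={du:3d}  Z={z:6.2f} m  dZ={dz:6.2f} m  dZ/Z={100 * rel:5.1f} %")
```

Under this definition the relative error is 1/(du − 1), so it depends on depth only through the product fx·tx, in line with the observation above.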

As can be seen, the higher the baseline the lower the error. Let’s consider that our system (with 320 × 240 images and

f = 4 mm)) requires a relative error Δ

Z < 10% up to distances of 20 m. Then the baseline should be greater than 60 cm. If the relative error has to be Δ

Z < 5% up to distances of 5 m, then the minimum baseline would be 30 cm (and so on). If the baseline is defined to

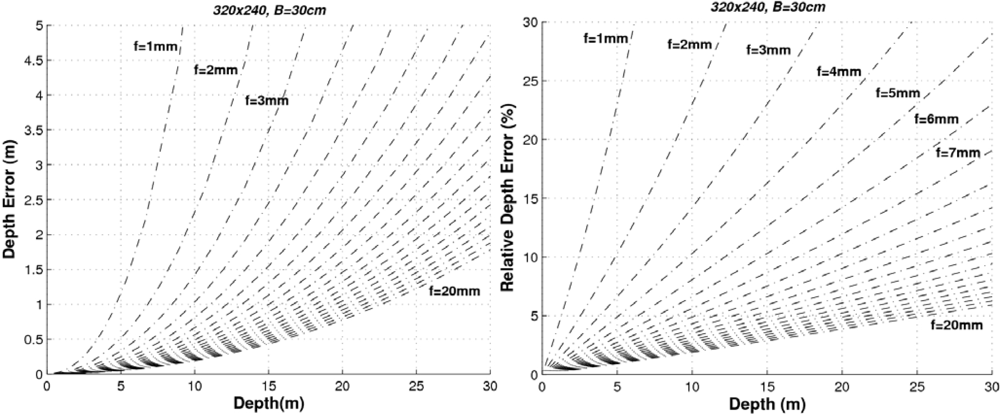

tx = 300 mm and the images have a size of 320 × 240 pixels, the higher the focal length the lower the error as can be seen in

Figure 5.
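The same design question can also be answered analytically, as a complement to reading the graphs. The sketch below rests on two assumptions: the range error at depth Z is the depth gap to the next lower disparity, and the disparity is treated as a continuous quantity. With Z = fx·tx/du this gives ΔZ/Z = Z / (fx·tx − Z), which can be solved for the baseline. The function name and the focal length in pixels are hypothetical.

```python
def min_baseline_m(max_range_m: float, max_relative_error: float, fx_px: float) -> float:
    """Smallest baseline (metres) for which the relative range error stays
    below max_relative_error up to max_range_m.

    Derived from dZ/Z = Z / (fx * tx - Z) <= e, i.e.
    tx >= Z * (1 + e) / (e * fx).
    """
    if max_range_m <= 0 or max_relative_error <= 0 or fx_px <= 0:
        raise ValueError("all arguments must be positive")
    return max_range_m * (1.0 + max_relative_error) / (max_relative_error * fx_px)


if __name__ == "__main__":
    # fx_px = 400 is a hypothetical focal length in pixels.
    print(min_baseline_m(max_range_m=20.0, max_relative_error=0.10, fx_px=400.0))
    print(min_baseline_m(max_range_m=5.0, max_relative_error=0.05, fx_px=400.0))
```

The required baseline grows linearly with the target range and roughly inversely with the tolerated relative error, so halving the tolerance at the same range about doubles the baseline.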

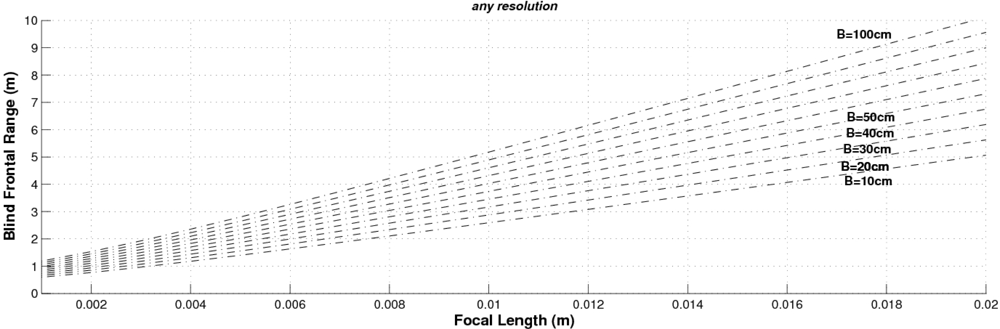

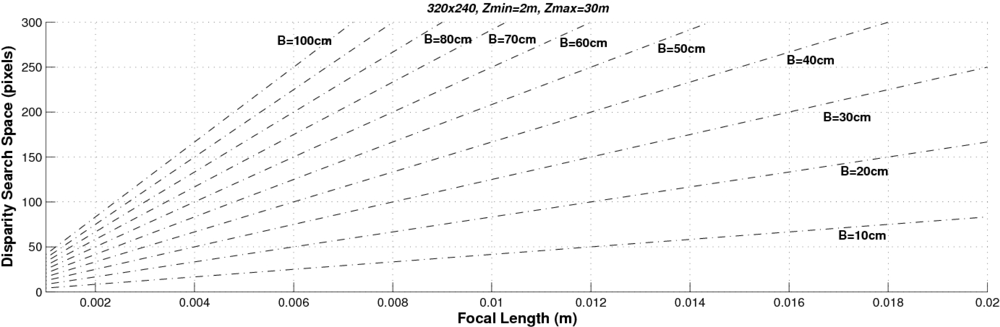

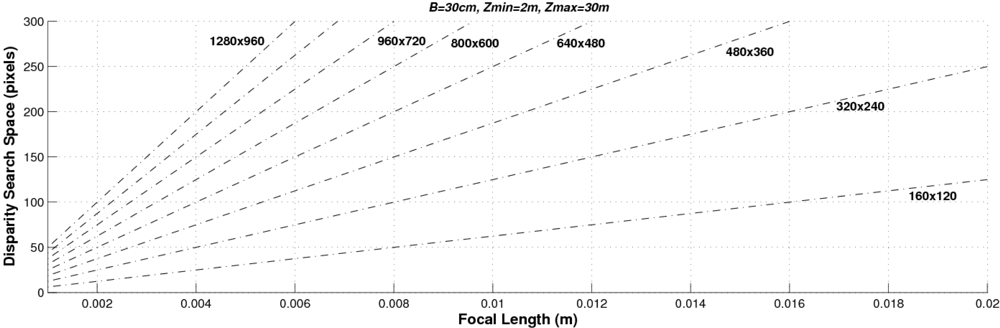

Finally, Figure 6 shows both the absolute and the relative range errors for different image sizes corresponding to a sensor with f = 4 mm and tx = 300 mm. As can be observed, the larger the image size the lower the error. These graphs can be used to determine the system parameters according to the depth error requirements. Accordingly, we can conclude that the larger the baseline, the focal length and the size of the images, the lower the depth error. However, other parameters have to be taken into account when designing a stereo sensor: the computational load (which is defined by the range of the disparity search space) and the size of the frontal blind zone. As we increase the baseline and the focal length, both the size of the frontal blind zone (see Figure 7) and the range of the disparity search space (see Figure 8) also increase. In addition, the larger the images, the larger the disparity search space (the computational load), as can be seen in Figure 9.

The proposed stereo vision-based pedestrian detection system uses 320 × 240 images, a baseline of tx = 300 mm and a focal length of f = 4 mm. This sensor setup is mainly a trade-off between accurate range estimation, low computational load and a small frontal blind area. We can derive from Figures 4–6 that this sensor setup implies an almost linear relationship (with a slope approximately equal to 1) between the range and the relative range error up to distances of 15 m. As can be observed in Figure 7, the size of the frontal blind area is mainly defined by the focal length of the cameras. In our case, a focal length of f = 4 mm yields a frontal blind area smaller than 1.5 m. Finally, our sensor setup implies a disparity search space of 50 px for a range from 2 m to 30 m, as depicted in Figures 8 and 9, which allows real-time stereo computation. Higher resolutions, e.g., 640 × 480, would certainly produce lower relative depth errors (almost half the error, as can be observed in Figure 6). However, the disparity search space would increase by a factor of 2 (Figure 9). The same applies to the focal length. A focal length of f = 8 mm would reduce the relative depth error by a factor of 2 up to distances of 15 m (see Figure 5), but the disparity search space would also increase by a factor of 2 (see Figure 8).
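The factor-of-2 scaling follows directly from the parallel-stereo relation du = fx·tx/Z: the width of the disparity search space between two depths is proportional to fx·tx. A minimal sketch is given below; the function name and the focal length in pixels are hypothetical, and doubling either the focal length or the image resolution on the same sensor is modelled as doubling fx.

```python
def disparity_search_space_px(
    fx_px: float, baseline_m: float, z_min_m: float, z_max_m: float
) -> float:
    """Width of the disparity interval (pixels) needed to cover depths
    between z_min_m and z_max_m with a parallel stereo pair."""
    if not 0.0 < z_min_m < z_max_m:
        raise ValueError("expected 0 < z_min_m < z_max_m")
    return fx_px * baseline_m * (1.0 / z_min_m - 1.0 / z_max_m)


if __name__ == "__main__":
    base = disparity_search_space_px(400.0, 0.3, 2.0, 30.0)     # hypothetical fx
    doubled = disparity_search_space_px(800.0, 0.3, 2.0, 30.0)  # fx doubled
    print(base, doubled, doubled / base)
```

The search space is dominated by the near limit of the range, so raising the minimum depth of interest is the cheapest way to reduce the computational load for a fixed fx·tx.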