Risks of Deep Reinforcement Learning Applied to Fall Prevention Assist by Autonomous Mobile Robots in the Hospital

Abstract

1. Introduction

1.1. Social Background

1.2. Related Work

1.3. Our Objective

2. Materials and Methods

2.1. Fall Risk and Phases of a Patient’s Life Cycle in the Hospital

2.1.1. Upon Arrival

- Getting out of and transferring from a car

- Bringing items (for example, a cane, wheelchair, cart, bag, umbrella, pet, or slippers)

- Decline in physical, mental, and emotional health or ability associated with age, polypharmacy, level of intoxication, visual and hearing impairment, and injury or physical condition

- Slipping on wet floors at entrances and exits, or tripping over mats

- Escalator environments, such as the steps, the escalator's speed, and getting on and off

- Handrail positioning during movement

- Geometric floor patterns, color transitions, and changes in lighting

- Walking on slopes

2.1.2. Waiting for Examination and Consultation

- Leaning against backless or unsecured chairs

- Walking and other movements

- Rising or standing up

2.1.3. Examination, Surgery, Treatment

- Effects of changes in physical condition

- Effects of sedation and anesthesia after surgery

- Rising or standing up

2.1.4. Rehabilitation

- Inadequate support equipment

- Inappropriate care (e.g., guiding a patient by holding both hands, assigning two caregivers to a one-person task or one caregiver to a two-person task, or leaving a patient alone)

2.1.5. In the Hospital Room

- Transfer between the bed, wheelchair, or stretcher

- Performing activities without assistance, such as going to the toilet, taking a walk, or going to other areas of the hospital

- Leaning on an IV drip stand

- Forgetting to secure equipment (such as tables)

- Environmental changes caused by differences between buildings and departments

- Changes in mental state, such as impatience, anxiety, or other psychological conditions

- Personality traits, such as overconfidence and reluctance to press the nurse call button

2.1.6. At Discharge

- Lingering effects of sedation and anesthesia

2.2. Consideration of Support Targets for Autonomous Mobile Robots

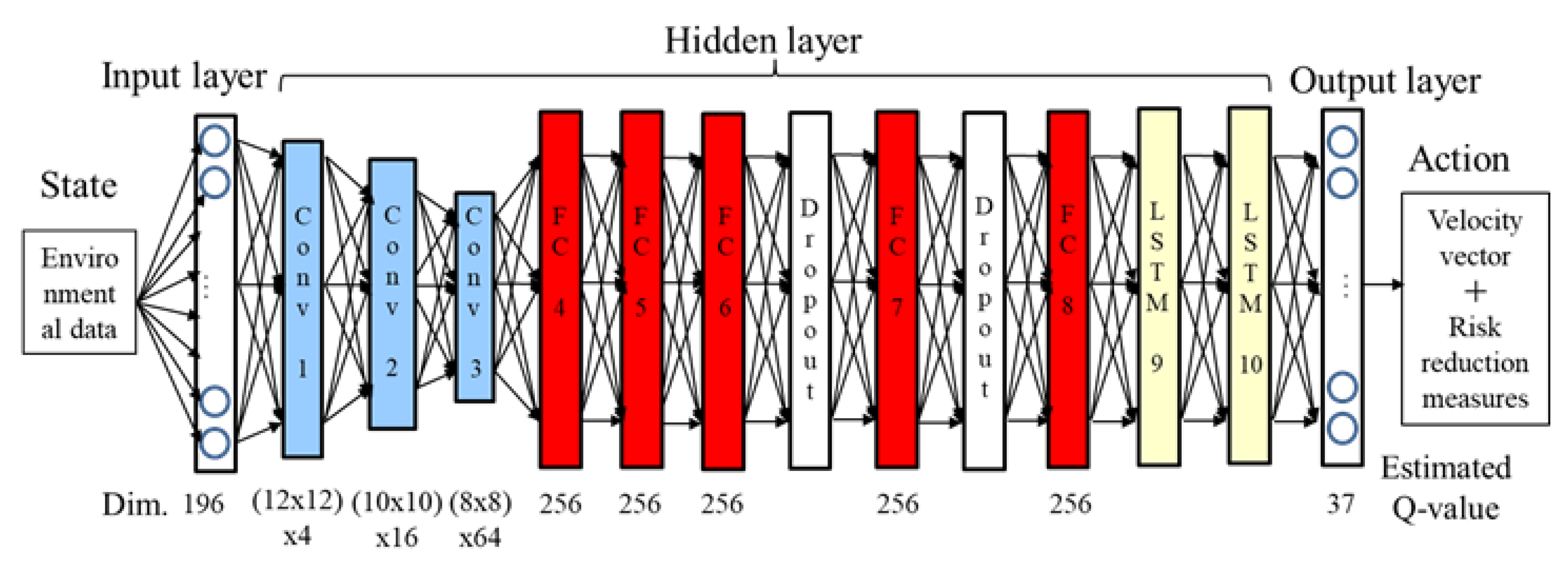

2.3. Proposal of an Assist Method Using Deep Reinforcement Learning

- The completeness of risk extraction depends on the experience and capability of the medical staff

- Risk assessment procedures are sometimes complex, and the person-hours they require grow with the number of patients. However, risk assessment and risk reduction must be carried out immediately.

3. Results

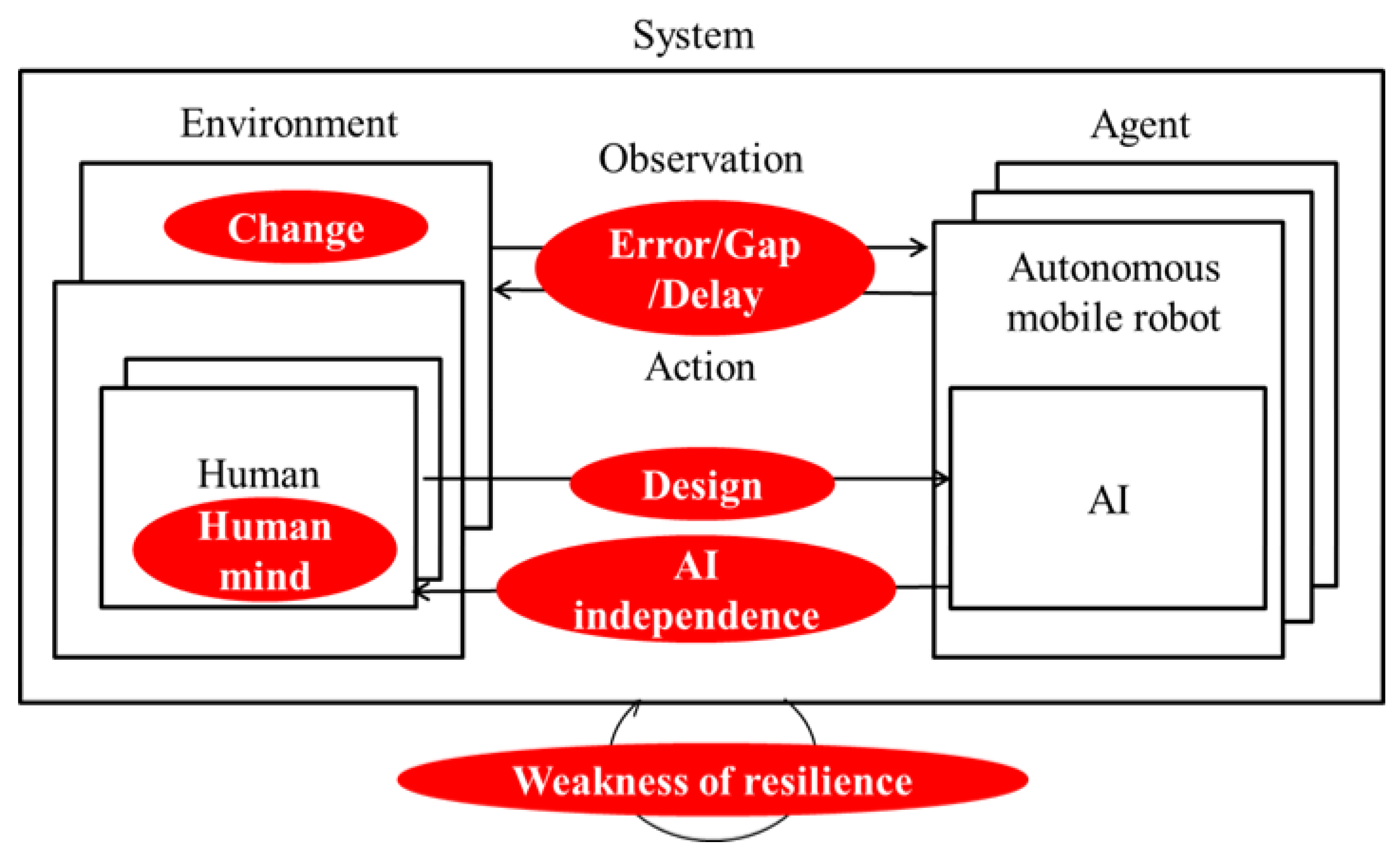

3.1. Risk from Changes

3.2. Risk from Errors, Gaps, and Delay

3.3. Risk from Design

3.4. Risk from AI Independence

3.5. Risk from Human Mind

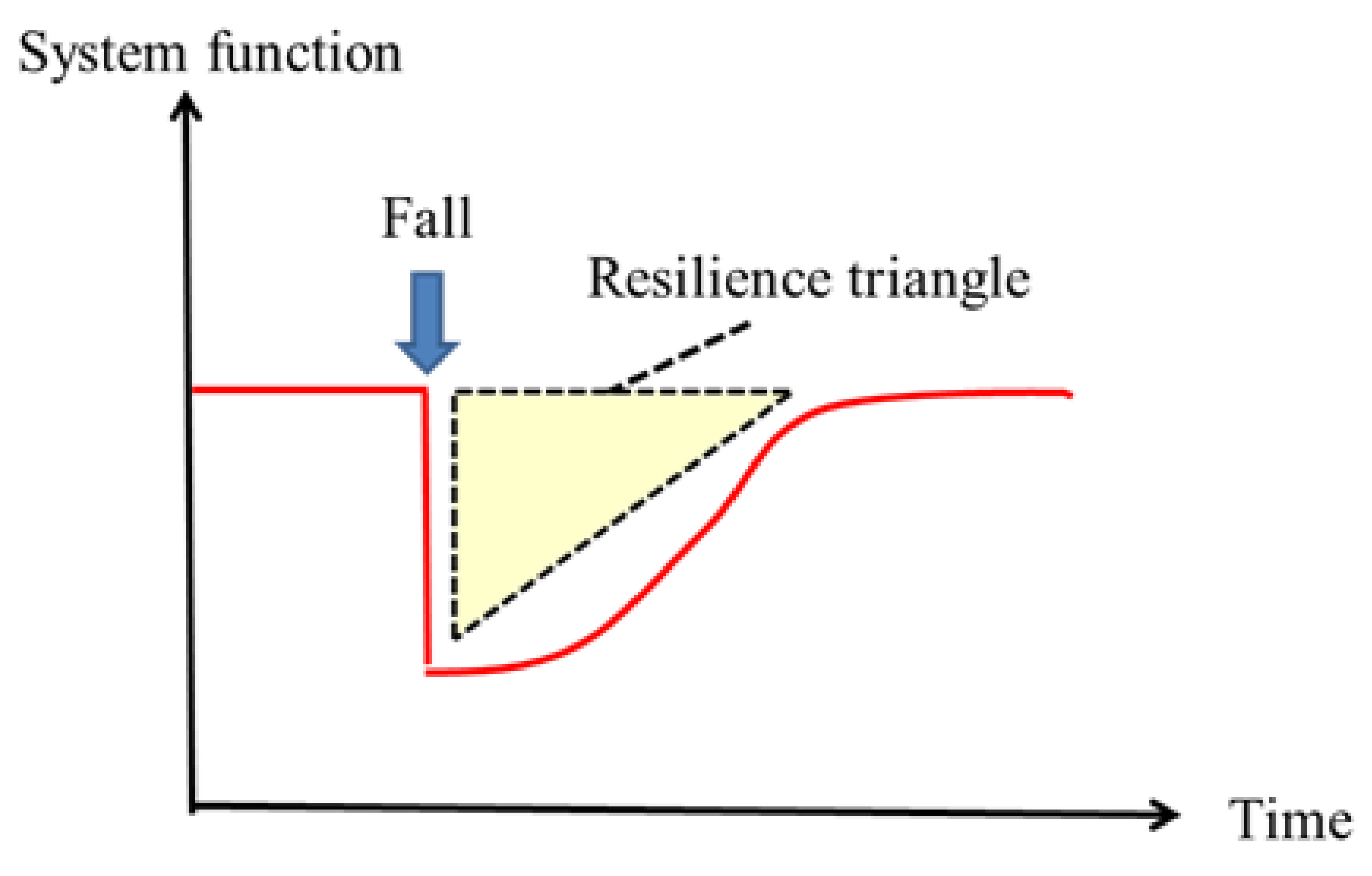

3.6. Risk from Weakness of Resilience

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Assessment | Yes | No | Automation (outpatient) | Automation (inpatient) |
|---|---|---|---|---|
| **Past history** | | | | |
| History of fall | 1 | 0 | ○ | |
| History of syncope | 1 | 0 | ○ | |
| History of convulsions | 1 | 0 | ○ | |
| **Impairment** | | | | |
| Visual impairment | 1 | 0 | ○ | |
| Hearing impairment | 1 | 0 | ○ | |
| Vertigo | 1 | 0 | ○ | ○ |
| **Mobility** | | | | |
| Wheelchair | 1 | 0 | ○ | ○ |
| Cane | 1 | 0 | ○ | ○ |
| Walker | 1 | 0 | ○ | ○ |
| Need assistance | 1 | 0 | ○ | ○ |
| **Cognition / disturbance of consciousness** | | | | |
| Restlessness | 1 | 0 | ○ | ○ |
| Memory disturbance | 1 | 0 | ○ | |
| Decreased judgment | 1 | 0 | ○ | |
| **Dysuria** | | | | |
| Incontinence | 1 | 0 | ○ | |
| Frequent urination | 1 | 0 | ○ | |
| Need helper | 1 | 0 | ○ | |
| Go to bathroom often at night | 1 | 0 | ○ | |
| Difficult to reach the toilet | 1 | 0 | ○ | |
| **Drug use** | | | | |
| Sleeping pills | 1 | 0 | ○ | |
| Psychotropic drugs | 1 | 0 | ○ | |
| Morphine | 1 | 0 | ○ | |
| Painkiller | 1 | 0 | ○ | |
| Anti-Parkinson drug | 1 | 0 | ○ | |
| Antihypertensive medication | 1 | 0 | ○ | |
| Anticancer agents | 1 | 0 | ○ | |
| Laxatives | 1 | 0 | ○ | |
| **Dysfunction** | | | | |
| Muscle weakness | 1 | 0 | ○ | |
| Paralysis, numbness | 1 | 0 | ○ | ○ |
| Dizziness | 1 | 0 | ○ | ○ |
| Bone malformation | 1 | 0 | ○ | |
| Bone rigidity | 1 | 0 | ○ | |
| Brachybasia | 1 | 0 | ○ | |
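The assessment sheet scores each item as 1 (Yes) or 0 (No) and marks with ○ the items considered amenable to automation. The following is a minimal sketch of how such a sheet could be tallied in software. It assumes that the total score is the plain sum of item scores and that the two ○ columns mean automation for outpatients and for inpatients respectively. The section does not state how scores are combined or which cut-off defines a high-risk patient, so no threshold is applied. The names `fall_risk_score`, `split_by_automation`, and `AUTO_INPATIENT` are hypothetical.

```python
from __future__ import annotations

# Assessment items grouped by category, transcribed from the table above.
# Each item scores 1 (Yes) or 0 (No).
CATEGORIES: dict[str, list[str]] = {
    "Past history": ["History of fall", "History of syncope", "History of convulsions"],
    "Impairment": ["Visual impairment", "Hearing impairment", "Vertigo"],
    "Mobility": ["Wheelchair", "Cane", "Walker", "Need assistance"],
    "Cognition / disturbance of consciousness": [
        "Restlessness", "Memory disturbance", "Decreased judgment"],
    "Dysuria": ["Incontinence", "Frequent urination", "Need helper",
                "Go to bathroom often at night", "Difficult to reach the toilet"],
    "Drug use": ["Sleeping pills", "Psychotropic drugs", "Morphine", "Painkiller",
                 "Anti-Parkinson drug", "Antihypertensive medication",
                 "Anticancer agents", "Laxatives"],
    "Dysfunction": ["Muscle weakness", "Paralysis, numbness", "Dizziness",
                    "Bone malformation", "Bone rigidity", "Brachybasia"],
}
ITEMS: list[str] = [item for group in CATEGORIES.values() for item in group]

# Items marked "○" in the inpatient automation column. Every item is marked in
# the outpatient column (assumed reading of the two ○ columns).
AUTO_INPATIENT = frozenset({
    "Vertigo", "Wheelchair", "Cane", "Walker", "Need assistance",
    "Restlessness", "Paralysis, numbness", "Dizziness",
})


def fall_risk_score(answers: dict[str, bool]) -> int:
    """Sum the item scores (Yes = 1, No = 0); unanswered items count as 0."""
    unknown = set(answers) - set(ITEMS)
    if unknown:
        raise ValueError(f"unknown assessment items: {sorted(unknown)}")
    return sum(1 for item in ITEMS if answers.get(item, False))


def split_by_automation(setting: str) -> tuple[list[str], list[str]]:
    """Split items into (automatable, manual) for 'outpatient' or 'inpatient'."""
    if setting == "outpatient":
        automatable = frozenset(ITEMS)
    elif setting == "inpatient":
        automatable = AUTO_INPATIENT
    else:
        raise ValueError("setting must be 'outpatient' or 'inpatient'")
    auto = [item for item in ITEMS if item in automatable]
    manual = [item for item in ITEMS if item not in automatable]
    return auto, manual
```

For example, `fall_risk_score({"History of fall": True, "Cane": True})` returns 2. Under the assumed reading of the columns, `split_by_automation("inpatient")` shows which 24 of the 32 items would still require manual input by staff.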

| Definitions | Conditions |
|---|---|
| Experiment environment | Deep Q-learning simulator |
| Algorithm | ε-greedy strategy (ε = 0.9 → 0.01) |
| Reward discount rate | γ = 0.9 |
| Learning style | Scene learning |
| AI platform | Chainer |
| Programming language | Python |
| State | 196 dimensions |
| State: camera/laser range finder (LRF) data | Position, pose, time |
| State: patient's data | Fall history, medical condition, medication, aid |
| State: management data | Operational status of nurses and robots |
| Action | 37 actions |
| Action: movement at fixed speed in 32 directions | 32 |
| Action: stop | 1 |
| Action: risk reduction measures | 4 (remove, avoid, transit, accept) |
| Reward | Each element normalized |
| Reward: achieving the purpose | Positive/negative |
| Reward: intervention effect | Positive/negative |
| Reward: transfer efficiency | Positive/negative |
| Reward: safety | Positive/negative |
| Processing interval | 100 ms |
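The conditions above fix the discount rate (γ = 0.9), the ε range for ε-greedy exploration (0.9 → 0.01), and a 37-way discrete action space (32 fixed-speed headings, stop, and four risk reduction measures). The following minimal sketch shows those pieces in plain Python. Several of its choices are not stated in the table and are assumptions:

- ε decays linearly over a hypothetical 10,000 steps.
- Action indices are ordered as headings 0 to 31, then stop (32), then the four measures.
- Headings are spaced evenly at 360°/32 = 11.25°.
- Reward elements are already scaled to [-1, 1] and are averaged with equal weights.

This is not the authors' Chainer implementation. It covers only action selection and the one-step Q-learning target r + γ max_a' Q(s', a') that a deep Q-network is trained toward.

```python
import random

GAMMA = 0.9                      # reward discount rate from the table
EPS_START, EPS_END = 0.9, 0.01   # ε range from the table
EPS_DECAY_STEPS = 10_000         # hypothetical: decay length not given in the source

N_DIRECTIONS = 32                # fixed-speed movement in 32 directions
STOP = N_DIRECTIONS              # hypothetical index of the stop action (32)
MEASURES = ("remove", "avoid", "transit", "accept")  # risk reduction measures
N_ACTIONS = N_DIRECTIONS + 1 + len(MEASURES)         # 37 actions in total


def decode_action(index):
    """Map a discrete action index to (kind, argument) under the assumed ordering."""
    if 0 <= index < N_DIRECTIONS:
        return ("move", index * 360.0 / N_DIRECTIONS)  # heading in degrees
    if index == STOP:
        return ("stop", None)
    if STOP < index < N_ACTIONS:
        return ("measure", MEASURES[index - STOP - 1])
    raise ValueError(f"action index out of range: {index}")


def epsilon_at(step):
    """Linearly anneal ε from EPS_START to EPS_END, then hold it at EPS_END."""
    frac = min(max(step, 0) / EPS_DECAY_STEPS, 1.0)
    return EPS_START + frac * (EPS_END - EPS_START)


def select_action(q_values, epsilon, rng):
    """ε-greedy: random action with probability ε, otherwise the argmax action."""
    if len(q_values) != N_ACTIONS:
        raise ValueError("expected one Q-value per action")
    if rng.random() < epsilon:
        return rng.randrange(N_ACTIONS)
    return max(range(N_ACTIONS), key=q_values.__getitem__)


def td_target(reward, next_q_values, done, gamma=GAMMA):
    """One-step Q-learning target: r if terminal, else r + γ · max_a' Q(s', a')."""
    return reward if done else reward + gamma * max(next_q_values)


def combine_reward(purpose, intervention, efficiency, safety):
    """Hypothetical aggregation: clip each element to [-1, 1], then take the mean."""
    parts = (purpose, intervention, efficiency, safety)
    return sum(min(max(p, -1.0), 1.0) for p in parts) / len(parts)
```

In a training loop, `select_action(q, epsilon_at(step), random.Random(0))` would pick the action at each 100 ms step. `td_target` gives the value toward which the network's output for the taken action is regressed. Averaging the normalized elements keeps any single reward term from dominating, although a weighted sum is an equally plausible reading of "each element normalized".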

| Risk classification (characteristic of AI) | Risk factors: rule-based type | Risk factors: DQN-based type | Risk cases (severity of harm) | Construction policy on risk reduction measures | Proposed risk reduction measures |
|---|---|---|---|---|---|
| Change | Completeness of branching by threshold | Exceptions to the inductive method | Leads to inappropriate behavior (fracture from falling) | Updating the DNN model and detecting changes | Automatic real-time risk assessment and risk reduction (learning international safety standards) |
| Error/gap/delay | Validity and optimality of thresholds | Difference between virtual and real environments | Fracture caused by colliding or falling | Reducing spatiotemporal gaps or learning that includes deviations | Data augmentation, ensembles, learning in spatiotemporally robust simulation |
| Design | Completeness, validity, optimality, security | Human-defined parameters, no biological restrictions | Fracture caused by colliding, slipping, or falling during transfer or movement | External monitoring and emergency stop function | Safety verification |
| AI independence | Designer's intention | Creativity of AI | Attacking people without human intervention | Interacting with people | Mechanism for stopping and braking through human interaction |
| Human mind | Human malice, carelessness, misuse | Human malice, carelessness, misuse | Injuries to patients through use for unintended purposes | Awareness-raising, ethical guidelines, legislation | Safety standards related to AI robotics, legal constraints |
| Weakness of resilience | Unexpected accident | Post-accident situations not assumed | Extended hospitalization period | Clarification of restoration methods | Simulating recovery methods and procedures; positive reward for shortening recovery time |
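The design and AI-independence rows call for external monitoring with an emergency stop function and for a stop mechanism that responds to human interaction. The following sketch, under stated assumptions, shows one way to realize this. A rule-based monitor sits outside the learned policy and can only override its output with the stop action. It latches a stop in three cases:

- a person requests a stop;
- the laser range finder (LRF) reports an obstacle closer than a clearance threshold;
- the scan is missing or invalid (fail-safe).

The stop is released only by an explicit reset. The class name `EmergencyStopMonitor`, the 0.5 m default clearance, and the stop index 32 are hypothetical and are not taken from the paper.

```python
import math
from typing import Optional, Sequence

STOP_ACTION = 32  # hypothetical index of the "stop" action in the 37-action space


class EmergencyStopMonitor:
    """Rule-based external monitor that can only replace the policy's action with STOP."""

    def __init__(self, min_clearance_m: float = 0.5) -> None:
        self.min_clearance_m = min_clearance_m  # hypothetical safety distance
        self.latched_reason: Optional[str] = None

    def _hazard(self, lrf_ranges_m: Sequence[float], human_stop: bool) -> Optional[str]:
        """Return a reason string if a stop condition holds, else None."""
        if human_stop:
            return "human stop request"
        if not lrf_ranges_m or any(math.isnan(r) for r in lrf_ranges_m):
            return "missing or invalid LRF data"  # fail safe on sensor faults
        if min(lrf_ranges_m) < self.min_clearance_m:
            return "obstacle within clearance"
        return None

    def filter(self, proposed_action: int, lrf_ranges_m: Sequence[float],
               human_stop: bool = False) -> int:
        """Pass the policy's action through unless a stop is (or was) triggered."""
        if self.latched_reason is None:
            self.latched_reason = self._hazard(lrf_ranges_m, human_stop)
        return STOP_ACTION if self.latched_reason is not None else proposed_action

    def reset(self) -> None:
        """An explicit reset (e.g. by staff) is required to release a latched stop."""
        self.latched_reason = None
```

Keeping the monitor outside the DQN means its behavior can be verified independently of training, in the spirit of the "external monitoring" policy in the table. The trade-off is that every latched stop needs human attention before the robot moves again.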

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Namba, T.; Yamada, Y. Risks of Deep Reinforcement Learning Applied to Fall Prevention Assist by Autonomous Mobile Robots in the Hospital. Big Data Cogn. Comput. 2018, 2, 13. https://doi.org/10.3390/bdcc2020013
