3. Methods

In the last twenty years, several methods have been proposed for background subtraction exploiting depth data, as an alternative or complement to color data. A summary of background subtraction methods for RGBD videos is given in

Table 1. Here, apart from the name of the authors and the related reference (column Authors and Ref.), we report (column Used data) whether they exploit only the depth information (D) or the complementary nature of color and depth information (RGBD). Moreover, we specify (column Depth data) how the considered depth data is acquired (Kinect, ToF cameras, stereo vision devices). Furthermore, we specify (column Model) the type of model adopted for the background, including Codebook [

34], Frame difference, Kernel Density Estimation (KDE) [

35], Mixture of Gaussians (MoG) [

36], Robust Principal Components Analysis (RPCA) [

37], Self-Organizing Background Subtraction (SOBS) [

38], Single Gaussian [

39], Thresholding, ViBe [

40], and WiSARD weightless neural network [

41]. Finally, we specify (column No. of models) if they extend to RGBD data well-known background models originally designed for color data (1 model) or model the scene background based on color and depth independently and then combine the results, on the basis of different criteria (2 models).

In the following, we provide a brief description of the reviewed methods, presented in chronological order. In case of research dealing with higher-level systems (e.g., teleconferencing, matting, fall detection, human tracking, gesture recognition, object detection), we limit our attention to background modeling and foreground detection.

Eveland et al. [

6] present a method of statistical background modeling for stereo sequences based on the disparity images extracted from stereo pairs. The depth background is modeled by a single Gaussian, similarly to [

39], but selective update prevents the incorporation of foreground objects into the background.

The method proposed by Gordon et al. [

42] is an adaptation of the MoG algorithm to color and depth data obtained with a stereo device. Each background pixel is modeled as a mixture of four-dimensional Gaussian distributions: three components are the color data (the YUV color space components), and the fourth one is the depth data. Color and depth features are considered independent, and the same updating strategy of the original MoG algorithm is used to update the distribution parameters. The authors propose a strategy where, for reliable depth data, depth-based decisions bias the color-based ones: in case that a reliable distribution match is found in the depth component, the color-based matching criterion is relaxed, thus reducing the color camouflage errors. When the stereo matching algorithm is not reliable, the color-based matching criterion is set to be harder to avoid problems such as shadows or local illumination changes.

Ivanov et al. [

43] propose an approach based on stereo vision, which uses the disparity (estimated offline) to warp one image of the pair in the other one, thus creating a geometric background model. If the color and brightness between corresponding points do not match, the pixels either belong to a foreground object or to an occlusion shadow. The latter case can be further disambiguated using more than two camera views.

Harville et al. [

16] propose a foreground segmentation method using the YUV color space with the additional depth values estimated by stereo cameras. They adopt four-dimensional MoG models, also modulating the background model learning rate based on scene activity and making color-based segmentation criteria dependent on depth observations.

Kolmogorov et al. [

44] describe two algorithms for bi-layer segmentation fusing stereo and color/contrast information, focused on live background substitution for teleconferencing. To segment the foreground, this approach relies on stereo vision, assuming that people participating in the teleconference are close to the camera. Color information is used to cope with stereo occlusion and low-texture regions. The color/contrast model is composed of MoG models for the background and the foreground.

Crabb et al. [

45] propose a method for background substitution, a regularly used effect in TV and video production. Thresholding of depth data coming from a ToF camera, using a user-defined threshold, is adopted to generate a trimap (consisting of background, foreground, and uncertain pixels). Alpha matting values for uncertain pixels, mainly in the borders of the segmented objects, are needed for a natural looking blending of those objects on a different background. They are obtained by cross-bilateral filtering based on color information.

In [

11] by Guomundsson et al., 3D multi-person tracking in smart-rooms is tackled. They adopt a single Gaussian model for the range data from a two-modal camera rig (consisting of a ToF range camera and an additional higher resolution grayscale camera) for background subtraction.

In [

46], Wu et al. present an algorithm for bi-layer segmentation of natural videos in real time using a combination of infrared, color, and edge information. A prime application of this system is in telepresence, where there is a need to remove the background and replace it with a new one. For each frame, the IR image is used to pre-segment the color image using a simple thresholding technique. This pre-segmentation is adopted to initialize a pentamap, which is then used by graph cuts algorithm to find the final foreground region.

The depth data provided by a ToF camera is used to generate 3D-TV contents by Frick et al. [

7]. The MoG algorithm is applied to the depth data to obtain foreground regions, which are then excluded by median filtering to improve background depth map accuracy.

In [

47], Leens et al. propose a multi-camera system that combines color and depth data, obtained with a low-resolution ToF camera, for video segmentation. The algorithm applies the ViBe algorithm independently to the color and the depth data. The obtained foreground masks are then combined with logical operations and post-processed with morphological operations.

MoG is also adopted in the algorithm proposed by Stormer et al. [

48], where depth and infrared data captured by a ToF camera are combined to detect foreground objects. Two independent background models are built, and each pixel is classified as background or foreground only if the two models matching conditions agree. Very close or overlapping foreground objects are further separated using a depth gradient-based segmentation.

Wang et al. [

49] propose TofCut, an algorithm that combines color and depth cues in a unified probabilistic fusion framework and a novel adaptive weighting scheme to control the influence of these two cues intelligently over time. Bilayer segmentation is formulated as a binary labeling problem, whose optimal solution is obtained by minimizing an energy function. The data term evaluates the likelihood of each pixel belonging to the foreground or the background. The contrast term encodes the assumption that segmentation boundaries tend to align with the edges of high contrast. Color and depth foreground and background pixels are modeled through MoGs and single Gaussians, respectively, and their weighting factors are adaptively adjusted based on the discriminative capabilities of their models. The algorithm is also used in an automatic matting system [

82] to automatically generate foreground masks, and consequently trimaps, to guide alpha matting.

Dondi et al. [

50] propose a matting method using the intensity map generated by ToF cameras. It first segments the distance map based on the corresponding values of the intensity map and then applies region growing to the filtered distance map, to identify and label pixel clusters. A trimap is obtained by eroding the output to select the foreground, dilating it to select foreground, and selecting as indeterminate the remaining contour pixels. The obtained trimap is fed in input to a matting algorithm that refines the result.

Frick et al. [

51] use a thresholding technique to separate the foreground from the background in multiple planes of the video volume, for the generation of 3D-TV contents. A posterior trimap-based refinement using hierarchical graph cuts segmentation is further adopted to reduce the artifacts produced by the depth noise.

Kawabe et al. [

52] employ stereo cameras to extract pedestrians. Foreground regions are extracted by MoG-based background subtraction and shadow detection using the color data. Then the moving objects are extracted by thresholding the histogram of depth data, computed by stereo matching.

Mirante et al. [

53] exploit the information captured by a multi-sensor system consisting of a stereo camera pair with a ToF range sensor. Motion, retrieved by color and depth frame difference, provides the initial ROI mask. The foreground mask is first extracted by region growing in the depth data, where seeds are obtained by the ROI, then refined based on color edges. Finally, a trimap is generated, where uncertain values are those along the foreground contours, and are classified based on color in the CIELab color space.

Rougier et al. [

54] explore the Kinect sensor for the application of detecting falls in the elderly. For people detection, the system adopts a single Gaussian depth background model.

Schiller and Koch [

55] propose an approach to video matting that combines color information with the depth provided by ToF cameras. Depth keying is adopted to segment moving objects based on depth information, comparing the current depth image with a depth background image (constructed by averaging several ToF-images). MoG is adopted to segment moving objects based on color information. The two segmentations are weighted using two types of reliability measure for depth measurements: the depth variance and the amplitude image of the ToF-camera. The weighted average of the color and depth segmentations is used as matting alpha value for blending foreground and background, while its thresholding (using a user-defined threshold) is used for evaluating moving object segmentation.

Stone and Skubic [

56] use only the depth information provided by a Kinect device to extract the foreground. For each pixel, minimum and maximum depth values d

and d

are computed by a set of training images to form a background model. For a new frame, each pixel is compared against the background model, and those pixels which lie outside the range [d

− 1, d

+ 1] are considered foreground.

In [

9], Han et al. present a human detection and tracking system for a smart environment application. Background subtraction is applied only on the depth images as frame-by-frame difference, assisted by a clustering algorithm that checks the depth continuity of pixels in the neighborhood of foreground pixels. Once the object has been located in the image, visual features are extracted from the RGB image and are then used for tracking the object in successive frames.

In the surveillance system based on the Kinect proposed by Clapés et al. [

57], a per pixel background subtraction technique is presented. The authors propose a background model based on a four-dimensional Gaussian distribution (using color and depth features). Then, user and object candidate regions are detected and recognized using robust statistical approaches.

In the gesture recognition system presented by Mahbub et al. [

10], the foreground objects are extracted by the depth data using frame difference.

Ottonelli et al. (2013) [

59] refine ViBe segmentation of the color data by adding to the achieved foreground mask a compensation factor computed based on the color and depth data obtained by a stereo camera.

In the object detection system presented by Zhang et al. [

60], background subtraction is achieved by single Gaussian modeling of the depth information provided by a Kinect sensor.

Fernandez-Sanchez et al. [

58] adopt Codebook as background model and consider data captured by Kinect cameras. They analyze two approaches that differ in the depth integration method: the four-dimensional Codebook (CB4D) considers merely depth as a fourth channel of the background model, while the Depth-Extended Codebook (DECB) adds a joint RGBD fusion method directly into the model. They proved that the latter achieves better results than the former. In [

15], the authors consider stereo disparity data, besides color. To get the best of color and depth features, they extend the DECB algorithm through a post-processing stage for mask fusion (DECB-LF), based on morphological reconstruction using the output of the color-based algorithm.

Braham et al. [

61] adopt two background models for depth data, separating valid values (modeled by a single Gaussian model) and invalid values (holes). The Gaussian mean is updated to the maximum valid value, while the standard deviation follows a quadratic relationship with respect to the depth. This leads to a depth-dependent foreground/background threshold that enables the model to adapt to the non-uniform noise of range images automatically.

In [

17], Camplani and Salgado propose an approach, named CL

, based on a combination of color and depth classifiers (CL

and CL

) and the adoption of the MoG model. The combination of classifiers is based on a weighted average that allows to adaptively modifying the support of each classifier in the ensemble by considering foreground detections in the previous frames and the depth and color edges. For each pixel, the support of each classifier to the final segmentation result is obtained by considering the global edge-closeness probability and the classification labels obtained in the previous frame. In [

62], the authors improve their method, proposing a method named MoG-RegPRE, that builds not only pixel-based but also region-based models from depth and color data, and fuses the models in a mixture of experts fashion to improve the final foreground detection performance.

Chattopadhyay et al. [

63] adopt RGBD streams for recognizing gait patterns of individuals. To extract RGBD information of moving objects, they adopt the SOBS model for color background subtraction and use the obtained foreground masks to extract the depth information of people silhouettes from the registered depth frames.

In [

18], Gallego and Pardás present a foreground segmentation system that combines color and depth information captured by a Kinect camera to perform a complete Bayesian segmentation between foreground and background classes. The system adopts a combination of spatial-color and spatial-depth region-based MoG models for the foreground, as well as two color and depth pixel-wise MoG models for the background, in a Logarithmic Opinion Pool decision framework used to combine the likelihoods of each model correctly. A post-processing step based on a trimap analysis is also proposed to correct the precision errors that the depth sensor introduces in the object contour.

The algorithm proposed by Giordano et al. in [

64] explicitly models the scene background and foreground with a KDE approach in a quantized x-y-hue-saturation-depth space. Foreground segmentation is achieved by thresholding the log-likelihood ratio over the background and foreground probabilities.

Murgia et al. [

65] propose an extension of the Codebook model. Similarly to CB4D [

58], it includes depth as a fourth channel of the background model but also applies colorimetric invariants to modify the color aspect of the input images, to give them the aspect they would have under canonical illuminants.

In [

66], Song et al. model grayscale color and depth values based on MoG. The combination of the two models is based on the product of the likelihoods of the two models.

Boucher et al. [

67] initially exploit depth information to achieve a coarse segmentation, using middleware of the adopted ASUS Xtion camera. The obtained mask is refined in uncertain areas (mainly object contours) having high background/foreground contrast, locally modeling colors by their mean value.

Cinque et al. [

68] adapt to Kinect data a matting method previously proposed for ToF data. It is based on Otsu thresholding of the depth map and region growing for labeling pixel clusters, assembled to create an alpha map. Edge improvement is obtained by logical OR of the current map with those of the previous four frames.

Huang et al. [

69] propose a post-processing framework based on an initial segmentation obtained solely by depth data. Two post-processing steps are proposed: a foreground hole detection step and object boundary refining step. For foreground hole detection, they obtain two weak decisions based on the region color cue and the contour contrast cue, adaptively fused according to their corresponding reliability. For object boundary refinement, they apply weighted fusion of motion probability weighted temporal prior, color likelihood, and smoothness constraints. Therefore, besides handling challenges such as color camouflage, illumination variations, and shadows, the method maintains spatial and temporal consistency of the obtained segmentation, a fundamental issue for the telepresence target application.

Javed et al. [

70] propose the DEOR-PCA (Depth Extended Online RPCA) method for background subtraction using binocular cameras. It consists of four main stages: disparity estimation, background modeling, integration, and spatiotemporal constraints. Initially, the range information is obtained using disparity estimation algorithms on a set of stereo pairs. Then, OR-PCA is applied to each of color left image and related disparity image to model the background, separately. The integration adds low-rank and sparse components obtained via OR-PCA to recover the background model and foreground mask from each image. The reconstructed sparse matrix is then thresholded to get the binary foreground mask. Finally, spatiotemporal constraints are applied to remove from the foreground mask most of the noise due to depth information.

In [

71], Nguyen et al. present a method where, as an initial offline step, noise is removed from depth data based on a noise model. Background subtraction is then solved by combining RGB and depth features, both modeled by MoG. The fundamental idea in their combination strategy is that when depth measurement is reliable, the segmentation is mainly based on depth information; otherwise, RGB is used as an alternative.

Sun et al. [

72] propose a MoG model for color information and a single Gaussian model for depth, together with a color-depth consistency check mechanism driving the updating of the two models. However, experimental results aim at evaluating background estimation, rather than background subtraction.

Tian et al. [

73] propose a depth-weighted group-wise PCA-based algorithm, named DG-PCA. The background/foreground separation problem is formulated as a weighted L

-norm PCA problem with depth-based group sparsity being introduced. Dynamic groups are first generated solely based on depth, and then an iterative solution using depth to define the weights in L

-norm is developed. The method handles moving cameras through global motion compensation.

In [

19], Huang et al. present a method where two separate color and depth background models are based on ViBe, and the two resulting foreground masks are fused by weighted average. The result is further adaptively refined, taking into account multi-cue information (color, depth, and edges) and spatiotemporal consistency (in the neighborhood of foreground pixels in the actual and previous frames).

In [

20], Liang et al. propose a method to segment foreground objects based on color and depth data independently, using an existing background subtraction method (in the experiments they choose MoG). They focus on refining the inaccurate results through supervised learning. They extract several features from the source color and depth data in the foreground areas. These features are fed to two independent classifiers (in the experiments they choose random forest [

83]) to obtain a better foreground detection.

In [

74], Palmero et al. propose a baseline algorithm for human body segmentation using color, depth, and thermal information. To reduce the spatial search space in subsequent steps, the preliminary step is background subtraction, achieved in the depth domain using MoG.

In the method proposed by Chacon et al. [

75], named SAFBS (Self-Adapting Fuzzy Background Subtraction), background subtraction is based on two background models for color (in the HSV color space) and depth, providing an initial foreground segmentation by frame differencing. A fuzzy algorithm computes the membership value of each pixel to background or foreground, based on color and depth differences, as well as depth similitude, of the current frame and the background. Temporal and spatial smoothing of the membership values is applied to reduce false alarms due to depth flickering and imprecise measurements around object contours, respectively. The classification result is then employed to update the two background models, using automatically computed learning rates.

De Gregorio and Giordano [

76] adapt an existing background modeling method using the WiSARD weightless neural network (WNNs) [

41] to the domain of RGBD videos. Color and depth video streams are synchronously but separately modeled by WNNs at each pixel, using a set of initial video frames for network training. In the detection phase, classification is interleaved with re-training on current colors whenever pixels are detected as belonging to the background. Finally, the obtained output masks are combined by an OR operator and post-processed by morphological filtering.

Javed et al. [

77] investigate the performance of an online RPCA-based method, named SRPCA, for moving object detection using RGBD videos. The algorithm consists of three main stages: (i) detection of dynamic images to create an input dynamic sequence by discarding motionless video frames; (ii) computation of spatiotemporal graph Laplacians; and (iii) application of RPCA to incorporate the preceding two steps for the separation of background and foreground components. In the experiments, the algorithm is tested by using only intensity, only RGB, and RGBD features, leading to the surprising conclusion that best results are achieved using only intensity features.

The algorithm proposed by Maddalena and Petrosino [

78], named RGBD-SOBS, is based on two background models for color and depth information, exploiting a self-organizing neural background model previously adopted for RGB videos [

84]. The resulting color and depth detection masks are combined, not only to achieve the final results but also to better guide the selective model update procedure.

Minematsu et al. [

79] propose an algorithm, named SCAD, based on a simple combination of the appearance (color) and depth information. The depth background is obtained using, for each pixel, its farthest depth value along the whole video, thus resulting in a batch algorithm. The likelihood of the appearance background is computed using texture-based and RGB-based background subtraction. To reduce false positives due to illumination changes, SCAD roughly detects foreground objects by using texture-based background subtraction. Then, it performs RGB-based background subtraction to improve the results of texture-based background subtraction. Finally, foreground masks are obtained using graph cuts to optimize an energy function which combines the two likelihoods of the background.

Moyá-Alcover et al. [

32] construct a scene background model using KDE with a three-dimensional Gaussian kernel. One of the dimensions models depth information, while the other two model normalized chromaticity coordinates. Missing depth data are modeled using a probabilistic strategy to distinguish pixels that belong to the background model from those which are due to foreground objects. Pixels that cannot be classified as background or foreground are placed in the undefined class. Two different implementations are obtained depending on whether undefined pixels are considered as background (GSM

) or foreground (GSM

), demonstrating their suitability for scenes where actions happen far or close to the sensor, respectively.

Trabelsi et al. [

80] propose the RGBD-KDE algorithm, also based on a scene background model using KDE, but using a two-dimensional Gaussian kernel. One of the dimensions models depth information, while the other models the intensity (average of RGB components). To reduce computational complexity, the Fast Gaussian Transform is adapted to the problem.

Zhou et al. [

81] construct color and depth models based on ViBe and fuse the results in a weighting mechanism for the model update that relies on depth reliability.

5. Datasets

Several RGBD datasets exist for different tasks, including object detection and tracking, object and scene recognition, human activity analysis, 3D-simultaneous localization and mapping (SLAM), and hand gesture recognition (e.g., see surveys in [

24,

86,

87]). However, depending on the application they have been devised for, they can include single RGBD images instead of videos, or they can supply GTs in the form of bounding boxes, 3D geometries, camera trajectories, 6DOF poses, or dense multi-class labels, rather than GT foreground masks.

In

Table 3, we summarize some publicly available RGBD datasets suitable for background subtraction that include videos and, eventually, GT foreground masks. Specifically, we report their acronym, website, and reference publication (column Name & Refs.), the source for depth data (column Source), whether or not they also provide GT foreground masks (column GT masks), the number of videos they include (column No. of videos), some RGBD background subtraction methods adopting them for their evaluation (column Adopted by), and the main application they have been devised for (column Main application).

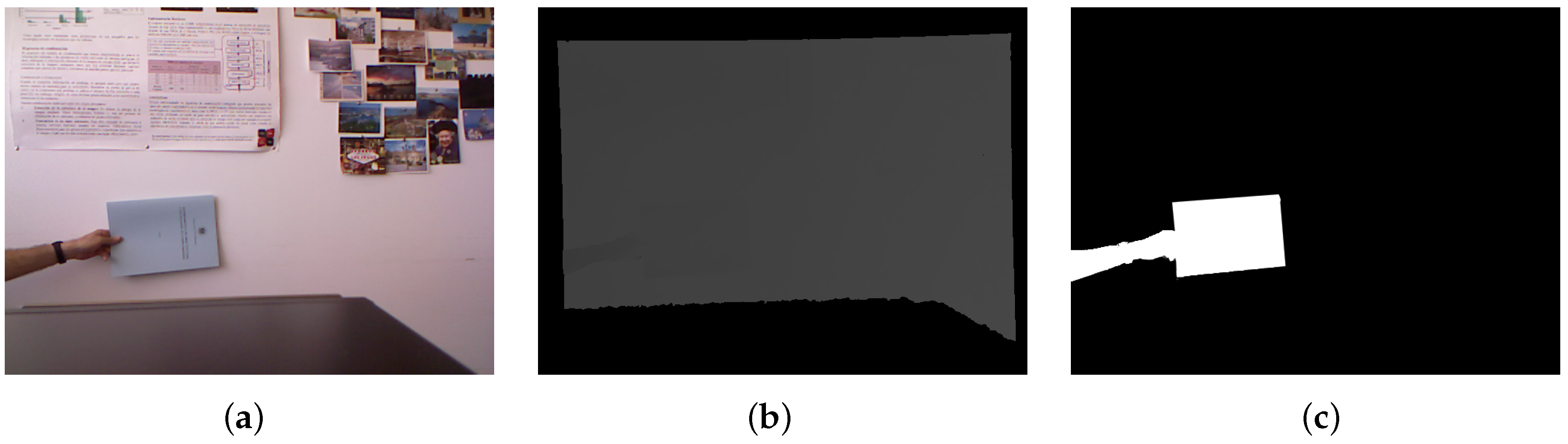

The GSM dataset [

32] includes seven different sequences designed to test some of the main problems in scene modeling when both color and depth information are used: color camouflage, depth camouflage, color shadows, smooth and sudden illumination changes, and bootstrapping. Each sequence is provided with some hand-labeled GT foreground masks. All the sequences are also included in the SBM-RGBD dataset [

33] and accompanied by 56 GT foreground masks.

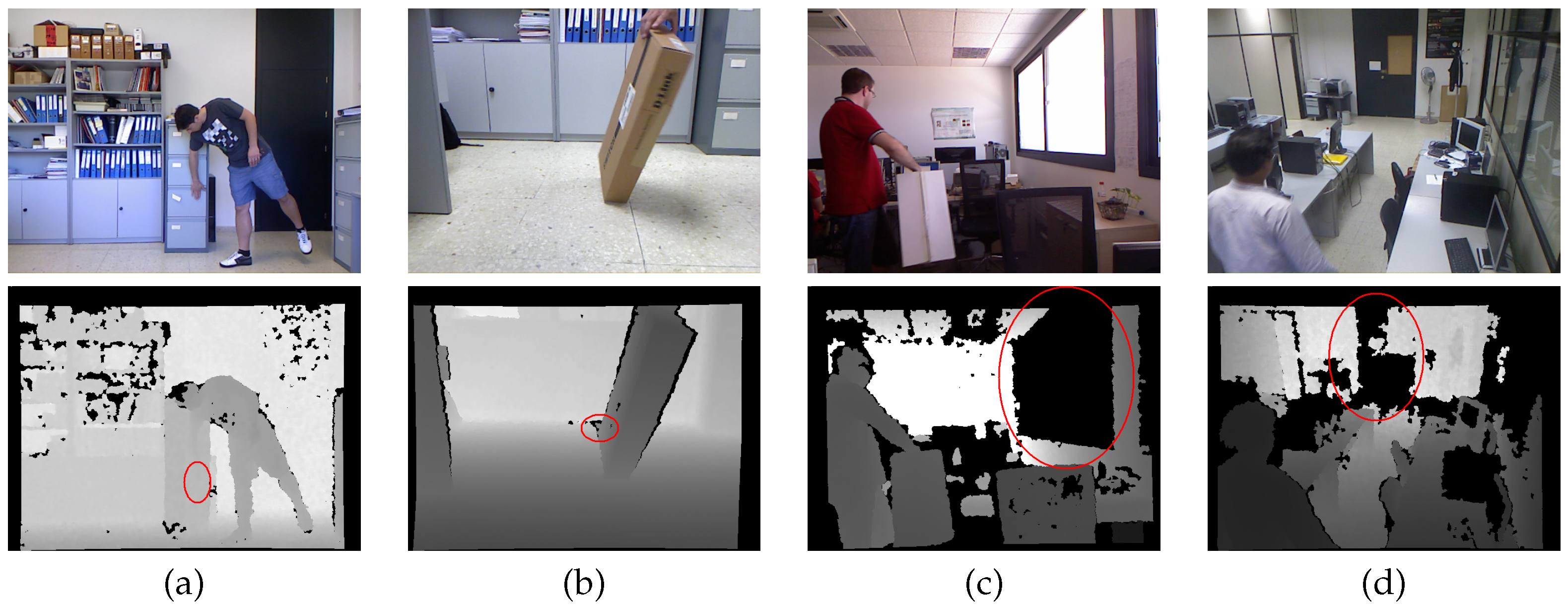

The Kinect dataset [

90] contains nine single person sequences, recorded with a Kinect camera, to show depth and color camouflage situations that are prone to errors in color-depth scenarios.

The MICA-FALL dataset [

71] contains RGBD videos for the analysis of human activities, mainly fall detection. Two scenarios are considered for capturing activities that happen at the center field of view of one of the four Kinect sensors or at the cross-view of two or more sensors. Besides color and depth data, accelerometer information and the coordinates of 20 skeleton joints are provided for every frame.

The MULTIVISION dataset consists of two different sets of sequences for the objective evaluation of background subtraction algorithms based on depth information as well as color images. The first set (MULTIVISION Stereo [

15]) consists of four sequences recorded by stereo cameras, combined with three different disparity estimation algorithms [

103,

104,

105]. The sequences are devised to test color saturation, color and depth camouflage, color shadows, low lighting, flickering lights, and sudden illumination changes. The second set (MULTIVISION Kinect [

58]) consists of four sequences recorded by a Kinect camera, devised to test out of sensor range depth data, color and depth camouflage, flickering lights, and sudden illumination changes. For all the sequences, some frames have been hand-segmented to provide GT foreground masks. The four MULTIVISION Kinect sequences are also included in the SBM-RGBD dataset [

33] and accompanied by 294 GT foreground masks.

The Princeton Tracking Benchmark dataset [

95] includes 100 videos covering many realistic cases, such as deformable objects, moving camera, different occlusion conditions, and a variety of clutter backgrounds. The GTs are manual annotations in the form of bounding-boxes drawn around the objects on each frame. One of the sequences (namely, sequence bear_front) is also included in the SBM-RGBD dataset [

33] and accompanied by 15 GT foreground masks.

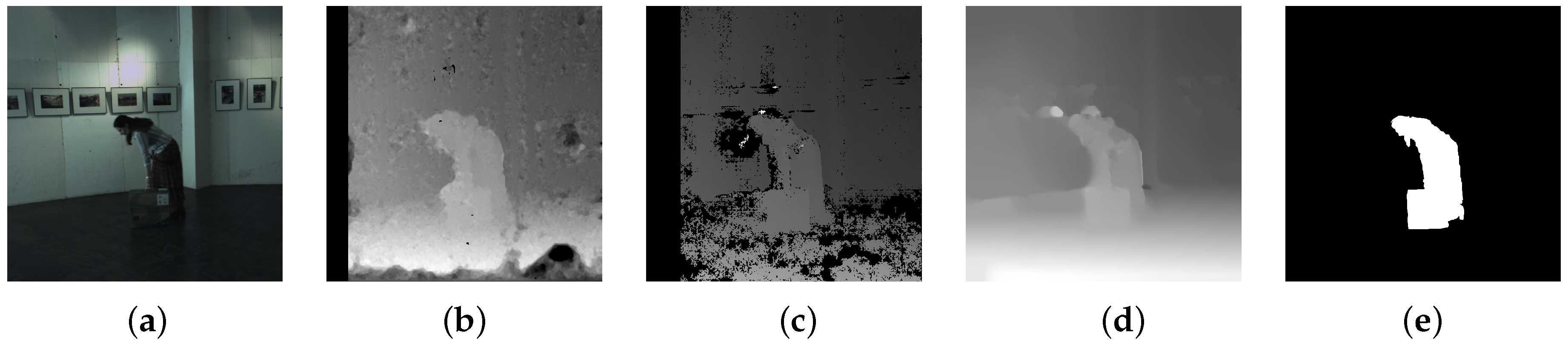

The RGB-D Object Detection dataset [

17] includes four different sequences of indoor environments, acquired with a Kinect camera, that contain different demanding situations, such as color and depth camouflage or cast shadows. For each sequence, a hand-labeled ground truth is provided to test foreground/background segmentation algorithms. All the sequences, suitably subdivided and reorganized, are also included in the SBM-RGBD dataset [

33] and accompanied by more than 1100 GT foreground masks.

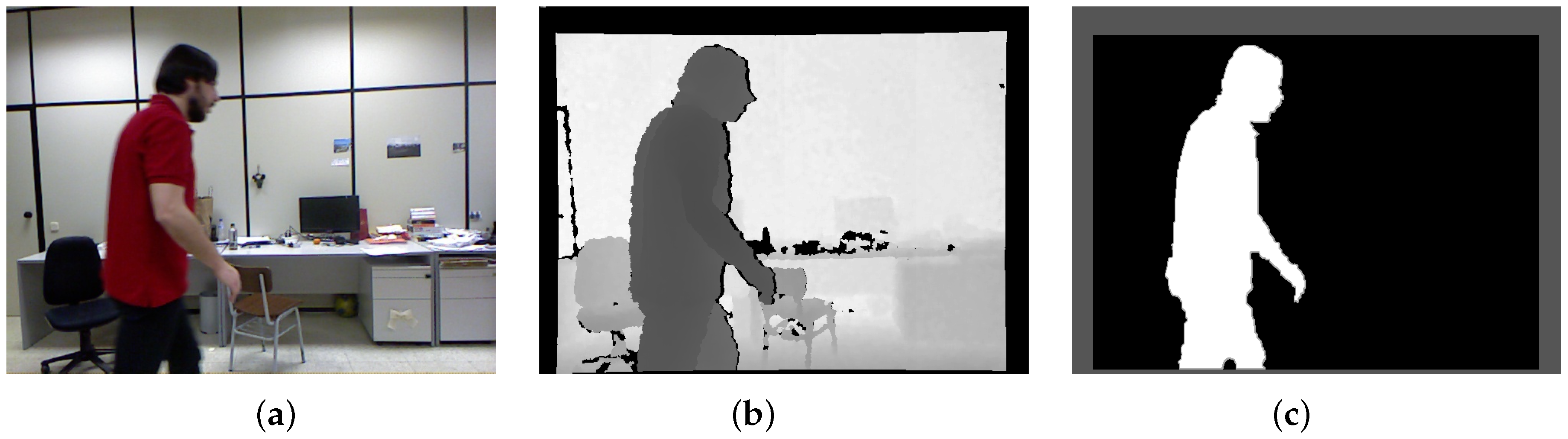

The RGB-D People dataset [

98] is devoted to evaluating people detection and tracking algorithms for robotics, interactive systems, and intelligent vehicles. It includes more than 3000 RGBD frames acquired in a university hall from three vertically mounted Kinect sensors. The data contains walking and standing persons seen from different orientations and with different levels of occlusions. Regarding the ground truth, all frames are annotated manually to contain bounding boxes in the 2D depth image space and the visibility status of subjects. Unfortunately, the GT foreground masks built and used in [

62] are not available.

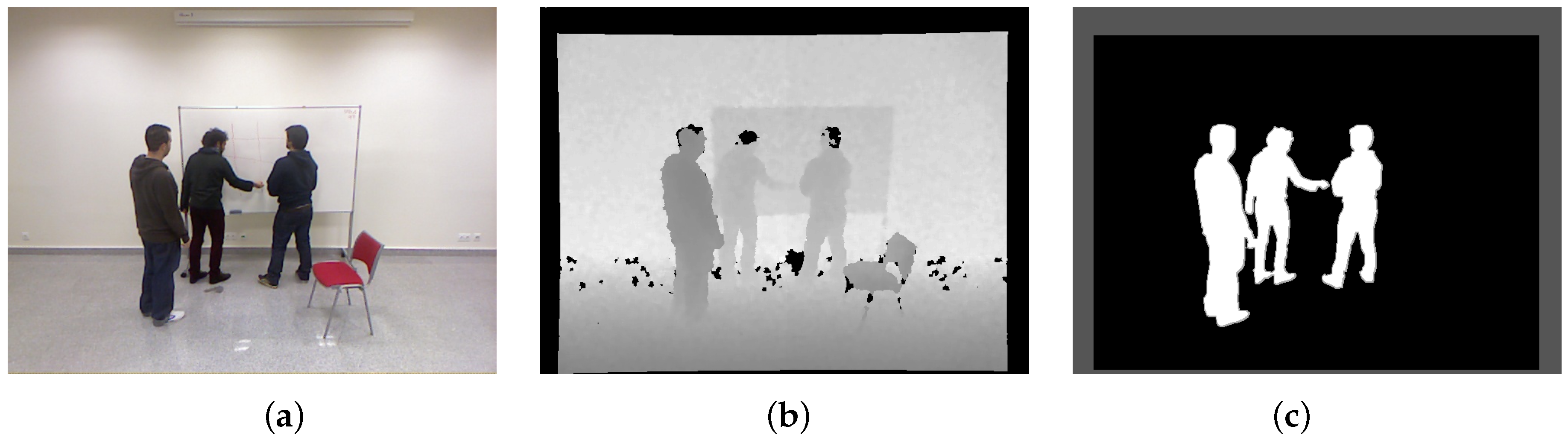

The SBM-RGBD dataset [

33] is a publicly available benchmarking framework specifically designed to evaluate and compare scene background modeling methods for moving object detection on RGBD videos. It involves the most extensive RGBD video dataset ever made for this specific purpose and also includes videos coming from other datasets, namely, GSM [

32], MULTIVISION [

58], Princeton Tracking Benchmark [

95], RGB-D Object Detection dataset [

17], and UR Fall Detection Dataset [

106,

107]. The 33 videos acquired by Kinect cameras span seven categories, selected to include diverse scene background modeling challenges for moving object detection: illumination changes, color and depth camouflage, intermittent motion, out of sensor depth range, color and depth shadows, and bootstrapping. Depth images are already synchronized and registered with the corresponding color images by projecting the depth map onto the color image, allowing a color-depth pixel correspondence. For each sequence, pixels that have no color-depth correspondence (due to the difference in the color and depth cameras centers) are signaled in a binary Region-of-Interest (ROI) image and are excluded by the evaluation.

Other publicly available RGBD video datasets are worth mentioning, being equipped with pixel-wise GT foreground masks, which are devoted to specific applications. These include the BIWI RGBD-ID dataset [

108,

109] and the IPG dataset [

110,

111], targeted to people re-identification, and the VAP Trimodal People Segmentation Dataset [

74,

112], that contains videos captured by thermal, depth, and color sensors, devoted to human body segmentation.