Implementation Support of Security Design Patterns Using Test Templates

Abstract

1. Introduction

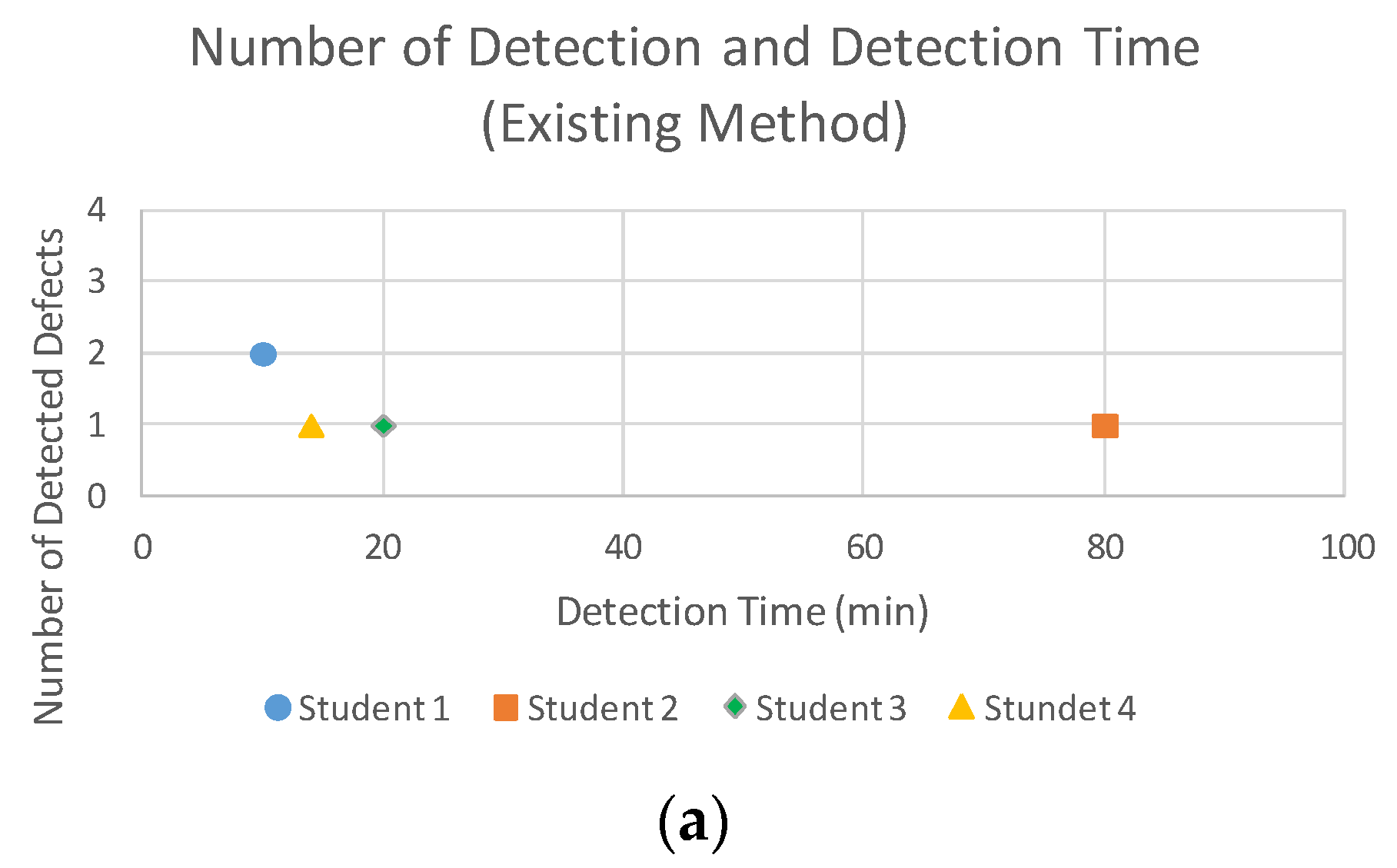

- RQ 1: Is our method more efficient at finding defects when implementing a security design pattern than an existing method?

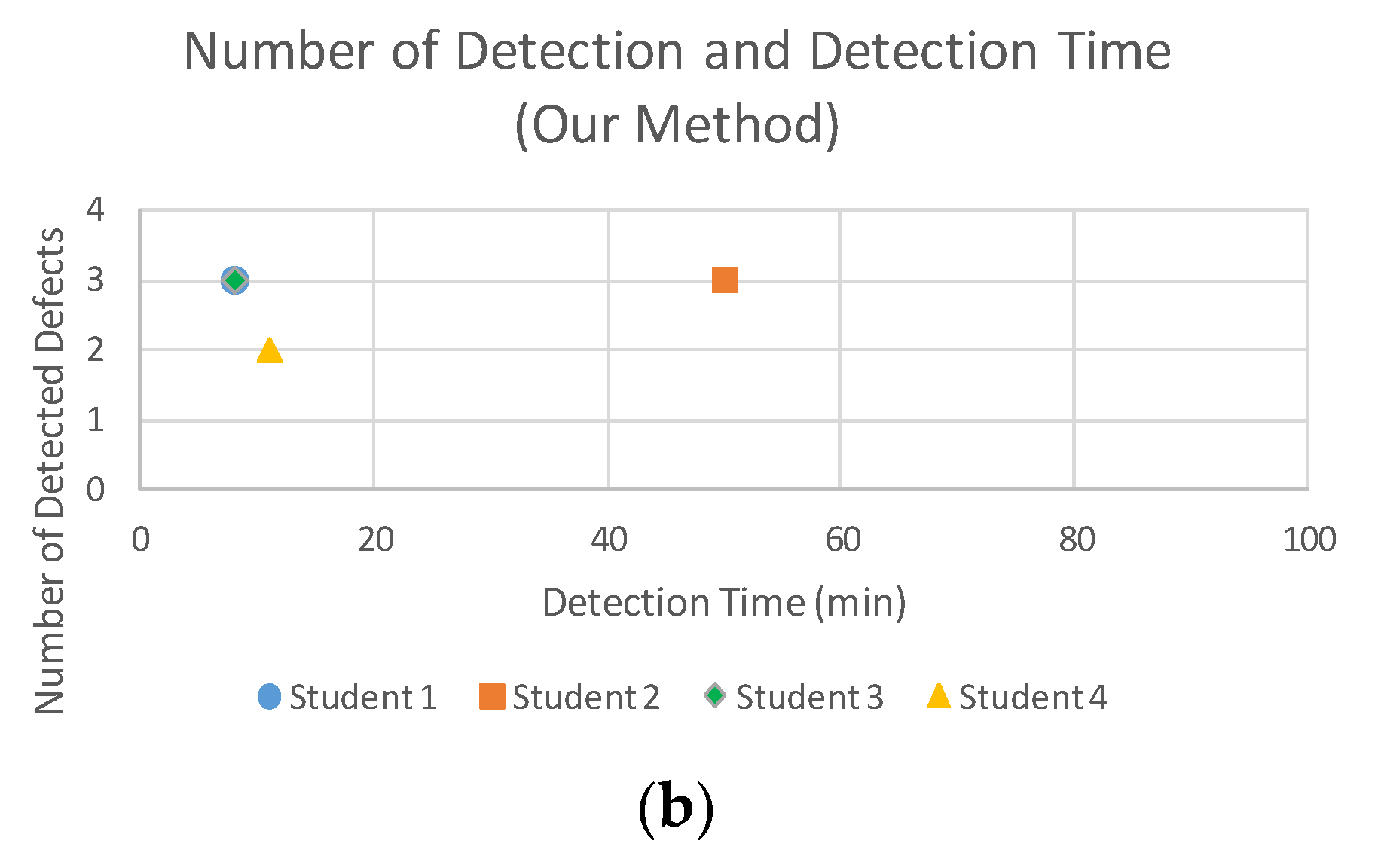

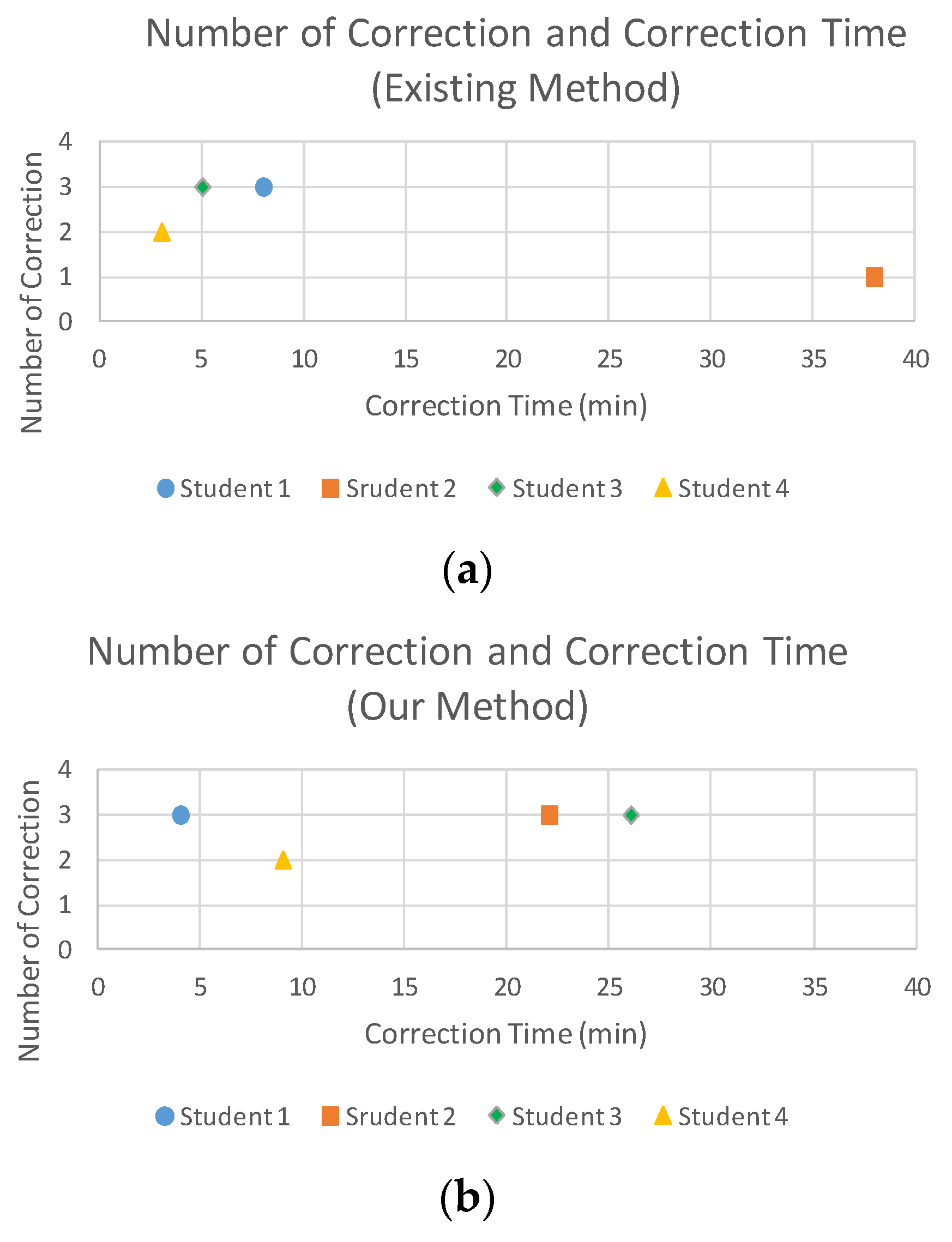

- RQ 2: Does our method create a more effective test to find implementation defects in a security design pattern than an existing method?

- RQ 3: Compared to an existing method, does our method more effectively create a test to find implementation defects in a security design pattern and allow for a regression test?

- RQ 4: Is our method more effective at correcting implementation defects in a security design pattern compared to an existing method?

- We create a reusable test template derived from security design patterns.

- Implementation defects in a security design pattern are efficiently determined.

- Our method supports the appropriate implementation of security design patterns.

2. Background and Problem

2.1. Security Design Patterns

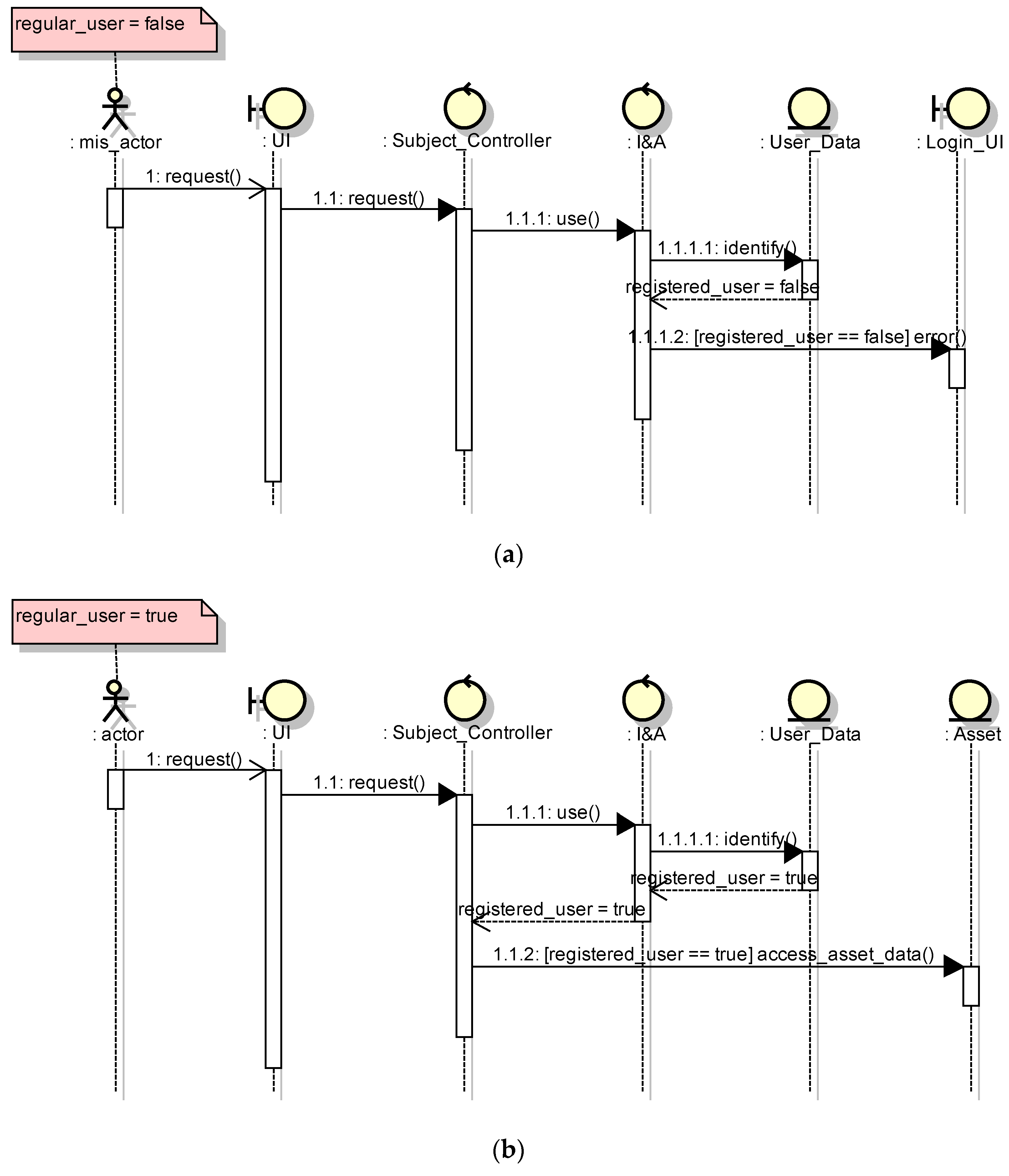

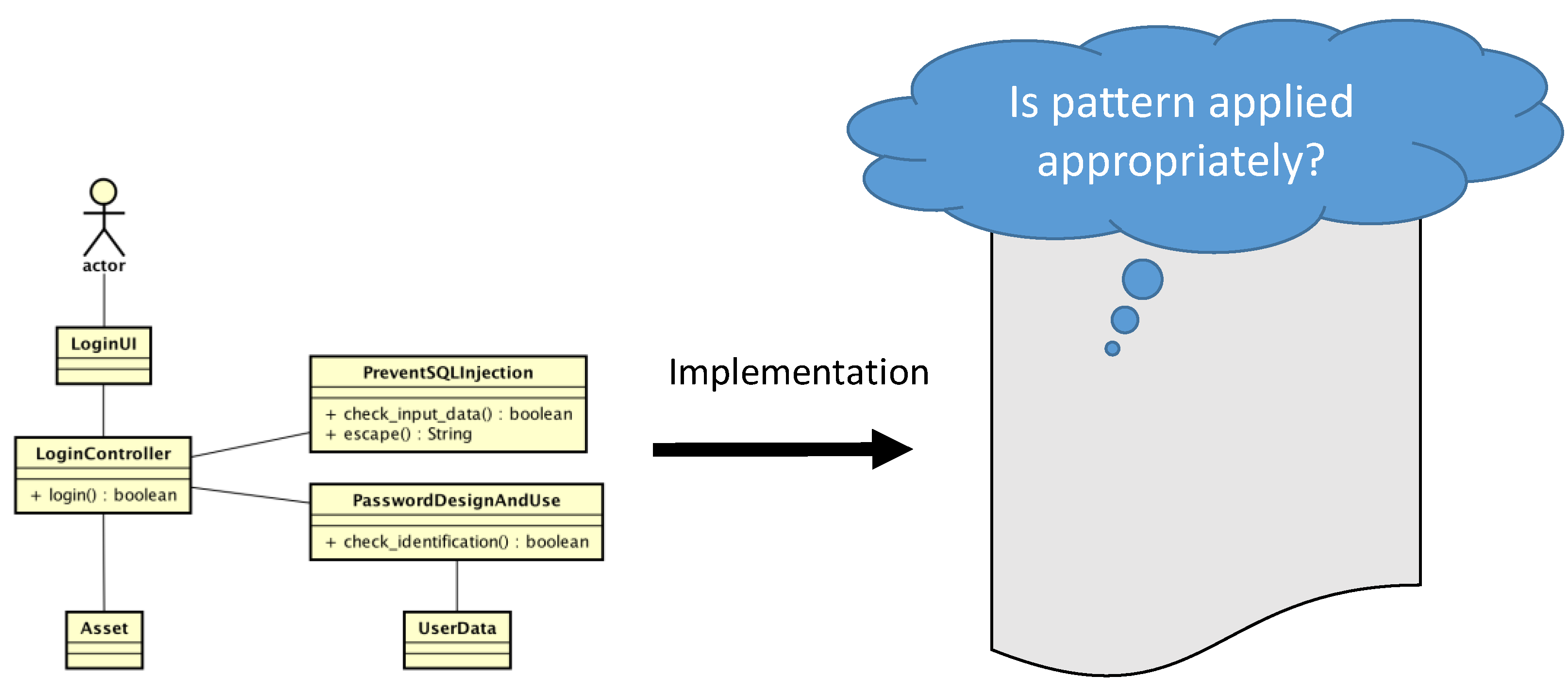

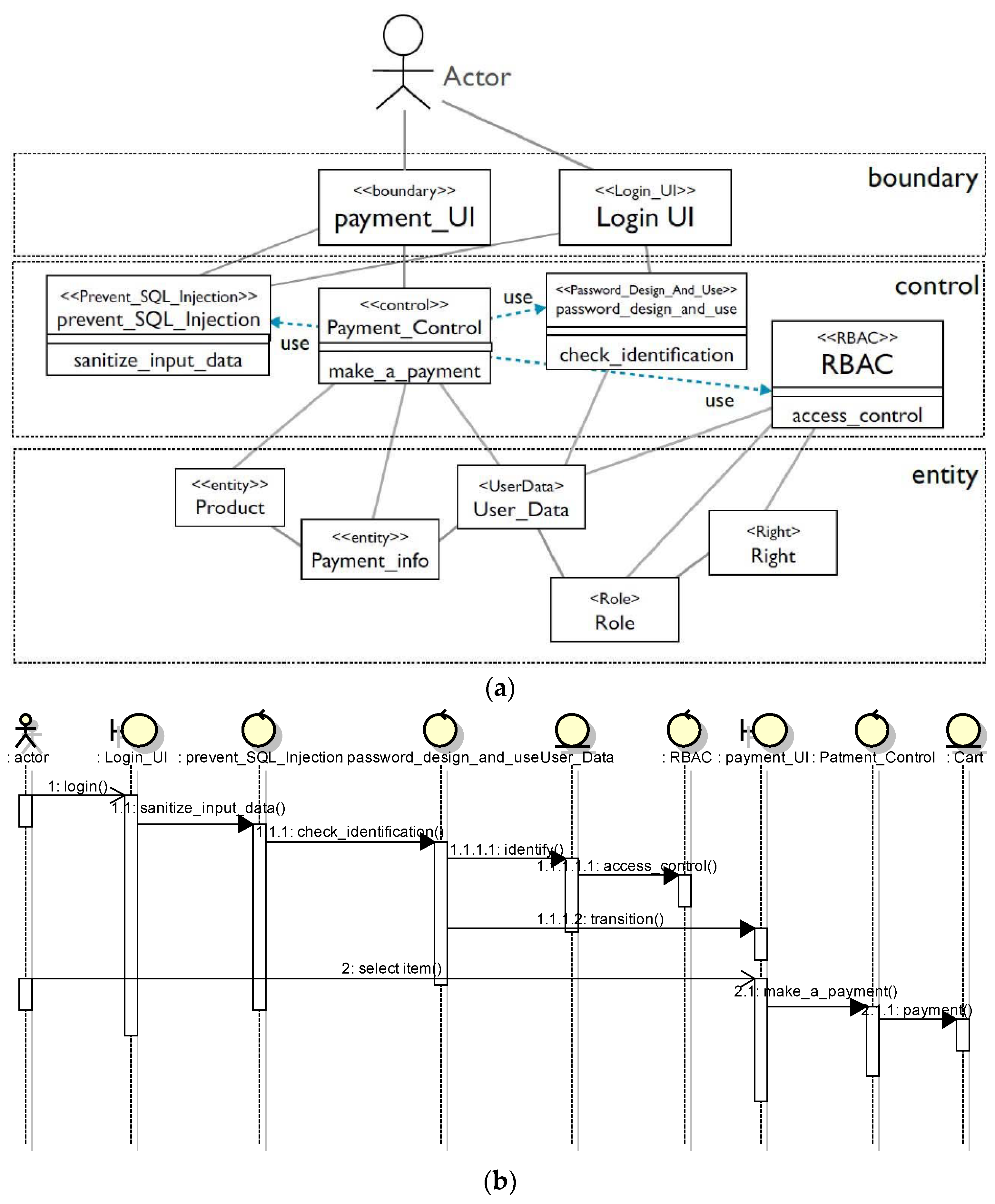

2.2. Motivating Example of Implementation Problem

3. Related Work

3.1. Security Patterns Verification

3.2. Model-Based Security Testing

3.3. Test-driven Development (TDD) for Security

3.4. Decision Table Testing

3.5. Aspect-Oriented Programming

4. Implementation Support of Security Design Patterns

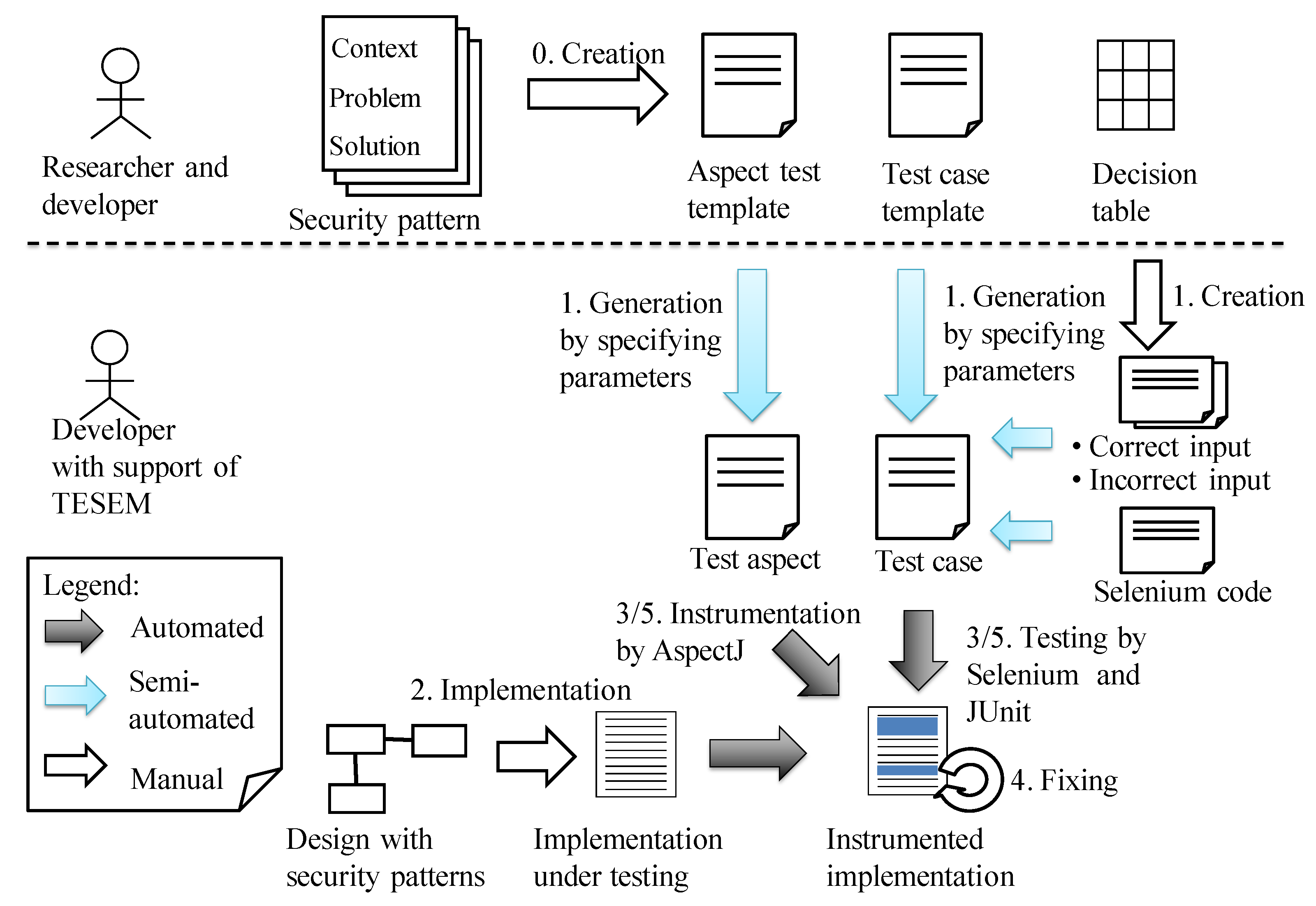

4.1. Overview

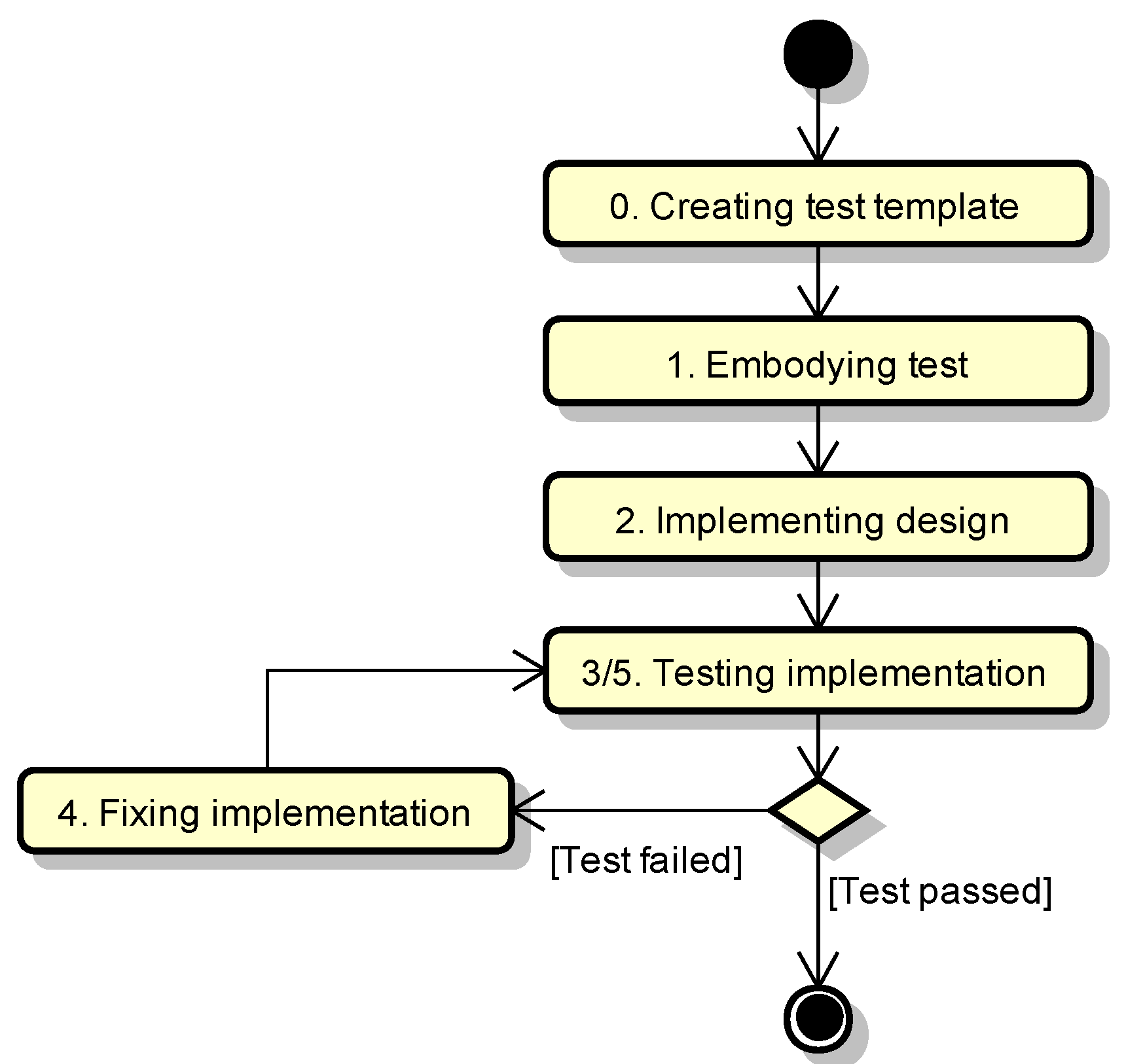

- Step 0: Create a test template. A test template is prepared from a security design pattern. The test template consists of an “aspect test template” and a “test case template”.

- Step 1: Embody tests. Concrete tests are created by filling the test template with the given design information.

- Step 2: Implement a design. Although the pattern application cannot be verified at this point, the intended design for the security design pattern can be implemented.

- Step 3:

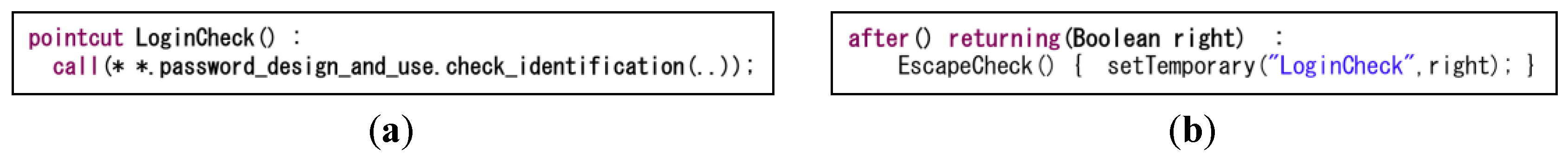

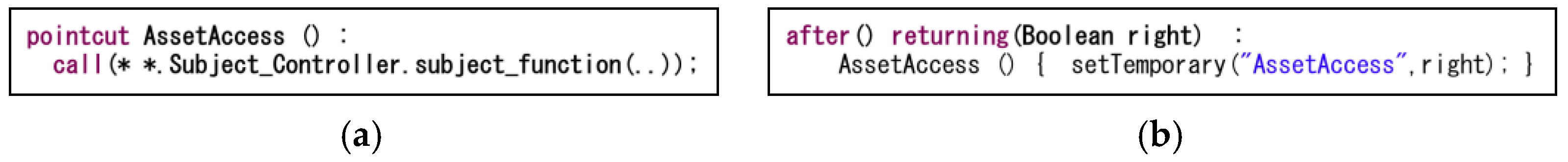

- Test and validate the applied patterns.Based on TDD, a test is quickly executed to validate the applied patterns in the implementation phase. During this step, concrete test aspects generated from aspect test templates are weaved into the implementation under testing for the instrumentation purpose.

- Step 4: Fix. The implementation is fixed based on the defects found in Step 3.

- Step 5: Re-test and re-validate the applied patterns. The fixed implementation is re-tested to re-validate the applied patterns. If the test passes, the patterns are appropriately applied in the implementation phase. If the test fails, Step 4 is repeated until the re-test passes.
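Step 3 relies on test aspects that observe internal processing without changing the implementation's source. The following is a minimal Python sketch of that idea only, not the authors' tooling: a decorator stands in for a woven aspect, and `LoginController`, `check_credentials`, `observe`, and `run_login_test` are hypothetical names introduced for illustration.

```python
import functools

call_log = []  # (method name, result) pairs observed during one test run


def observe(func):
    """Record each call and its result, loosely mimicking around advice."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        result = func(*args, **kwargs)
        call_log.append((func.__name__, result))
        return result
    return wrapper


class LoginController:
    """Hypothetical implementation under test."""
    USERS = {"alice": "s3cret"}

    def check_credentials(self, user_id, password):
        return self.USERS.get(user_id) == password

    def login(self, user_id, password):
        if self.check_credentials(user_id, password):
            return "asset"
        return "denied"


# "Weave" the observer in from outside; the class body stays untouched.
LoginController.check_credentials = observe(LoginController.check_credentials)


def run_login_test(user_id, password):
    """Run one login attempt and return the outcome plus the observed calls."""
    call_log.clear()
    outcome = LoginController().login(user_id, password)
    return outcome, list(call_log)
```

A test can then assert on both the externally visible outcome and the recorded internal check, which is what lets a defect such as a skipped credential check surface even when the visible result looks correct.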

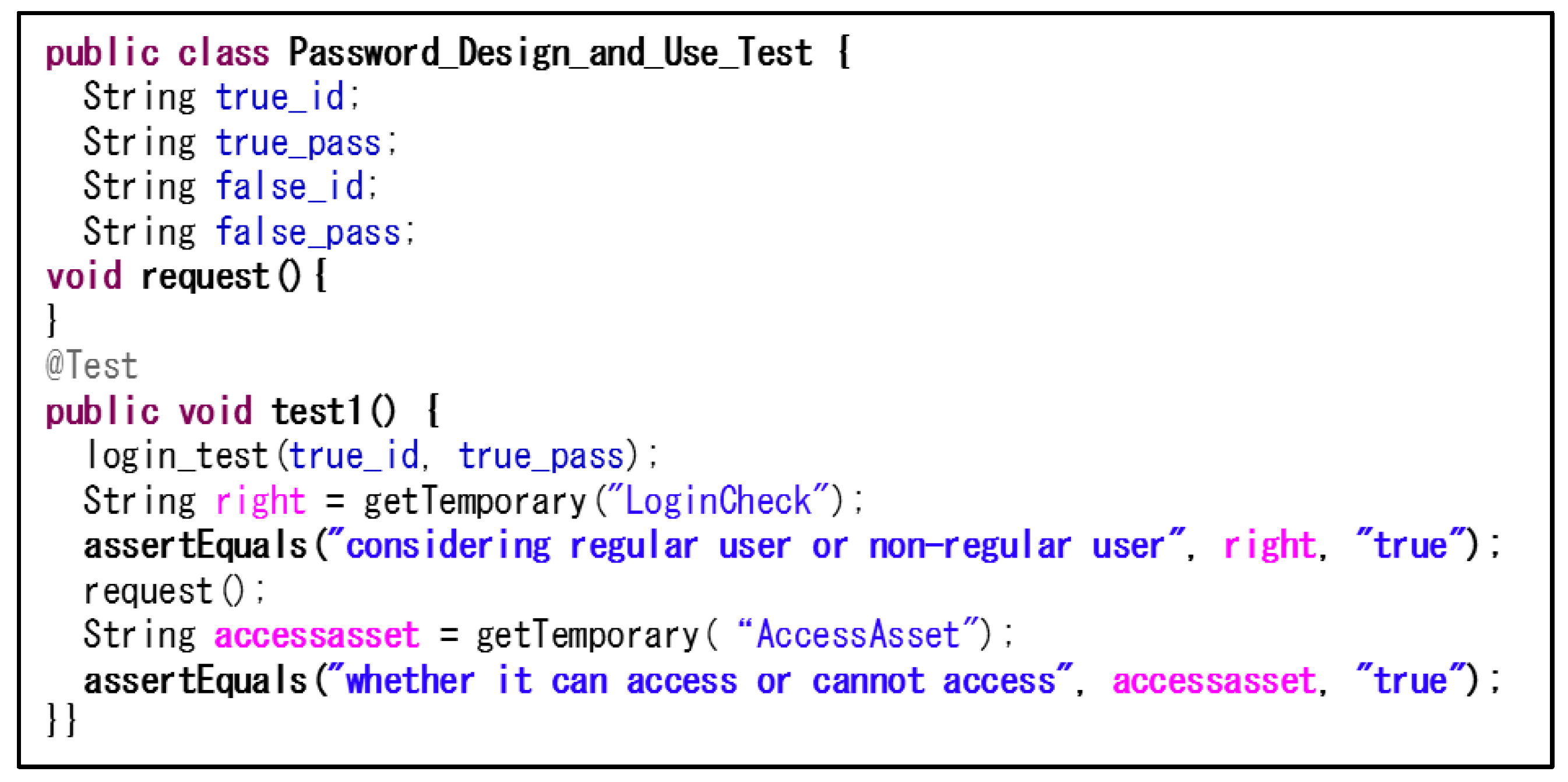

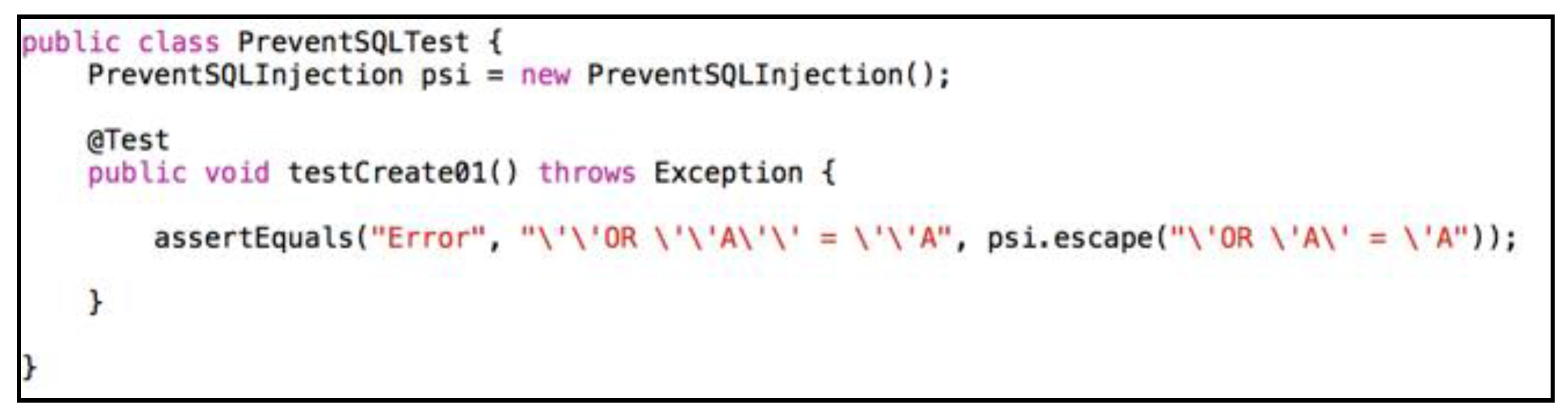

4.2. Test Template and Test Case

- (1)

- For each security design pattern to be tested, we (and the developers) prepare and register a pair of a test case template and an aspect test template using the three steps below. Once registered, the templates can be reused for further development projects.

- (a)

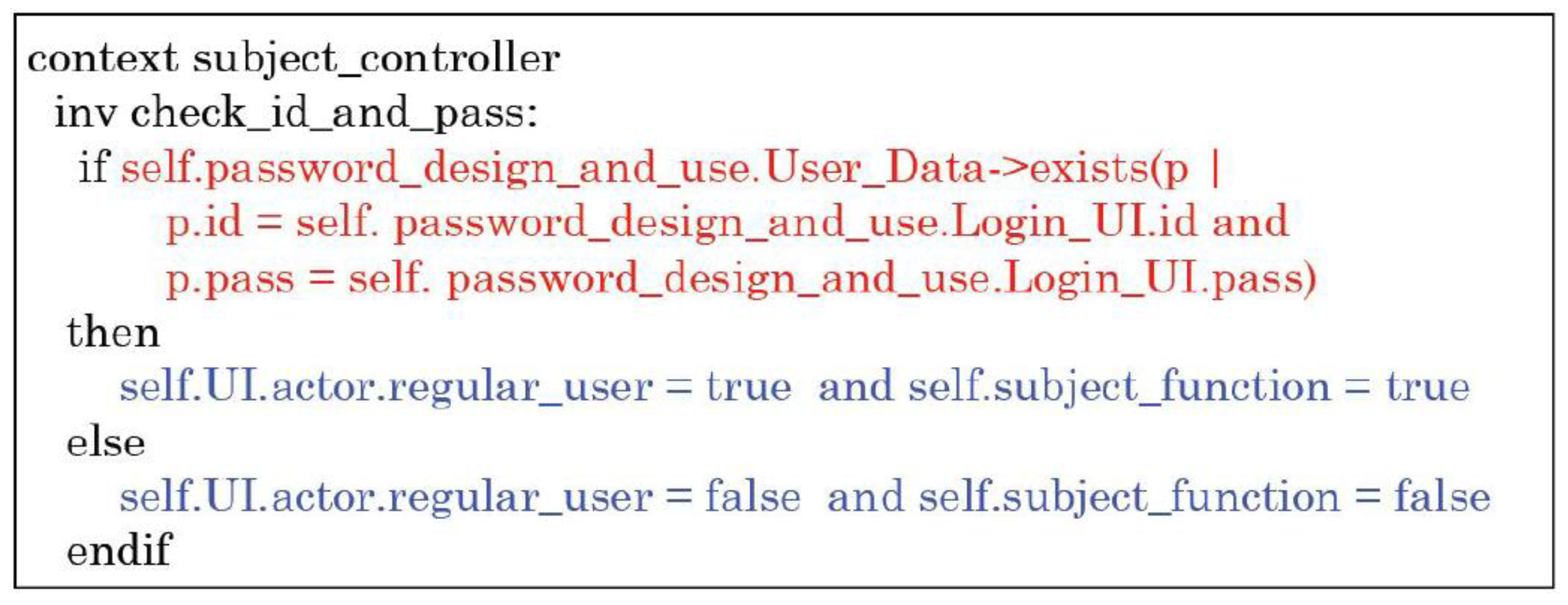

- Developers create a decision table from the OCL description.

- (b)

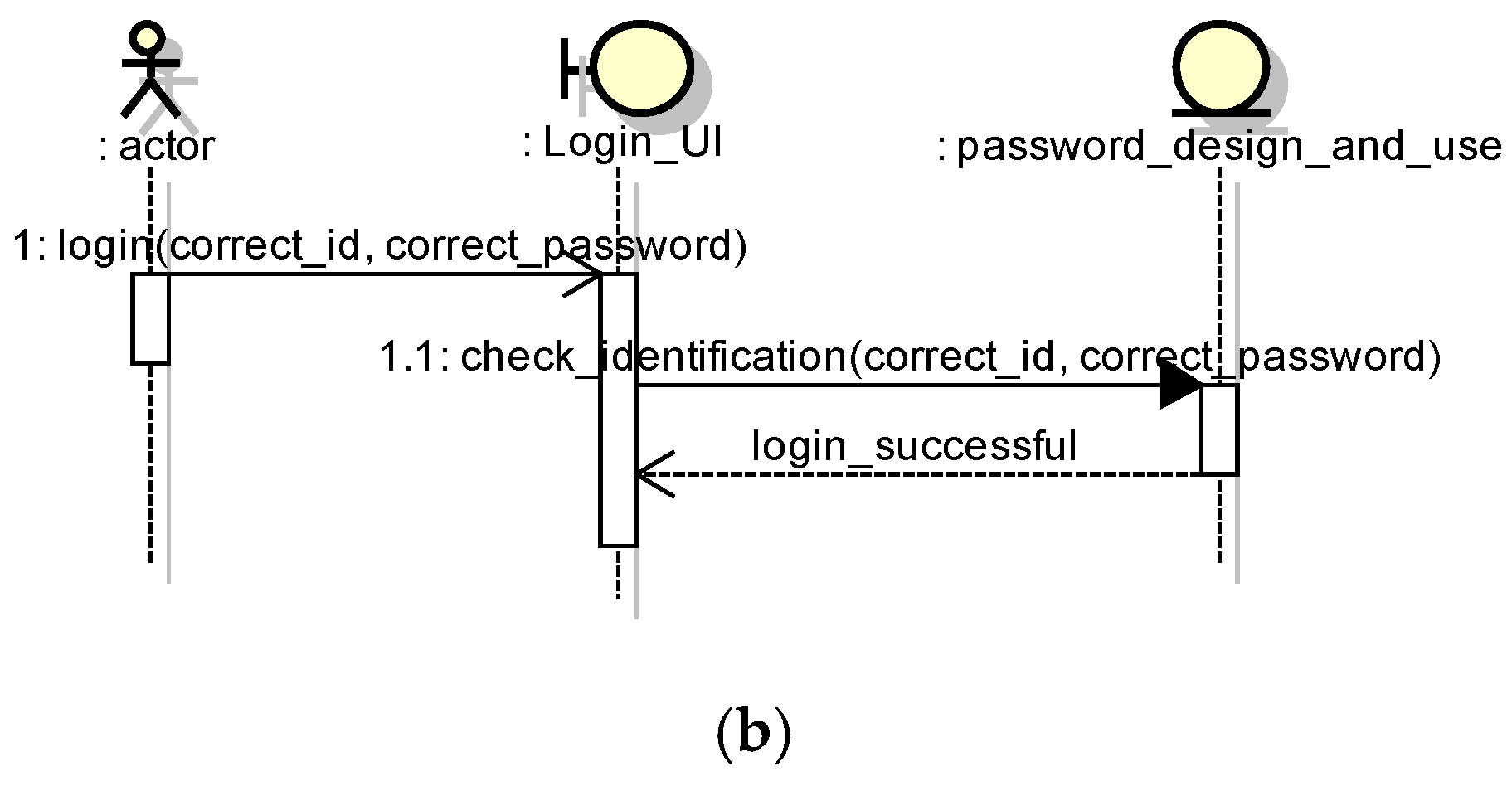

- Developers create an aspect test template for an internal processing observation from the decision table and the pattern structure.

- (c)

- Developers create a test case template from the decision table and the pattern behavior.

- (2)

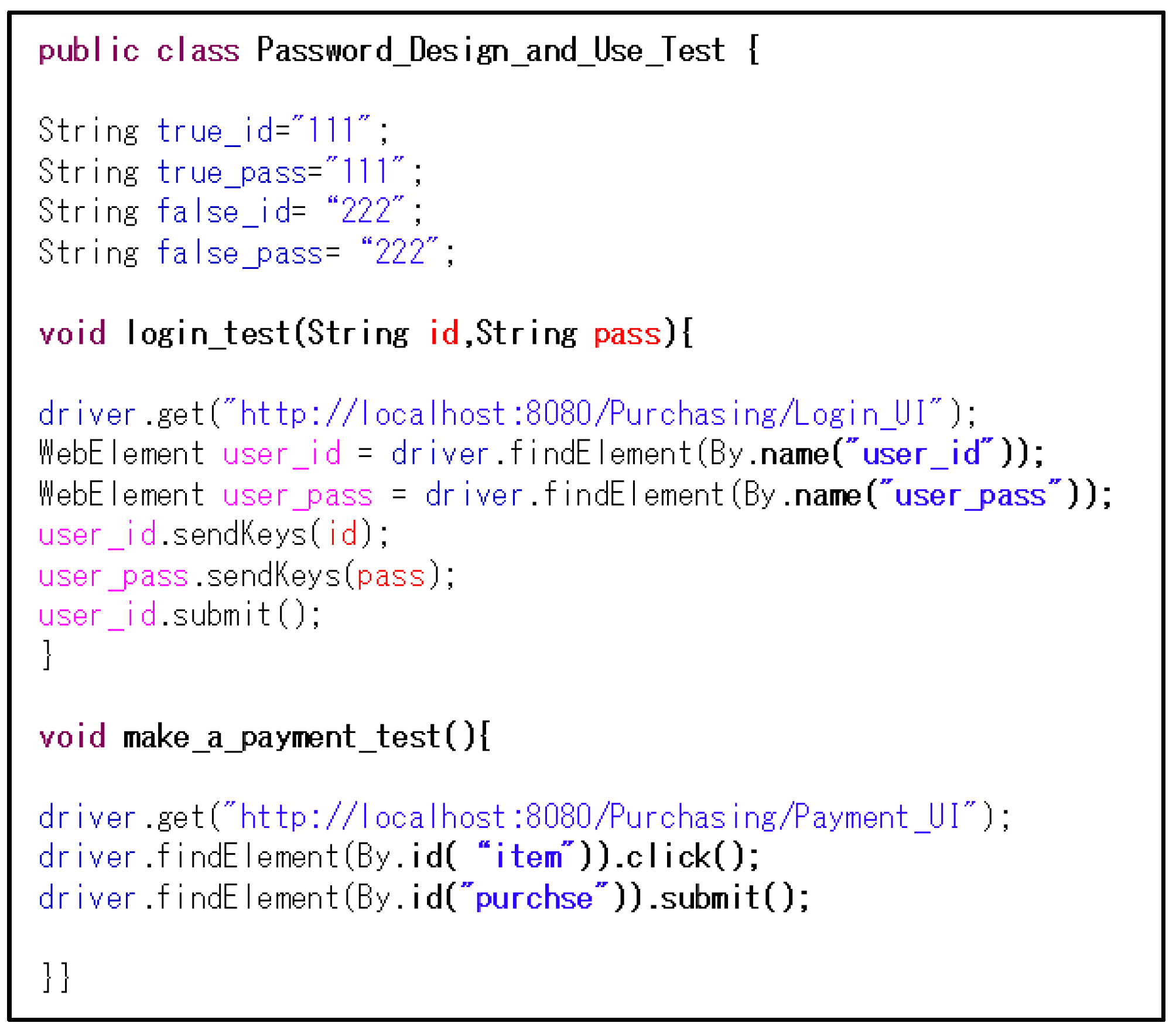

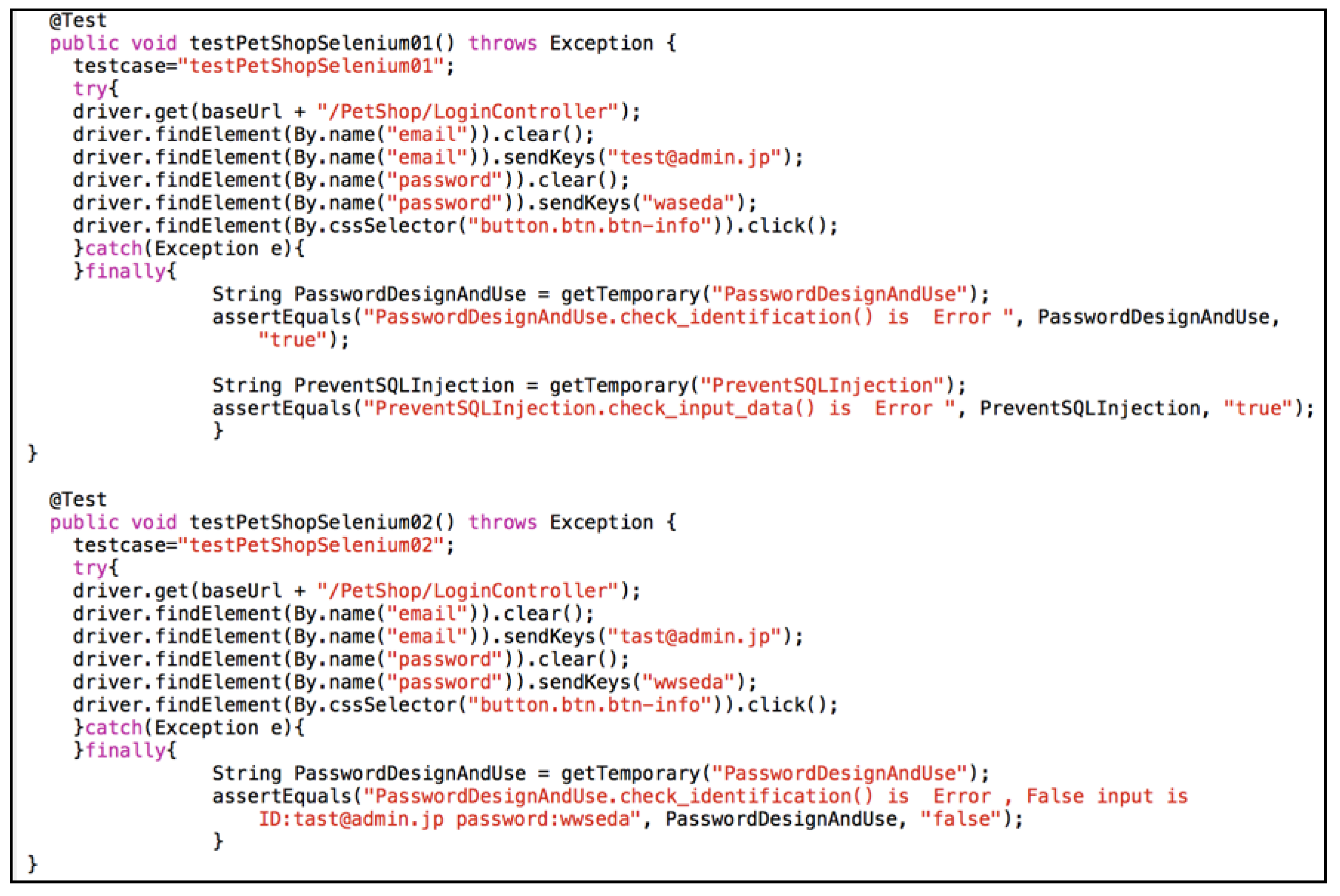

- By using TESEM, developers can easily generate concrete test cases as follows:

- (a)

- Developers bind the elements constituting the selected security design pattern and class/interface names in the given design model by specifying a class/interface name for each pattern element as a parameter.

- (b)

- Developers input the Selenium code corresponding to the target security design pattern to be tested, such as a code behaving as a login function to test the login.

- (c)

- Developers specify concrete correct values for the parameters in the test cases, such as concrete values of ID and password for testing login. Developers also specify concrete incorrect values.

- (d)

- Finally, TESEM generates test cases together with concrete test aspects using the parameters and the information specified in the above steps.
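The binding and generation steps above can be illustrated with a small Python sketch. It is an assumption-laden illustration rather than TESEM's actual template format or output: the placeholder names, the template text, and `generate_test_cases` are all hypothetical, and the generated strings are plain descriptions instead of real JUnit or Selenium code.

```python
from string import Template

# Hypothetical test case template: ${...} placeholders stand for
# pattern elements (bound to class/method names) and test values.
TEST_CASE_TEMPLATE = Template(
    "observe ${checker_class}.${check_method}; "
    "submit id=${user_id} password=${password}; "
    "expect ${expected}"
)


def generate_test_cases(bindings, correct, incorrect):
    """Instantiate the template once per combination of correct/incorrect values."""
    combinations = [
        (correct["user_id"], correct["password"], "access granted"),
        (correct["user_id"], incorrect["password"], "access denied"),
        (incorrect["user_id"], correct["password"], "access denied"),
        (incorrect["user_id"], incorrect["password"], "access denied"),
    ]
    return [
        # substitute() raises KeyError if a pattern element was left unbound.
        TEST_CASE_TEMPLATE.substitute(
            bindings, user_id=user_id, password=password, expected=expected
        )
        for user_id, password, expected in combinations
    ]


cases = generate_test_cases(
    {"checker_class": "UserDao", "check_method": "verify"},
    correct={"user_id": "alice", "password": "s3cret"},
    incorrect={"user_id": "mallory", "password": "wrong"},
)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: an unbound pattern element fails loudly at generation time instead of producing a test with a leftover placeholder.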

4.3. Tool Implementation and Example

4.4. Limitations

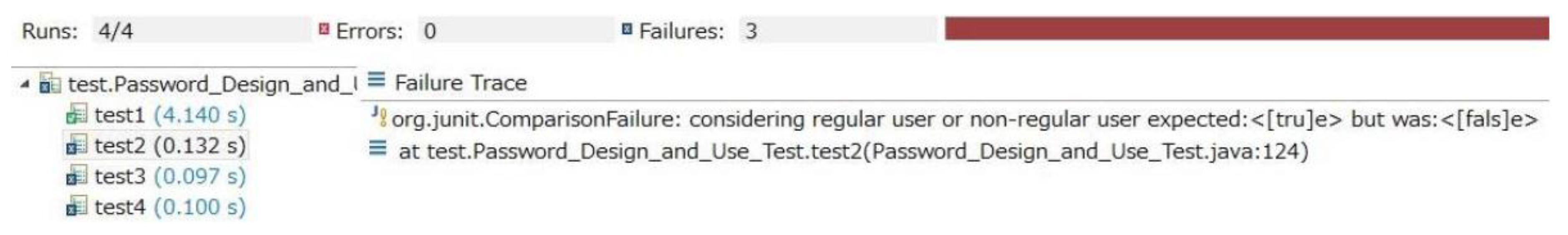

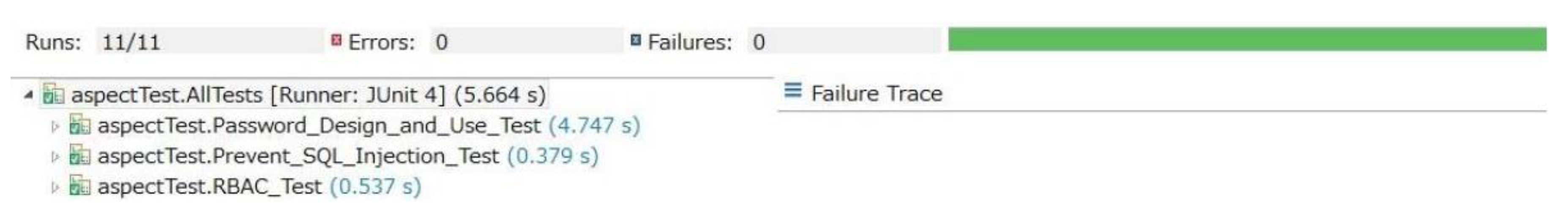

5. Case Study

6. Evaluation

6.1. Experimental Overview

- Step 1: Complete a questionnaire before the experiment.

- Step 2: Listen to an explanation of our method, JUnit, and Selenium.

- Step 3: Detect and correct the defects using the existing (our) method.

- Step 4: Detect and correct the defects using our (existing) method.

- Step 5: Complete a questionnaire after the experiment.

- What is your skill level regarding developing a program?

- What is your knowledge level regarding security?

- What is your knowledge level regarding software testing?

- What is your knowledge level regarding web systems development?

- Which method do you prefer to find defects in the software?

- Which method do you feel is more efficient at finding defects?

- Which method do you prefer to modify defects in the software?

6.2. Experimental Results

6.3. Discussion

6.4. Threats to Validity

7. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest


| | | 1 | 2 | 3 | 4 |
|---|---|---|---|---|---|
| Conditions | Inputted ID agrees with User Data | Yes | Yes | No | No |
| | Inputted Password agrees with User Data | Yes | No | Yes | No |
| Actions | Considered a regular user | × | | | |
| | Can access an asset | × | | | |
| | Considered a non-regular user | | × | × | × |
| | Cannot access an asset | | × | × | × |
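Each column of the decision table above corresponds to one test case. As a hedged sketch (not the paper's generated tests), the table can be encoded as data and replayed against an implementation. The `authenticate` function, the `USERS` data, and the concrete ID and password values are hypothetical stand-ins.

```python
# One tuple per rule (column) of the decision table:
# (ID agrees, password agrees, regular user, can access asset)
DECISION_TABLE = [
    (True, True, True, True),
    (True, False, False, False),
    (False, True, False, False),
    (False, False, False, False),
]

USERS = {"alice": "s3cret"}  # hypothetical user data


def authenticate(user_id, password):
    """Hypothetical implementation under test: returns (regular_user, can_access)."""
    ok = user_id in USERS and USERS[user_id] == password
    return ok, ok


def check_against_table(auth):
    """Return the rule numbers whose expected actions the implementation violates."""
    failures = []
    for rule, (id_ok, pw_ok, regular, access) in enumerate(DECISION_TABLE, start=1):
        user_id = "alice" if id_ok else "mallory"
        password = "s3cret" if pw_ok else "wrong"
        if auth(user_id, password) != (regular, access):
            failures.append(rule)
    return failures
```

Calling `check_against_table(authenticate)` returns an empty list for a conforming implementation; an implementation that ignores the password would be reported as violating rule 2.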

| Students | What is your skill level regarding developing a program? | What is your knowledge level regarding security? | What is your knowledge level regarding software testing? | What is your skill level regarding web systems development? | Which method do you prefer when finding defects in software? | Which method do you feel more efficiently finds defects in software? | Which method do you prefer when modifying defects in software? |
|---|---|---|---|---|---|---|---|
| 1 | H | NH | H | NH | O | O | O |
| 2 | NH | L | NH | NH | O | O | O |
| 3 | NH | NH | NH | L | O | O | RO |
| 4 | NH | H | NH | NH | O | O | RO |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Yoshizawa, M.; Washizaki, H.; Fukazawa, Y.; Okubo, T.; Kaiya, H.; Yoshioka, N. Implementation Support of Security Design Patterns Using Test Templates. Information 2016, 7, 34. https://doi.org/10.3390/info7020034
