1. Introduction

We often use the concept of energy in order to characterize our being. Aristotle (384–322 BC) described with the concept of enérgeia an active force, which transforms potentialities into actualities. Nowadays, the concept of energy is consistently used in literature, within our daily life and in physics. We may feel “full of energy”, or that “his batteries have run out”. Why do I highlight this?

The first introduction of a physical concept of energy was made by Julius Robert Mayer, and dates back to the year 1842. This concept is nowadays used within an isomorphic semantic content in physics, daily life and literature. The basic understanding is that energy is a conserved entity, may cause chains of activities, and can be stored, transformed and released. Those activities may consist of physical (chemical, biological) events, and also of mental events. The concept of energy seems to be so clear, that it does not even appear in the “Very Short Introductions” series of the Oxford University Press. Well, none of this is true for the concept of information. Why? Because the concept of information does not yet conceptualize any physical terminus. Nor does it deal with a logic that frames the openness and incompleteness of the (physically described) world.

It goes even further. Typically, science is interested in the truth of something. Even if there are different kinds of theories of truth (correspondence theory, coherence theory, pragmatism, among others), it is commonly agreed that scientific theories should deal in a certain (i.e., corresponding, coherent, pragmatic) manner with “reality”. Within those theories, contradictions should be avoided; paradoxes and contradictions should be eliminated, while—as a goal—a somehow consistent and meaningful set of sentences, principles, formulas, equations, resolutions, pictures etc. has to be laid down. This objective dimension does not reflect the main scope of “information”. Nor does the pure subjective, psychological, personal dimension itself create the core of our interest. The main force of the proposed concept comes out of the idea, that any “information” stimulates our thinking towards the incomplete and the inconsistent. We are grasping for paradoxes, contradictions and incoherent meanings, because this creates the media and level of interest in order to approach new possibilities, opinions and solutions. We are not interested in things which we already know (and it does not even matter if we agree on such things, or not). But we are interested in things that may support the opening of our minds, which help us to approach new insights: which stimulate us to new forms of thinking.

A good piece of music or art leaves us with uncountable and irreducible patterns of perceptions, feelings and ideas. This already held true for our ancestors who first resisted running away from fire that spontaneously ignited in the savanna. Based on first experiences, they discovered that there may be a chance to find some benefit of high quality: grilling meat, for instance, which could remain edible for longer periods than previously experienced and was easier to eat and digest. Or the idea of flying like a bird, anticipated already by Ikaros and Daidalos, sketched in detail by Leonardo da Vinci, and first achieved by Otto Lilienthal. We may also fail, but we take failure as a stimulus for further investigation: the discovery of programmable machines is very helpful for supporting automated calculations and managing huge amounts of data, but fails to simulate human thinking. Nevertheless, we are constantly in search of new challenges: in a word, we are continuously striving for information.

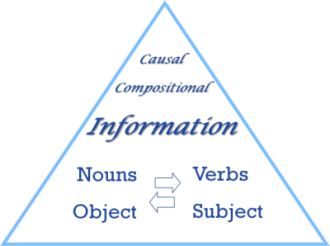

It seems that our striving for new information, for the included middle, creates a core attitude and understanding of being human. Of course, we need science in order to reproduce objective knowledge and well-arranged structures of actions. Those actions and underlying rules are commonly agreed on, and create an indispensable foundation of our culture. But the concept of information seems to deal with an integrating horizon, which spreads itself between and (from a dynamic perspective) beyond the subjective and objective dimension of reality (Figure 1). The reader may think about the axiom of the excluded middle (there exists no third term T which is at the same time A and non-A). Logicians like Joseph Brenner, Basarab Nicolescu and Stéphane Lupasco argue for an included (emergent) middle [1,2,3,4]. I am thankful to Joseph Brenner for continuing deep discussions on the subject. In short, “information” indicates the capability of systems to have privileged access to states that signal to the system the possibility of further enrichment; that is, there exists a functionality that creates and indicates fundamentally new structures.

Figure 1. Dimensions of Information. The so-called “classical logic” covers the objective dimension. Aristotle characterized three basic laws: the law of noncontradiction, the law of identity and the law of the excluded middle (example: you are in love or not; there is no in-between). But he already left a door open for the concept of the included middle, because the law of the excluded middle does not hold for future events (example: falling in love). Aristotle also characterized the causal role of the form which creates the immanent character of living systems [5].

From within the physical perspective, this concept declares the appearance of new kinds of (macrophysical) laws as “information”. An example is the (macrophysical) law/rule, which describes the structure of snow crystals. The reader may imagine that during the development of the universe the very first appearance of snowflakes took place at a somewhat late phase (that is, the capability to create snow crystals was a pure potentiality during the early phases of the universe: the universe “selected” the path for different particles to become snow crystals). But as a consequence it will be shown that “thoughts” are of an identical physical evidence: they hold the state of causal, physical laws. Such thoughts are based on the capability of systems to store traces of information (which reflect the different environmental conditions which gave rise to different systems), and to influence, based on such traces, the further creation of different kinds of thoughts.

We (as specific kinds of tracing systems) have privileged access to identify messages that offer potential for further enrichment of structure and form. Our consciousness—experience of phenomena—holds the local perspective of the universal process of structurization. But there exists no other information within this universe with regard to the conscious process; this process is the concept of the universe to solve the task (the natural law) in order to create further structure from within the perspective of individuals.

○ Postulate 2 (P2): The tendency towards further enrichment of structure holds the state of a natural law. The selection of further structural modifications is done by information. Empirical evidence for this thesis is given by the specific boundary conditions of our universe; due to the very low entropy at the beginning of the universe, and based on the interaction between the 2nd law of thermodynamics and Pauli’s exclusion principle, there is an overall tendency towards the emergence of new system structures, new systems and new (macrophysical) laws. Those laws define/explain the unity of any system.

We might already imagine a kind of ethical spirit that comes out of this thesis. If the assumption (or the knowledge) is that nature has given a task to us in terms of a natural law, or a natural rule, we are forced to create structure. This study focuses on a conceptual understanding of the informational process, and expands on our intrinsic responsibility for conscious support of the development of the active dimension of information. We are acting “right” if we create structure. This is based on communication and cooperation with all other people. It also includes all kinds of activities that enrich the structure of our biophysical sphere. The reader might think that structure might get lost. Or, malign people might destroy structure (e.g., by dominating other people; even resorting to weapons and war). We have to show in more detail that those actions are based on static, local optimizations. A new ethical spirit may arise based on the knowledge of the potential for further overall structurization. And this is the path of humanity, because the process of creating structure is the physical implementation of freedom.

This approach stimulates further remarks on the form of this paper. Typically, the scientific approach eliminates or minimizes the subjective dimension in terms of trying to achieve the highest degree of objectivity. This holds true even for psychological theories. I will rely on this approach as well, but will add within a mirror perspective the dynamization of the subjective dimension. In addition, I will argue that this subjective dimension provides—scientifically/objectively—the basis of a new ethical spirit. If nature gives us the task (as a kind of natural law) to continuously enrich and develop dynamics and structure, it has to be shown by that means—from within a physical and semantic perspective. For this reason, I would like to encourage the reader to investigate—in addition to the “objective” message of this text—using a conscious subjective dynamization. The scope of this paper is to deliver an input for further dynamization of ourselves towards the creation of new structures.

Let us look at an example: the principle of least effort [6]. This principle, originally examined by George Kingsley Zipf, postulates that any living species will naturally choose the path of least effort. Based on Zipf’s introduction and the established foundations of the principle of least effort, it seems counter-intuitive that this principle is only very rarely cited and used within scientific papers. We feel somehow uncomfortable if we hear that we always choose the path of least effort. Jeff Robbins has established that the corresponding “objective” rule is the Principle of Maximum Entropy (PME). PME is cited ten times more frequently in scientific papers than the corresponding Principle of Least Effort (PLE) [7]. By citing PME, our own self-image is not directly touched (as is the case when we rely on the principle of least effort). But against this background, Robbins explains that we are pushed towards buzzwords like “made easy” and “made fast”: the incarnation of the principle of least effort. The solution that we propose is that this principle is only the other side of the same coin. PME and PLE reflect the 2nd law of thermodynamics, and do not take into account Pauli’s exclusion principle or, respectively, Postulate P2. As a consequence (with regard to our practice of objective science) we do not consciously dynamize or develop ourselves. And there might even be a resistance to such a dynamization. An example has been analyzed by the linguist Thomas Steinfeld [8]. He analyzed the usage of verbs within the speech of managers, notably financial managers. His conclusion is that these managers tend to avoid the use of verbs, especially when addressing an audience. The reason is that using verbs implies activities of their own and may also lead to further developments. So the main message of those managers is: “I will give you some buzzwords in order to satisfy your questions. But I will not give you any further information about what I’m really going to do.”

What could help us to overcome such kinds of problems? What closes the gap between our subjective awareness and constitution and the objective boundary conditions that are given to us within this world? It will be argued that this is “information”, which is created by us from within a causal-compositional perspective. Further, I will argue that the knowledge and usage of this concept of information will feed us with physically based energy, support further personal development and help us to enter into an active ethical spirit. The adjective “active” denotes the fact that it is possible to overcome “passive ethics” (controlled by various kinds of “you should not …” rules) by an active approach (the simple active rule, which exists as well within a physical context, says: create more structure with regard to the overall system: “be fair”, see Part II of this study). However, we have to keep in mind that the terminus “information” is also used for the surveillance of people, which is executed by informants. It will become clear that this is just a local optimization.

It is essential to draw a correspondence of this approach with the Theory of Information, as introduced by Mark Burgin [9]. The main difference is that Burgin’s descriptive theory still focuses on a strong relationship between what is to be understood as “information” and what is to be understood as “structure”. Structures are conceptualized as quantitative descriptions of some arrangements of parts (elements) of a whole potentiality of possible compositions of those elements. The proposed causal-compositional concept of information declares the overall (set of) rules that define any possible and valid arrangement of elements as “information”. While Burgin focuses more on the local changes of a system and its structure (“In a broad sense, information for a system R is the capacity to bring about changes in the system R.” [9] (p. 99)), the proposal is to incorporate the overall rules as fundamental entities into the concept of information. A given structure displays a “projection” of a physical regularity/law, which describes the unity and physical reason of that structure. An example is the law that describes the hexagonal structure of ice molecules.

This concept of information is based on the following three principles. These principles are derived from postulates P1 and P2:

Principle 1 (Pri-1): Messages do not contain any information per se.

The informational content (meaning) of any message is given by the codified transformation of systems, which may release and/or receive portions of energy/matter (“messages”, “signifiers”). This includes the sender as well as the receiver. Information is conceptualized as the codified (code: the rule, the natural law) description of the transformation of any kind of system. By enlisting such transformations, sender and receiver are constitutive elements of this scenario, accompanied by the corresponding exchange of portions of energy/matter. The code identifies the law/rule that defines the unity of any system. Any system transformation may cause (or is caused by) a certain emission or reception of portions of energy/mass. Classical concepts of semantics study the meaning of something and typically focus on the relation between signifiers (words/phrases/symbols) and what they stand for [10]. The process of this study has to be inverted, as the meaning of any signifier is given by a codified transformation of systems. A signifier does not code any information by itself, but the informational content becomes coded within the overall informational scenario. The information is primarily given by the transformational rule/law of the system, and secondarily by the specific transformation that happens in the context of the emitted/received signifier (portions of energy/matter). Multiple communication scenarios may be combined and/or nested within each other. The appearance and any further internal understanding of “messages” is based on the concept of codified system transformations.

Principle 2 (Pri-2): The completion principle. The universe is logically based on physical laws, which become completed from within a fundamental perspective. Any system (physical object) transformation holds a potentiality for newness. The fundaments of such newness are derived out of a conceptualization of the informational incompleteness of physics. This second principle provides a basis to explain and describe the development of any system, including the emergence of new structures and new kinds of systems. This item reaches beyond physics and leads to the topic that creates the heart of our understanding of real information; any information of value stimulates receiving systems to new kinds of structures (for human beings: new knowledge and insight). According to the Pauli Exclusion Principle, our universe must be fundamentally diverse, and has the potential to become more diverse (heterogeneous), as well as more homogeneous, by the operation of the 2nd Law. Both the Pauli Exclusion Principle and the 2nd Law of Thermodynamics enable the appearance of structured matter/structured systems. Based on the very low entropy at the beginning of the universe, there is an overall tendency towards the emergence of new system structures and new systems. New kinds of systems display new (macro) physical laws. For example, the chlorine atom holds the potential to interact with sodium atoms and may, by this process, complete the configuration of its outer electron shell. This also holds true for systems that are more complex. We see in all cases that the number of possible system states increases from within an overall perspective; the phase space becomes completed and enables new kinds of functionalities (new system states may act within a causal sense and may become new causal functionalities).

What is the relationship of those principles to a semantic concept of information? A well-known and consistently developed proposal has been put forward by Luciano Floridi. He declares that an “instance of Information, understood as semantic content […] consists of n data; the data are well formed; the well formed data are meaningful.” [11] (p. 21) So what does “well-formed data” mean within our approach? The ontological base for any “well-formedness” is given through the principle Pri-2, the completion principle. Put simply, this principle declares that new (macrophysical) laws appear continuously within our universe; the universe is completed daily or even every second. Examples are the appearance of atoms, of snow crystals, of living species and even of thoughts. It will be shown in detail that (through the physical basis provided by the 2nd law of thermodynamics, which interacts with the Pauli Exclusion Principle) any kind of system continuously approaches a maximization of system states. This also includes new kinds of developments and new kinds of systems. Now, “data” will be explained as “well formed” and “meaningful”, if the conditions of this completion principle are fulfilled. For the very first snow crystals that appeared in the universe, such “well formed data” is given by the surrounding conditions (temperature, pressure, atmospheric humidity etc.), and also through the appearance of water molecules, which could be assembled in order to extend the structure of the growing snow crystals. It is well understood that a snow crystal occupies a larger number of possible system states (entropy), in comparison to single water molecules. Now, ontologically new information has appeared within this universe; this is the (macrophysical) definition of possible structures of snow crystals. The same holds true for new kinds of thoughts and new kinds of behavior. The main difference to living species is that those kinds of systems are much more selective with regard to the “well-formedness” of any kind of data. In short, this selectivity deals with the ability of any living system to learn. “Information” points to those irreversible compositions of new system structures and functionalities that are subsumed under the umbrella of learning. This process will be explained through the concept of dynamization of so-called boundary conditions.

In sum, Floridi’s definition of information is to be characterized as a specific application of our “information-as-causal-compositional-potential” explanation to language-using, learning systems.

Principle 3 (Pri-3): Information is the causal-compositional process (or proceeding process). Any information is based on the (rule-based) capability of any system to interact with other systems and to compose on a non-algorithmic basis new overall system states (information holds a causal-compositional potential). Any piece of empirical information is given by the set of (macro) physical regularities/laws, the required set of dynamized boundary conditions (in order to enable modifications of system structures/new macrophysical laws) and their corresponding system transformations. The terminus “composition” declares a global, non-algorithmic capability as its core impetus. Validation is given by the aim of continuously increasing possible system states. This process comprises the dynamization of so-called boundary conditions into the actual physical scenario, and enables the initiation of new overall system states. These overall system states are characterized by a higher number of possible states, in comparison to the number of system states in a non-interacting situation. In the case of the same number of possible states, we are speaking of reversible interactions.
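The comparison of state counts in Pri-3 can be made concrete with a small counting exercise. The sketch below is only an illustration under assumptions that are not part of the original text: each system is modelled as a set of distinguishable sites sharing indivisible energy quanta (an Einstein-solid-style toy model), and the function names are hypothetical. It counts the states available to two isolated systems and to the same two systems once they may exchange quanta.

```python
from math import comb, log


def multiplicity(sites: int, quanta: int) -> int:
    """Ways to place `quanta` indistinguishable quanta on `sites` distinguishable sites.

    Assumed toy model (stars-and-bars counting); not taken from the source text.
    """
    return comb(quanta + sites - 1, quanta)


def isolated_states(sites_a: int, quanta_a: int, sites_b: int, quanta_b: int) -> int:
    """State count when the two systems cannot exchange quanta."""
    return multiplicity(sites_a, quanta_a) * multiplicity(sites_b, quanta_b)


def interacting_states(sites_a: int, quanta_a: int, sites_b: int, quanta_b: int) -> int:
    """State count when all quanta may move freely across both systems."""
    return multiplicity(sites_a + sites_b, quanta_a + quanta_b)


def entropy_gain(sites_a: int, quanta_a: int, sites_b: int, quanta_b: int) -> float:
    """Entropy change in units of Boltzmann's constant: ln(interacting / isolated)."""
    before = isolated_states(sites_a, quanta_a, sites_b, quanta_b)
    after = interacting_states(sites_a, quanta_a, sites_b, quanta_b)
    return log(after / before)


if __name__ == "__main__":
    # Two three-site systems holding 4 and 2 quanta respectively.
    print(isolated_states(3, 4, 3, 2))     # states without interaction
    print(interacting_states(3, 4, 3, 2))  # states with interaction
    print(entropy_gain(3, 4, 3, 2))        # positive: more states are accessible
```

In this toy model the interacting count is never smaller than the isolated one, and the two coincide only in degenerate cases (for instance, when no quanta are present), which loosely mirrors the distinction drawn above between state-increasing and reversible interactions. The model does not capture the non-algorithmic aspect of composition that Pri-3 emphasizes.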

Information is the causal-compositional process:

○ which describes as initial values a particular physical system, its actual state, its potentially possible state space and accordingly, its region of stability, as well as the lawfully regular (and thereby) macrophysically-measurable description of the system,

○ which describes as further initial values the description of an energetic-material, structural influence on the system,

○ which describes as the resultant values the physical system, its actualized state, its actualized potential phase space, and accordingly its region of stability, as well as the lawfully regular and thereby macrophysically-measurable actualized description of the system,

○ which also describes further possible output values (often designated as output signal).

Growth and development in nature is bound to an overall physical process that follows the principle of minimal energy, in conjunction with the completion principle Pri-2. Macrophysical, algorithmic rules/laws appear as partly stabilized invariants with regard to the ongoing, non-algorithmic process of development. Roger Penrose points out that, within the perspective of quantum physics, the non-algorithmic process is described by a vectored, linear superposition of all possible system states. All possible states have to be superposed, and no possibility exists to reduce the amount of superpositions through the use of periodic patterns (periodic patterns are preconditions to create cyclic algorithms). In this way, nature is trying out, in terms of an “intelligent search” [12], an immense amount of superpositions in order to identify a next state according to the principle of minimal energy. Penrose explains this behavior with the example of the non-algorithmic growth of quasi-crystals. They show in detail a pattern where the elements are composed together in a non-periodic manner. A similar process enables adaptation and learning for living species. If environmental conditions do not change, then the system structure does not become rearranged. If the environmental conditions change, then the system rearranges its elements and their connections through a new overall pattern. This rearrangement is undertaken by a full-blown, non-algorithmic superposition (“brute-force”). For this reason the structure of the pattern cannot be calculated; it holds a kind of a new idea, which fits to the principle of minimal energy. This compositional concept of non-algorithmic superposition describes the fundamental quality of information, which is the concept for newness. Then, of course, in a next step, abstraction processes may take place, and quasi-algorithmic patterns become visible. From a systems perspective, reversible system transformations take place on the basis of such an algorithmic framework. But in this case, no new structure appears. Contrariwise, the ever-continuing process of creation (and also “destruction”) of new structures becomes mediated and enforced by living species, and the human mind holds a privileged access to this process. Nature is incomplete from within a fundamental perspective, and it is our task to complete nature, as well as ourselves, within a continuous process. This is, in fact, the place where paradoxes, new metaphors or just simple “failures” enter into the informational scene.

Another specific conceptual horizon has to be considered. This horizon deals with a core element in the rise of specific information: the fact that the subjectively comparative elements of this causal-compositional process of development are thoughts. We are declaring the nature of thoughts as specific kinds of causal and physical laws. And as those thoughts are enrolled through culturally developed words (but newly combined from within individuals’ perspectives), personality comes into existence. That is, any thought incorporates on the one hand new and unique kinds of physical laws within this universe, and on the other hand, the core of what we call “information”. In a word, information deals with physical, lawful uniqueness and, for this reason, personal existence. Here, we are at the heart of our interpretation of the included middle. Of course, the cultural process will also flatten, align or eliminate newly-created ideas and concepts. This will give foundation to the objective dimension of information. But this objective dimension can and will only develop with regard to the emergence of the dynamic subjective process, with regard to the included middle. And this process by itself is not computable (in an algorithmic sense) by a specific device. This is because, within any such process, the specific stream and history of information has to be taken into account, which describes the historical, physical, biological and cultural existence of any individual. However, this process can be imagined and simulated by individuals with comparable histories and experiences. For this reason, the creation and exchange of such information lies within the heart of a human being. And of course, regarding the support that computers might add to this process, we may envisage the possibility and necessity of the so-called information society.

So, where does semantic information start from? Who of our ancestors was the first one who created “knowledge” out of “well-formed data”? When did we start to create knowledge ourselves? What makes the heart of any creative process? What do literature and art say about such creativity? “A good poem adds something to our world which was not there before”. With such words, the German poet Reiner Kunze characterized the topic of “good art” during a lecture in Radebeul, Germany. During his lifetime, he perceived many different kinds of information. Initially living in the GDR, and having been pursued and harassed by the Stasi (East Germany’s ministry of “state security”), Kunze later emigrated to the western part of Germany. He was forced to leave his country and home. What I want to emphasize here is that there is a spiritual life, and this life is characterized by an inherent expansion and completion process. While missing many usual conveniences, and even members of his family, Kunze (and many similar to him) experienced and built himself (and his neighbors) from within such an informational completion and expanding process. An analogous scenario within the physical world is the very first creation of a snowflake within an evolving planetary system. Although based on environmental influences and signals, the very first snowflakes can be clearly described by a (macro) physical law. This law holds a similar informational structure as a good poem. A good poem helps us to better understand and live within this world. It acts like a guiding principle. And so does the physical law, which describes the appearance and structure of snowflakes.

Today we are entering into the so-called information society. But we live in an unbalanced world. Supported by the use of modern information technology, the world becomes even more out of balance; the distance between poor and rich seems continually to grow. Typically, information technology deals with human effort removal. “Made easy” seems to be a central value that is supported by modern IT. Is there a realistic counterforce against “the automatic tie-in of goodness with human effort removal” [7]? We will argue for a real counterforce, which supports and enables further communication, further growth of interconnections and the development of new relationships between people. This counterforce is “information”. If we look at our entire universe, the development of structure, of life and of consciousness, then we have a first hint about the existence of such a counterforce. Furthermore, we will argue that it is a task of our conscious life to enrich communication. In addition, the energy to fulfill such a task seems to be sponsored by natural evidence.

This study introduces the compositional perspective in information science and physics in three steps. The first is built on Shannon’s concept of information. The logical level of causal (physical) rules is introduced as a core ingredient of information. The second step analyzes the already-existing compositional elements within the classical decompositional approach in physics. The basic compositional component of the proposed concept of information will be introduced: the interaction between the 2nd law of thermodynamics and the Pauli Exclusion Principle. The third step comprises the field of thoughts (Gottlob Frege [13,14]), of knowledge and of consciousness. This leads to a physical interpretation of the thinking process; “thoughts” characterize the logical foundation of the individual-specific, the causal-included middle. Finally, the conceptual foundation of our intrinsic responsibility to support the development of the active dimension of information will be derived from this first part of the study.

3. Second Step: System Composition and Physics—Towards a Causal Compositional Concept of Information

“Physicalism is the thesis that everything is physical, or as contemporary philosophers sometimes put it, that everything supervenes on, or is necessitated by, the physical” [15]. There is something fundamental that is missing in physicalism. The typical argument that explains any appearance of structure is based on a reference to the very low entropy existing during the birth of the universe. The core argument is that, within any physically described process, there is always another characteristic of this process given, which is typically not described by physicists. Taken literally, we could call this the dynamization of the so-called boundary conditions. Typically, the boundary conditions of any physically described scenario do not change during such an explanation. But for the cases we are interested in, they do. Such changes always happen when new structures appear. Any new kind of molecule and any new thought initiates such new kinds of structures. We call this new, invariant organization principle (which may be a new kind of a physical law) information. Any new thought is in a physical sense identical to a new kind of a physical law. As far as I can see, physicists have not drawn their attention to this phenomenon. But this phenomenon explains a core element of any concept of information, and this is the concept for newness. The physicist David Bohm can be seen as an exception, because on the one hand he introduced a physical concept of so-called hidden variables (which incorporates a concept for the dynamization of boundary conditions) [16]. And on the other hand Bohm explains an idea of “active information” [17], which declares a new kind of causal influence on evolving systems. He argues for the idea of self-determination of any physical system. This self-determination happens with respect to the boundary conditions of the system. That is, a potentiality or some potential information is given, which may cause the appearance of new system models and states. Critical points may occur during the development of a system. Those critical points offer the possibility that new, more complex structures may develop. Those structures become stabilized if the entire system may initiate more system states than the system could achieve in its preceding structure. Self-determination is the physical concept explaining the creation of identity.

According to the Pauli Exclusion Principle, our universe must be fundamentally diverse, and has the potential to become more diverse (heterogeneous), as well as more homogeneous, by the operation of the 2nd Law. The Pauli Exclusion Principle underpins many of the characteristic properties of matter, from the large-scale stability of matter to the existence of the periodic table of the elements. The goal is to show that the generation of entropy also causes the creation of structure (“Gestalt”). But Pauli’s exclusion principle has to be taken into account as well. This example was designed by Carl Friedrich v. Weizsäcker [18], although I have to add the notion of Pauli’s exclusion principle as well. We design a simplified small universe, which consists of atoms. Only one type of atom exists, but this type can assemble itself into larger molecules. The rules of this assembly process represent the equivalent of the Pauli Exclusion Principle within this small universe. Such molecules may be called “condensed matter”. Each atom and molecule may contain quantities of energy. The energy is given in small, indivisible portions (quanta). There exists an overall amount of energy E, and each molecule may hold a number q of energy quanta. q itself consists of an amount qB (binding energy) and an amount qA (activation energy, which may be interpreted as kinetic energy). The activation energy qA is given by a non-negative integer (qA = 0, 1, 2, …).

The binding energy qB is never positive. Its magnitude is one less than the number of atoms that are assembled within a molecule:

qB = 1 − k (k: number of atoms).

For a single atom qB = 1 − 1 = 0; for a molecule made of two atoms qB = 1 − 2 = −1.

Within this condensational model of matter we have molecules mi of different size i (i = 1, 2, …, 5). All molecules are made of the same type of atoms (m1 = 1 atom, m2 = 2 atoms, …). Within each molecule a binding energy of value −1 (b-quant: binding-quant) is required for each atom bound beyond the first, so that mi holds i − 1 b-quanta. Example: The molecule m4 requires a binding energy of value −3, that is, 3 b-quanta. We can now put those molecules into a thermal bath of value Q = −3, −2, …, 3 and so forth. For each molecule we can now calculate the activation level q(mi, Q) = mi(b-quant) + Q, where mi(b-quant) = i − 1 denotes the number of b-quanta of mi.

Example:

a) m4, Q = −3: activation level q(m4, −3) = 3 − 3 = 0

b) m4, Q = 3: activation level q(m4, 3) = 3 + 3 = 6

Example b) shows that we have, for a thermal bath of value +3, exactly 6 thermal-quanta injected to the molecule m4. We may also assume that molecules do not exist with negative activation levels.
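The two worked examples can be reproduced with a few lines of Python. This is only a minimal sketch of the rule stated above: the function names `binding_quanta` and `activation_level` are hypothetical, and returning `None` for a negative level is my way of encoding the assumption that such molecules do not exist.

```python
def binding_quanta(k: int) -> int:
    """Number of b-quanta held by a molecule of k atoms (magnitude of qB = 1 - k)."""
    if k < 1:
        raise ValueError("a molecule needs at least one atom")
    return k - 1


def activation_level(k: int, bath: int):
    """Activation level q(m_k, Q) = (k - 1) + Q for a thermal bath of value Q.

    Returns None when the level would be negative, following the assumption
    that molecules with negative activation levels do not exist.
    """
    level = binding_quanta(k) + bath
    return level if level >= 0 else None


if __name__ == "__main__":
    print(activation_level(4, -3))  # example a): 3 - 3 = 0
    print(activation_level(4, 3))   # example b): 3 + 3 = 6
```

The same function can be used to scan a whole range of bath values Q for every molecule size from m1 to m5.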

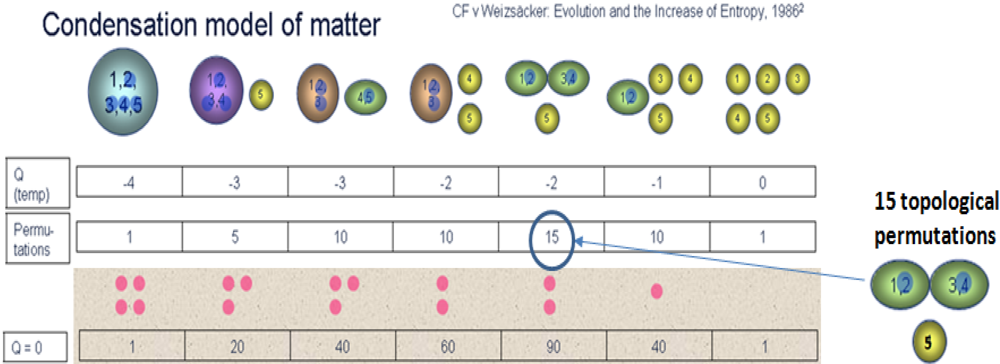

Figure 2 demonstrates the possible numbers of system states with regard to different levels of energy Q (different temperatures). For Q = −4 no solution is available; such molecules would just not exist. Existence becomes possible by stepping into the next value of Q = −3. The big molecule made of five atoms contains a single energy quantum. 2 types of molecules show up, but do not contain any energy quanta. 4 types of molecules do not yet come to existence.

We may assume now that those thermal-quanta can freely move within each molecule. Let us provide a brief explication for this assumption. Feynman gives an explication by discussing the hydrogen molecule ([19], chapter 10–3). He gives names to the electrons: let us say “a” and “b”. Let us call the protons “P” and “Q”. According to this, we may distinguish between the following base states:

Base State 1: “Pa, Qb”

Base State 2: “Pb, Qa”

But this is not yet the whole story. If those electrons have the same spin, then no molecule will be assembled. Both atoms will push away from each other. But if they have different spin, then states 1 and 2 become possible (“potential”). It is even more complicated. The spin of the protons may also differ. That means, we may have eight different base states of a hydrogen molecule.

Figure 2.

Condensational model of molecules within a thermal bath, showing the number of possible microstates for each possible set of molecules made up of five atoms.

But even more states are possible. Both electrons may be assembled around one proton. This is a state of higher energy (in fact, different kinds of such states are possible). But it is a possible state (example: it is the state of lowest energy, if we look at the NaCl molecule).

Now, let us come back to the condensational model. If there is activation energy qA available, then states beyond the state of lowest energy become “potentialized” (Brenner [1]). That is, both electrons may be assembled around one proton, without destroying the molecule. Given this, the following additional Base States become potentialized:

Base State 3: “Pab, Q”

Base State 4: “P, Qab”

Of course, this activation energy qA may also move to a single atom. Then, consequently, the electron will occupy a state of higher energy. But the atom still exists (that is, the electron will not disappear from the atom).

Of course, there are limits regarding a maximal activation level. By passing these limits, molecules and atoms will be destroyed. This limit is usually at higher values, and has no effect on the small amounts of activations within our model.

Figure 3 shows an example where all possible permutations of thermal-quanta are explicitly displayed. Two thermal-quanta create all possible permutations within three different kinds of molecules. I have also displayed the number of possible topological permutations. The example in Figure 3 shows that the set of three molecules (2 × m2, 1 × m1) can be created in 15 different permutations of five individual atoms. The number of possible microstates equals the number of thermal permutations multiplied by the number of topological permutations. Weizsäcker shows with this example that for five given atoms it is much more probable to create structured systems than just to remain as separate single atoms. For cooler temperatures, the median of the system size is even larger. In a simplified sense, this scenario is also true for our universe. Weizsäcker argues that the creation of planet systems can be understood in a similar manner. If the beginning of the universe is characterized through single, separate elements (fog) and high temperature, the expansion and cooling of the universe creates by itself higher probabilities for systems of skeletons. By adding gravitational forces, those skeletons will create solar systems. There is no heat death at the end of the universe (metaphorically speaking, since, of course, the universe does “end”).

Figure 3.

Condensational model of molecules, displaying all possible permutations for the molecule made of 2 times m2 and 1 time m1.
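The count of 15 topological permutations quoted for Figure 3 can be checked numerically. The sketch below is not taken from Weizsäcker's text: it assumes that atoms are distinguishable, that thermal-quanta are indistinguishable and can be distributed freely among the molecules (a "stars and bars" count), and that the number of thermal-quanta equals the total energy minus the total binding energy. All function names are hypothetical, and the thermal count may differ from the convention used in the figure.

```python
from collections import Counter
from math import comb, factorial, prod


def topological_permutations(sizes):
    """Ways to split sum(sizes) distinguishable atoms into molecules of these sizes."""
    atoms = sum(sizes)
    same_size = Counter(sizes)  # molecules of equal size are interchangeable
    denominator = prod(factorial(k) for k in sizes) * prod(
        factorial(m) for m in same_size.values()
    )
    return factorial(atoms) // denominator


def thermal_permutations(quanta, molecules):
    """Ways to place indistinguishable quanta on the molecules (assumed rule)."""
    return comb(quanta + molecules - 1, molecules - 1)


def integer_partitions(n, largest=None):
    """Yield every multiset of molecule sizes that uses exactly n atoms."""
    largest = n if largest is None else largest
    if n == 0:
        yield ()
        return
    for first in range(min(n, largest), 0, -1):
        for rest in integer_partitions(n - first, first):
            yield (first,) + rest


def microstates(sizes, total_energy):
    """Thermal permutations times topological permutations, as stated in the text."""
    binding = sum(1 - k for k in sizes)  # qB = 1 - k for each molecule
    thermal = total_energy - binding     # quanta left over as activation energy
    if thermal < 0:
        return 0                         # this set of molecules cannot exist
    return thermal_permutations(thermal, len(sizes)) * topological_permutations(sizes)


if __name__ == "__main__":
    print(topological_permutations((2, 2, 1)))  # 15, as quoted for Figure 3
    print(thermal_permutations(2, 3))           # 6 under the stated assumption
    for sizes in integer_partitions(5):
        print(sizes, microstates(sizes, total_energy=0))
```

Under these assumptions the set (2 × m2, 1 × m1) at total energy 0 carries two thermal-quanta, which matches the case shown in Figure 3, while five separate atoms carry none and contribute a single microstate.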

The scope of this example is the visualization of a causal-compositional scenario of a self-forming system in terms of self-determination and the creation of identity. The self-determined state of the system is the state having the highest probability. Higher level effects of stabilization are fully determined by lower level equations (i.e., quantum field theory)—but this is just a kind of notation, as higher level effects (macroscopic physical laws) are just numerical/heuristic condensations of the quantum field equations on lower level. But new ensembles of systems will show up at higher levels, and can be characterized by new sets of macrophysical equations. These abstract laws hold a causal state within certain boundary conditions, and also influence living systems. The forms of fins and wings are examples of the causal influence of physical forces on living species. The use and invention of, for example, mechanical tools counts in the same direction. The design and use of tools expresses equivalent causal influences of physical forces. The application of the lever rule in order to throw stones towards an enemy might be an example. In the end such new laws—new mathematical models—complete the hierarchical description of systems.

If the potentiality for the appearance of more complex systems is given, then we speak about upward causation. The whole is, to some degree, constrained by the influence of the parts (upward causation). But this whole will enable the accessibility of an enriched phase space for all parts of the system. This new system is characterized by a new mathematical model, a new kind of macroscopic physical law. And this new law characterizes on the other hand the downward causation of this overall process of structurization. An example in physics is the sudden appearance of crystals within freezing fluids. The entire fluid becomes restructured, due to this overall process of freezing.

But this scenario does not yet deliver a notion of “signals” and “messages”. Simply put, the terms “signal/message” are used for systems that will change their state, whereas such a change is determined and caused by an incoming event. We name this informational scenario “communication”,

a) if a system is transformed from one state into another state,

b) if this transformation causes the emission of a certain amount of energy/matter,

c) if this energy/matter gets absorbed by another system, including the consecutive change of the state of the receiving system.

Incoming signals may also force the creation of new transformations. Such new transformations are based on the capability of systems to store traces of information (which reflect the different environmental conditions which disturbed the different systems), and to influence, based on such traces, further creations of different kinds of transformations. Such behavior is a basic characteristic for learning and for living systems. Let us now develop within an example the causal compositional concept of information with regard to living species (Figure 4, Figure 5 and Figure 6).

Figure 4.

The Causal-Compositional Concept of Information: an overall Transformation Framework (1) codifies the meaning of an incoming message (2), and an action scheme (3) is subsequently triggered; the finch, of course, dissipates energy during its motor activities, but not with regard to the informational scenario: incoming signals trigger action schemes directly, without further modifications of action schemes.

Figure 5.

Composing and storing new sets of transformations; energy is dissipated through adaptations and modifications of action schemes; the transformational framework develops towards further superpositions of system states (4), where new states become stabilized due to changing boundary conditions (5a, 5b).

Figure 6.

Composition of new structures: the shape of the classical fly is transformed towards the shape of a wasp (6); the initial scenario (2), (3) does not take place any more.

The first scenario describes the application of an example of typical, well-established behavior (Figure 4). A bird (perhaps one of Darwin’s finches) may be specially suited to eating a particular type of gnat. Nothing new gets invented. It is well understood that the activities have to increase the sum of the entropy of the system [20]. Within our example, the system consists of some finches and their environment. From within this perspective, the forces for all activities are fed through the increase of entropy. The action schemes themselves are one part of an overall transformational framework that aims to create structure and to increase the entropy of the world (Figure 4: the action scheme describes the activity of the species; the causal informational content of this activity is given by the amount of the growing entropy). Those action schemes are also embedded into the overall transformational framework, which holds the character of a causal, macrophysical law.

We have to note that the incoming signals do not represent such macrophysical laws, but nevertheless they are embedded into the whole scenario and hold the character of informational triggers. The action (action scheme 3) is ultimately caused by an incoming signal, which triggers a stored transformation (action scheme). Bennett has shown that only the destruction of information necessarily dissipates energy [21, 22, 23]. From within a physical perspective, this interpretation deals with the disassembly of molecules and/or atoms. This process may consume energy, but also dissipates energy. Bennett claimed that the previous state of the system needs to be reset (erased), so that the new program could be stored, including a certain amount of data storage capacity. Given this, Bennett (and also Toffoli [24]) showed that any program could be implemented within the framework of a reversible definition of computation. Those results are consistent with the current proposal. That is, any “information” is given by the system of rules that transforms input data into output data. Subsequently, Grassmann pointed out that pure information transformation does not consume any energy [25].

Thus, the source of nutrition disappears when the finch arrives (blown in by a sudden storm) at a foreign island (Figure 5). Such birds have not yet adapted to specific appearances of nutrition, especially when they are still young. Usually, the young bird learns about its sources for nutrition by imitating the behavior of a parent. The specific shape of a gnat will be stored in the bird’s memory by dissipating energy.

Certain neural network substructures will be bound together. It is interesting to see that this kind of hierarchical system architecture has also been introduced into the engineering concepts of neural networks (see for example Moriarty, D. et al. [26]). The energy to establish connections comes out of the success of such activity. But our traveling finch did not have the chance to learn by imitation. Its neural apparatus has to stimulate itself. One of the astonishing results is that certain types of finches are more adept at working with tools than chimpanzees. For example, they use small sticks to collect insects that live in holes. Our finch only dissipates energy by learning new sources of nutrition (that is, modification and adaptation of action schemes).

The next scenario shows the inverse case (activity 4): the appearance of a marmalade fly is given by activity 4 (the marmalade fly was named insect of the year 2004 by the German Entomological Institute). This insect has established a baffling mimicry: it looks like a wasp and so will not be eaten by birds that dislike wasps. In this way, the marmalade fly also increases the entropy of the world, because it is specially adapted to specific nutrition that is not available to birds. Here, we can see that this kind of information is created by the overall causal-compositional framework. It is important to understand that the entire system also enables structures of learning that are not coupled to kinds of action schemes. We will develop this kind of activity deeper in Part 2 of this study, with regard to the concept of self-development. Although the marmalade fly may not be considered a liar, this insect already symbolizes the concept of self-development towards new kinds of identities.

Portions of information do not exist (this would be a “portion of a rule”). What exist are signals/messages or portions of energy/matter/structure. The informational process holds another ontological state as opposed to any other portion of energy/matter/structure (as described in decompositional physics). The informational process delivers the primary non-algorithmic construction (composition) of systems out of its parts. The non-algorithmic characteristics are given through the incorporation and dynamization of formerly unconceptualized boundary conditions. From an ontological perspective, the informational process conceptualizes the emergence of newly appearing (macro-) physical laws. For this reason it becomes evident that information causes a causal process, rather than “is” a causal process:

○ Any portion of energy/matter/structure (or more complex systems) holds the capability to interact with other systems and to initiate new overall (macrophysical) system states and system structures. This portion is called “signal/message”, if such an interaction takes place. This new system state/structure displays new information. Such a state/structure has already existed as a potentiality of the participating elements/systems. This potentiality has become actualized.

○ A signal does not contain information per se. The information is given by the corresponding transformation of the receiver, by the overall (non-local) rule or law, which logically frames this process of transformation.

Given this, we can understand that the usage of the terminus “message” already indicates the potential system states/informational states of any receiver. And, we may still speak of informational contents of messages in terms of “portions of information”. It has to become clear that the concept of information is—like the concept of physical laws—a fundamental concept. “Information” always characterizes the overall organization of any system, and therefore causes the behavior of any system that interacts with other systems. So, “portions of information” do exist insofar as the conditions and possible states of a receiver are already known. Given this, it is possible to somehow predict the reaction of a receiver of a specific message. However, this scenario does not illustrate the fundamental aspect that lies at the heart of our understanding of information. And, this aspect deals with the fact of the newness of any information. Usually we are not interested in things already known. We are interested in things that may enable us to expand our knowledge and to expand our possible states.

4. Third Step: Information and Thoughts—The Individual-Specific, Causal Included Middle

A specific aspect of life is that instead of a network-like structure (crystals etc.), a copying process overlays networks. This is based on (but beyond) the restricted local relationship of atoms to their neighbors within a network. We will now find a multitude of reactions and possible interactions from within a more global perspective. A one-dimensional relationship is replaced by a multidimensional, overall model, although incorporating the one-dimensional basis. Critical situations appear when new stress factors become vacant and force a further dynamization of boundary conditions of living systems. New phase spaces are explored and developed.

During human evolution, phonetic articulations were activated in the beginning on an unintentional basis; they have been side-effects of the motor apparatus. This apparatus had to control certain fine motor activities. However, the signals for these fine motoric activities directly stimulate the phonetic apparatus (the controls of the fine motor apparatus and the phonetic apparatus are placed near to each other within the human brain). As an outcome, such unintentional phonetic articulations began to hold the meaning of the fine motor activity. In the end, this mechanism enabled the identity of language and action, and gives us a scheme to interpret the artifacts that were produced by our ancestors in order to understand their verbal competence. The anthropologist Thomas Wynn reconstructed the evolution of artificially-created pebble tools [

27]. In the beginning, tools were created by two simple strokes (already created by different subspecies of Australopithecus). Later on, the number of strokes increased continuously (Homo habilis, Homo erectus). Finally, Homo sapiens neanderthalensis and early Homo sapiens sapiens were capable of composing innumerable sets of strikes in order to manufacture their tools. This is the argument that those species were also capable of articulating and memorizing unlimited, recursively embedded statements (sentences). But, given the overall informational process, any such statement plays a part of a continuously ongoing overall process of restructurization.

“Statements” which seem interesting to us and worthwhile following up (i.e., synthetic statements, according to the theory of the Gestalt) are “questions”: questions of the cognitive system to itself, with the aim of reorganizing and restructuring itself by reorganizing and restructuring its shape and ideas. As a “question”, I will describe a situation in which, by activating distributed contexts of knowledge, the cognitive system generates new structures in the current framework of identities of thoughts: structures that show a certain probability for a recursive reorganization of the shape and ideas of this exact cognitive system. And, the causal force comes out of the completion principle.

Previous theories of “meaning” (semantics)—for example, the concept of correspondence and coherence of truth—need to be countered by bringing up the inverse conception, which does not make the statics of statement systems the subject of its explanation [statements are true when they correspond with reality (correspondence theory) or when they can be embedded or integrated in a system of statements (coherence theory)], but which primarily makes the dynamic process of the restructuring/reshaping of meanings in consequence of an examination of synthetic statements the subject of its investigation. The classical categories of truth, whether statements for instance, have to be marked as true in an axiomatic sense (provability), are merely secondarily definitive. The truth carrier is therefore transferred from the static statement (in terms of a semantic proposition) to the dynamic physical-cognitive restructuring process, including the “statement”, as a physical incorporation of the included middle. The meaning of a word is primarily not given by the word. This meaning is given through the identity (and diversity) of the thought, which uses and incorporates such words. Based on this identity, correspondence and coherence may be derived from it. Provability and validation are important, but are not of primary interest concerning our analysis of the acting included middle. To put it into another way: The human spirit comes into being during the creation of new, maybe contradictory and paradox connections, correspondences and out-formations. This scenario may turn into a new overall form, and further mediation of such a form through language creates information. Following this approach, we are encountering a compositional concept of truth (information throughout formation).

What is a physical indicator of a new, emerging form? Because they are fragmented, there is, as yet, no possible validation with regard to coherence, correspondence or pragmatics. How does the system create and indicate such a new form in terms of the included middle? Well, from a logical standpoint, the system transforms itself towards a new structure. An intermediate step during this transformational process creates a new identity and is called a “thought”. Any thought or idea initiates an identity of new information. The logician Gottlob Frege has already introduced the concept of such causal identity [

13,

14]. Given this, any thought does not primarily “represent” anything from the outside world, but initiates something that has not existed before (namely a new thought). Of course, any interaction of any system with the outside world incorporates information about the outside world. But this is not the key part. The core element is that any interaction with the outside world follows the direction of increasing and maximizing the number of possible system states. This is the core of the information, which explains and guides the transformations of any system. And this is the task of our conscious process and experience. Any new thought is identified by physical evidence, because it will move the system towards an increasing number of possible overall states. For this reason, Frege introduced his concept of the “power of assertion”. Only such thoughts are “true” which will increase the number of possible states. And, further on, this concept declares an identity between the personal encoded (and via language, mediated) expression and the meaning of such a thought/statement. We argue that a sign within language is created by the identity of the sound/phoneme with its content (thesis from Swiss linguist Ferdinand de Saussure, 1857–1930: language is

form rather than substance [28]; more details in Trabant [29]). Mediated language and physiological state (with regard to acting and embodiment) hold an isomorphic relationship.

An example is the thought which describes the mental organization in order to prepare and to execute a precise throwing action, for example, to hit a rapidly moving animal. This has been analyzed in detail by William Calvin [

30]. But we need to emphasize the physical scenario, and the reader is invited to follow. Many different movements have to be overlaid and “simulated” at the same time. The arm and the whole body have to be brought into position, and the throw has to be planned with regard to fine motoric details. We are dealing with a superposing linear system. The same characteristics hold true for quantum systems; an overall process or transformation is defined through the linear overlay of many single transformations. As a solution, those detailed transformations are selected which identify a scenario with minimal energy. The form of the successful throw is identified in the same manner as the parabolic shape of a flying stone: from a physics perspective, it is always the scenario comprising minimal energy that is selected (in physics, this is called the principle of minimal energy).

But there are, of course, possible mistakes and errors. Without any experience, the first throw will fail. Then, it is the task of our cognitive system to rearrange the scenario and optimize the shape. This may include contradictory elements: you may have to throw faster than you could ever imagine! You may be forced to move your body in a manner you never envisaged before!—We might already imagine that this logical structure also holds true for more complex technical inventions, but also for new forms of art. This can be straightforwardly interpreted from within a physical logic. That is, only such kinds of thoughts are true, which initiate an identity relationship between the “meaning” of a thought and its causal, physical (biological, social) structure and implementation. Nowadays, a couple of different theories of truth are still under discussion (see the online open access Stanford Encyclopedia of Philosophy). The different kinds of theories of truth will remain with regard to their different possible interpretations. But within our causal-compositional concept of information, we have to rely on an identity theory of truth with regard to the process of the included middle. Any (true) thought does not primarily “re”present something from the outside world, but implements structures from the physical (biological, social) world itself. But, once again, our focus is—primary to any truth—the fundamental human category of creating paradoxes, contradictions (“I have never done this,—so how could I?”), outformation, non-identity, the included middle.

The so-called conscious experience is the phenomenal counterpart of this search process. For this reason, we are bound to contradictions from the beginning. And, as we reflect this process at its heart within a mediated language, we are encouraged to move towards the so-called inexpressible. A communication medium such as language supports the communication and evaluation of thoughts. And, if those living systems modify and develop their behavioral models, then a full singularization and individualization of information takes place. Even your neighbor holds different kinds of informational structures and models. This equals the appearance of consciousness. Consciousness is the ability to compose singular informational structures. It is the ability to trespass the local towards the global. There is no place within this universe where identical conscious structures are given. Consciousness—as it is—creates universal individuality. It is the concept of the universe to create consciousness in order to solve the task of continuously increasing the number of possible states. Our conscious experience exactly reflects the universality and singularity of such systems: thoughts (as forms of hierarchical structures) modify our behavior, including control of motor activities, but also in terms of stimuli transmitted to other forms. Within this perspective, our mind exerts control over our internal organization. That is the reason why we experience a

now, where the entire history of all those embedded system structures culminates into a single point of control, which is continuously seeking to extend action schemata (principle of maximum entropy) or to optimize the outcome with respect to the cost (principle of least effort). Please note that a couple of approaches have been made to explain consciousness within the context of quantum physics. David Chalmers undertook a broad overview on this topic. In one of his papers, he argues for a double meaning of information [

31]. His approach to characterizing information within a physical and phenomenal dimension fits with the intention of this paper and our definition of information.

The main characteristic of information (the non-local re-structurization of an overall system) provides a prima facie argument for consciousness. Consciousness is the “defined-undefined”, the acting completion theorem; it is the search for newness. The concept of now (in terms of hierarchical system evolution) brings us into the center of this structure. Consciousness is the single-entity-based capability of full entity-based transformation; that is: learning and composing of the self. It is this level of transformation-enabling “nowness” that is open for individual newness, and thus the system enables spontaneous behavior. Consciousness is the ability for spontaneous compositions of new thoughts and acting capabilities, which constantly feeds the process of modifying ourselves within a sustainable dimension. A reviewer of this study pointed to the following characterization, provided by the neuroscientist Gerald Edelman:

“... what you lose on entering a dreamless deep sleep ... deep anesthesia or coma ... what you regain after emerging from these states. [The] experience of a unitary scene composed variably of sensory responses ... memories ... situatedness ...” [32] (emphasis GL).

Where does the “unitary” of any such experienced scene come from, and to what extent are sensory responses, memory functionality and situatedness composed of each other? This compositional arrangement is the outcome of a fundamental potential of the objective and subjective dimension of this universe, mediated via the informational process (included middle). And, as it culminates in a phenomenal and individual now, its overall compositional counterpart is made up of the wholeness of our moral stance. This structure gives the conceptual foundation of our intrinsic responsibility to support the development of the active dimension of information. If this universe is intrinsically characterized by information, then any individualized species, which is composed and composes itself out of a singular now, has the responsibility to enrich this universe towards its informational continuation.

The second part of this study will develop this wholeness of our moral stance, based on the analysis of the active dimension of language oriented, spontaneous information. The inversion of the perspective of classical semantics will explain the process of development towards enriching structures, which is supported by natural force and mediated through language oriented, metaphoric compositions. But this process requires continuous, internal, conscious stabilization within culture. New cultural capabilities cause cultural transformations, and it is an ever-continuing task to stabilize such transformations, and at the same time support the enrichment process. “Information” is a basic, yet metaphoric, concept of modern culture and it is our task to develop and stabilize this concept with regard to its content and its usage in order to support an enriched life for all people. There is not much theory required in order to identify the process of personal development. Any successful subprocess leads to an internal state of overall integration, which expresses itself by an intrinsic smile.