Social Pressure and Environmental Effects on Networks: A Path to Cooperation

Abstract

1. Introduction

2. Model

Evolutionary Rules

3. Results

3.1. Influence of the Update Rule

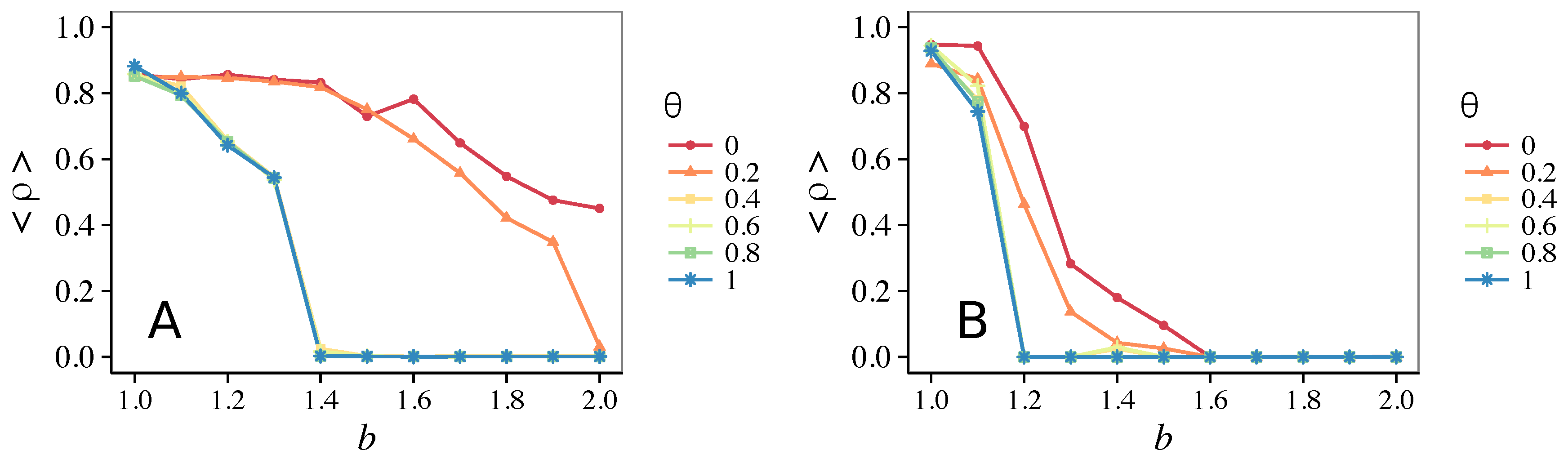

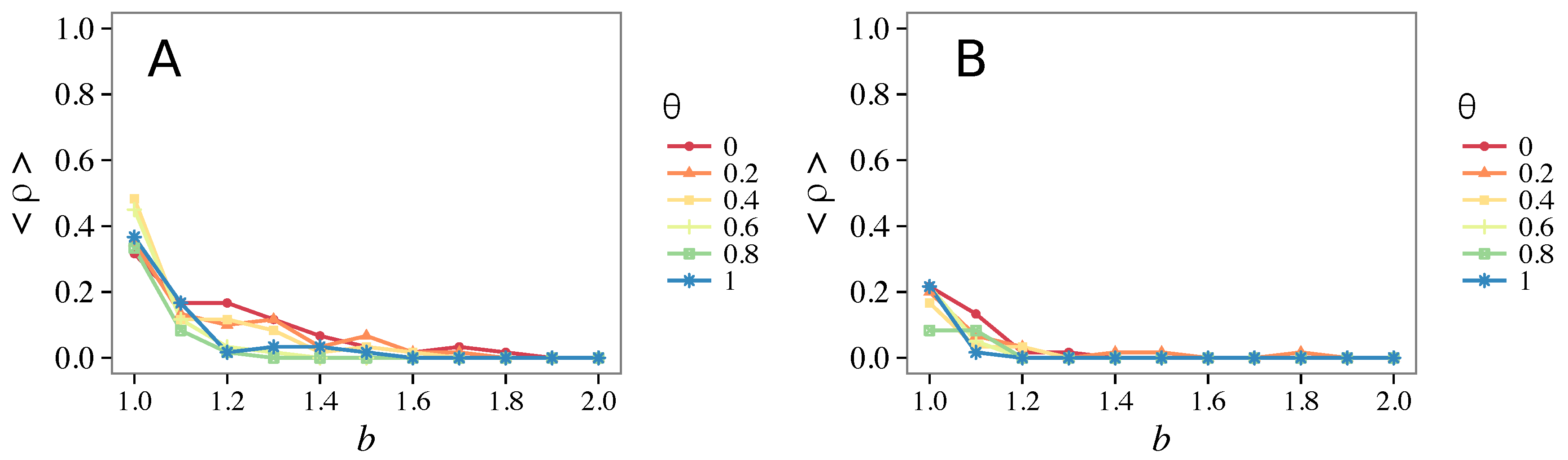

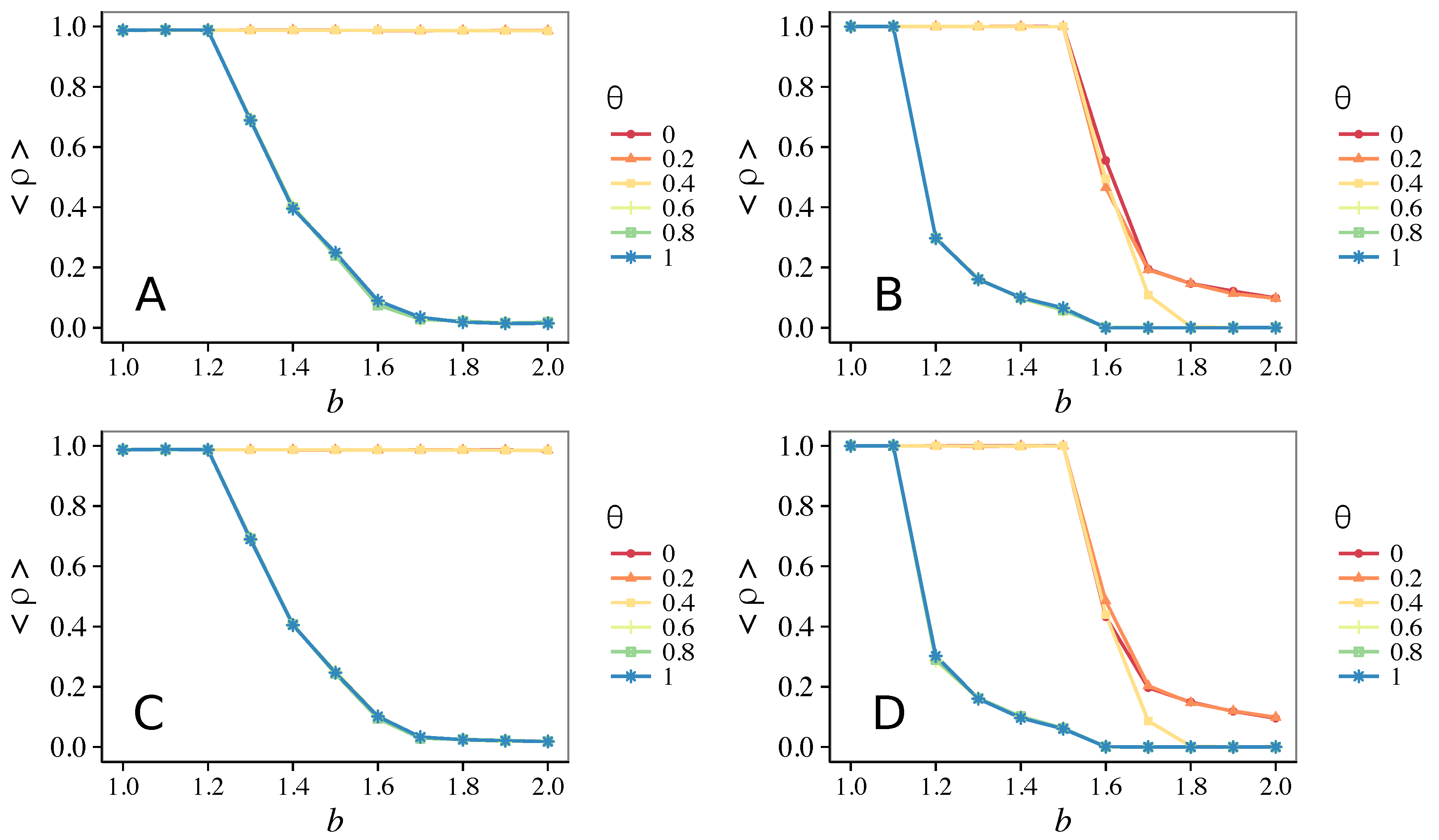

3.1.1. Unconditional Imitation

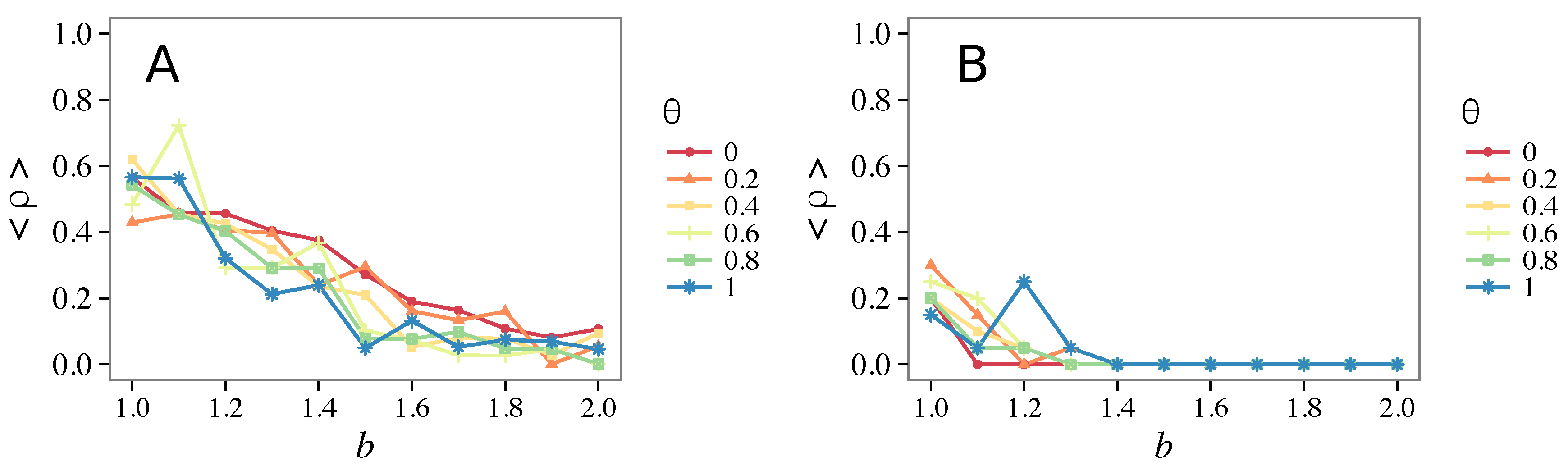

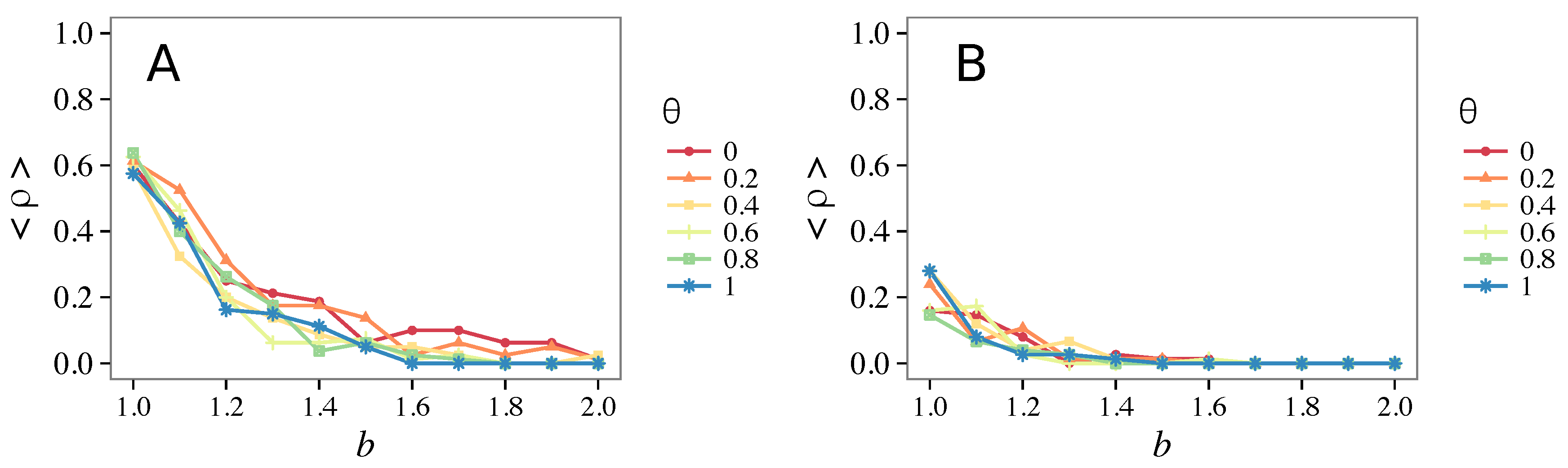

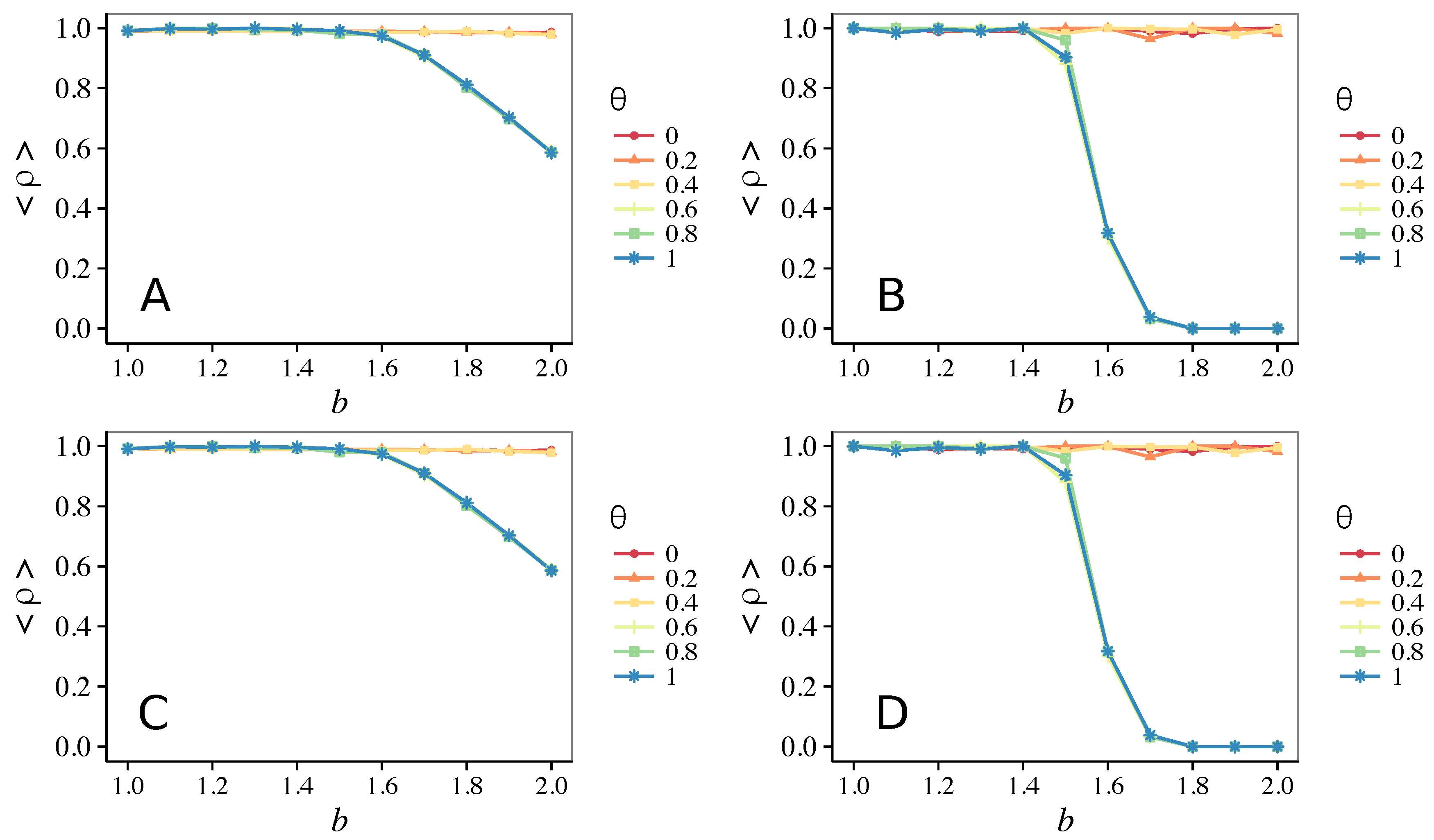

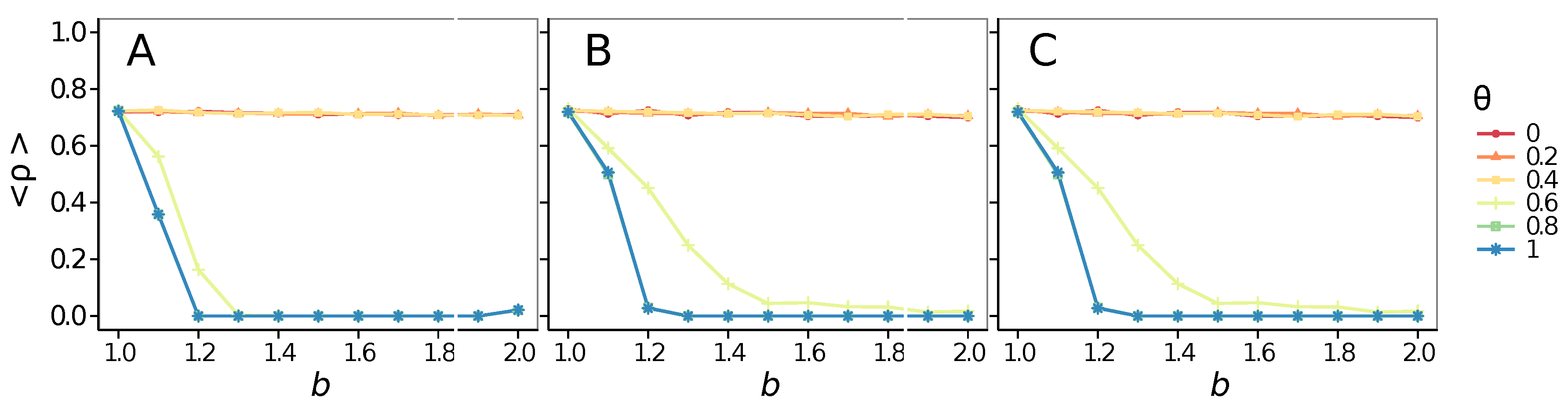

3.1.2. Mixed Update Rule

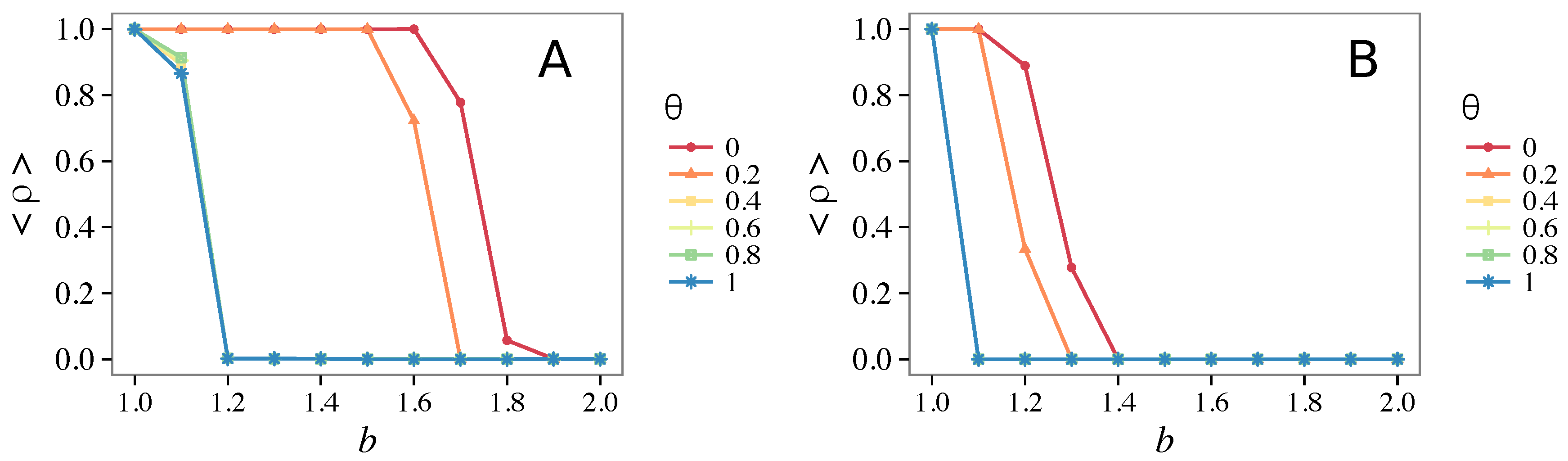

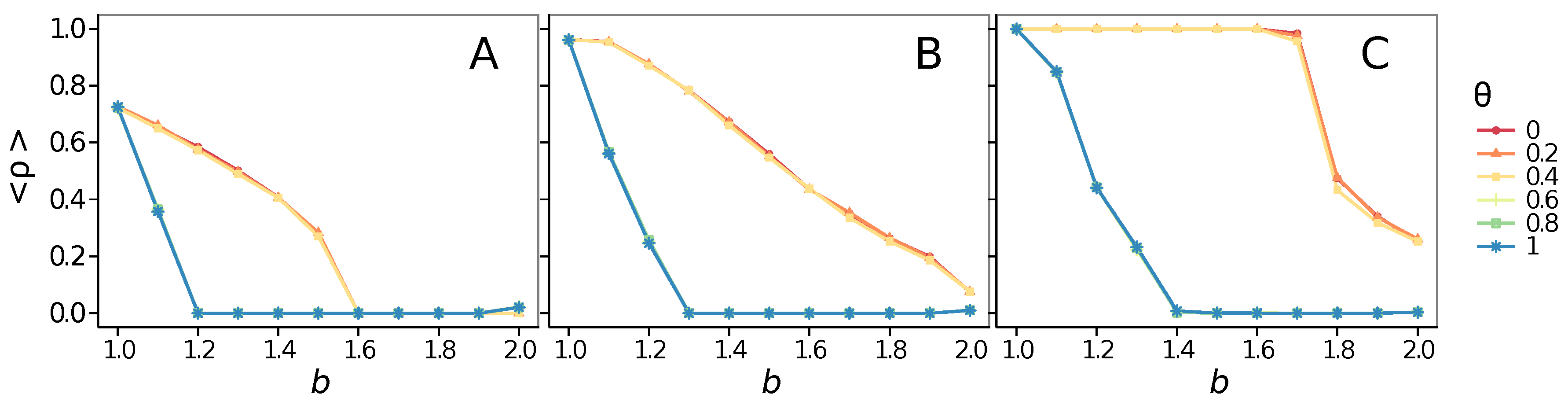

3.2. Other Topologies

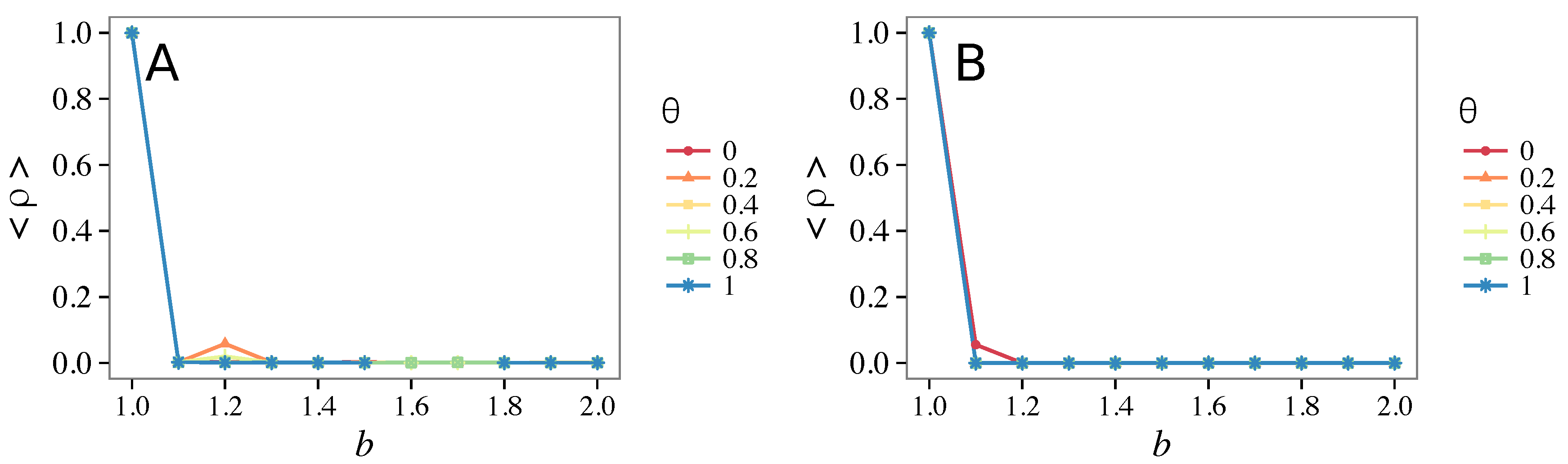

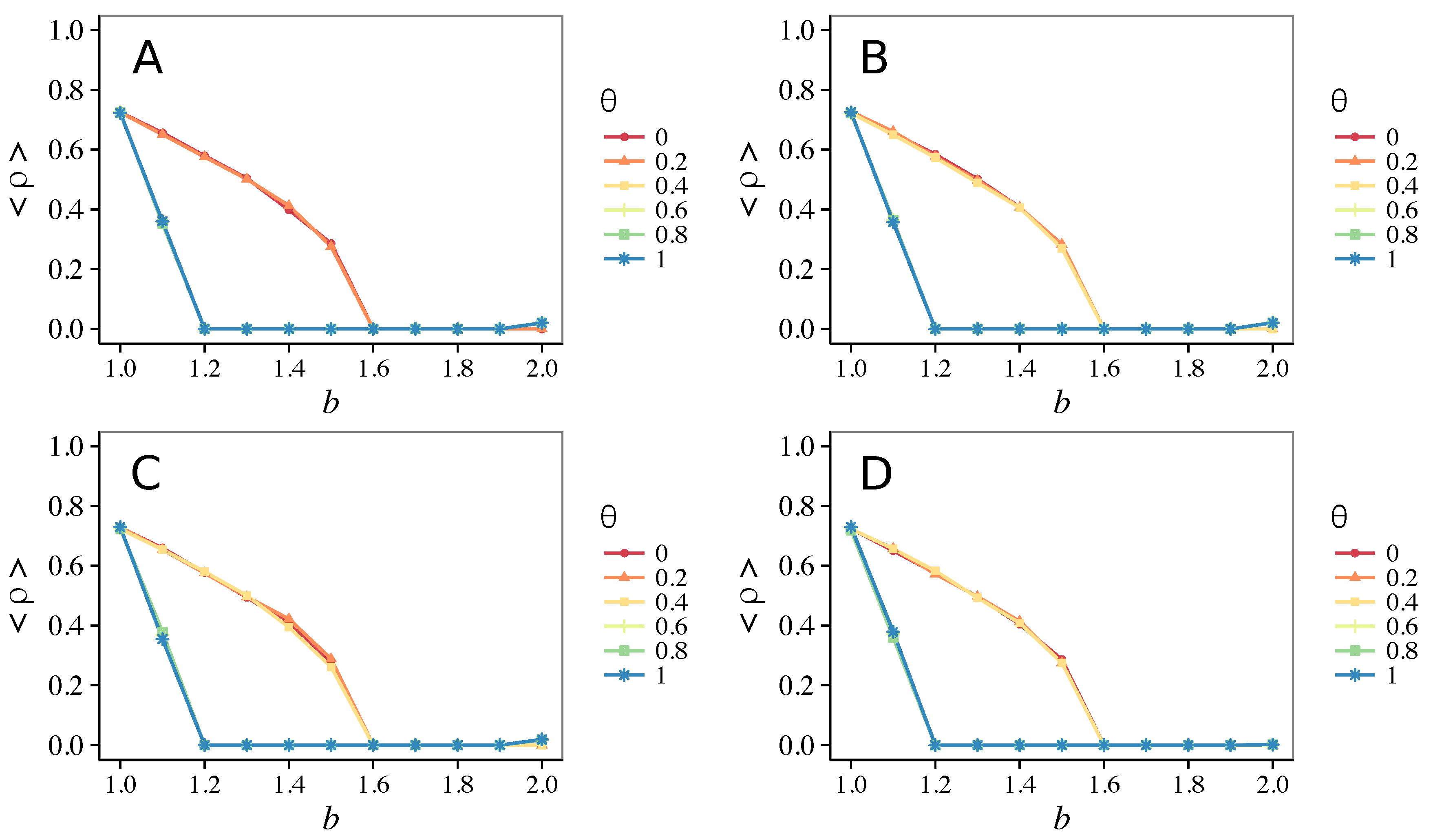

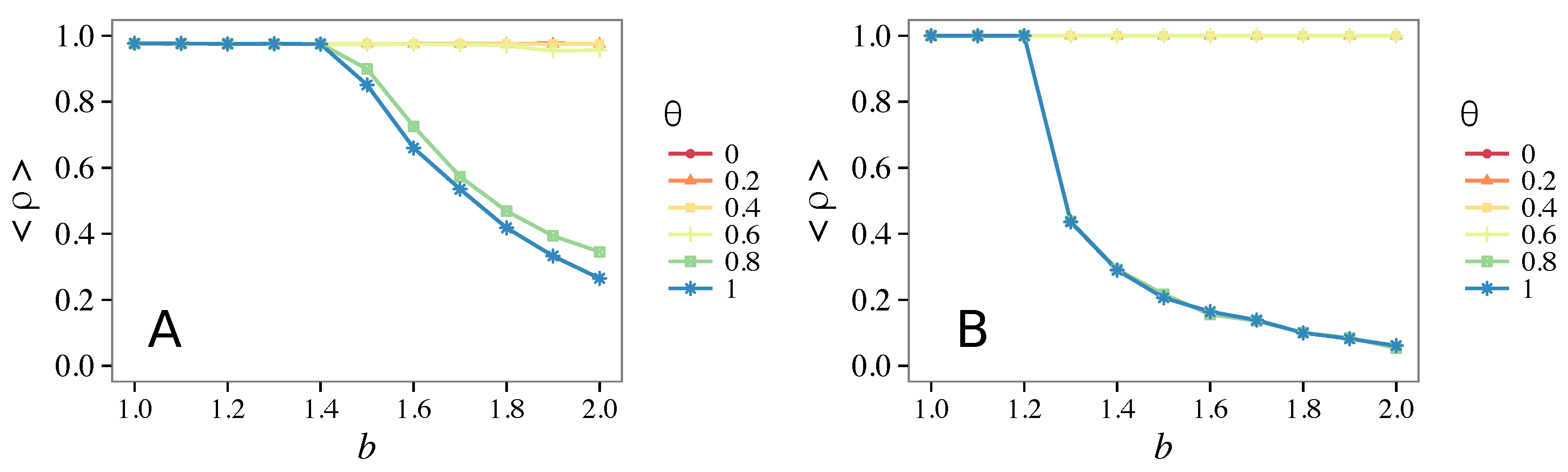

3.3. Different Initial Conditions

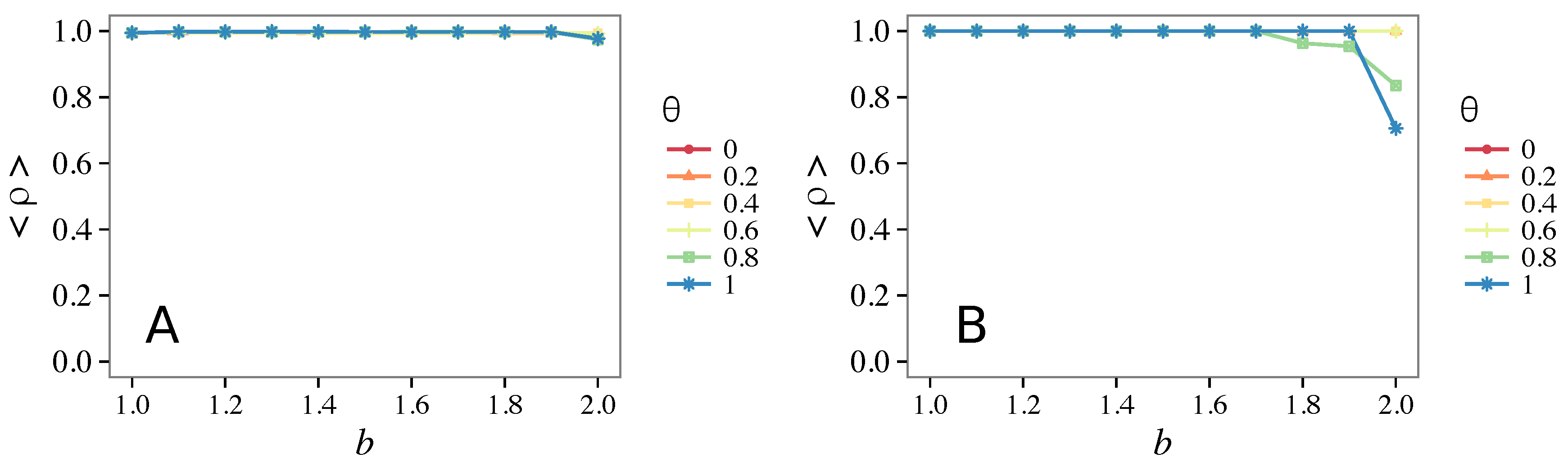

3.4. Case of Vigilant Defectors

4. Discussion and Conclusions

- Vigilance needs the small-world effect (the presence of short-cuts connecting individuals who are physically far from each other) to foster cooperation efficiently: in regular lattices (Figure 7) it does not help. This is not a serious limitation, since the small-world property is ubiquitous in real social systems: only the smallest communities can be modeled by complete graphs, and Euclidean topologies are even less common in human societies.

- Vigilance works not only when individuals update their strategy by means of an essentially evolutionary rule (REP), but also when they evolve through more typically “social” mechanisms such as pure imitation (at least on ER networks). Moreover, with the mixed rule, which accounts for the intrinsic non-strategic component of human decision-making, we found that cooperation tolerates the influence of irrationality only when this influence is low (), consistent with the results of Ref. [22].

- Concerning the update rule again, it is worth stressing that in heterogeneous (scale-free) networks vigilance benefits cooperation only under replicator update, whereas under strategic imitation (UI) the presence of hubs appears to hinder the emergence of pro-social behavior.

- The results are not sensitive to the initial conditions (at least in heterogeneous topologies). This is a fundamental feature of the model, since the initial conditions of real social systems are usually hard to determine. In complete graphs (i.e., in the mean-field approximation) this does not hold, but only small human communities can be described in this way, and in such cases different dynamical mechanisms are at work [32].
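The update rules compared in these conclusions (REP, UI and the mixed rule) can be illustrated with a minimal Python sketch. This is not the authors' implementation, and several of its details are assumptions:

- The payoffs follow a weak Prisoner's Dilemma with R = 1, T = b, S = P = 0.
- Updating is synchronous.
- REP is normalized by max(k_i, k_j)·(T − S), as in Santos and Pacheco [23].
- The mixed rule is read as social (voter-like) imitation with probability `p_social` and UI otherwise, following Refs. [21,22].

All function names are hypothetical, and the vigilance mechanism itself is not modeled.

```python
import random


def payoff_matrix(b):
    """Hypothetical weak Prisoner's Dilemma: R=1, T=b, S=P=0 (assumption).
    Keys are (my_strategy, other_strategy); 1 = cooperate, 0 = defect."""
    return {(1, 1): 1.0, (1, 0): 0.0, (0, 1): b, (0, 0): 0.0}


def payoffs(adj, strat, pay):
    """Accumulated payoff of every node against all of its neighbours."""
    return [sum(pay[(strat[i], strat[j])] for j in adj[i]) for i in range(len(adj))]


def rep_update(i, adj, strat, pi, pay, rng):
    """Replicator rule: pick a random neighbour j and, if it earned more,
    copy it with probability proportional to the payoff difference."""
    if not adj[i]:
        return strat[i]
    j = rng.choice(adj[i])
    if pi[j] <= pi[i]:
        return strat[i]
    # Largest payoff gap per interaction; equals T - S here (assumes b > 1).
    spread = max(pay.values()) - min(pay.values())
    prob = (pi[j] - pi[i]) / (max(len(adj[i]), len(adj[j])) * spread)
    return strat[j] if rng.random() < prob else strat[i]


def ui_update(i, adj, strat, pi):
    """Unconditional imitation: copy the best-scoring neighbour if it beats i."""
    best = max(adj[i], key=lambda j: pi[j], default=i)
    return strat[best] if pi[best] > pi[i] else strat[i]


def mixed_update(i, adj, strat, pi, p_social, rng):
    """Assumed mixed rule: with probability p_social copy a random neighbour
    (non-strategic, voter-like imitation); otherwise apply UI."""
    if adj[i] and rng.random() < p_social:
        return strat[rng.choice(adj[i])]
    return ui_update(i, adj, strat, pi)


def step(adj, strat, b, rule, rng, p_social=0.0):
    """One synchronous round (assumption): all payoffs are computed first,
    then every node updates according to rule "REP", "UI" or "MIXED"."""
    pay = payoff_matrix(b)
    pi = payoffs(adj, strat, pay)
    new = []
    for i in range(len(adj)):
        if rule == "REP":
            new.append(rep_update(i, adj, strat, pi, pay, rng))
        elif rule == "UI":
            new.append(ui_update(i, adj, strat, pi))
        elif rule == "MIXED":
            new.append(mixed_update(i, adj, strat, pi, p_social, rng))
        else:
            raise ValueError(f"unknown rule: {rule}")
    return new
```

To use the sketch, call `step` repeatedly on an adjacency list (for instance one built from an ER or scale-free graph) and track the fraction of 1s to follow the cooperation level. With `p_social = 0` the mixed rule reduces to UI.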
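The first conclusion hinges on the small-world effect, that is, on a few short-cuts linking distant individuals. The following Python sketch builds a ring lattice and a Watts-Strogatz network [26], in which each lattice edge is rewired with probability p. It then compares the mean shortest-path lengths of the two networks. The sizes and rewiring probability are illustrative assumptions, and the sketch shows only the topological effect, not the cooperation dynamics.

```python
import random
from collections import deque


def ring_lattice(n, k):
    """Regular ring: each node is linked to its k nearest neighbours (k even)."""
    adj = [set() for _ in range(n)]
    for i in range(n):
        for d in range(1, k // 2 + 1):
            j = (i + d) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def watts_strogatz(n, k, p, rng):
    """Visit each clockwise lattice edge (i, i+d) once, lap by lap, and with
    probability p move its far end to a random node, avoiding self-loops and
    duplicate edges."""
    adj = ring_lattice(n, k)
    for d in range(1, k // 2 + 1):
        for i in range(n):
            j = (i + d) % n
            if j in adj[i] and rng.random() < p:
                candidates = [m for m in range(n) if m != i and m not in adj[i]]
                if candidates:
                    new = rng.choice(candidates)
                    adj[i].remove(j)
                    adj[j].remove(i)
                    adj[i].add(new)
                    adj[new].add(i)
    return adj


def mean_path_length(adj):
    """Average breadth-first-search distance over all ordered pairs of
    mutually reachable nodes."""
    total, pairs = 0, 0
    for s in range(len(adj)):
        dist = {s: 0}
        queue = deque([s])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs
```

For n = 200 and k = 4, the lattice has a mean distance of 5050/199 ≈ 25.4 steps. Rewiring only 10% of the edges typically cuts this by more than half, while the local structure of the lattice is largely preserved.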

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Darwin, C. The Descent of Man; John Murray: London, UK, 1871.

- Axelrod, R.; Hamilton, W.D. The naked emperor: Seeking a more plausible genetic basis for psychological altruism. Science 1981, 211, 1390–1396.

- Axelrod, R.; Hamilton, W.D. The evolution of cooperation. Science 1981, 211, 1390–1396.

- Maynard Smith, J.; Szathmáry, E. The Major Transitions in Evolution; Oxford University Press: Oxford, UK, 1995.

- Nowak, M.A.; May, R.M. Evolutionary games and spatial chaos. Nature 1992, 359, 826–829.

- Nowak, M.A. Five rules for the evolution of cooperation. Science 2006, 314, 1560–1563.

- Roca, C.P.; Cuesta, J.A.; Sánchez, A. Evolutionary game theory: Temporal and spatial effects beyond replicator dynamics. Phys. Life Rev. 2009, 6, 208–249.

- Moyano, L.G.; Sánchez, A. Evolving learning rules and emergence of cooperation in spatial prisoner’s dilemma. J. Theor. Biol. 2009, 259, 84–95.

- Nowak, M.A.; Sigmund, K. Evolution of indirect reciprocity by image scoring. Nature 1998, 393, 573–577.

- Ashlock, D.; Smucker, M.D.; Stanley, E.A.; Tesfatsion, L. Preferential partner selection in an evolutionary study of prisoner’s dilemma. BioSystems 1996, 37, 99–125.

- Andrighetto, G.; Giardini, F.; Conte, R. The Cognitive Underpinnings of Counter-Reaction: Revenge, Punishment and Sanction. Sist. Intell. 2010, 22, 521–523.

- Giardini, F.; Vilone, D. Evolution of gossip-based indirect reciprocity on a bipartite network. Sci. Rep. 2016, 6, 37931.

- Trivers, R. The evolution of reciprocal altruism. Q. Rev. Biol. 1971, 46, 35–57.

- Alexander, R.D. The Biology of Moral Systems; Aldine de Gruyter: New York, NY, USA, 1987.

- Bateson, M.; Nettle, D.; Roberts, G. Cues of being watched enhance cooperation in a real-world setting. Biol. Lett. 2006, 2, 412–414.

- Rossano, M.J. Supernaturalizing Social Life. Hum. Nat. 2007, 18, 272–294.

- Oda, R.; Kato, Y.; Hiraishi, K. The Watching-Eye Effect on Prosocial Lying. Evol. Psychol. 2015, 13.

- Pereda, M. Evolution of cooperation under social pressure in multiplex networks. Phys. Rev. E 2016, 94, 032314.

- Ichinose, G.; Sayama, H. Invasion of cooperation in scale-free networks: Accumulated vs. average payoffs. Available online: https://arxiv.org/abs/1412.2311 (accessed on 12 January 2017).

- Watts, D.J. A simple model of global cascades on random networks. Proc. Natl. Acad. Sci. USA 2002, 99, 5766–5771.

- Vilone, D.; Ramasco, J.J.; Sánchez, A.; San Miguel, M. Social and strategic imitation: The way to consensus. Sci. Rep. 2012, 2, 686.

- Vilone, D.; Ramasco, J.J.; Sánchez, A.; San Miguel, M. Social imitation versus strategic choice, or consensus versus cooperation, in the networked Prisoner’s Dilemma. Phys. Rev. E 2014, 90, 022810.

- Santos, F.C.; Pacheco, J.M. Scale-Free Networks Provide a Unifying Framework for the Emergence of Cooperation. Phys. Rev. Lett. 2005, 95, 098104.

- Erdős, P.; Rényi, A. On the evolution of random graphs. Publ. Math. Inst. Hung. Acad. Sci. 1960, 5, 17–61.

- Barabási, A.L.; Albert, R. Emergence of scaling in random networks. Science 1999, 286, 509–512.

- Watts, D.J.; Strogatz, S.H. Collective dynamics of ‘small-world’ networks. Nature 1998, 393, 440–442.

- Vilone, D.; Sánchez, A.; Gómez-Gardeñes, J. Random topologies and the emergence of cooperation: The role of short-cuts. J. Stat. Mech. 2011.

- Caldarelli, G. Scale-Free Networks: Complex Webs in Nature and Technology; Oxford University Press: Oxford, UK, 2007.

- Barrat, A.; Weigt, M. On the properties of small-world network models. Eur. Phys. J. B 2000, 13, 547–560.

- Dunbar, R.I. Gossip in evolutionary perspective. Rev. Gen. Psychol. 2004, 8, 100–110.

- Giardini, F.; Conte, R. Gossip for social control in natural and artificial societies. Simulation 2012, 88, 18–32.

- Guazzini, A.; Vilone, D.; Bagnoli, F.; Carletti, T.; Grotto, R.L. Cognitive network structure: An experimental study. Adv. Complex Syst. 2012, 15, 1250084.

© 2017 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Pereda, M.; Vilone, D. Social Pressure and Environmental Effects on Networks: A Path to Cooperation. Games 2017, 8, 7. https://doi.org/10.3390/g8010007
