Mixed Cryptography Constrained Optimization for Heterogeneous, Multicore, and Distributed Embedded Systems

Abstract

1. Introduction

2. Related Work

3. Threat Model

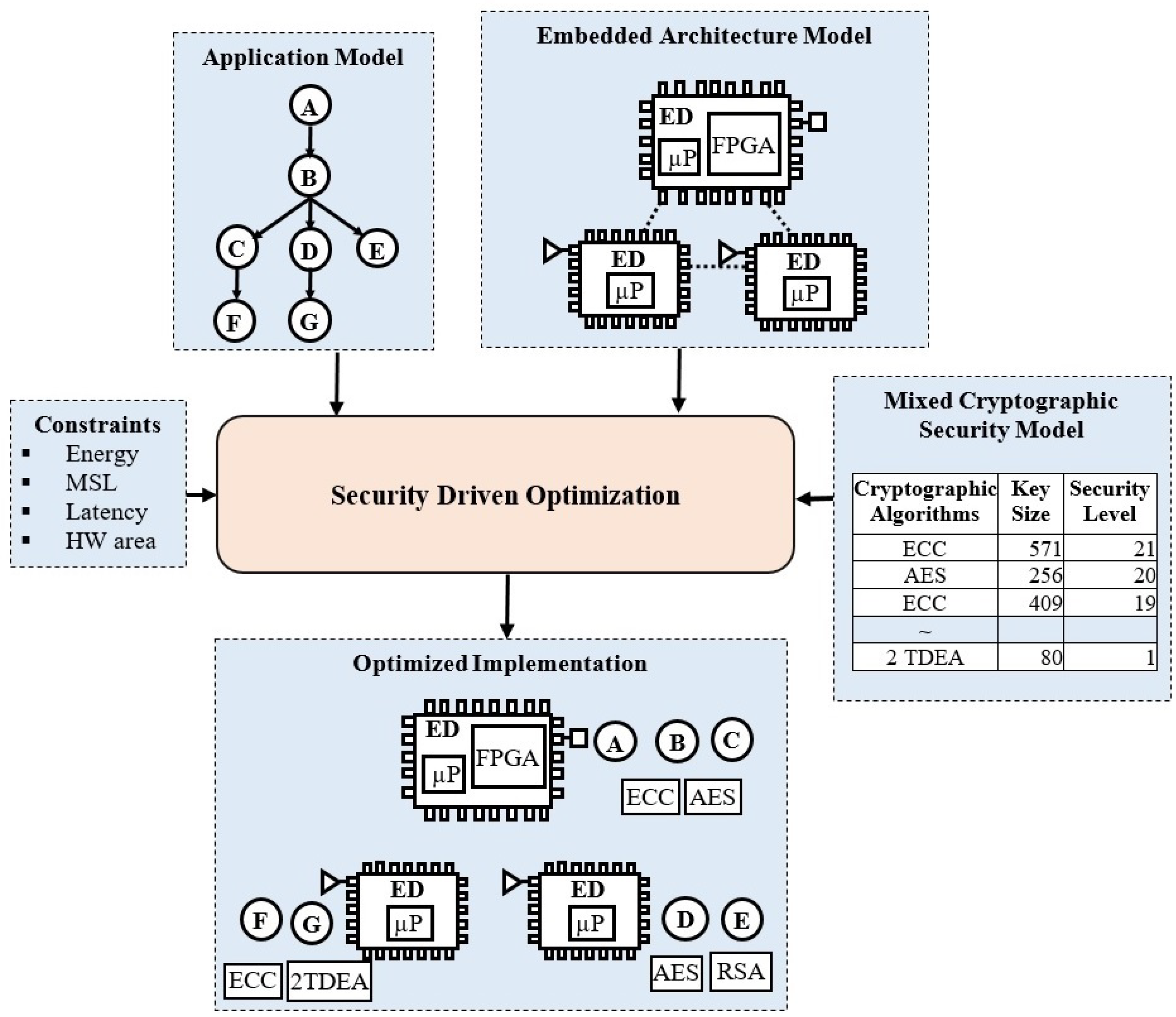

4. Security-Driven Optimization Methodology

4.1. Application Modeling

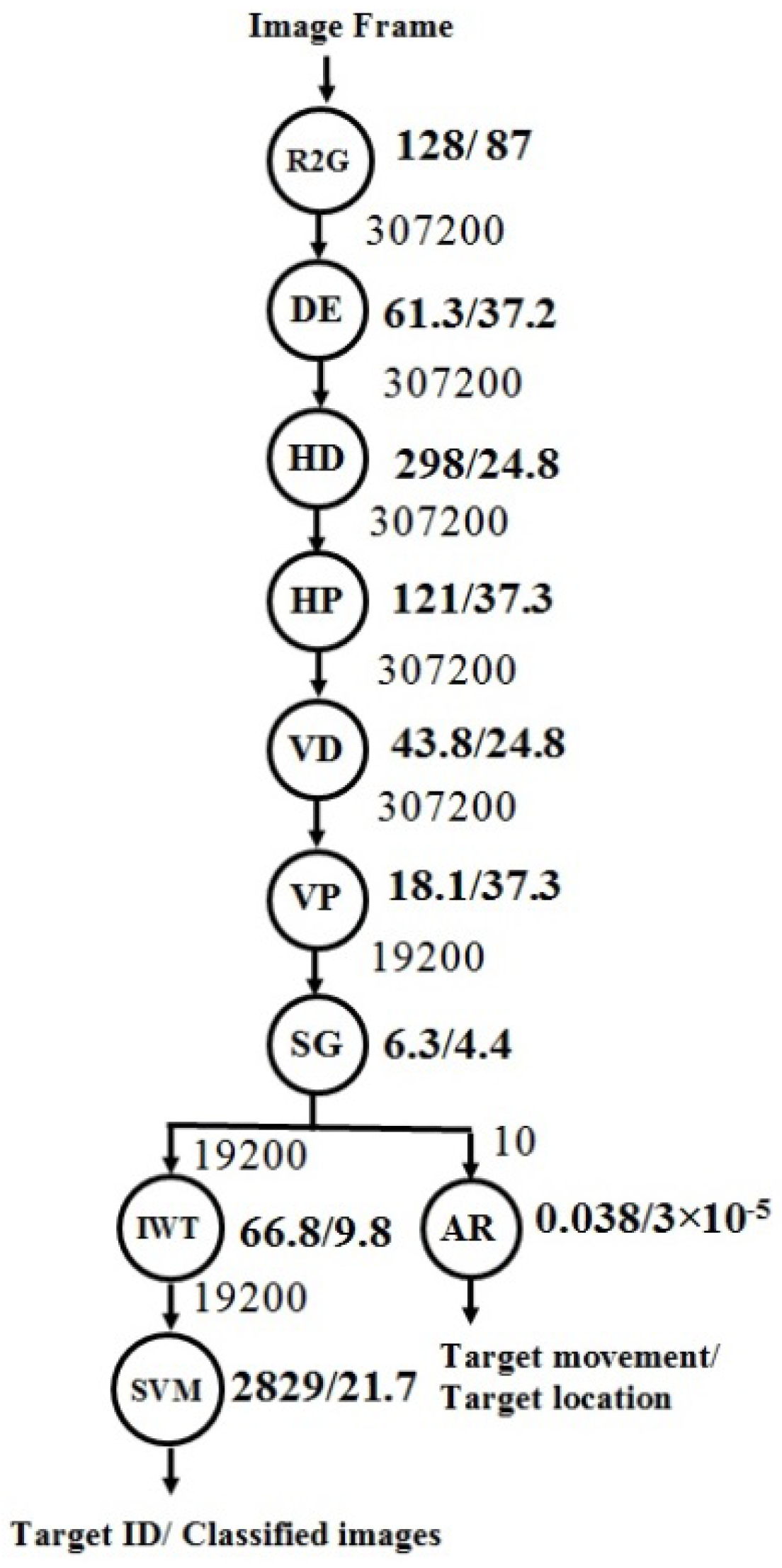

4.1.1. Dataflow Model

4.1.2. Execution Latency Model

4.1.3. Communication Latency

4.2. Mixed Cryptography Security Model

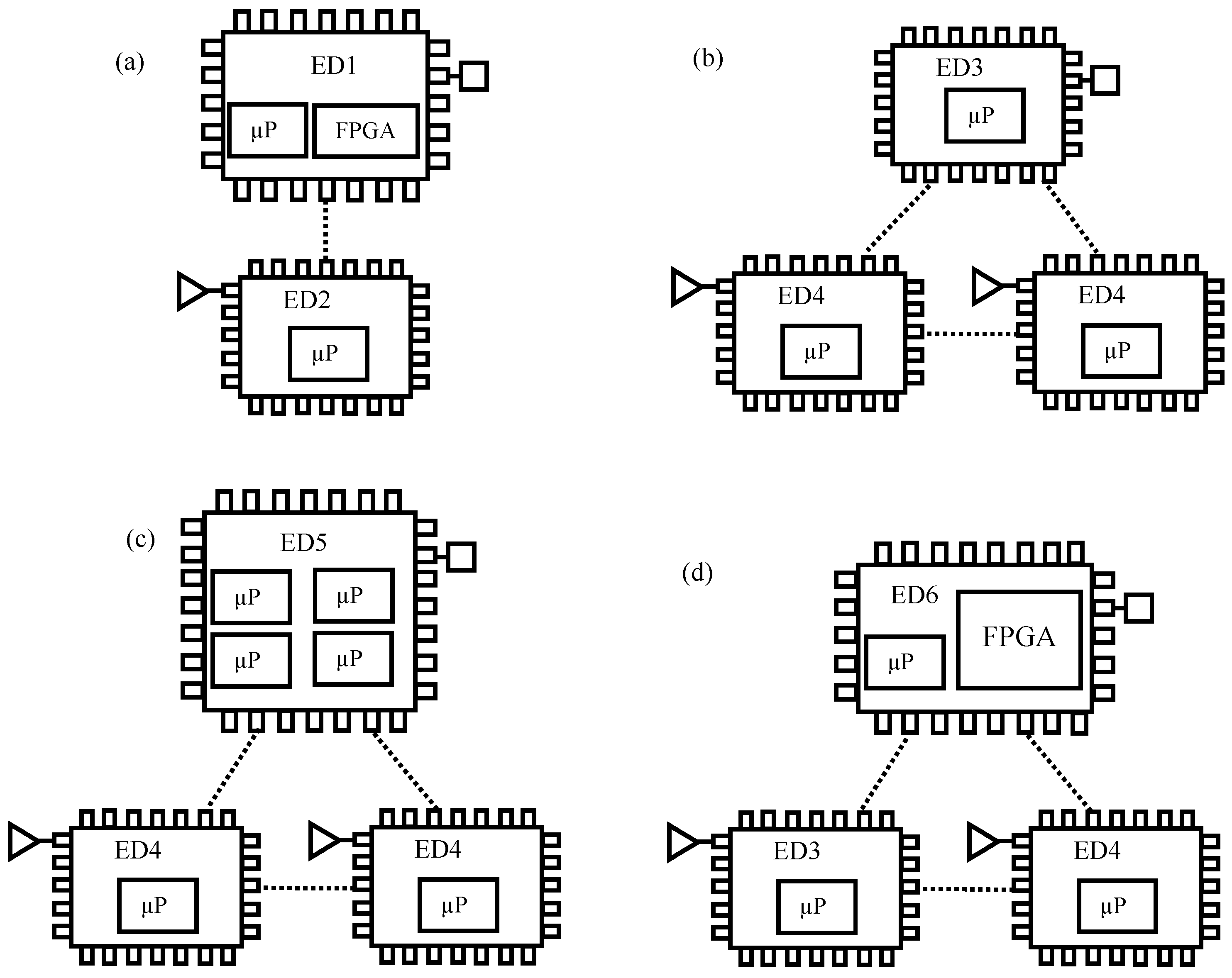

4.3. Embedded System Architecture Model

4.3.1. Embedded Device

4.3.2. Power Consumption

4.4. Energy Optimization Methods

4.5. Genetic Optimization Algorithm

| Algorithm 1: Fitness function with penalty |
|---|
| Input: P, MSLC, EC. Output: f(x). |
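Algorithm 1 is given here only by its inputs (P, MSLC, EC) and its output f(x). The following is a minimal static-penalty sketch of what such a fitness function could look like. It rests on assumptions that are not confirmed by this text: P is read as a penalty coefficient, MSLC as a minimum security level constraint, EC as an energy constraint in joules (matching the "Energy Constraint" row of the benchmark table), and the objective being minimized is taken to be latency. The names `Candidate` and `fitness` are hypothetical. The paper's actual formulation may differ.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical evaluated design point (task mapping plus security choices)."""
    latency_ms: float           # objective to minimize (assumption)
    energy_j: float             # total energy consumed by the design
    security_levels: list[int]  # security level chosen for each secured message


def fitness(c: Candidate, p: float, mslc: int, ec_j: float) -> float:
    """Static-penalty fitness, lower is better.

    p    -- penalty coefficient (assumed meaning of P)
    mslc -- minimum security level constraint (assumed meaning of MSLC)
    ec_j -- energy constraint in joules (assumed meaning of EC)
    """
    # Energy violation: excess energy relative to the budget (0 if within budget).
    energy_violation = max(0.0, c.energy_j - ec_j) / ec_j
    # Security violation: total shortfall of levels below the required minimum.
    security_violation = sum(max(0, mslc - s) for s in c.security_levels)
    # Feasible designs are scored by the objective alone; infeasible ones pay a penalty.
    return c.latency_ms + p * (energy_violation + security_violation)
```

With a large coefficient (for example `p=1000.0`, `mslc=7`, `ec_j=0.5`), a feasible design with 120 ms latency scores 120.0, while an infeasible one with only 100 ms latency but 0.6 J of energy and one message at level 6 scores about 1300, so the genetic search is steered toward designs that satisfy both constraints.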

4.6. Security Policy Constraints

5. Experimental Results

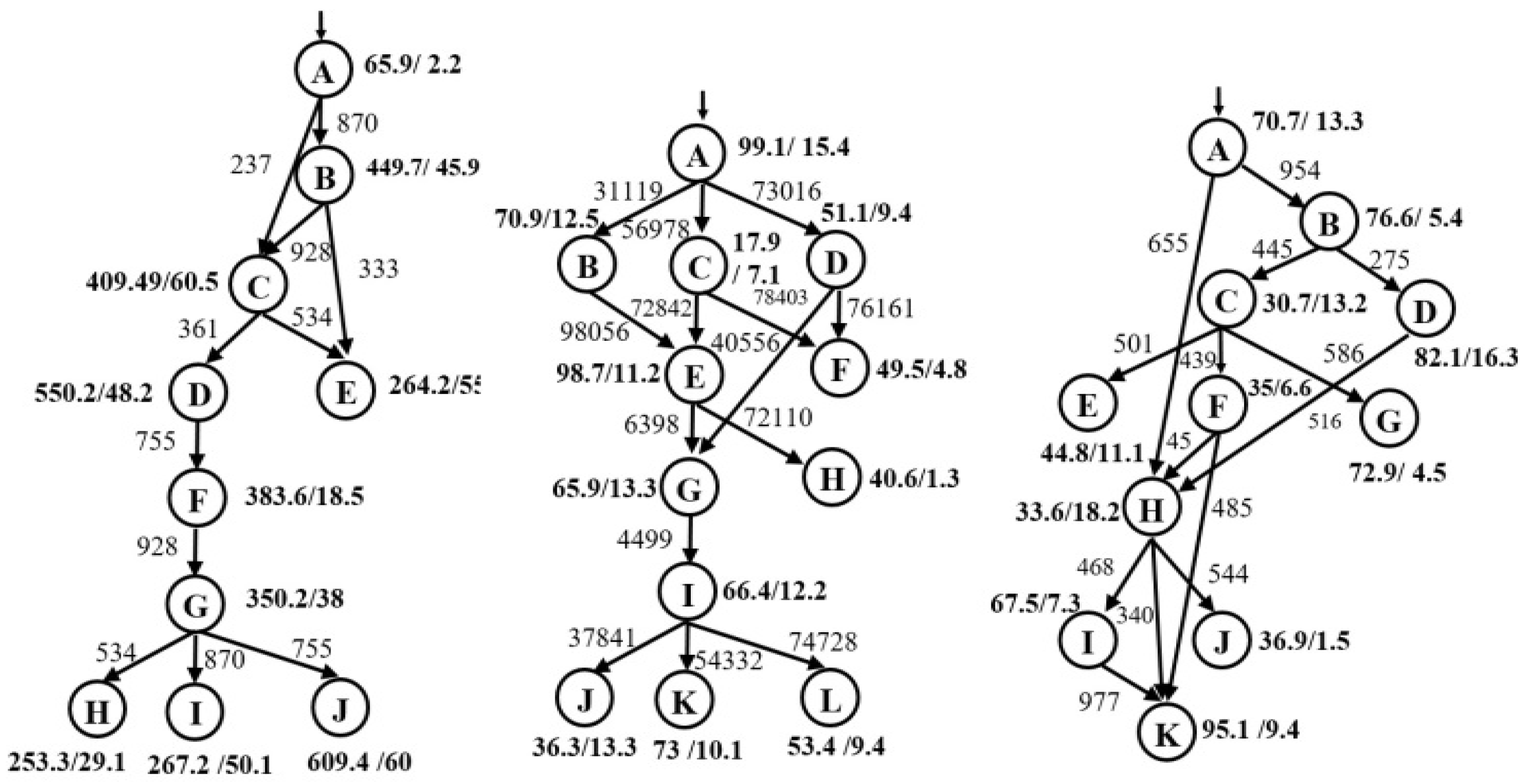

5.1. Experimental Setup

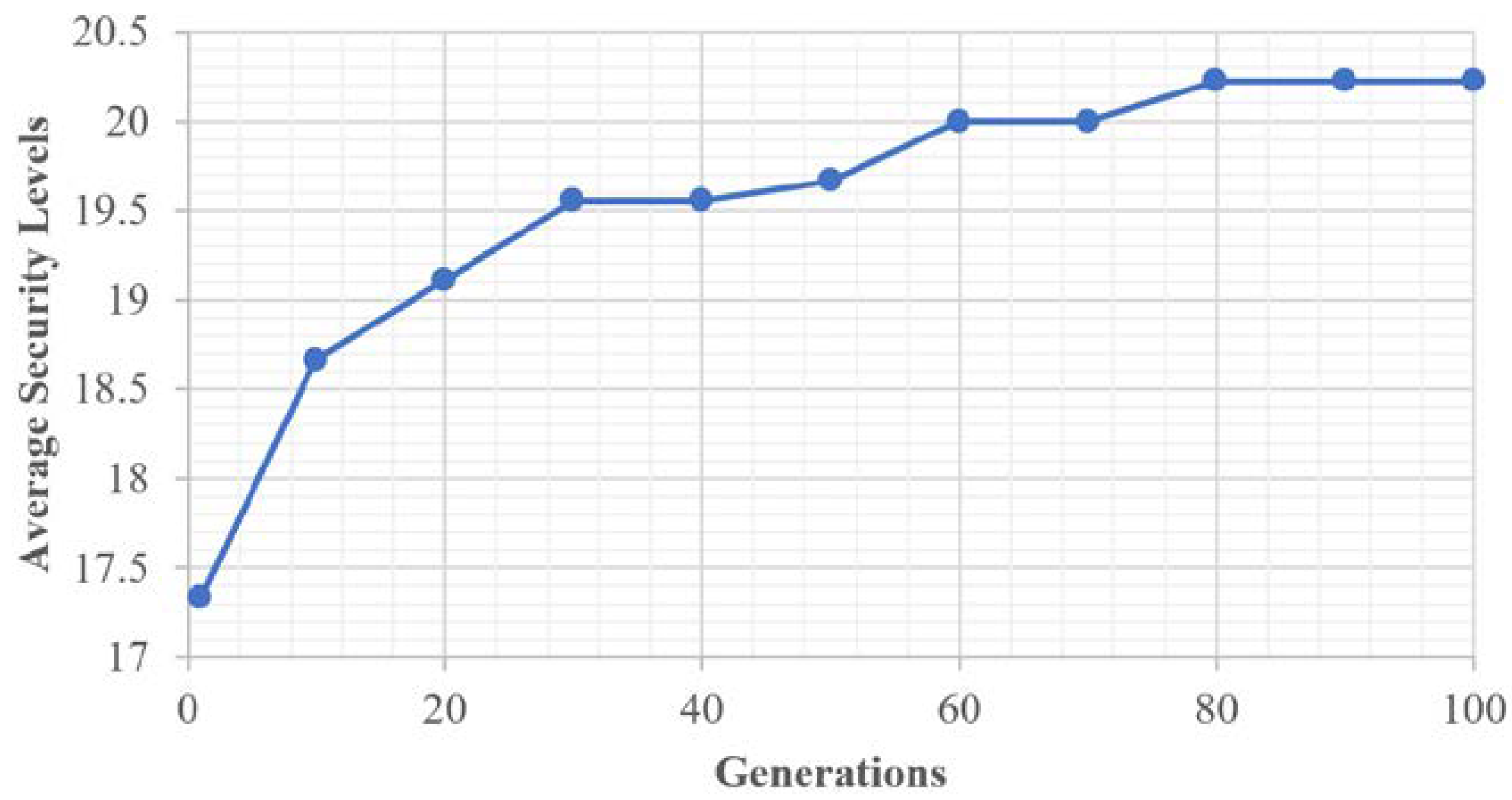

5.2. Genetic Algorithm Performance

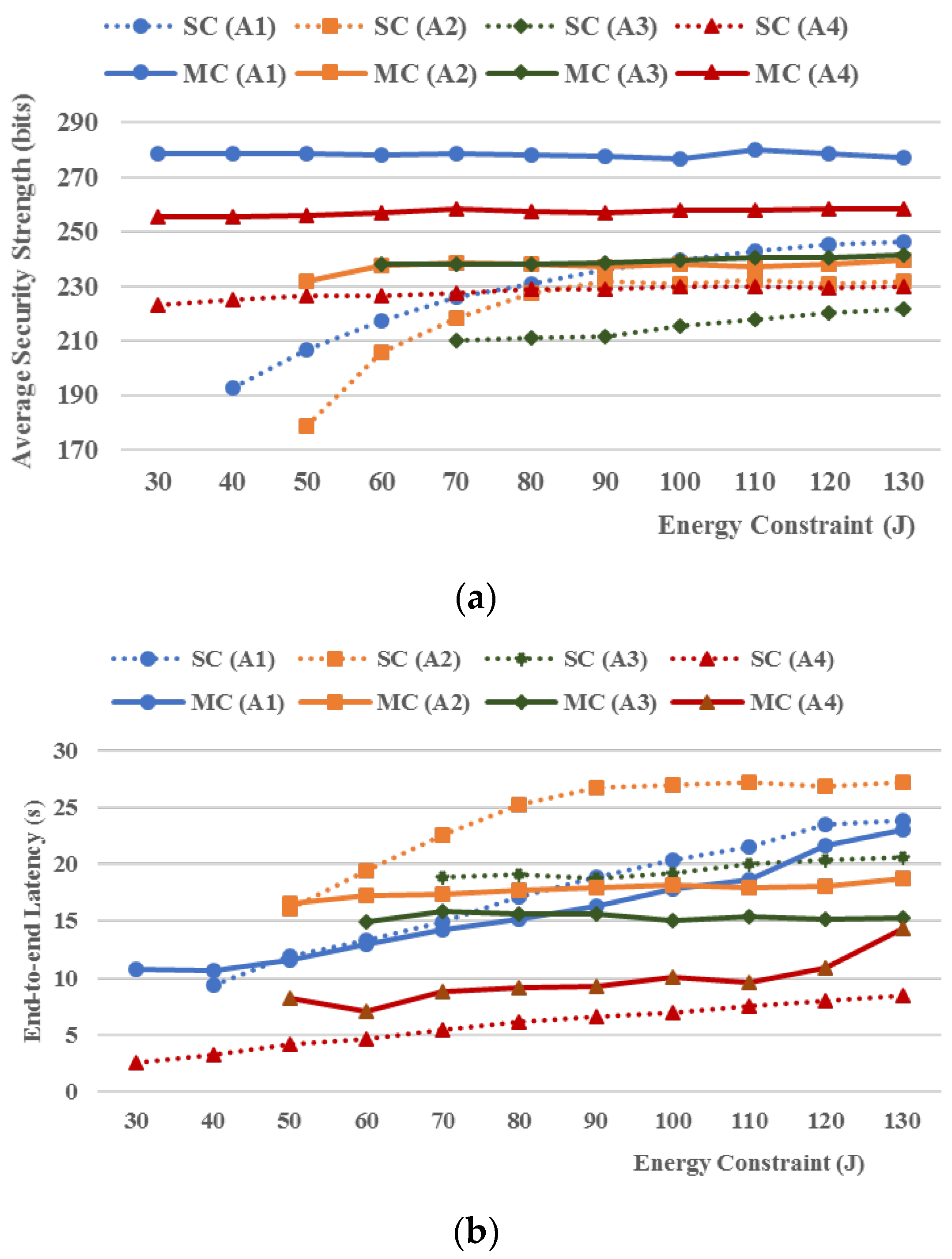

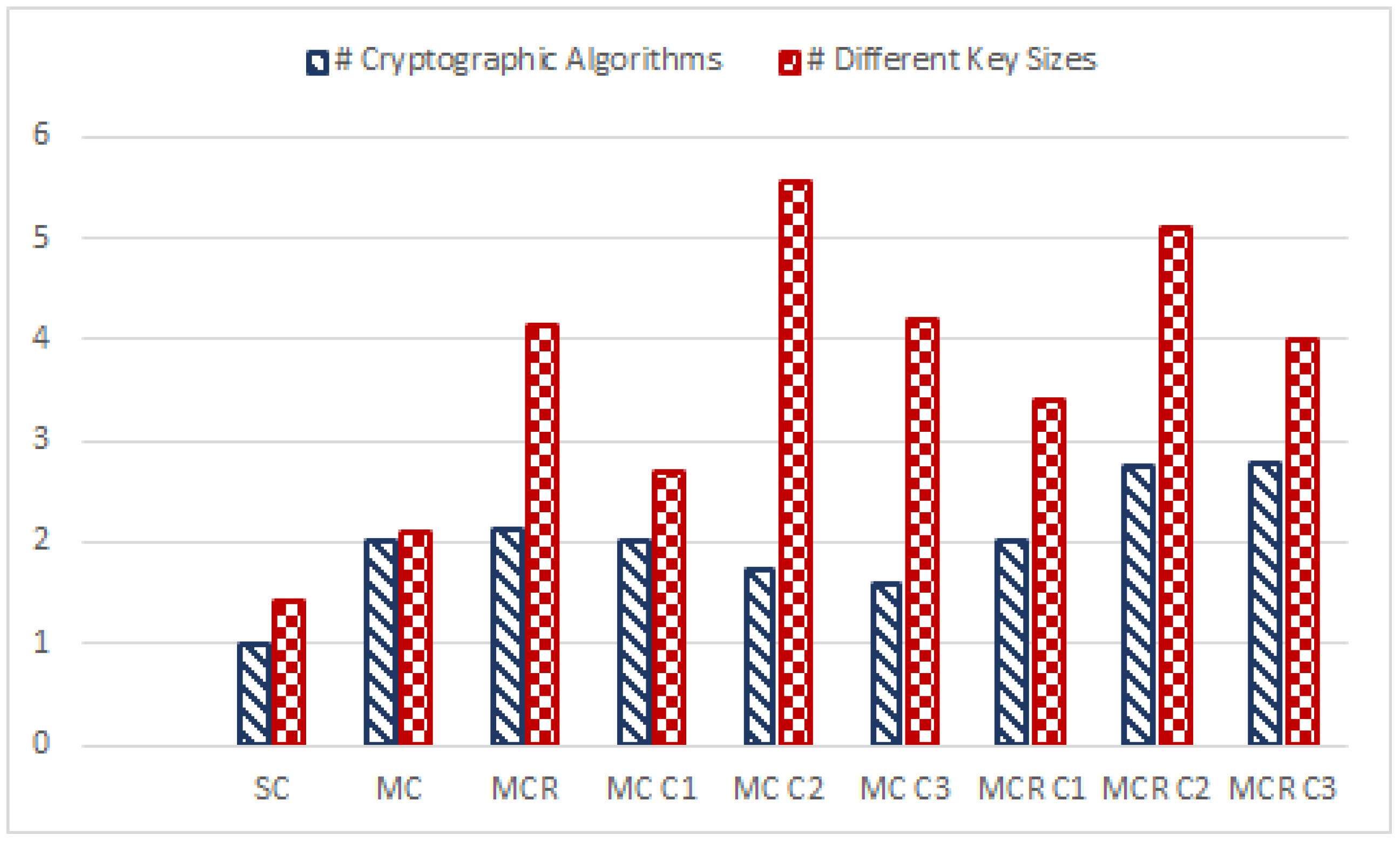

5.3. MC/MCR Security Model

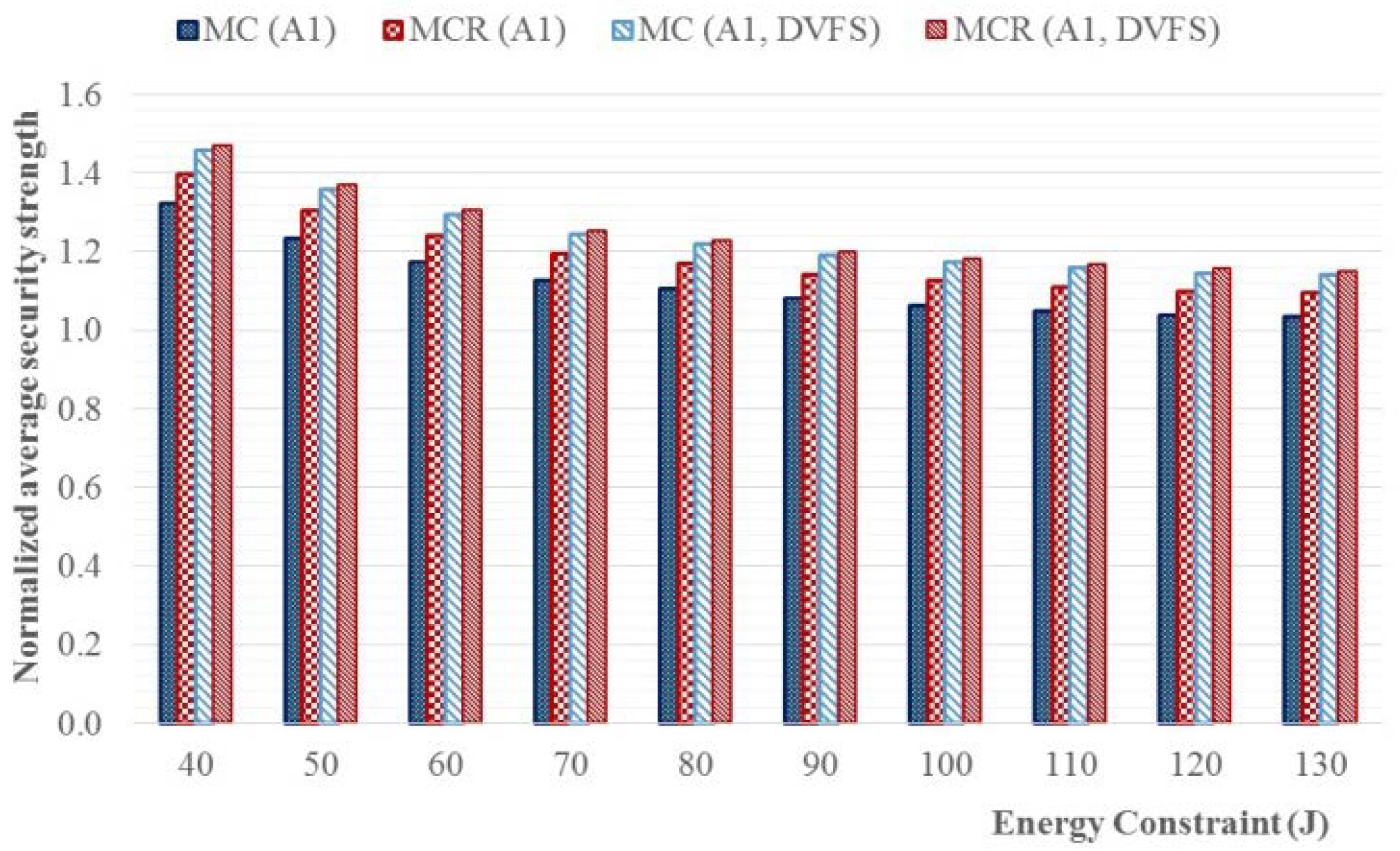

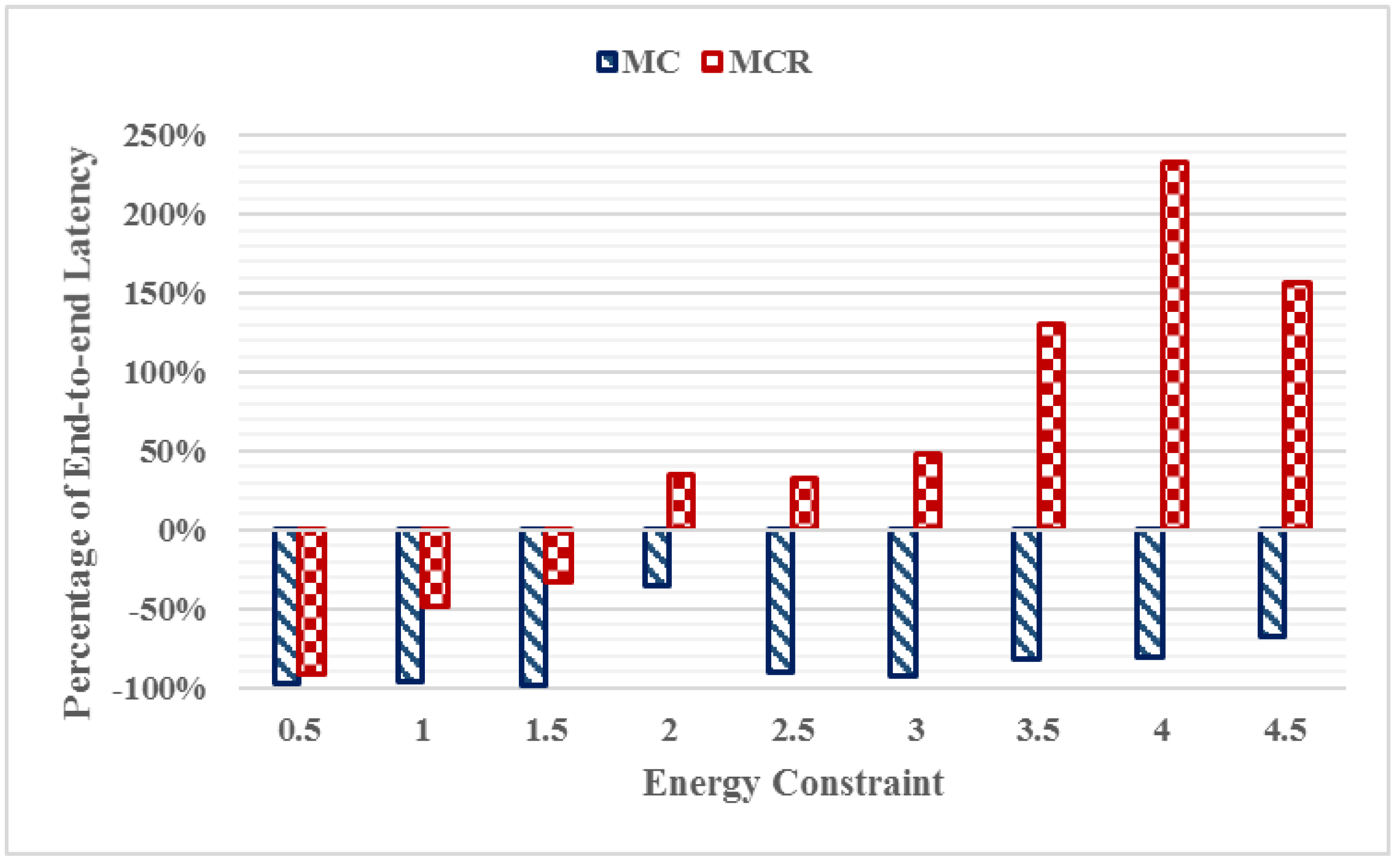

5.4. Mixed Cryptography Security Model with Security Policy Constraints

6. Conclusions and Future Work

Author Contributions

Conflicts of Interest


| Security Level | Cryptographic Algorithm | Key Size (bits)/Rounds | Equivalent Rijndael Key Size (bits) |
|---|---|---|---|
| 21 | ECC | 571 | 285.5 |
| 20 | Rijndael | 256/14 | 256 |
| 19 | Rijndael | 256/13 | 256 |
| 18 | Rijndael | 256/12 | 256 |
| 17 | Rijndael | 256/11 | 256 |
| 16 | Rijndael | 256/10 | 256 |
| 15 | ECC | 409 | 204.5 |
| 14 | Rijndael | 192/13 | 192 |
| 13 | Rijndael | 192/12 | 192 |
| 12 | Rijndael | 192/11 | 192 |
| 11 | Rijndael | 192/10 | 192 |
| 10 | ECC | 283 | 142 |
| 9 | Rijndael | 128/12 | 128 |
| 8 | Rijndael | 128/11 | 128 |
| 7 | Rijndael | 128/10 | 128 |
| 6 | ECC | 233 | 116.5 |
| 5 | RSA | 2048 | 112 |
| 4 | 3 TDEA | 112 | 112 |
| 3 | ECC | 163 | 81.5 |
| 2 | RSA | 1024 | 80 |
| 1 | 2 TDEA | 80 | 80 |
| 0 | None | 0 | 0 |

| Security Level | Cryptographic Algorithm | Key Size (bits) | Equivalent Rijndael Key Size (bits) |
|---|---|---|---|
| 12 | ECC | 571 | 285.5 |
| 11 | Rijndael | 256 | 256 |
| 10 | ECC | 409 | 204.5 |
| 9 | Rijndael | 192 | 192 |
| 8 | ECC | 283 | 142 |
| 7 | Rijndael | 128 | 128 |
| 6 | ECC | 233 | 116.5 |
| 5 | RSA | 2048 | 112 |
| 4 | 3 TDEA | 112 | 112 |
| 3 | ECC | 163 | 81.5 |
| 2 | RSA | 1024 | 80 |
| 1 | 2 TDEA | 80 | 80 |
| 0 | None | 0 | 0 |
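The 13-level table above orders mixed cryptography configurations so that equivalent Rijndael strength never decreases as the level rises. A minimal sketch of how such a table could be queried: encode the rows verbatim and pick the lowest level that meets a required equivalent key size. The helper `lowest_level_meeting` and the idea of choosing the weakest adequate level (on the assumption that lower levels are cheaper in latency and energy) are hypothetical illustrations, not the paper's selection procedure.

```python
from typing import NamedTuple


class SecurityLevel(NamedTuple):
    level: int
    algorithm: str
    key_bits: int
    equiv_rijndael_bits: float


# Rows copied from the 13-level table, stored in ascending level order.
SECURITY_LEVELS = [
    SecurityLevel(0, "None", 0, 0.0),
    SecurityLevel(1, "2 TDEA", 80, 80.0),
    SecurityLevel(2, "RSA", 1024, 80.0),
    SecurityLevel(3, "ECC", 163, 81.5),
    SecurityLevel(4, "3 TDEA", 112, 112.0),
    SecurityLevel(5, "RSA", 2048, 112.0),
    SecurityLevel(6, "ECC", 233, 116.5),
    SecurityLevel(7, "Rijndael", 128, 128.0),
    SecurityLevel(8, "ECC", 283, 142.0),
    SecurityLevel(9, "Rijndael", 192, 192.0),
    SecurityLevel(10, "ECC", 409, 204.5),
    SecurityLevel(11, "Rijndael", 256, 256.0),
    SecurityLevel(12, "ECC", 571, 285.5),
]


def lowest_level_meeting(min_equiv_bits: float) -> SecurityLevel:
    """Return the lowest level whose equivalent Rijndael key size is at least
    min_equiv_bits (hypothetical selection helper)."""
    for row in SECURITY_LEVELS:
        if row.equiv_rijndael_bits >= min_equiv_bits:
            return row
    raise ValueError(f"no security level reaches {min_equiv_bits} equivalent bits")
```

Because the equivalent strength column is non-decreasing, the linear scan returns the weakest adequate configuration: requiring at least 100 equivalent bits selects level 4 (3 TDEA), and requiring 130 selects level 8 (ECC with a 283-bit key).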

| | VBODT (HCHC) | HCLC | LCHC | LCLC |
|---|---|---|---|---|
| Tasks | 10 | 10 | 12 | 11 |
| Connectivity (edges/node) | 0.9 | 1.1 | 1.16 | 1.27 |
| Avg. Latency (ms) | 357 (0.04, 2.8 K) | 360 (66, 609) | 60.2 (18, 99) | 58 (31, 95) |
| Avg. Comm. (words) | 177 K (10, 307 K) | 645 (237, 928) | 55 K (4.5 K, 98 K) | 509 (45, 977) |
| HW Speedup | 13 X (0.6–12 X) | 10 X (2–60 X) | 6 X (1–15 X) | 6 X (1–15 X) |
| HW Static Power (mW) | 78.1 (14.8, 123) | 78.1 (14.8, 123) | 78.1 (14.8, 123) | 78.1 (14.8, 123) |
| HW Dynamic Power (mW) | 125.8 (14.9, 785) | 125.8 (14.9, 785) | 62.9 (7.5, 392.5) | 62.9 (7.5, 392.5) |
| Energy Constraint | 10 J | 0.5 J | 1 J | 0.5 J |
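The benchmark table pairs per-task averages (software latency, hardware speedup, static and dynamic hardware power) with a per-benchmark energy constraint. A minimal sketch of how those quantities could be combined, under two assumptions that this text does not confirm: hardware latency equals software latency divided by the speedup, and energy is total power times execution time. The names `HwTaskRun` and `meets_energy_constraint` are hypothetical, and the paper's power model may account for static power differently (for example, over the whole schedule rather than per task).

```python
from dataclasses import dataclass


@dataclass
class HwTaskRun:
    """Hypothetical record of one task executed in hardware."""
    sw_latency_ms: float     # software execution latency of the task
    hw_speedup: float        # hardware speedup factor, e.g. 13 for "13 X"
    static_power_mw: float   # hardware static power while the task runs
    dynamic_power_mw: float  # hardware dynamic power while the task runs

    @property
    def hw_latency_ms(self) -> float:
        # Assumed model: hardware latency = software latency / speedup.
        return self.sw_latency_ms / self.hw_speedup

    @property
    def energy_j(self) -> float:
        # E = P * t; mW * ms = 1e-6 J.
        return (self.static_power_mw + self.dynamic_power_mw) * self.hw_latency_ms * 1e-6


def meets_energy_constraint(runs: list[HwTaskRun], ec_j: float) -> bool:
    """True if the summed energy of all task runs fits within the budget ec_j."""
    return sum(r.energy_j for r in runs) <= ec_j
```

Plugging in the VBODT averages (357 ms, 13 X, 78.1 mW, 125.8 mW) gives roughly 5.6 mJ per task under this model, so ten such tasks use about 0.056 J, well inside a 10 J budget but above a hypothetical 0.05 J one.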

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Nam, H.; Lysecky, R. Mixed Cryptography Constrained Optimization for Heterogeneous, Multicore, and Distributed Embedded Systems. Computers 2018, 7, 29. https://doi.org/10.3390/computers7020029
