1. Introduction

Accurate information about the forest is essential for making well-founded management decisions. The necessary information has traditionally been obtained from field visits and sample-based forest inventories [1]. To improve the accuracy, more samples are collected. However, field measurements are expensive and time-consuming, and the method is inefficient on larger scales. Remote sensing techniques complement and can further increase the value of the field-inventoried data, providing large, detailed coverage at acceptable cost [2,3,4]. The combined use of remote sensing data and field-based measurements has been evaluated for estimation of common forest attributes, such as Lorey's mean height, HL (the tree height weighted by its basal area, BA), stem diameter, stem volume (VOL), and biomass [5,6,7,8].
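As a brief illustration of the basal-area weighting behind Lorey's mean height, the following minimal sketch computes HL from per-tree heights and breast-height diameters. The function names and the sample trees are hypothetical and only serve the illustration; they are not taken from the study.

```python
import math


def basal_area_m2(dbh_cm):
    """Cross-sectional stem area (m^2) for a breast-height diameter given in cm."""
    radius_m = dbh_cm / 200.0  # cm diameter -> m radius
    return math.pi * radius_m ** 2


def loreys_mean_height(heights_m, dbh_cm):
    """Mean tree height weighted by each tree's basal area."""
    areas = [basal_area_m2(d) for d in dbh_cm]
    total_area = sum(areas)
    if total_area == 0:
        raise ValueError("at least one tree with a positive diameter is required")
    return sum(g * h for g, h in zip(areas, heights_m)) / total_area


if __name__ == "__main__":
    # Hypothetical plot: the thicker tree dominates the weighted mean.
    print(loreys_mean_height([10.0, 20.0], [10.0, 20.0]))
```

Because the weights grow with the square of the diameter, HL lies closer to the height of the large trees than the arithmetic mean height does.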

There are numerous remote sensing techniques available, each with its own advantages and disadvantages. For the past 10 years, airborne laser scanning (ALS) has been considered the most accurate remote sensing technique for forest estimations [5,6,7]. The strength of ALS is its ability to reconstruct the forest in three dimensions (3D) at a very high level of detail. However, it is a rather expensive technique, which restricts its use for frequent acquisitions and larger areas. Moreover, the airborne platform is not directly available to most people and involves demanding logistics, which might limit its use in small applications, for example by private forest owners.

To cope with these problems related to costs, availability, and practicalities, image-matching techniques may be an alternative. In its simplest form, the same objects (pixels) are detected in at least two images acquired from different positions in space and, based on the apparent displacements (parallaxes) and the known acquisition positions, the x, y, z coordinates of the objects can be computed. Digital surface models (DSMs) can be computed from air- and space-borne images, which in combination with an accurate digital terrain model (DTM) can be used to derive forest attributes [9,10,11]. Typical 3D attributes, e.g., forest height and some volumetric metric, can be derived at sufficient quality (better than traditional field inventory methods currently used by many forest companies), and the spectral information can be added to further improve model accuracies [10,12]. Airborne photogrammetry is an old, well-known technique used in many countries including Sweden, but it suffers from high costs similar to those of ALS. However, it has the advantage of also providing spectral data, and promising results for estimation of forest attributes have been reported by, e.g., Bohlin et al. [13], Stepper et al. [14], and White et al. [11]. An attractive alternative to the expensive aerial platform is satellites, which provide large coverage at reasonable cost and short repetition intervals. New stereo acquisitions can simply be ordered directly via the Internet. Moreover, the resolution of the very high resolution (VHR) satellite sensors available today (~0.5 m ground sampling distance) is similar to that of imagery from airborne platforms flown at ~5000 m elevation (commonly used for photogrammetry). Additionally, the VHR sensors often provide more and narrower spectral bands, facilitating complex analyses. The optical VHR sensors (including, e.g., GeoEye-1, Ikonos, Pléiades, and WorldView-2) are normally steerable, which makes stereo or even tri-stereo acquisitions available from a single overpass; this is crucial for obtaining two cloud-free images within a short temporal interval.
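The combination of an image-matched DSM with a DTM described above can be sketched as a cell-wise subtraction followed by the extraction of height metrics. The example below is a minimal sketch under stated assumptions: rasters are represented as flat lists of elevations, negative differences are clamped to zero, and the percentile uses the nearest-rank definition. None of these choices is taken from the study, and the function names and sample values are hypothetical.

```python
import math


def canopy_heights(dsm, dtm):
    """Height above ground per cell: surface elevation minus terrain elevation.

    Negative differences (an assumption: treated as matching noise) are set to 0.
    """
    if len(dsm) != len(dtm):
        raise ValueError("DSM and DTM must cover the same cells")
    return [max(s - t, 0.0) for s, t in zip(dsm, dtm)]


def height_percentile(heights, p):
    """Nearest-rank percentile (p in 0..100) of a non-empty list of heights."""
    if not heights:
        raise ValueError("no heights given")
    ordered = sorted(heights)
    rank = max(1, math.ceil(p / 100.0 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical elevations (m above sea level) for five cells in one plot.
    dsm = [210.0, 215.0, 222.0, 205.0, 230.0]
    dtm = [200.0, 200.0, 200.0, 200.0, 200.0]
    heights = canopy_heights(dsm, dtm)
    print(height_percentile(heights, 90), sum(heights) / len(heights))
```

Other percentile definitions (for example with linear interpolation) give slightly different values for small samples, so the definition should be kept fixed when metrics are compared between sensors.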

Thus far, a limited number of studies have used optical VHR sensors for image matching and estimation of forest attributes. However, they have covered different conditions. Kayitakire et al. [15] used textural metrics from Ikonos-2 imagery to retrieve different forest attributes in temperate forest with mainly spruce in Belgium. They predicted top height with 2.2 m RMSE (10%), stocking (stems·ha⁻¹) with 276 stems·ha⁻¹ (29%), and BA with 7.1 m²·ha⁻¹ (16%). St-Onge et al. [16] evaluated the accuracy of average dominant forest height (1.7 m RMSE, 8%) and above-ground biomass (AGB; 71 tons·ha⁻¹, 47%) at plot level, based on an Ikonos stereo pair and an ALS DTM at a Canadian boreal test site located in Quebec. The coefficient of determination (R²) reached 0.91 and 0.79, respectively. WorldView-2 imagery has been used in studies from different biomes around the globe. Ozdemir and Karnieli [17] evaluated numerous structural parameters in a dryland forest plantation with mostly pine trees in Israel. They found that WorldView-2 textural metrics could be used to estimate BA with 1.8 m²·ha⁻¹ RMSE (17%), stocking with 110 stems·ha⁻¹ (29%), and VOL with 27 m³·ha⁻¹ (44%), for 29 plots of 30 m × 30 m. One conclusion was that further studies should cover different ecological regions. Straub et al. [18] used Cartosat-1 and WorldView-2 stereo images to estimate growing stock (VOL) in a mixed German temperate forest. They found the RMSE for VOL to be 162 m³·ha⁻¹ (50%) with Cartosat-1 and 143 m³·ha⁻¹ (44%) with WorldView-2 data at plot level. Similarly, Shamsoddini et al. [19] used WorldView-2 multispectral data to map pine plantation structures in a temperate forest in New South Wales, Australia. They investigated several combinations of spectral and height derivatives to explain tree height, diameter, VOL, BA, and stocking. Their final models were combinations of different spectral derivatives and height and textural metrics. The RMSEs were 1.9 m (8%) for tree height, 4.1 cm (14%) for diameter, 90 m³·ha⁻¹ (30%) for VOL, 7.7 m²·ha⁻¹ (23%) for BA, and 151 trees·ha⁻¹ (25%) for stocking. They pointed out that textural features perform better than spectral information for estimating forest attributes. Perko et al. [20] examined the mapping potential of Pléiades stereo and triplet data at two mountainous test sites, located in Trento, Italy, and Innsbruck, Austria. Their focus was geo-locational accuracy and height accuracy compared to ALS data. They found it necessary to manually assign ground control points (GCPs) to achieve sub-pixel accuracy and, moreover, that image matching computed from an image triplet was preferable to matching from only an image pair.

Immitzer et al. [21] used WorldView-2 stereo images in combination with national forest inventory data to map growing stock wall-to-wall in a temperate forest in Bavaria, Germany. They found the combination of spectral and height data to perform best, with an R² of 0.53 and an RMSE for VOL of 120 m³·ha⁻¹ (32%) at plot level. Maack et al. [22] used WorldView-2 imagery for a German test site and Pléiades data for a test site located in Chile in order to estimate AGB. They emphasized the evaluation of combining spectral derivatives and textural metrics with image-matched height metrics. They found the highest model performance when combining either height metrics and spectral derivatives, or height metrics and textural metrics. The model combining height metrics and spectral derivatives had an RMSE of 47 tons·ha⁻¹ (24%) at the German test site and 59 tons·ha⁻¹ (36%) at the test site in Chile. Both Immitzer et al. [21] and Maack et al. [22] obtained their main model contributions from the height metrics, while the spectral/textural contributions were rather limited. In Finland, Yu et al. [7] used WorldView-2 data to evaluate forest attributes in boreal forest. They compared numerous remote sensing techniques to estimate AGB (22 tons·ha⁻¹ RMSE, 16%), VOL (43 m³·ha⁻¹, 16%), BA (4.3 m²·ha⁻¹, 16%), basal area-weighted mean diameter (3.4 cm, 13%), and HL (1.4 m RMSE, 7%) at plot level. They found the upper height percentiles 80 to 100 (p80 to p100) to be the most powerful predictors for HL and mean diameter, while p30, mean height, and a canopy closure metric were most important for estimation of VOL and AGB.
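The accuracies quoted in this review are RMSEs, given in absolute units and as a percentage. The sketch below assumes that the percentage is the RMSE divided by the mean of the field-observed values, which is a common convention but may differ between the cited studies. The function names and the sample values are hypothetical.

```python
import math


def rmse(observed, predicted):
    """Root mean square error between paired observations and predictions."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("need two non-empty sequences of equal length")
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def relative_rmse(observed, predicted):
    """RMSE as a percentage of the mean observed value (assumed convention)."""
    mean_observed = sum(observed) / len(observed)
    return 100.0 * rmse(observed, predicted) / mean_observed


if __name__ == "__main__":
    # Hypothetical plot-level heights (m): field reference vs. model estimate.
    field = [10.0, 20.0, 30.0]
    model = [12.0, 18.0, 30.0]
    print(rmse(field, model), relative_rmse(field, model))
```

Since the relative value depends on the mean of the reference data, the same absolute RMSE yields a higher percentage in forests with low mean volume or biomass, which is worth keeping in mind when studies from different biomes are compared.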

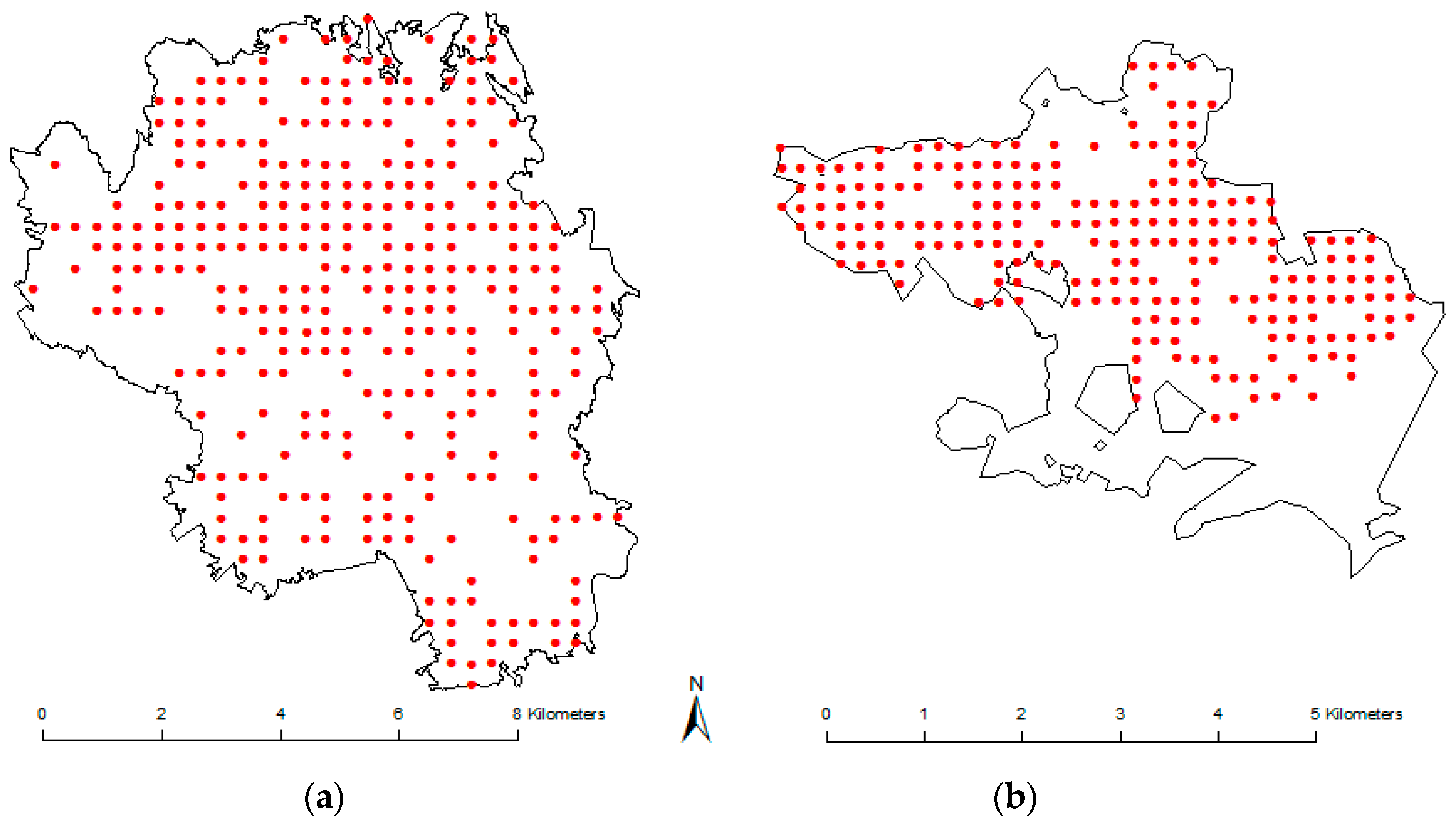

The review of past articles shows that few papers [20,22] have evaluated Pléiades satellite data for forestry purposes. The objective of this study is therefore to examine the use of Pléiades satellite data for estimating forest attributes in boreal forest, when a high-resolution DTM from ALS is available. Specifically, the explanatory capacity of using spectral data and texture metrics in addition to image-matched height metrics is assessed. The evaluated forest attributes are HL, ALS height p90, BA, VOL, and AGB. The attribute ALS p90, which is highly correlated with HL, is included to facilitate comparisons with other sensors and with regions where field data are not available. For comparison, the forest attributes are also estimated solely from ALS data.

4. Discussion

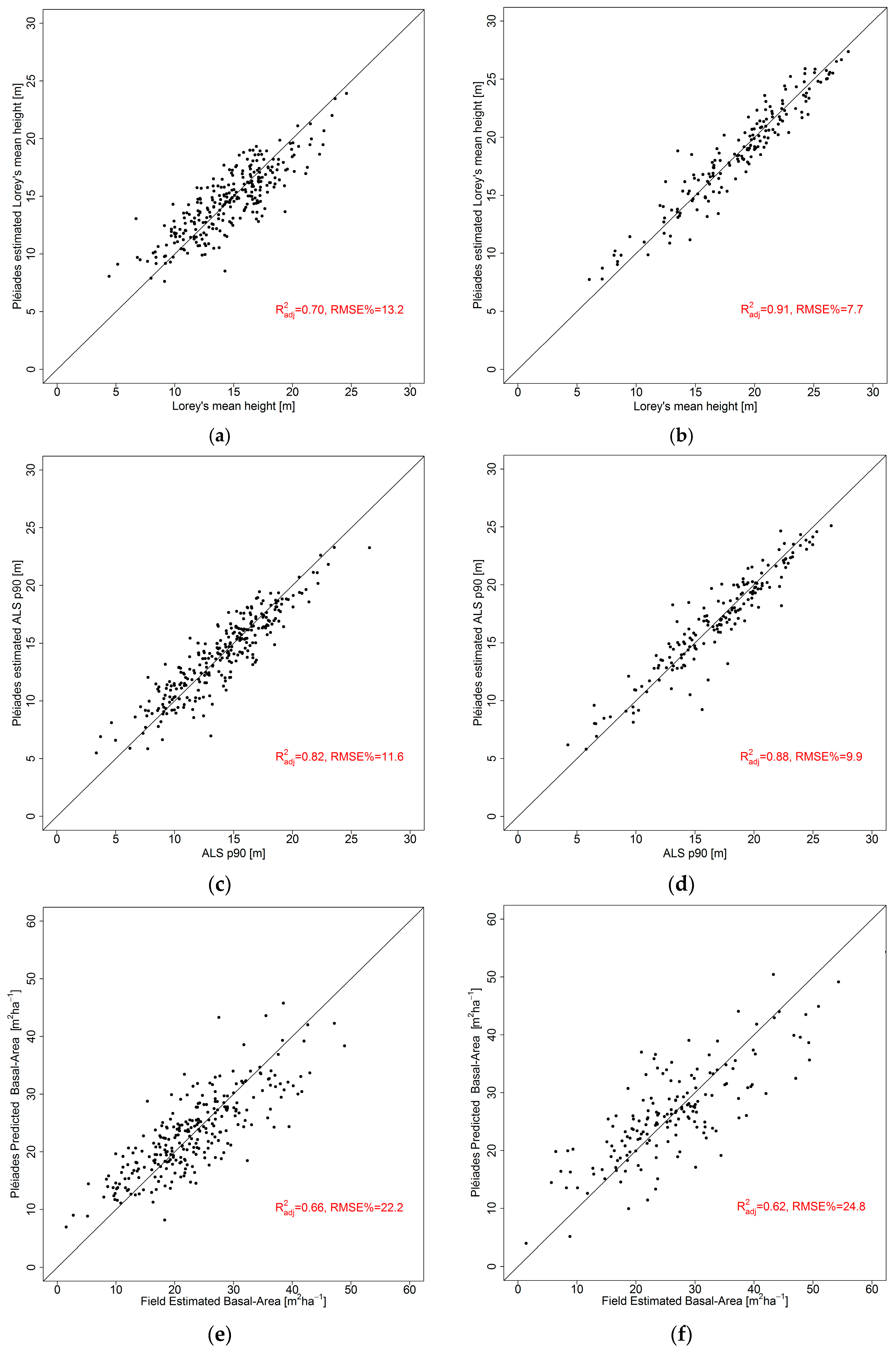

The image-matched height metrics showed considerable potential for estimation of the target variables and, by also adding spectral derivatives and textural metrics, most models could be improved further. The tree height HL was almost entirely described by the height metrics, and the RMSE decreased only from 2.03 m to 1.92 m in Krycklan and from 1.62 m to 1.44 m in Remningstorp (Table 6 and Table 7). Similarly, the RMSE for ALS p90 decreased from 1.68 m to 1.62 m in Krycklan, while no improvement could be achieved in Remningstorp (Table 6 and Table 7). This indicates that if only tree heights are of interest, there is limited benefit in also acquiring and processing spectral and textural data. The accuracy of the heights modelled from Pléiades data is lower than that of models based solely on ALS data in Krycklan. However, HL was estimated better from VHR data (1.44 m) than from ALS data (1.60 m) in Remningstorp (Table 7 and Table 8). The accuracy of the modelled heights is similar to or slightly better than that of past studies based on other VHR sensors. When image-matched heights from the Ikonos sensor were used, the dominant forest height was estimated with 1.7 m RMSE [16], and when models based purely on spectral/textural features were used, Shamsoddini et al. [19] estimated the tree height with 1.9 m RMSE using WorldView-2 imagery; correspondingly, Kayitakire et al. [15] estimated the top height with 2.2 m RMSE using Ikonos imagery. However, it is interesting that the latter two obtained such low RMSE values from models based merely on spectral/textural features. The pine plantation used as field data by Shamsoddini et al. [19] might have increased the importance of texture, as the forest is managed in a similar way throughout. This hypothesis is strengthened by the results of Kayitakire et al. [15], whose test site consisted almost entirely of a well-managed spruce plantation. This could possibly explain the slightly larger improvement of height estimations in Remningstorp compared to Krycklan when the spectral/textural features were added (0.24 m and 0.12 m for HL, respectively), as Remningstorp is highly managed, whereas Krycklan contains homogeneous forest to a lower degree. A similar theory was also presented by Maack et al. [22], who claimed that naturally regenerated forest would be more homogeneous and therefore more sensitive to textural metrics and less dependent on species, compared to other forests. Yu et al. [7] found that HL could be estimated with 1.4 m RMSE in a Finnish boreal forest with approximately 75% coniferous forest. However, they used square sample plots of 32 m × 32 m, which gives a considerably larger plot area than in the current study (0.1 ha vs. 0.03 ha).
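The plot areas compared above can be checked with a short calculation. The sketch assumes that the plots of the current study are circular with a 10 m radius, which is an inference from the stated area of 0.03 ha and is not confirmed in this section; the function names are hypothetical.

```python
import math

SQUARE_METRES_PER_HECTARE = 10_000.0


def square_plot_ha(side_m):
    """Area in hectares of a square plot with the given side length."""
    return side_m ** 2 / SQUARE_METRES_PER_HECTARE


def circular_plot_ha(radius_m):
    """Area in hectares of a circular plot with the given radius."""
    return math.pi * radius_m ** 2 / SQUARE_METRES_PER_HECTARE


if __name__ == "__main__":
    print(round(square_plot_ha(32.0), 2))    # 32 m x 32 m square plot
    print(round(circular_plot_ha(10.0), 2))  # assumed 10 m radius plot
```

Under this assumption the square plots cover roughly three times the area of the circular ones, so more trees are averaged per plot and plot-level errors are expected to be smaller.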

The estimation of BA was highly dependent on the height metrics, and the spectral/textural features added a limited improvement (0.5 to 0.8 m²·ha⁻¹; Table 6 and Table 7). The mean height was a suitable estimator for BA in Krycklan, whereas in Remningstorp the squared mean height was better suited. This could be due to different forest management actions, as thinning operations produce stands with different characteristics with respect to HL, but similar characteristics for BA. Remningstorp is a highly managed test site, while Krycklan follows traditional management. Nevertheless, BA is frequently used to decide on forest management. The ALS-based models described BA slightly better (4.3 m²·ha⁻¹ and 5.7 m²·ha⁻¹; Table 8). This might be due to the higher degree of canopy penetration of ALS compared to the optical wavelengths in VHR data. The Pléiades-based models estimating BA achieved the lowest accuracy of the different target features, with RMSEs of 5.1 m²·ha⁻¹ (22%) and 6.6 m²·ha⁻¹ (25%) at the two test sites. In absolute terms, this is slightly better than Shamsoddini et al. [19] and Kayitakire et al. [15], who reported BA RMSEs of 7.7 m²·ha⁻¹ (23%) for a pine plantation and 7.1 m²·ha⁻¹ (16%) for a spruce-dominated forest, respectively. However, the RMSE is higher in both absolute and relative terms than that of Yu et al. [7], who reported 4.3 m²·ha⁻¹ (16%), and Ozdemir and Karnieli [17], who reported 1.8 m²·ha⁻¹ (17%). The main difference, and a possible explanation, is the larger plots (~0.1 ha) used in the two latter studies. Yu et al. [7] also evaluated the effect of plot size and showed that the error of their BA estimates increased by 3 to 10 percentage points when the plot size decreased from 32 m × 32 m to 16 m × 16 m. Consequently, the present results seem to be of similar magnitude and reasonably accurate. Overall, BA is estimated with accuracy comparable to that of VOL or AGB, regardless of sensor, for both our test sites, and this is also the case in the study by Yu et al. [7].

VOL and AGB are highly correlated, as was reflected in the results. The R² values were between 0.73 and 0.77, and the RMSEs were 25.4% to 26.9% for both test sites and both attributes. The scatter plots show that estimates at higher volume/biomass have considerably higher standard deviations, which might indicate limited applicability for denser forest and southern forests with higher volumes/biomasses. Experience from using field data from these test sites together with other sensors (for example TanDEM-X radar data [36]) has shown that the standard deviations can remain constant along the entire evaluated range. In the current study, the heights generally had to be transformed to achieve significance in the regression modelling: in Krycklan the heights were raised to the power of 2.3, while in Remningstorp the height was raised to the power of 2.6 and 2.7 for VOL and AGB, respectively (Table 6 and Table 7). In most VOL/AGB models, a non-transformed height variable complemented the transformed one to constitute the best model. The results are similar to what St-Onge et al. [16] achieved from Ikonos data (71 tons·ha⁻¹), and the RMSEs are lower than those Shamsoddini et al. [19] estimated from WorldView-2 spectral/textural features (90 m³·ha⁻¹, 30%). In relative terms, they are also lower than those of Ozdemir and Karnieli [17], who reported 44% RMSE (27 m³·ha⁻¹) for VOL, based on WorldView-2 imagery of an Israeli pine plantation forest. Immitzer et al. [21] estimated VOL with an RMSE of 120 m³·ha⁻¹ (32%) from WorldView-2 data in a German forest. Moreover, Maack et al. [22] used Pléiades data to estimate AGB at a test site in Chile, and achieved 59 tons·ha⁻¹ (36%). Considering the results from other studies, VHR data might be better suited for estimation of VOL/AGB in boreal forest than in other forest types. Moreover, the Pléiades data specifically appear slightly better suited for estimation of forest attributes than data from other VHR sensors.
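The model structure described above, a power-transformed height complemented by a non-transformed height, can be illustrated with an ordinary least-squares fit. This is a minimal sketch and not the authors' implementation: the exponent 2.3 is the value reported for Krycklan, while the heights, the coefficients used to generate the synthetic volumes, and all function names are hypothetical. The actual model forms are given in Table 6 and Table 7.

```python
def solve_linear(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x


def design_row(height_m, power=2.3):
    """Predictors for one plot: intercept, height, and power-transformed height."""
    return [1.0, height_m, height_m ** power]


def fit_ols(rows, y):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(k)]
    return solve_linear(xtx, xty)


def predict(coefficients, height_m, power=2.3):
    return sum(b * x for b, x in zip(coefficients, design_row(height_m, power)))


if __name__ == "__main__":
    # Synthetic, noise-free data generated from made-up coefficients.
    heights = [5.0, 10.0, 15.0, 20.0, 25.0, 30.0]
    volumes = [2.0 + 3.0 * h + 0.5 * h ** 2.3 for h in heights]
    coefficients = fit_ols([design_row(h) for h in heights], volumes)
    print(coefficients, predict(coefficients, 18.0))
```

The normal equations are used here only to keep the sketch dependency-free; with real, noisy plot data a numerically more robust solver (for example a QR-based least-squares routine) would be preferable, because height and transformed height are strongly correlated.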

It seems that the spectral and textural features were of greater importance for estimation of BA/VOL/AGB than for HL/ALS p90. This might be due to the lack of information about forest density in height metrics alone (BA was estimated with intermediate accuracy, R² = 0.51 to 0.58, from height metrics; Table 6), and this would therefore confirm the hypothesis that spectral/textural features from VHR imagery can contribute as predictors of forest density. This study also confirms the hypothesis presented by Immitzer et al. and Maack et al. [21,22] that height metrics are superior to spectral derivatives and textural metrics when estimation of forest attributes is considered. This study found the spectral derivatives to be of similar importance to textural metrics, when the textural features were computed from VHR imagery with 1-m resolution, using a moving window of 11 × 11 pixels. Smaller window sizes did not improve the results in this study, which is contrary to the conclusions of Maack et al. [22], who found that the smallest window size of 3 × 3 pixels gave the best AGB estimates. This study further confirms the hypothesis that textural metrics have greater importance for estimation of forest attributes in homogeneous forests (for example, plantation forests and well-managed forests) than in more heterogeneous ones [15,16,17,19,22].
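To make the notion of a second-order textural metric concrete, the sketch below builds a symmetric, normalised grey-level co-occurrence matrix (GLCM) for a single window and derives the contrast from it. It assumes that the window has already been quantised to integer grey levels and uses a horizontal offset of one pixel; the quantisation, the offsets, and the set of metrics used in the study are not specified in this section, and the function names are hypothetical.

```python
def glcm(window, levels, dx=1, dy=0):
    """Symmetric, normalised co-occurrence matrix of one image window.

    window: list of rows holding integer grey levels in range(levels).
    dx, dy: pixel offset between the two members of each pair.
    """
    counts = [[0.0] * levels for _ in range(levels)]
    rows, cols = len(window), len(window[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = window[r][c], window[r2][c2]
                counts[i][j] += 1.0  # count the pair in both directions
                counts[j][i] += 1.0  # so that the matrix is symmetric
    total = sum(sum(row) for row in counts)
    if total == 0:
        raise ValueError("window too small for the chosen offset")
    return [[value / total for value in row] for row in counts]


def contrast(p):
    """GLCM contrast: co-occurrence probabilities weighted by squared level difference."""
    n = len(p)
    return sum(p[i][j] * (i - j) ** 2 for i in range(n) for j in range(n))


if __name__ == "__main__":
    # Small hypothetical window with four grey levels.
    window = [
        [0, 0, 1, 1],
        [0, 0, 1, 1],
        [0, 2, 2, 2],
        [2, 2, 3, 3],
    ]
    print(contrast(glcm(window, levels=4)))
```

In a moving-window setting, the same two functions would be evaluated for the neighbourhood around every pixel (11 × 11 pixels in this study), which explains why larger windows increase the computational cost.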

The potential of using Pléiades data as a replacement for ALS data seems promising. Overall, models from both sensors perform in similar ranges, although the performance of the ALS-based models appears to be more repeatable across the test sites. Nevertheless, the Pléiades-based models surpassed the performance of ALS-based models regarding HL, VOL, and AGB in Remningstorp. A deeper understanding of the influence of the local forest conditions on the estimation results could increase the robustness and thus likelihood of replacing ALS data with Pléiades data.

Some of the past studies have reported biased estimates [7,22]. However, these were based on non-parametric models (i.e., random forest or nearest-neighbour methods), and this problem can therefore be ignored in this study, which is based on regression analysis. The experience from the current study is that image matching from Pléiades imagery performs as well as image matching with other VHR sensors (such as WorldView-2). Similarly to the Finnish study [7], it was found that the top height percentiles generally were most important, even though the mean height frequently became significant as an explanatory variable. In the current study, the ALS p90 was used as a height metric that is easy to obtain and practical for comparisons across test sites. It was also the ALS height metric that had the highest correlation with the image-matched height metrics. Sparse and low forest, typically young forest, was strongly affected by underestimation in the image matching. Similarly, forest plots containing glades were frequently assigned too-low heights. This is likely due to the few (or even absent) matching points. Plots with deciduous trees in leaf-off conditions were also frequently noticed as outliers, probably for similar reasons. In this study, there was no obvious saturation in the estimations of VOL or AGB. However, the absence of a saturation effect could not be confirmed either, as the estimates of VOL/AGB exceeding ~250 tons·ha⁻¹ or 500 m³·ha⁻¹ became erratic. In this study, most images had along-track incidence angles of about ±13° and, additionally, there was one near-nadir acquisition. According to past studies, this brings the along-track intersection angles close to the optimum for the reconstruction of stereo heights [10,37]. However, the near-nadir image for Krycklan was acquired at −8°, which is less optimal than about 0°, as it causes one image pair to have a difference of only 5°, while the next stereo pair has a difference of ca. 20°. This makes the overall tri-stereo setup for Krycklan less optimal than that for Remningstorp. However, it is difficult to evaluate its influence on the final reconstructed heights, as other differences also separate the test sites.
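The stereo geometry discussed above can be reproduced with simple arithmetic. The sketch assumes that the along-track intersection angle of two consecutive images equals the difference between their along-track incidence angles, and it uses the nominal values quoted in the text (about ±13°, with the middle image at −8° for Krycklan and about 0° for Remningstorp). The function name is hypothetical.

```python
def consecutive_intersection_angles(incidence_deg):
    """Angle differences (degrees) between neighbouring images, sorted along track."""
    ordered = sorted(incidence_deg)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


if __name__ == "__main__":
    krycklan = [-13.0, -8.0, 13.0]      # nominal values from the text
    remningstorp = [-13.0, 0.0, 13.0]   # nominal values from the text
    print(consecutive_intersection_angles(krycklan))
    print(consecutive_intersection_angles(remningstorp))
```

With these nominal angles, the Krycklan triplet contains one narrow pair and one wide pair, whereas the Remningstorp triplet is split into two equal pairs, which is the asymmetry the paragraph refers to.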

5. Conclusions

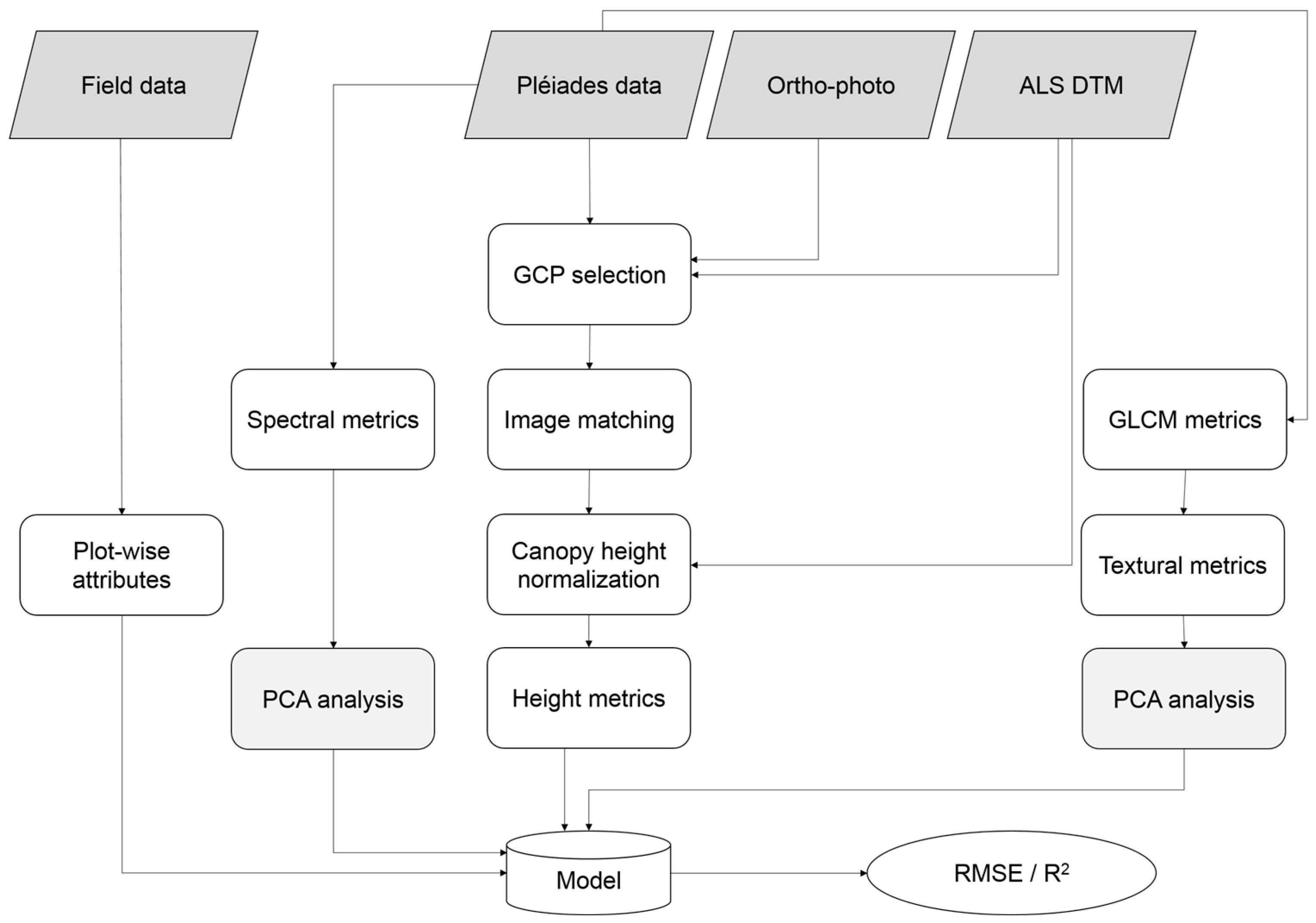

In this study, the potential of using very high resolution Pléiades imagery to estimate a number of common forest attributes in boreal forest was examined, when a high-resolution digital terrain model was available. The estimated attributes were HL, ALS p90, BA, VOL, and AGB, derived for 10-m plots. The explanatory variables were derived from three processing alternatives. Height metrics were extracted from image matching of the images acquired from different incidence angles. Spectral derivatives were derived by performing principal component analysis of the spectral bands. Lastly, second-order textural metrics were extracted from a grey-level co-occurrence matrix, computed with a moving window of 11 × 11 pixels. Principal component analysis was also applied to the textural metrics. The analysis took place at two Swedish test sites, Krycklan and Remningstorp, containing boreal and hemi-boreal forest. It was found that the image-matched height metrics were most important in all models, and that the spectral and textural metrics contained similar information. Nevertheless, the best estimations were obtained when all three explanatory sources were used. The lowest RMSEs were 1.4 m (7.7%) for HL, 1.7 m (10%) for ALS p90, 5.1 m²·ha⁻¹ (22%) for BA, 66 m³·ha⁻¹ (27%) for VOL, and 26 tons·ha⁻¹ (26%) for AGB. For comparison, the same forest attributes were also estimated from ALS data. Overall, the Pléiades-based models showed similar performance to the ALS-based models, and for some attributes (HL, VOL, and AGB) the Pléiades performance was even higher. The Pléiades results were similar across the test sites, despite the slightly different forest types and management. Both test sites contained mainly coniferous forest and hence no influence of species was assessed.
Sparse and low forest was generally assigned too-low heights in the image matching, and very dense forest with high volume/biomass was generally estimated with larger inaccuracy, which, however, could not directly be attributed to saturation. Textural features can improve the estimations, especially in homogeneous forest, and a larger moving window (11 × 11 pixels) was preferred over smaller ones, though the computational cost is substantial.

In summary, this paper has shown the potential of using VHR satellite images suitable for image matching for the estimation of numerous relevant forest attributes. The presented method was shown to be an attractive alternative to ALS, and this method has the potential of being repeated frequently at reasonable cost.