1. Introduction

Clouds often obscure target objects on the ground and thus have a significant impact on remote sensing image interpretation [1,2]. Further, the accuracy of cloud detection directly affects the inversion of biophysical variables in other applications [3]. Even when an image has extensive cloud coverage, it can still be useful as long as the specific areas of interest are not obscured. Therefore, the presence or absence of clouds in a particular image needs to be accurately determined.

Cloud detection is an active area of research in remote sensing, and many cloud detection methods have been proposed. These methods can be divided into two categories: those that use thermal infrared bands and those that do not. Methods that use thermal infrared bands often provide highly accurate cloud detection results. Zhu and Woodcock proposed an object-oriented Function of Mask (Fmask) cloud and shadow detection method for Landsat images using Top of Atmosphere (TOA) reflectance and brightness temperature; this method achieves a detection accuracy of 96.4% [4]. Huang et al. used clear view forest pixels as a reference to define cloud boundaries when separating clouds from clear view surfaces in a spectral-temperature space for forest change analysis [5]. Most high-resolution satellite images, however, lack a thermal infrared band, so researchers often use temporal and spatial correlation information from images in cloud detection algorithms. Sedano et al. [6] proposed a method that first compares pixel values between high-resolution images and cloud-free composites at lower spatial resolution acquired on almost the same dates to identify cloud pixels, and then obtains a cloud mask using object-based region growing. The advantage of this method is that it does not rely on thermal bands and can be used to extract clouds in the highest-resolution remote sensing images; the disadvantages are that it relies on lower spatial resolution composites from almost simultaneous acquisition dates and that it fails if there is a large cloud in the image. Tseng et al. [7] applied multi-temporal algorithms to cloud detection. This method has good accuracy, but its biggest disadvantage is that the acquisition dates of the reference image and the observed image must be very close. Han et al. proposed an automatic cloud detection method for high-resolution images that first detects clouds in a cloud-covered target image by applying a simple threshold; clouds in the peripheral region of the high-resolution image are then extracted using the difference between the target image and a reference image [8]. This method also needs to use temporal correlation among pixels.

On the other hand, methods have also been proposed that do not use thermal bands and rely on the attribute information of single images. Maximum and minimum filters and second-moment texture measures have been tested for detecting clouds and cloud shadows, based on an analysis of the different spectral and texture features of clouds, thin clouds, vegetation, bare ground, and roads [9]. Marais et al. [10] presented a simple image transform that optimally combines four image channels into a greyscale image for threshold-based cloud detection on Quickbird images. Fisher used reflectance features and automated morphological feature extraction to detect clouds in SPOT5 HRG imagery [11]. Wavelet analysis, pattern recognition, machine learning, and computer vision technologies have also been used for cloud detection [12,13,14,15,16]. These methods use spectral information to extract cloud areas; however, they apply only when the spectral features of other objects, such as buildings, are not similar to those of clouds. In high-resolution images, the spectral features of city buildings are similar to those of clouds [17]. Therefore, additional features are needed to discriminate clouds from buildings accurately. Clouds have high reflectance, relatively uniform and smooth texture, and irregular natural shapes, so integrating different features might provide a better solution for differentiating between clouds and highly reflective objects in high-resolution images.

Several classification methods based on the integration of spatial and spectral features have been proposed. These can be divided into two types: multi-classifier algorithms [18] and single classifier multi-feature algorithms [19,20]. The multi-classifier methods use a variety of classifiers for decision fusion and process multiple features in parallel. The single classifier multi-feature approach normalizes the different features and then applies a single classifier to vectors of hybrid features built from several related features. We selected the single classifier multi-feature approach as the basis for our new cloud detection method; however, one key issue facing single classifier multi-feature algorithms is the choice of classification method. Machine learning has been widely used in remote sensing image classification [21]. The application of artificial neural networks, support vector machines, and intelligent optimization algorithms such as genetic algorithms, particle swarm optimization, and artificial immune systems to remote sensing image classification reflects this trend [22,23,24,25,26,27].
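The single classifier multi-feature idea described above can be sketched as follows. This is only an illustration under stated assumptions: min-max scaling is assumed as the normalization step (the text does not specify one), and the function names and per-pixel feature values are hypothetical.

```python
from typing import Dict, List


def min_max_normalize(values: List[float]) -> List[float]:
    """Scale one feature to [0, 1]; min-max scaling is an assumed choice."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def build_hybrid_vectors(features: Dict[str, List[float]]) -> List[List[float]]:
    """Normalize each feature, then stack them into one vector per pixel."""
    names = sorted(features)  # fixed feature order: brightness, ndvi, texture
    normalized = [min_max_normalize(features[name]) for name in names]
    return [list(row) for row in zip(*normalized)]


# Hypothetical values for three pixels (not taken from the GF-1/2 data).
features = {
    "brightness": [0.20, 0.90, 0.55],
    "ndvi": [0.60, -0.05, 0.10],
    "texture": [12.0, 2.0, 7.0],
}
vectors = build_hybrid_vectors(features)
```

Each entry of `vectors` is one hybrid feature vector that a single classifier, such as an SVM, could take as input; normalizing first keeps a feature with a large numeric range from dominating the others.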

Some researchers have studied cloud features and machine learning for cloud detection. Wang et al. [28] compared the Support Vector Machine (SVM) with the Back Propagation Neural Network (BP-NN) for cloud detection using different training set sizes, finding that SVMs perform better than BP-NN when the sample size is small. Hughes et al. [29] developed a neural network approach to detect clouds in Landsat scenes using spectral and spatial information. Chenthan [30] compared linear, polynomial, and Gaussian RBF kernels in SVMs and reported a correct classification rate of 92.30% in cloud detection for the combination of texture features and a linear SVM. Başeski [31] used color- and line-based elimination as well as texture-based SVM classification to detect clouds in RGB images. Latry considered SVM cloud detection within the PLEIADES-HR framework [32]. Although many researchers have studied feature-based classification methods for cloud detection, many of these approaches are based on spectral and texture information only; they do not exploit other features, such as shape and NDVI, or combine multiple features in machine learning. Integrating multi-feature and machine learning strategies to detect clouds could not only allow features to complement each other but also make full use of the information in remote sensing images.

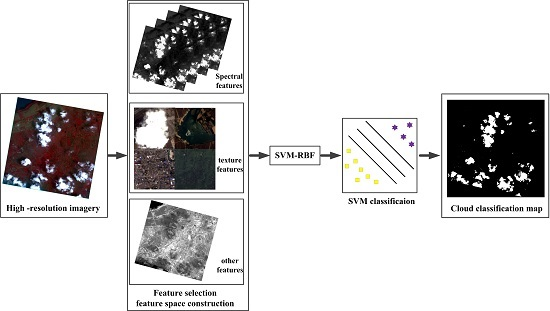

In this paper, we propose a new cloud detection approach based on machine learning and multi-feature fusion. It does not use the thermal band or rely on temporal information, and it is suitable for different cloud types, sizes, and densities and for different underlying surface environments. This paper is organized as follows. The first section describes the experimental data, discusses typical cloud features in Gao Fen-1/2 (GF-1/2) satellite images, details the selection of the typical characteristics of various images, and discusses the construction of the feature space and multi-feature fusion. The second section details our results. The third section presents a discussion, and the last section presents the conclusions drawn from our research.

4. Discussion

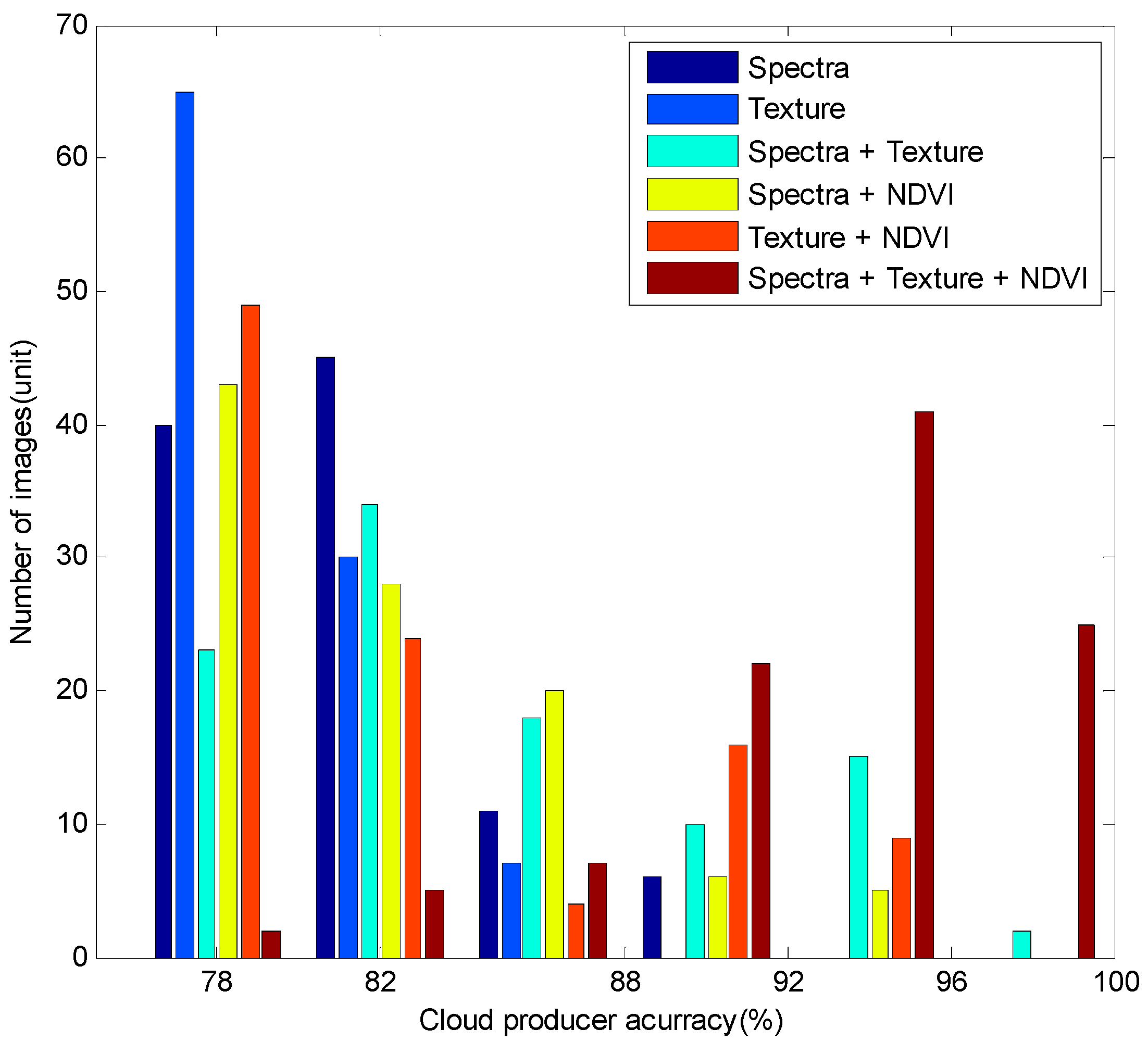

4.1. Accuracy Analysis of Multiple Features

To validate multi-feature fusion for cloud extraction, six feature combinations were evaluated on 102 GF-1/2 images: Spectra (S), Texture (T), Spectra + Texture (ST), Spectra + NDVI (SN), Texture + NDVI (TN), and Spectra + Texture + NDVI (STN). Treating cloud and non-cloud areas as two classes, we calculated the cloud producer accuracy, the cloud user accuracy, the overall accuracy, and the Kappa coefficient following [55]. These measures are defined in terms of three pixel counts: the agreement between the ground truth and the algorithm mask; the pixels that belong to clouds in the ground truth but that the classification technique failed to classify as clouds; and the pixels that belong to the background but are labeled as clouds.
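As an illustration of the accuracy measures described above, the following sketch computes them for a pair of binary masks. It assumes the conventional two-class definitions, whose notation may differ from [55]: producer accuracy is tp / (tp + fn), user accuracy is tp / (tp + fp), and Kappa compares the overall accuracy with the agreement expected by chance. The names `cloud_accuracy`, `tp`, `fn`, `fp`, and `tn` are hypothetical, and the example masks are not real data.

```python
from typing import Dict, Sequence


def cloud_accuracy(truth: Sequence[int], mask: Sequence[int]) -> Dict[str, float]:
    """Two-class accuracy measures; 1 marks a cloud pixel, 0 a background pixel."""
    if len(truth) != len(mask) or len(truth) == 0:
        raise ValueError("truth and mask must be non-empty and of equal length")
    pairs = list(zip(truth, mask))
    tp = sum(1 for t, m in pairs if t == 1 and m == 1)  # agreement on cloud
    fn = sum(1 for t, m in pairs if t == 1 and m == 0)  # cloud missed by the mask
    fp = sum(1 for t, m in pairs if t == 0 and m == 1)  # background labeled as cloud
    tn = sum(1 for t, m in pairs if t == 0 and m == 0)  # agreement on background
    n = tp + fn + fp + tn
    producer = tp / (tp + fn) if tp + fn else 0.0
    user = tp / (tp + fp) if tp + fp else 0.0
    overall = (tp + tn) / n
    # Chance agreement from the row and column totals of the confusion matrix.
    expected = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / (n * n)
    kappa = (overall - expected) / (1 - expected) if expected != 1 else 0.0
    return {"producer": producer, "user": user, "overall": overall, "kappa": kappa}


# Hypothetical 10-pixel example: 4 cloud pixels in the ground truth.
truth = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
mask = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
result = cloud_accuracy(truth, mask)
```

In this toy case one cloud pixel is missed and one background pixel is mislabeled, so the producer and user accuracies are both 0.75 and the overall accuracy is 0.8.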

Table 5 shows the number of images for each of the six feature combinations, grouped by producer accuracy into the ranges 76%–80%, 80%–84%, 84%–88%, 88%–92%, 92%–96%, and 96%–100%, together with the average producer accuracy of each feature combination. Figure 9 is the corresponding histogram.
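The grouping used for this kind of table can be sketched as below. The per-image accuracies are hypothetical placeholders rather than values from Table 5, and the convention that each range includes its lower edge, with only the last range also including its upper edge, is an assumption.

```python
from typing import List, Sequence


def bin_counts(values: Sequence[float], edges: Sequence[float]) -> List[int]:
    """Count values per range [edges[i], edges[i+1]); the last range is closed."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break  # each value falls into at most one range
    return counts


# Range edges in percent, matching the producer accuracy groups in the text.
edges = [76, 80, 84, 88, 92, 96, 100]
# Hypothetical per-image producer accuracies for one feature combination.
accuracies = [78.2, 85.0, 91.3, 93.7, 95.1, 97.4, 100.0]

counts = bin_counts(accuracies, edges)
average = sum(accuracies) / len(accuracies)
```

Running the same function over each feature combination would give one row of counts per combination, which is the layout a grouped histogram needs.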

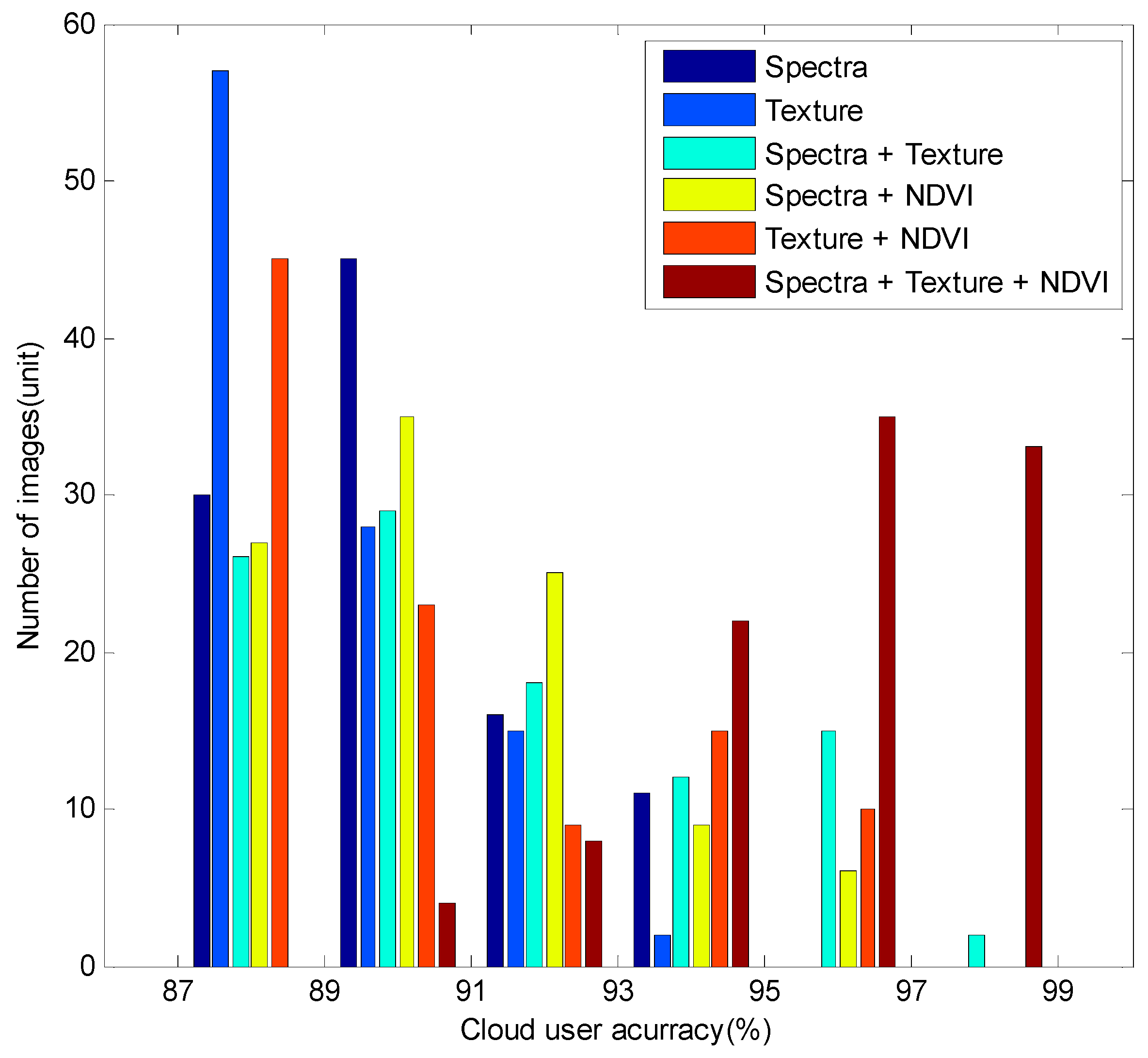

Table 6 shows the number of images for each of the six feature combinations, grouped by user accuracy into the ranges 86%–88%, 88%–90%, 90%–92%, 92%–94%, 94%–96%, and 96%–98%, together with the average user accuracy of each feature combination. Figure 10 is the corresponding histogram.

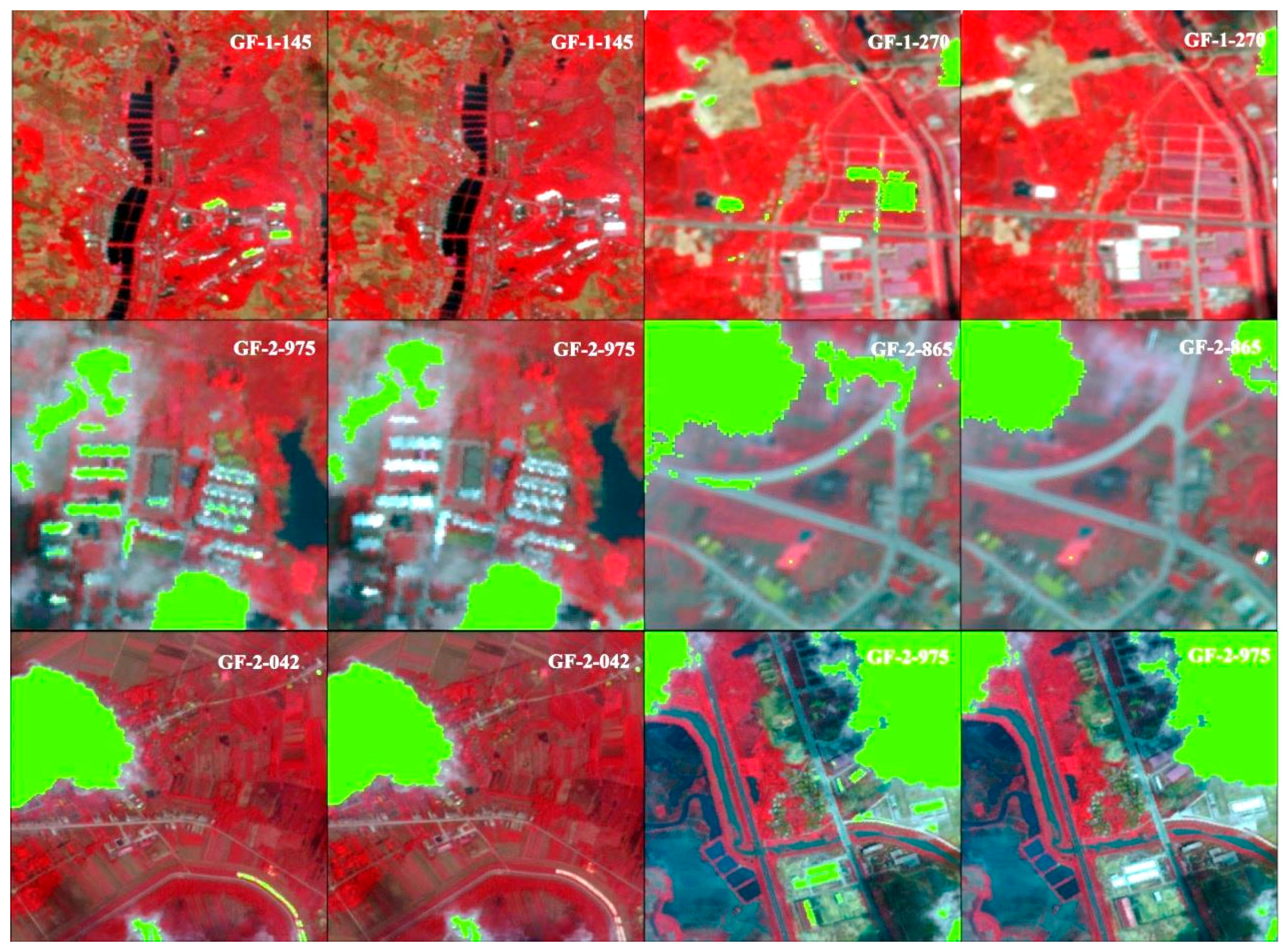

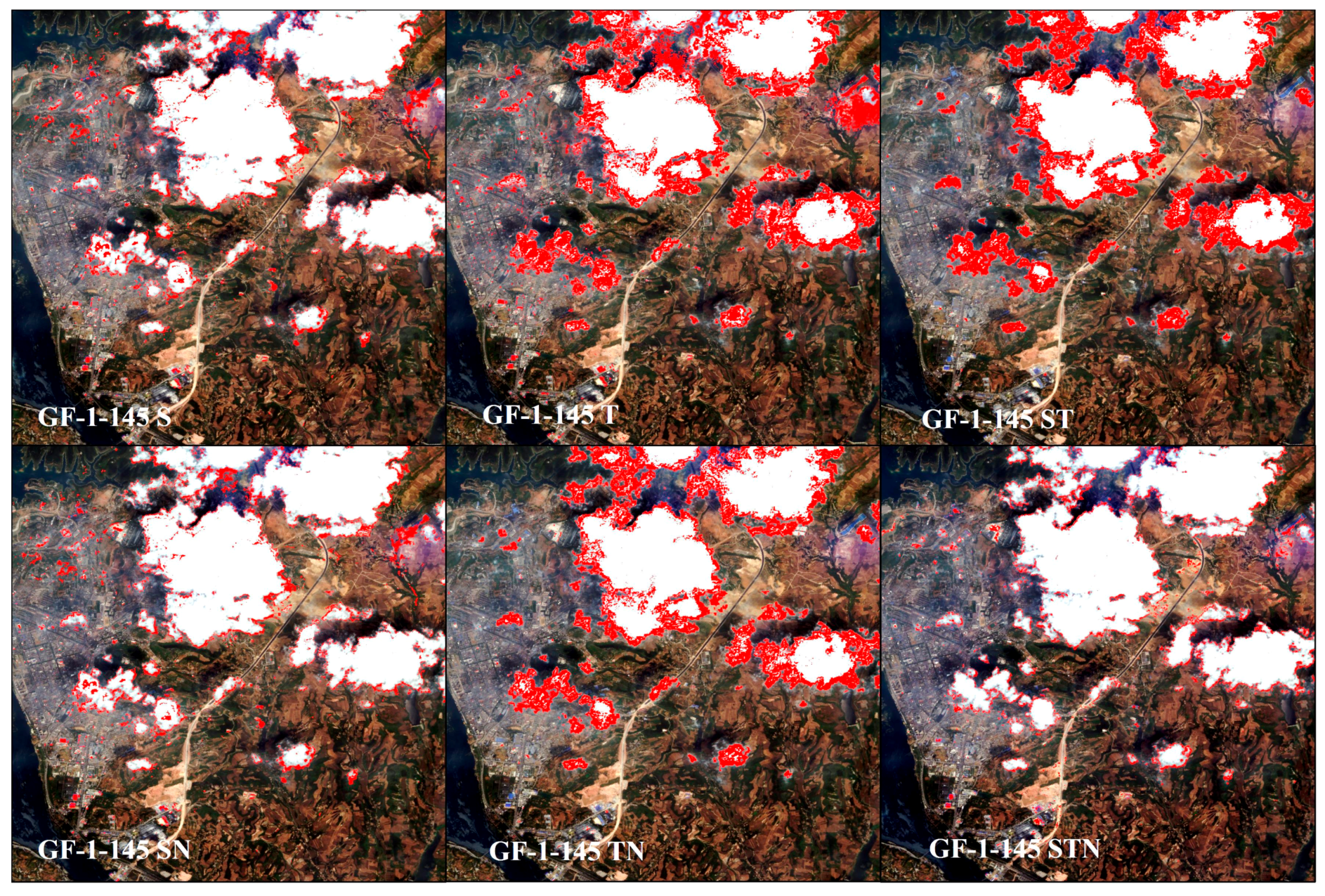

To compare the different feature fusion results, Figure 11 shows partial enlarged views of the total classification error of cloud detection for the S, T, ST, SN, TN, and STN feature combinations on GF-1-145 images. Red pixel regions in the figure represent the total classification error in cloud detection.

From Figure 11, we see that the total classification error in cloud detection for STN was less than that for the S, T, ST, SN, and TN feature combinations. The main reason is that STN performs better than the other feature combinations on houses, roads, and cloud edges. These results suggest that multi-feature fusion is effective in cloud detection and allows features to complement each other.

The overall accuracy of our approach was above 91.45%, and the Kappa coefficient of all images was above 80%, which demonstrates the effectiveness of our method.

From Table 5, Table 6, and Figure 9, we see that the STN feature combination delivered greater accuracy than the other combinations: the cloud producer accuracy reached 93.67% and the cloud user accuracy reached 95.67%, indicating that feature combinations are effective in cloud detection. Our results show that multi-feature classification has higher accuracy than single-feature classification. A single feature cannot fully represent the image content; using only a single feature to classify all categories does not make full use of the discriminative information, so classification accuracy is low. Multi-feature fusion can take advantage of every feature and therefore delivers more accurate results. In addition, feature combinations that include spectral features have higher accuracy than those that do not; thus, we conclude that spectral features are important for cloud detection.

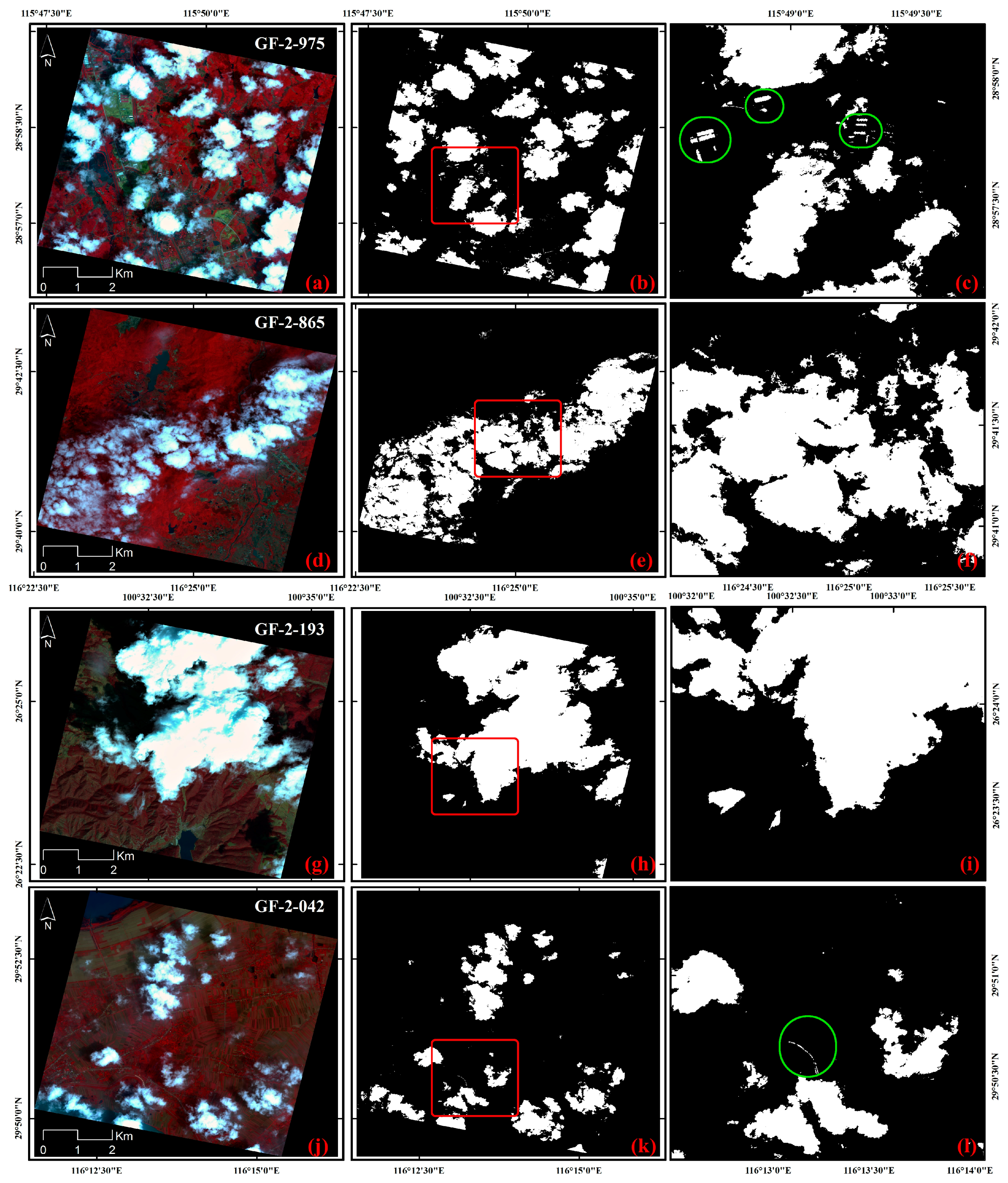

4.2. The Scalability of the Algorithm

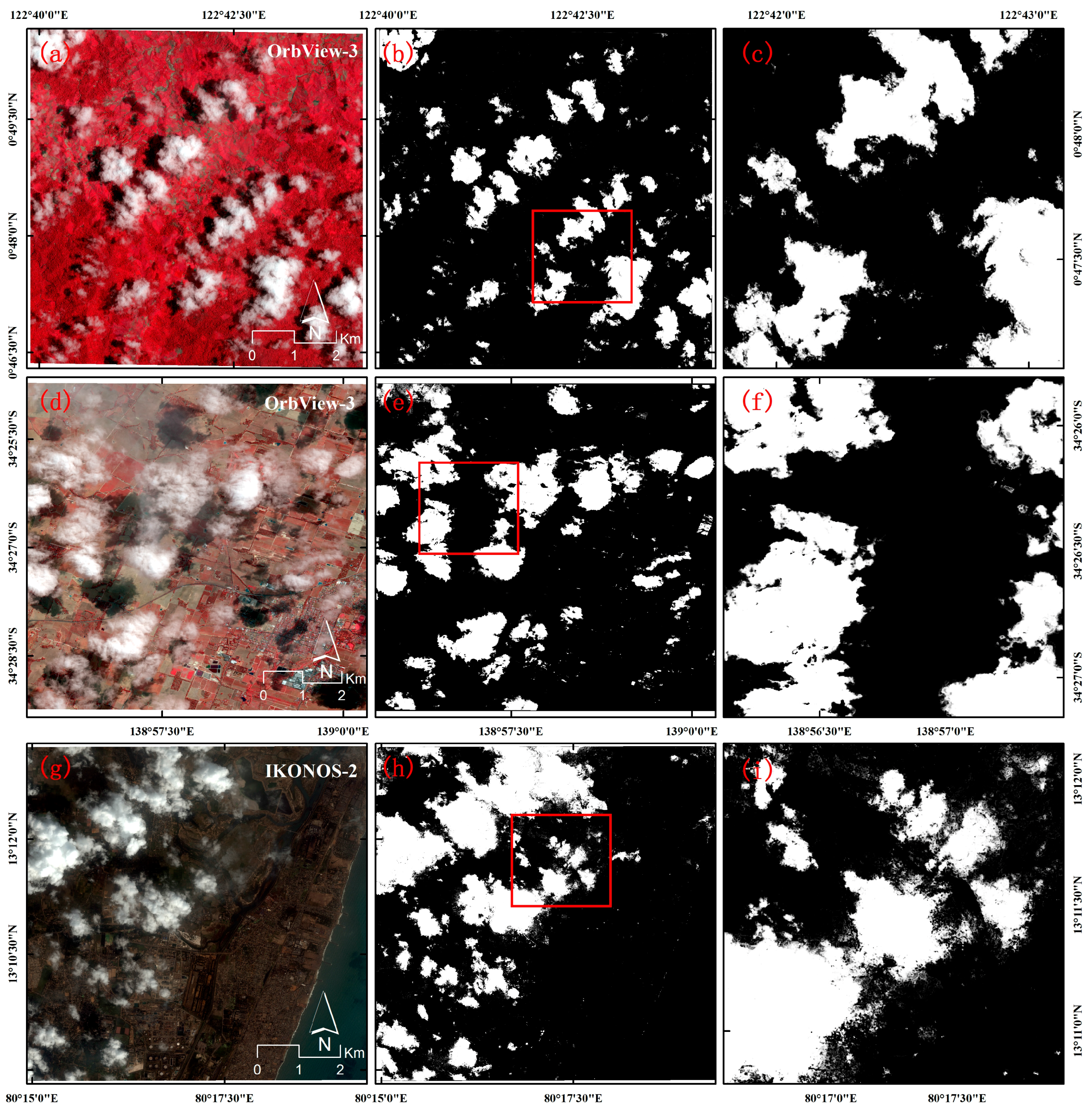

Because our method fuses spectral attributes, texture attributes, and NDVI features using machine learning to achieve cloud detection, it is suitable for high-resolution image data that include near-infrared bands. To demonstrate the scalability of the algorithm, we applied it to two OrbView-3 images and one IKONOS-2 image; the cloud classification maps are shown in Figure 12.

Figure 12a–c shows the original image, the cloud classification map, and a magnified view of the area outlined in red on the cloud classification map, respectively, for one OrbView-3 image; Figure 12d–f shows the same for the other OrbView-3 image; and Figure 12g–i shows the same for the IKONOS-2 image.

As can be seen from the figure, our method gives good cloud detection results on the OrbView-3 and IKONOS-2 images, which indicates that it is suitable not only for GF-1/2 satellite imagery but also for other high-resolution satellite image data with near-infrared bands.
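The dependence on a near-infrared band comes from the NDVI feature, which is conventionally computed per pixel as (NIR - Red) / (NIR + Red). A minimal sketch follows; the function name, the handling of a zero denominator, and the reflectance values are assumptions made for illustration.

```python
from typing import List, Sequence


def ndvi(nir: Sequence[float], red: Sequence[float]) -> List[float]:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red)."""
    if len(nir) != len(red):
        raise ValueError("nir and red must have the same number of pixels")
    values = []
    for n, r in zip(nir, red):
        total = n + r
        # Assumed convention: return 0.0 where both bands are zero.
        values.append((n - r) / total if total != 0 else 0.0)
    return values


# Hypothetical reflectances: vegetation, bright cloud, and a no-data pixel.
nir_band = [0.50, 0.80, 0.0]
red_band = [0.10, 0.80, 0.0]
ndvi_values = ndvi(nir_band, red_band)
```

Vegetation reflects strongly in the near-infrared and gives a clearly positive NDVI, whereas a bright cloud reflects similarly in both bands and gives a value near zero, which is why the feature needs both a red and a near-infrared band.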

4.3. Summary of Advantages and Disadvantages

The proposed method has substantial advantages over conventional methods. In this paper, machine learning and multi-feature fusion are used for cloud detection. This method makes full use of the multi-feature information and achieves better accuracy than a single feature. Moreover, our method does not rely on thermal bands or multi-temporal information. It is suitable for various high-resolution earth observation sensors with near-infrared bands. Our proposed method is applicable in a variety of scenarios, including high-reflectance buildings, roads, agriculture, and mountains. Furthermore, our method gives good cloud detection results on large, medium, and small clouds and also performs well on high-, medium-, and low-density clouds.

However, our method has some disadvantages. We focus on machine learning and multi-feature fusion but do not consider distinguishing between clouds and other objects such as bare soil, desert, snow, and ice. In addition, we do not consider the impact of season on the clouds. In future studies, we will try to apply machine learning and feature fusion to distinguish between clouds and special underlying surface environments, including bare soil, desert, snow, and ice.

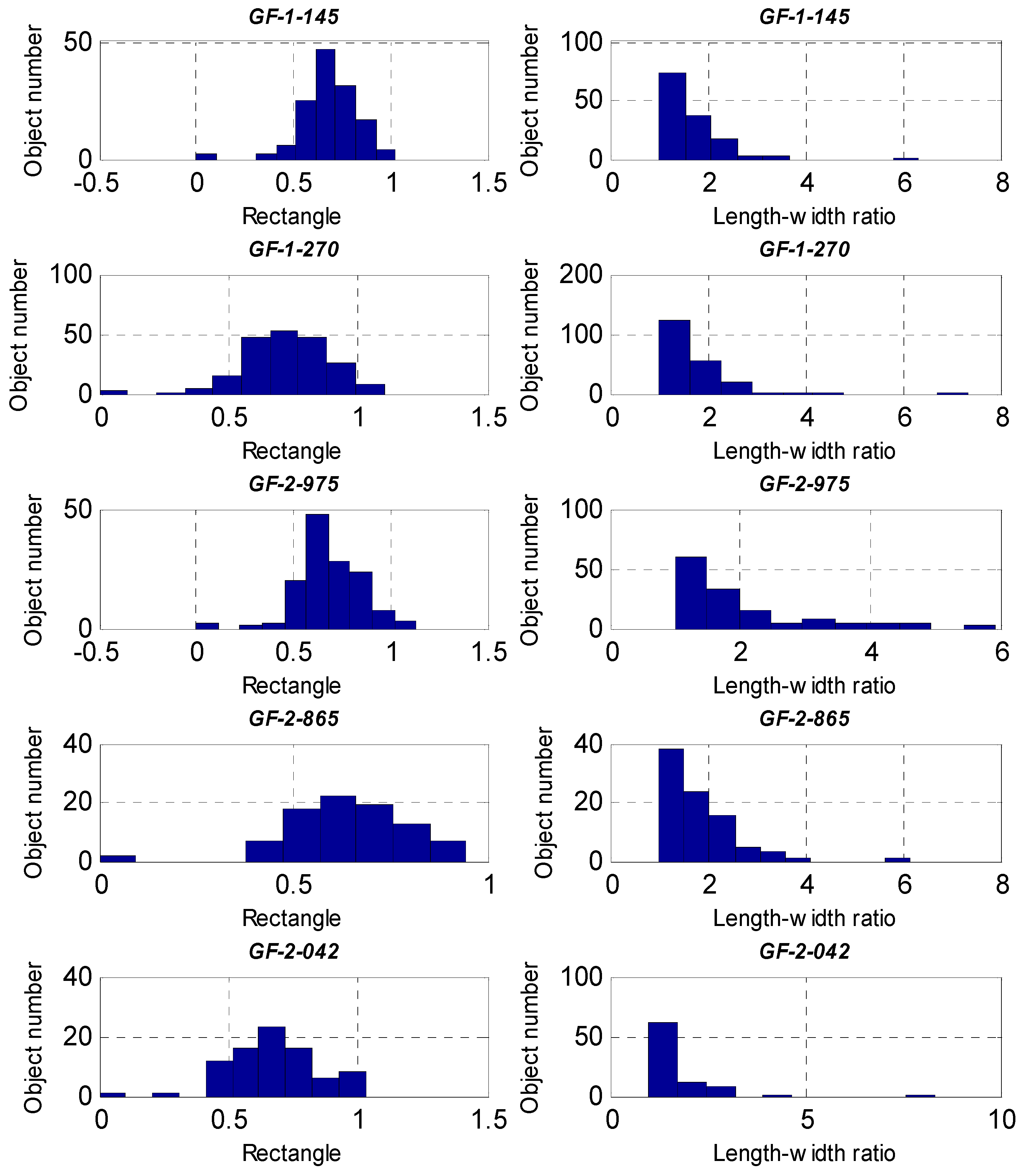

5. Conclusions

In this paper, we propose a novel approach for cloud detection using machine learning and multi-feature fusion, based on a comparative analysis of typical spectral, textural, and other feature differences between clouds and backgrounds in GF-1/2 images. The core principles are the selection of typical features and their fusion through machine learning for cloud detection. Cloud detection results showed that the proposed algorithm performed well on different cloud types, sizes, and densities and on different underlying surface environments. Furthermore, we experimentally demonstrated that multi-feature fusion yields more accurate cloud detection than a single-feature approach and that spectral features are particularly important in cloud detection. In the final step, we applied object-oriented post-processing using rectangles and a length–width ratio shape index, which further reduced the misclassification of highly reflective buildings and roads. Our proposed method is applicable in a variety of scenarios and is reliable in cloud detection. It can be applied to images from various high-resolution earth observation sensors with near-infrared bands. A shortcoming of our proposed method is that the training samples must be selected manually; our future research will address automatic training sample selection and feature learning approaches. In addition, our research was designed to meet the needs of a disaster reduction project of China focused on drought and floods in southern China, so the experimental data were selected from southern China. Thus, we do not currently consider other underlying surface environments, including bare soil, desert, snow, and ice. Other regional and seasonal data need to be addressed in follow-up studies, and we will improve the method to suit different underlying surface environments.
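The shape-based post-processing mentioned above can be illustrated with a small sketch. It is not the exact procedure used in this work: it assumes an axis-aligned bounding rectangle, defines the length–width ratio as the longer side over the shorter side, and uses a rectangular fit (object area over bounding-rectangle area) as a second index. The function names and both thresholds are hypothetical.

```python
from typing import Set, Tuple

Pixel = Tuple[int, int]  # (row, column) of one pixel in a detected object


def shape_indices(region: Set[Pixel]) -> Tuple[float, float]:
    """Return (length-width ratio, rectangular fit) of an object's bounding box."""
    if not region:
        raise ValueError("region must contain at least one pixel")
    rows = [r for r, _ in region]
    cols = [c for _, c in region]
    height = max(rows) - min(rows) + 1
    width = max(cols) - min(cols) + 1
    ratio = max(height, width) / min(height, width)
    fit = len(region) / (height * width)  # 1.0 means a perfectly filled rectangle
    return ratio, fit


def looks_man_made(region: Set[Pixel], max_ratio: float = 4.0,
                   min_fit: float = 0.9) -> bool:
    """Flag elongated or near-rectangular objects; thresholds are hypothetical."""
    ratio, fit = shape_indices(region)
    return ratio > max_ratio or fit >= min_fit


# Hypothetical objects: a 2 x 20 road-like strip and a small irregular blob.
road = {(r, c) for r in range(2) for c in range(20)}
cloud = {(0, 1), (1, 0), (1, 1), (1, 2), (2, 1)}
```

Here the strip has a length–width ratio of 10 and fills its bounding rectangle, so it is flagged as road-like, while the irregular blob fills only 5 of the 9 bounding-box pixels and is kept as a cloud candidate.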