Assessment of Image-Based Point Cloud Products to Generate a Bare Earth Surface and Estimate Canopy Heights in a Woodland Ecosystem

Abstract

1. Introduction

2. Materials and Methods

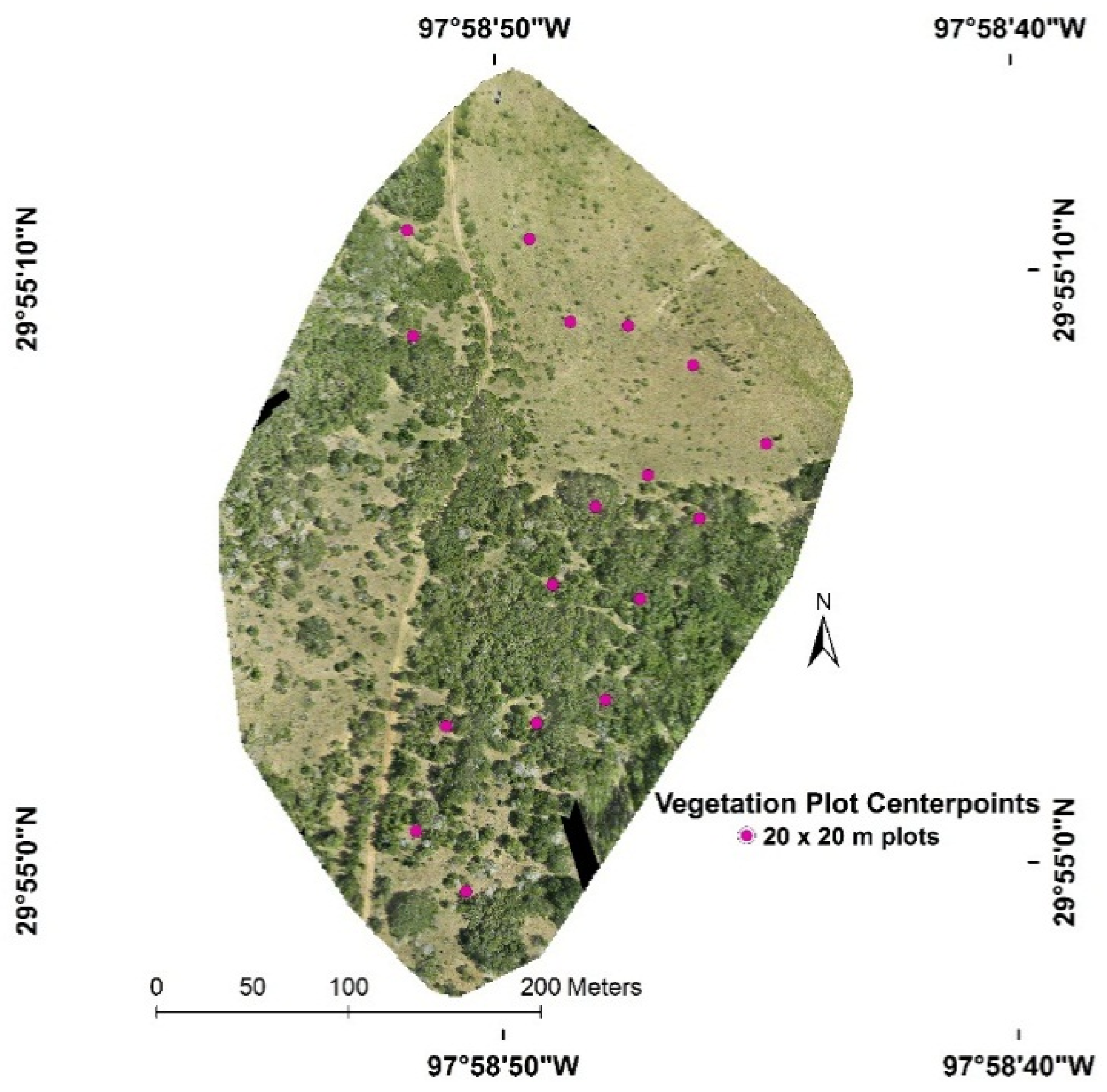

2.1. Study Area

2.2. Image Data Collection, Processing, and Point Cloud Generation

2.3. SfM Point Cloud Processing

2.4. Lidar Data Collection and Processing

2.5. Vegetation Data Collection

2.6. Data Analysis

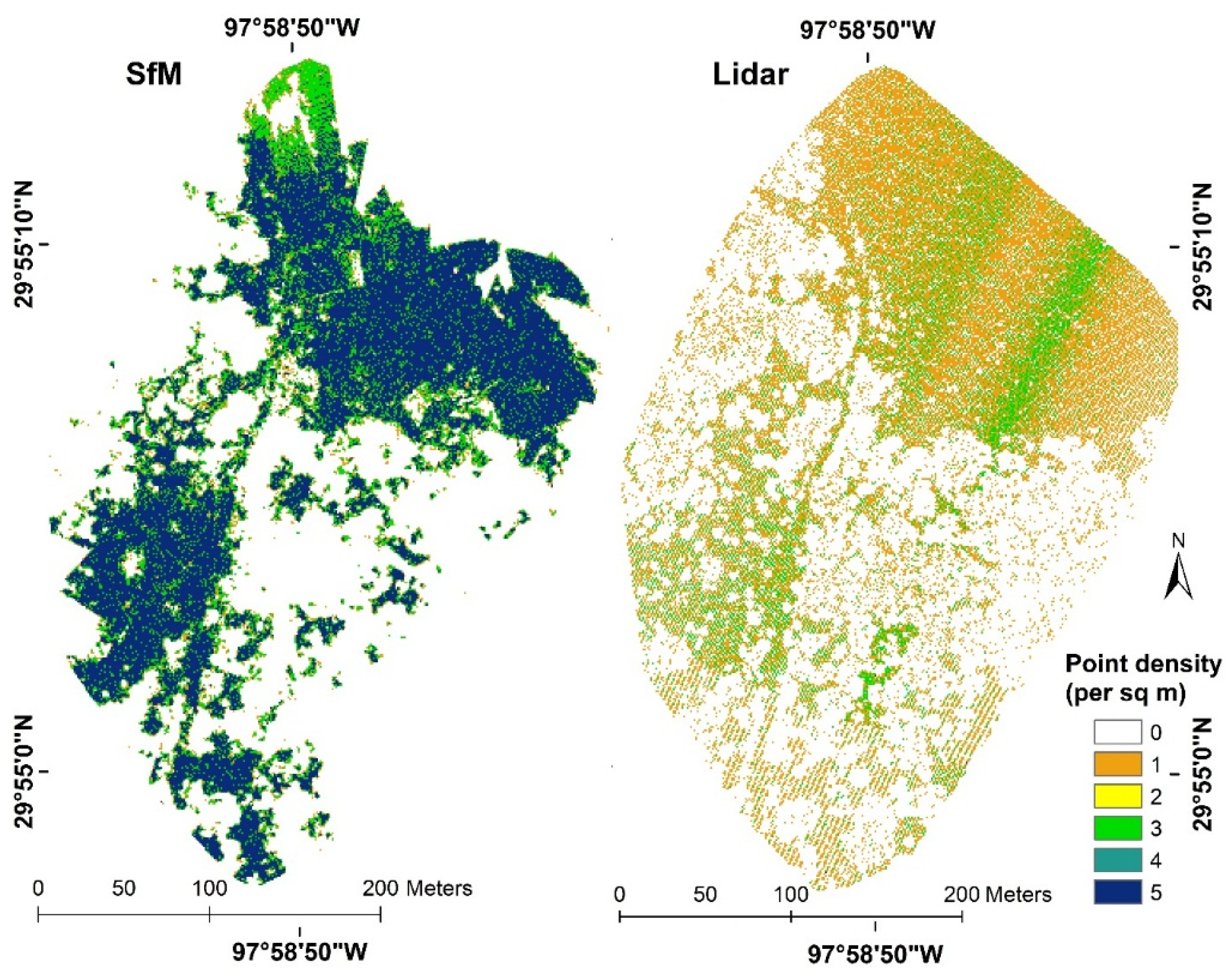

3. Results

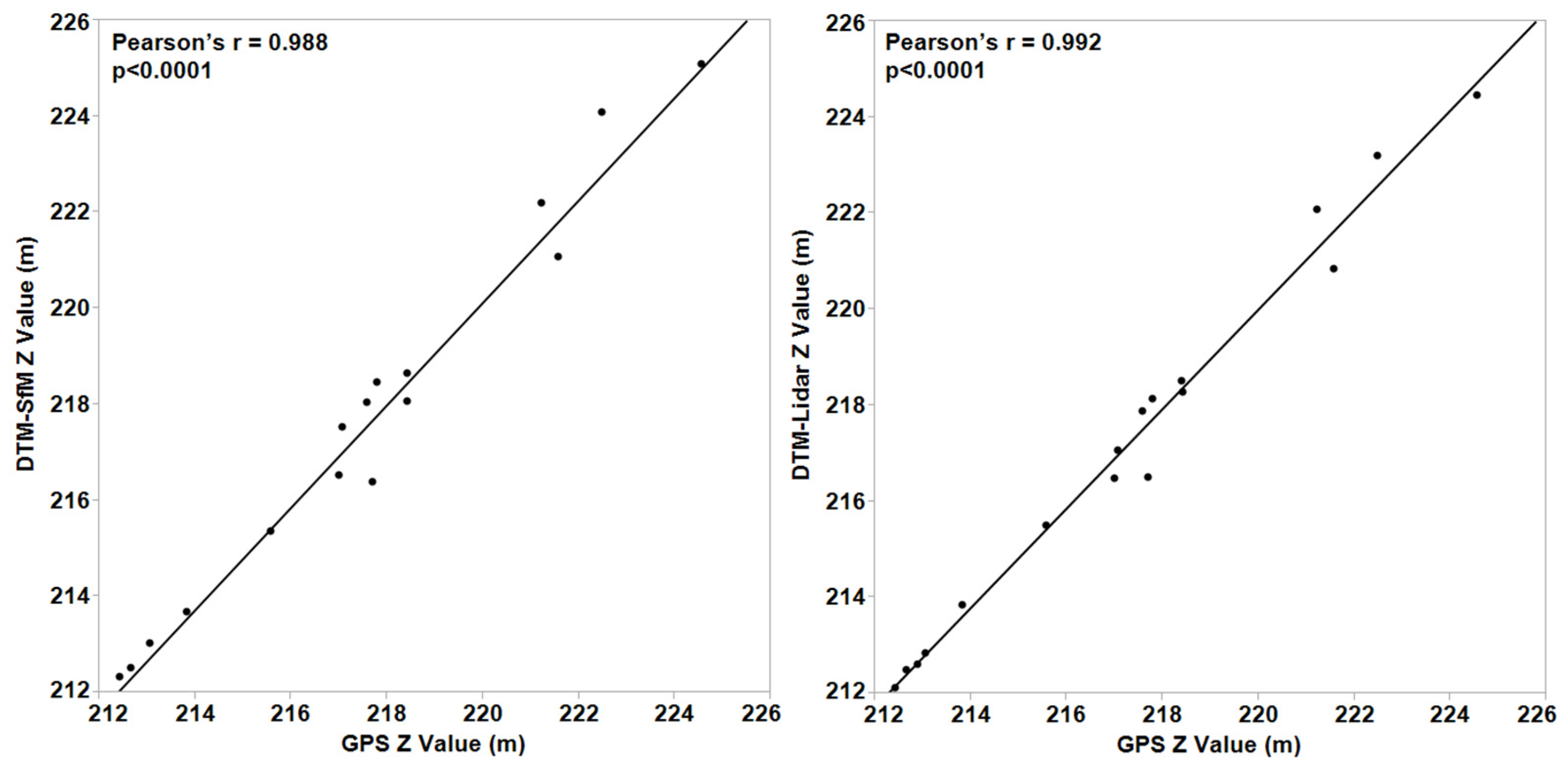

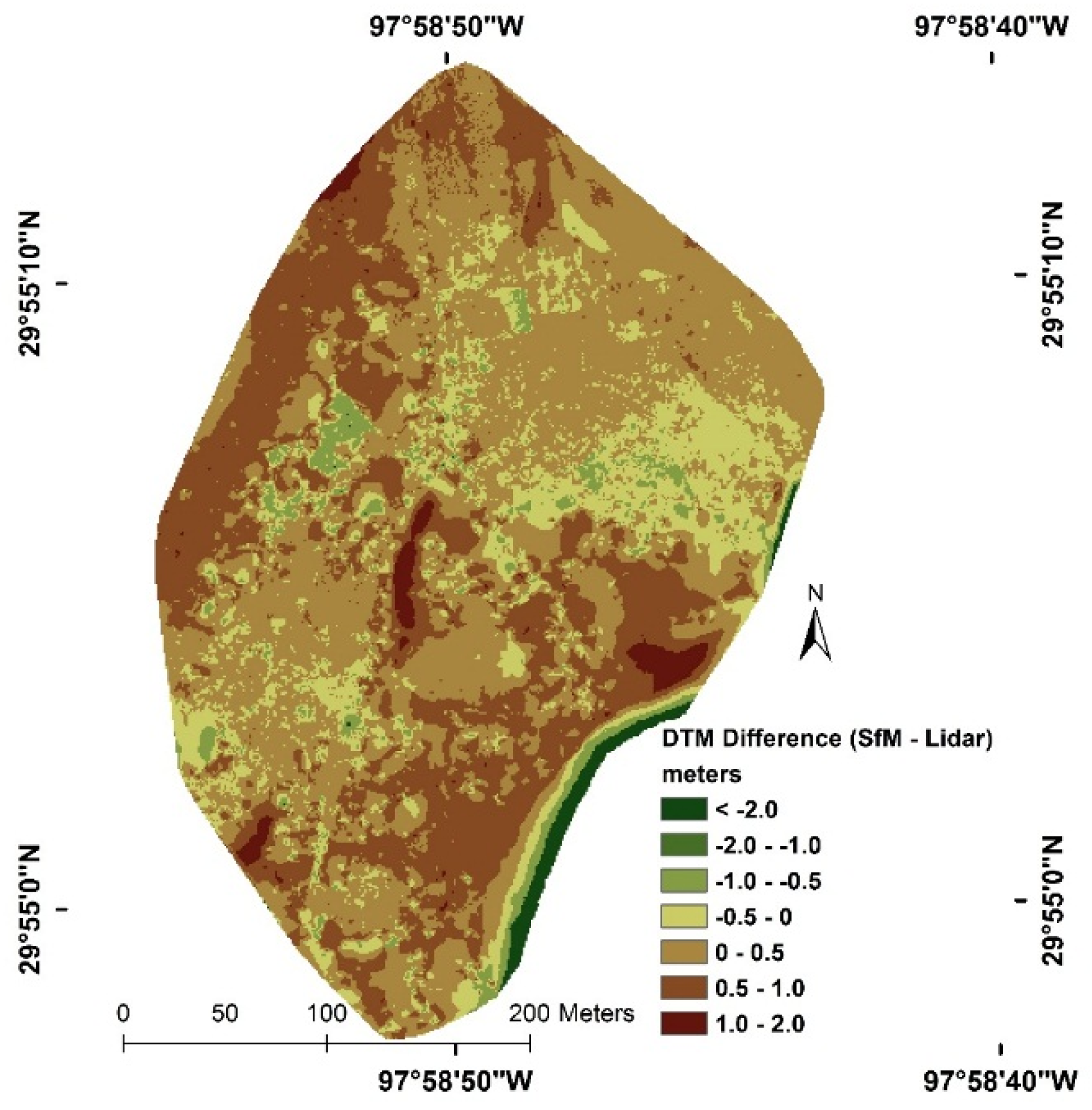

3.1. SfM and Lidar DTM Comparisons

| Dataset Characteristics | SfM | Lidar |
|---|---|---|
| Total points | 9,318,164 | 251,221 |
| Ground-classified points | 161,145 | 49,967 |
| Percent ground | 1.7 | 19.9 |
| Nominal point density (points/m²) | 2.58 | 0.72 |
| Nominal point spacing (m) | 0.58 | 1.17 |

| Comparison Metrics (n = 17) | GPS − DTM_SfM | GPS − DTM_lidar |
|---|---|---|
| Mean difference (m) | −0.31 | 0.08 |
| Standard deviation of difference (m) | 0.73 | 0.49 |
| Median difference (m) | 0.10 | 0.08 |
| Minimum difference (m) | −1.59 | −0.85 |
| Maximum difference (m) | 1.29 | 1.17 |
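The percent-ground values in the table above follow directly from the reported point counts. The sketch below reproduces them and shows one way the GPS-minus-DTM difference statistics could be summarized. The function names, the use of the sample standard deviation, and the checkpoint elevations are assumptions made for illustration; the 17 GPS checkpoint values are not listed in this section.

```python
from statistics import mean, median, stdev


def percent_ground(ground_points: int, total_points: int) -> float:
    """Share of points classified as ground, in percent."""
    if total_points <= 0:
        raise ValueError("total_points must be positive")
    return 100.0 * ground_points / total_points


def difference_summary(gps_z, dtm_z):
    """Summarize GPS minus DTM elevation differences (same units as the inputs).

    Assumption: the sample standard deviation (n - 1) is used; the source
    does not say which form was reported.
    """
    if len(gps_z) != len(dtm_z) or len(gps_z) < 2:
        raise ValueError("need two equally long sequences with at least 2 values")
    diffs = [g - d for g, d in zip(gps_z, dtm_z)]
    return {
        "mean": mean(diffs),
        "sd": stdev(diffs),
        "median": median(diffs),
        "min": min(diffs),
        "max": max(diffs),
    }


# Point counts taken from the table above.
sfm_pct = percent_ground(161_145, 9_318_164)
lidar_pct = percent_ground(49_967, 251_221)
print(f"SfM: {sfm_pct:.1f}%  lidar: {lidar_pct:.1f}%")  # SfM: 1.7%  lidar: 19.9%

# Hypothetical checkpoint elevations (m), for illustration only.
example = difference_summary([10.0, 11.0, 12.0], [9.5, 11.0, 12.5])
print(example)
```

With this sign convention, a negative mean difference means the DTM sits above the GPS checkpoints on average.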

3.2. Evaluation of SfM Point Cloud Products to Estimate Tree Canopy Heights

| | Field Mean | Field Med | Field Max |
|---|---|---|---|
| SfM_SfM Mean | 0.95 | 0.94 | 0.93 |
| SfM_SfM Med | 0.95 | 0.95 | 0.91 |
| SfM_SfM Max | 0.53 | 0.52 | 0.55 |
| SfM_SfM 95P | 0.88 | 0.86 | 0.91 |
| SfM_SfM 90P | 0.92 | 0.91 | 0.93 |
| SfM_SfM 85P | 0.94 | 0.92 | 0.94 |
| SfM_SfM 80P | 0.94 | 0.93 | 0.94 |
| SfM_lidar Mean | 0.94 | 0.92 | 0.95 |
| SfM_lidar Med | 0.95 | 0.94 | 0.93 |
| SfM_lidar Max | 0.47 | 0.47 | 0.51 |
| SfM_lidar 95P | 0.83 | 0.82 | 0.89 |
| SfM_lidar 90P | 0.88 | 0.89 | 0.91 |
| SfM_lidar 85P | 0.90 | 0.89 | 0.93 |
| SfM_lidar 80P | 0.90 | 0.89 | 0.93 |
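The row labels in the table above (mean, median, maximum, and the 95th to 80th percentiles) are plot-level height metrics. The two row groups appear to denote SfM point heights normalized against the SfM-derived DTM and against the lidar-derived DTM. The sketch below shows how such metrics might be computed and then compared with field heights. It assumes the tabulated values are Pearson correlation coefficients and that percentiles use linear interpolation; neither is stated in this section, and all function names and sample values are hypothetical.

```python
from math import sqrt
from statistics import mean, median


def percentile(values, p):
    """p-th percentile (0-100) using linear interpolation between ranks."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("values must not be empty")
    rank = (len(ordered) - 1) * p / 100.0
    lower = int(rank)                      # floor, since rank is non-negative
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (rank - lower)


def height_metrics(point_z, ground_z):
    """Height metrics for one plot.

    point_z  : elevations of the points falling in the plot
    ground_z : DTM elevation beneath each point (SfM- or lidar-derived)
    """
    heights = [z - g for z, g in zip(point_z, ground_z)]
    metrics = {"mean": mean(heights), "med": median(heights), "max": max(heights)}
    for p in (95, 90, 85, 80):
        metrics[f"{p}p"] = percentile(heights, p)
    return metrics


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical plot: 11 points, 0 to 10 m above a flat 100 m ground surface.
demo = height_metrics([100.0 + h for h in range(11)], [100.0] * 11)
print(demo)
```

Running `height_metrics` once per plot and passing the resulting metric values, together with the matching field heights, to `pearson_r` would give one cell of a table like the one above.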

| SfMSfM | R2 (RMSE) | Kfold R2 (k = 3) | SfMlidar | R2 (RMSE) | Kfold R2 (k = 3) | |

|---|---|---|---|---|---|---|

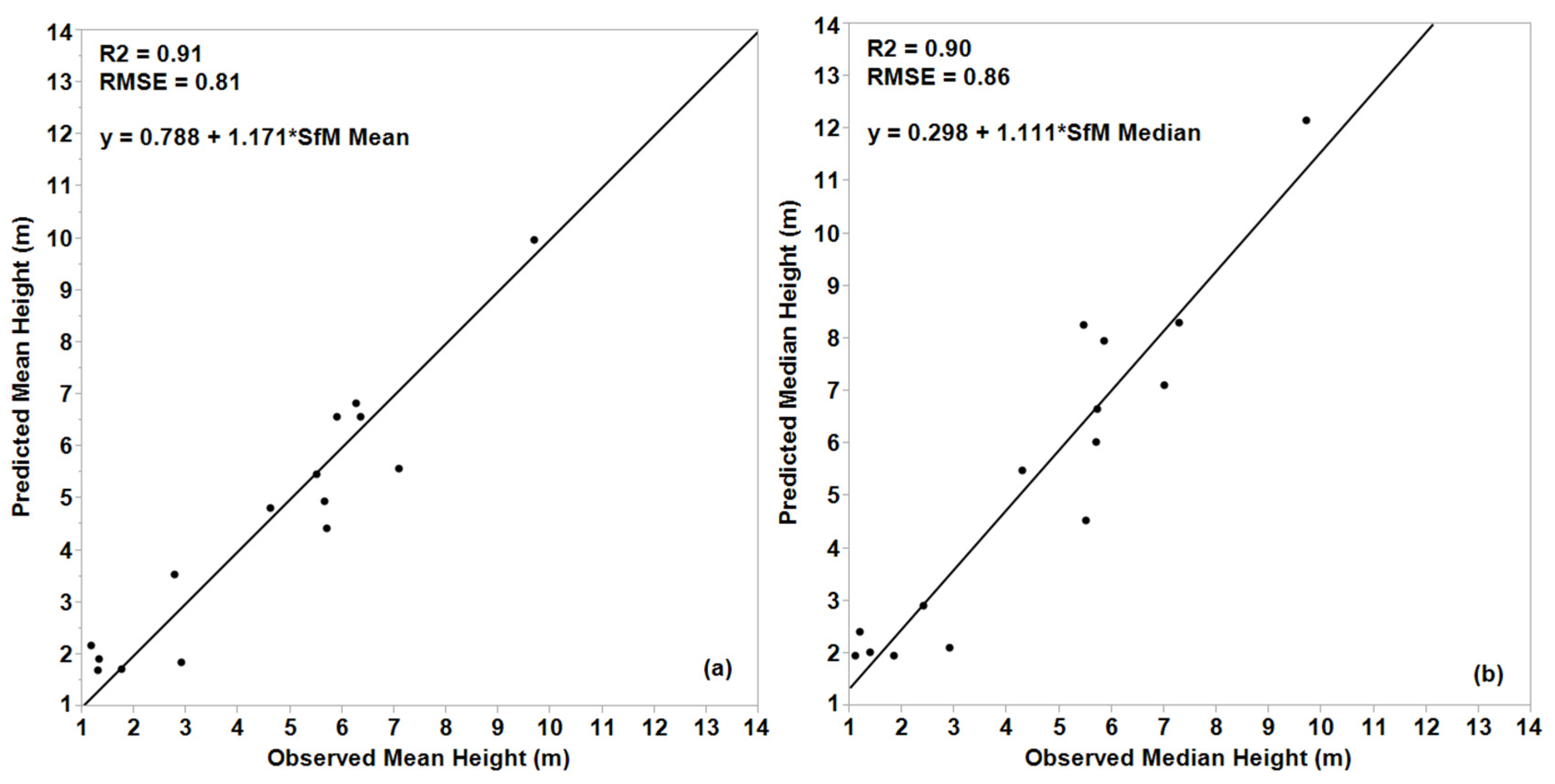

| Mean Canopy Height | y = 0.788 + 1.171 * SfMSfM Mean | 0.91 (0.81) | 0.88 | y = 0.154 + 1.093 * SfMlidar Med | 0.90 (0.84) | 0.87 |

| Median Canopy Height | y = 0.298 + 1.111 * SfMSfM Med | 0.89 (0.91) | 0.83 | y = 0.051 + 1.108 * SfMlidar Med | 0.89 (0.89) | 0.87 |

| Max. Canopy Height | y = 0.233 + 1.256 * SfMSfM 80P | 0.89 (1.24) | 0.88 | y = 0.072 + 1.673 * SfMlidar Mean | 0.89 (1.23) | 0.84 |
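The table above reports simple linear models with their R², RMSE, and a three-fold cross-validated R². The sketch below applies the first listed equation (mean canopy height from the mean height metric normalized with the SfM DTM) and illustrates one way these statistics could be computed. The interleaved fold assignment, the pooling of held-out predictions before computing R², and all function names are assumptions; the section does not describe how the k-fold R² was obtained.

```python
from math import sqrt
from statistics import mean


def predict_mean_canopy_height(sfm_mean_height: float) -> float:
    """Apply the first equation in the table: y = 0.788 + 1.171 * x."""
    return 0.788 + 1.171 * sfm_mean_height


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return my - slope * mx, slope


def r_squared(observed, predicted):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    m = mean(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - m) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def rmse(observed, predicted):
    """Root mean square error, in the units of the observations."""
    return sqrt(mean((o - p) ** 2 for o, p in zip(observed, predicted)))


def kfold_r2(x, y, k=3):
    """R² of pooled held-out predictions from k interleaved folds (assumed scheme)."""
    n = len(x)
    held_out = [0.0] * n
    for fold in range(k):
        train = [i for i in range(n) if i % k != fold]
        intercept, slope = fit_line([x[i] for i in train], [y[i] for i in train])
        for i in range(fold, n, k):
            held_out[i] = intercept + slope * x[i]
    return r_squared(y, held_out)


# Hypothetical plot data (m): SfM mean height metric and field mean height.
sfm_metric = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
field_mean = [predict_mean_canopy_height(v) for v in sfm_metric]
print(fit_line(sfm_metric, field_mean))
print(kfold_r2(sfm_metric, field_mean, k=3))
```

Because the hypothetical field heights are generated from the tabulated equation itself, the fit recovers its coefficients and the cross-validated R² is essentially 1. Real plot data would show the gap between fitted and cross-validated R² that appears in the table.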

4. Discussions

4.1. Image Acquisition, Processing, and Point Cloud Generation

4.2. SfM Point Cloud Filtering and DTM Generation

4.3. Canopy Height Estimates and Model Performance

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons by Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Jensen, J.L.R.; Mathews, A.J. Assessment of Image-Based Point Cloud Products to Generate a Bare Earth Surface and Estimate Canopy Heights in a Woodland Ecosystem. Remote Sens. 2016, 8, 50. https://doi.org/10.3390/rs8010050