Current instruments perform radiometric and photometric measurements, quantifying single quantities of the physical radiation in the first case and of the luminous characterization in the second; they do not integrate several quantities in the same measure and do not consider the qualitative aspect together with the quantitative one. The methodology presented in this work aims to quantify at the same time the effect elicited by both the quantity, in terms of total irradiation, and the quality, as overall spectral composition, of the light received by the eye; it calculates the whole luminous stimulation, both visual and non-visual, coming from a field of view, in an interior setting as well as in an exterior environment.

The method integrates measurements performed in the real environment with numerical post-processing: measurements of the irradiance of the light source at the eye, the reflectance of the materials contained in the field of view and the luminance of the scene are processed and combined in a numerical algorithm with V(λ) or C(λ). The result of the calculation is a value representing, respectively, the visual or the circadian stimulus deriving from the field of view under consideration.

2.1. Simplifying Hypotheses

In order for the measurement and processing model to be valid, two simplifying hypotheses are necessary: the evaluation of a fixed field of view and the approximation of small areas within the visual field.

The method is based on the evaluation of static fields of view, exemplified by shots taken with a digital camera. Quantifying an environment that varies in time (for instance, a daylight scenario or a change in the viewer’s direction of gaze) would require analyzing a series of shots taken at different times. For visual stimuli this is better done with the frames of a video, as the response of the visual system is immediate and changes in vision have to be monitored with a sequence of images taken in rapid succession. The non-visual system, on the contrary, adapts slowly (over minutes) to a change of lighting scenario, so variations in the field of view can be monitored with very large temporal steps; for this reason, in this second case, a fixed point of view can be a valid approximation of the situation in an office, where the worker spends most hours at the desk in the same position.

The second hypothesis is linked to the fact that a common visual field is made up of many different objects, whose surfaces reflect light according to their spectral reflectance. For the purpose of the model, it is necessary to provide an acceptable approximation of the radiation reaching the eye: for this reason, only materials occupying an extended portion of the visual field are actually measured, while for the others only a rough approximation is performed as described at the end of the following paragraph.

2.2. Visual Field Examples

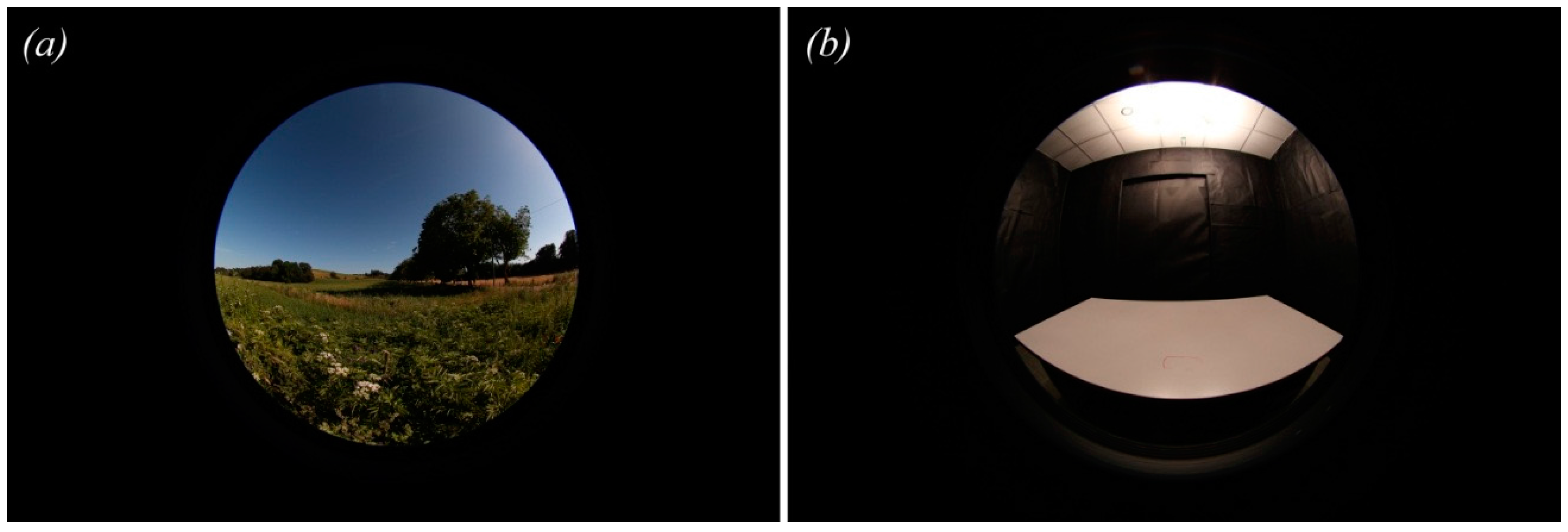

The numerical method for the evaluation of the luminous stimulus provided by the environment is based on a photo shot, as it is a valid approximation of the visual field: the spectral and the photometric quantities recorded at the eye were implemented in the algorithm. Two examples of visual fields are provided in Figure 1 for a better understanding and as an experimental test of this method: an outdoor countryside view (V1), in which the field of view is divided into two main “materials”, the blue sky vault and the green grass on the ground, which have very different luminance values and reflection coefficients; and an indoor controlled environment shot (V2), where the luminous stimulation comes mainly from the lamp and the only surfaces involved in reflection are the white ceiling and the light grey desk, as the lateral walls are covered with a low-reflectance black material. The method employed for capturing the images is explained in Section 2.3.

A visual field is often made up of many different surfaces, some of which occupy a very limited portion of it: considering the contribution of every single material can be impossible, or at least too demanding in time and effort to be feasible, hence it is necessary to choose and measure only the most extended materials, approximating the others. For the purposes of this method, a single material or a cluster of different materials occupying a small portion of the visual field has been approximated to one single material of the prevalent area, and associated, inside the calculation, with a material of a similar color taken from a spectral reflectance library [34,35,36].

Figure 1.

The two visual fields: (a) V1 divided by the blue sky in the upper part and the green grass in the bottom; and (b) V2 characterized by an artificial light source, the white ceiling, the grey desk and black walls.

The error deriving from this approximation can be considered acceptable because it is used exclusively within small portions of the visual field. Although the procedure can introduce a small error, the human eye itself is subject to a comparable approximation due to metamerism, whereby different spectral power distributions can result in the same perceived color. If a more precise analysis is later needed, because the scene within the visual field is composed of many objects of different colors, it is also possible to split the approximated area into smaller areas and to associate a different material with each of them, allowing technicians to reach the level of approximation they need.

In view V1, only the sky and the grass were taken into account, leaving out the trees in the background; in V2, the black coating, the ceiling and the desk surface were considered, excluding the space under the desk. This space does occupy a not-so-small part of the visual field, but the choice was made for didactic purposes only.

2.3. Measurements

Measurements in the visual fields were recorded to calculate the stimulus, coming from the environment, received by the eye. The observed radiation corresponds to the radiance Lλ, which can be described as the irradiance Eλ from the light source that arrives on a surface and is reflected according to the reflectance Rλ of the surface; considering a Lambertian surface, which reflects equally in all directions of the hemisphere:

$$L_\lambda = \frac{E_\lambda \, R_\lambda}{\pi} \qquad (1)$$

As this work proposes an experimental method, only Lambertian reflection was considered in this first attempt, for simplicity; other types of reflection are left to future developments.

Multiplying Lλ by V(λ) or by C(λ) yields, respectively, the visual stimulus (VS) and the circadian stimulus (CS) coming from the field of view.
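As a rough illustration of the quantities just described, the following Python sketch computes the radiance of a Lambertian surface from irradiance and reflectance and then weights it by an efficiency function. It assumes that the stimulus is obtained by summing the weighted radiance over a regular wavelength grid, which is only one plausible reading of the text; the function names and the three-band toy spectra are hypothetical and are not measured data.

```python
import math

def lambertian_radiance(irradiance, reflectance):
    """Spectral radiance of a Lambertian surface: L = E * R / pi per wavelength."""
    if len(irradiance) != len(reflectance):
        raise ValueError("spectra must share the same wavelength grid")
    return [e * r / math.pi for e, r in zip(irradiance, reflectance)]

def weighted_stimulus(radiance, weighting, step_nm):
    """Sum of radiance times a weighting function (V or C) over the grid.

    Summing over wavelengths is an assumption of this sketch.
    """
    if len(radiance) != len(weighting):
        raise ValueError("radiance and weighting must have the same length")
    return sum(l * w for l, w in zip(radiance, weighting)) * step_nm

# Toy three-band spectra (illustrative numbers only).
E = [0.10, 0.20, 0.30]   # irradiance of the source
R = [0.50, 0.50, 0.50]   # reflectance of the material
V = [0.0, 1.0, 0.0]      # stand-in for an efficiency function

L = lambertian_radiance(E, R)
VS = weighted_stimulus(L, V, step_nm=5)
```

Passing a stand-in for C(λ) instead of V(λ) to `weighted_stimulus` would give the circadian counterpart under the same assumptions.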

The spectral radiance of the light source was measured by means of a Jeti Specbos 1211UV spectroradiometer with a sensitivity of 1 nm, 10 degree observer, adaptive integration time and no additional lenses; it was recorded at eye level for the visual fields V1 (Figure 2) and V2 (Figure 3).

Figure 2.

Spectral radiance distribution of the natural light source measured at the eye in V1: the emission was recorded in the visible and UV-A bands, and it is characterized by high values at short wavelengths and low values at long wavelengths. The individual values for each wavelength were considered in the calculation procedure.

The spectral reflectance of the materials was taken from freely accessible online libraries [34,36].

Luminance measures were collected by simply taking a shot of the visual field, without any particular restriction, by means of an LMK Mobile Advanced video luminance meter based on a Canon EOS 500D camera with a luminance-calibrated 4.5 mm (fisheye) objective; for luminance image measurements the instrument requires the following settings: aperture F4, autofocus, image stabilizer deactivated, auto exposure bracketing (AEB) ± 2 exposure values. The camera was mounted on a tripod at eye height, considering a standing person in V1 (170 cm) and a seated person in V2 (110 cm).

Each measurement was taken with the video luminance meter in the same direction as the gaze (i.e., the same direction as the fisheye shot), and it was repeated at different points, choosing the portions of each material that appeared to have the highest luminance; the mean values for each material were then calculated in a spreadsheet application.

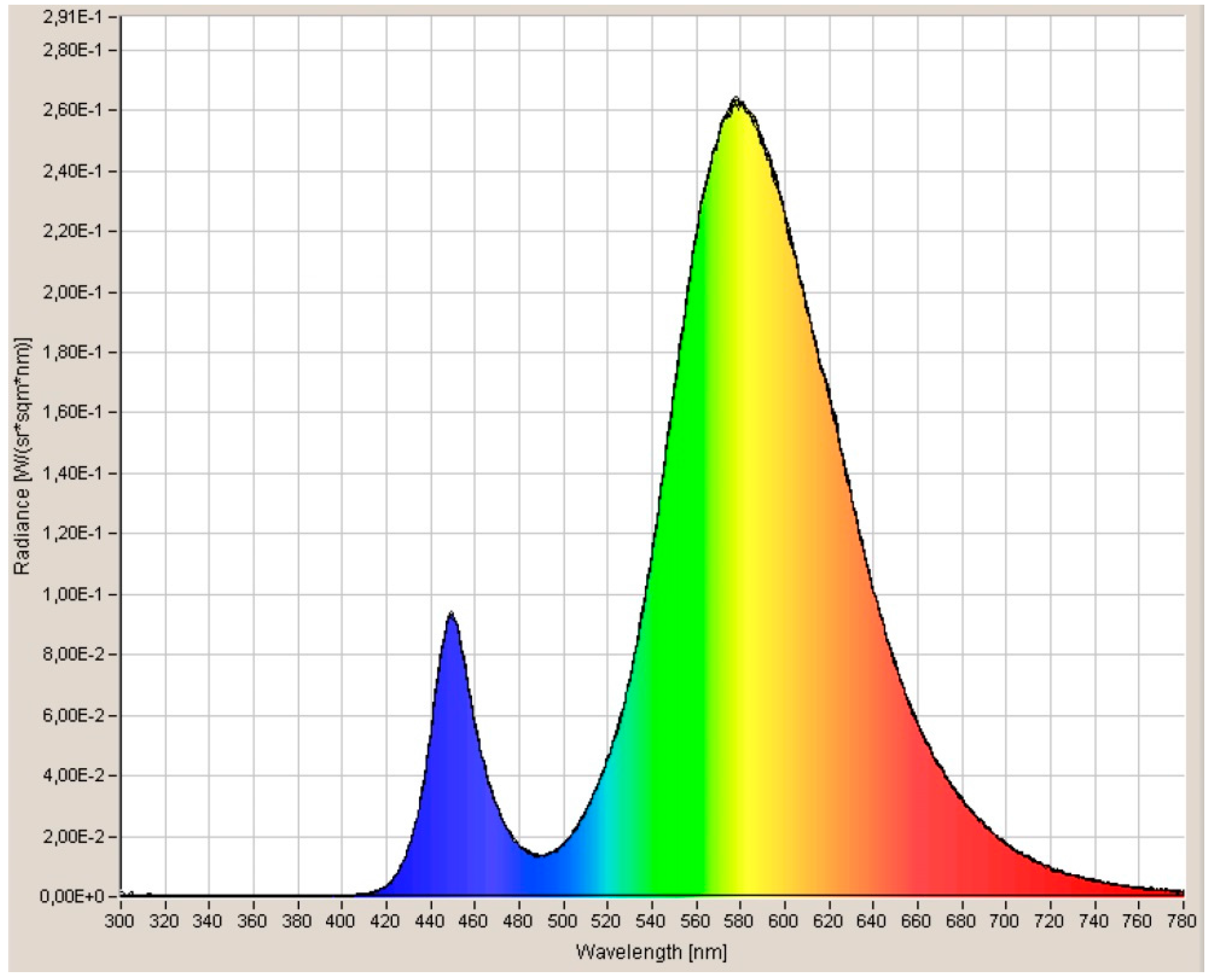

Figure 3.

Spectral radiance distribution of the artificial light sources measured at the eye in V2: the emission is limited to the visible band and is characterized by a lower peak in the blue region and a higher peak in the yellow region. The individual values for each wavelength were considered in the calculation procedure.

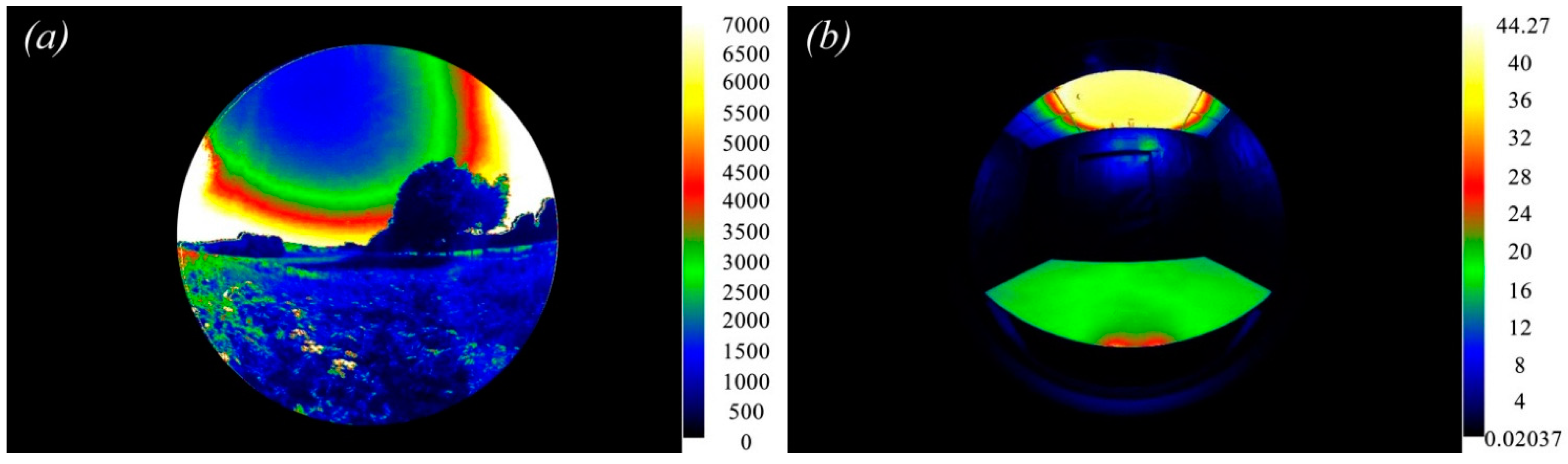

The instrument returns two images representing the same field of view: a classical picture in jpg format and a pseudo-color image with the corresponding luminance values in raw format; luminance values were extracted and processed in the LMK Labsoft software. Luminance images for the two example visual fields are reported in Figure 4.

Figure 4.

Luminance levels of V1 (a) and V2 (b) expressed in cd/m². In both measurements, the luminance coming from the bottom of the field of view has more constant values than the luminance coming from the upper side. In the calculation procedure the individual value of each pixel was considered.

These spectral and photometric data were elaborated to obtain the stimulation deriving from the two fields of view taken into consideration.

As the stimulation deriving from the visual field is more complex than that coming from a single material, a procedure for applying the simplified model to V1 and V2 was carried out: it made use of a processing of the images presented in Figure 1 and of an analytical calculation, as described in the next sections.

2.4. Reflectance-Material Coupling Procedure

In order to associate every pixel of the image with its own spectral reflectance, it was necessary to divide the visual field into zones, treating each homogeneous material as a single zone: the jpeg images of the visual fields were post-processed in an image editor, roughly drawing the contours of each material and filling each area with a different shade of gray. The output of this process is a grayscale jpeg, from now on called the “material mask”, made up of patches of different gray shades ranging between 0 (black) and 255 (white). The right column of Figure 5 shows the material masks for the visual fields V1 and V2 in the left column; the areas corresponding to the various materials are represented as different shades of gray.

Figure 5.

Digital images and corresponding rough material masks of the two visual fields: (top) in the outdoor picture the material masks were associated with three different areas corresponding to the sky, the grass and the trees; and (bottom) in the indoor picture the material masks were associated with five different areas corresponding to the light source, the ceiling, the walls, the desk and the space under the desk.

The lightness of the gray in the material mask does not reflect the lightness of the colors in the image, as the grayscale is only used to identify the order in which the materials will subsequently be processed: the material assigned to the darkest shade of gray will be the first one, while the material associated with the lightest shade of gray will be the last one. An example of grayscale subdivision for ten materials can be seen in Table 1.

Table 1.

Example of convention between material numbers and grayscale values.

| Material n # | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| Gray (0–255) | 0 | 25 | 50 | 75 | 100 | 125 | 150 | 175 | 200 | 225 |
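The convention in Table 1 amounts to a fixed spacing of 25 gray levels between consecutive materials. A minimal Python sketch of that mapping is given here; the helper names are hypothetical and the ten-material limit simply mirrors the example table.

```python
GRAY_STEP = 25       # spacing between consecutive materials in Table 1
MAX_MATERIALS = 10   # number of materials shown in the example table

def gray_for_material(number):
    """Return the gray shade assigned to a 1-based material number."""
    if not 1 <= number <= MAX_MATERIALS:
        raise ValueError("material number must be between 1 and 10")
    return (number - 1) * GRAY_STEP

def material_for_gray(gray):
    """Return the material number encoded by a gray shade of the table."""
    if gray % GRAY_STEP != 0 or not 0 <= gray <= (MAX_MATERIALS - 1) * GRAY_STEP:
        raise ValueError("gray value does not belong to the table")
    return gray // GRAY_STEP + 1

table = {n: gray_for_material(n) for n in range(1, MAX_MATERIALS + 1)}
```

Any other spacing would work equally well, provided the shades stay far enough apart to remain distinguishable after jpeg compression.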

Moreover, it is important that 0 is not used as a material filling, because the exterior part of the circular image of the field of view is already black (see Figure 2), and hence this color should be avoided in order to perform a valid calculation. This correspondence between the number codifying the material and the shade of gray its silhouette is filled with is necessary for the next step of the procedure. Furthermore, non-measured materials should be assigned to the white mask (255), because they are processed at a later stage.

The example picture V1, representing a typical countryside view, was divided into three different material areas:

- Area 1, corresponding to the grass, was associated with material 3, having a grayscale value of 50.
- Area 2, corresponding to the sky, was assigned to material 4, having a value of 75 in the grayscale.
- Area 3 corresponds to the trees; it was associated with the white mask (255 in grayscale value), as it was not measured.

The picture V2, representing a windowless indoor environment, was divided into five areas:

- Area 1 corresponds to the walls and is material 5 (100 in grayscale value).
- Area 2 corresponds to the plasterboard ceiling and is material 6 (125 in grayscale value).
- Area 3 corresponds to the desk surface and is material 7 (175 in grayscale value).
- Area 4 corresponds to the LED luminaire and is material 8 (200 in grayscale value).
- Area 5 corresponds to the floor tiles, which were not measured, both because they occupy a small and peripheral portion of the visual field and because their luminance is quite low; for this reason it is represented with the white material mask.

In the study, the difference in light transmittance between the center and the periphery of the fisheye lens was not investigated.

Each material mask image was treated as a two-dimensional matrix of n rows by m columns for processing.

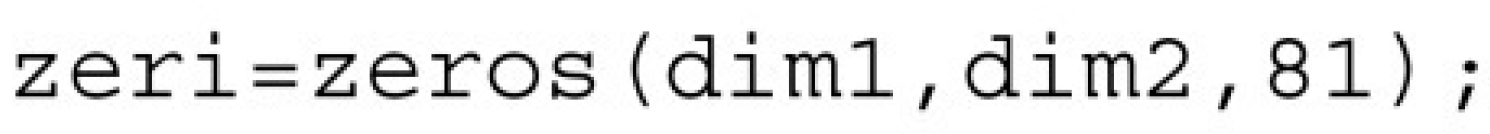

2.5. Results: The Script

A script was written to associate the pixels of the image, considered as an n × m matrix, with their values of spectral reflectance and luminance; it can be run using spectral radiometric data from the measurements performed, data from open databases of spectral reflectance and the luminance image.

In the script, the spectral irradiance of the light source and the spectral reflectance of the materials, acquired every 5 nm, can be seen as two column vectors with 81 values, while the luminance data are entered as an image; the photopic efficiency function V(λ) and the circadian efficiency function C(λ) are both embedded in the code: in particular, since the latter has not been definitively established yet, it can be freely modified as soon as a better-fitting function is developed.
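The 81-value vectors can be pictured with a short Python sketch. The 380–780 nm range used here is an assumption (the text only states the 5 nm step and the 81 values), and `wavelength_grid` is a hypothetical helper.

```python
def wavelength_grid(start_nm=380, stop_nm=780, step_nm=5):
    """Return the sampled wavelengths in nm, both ends included."""
    if step_nm <= 0 or stop_nm < start_nm:
        raise ValueError("expected a positive step and stop >= start")
    return list(range(start_nm, stop_nm + 1, step_nm))

grid = wavelength_grid()

# Each spectrum holds one value per grid wavelength; zeros are placeholders
# standing in for measured irradiance and library reflectance.
source_irradiance = [0.0] * len(grid)
material_reflectance = [0.0] * len(grid)
```

Keeping every spectrum on the same grid is what allows the later element-by-element products between irradiance, reflectance and the efficiency functions.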

The aim of this short code is to manage the data, leading to a final radiance value for the whole visual field.

The script performs the numbered steps described below.

(1) It asks the user to input the following data:

Material mask (jpeg file, containing n × m × 1 values).

Luminance image (txt file containing n × m × 1 values).

Spectral reflectance of each one of the materials, uploaded in order (material 1 first, 81 × 1 values).

Spectral irradiance of the light source (81 × 1 values).

Number of measured materials within the scene (single value).

The program performs the following calculations.

(2) It counts the occurrences of every value inside the material mask and creates a two-column matrix that shows the values in column 1 and the number of their occurrences in column 2.

This step, together with 3 and 4, is performed because boundaries between different materials are affected by noise (values in the matrix different from the established ones of the grayscale), and thus it is necessary to remove such noise and obtain only the useful data out of the image.

(3) It sorts the values of the two-column matrix following the first column.

(4) It uses the number N of materials to extract the first N + 2 values with the highest occurrence from the material mask (Figure 6). N + 2 is used in order to obtain the values in the material mask that correspond to the measured materials plus the two fixed values, 0 and 255, corresponding, respectively, to the outside of the visual field (the black space around the fisheye image) and the non-measured materials, which are approximated later on.
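Steps 2 to 4 can be sketched in Python as follows. This is not the original script: the function names and the tiny example mask are hypothetical, and replacing each noisy pixel with the closest retained gray value is an assumption about how the boundary noise might be removed.

```python
from collections import Counter

def dominant_gray_values(mask, n_materials):
    """Return the N + 2 most frequent gray values of the mask, sorted."""
    counts = Counter(value for row in mask for value in row)
    most_frequent = counts.most_common(n_materials + 2)
    return sorted(value for value, _ in most_frequent)

def snap_to_nearest(mask, kept_values):
    """Replace every pixel with the closest retained gray value (assumption)."""
    return [[min(kept_values, key=lambda k: abs(k - value)) for value in row]
            for row in mask]

# Tiny mask with two materials (50 and 75), the black surround (0),
# a non-measured area (255) and one noisy boundary pixel (52).
mask = [
    [0, 0, 50, 50, 50],
    [0, 50, 50, 52, 75],
    [0, 75, 75, 75, 255],
    [0, 75, 75, 255, 255],
]

kept = dominant_gray_values(mask, n_materials=2)
cleaned = snap_to_nearest(mask, kept)
```

In this example the noisy value 52 occurs only once, so it is not retained and the pixel is reassigned to the neighbouring material 50.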

Figure 6.

Script structure at point 4, used to extract the values with higher occurrence from the material mask.

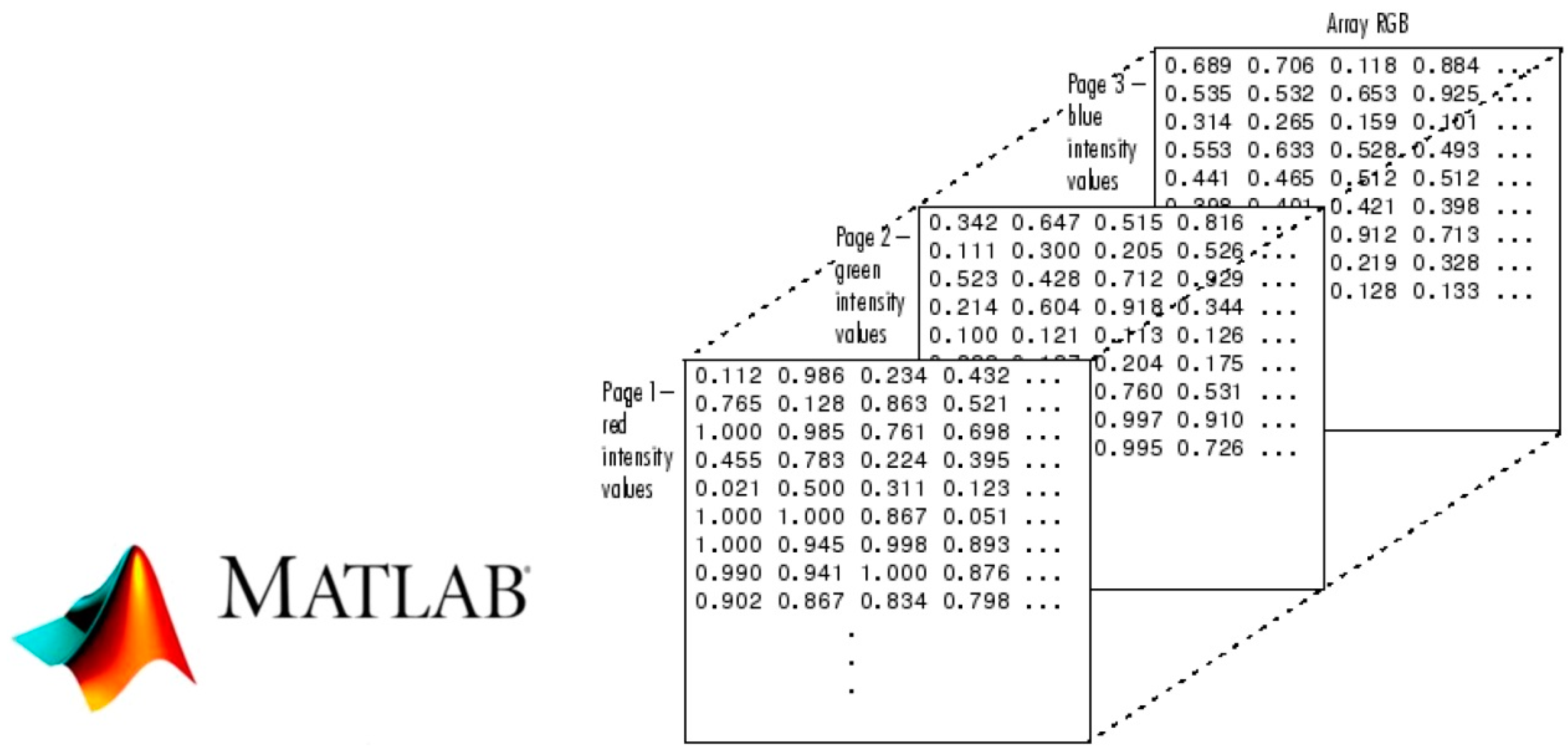

(5) It creates a three-dimensional matrix of zeroes with the same dimensions as the image and 81 sheets of depth (corresponding to the division of the spectrum into steps of Δλ = 5 nm), attached behind the luminance image. This intermediate step is necessary because it is not possible to combine matrices whose dimensions do not match, but it is mathematically possible to edit values inside a matrix that has the same n × m dimensions as the image (Figure 7).
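A minimal Python sketch of step 5, using nested lists in place of a matrix type, is given here; `zero_cube` is a hypothetical name and the 2 × 3 image size is only an example.

```python
def zero_cube(n_rows, n_cols, n_bands=81):
    """Build an n x m x bands structure filled with zeros.

    Every pixel gets its own list, so editing one spectrum
    does not affect any other pixel.
    """
    return [[[0.0] * n_bands for _ in range(n_cols)] for _ in range(n_rows)]

cube = zero_cube(2, 3)

# Values can then be written pixel by pixel and band by band.
cube[0][0][0] = 1.0
```

Building each spectrum with its own list, rather than repeating one list, avoids the aliasing that would make all pixels share the same values.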

Figure 7.

Script structure at point 5, which creates the three-dimensional matrix of zeroes.

(6) It associates with every single pixel in the image its corresponding irradiance, weighted by the relative luminance value at that point. This value, the ratio between the absolute luminance value in that position and the maximum luminance value of the image, is used as a correction factor to take into account the different luminance of the same material within the scene, avoiding the need to measure the irradiance of every single point. The theoretical basis for this calculation is the following: although luminance values differ at two points of the same material, and thus their spectral irradiance differs in absolute value, they nevertheless have similar spectral distributions, only more flattened for lower luminance values.
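The weighting in step 6 can be sketched in Python as follows, assuming the luminance image is available as a list of rows and the source irradiance as a list of per-wavelength values; the function names and the numbers are hypothetical.

```python
def relative_luminance(luminance):
    """Divide every pixel luminance by the maximum luminance of the image."""
    peak = max(max(row) for row in luminance)
    if peak <= 0:
        raise ValueError("the image needs at least one positive luminance")
    return [[value / peak for value in row] for row in luminance]

def pixel_irradiance(luminance, source_irradiance):
    """Scale the source spectrum by the relative luminance of each pixel."""
    weights = relative_luminance(luminance)
    return [[[w * e for e in source_irradiance] for w in row]
            for row in weights]

# 2 x 2 luminance image (cd/m2) and a two-band source spectrum (toy values).
luminance = [[100.0, 50.0],
             [25.0, 0.0]]
source = [2.0, 4.0]

cube = pixel_irradiance(luminance, source)
```

The brightest pixel keeps the full source spectrum, while darker pixels receive the same spectral shape scaled down by their relative luminance.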

(7) For each material, the spectral reflectance or the reflectance of the most similar color contained in the library is employed in the calculation.
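How the most similar color is chosen is not specified in the text. One possible criterion, shown in the Python sketch below purely as an assumption, is the smallest Euclidean distance in RGB space; the library entries, their names and their reflectance values are placeholders and do not come from the cited libraries.

```python
def closest_library_entry(rgb, library):
    """Return the library entry whose RGB color is nearest to `rgb`."""
    def squared_distance(entry):
        return sum((a - b) ** 2 for a, b in zip(rgb, entry["rgb"]))
    return min(library, key=squared_distance)

# Placeholder library: three-band reflectance values are illustrative only.
library = [
    {"name": "white paint", "rgb": (245, 245, 245),
     "reflectance": [0.85, 0.86, 0.86]},
    {"name": "light grey laminate", "rgb": (190, 190, 190),
     "reflectance": [0.55, 0.56, 0.57]},
    {"name": "black felt", "rgb": (20, 20, 20),
     "reflectance": [0.03, 0.03, 0.03]},
]

# Average color of an unmeasured patch, e.g. sampled from the jpeg image.
match = closest_library_entry((200, 198, 195), library)
```

A perceptually uniform color space could be substituted for RGB without changing the structure of the lookup.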

In a first attempt, the analytical procedure was validated using a simplified model that considered the stimulation coming from single materials: the difference between the radiance calculated through Equation (1) and the measured radiance was about 0.002 W/(sr·m²). The graph in Figure 8 shows the difference obtained in the spectral distribution for the desk material; the discrepancy in the band between 720 and 780 nm is due to the spectral reflectance selected from the color library used in the calculation procedure, which differs slightly from the real reflectance, as the catalogued color was only very similar to that of the real material. The very small difference obtained in the final value of radiance validates the use of data contained in the libraries.

Figure 8.

Example of procedure validation: difference obtained in the spectral distribution. The calculated radiance values were very similar to the measured radiance over most of the visible spectrum, differing only at long wavelengths between 720 and 780 nm (point 7).

(8) It calculates the average circadian radiance per wavelength of the whole image using Equation (1) and outputs it in a table (Figure 9).
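The averaging over the image can be sketched in Python as follows, assuming the per-pixel spectra are already stored in an n × m × bands structure of nested lists; `mean_spectrum` is a hypothetical name and the values are toy numbers.

```python
def mean_spectrum(cube):
    """Average the per-pixel spectra of a rectangular cube, band by band."""
    n_pixels = sum(len(row) for row in cube)
    if n_pixels == 0:
        raise ValueError("the cube contains no pixels")
    n_bands = len(cube[0][0])
    totals = [0.0] * n_bands
    for row in cube:
        for spectrum in row:
            for band, value in enumerate(spectrum):
                totals[band] += value
    return [total / n_pixels for total in totals]

# One row, two pixels, two wavelength bands.
cube = [[[1.0, 2.0], [3.0, 4.0]]]
average = mean_spectrum(cube)
```

This sketch averages over every pixel it is given; excluding the black surround of the fisheye image would require filtering those pixels beforehand.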

The final result is an n × m × 45 matrix (in the case of a 10-nm measurement) (Figure 10). In order to calculate the total irradiance reaching the eye for every wavelength, sums are performed: for those materials whose reflection spectrum has not been measured because they occupy only a small portion of the field of view, the RGB values are considered.

Figure 9.

Script structure at point 8 that calculates the average circadian radiance per wavelength.

Figure 10.

Structure of a multidimensional n × m × 45 matrix to calculate the total irradiance reaching the eye for every wavelength.

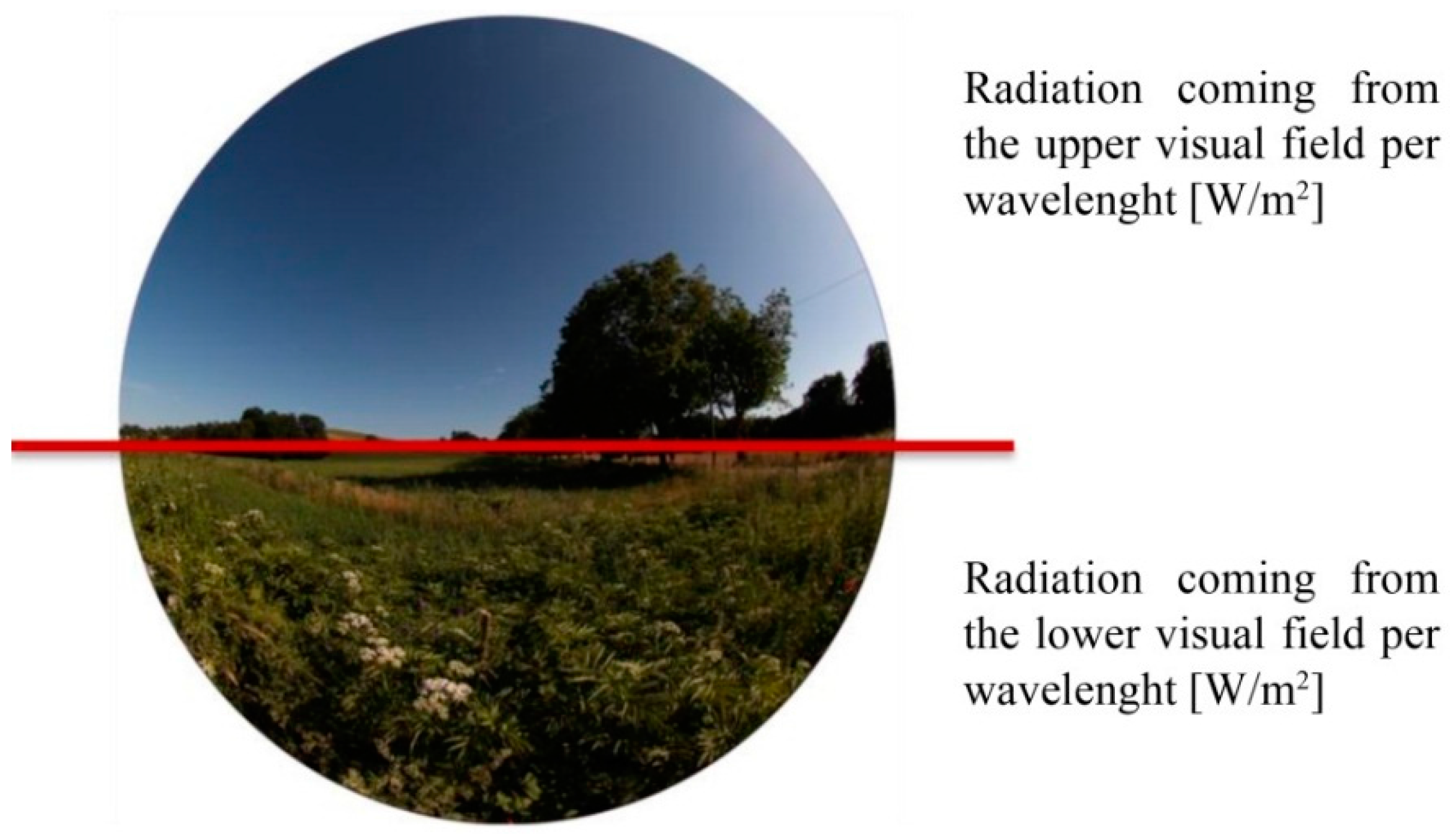

In this way, it is possible to know the quantity of light (radiance) per wavelength reaching the retina from different directions, making it possible to distinguish between light coming from above the horizon and from below it (Figure 11); for instance, within a room, the radiance coming from the light source and the windows located above and that reflected from the furniture located in the lower part of the visual field can be evaluated independently. This operation is important for the calculation of the non-visual stimulus, as it has been found that the circadian photoreceptors, the ipRGCs, are mainly located in the inferior part of the retina, so they are principally stimulated by light coming from the upper part of the visual field [37].
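The separation between the two halves of the visual field can be sketched in Python as follows. Placing the horizon at the middle row of the image is an assumption of this example, and the function names and values are hypothetical.

```python
def band_sums(rows, n_bands):
    """Sum the spectra of all pixels in the given rows, band by band."""
    totals = [0.0] * n_bands
    for row in rows:
        for spectrum in row:
            for band, value in enumerate(spectrum):
                totals[band] += value
    return totals

def split_by_horizon(cube, horizon_row=None):
    """Return (upper, lower) per-wavelength sums for a spectral image cube.

    Rows before `horizon_row` count as the upper half; by default the
    horizon is placed at the middle row (assumption).
    """
    if horizon_row is None:
        horizon_row = len(cube) // 2
    n_bands = len(cube[0][0])
    upper = band_sums(cube[:horizon_row], n_bands)
    lower = band_sums(cube[horizon_row:], n_bands)
    return upper, lower

# Two rows, one pixel each, two wavelength bands (toy values).
cube = [[[1.0, 2.0]],
        [[3.0, 4.0]]]
upper, lower = split_by_horizon(cube)
```

Passing an explicit `horizon_row` allows the split to follow the actual horizon when the camera is tilted or the gaze is not horizontal.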

Figure 11.

The method described makes it possible to know the radiation per wavelength reaching the eye from the upper and from the lower half of the visual field.

This numerical calculation can apply a circadian weighting function, using Brainard’s circadian sensitivity function C(λ), to the spectral reflectance of every material, obtaining what can be called “circadian reflectance”; it then multiplies, for each wavelength, the circadian reflectance of each material by the irradiance of the light source, resulting in the circadian spectral irradiance of every material. However, the spectral reflectance coefficient is independent of the light source illuminating the material; hence, it is more useful when data are collected from online resources.
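The two multiplications described above can be sketched in Python as follows, assuming all spectra are sampled on the same wavelength grid. The function names are hypothetical and the two-band values are toy numbers, not Brainard’s C(λ) data.

```python
def circadian_reflectance(reflectance, c_lambda):
    """Weight a material reflectance by C(lambda), wavelength by wavelength."""
    if len(reflectance) != len(c_lambda):
        raise ValueError("spectra must share the same wavelength grid")
    return [r * c for r, c in zip(reflectance, c_lambda)]

def circadian_irradiance(circ_reflectance, source_irradiance):
    """Multiply circadian reflectance by the source irradiance per wavelength."""
    if len(circ_reflectance) != len(source_irradiance):
        raise ValueError("spectra must share the same wavelength grid")
    return [rc * e for rc, e in zip(circ_reflectance, source_irradiance)]

R = [0.5, 0.25]   # material reflectance (toy values)
C = [1.0, 0.5]    # stand-in for the circadian sensitivity function
E = [2.0, 4.0]    # source irradiance (toy values)

R_circ = circadian_reflectance(R, C)
E_circ = circadian_irradiance(R_circ, E)
```

Since `R_circ` depends only on the material and on C(λ), it can be computed once per library entry and reused for any light source.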