Sparse Representation for Infrared Dim Target Detection via a Discriminative Over-Complete Dictionary Learned Online

Abstract

It is difficult for structural over-complete dictionaries, such as those built from Gabor functions, and for discriminative over-complete dictionaries learned offline and classified manually, to represent natural images with ideal sparsity and to enhance the difference between background clutter and target signals. This paper proposes an infrared dim target detection approach based on sparse representation over a discriminative over-complete dictionary. An adaptive morphological over-complete dictionary is trained and constructed online from the content of the infrared image by the K-singular value decomposition (K-SVD) algorithm. The adaptive morphological over-complete dictionary is then divided automatically into a target over-complete dictionary describing target signals and a background over-complete dictionary describing background clutter, using the criterion that atoms in the target over-complete dictionary can be decomposed more sparsely over a Gaussian over-complete dictionary than those in the background over-complete dictionary. This discriminative over-complete dictionary not only captures significant features of background clutter and dim targets better than a structural over-complete dictionary, but also strengthens the sparse feature difference between background and target more effectively than a discriminative over-complete dictionary learned offline and classified manually. The target and background clutter can be sparsely decomposed over their corresponding over-complete dictionaries but not over the opposite dictionary, so their residuals after reconstruction with a prescribed number of target and background atoms differ markedly. Experimental results show that the proposed approach not only improves sparsity more efficiently but also enhances small target detection performance more effectively.

1. Introduction

The distance between man-made satellites and ground-based EO sensors is usually more than 30,000 km, and the angle subtended by the object at a ground-based EO sensor is so small that the target appears on the sensor as a small blob of only a few pixels. Meanwhile, the energy of the object decays greatly over the long propagation distance, so the target is usually submerged in noise and clutter. This makes infrared dim small target detection and tracking very difficult [1,2]. How to effectively distinguish dim small targets from clutter has been widely studied in recent years, and the many dim target detection algorithms developed can be roughly classified into two categories, namely, detect before track (DBT) and track before detect (TBD) [3–5]. Image filtering and content learning are the two basic methods of DBT-based target detection. Image filtering-based detection algorithms such as Top-Hat [6], TDLMS [7] and the wavelet transform [8,9] usually whiten the image signal and then decide in every scan whether a target is present by thresholding the amplitude according to some criterion, such as constant false alarm rate (CFAR). Content learning-based target detection algorithms such as principal component analysis (PCA) measure the similarity between the image and a template learned in advance from prior knowledge [10]. TBD-based algorithms jointly process multiple consecutive scans and declare the presence of a target, together with its track, by exhaustive hypothesis testing over candidate trajectories. The temporal cross product (TCP) extracts the characteristics of temporal pixels using the temporal profile of infrared image sequences and can effectively enhance the signal-to-clutter ratio (SCR) [11].
A higher detection probability and a lower false alarm probability in each scan not only facilitate subsequent analysis, such as motion analysis, but also reduce the computational complexity of TBD.

Sparse representation over an over-complete dictionary is a recently developed content learning-based target detection strategy [12–14]. An over-complete dictionary contains a large number of atoms representing targets and even background [15]. Gaussian [16,17] and Gabor [18] functions are representative choices for constructing structural over-complete dictionaries offline. It is difficult for these structural over-complete dictionaries to fit targets and backgrounds with unstructured shapes, so their representation coefficient vectors are not sparse enough to distinguish targets from background clutter [19]. An adaptive morphological component over-complete dictionary is constructed according to the image content and can enhance the sparsity of the representation coefficient vector. However, its atoms representing target and background are mixed together, and the sparsity of the representation coefficient vector is usually too low to detect target signals [20]; therefore, the atoms representing targets need to be discriminated from those describing background clutter. Existing techniques usually train a background over-complete dictionary on background clutter and build a target over-complete dictionary from manually selected target signals. Such a manually constructed discriminative over-complete dictionary can greatly improve the sparsity of the representation coefficient vector and the performance of dim target detection. Its serious limitation, however, is that its atoms cannot adapt effectively to moving targets and changing backgrounds, so the atoms of the discriminative over-complete dictionary should be classified automatically to further enhance dim target detection.

This paper proposes an infrared dim target detection approach based on sparse representation over a discriminative over-complete dictionary learned online. An adaptive morphological over-complete dictionary is built from the infrared image content by the K-singular value decomposition (K-SVD) algorithm [21], and a target over-complete dictionary is then separated automatically from a background over-complete dictionary using the criterion that atoms representing dim target signals can be decomposed more sparsely over a Gaussian over-complete dictionary than atoms representing background clutter. The remainder of the paper is organized as follows. Section 2 trains the adaptive over-complete dictionary, and Section 3 divides it into a target over-complete dictionary and a background over-complete dictionary. Section 4 presents the sparsity-driven dim target detection approach. Section 5 evaluates the performance of the discriminative over-complete dictionary against the Gaussian over-complete dictionary, and the results show that the proposed approach significantly enhances target detection performance. Conclusions are drawn in Section 6.

2. Adaptive Morphological Component Dictionary

An infrared dim target image consists of target, background and noise, and can be modeled as [5]:

where $a_k$ is the target intensity amplitude; $(x_t, y_t)$ denotes the target location at instant $k$, with $x_t$ and $y_t$ the horizontal and vertical coordinates, respectively; and $\delta_{x_k}$ and $\delta_{y_k}$ are the extent parameters in the horizontal and vertical directions, respectively. The extent parameters usually span only a few pixels, because the target subtends a very small angle when the distance between the man-made satellite and the ground-based EO receiver is more than 30,000 km. Moreover, the signal-to-noise ratio (SNR) is usually low, because the target's energy decays greatly as it propagates through noise and clutter.
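As a hedged reconstruction, a commonly used form of this model that is consistent with the definitions above (an additive decomposition into target, background and noise, with a two-dimensional Gaussian target profile) is shown below. The background term $f_B$, the noise term $n$ and the exact scaling of the exponent are assumptions; the expression used in [5] may differ in detail.

$$
f(x,y,k) = f_T(x,y,k) + f_B(x,y,k) + n(x,y,k),
\qquad
f_T(x,y,k) = a_k \exp\left\{-\frac{1}{2}\left[\frac{(x-x_t)^2}{\delta_{x_k}^2} + \frac{(y-y_t)^2}{\delta_{y_k}^2}\right]\right\}
$$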

The K-singular value decomposition (K-SVD) algorithm is adopted to learn the image content and train an adaptive over-complete dictionary D from a large number of infrared dim target images. The dictionary is trained by solving the following problem [22]:
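In the standard K-SVD formulation [21], the training patches are stacked as the columns $y_i$ of a matrix $Y$ and their codes $\gamma_i$ as the columns of $\Gamma$, and the dictionary is obtained from the sparsity-constrained problem below, where $T_0$ is a sparsity bound. Because the sparse coding stage described next uses an error tolerance ε instead, the error-constrained variant is shown as well; which of the two forms corresponds exactly to Equation (3) is an assumption here.

$$
\min_{D,\Gamma}\ \|Y - D\Gamma\|_F^2 \quad \text{s.t.} \quad \|\gamma_i\|_0 \le T_0 \ \ \forall i
\qquad \text{or} \qquad
\min_{D,\Gamma}\ \sum_i \|\gamma_i\|_0 \quad \text{s.t.} \quad \|y_i - D\gamma_i\|_2^2 \le \varepsilon \ \ \forall i
$$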

Both D and γ must be solved for jointly in Equation (3). Constructing the adaptive over-complete dictionary is therefore an iterative process with two stages in every iteration, namely, sparse coding and dictionary update [23]:

- (1) Sparse coding. In this stage, D is held fixed and the sparse representation γ is updated by solving the following problem:

That is, the sparsest representation vector γ is sought by the orthogonal matching pursuit (OMP) algorithm under the constraint that the residual is less than the error tolerance ε [24,25].
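A minimal NumPy sketch of this sparse coding stage is shown below. It is a plain orthogonal matching pursuit that stops once the residual norm falls below the tolerance `eps` (playing the role of ε) or a maximum number of atoms has been selected. The function name `omp`, the assumption of unit-norm atoms and the stopping details are illustrative choices, not the authors' MATLAB implementation.

```python
import numpy as np


def omp(D, y, eps=1e-6, max_atoms=None):
    """Orthogonal matching pursuit with an error tolerance.

    D: (n, K) dictionary whose columns (atoms) are assumed to have unit norm.
    y: (n,) signal. Stops when ||y - D @ coef||_2 <= eps or when max_atoms
    atoms have been selected. Returns the (K,) coefficient vector.
    """
    y = np.asarray(y, dtype=float)
    if max_atoms is None:
        max_atoms = min(D.shape)  # at most n linearly independent atoms exist
    coef = np.zeros(D.shape[1])
    support = []
    residual = y.copy()
    while np.linalg.norm(residual) > eps and len(support) < max_atoms:
        # Greedy step: pick the atom most correlated with the current residual.
        k = int(np.argmax(np.abs(D.T @ residual)))
        if k in support:
            break  # no unused atom correlates with the residual better than a selected one
        support.append(k)
        # Orthogonal step: re-fit all selected coefficients by least squares.
        x, *_ = np.linalg.lstsq(D[:, support], y, rcond=None)
        residual = y - D[:, support] @ x
    if support:
        coef[support] = x
    return coef
```

Applied to every training patch with D fixed, this yields the columns of the code matrix. Re-fitting all selected coefficients by least squares at each step is what distinguishes OMP from plain matching pursuit: the residual stays orthogonal to every atom already chosen.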

- (2) Dictionary update. In this stage, only one atom $d_k$ of the dictionary D is updated at a time, and the corresponding residual is estimated by:

The pair $(d_k, \gamma_k)$ is then optimized approximately by the SVD of this residual. By repeating Equation (5), every atom $d_k$ in D is updated in turn, and the iterations continue until the residual is less than the error tolerance ε. The signal representation error decreases rapidly as the number of iterations increases, so the adaptive dictionary is obtained after a few iterations. The final dictionary D, called the adaptive morphological over-complete dictionary, is matched to the content of the infrared image.
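The dictionary update stage can be sketched in NumPy as follows, assuming the usual K-SVD rule [21]: for atom $d_k$, the residual matrix computed without that atom is restricted to the training patches that actually use it, and its best rank-1 approximation (the leading singular pair) gives the new atom and its coefficients. The function names are hypothetical, and a complete iteration would alternate one such sweep with the sparse coding of stage (1).

```python
import numpy as np


def ksvd_update_atom(D, X, Gamma, k):
    """K-SVD update of atom k (column of D) and its coefficients (row k of Gamma).

    D: (n, K) dictionary, X: (n, N) training patches as columns,
    Gamma: (K, N) sparse codes. D and Gamma are modified in place.
    """
    users = np.flatnonzero(Gamma[k, :])  # patches whose codes use atom k
    if users.size == 0:
        return  # unused atom: left unchanged in this sketch
    # Residual E_k on those patches, with atom k's own contribution added back.
    E_k = X[:, users] - D @ Gamma[:, users] + np.outer(D[:, k], Gamma[k, users])
    U, s, Vt = np.linalg.svd(E_k, full_matrices=False)
    D[:, k] = U[:, 0]                  # new unit-norm atom
    Gamma[k, users] = s[0] * Vt[0, :]  # coefficients of the best rank-1 fit


def ksvd_dictionary_update(D, X, Gamma):
    """One dictionary-update sweep: every atom is updated in turn."""
    for k in range(D.shape[1]):
        ksvd_update_atom(D, X, Gamma, k)
```

Because the previous contribution of atom $d_k$ is itself a rank-1 matrix on the same patches, replacing it by the optimal rank-1 fit can never increase the total representation error, and the sparsity pattern of the codes is preserved.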

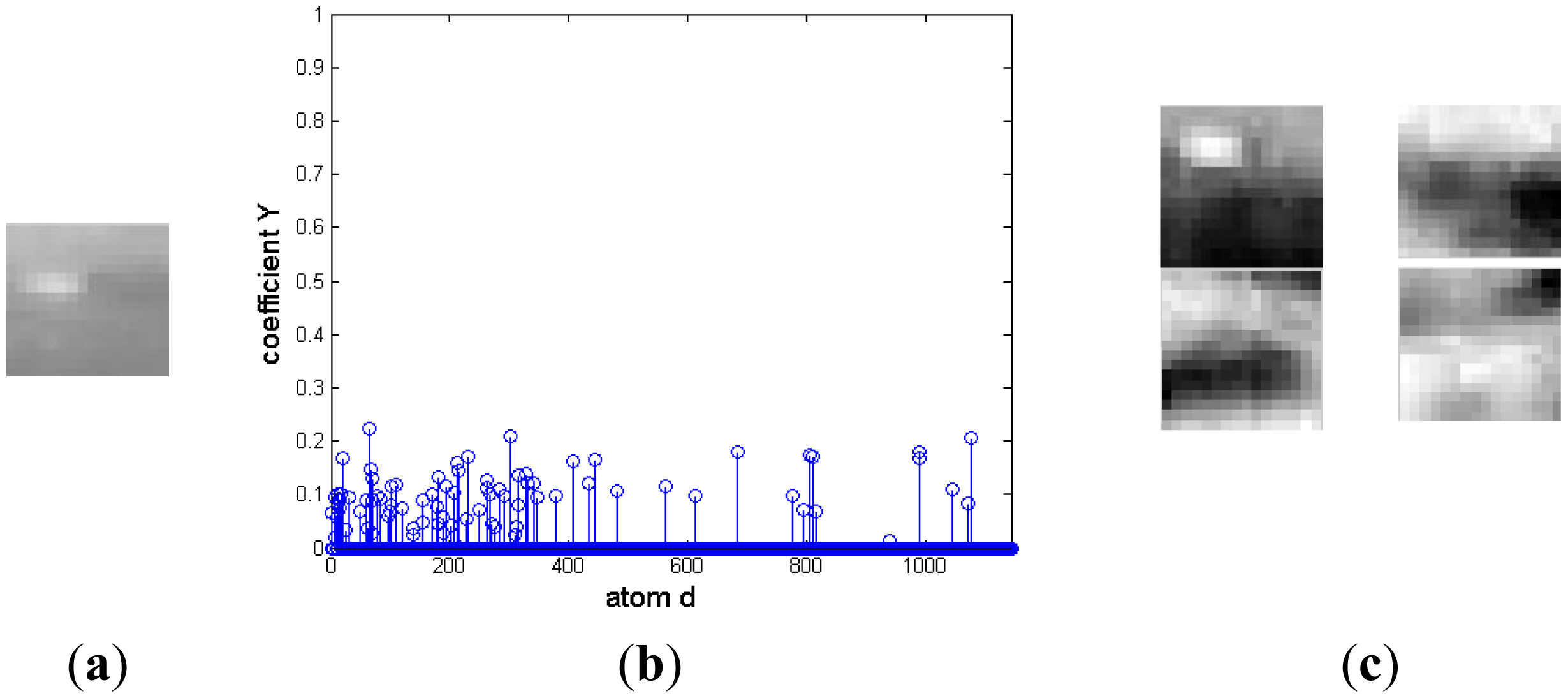

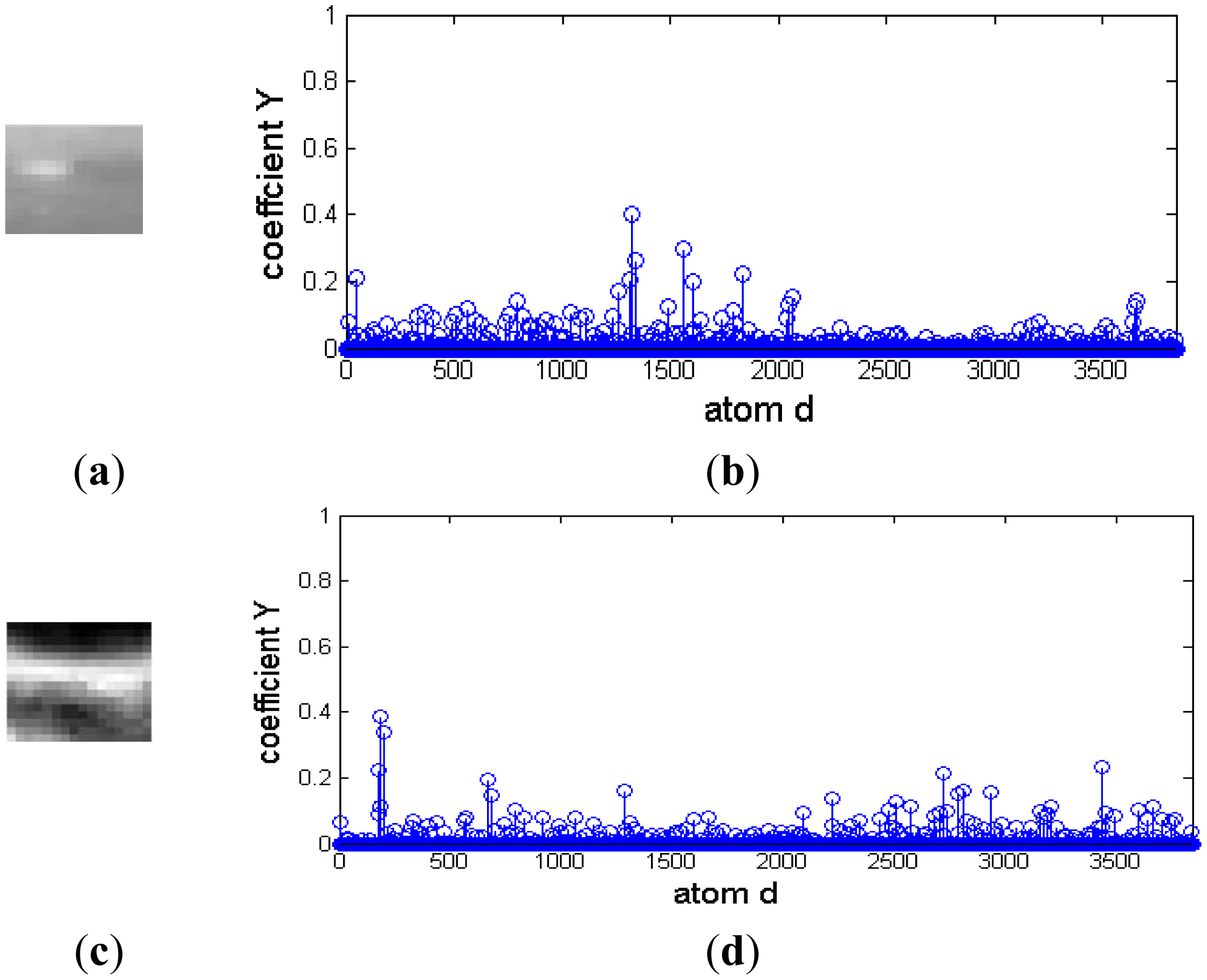

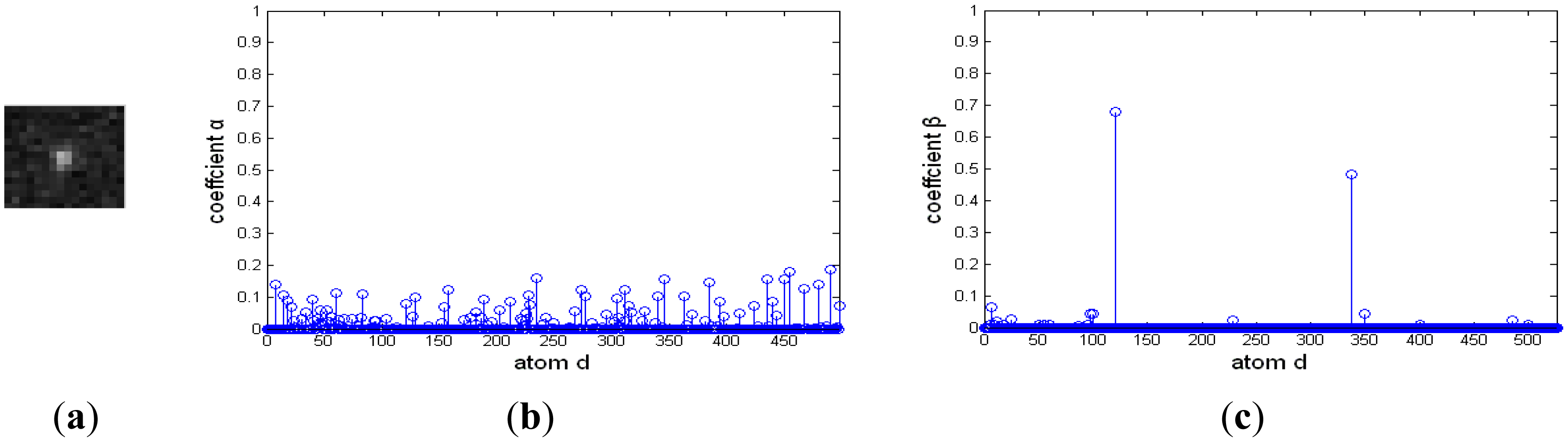

In the morphological over-complete dictionary D, the atoms representing target and background are mixed together. Because a signal is then decomposed over both target atoms and background atoms, two difficulties arise. First, the representation coefficients may not be sparse. Second, the coefficients are irregular and cannot easily discriminate the target from the background. Figure 1 illustrates this challenge. Figure 1a contains a target, and its representation coefficients over the dictionary D, which contains 1,144 atoms, are shown in Figure 1b. Many coefficients are nonzero on atoms representing both target and background; the four atoms corresponding to the four largest nonzero entries are shown in Figure 1c. The first atom represents the target, and the other three describe the background. It is therefore necessary to discriminate the atoms representing the target from those describing background clutter.

3. Discriminative Over-Complete Dictionary Constructed Online

The sparse representation model assumes that a signal can be reconstructed from an over-complete dictionary of the same class together with the corresponding representation coefficients [26]. A background signal $f_b$ can be represented by a linear combination of background atoms:

Similarly, a target signal $f_t$ can be sparsely represented as a linear combination of target atoms:

Since background clutter and the target signal usually consist of different materials, they have distinct signatures and are therefore represented by different over-complete dictionaries. If an infrared image block contains a target signal, it can ideally be represented by the target dictionary but not by the background atoms. In this case, α is a zero vector and β is a sparse vector. Therefore, the infrared image can be sparsely represented over the union of the background and target over-complete dictionaries, and the locations of the nonzero entries in the sparse vector γ carry critical information about the class of the infrared image.
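Writing the two models together makes the role of γ explicit. Assuming the usual joint formulation of sparsity-based detection (as in the hyperspectral detector of [15]), with α and β the coefficient vectors over $D_b$ and $D_t$, and with D equal to $[D_b \; D_t]$ up to a reordering of its columns:

$$
f = D_b\alpha + D_t\beta = \begin{bmatrix} D_b & D_t \end{bmatrix}\begin{bmatrix}\alpha \\ \beta\end{bmatrix} = D\gamma
$$

so a pure target block ideally has $\alpha = 0$ and a sparse β, while a pure background block has $\beta = 0$ and a sparse α.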

Existing techniques usually train a background over-complete dictionary on background clutter and build a target over-complete dictionary offline from manually selected target signals. The serious limitation of such an offline discriminative over-complete dictionary is that its atoms cannot adapt effectively to moving targets and changing backgrounds, so the discrimination between target and clutter may become too small to separate targets from background clutter. It is therefore necessary to classify the atoms of the discriminative over-complete dictionary automatically online to further enhance dim target detection.

As discussed in the Introduction, the distance between a geosynchronous satellite and a ground-based EO receiver is more than 30,000 km, and the subtended angle is so small that the target on the EO sensor appears as a small blob of only a few pixels with an approximately Gaussian intensity distribution. Dim small targets are therefore usually described by the two-dimensional Gaussian intensity model (GIM), which is widely used for infrared dim small targets:

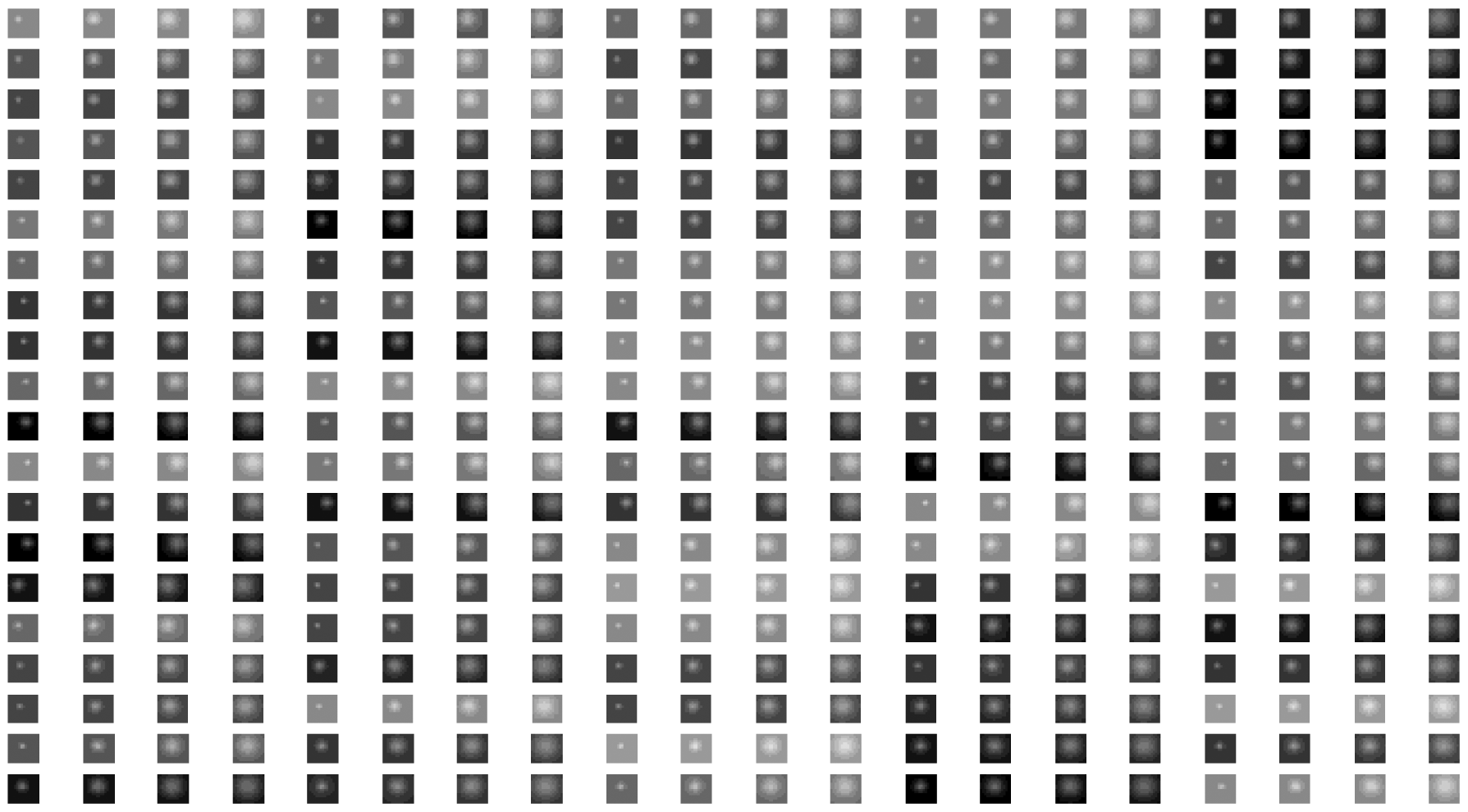

The extent parameters control how widely the target intensity spreads: the model represents a small point when they are very small and a flat block when they are very large. In content learning-based target detection algorithms, the correlation between the infrared image and the GIM is usually measured to decide whether a dim target is present. In this paper, a GIM-based structural over-complete dictionary is used to test the atoms of the adaptive morphological component dictionary and to separate automatically the atoms representing the target from those describing background clutter. The GIM has four parameters, namely, the center position $(i_0, j_0)$, the peak intensity $I_{max}$, and the horizontal and vertical extent parameters, which are varied to generate a large number of diverse atoms with different positions, brightness and shapes. Figure 2 shows part of the Gaussian over-complete dictionary $D_{gaussian}$; each atom has 7 × 7 pixels.
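A sketch of how such a Gaussian over-complete dictionary might be generated is given below, assuming the common GIM form $I(i,j) = I_{max}\exp\{-[(i-i_0)^2/(2\sigma_x^2) + (j-j_0)^2/(2\sigma_y^2)]\}$ with $\sigma_x, \sigma_y$ standing for the extent parameters. The grid of centers, the set of extents and the function names are hypothetical choices. Because every atom is normalized to unit ℓ2 norm, the peak intensity $I_{max}$ only rescales the coefficients and is fixed to 1 here.

```python
import numpy as np


def gaussian_atom(size, i0, j0, sx, sy, peak=1.0):
    """size x size patch of a 2-D Gaussian centered at (i0, j0) with extents (sx, sy)."""
    i, j = np.mgrid[0:size, 0:size]  # i: row index, j: column index
    return peak * np.exp(-((i - i0) ** 2 / (2 * sx ** 2) + (j - j0) ** 2 / (2 * sy ** 2)))


def gaussian_dictionary(size=7, extents=(0.5, 1.0, 1.5, 2.0)):
    """Columns are unit-norm, vectorized Gaussian atoms for every center and extent pair."""
    atoms = []
    for i0 in range(size):
        for j0 in range(size):
            for sx in extents:
                for sy in extents:
                    a = gaussian_atom(size, i0, j0, sx, sy).ravel()
                    atoms.append(a / np.linalg.norm(a))
    return np.stack(atoms, axis=1)
```

With 7 × 7 atoms, 49 centers and four extents per axis, this sketch produces 49 × 16 = 784 atoms, so the 49-dimensional patch space is covered sixteen times over.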

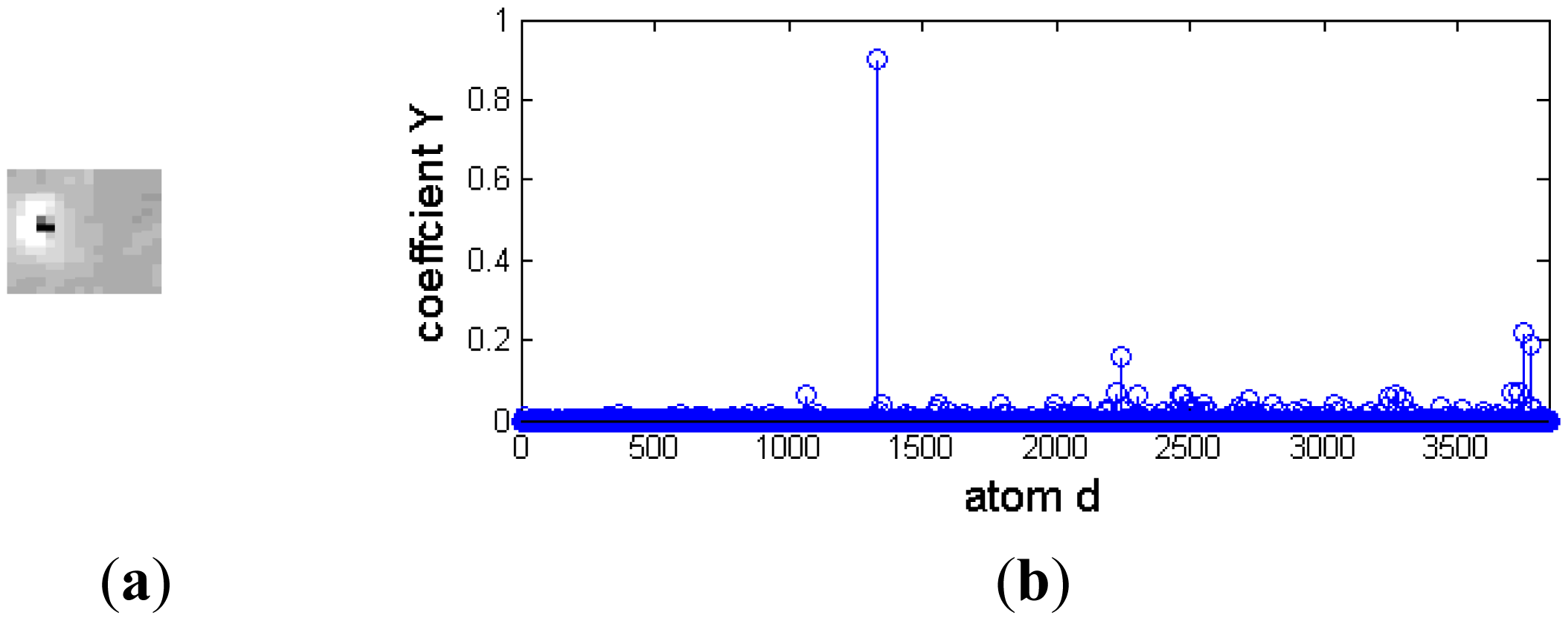

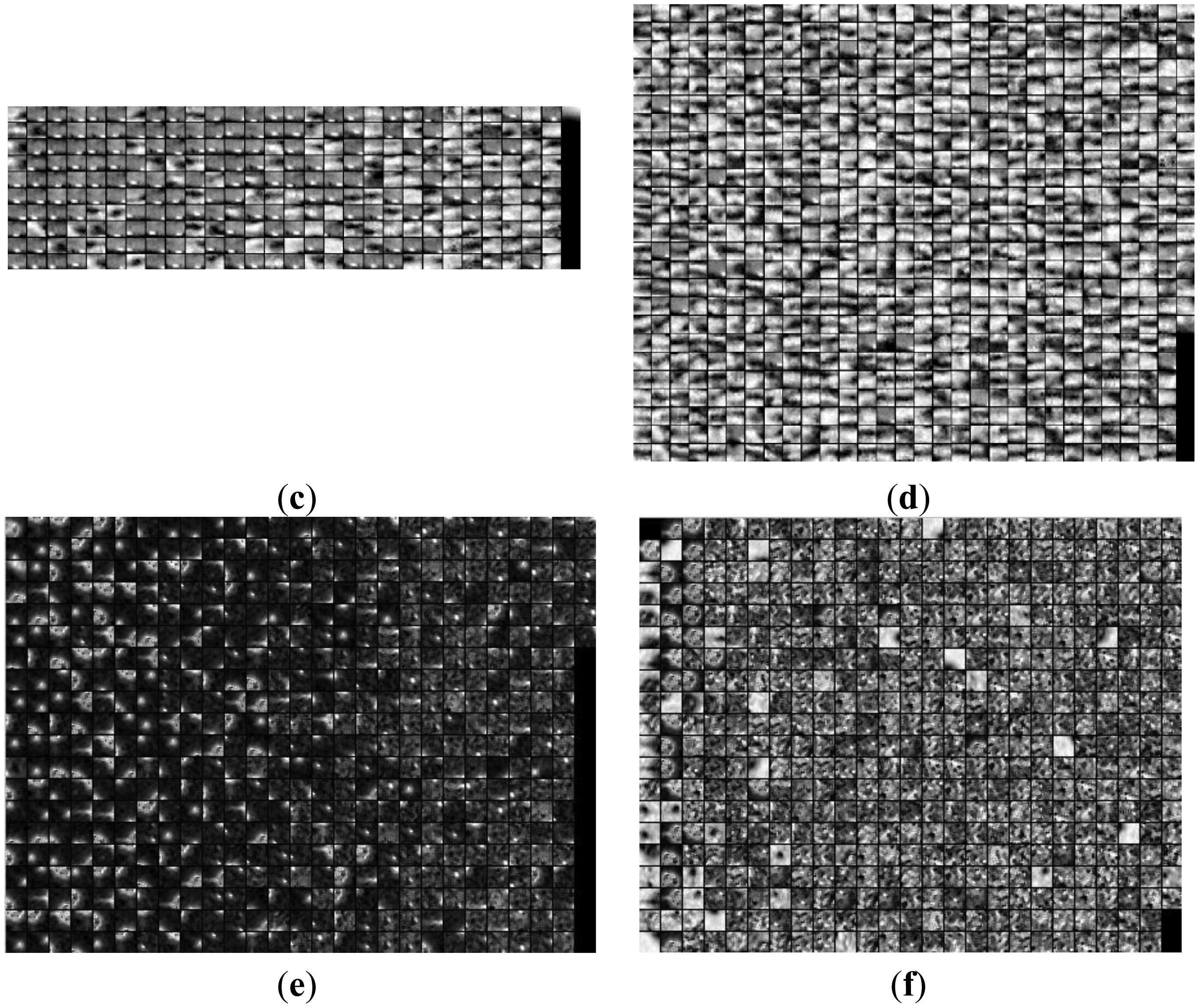

Although a dim moving target is always corrupted by environmental noise, it approximately follows a two-dimensional Gaussian model. By contrast, different types of background clutter have diverse and rich morphologies. For example, cirrocumulus cloud is composed of small spherical clouds arranged in rows or groups, whereas stratocumulus cloud is generally larger and looser and varies in thickness and shape. Figure 3 shows the representation coefficients of a target signal and of background noise, both taken from a deep space image, decomposed over a Gaussian over-complete dictionary. Figures 3a and 3b show the target image block and its representation coefficients, respectively. The target signal is a bright blob with a dark noise point at its center and closely resembles a two-dimensional Gaussian function. Only a few representation coefficients are nonzero, so the target signal can be decomposed sparsely over the Gaussian over-complete dictionary. Figures 3c and 3d show the background noise and its representation coefficients, respectively. Many of its coefficients are nonzero, so the background noise must be reconstructed from many Gaussian atoms.

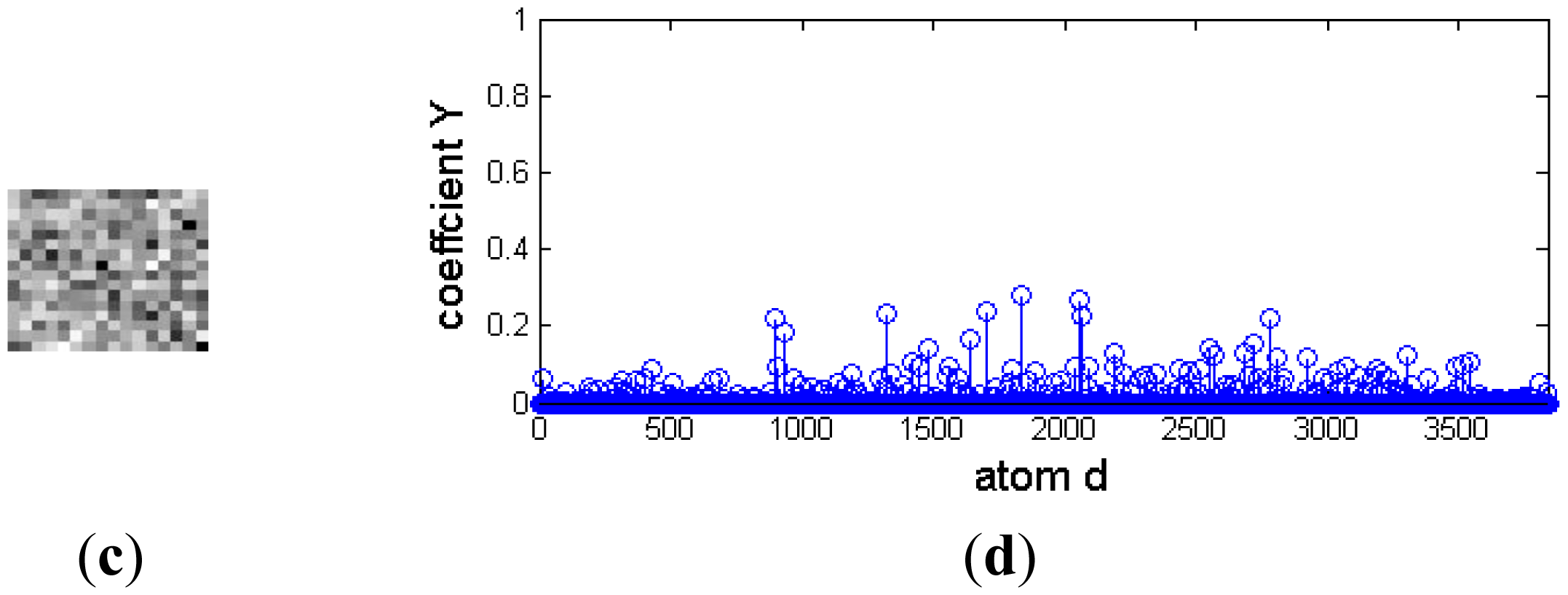

Figure 4 shows a small infrared target in a cloud background and the corresponding representation coefficients over a Gaussian over-complete dictionary. Figures 4a and 4b show the target signal and its representation coefficients, respectively. This target signal is a bright rectangle, and it has more nonzero representation coefficients than the Gaussian-like target signal in Figure 3; nevertheless, a bright rectangular target can still be reconstructed from a few Gaussian atoms with the largest nonzero coefficients. Figures 4c and 4d show the cloud background and its representation coefficients, respectively. Many of these coefficients are nonzero, so the cloud background cannot be decomposed sparsely. Moreover, the difference between the representation coefficients of the target signal and the background is so small that it is hard to distinguish the target from background clutter.

Therefore, compared with background atoms of the adaptive morphological component dictionary, target atoms can be reconstructed from fewer Gaussian atoms. In other words, with the same number of Gaussian atoms having the largest sparse coefficients, the residual of a background atom is much greater than that of a target atom. Based on this idea, the atoms of the adaptive morphological over-complete dictionary D can be classified into a target over-complete dictionary $D_t$ and a background over-complete dictionary $D_b$. Each atom $d_k$ is decomposed over the Gaussian over-complete dictionary $D_{gaussian}$ as follows:

Whether $d_k$ is a target atom is decided by comparing its residual $r(d_k)$ with a threshold δ:

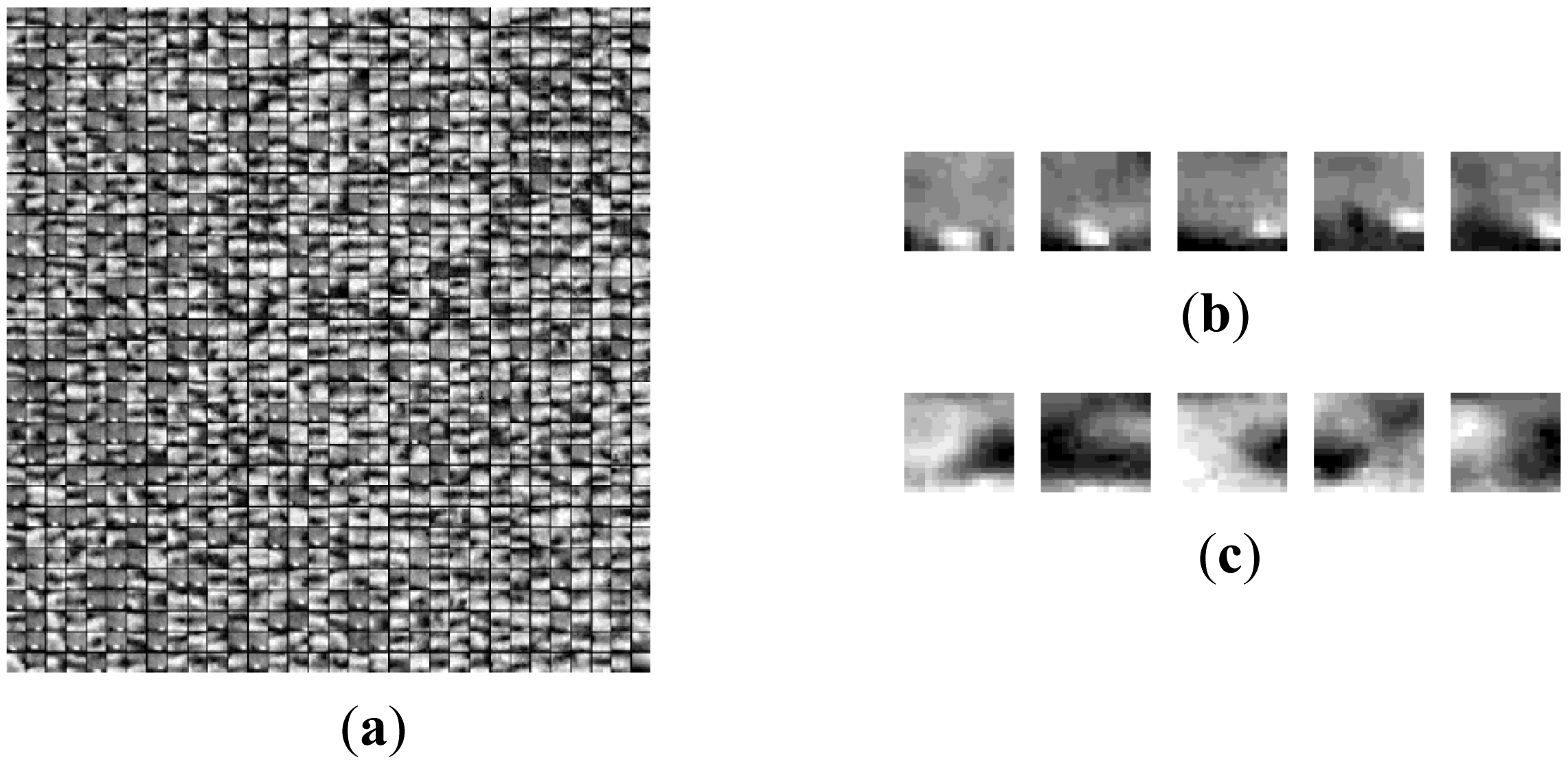

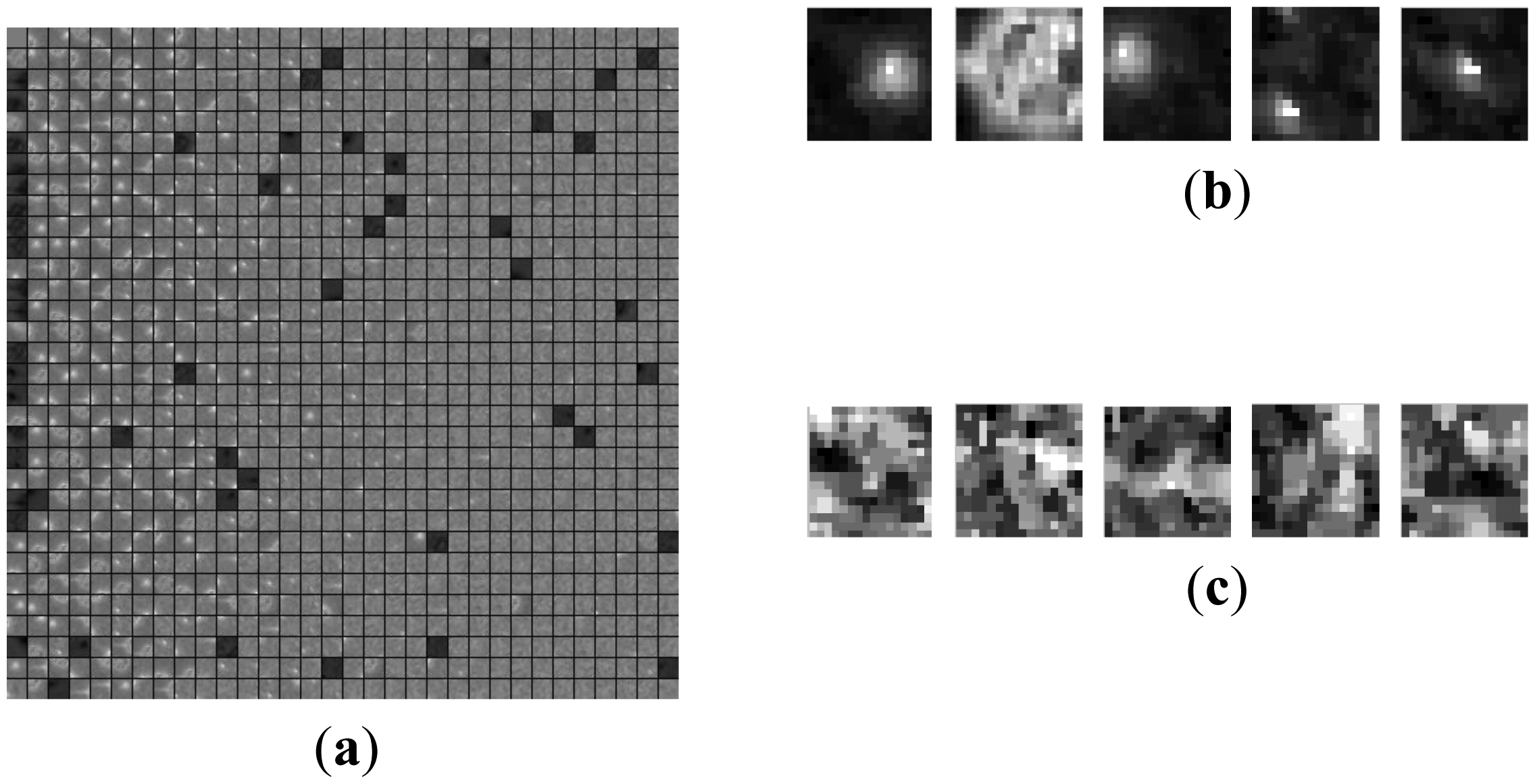

The threshold δ is usually proportional to the size of the atom. Once every atom of D has been classified, the adaptive background over-complete dictionary $D_b$ and target over-complete dictionary $D_t$ are constructed online and automatically. Figure 5 shows an example of the discriminative over-complete dictionary learned online. Figure 5a shows part of the adaptive morphological dictionary D for a space target image, and Figure 5b shows five target atoms. The target atoms have rich shapes and reflect the morphological characteristics of the original dim target more faithfully than Gaussian atoms. Five background atoms are shown in Figure 5c, all of which are noise.
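The classification rule can be sketched as follows, assuming that $r(d_k)$ is the ℓ2 norm of the residual left after a greedy (OMP) fit of $d_k$ with at most k Gaussian atoms, and that an atom is declared a target atom when $r(d_k) < \delta$, since target atoms leave smaller residuals. The helper names and default values are hypothetical; in particular, the threshold used in Section 5 (three times the atom size) presumably refers to the authors' own residual scaling, so `delta` is left as a free parameter here.

```python
import numpy as np


def k_atom_residual(G, d, k):
    """Norm of the residual of d after a greedy (OMP) fit with at most k atoms of G.

    G: (n, M) Gaussian dictionary with unit-norm columns; d: (n,) atom to test.
    """
    d = np.asarray(d, dtype=float)
    support = []
    r = d.copy()
    for _ in range(k):
        idx = int(np.argmax(np.abs(G.T @ r)))
        if idx in support:
            break  # no unused atom correlates with the residual better than a selected one
        support.append(idx)
        x, *_ = np.linalg.lstsq(G[:, support], d, rcond=None)
        r = d - G[:, support] @ x
    return float(np.linalg.norm(r))


def split_dictionary(D, G, k=5, delta=0.5):
    """Split the atoms (columns) of D into target atoms D_t and background atoms D_b.

    An atom is labeled a target atom when its k-atom Gaussian residual is below delta.
    Returns (D_t, D_b, residuals).
    """
    res = np.array([k_atom_residual(G, D[:, c], k) for c in range(D.shape[1])])
    is_target = res < delta
    return D[:, is_target], D[:, ~is_target], res
```

In practice `G` would be the Gaussian over-complete dictionary $D_{gaussian}$ and `D` the learned adaptive morphological dictionary; the split is recomputed whenever D is relearned online.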

4. Dim Target Detection Criteria

Once the target and background over-complete dictionaries $D_t$ and $D_b$ have been learned online by the above procedure, an image block f can be decomposed over each of the two dictionaries with error tolerance σ:

These problems are solved approximately by greedy pursuit algorithms such as orthogonal matching pursuit (OMP) or subspace pursuit (SP). A target can be sparsely decomposed over the target over-complete dictionary, but not over the background over-complete dictionary. Consequently, for target signals, the residual after reconstruction from the target atoms with the largest representation coefficients is smaller than that after reconstruction from the same number of background atoms with the largest representation coefficients. Let the residual of reconstruction over the target over-complete dictionary be denoted $r_t(f)$, and that over the background over-complete dictionary $r_b(f)$:

The sparsity-based dim target detector then compares the difference between the two residuals with a prescribed threshold, i.e.,

If $D(f) > \eta$, f is labeled as a target; otherwise, it is labeled as background clutter, where η is the prescribed threshold.
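Putting these pieces together, the detector can be sketched under the following assumptions: each residual is taken after a greedy (OMP) fit with at most m atoms (m = 5 in Section 5), the residual is normalized by ‖f‖ (an assumption about what "normalization" means in Section 5), and the statistic is $D(f) = r_b(f) - r_t(f)$, which is positive when the target dictionary explains f better. The function names are hypothetical.

```python
import numpy as np


def m_term_residual(Dict, f, m):
    """Normalized residual ||f - Dict x|| / ||f|| after a greedy OMP fit with at most m atoms."""
    f = np.asarray(f, dtype=float)
    norm_f = np.linalg.norm(f)
    if norm_f == 0:
        return 0.0
    support = []
    r = f.copy()
    for _ in range(m):
        idx = int(np.argmax(np.abs(Dict.T @ r)))
        if idx in support:
            break  # no unused atom correlates with the residual better than a selected one
        support.append(idx)
        x, *_ = np.linalg.lstsq(Dict[:, support], f, rcond=None)
        r = f - Dict[:, support] @ x
    return float(np.linalg.norm(r) / norm_f)


def detect(f, D_t, D_b, m=5, eta=0.0):
    """Return (is_target, score) with score = r_b(f) - r_t(f) compared against eta."""
    r_t = m_term_residual(D_t, f, m)  # residual over the target dictionary
    r_b = m_term_residual(D_b, f, m)  # residual over the background dictionary
    score = r_b - r_t
    return score > eta, score
```

Using the same m for both dictionaries keeps the comparison fair: neither dictionary can lower its residual simply by spending more atoms.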

5. Experimental Results and Analysis

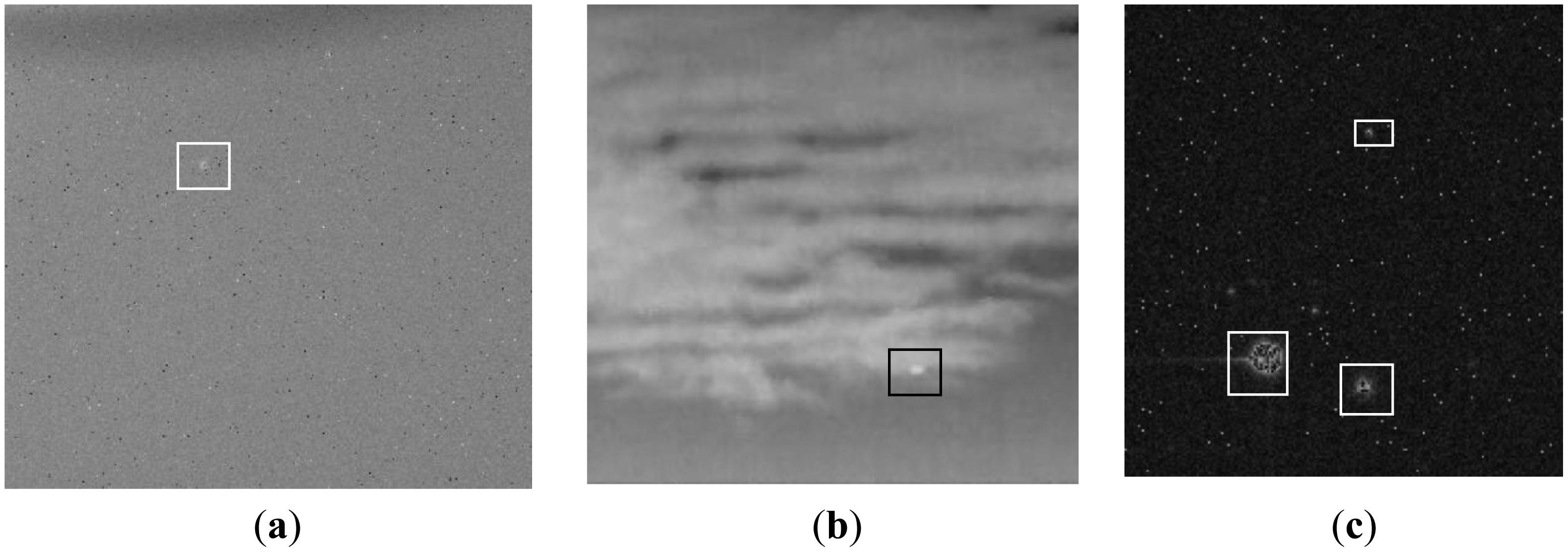

The following experiments were implemented in MATLAB on a personal computer with a Pentium dual-core E5900 CPU. Figure 6 shows low-contrast infrared images captured in the field by an EO imaging tracking system: Figure 6a is from a deep space image sequence, Figure 6b is from a cloud image sequence, and Figure 6c is a multi-target image. The background clutter is noise in the deep space and multi-target images and cloud in the cloud images. Each target is the brighter spot at the center of a rectangular box. The SNRs are about 2.3 in Figure 6a and 3.5 in Figure 6b. In Figure 6c, three targets of different scales are marked, and their SNRs differ.

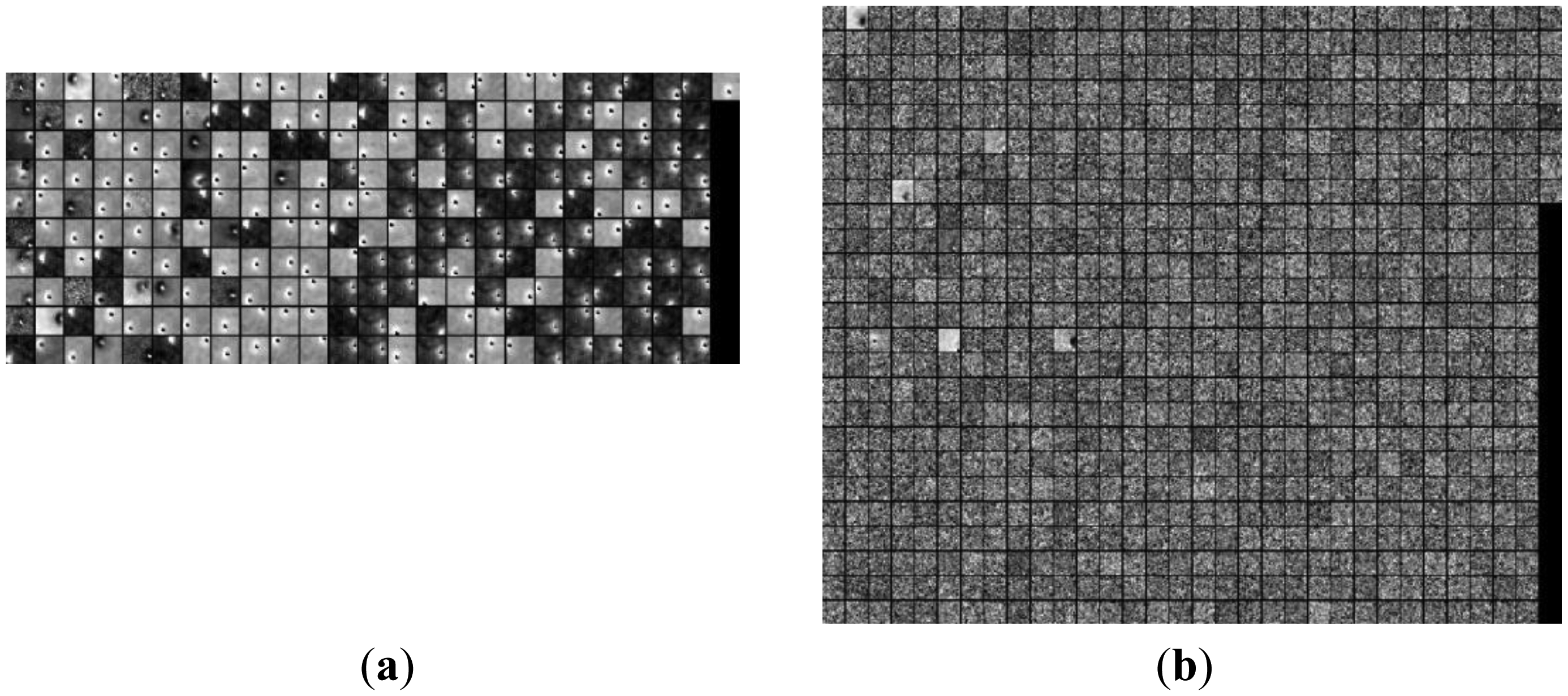

Figures 5, 7 and 8 show the adaptive morphological over-complete dictionaries for the deep space images, cloud images and multi-target images, respectively. Compared with the Gaussian over-complete dictionary shown in Figure 2, the adaptive morphological over-complete dictionary has more diverse and rich morphology, so it is better suited to representing the original images with fewer atoms. Every atom is 7 × 7 pixels.

The space image and cloud image are decomposed over a Gabor over-complete dictionary (GD), a Gaussian model over-complete dictionary (GMD), and an adaptive morphological over-complete dictionary (AMCD), and then reconstructed from the five atoms with the largest representation coefficients. The first two are structural dictionaries, and the last one is a non-structural dictionary. The residual energy between the original image and the reconstructed image is used to evaluate the sparse representation capability.

The smaller the residual energy, the more powerful the sparse representation. Ten target image blocks and ten background image blocks from the space image and cloud image are decomposed and reconstructed, and their (unnormalized) residual energies are shown in Figure 9. For all twenty image blocks, the residual energy of AMCD is the smallest and that of GD is the largest. This indicates that the non-structural dictionary AMCD, which is trained from the image content, describes the morphological components more effectively than the structural dictionaries GD and GMD, and that its sparse representation ability is the strongest.
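One way to write this residual energy, assuming that $\Lambda_5$ indexes the five atoms $d_i$ with the largest-magnitude coefficients $\gamma_i$ and that the energy is the squared ℓ2 norm of the difference:

$$
E(f) = \Big\| f - \sum_{i \in \Lambda_5} \gamma_i d_i \Big\|_2^2
$$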

The discriminative over-complete dictionaries for the space image, cloud image and multi-target image are shown in Figure 10: Figures 10a and 10b show the target and background over-complete dictionaries for the space image, Figures 10c and 10d those for the cloud image, and Figures 10e and 10f those for the multi-target image. The threshold δ used to separate the target and background over-complete dictionaries is set to three times the atom size, and the parameter k is set to five. The target atoms are bright points with diverse shapes located at various positions within the image blocks, whereas the background atoms represent noise or cloud clutter, depending on the image. There are 250 target atoms and 894 background atoms for the deep space image, 280 target atoms and 884 background atoms for the cloud image, and 526 target atoms and 498 background atoms for the multi-target image. For the space image, three background atoms are wrongly identified as target atoms and two target atoms as background atoms; for the cloud image, 22 background atoms and nine target atoms are misclassified in the same way; and for the multi-target image, seven background atoms and six target atoms are misclassified. The correct classification rates for the space, cloud and multi-target images are above 98%, 91%, and 95%, respectively; that is, the accuracy of the Gaussian-dictionary-based criterion fluctuates with the complexity of the background clutter.

Figures 11–13 show the representation coefficients of target image blocks decomposed over this discriminative over-complete dictionary. Figures 11a–13a show the target signals, and Figures 11b–13b and 11c–13c show the coefficients over the corresponding background and target over-complete dictionaries, respectively. Figures 11b–13b contain many nonzero coefficients, meaning that the signals cannot be sparsely represented by the background over-complete dictionary, whereas Figures 11c–13c contain fewer nonzero coefficients, indicating that the target image blocks can be sparsely represented by the target over-complete dictionary. Moreover, with the same number m of atoms (here m = 5), the residual after reconstruction over the background over-complete dictionary is much larger than that over the target over-complete dictionary. When each target signal is reconstructed from the five target atoms with the largest nonzero coefficients in the corresponding target over-complete dictionary, the normalized residuals for the deep space, cloud and multi-target images are very small, about 0.13, 0.17 and 0.09, respectively. In contrast, when the five background atoms with the largest nonzero coefficients in the corresponding background over-complete dictionary are used, the residuals are large: 0.94, 0.91 and 0.89, respectively. Since $r_t(f) < r_b(f)$, each image block is correctly labeled as a target.

Figures 14–16 show the representation coefficients of background image blocks decomposed over this discriminative over-complete dictionary. Figures 14a–16a show the background image blocks, and Figures 14b–16b and 14c–16c show the representation coefficients over the corresponding background and target over-complete dictionaries, respectively. Both Figures 14b–16b and Figures 14c–16c contain some nonzero coefficients, but Figures 14b–16b contain fewer, indicating that the background image blocks are represented more sparsely over the corresponding background over-complete dictionary than over the corresponding target over-complete dictionary. Moreover, with the same number of atoms, the residual after reconstruction over the background over-complete dictionary is smaller than that over the target over-complete dictionary. When each background block is reconstructed from the five background atoms with the largest nonzero coefficients in the corresponding background over-complete dictionary, the normalized residuals for the deep space, cloud and multi-target images are about 0.17, 0.28 and 0.10, respectively, whereas the residuals obtained with the five target atoms with the largest nonzero coefficients in the corresponding target over-complete dictionary are 0.64, 0.83 and 0.75, respectively. Since $r_t(f) > r_b(f)$, each image block is labeled as background.

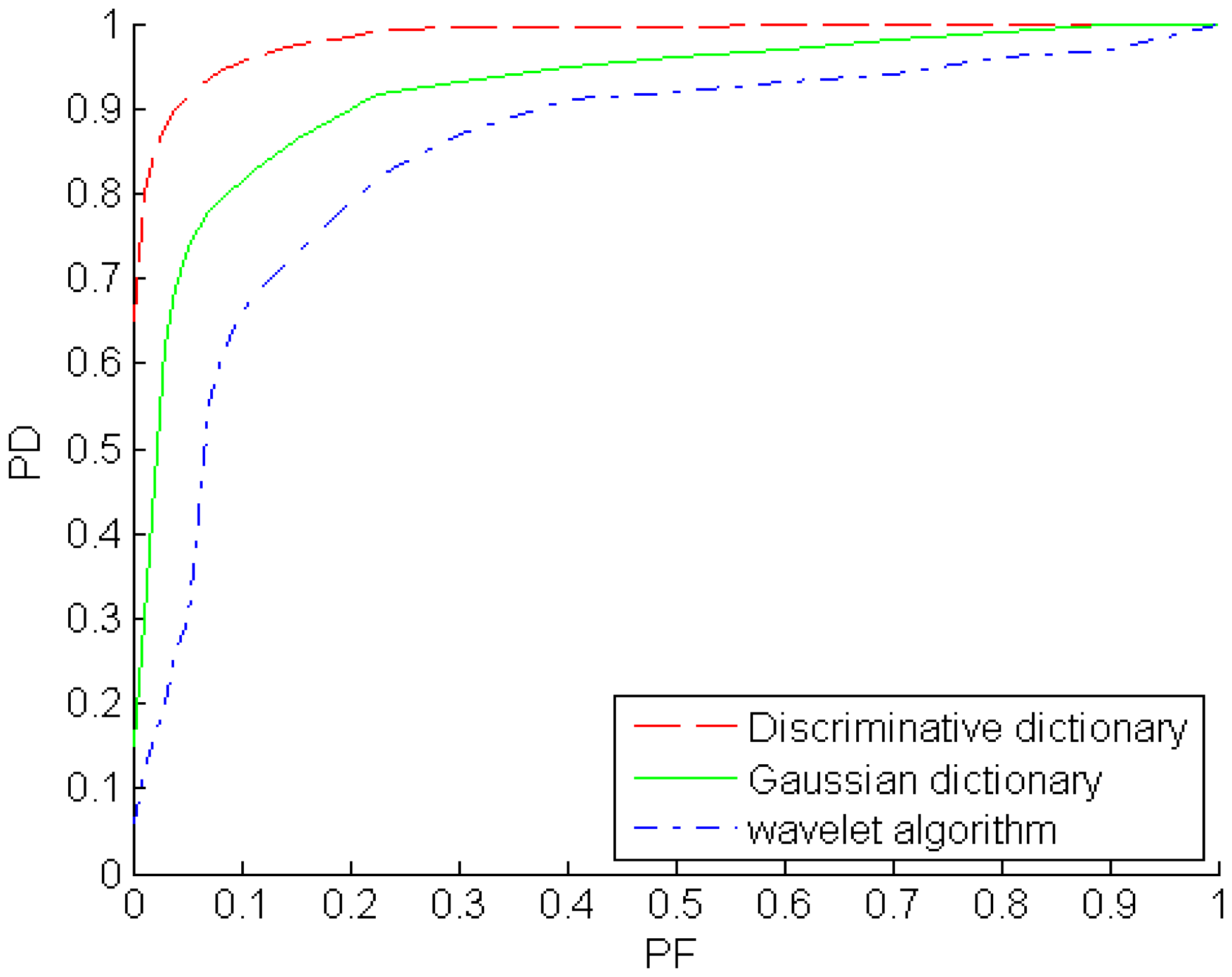

The receiver operating characteristic (ROC) curves of the Gaussian over-complete dictionary, the discriminative over-complete dictionary and the wavelet algorithm are shown in Figure 17. The ROC curve describes the probability of detection (PD) as a function of the probability of false alarm (PF). PF is the number of false alarms (background pixels declared as target) divided by the number of background samples, and PD is the number of hits (target pixels declared as target) divided by the total number of true target samples. When the extent parameters of the two-dimensional Gaussian model are enlarged to some degree, background clutter, even flat background, can also be represented sparsely by the Gaussian over-complete dictionary; hence, both PD and PF increase as the extent parameters increase. The ROC plots show that the discriminative over-complete dictionary outperforms the Gaussian over-complete dictionary, i.e., the PD of the former is larger than that of the latter at the same PF. The wavelet algorithm performs worst of the three algorithms on the example images.
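The PD and PF definitions above translate directly into code. The sketch below, with a hypothetical function name, computes one empirical (PF, PD) pair per threshold from per-sample detector scores and ground-truth labels, declaring a sample a target when its score exceeds the threshold.

```python
import numpy as np


def roc_points(scores, labels, thresholds):
    """Empirical (PF, PD) arrays, one entry per threshold.

    scores: detector statistic per pixel/sample (larger = more target-like).
    labels: True for true target samples, False for background samples.
    A sample is declared a target when its score exceeds the threshold.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    n_target = np.count_nonzero(labels)
    n_background = labels.size - n_target
    pf, pd = [], []
    for eta in thresholds:
        declared = scores > eta
        pd.append(np.count_nonzero(declared & labels) / n_target)        # hits / true targets
        pf.append(np.count_nonzero(declared & ~labels) / n_background)  # false alarms / background
    return np.array(pf), np.array(pd)
```

Sweeping the threshold over the observed range of scores, for example with `np.linspace(scores.min(), scores.max(), 50)`, traces an empirical ROC curve of the kind plotted in Figure 17.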

6. Conclusions

This paper proposed an infrared dim target detection approach based on sparse representation over a discriminative over-complete dictionary. This non-structural over-complete dictionary adaptively learns the content of infrared images online and is then divided automatically into a target over-complete dictionary, which describes target signals, and a background over-complete dictionary, which represents background clutter. The discriminative over-complete dictionary not only captures significant features of background clutter and dim targets better than a structural over-complete dictionary, but also strengthens the sparse feature difference between background clutter and target signals more effectively than a discriminative over-complete dictionary learned offline and classified manually. The experimental results show that the proposed approach effectively improves small target detection performance.

When the SNR is lower than one, the target signal is submerged in strong noise, and its shape is corrupted so that it can no longer be represented simply by a Gaussian model. Future work will focus on separating the target and background over-complete dictionaries from the adaptive morphological over-complete dictionary more effectively for diverse target signals and background clutter, even at low SNR. Moreover, detecting targets with the discriminative over-complete dictionary takes about 6.4 s per frame, so the algorithm should be optimized to reduce its computational complexity.

Acknowledgments

This research was supported by the National Natural Science Foundation of China under Grant No. 61071191, the Natural Science Foundation of Chongqing under Grant No. CSTC 2011BB2048, the Fundamental Research Funds for the Central Universities under Grant No. 106112013CDJZR160007, and the China Postdoctoral Science Foundation under Grant No. 2014M550455. We are also grateful to the reviewers for their suggestions.

Author Contributions

Zheng-Zhou Li contributed the conception; Jing Chen and Qian Hou designed the discriminative over-complete dictionary; Hong-Xia Fu and Zhen Dai designed the image reconstruction experiments based on over-complete dictionaries; Gang Jin designed the target detection experiments based on the discriminative over-complete dictionary; and Ru-Zhang Li and Chang-Ju Liu designed the target detection experiments based on wavelets and evaluated the target detection performance. The authors jointly prepared the manuscript.

References

- Liou, R.J.; Azimi-Sadjadi, M.R. Dim target detection using high order correlation method. IEEE Trans. Aerosp. Electron. Syst. 1993, 29, 841–856.

- Grossi, E.; Lops, M.; Venturino, L. A Novel Dynamic Programming Algorithm for Track-Before-Detect in Radar Systems. IEEE Trans. Signal Process. 2013, 61, 2608–2619.

- Grossi, E.; Lops, M. Sequential along-track integration for early detection of moving targets. IEEE Trans. Signal Process. 2008, 56, 3969–3982.

- Orlando, D.; Venturino, L.; Lops, M. Track-before-detect strategies for STAP radars. IEEE Trans. Signal Process. 2010, 58, 933–938.

- Li, Z.Z.; Qi, L.; Li, W.Y.; Jin, G.; Wei, M. Track initiation for dim small moving infrared target based on spatial-temporal hypothesis testing. J. Infrared Millim. Terahertz Waves 2009, 30, 513–525.

- Bai, X.Z.; Zhou, F.G.; Jin, T. Enhancement of dim small target through modified top-hat transformation under the condition of heavy clutter. Signal Process. 2010, 90, 1643–1654.

- Cao, Y.; Liu, R.M.; Yang, J. Small target detection using two-dimensional least mean square (TDLMS) filter based on neighborhood analysis. Int. J. Infrared Millim. Waves 2008, 29, 188–200.

- Wang, T.; Yang, S.Y. Weak and small infrared target automatic detection based on wavelet transform. Intell. Inform. Technol. Appl. 2008, 2008, 609–701.

- Davidson, G.; Griffiths, H.D. Wavelet detection scheme for small targets in sea clutter. Electron. Lett. 2002, 38, 1128–1130.

- Panagopoulos, S.; Soraghan, J.J. Small-target detection in sea clutter. IEEE Trans. Geosci. Remote Sens. 2004, 42, 1355–1361.

- Tae-wulk, B. Small target detection using bilateral filter and temporal cross product in infrared images. Infrared Phys. Technol. 2011, 54, 403–411.

- Bai, X.Z.; Zhou, F.G.; Xie, Y.C.; Jin, T. Enhanced detectability of point target using adaptive morphological clutter elimination by importing the properties of the target region. Signal Process. 2009, 89, 1973–1989.

- Pillai, J.K.; Patel, V.M.; Chellappa, R. Sparsity inspired selection and recognition of iris images. Proceedings of the IEEE 3rd International Conference on Biometrics: Theory, Applications, and Systems, Washington, DC, USA, 28–30 September 2009; pp. 1–6.

- Hang, X.; Wu, F.X. Sparse representation for classification of tumors using gene expression data. J. Biomed. Biotech. 2009.

- Chen, Y.; Nasrabadi, N.M.; Tran, T.D. Sparse representation for target detection in hyperspectral imagery. IEEE J. Sel. Top. Signal Process. 2011, 5, 629–640.

- Zhao, J.J.; Tang, Z.Y.; Yang, J.; Liu, E.-Q.; Zhou, Y. Infrared small target detection based on image sparse representation. J. Infrared Millim. Waves 2011, 30, 156–166.

- Zheng, C.Y.; Li, H. Small infrared target detection based on harmonic and space matrix decomposition. Opt. Eng. 2013, 52, 066401.

- Bi, X.; Chen, X.D.; Zhang, Y.; Liu, B. Image compressed sensing based on wavelet transform in contourlet domain. Signal Process. 2011, 91, 1085–1092.

- Chen, J.; Wang, Y.T.; Wu, H.X. A coded aperture compressive imaging array and its visual detection and tracking algorithms for surveillance systems. Sensors 2012, 12, 14397–14415.

- Donoho, D.L.; Elad, M.; Temlyakov, V.N. Stable recovery of sparse overcomplete representations in the presence of noise. IEEE Trans. Inform. Theory 2006, 52, 6–18.

- Aharon, M.; Elad, M.; Bruckstein, A. K-SVD: An algorithm for designing overcomplete dictionaries for sparse representation. IEEE Trans. Signal Process. 2006, 54, 4311–4322.

- Donoho, D.; Huo, X. Uncertainty principles and ideal atomic decomposition. IEEE Trans. Inform. Theory 2001, 47, 2845–2862.

- Wright, J.; Yang, A.Y.; Ganesh, A.; Sastry, S.S.; Ma, Y. Robust face recognition via sparse representation. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 210–227.

- Elad, M.; Aharon, M. Image denoising via sparse and redundant representations over learned dictionaries. IEEE Trans. Image Process. 2006, 15, 3736–3745.

- Tropp, J.A.; Gilbert, A.C. Signal recovery from random measurements via orthogonal matching pursuit. IEEE Trans. Inform. Theory 2007, 53, 4655–4666.

- Dai, W.; Milenkovic, O. Subspace pursuit for compressive sensing signal reconstruction. IEEE Trans. Inform. Theory 2009, 55, 2230–2249.

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license ( http://creativecommons.org/licenses/by/3.0/)


Li, Z.-Z.; Chen, J.; Hou, Q.; Fu, H.-X.; Dai, Z.; Jin, G.; Li, R.-Z.; Liu, C.-J. Sparse Representation for Infrared Dim Target Detection via a Discriminative Over-Complete Dictionary Learned Online. Sensors 2014, 14, 9451-9470. https://doi.org/10.3390/s140609451
