Cloud-Based Geospatial 3D Image Spaces—A Powerful Urban Model for the Smart City

Abstract

1. Introduction

2. Related Work

- Georeferencing strategies for the RGB-D imagery and the achievable absolute measuring accuracies (Section 5);

- Depth map extraction strategies and the achievable relative measuring accuracies (Section 6);

- The smart exploitation of the new urban model with respect to functionality and ease of use (Section 7).

3. Geospatial 3D Image Spaces

3.1. Concept

- The model shall provide a high-fidelity, metric photographic representation of the urban environment that is easy to interpret and can be augmented with existing or projected GIS data

- The RGB and the depth information shall be spatially and temporally coherent, i.e., the radiometric and the depth observations should ideally be made at exactly the same instant

- The depth information shall be dense, ideally providing a depth value for each pixel of the corresponding RGB image

- Image collections are usually ordered, e.g., in the form of image sequences, for simple navigation and shall be efficiently accessible via spatial data structures

- The model shall support metric imagery with different geometries, e.g., with perspective, panoramic or fisheye projections

- The model shall be easy to use and shall at least support simple, robust and accurate image-based 3D measurements using enhanced 3D monoplotting

- The model shall provide measures to protect privacy
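The requirements for dense, per-pixel depth and for image-based 3D measurement via monoplotting can be illustrated with a minimal sketch. It assumes a distortion-free pinhole (perspective) camera with intrinsic matrix `K`, a depth map storing the depth Z along the optical axis, camera axes x right / y down / z forward, and a known camera-to-world rotation `R_wc` and projection centre `C_w`. The helper names `pixel_to_world` and `measure_distance` are hypothetical and do not describe the authors' implementation; panoramic or fisheye geometries would need a different ray function.

```python
import numpy as np


def pixel_to_world(u, v, depth_map, K, R_wc, C_w):
    """Back-project pixel (u, v) to a 3D world point using its depth value.

    Hypothetical helper, assuming:
      - a distortion-free pinhole camera with 3x3 intrinsics K,
      - depth_map holds depth Z along the optical axis in metres
        (NaN or <= 0 where no depth is available),
      - camera axes x right, y down, z forward,
      - R_wc rotates camera coordinates into the world frame and
        C_w is the projection centre in world coordinates.
    """
    row, col = int(round(v)), int(round(u))  # nearest-pixel depth lookup
    if not (0 <= row < depth_map.shape[0] and 0 <= col < depth_map.shape[1]):
        raise IndexError(f"pixel ({u}, {v}) lies outside the depth map")
    z = depth_map[row, col]
    if not np.isfinite(z) or z <= 0:
        raise ValueError(f"no valid depth at pixel ({u}, {v})")
    # Viewing ray scaled to z = 1: K^-1 [u, v, 1]^T
    ray = np.linalg.solve(K, np.array([u, v, 1.0]))
    X_cam = z * ray  # 3D point in camera coordinates
    return R_wc @ X_cam + np.asarray(C_w, dtype=float)


def measure_distance(p1, p2, depth_map, K, R_wc, C_w):
    """3D distance between two points measured in the same RGB-D image."""
    a = pixel_to_world(*p1, depth_map, K, R_wc, C_w)
    b = pixel_to_world(*p2, depth_map, K, R_wc, C_w)
    return float(np.linalg.norm(b - a))


if __name__ == "__main__":
    # Synthetic example: a fronto-parallel wall 10 m in front of the camera.
    K = np.array([[1000.0, 0.0, 320.0], [0.0, 1000.0, 240.0], [0.0, 0.0, 1.0]])
    depth = np.full((480, 640), 10.0)
    R, C = np.eye(3), np.zeros(3)
    print(pixel_to_world(420, 240, depth, K, R, C))                  # approx. [1, 0, 10]
    print(measure_distance((320, 240), (420, 240), depth, K, R, C))  # approx. 1.0
```

The user only ever clicks in the 2D image; the depth map supplies the third dimension, which is what makes a single RGB-D image sufficient for a 3D measurement.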

3.2. Discussion

4. Implementation and Test Environment

4.1. Data Acquisition System

- A NovAtel SPAN inertial navigation system with a tactical-grade UIMU-LCI inertial measurement unit (IMU) featuring fiber-optic gyros, and an L1/L2 kinematic GNSS antenna

- Up to five stereo camera systems with either 11 MP or Full HD resolution, a typical radiometric resolution of 12 bits and maximum capture rates of 5 fps and 30 fps, respectively

- The stereo systems are mounted on a rigid frame with typical stereo baselines of approx. 1 m

- Typical configurations consist of a main stereo system facing forward and additional stereo systems facing aft, sideways or even pointing downwards at the road surface

- Recent additions include up to two Ladybug 5 multi-head panoramic cameras

- All sensors are synchronized using hardware trigger signals from a custom-built trigger box, which also supports distance-based triggering to ensure uniform image sequences even in busy or congested traffic

- Typical data acquisition speeds range from 30 to 80 km/h, and maximum data volumes are on the order of 1 TB per hour of operation, depending on the acquisition parameters
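The acquisition parameters above bound how densely images can be captured along the street and how precise stereo depth can be. The following back-of-the-envelope sketch uses the speeds, frame rates and approx. 1 m baseline listed above; the function names are hypothetical, and the stereo precision formula assumes a rectified normal-case stereo pair (Z = f·B/d). The focal length of 2000 px and the disparity precision of 0.5 px in the example are illustrative assumptions, not specifications of the system.

```python
def min_trigger_spacing_m(speed_kmh: float, max_fps: float) -> float:
    """Smallest along-track image spacing a camera can sustain at a given speed."""
    return speed_kmh / 3.6 / max_fps


def required_fps(speed_kmh: float, spacing_m: float) -> float:
    """Frame rate needed to release one exposure every `spacing_m` metres."""
    return speed_kmh / 3.6 / spacing_m


def stereo_depth_sigma_m(depth_m: float, focal_px: float,
                         baseline_m: float, sigma_disparity_px: float) -> float:
    """Depth precision of a rectified (normal-case) stereo pair.

    Z = f * B / d, so error propagation gives sigma_Z = Z**2 / (f * B) * sigma_d.
    """
    return depth_m ** 2 * sigma_disparity_px / (focal_px * baseline_m)


if __name__ == "__main__":
    for label, fps in (("11 MP @ 5 fps", 5.0), ("Full HD @ 30 fps", 30.0)):
        for v in (30.0, 80.0):
            print(f"{label}, {v:.0f} km/h: min. spacing "
                  f"{min_trigger_spacing_m(v, fps):.2f} m")
    # Hypothetical 2 m trigger interval at 80 km/h:
    print(f"required rate: {required_fps(80.0, 2.0):.1f} fps")
    # Hypothetical 2000 px focal length, 0.5 px disparity noise, 1 m baseline:
    for z in (5.0, 10.0, 20.0):
        sigma = stereo_depth_sigma_m(z, 2000.0, 1.0, 0.5)
        print(f"Z = {z:.0f} m: sigma_Z = {sigma:.3f} m")
```

At 80 km/h the 11 MP cameras can thus be triggered at most every ≈4.4 m and the Full HD cameras every ≈0.74 m. Because σ_Z grows with the square of the distance and shrinks with the baseline, a wide baseline matters for depth accuracy at street-level distances.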

4.2. Processing Pipeline

4.3. Cloud-Based Management and Web-Based Exploitation System

4.4. Study Area and Data

5. High Accuracy Georeferencing—Strategies and Results

5.1. Motivation and Challenges

- Kinematic acquisition at typical speeds between 30 and 80 km/h;

- Challenging urban environments with generally poor GNSS coverage;

- The need to create such models also in GNSS-denied areas, such as tunnels or buildings;

- The requirement to tie the urban model, i.e., the 3D imagery, to local control points;

- The use of multi-sensor systems with typically more than 10 sensor heads.
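Each of the many sensor heads has to be related to the navigation solution. A common way to express this is the standard direct georeferencing chain: the camera attitude is R_wc = R_nav · R_boresight and the projection centre is C_w = r_nav + R_nav · a_lever, with calibrated lever arm a_lever and boresight rotation per head. The sketch below is a simplified illustration under stated assumptions, not the authors' implementation: it assumes a local Cartesian world frame (ignoring earth curvature, map projection and meridian convergence), a Z-Y-X Euler convention for the INS attitude, and hypothetical helper names and calibration values.

```python
import numpy as np


def rot_zyx(roll: float, pitch: float, yaw: float) -> np.ndarray:
    """Body->world rotation R = Rz(yaw) @ Ry(pitch) @ Rx(roll), angles in radians.

    The Z-Y-X convention is an assumption; it must match the actual INS output.
    """
    cr, sr = np.cos(roll), np.sin(roll)
    cp, sp = np.cos(pitch), np.sin(pitch)
    cy, sy = np.cos(yaw), np.sin(yaw)
    Rx = np.array([[1.0, 0.0, 0.0], [0.0, cr, -sr], [0.0, sr, cr]])
    Ry = np.array([[cp, 0.0, sp], [0.0, 1.0, 0.0], [-sp, 0.0, cp]])
    Rz = np.array([[cy, -sy, 0.0], [sy, cy, 0.0], [0.0, 0.0, 1.0]])
    return Rz @ Ry @ Rx


def camera_pose(r_nav, R_nav, lever_arm_b, R_boresight):
    """Direct georeferencing of one sensor head.

    r_nav       : navigation (IMU) origin in the world frame
    R_nav       : body->world rotation from the GNSS/INS solution
    lever_arm_b : camera projection centre in the body frame (calibrated)
    R_boresight : camera->body rotation (calibrated boresight)
    Returns (R_wc, C_w): camera->world rotation and projection centre.
    """
    R_wc = R_nav @ R_boresight
    C_w = np.asarray(r_nav, dtype=float) + R_nav @ np.asarray(lever_arm_b, dtype=float)
    return R_wc, C_w


def georeference_rig(r_nav, R_nav, calibrations):
    """Apply one GNSS/INS pose to every calibrated sensor head of the rig."""
    return {name: camera_pose(r_nav, R_nav, lever, R_bs)
            for name, (lever, R_bs) in calibrations.items()}


if __name__ == "__main__":
    # Hypothetical calibration of a forward stereo pair (approx. 1 m baseline);
    # identity boresights are used purely for simplicity.
    calib = {
        "front_left": ([1.2, 0.5, 0.3], np.eye(3)),
        "front_right": ([1.2, -0.5, 0.3], np.eye(3)),
    }
    poses = georeference_rig([1000.0, 2000.0, 400.0],
                             rot_zyx(0.0, 0.0, np.radians(90.0)), calib)
    for name, (R_wc, C_w) in poses.items():
        print(name, np.round(C_w, 3))
```

Any error in the GNSS/INS position or attitude propagates directly into every camera pose of the rig, which is why poor GNSS coverage in urban canyons is such a limiting factor for purely direct georeferencing.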

5.2. Direct Georeferencing

5.3. Integrated and Image-Based Georeferencing

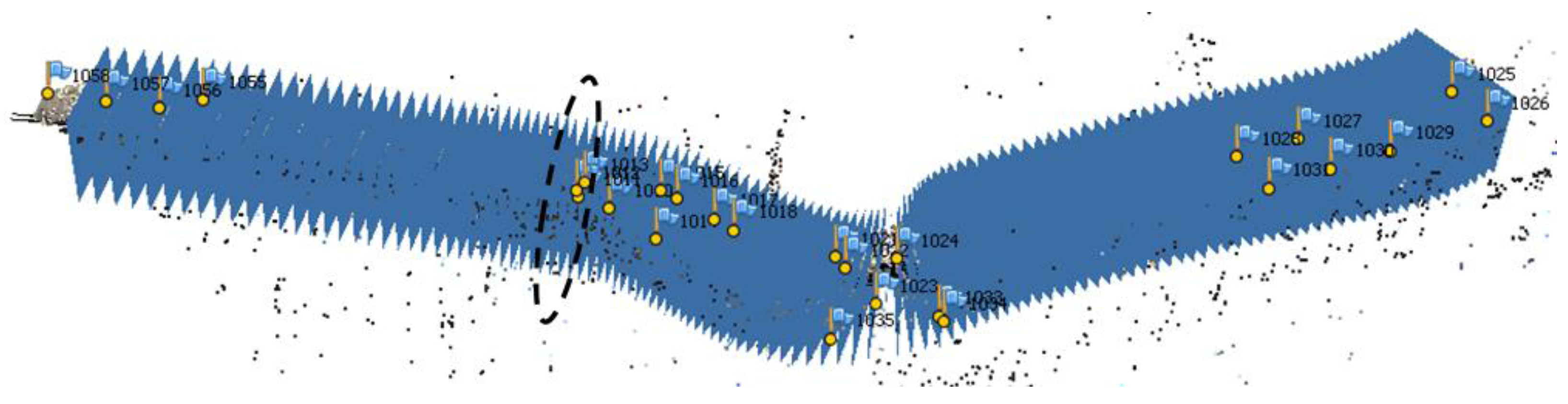

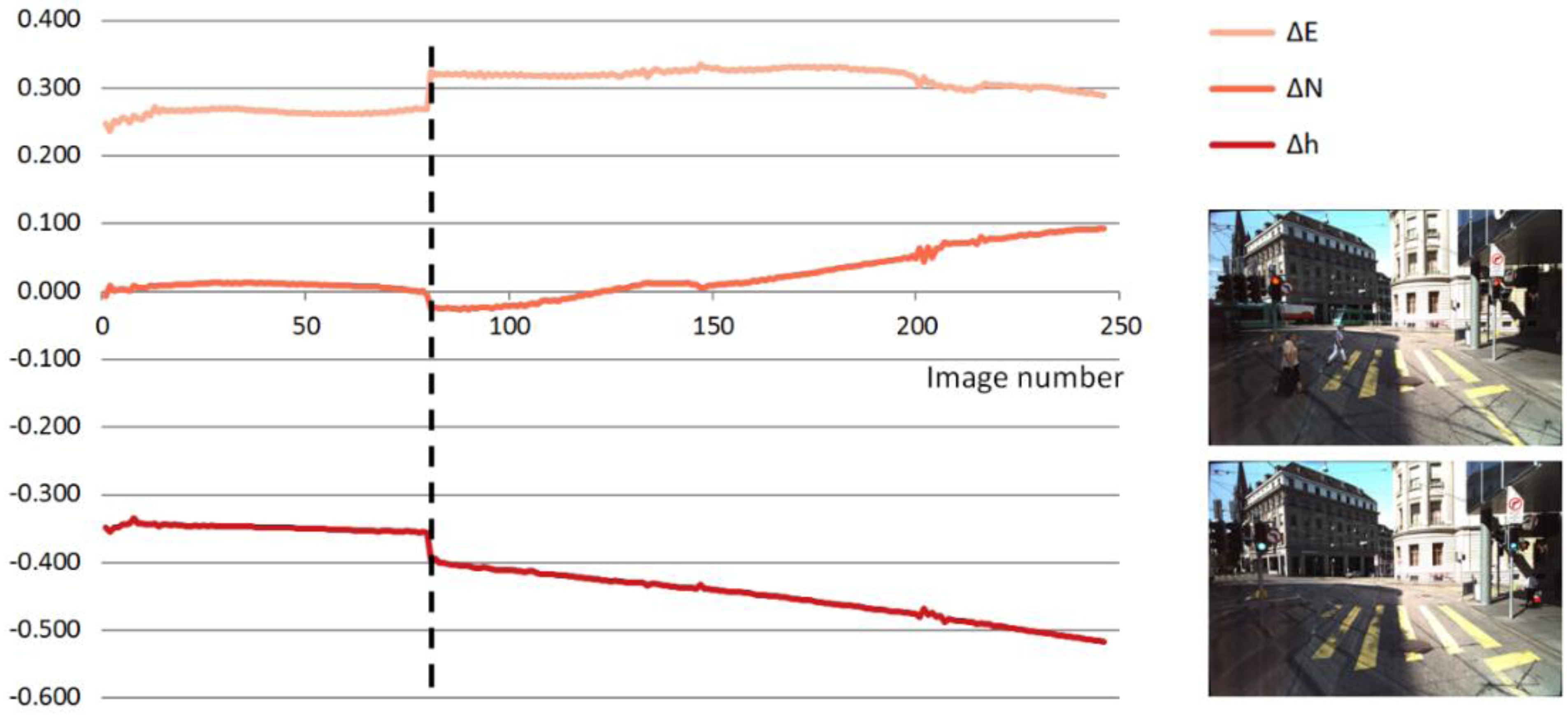

5.4. Experiments and Results

5.5. Discussion

6. Dense Image Matching for Depth Map Extraction—Strategies and Results

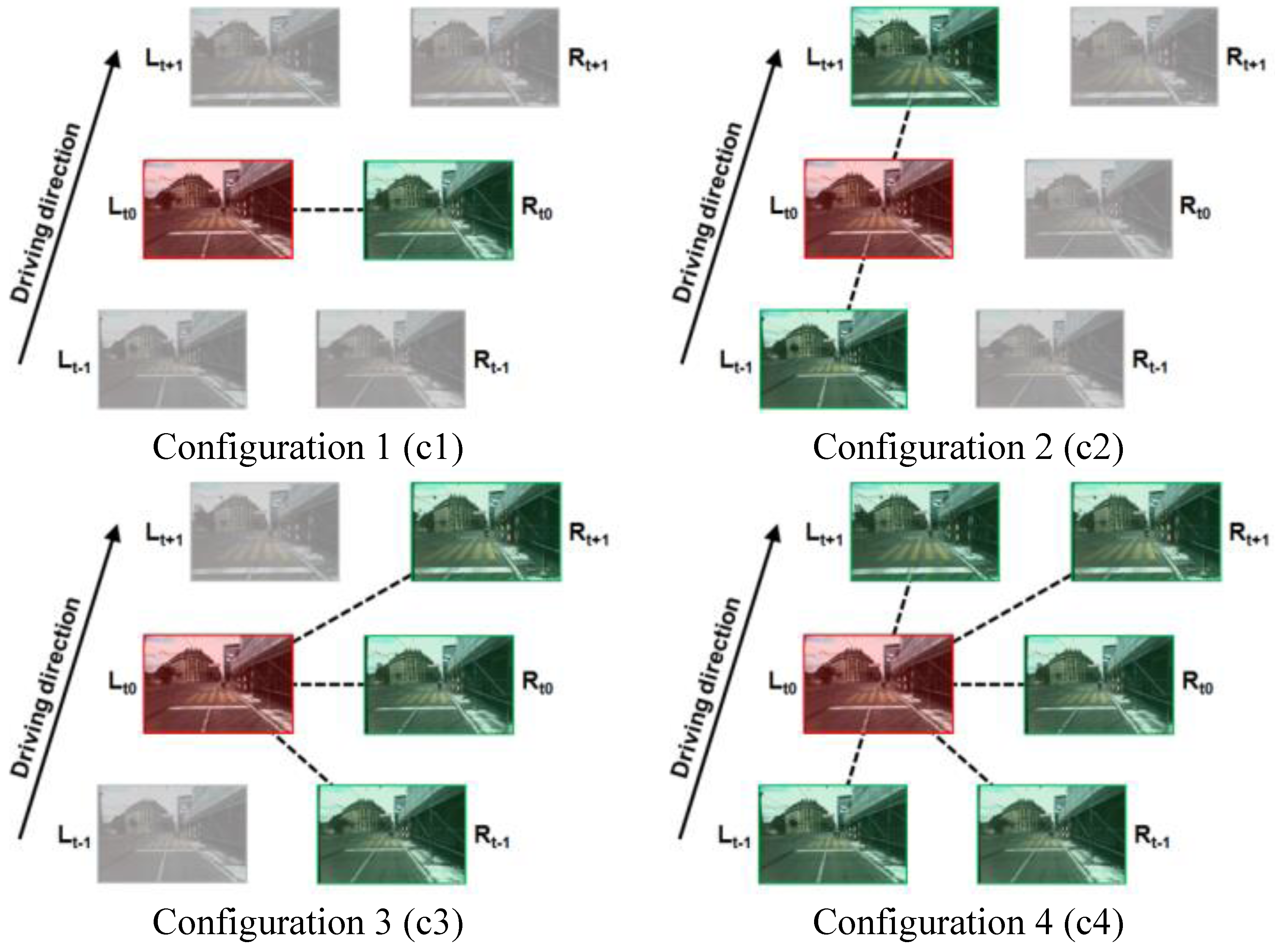

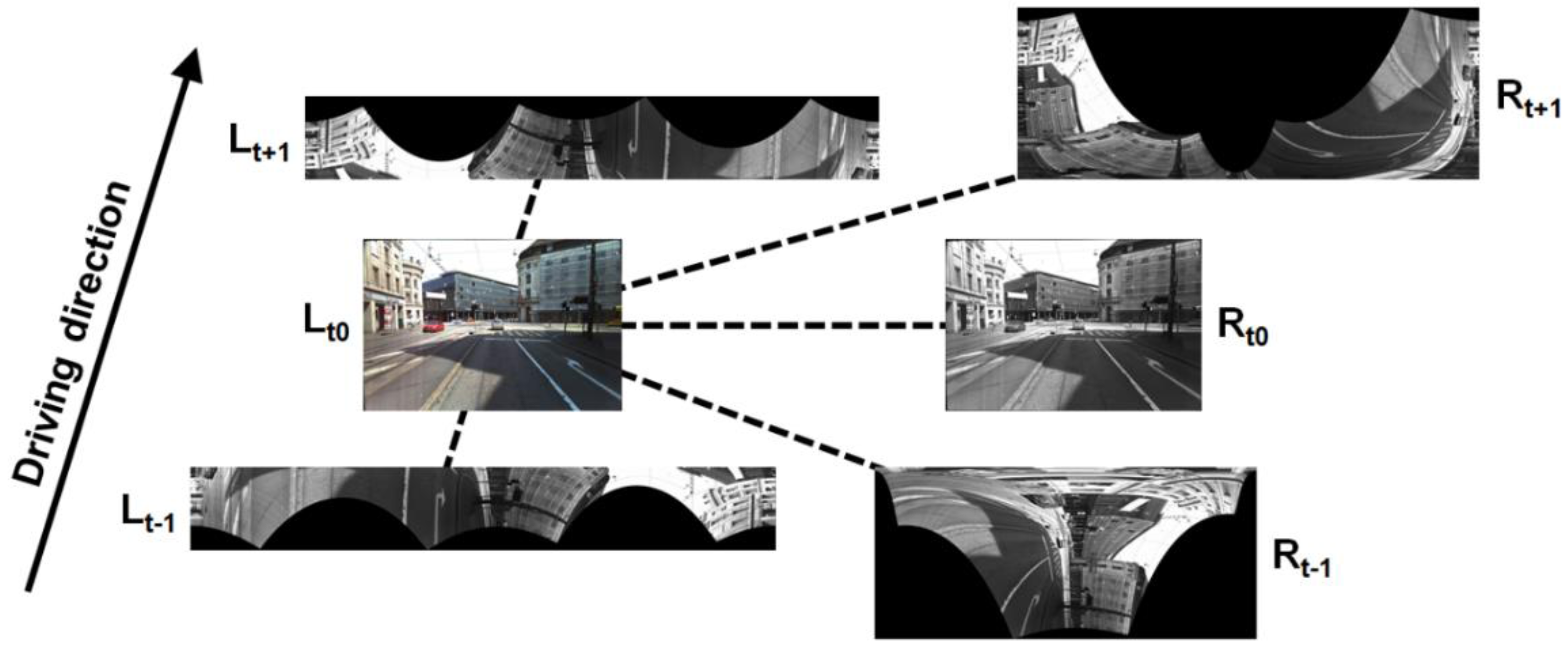

6.1. Matching Approaches and Configurations

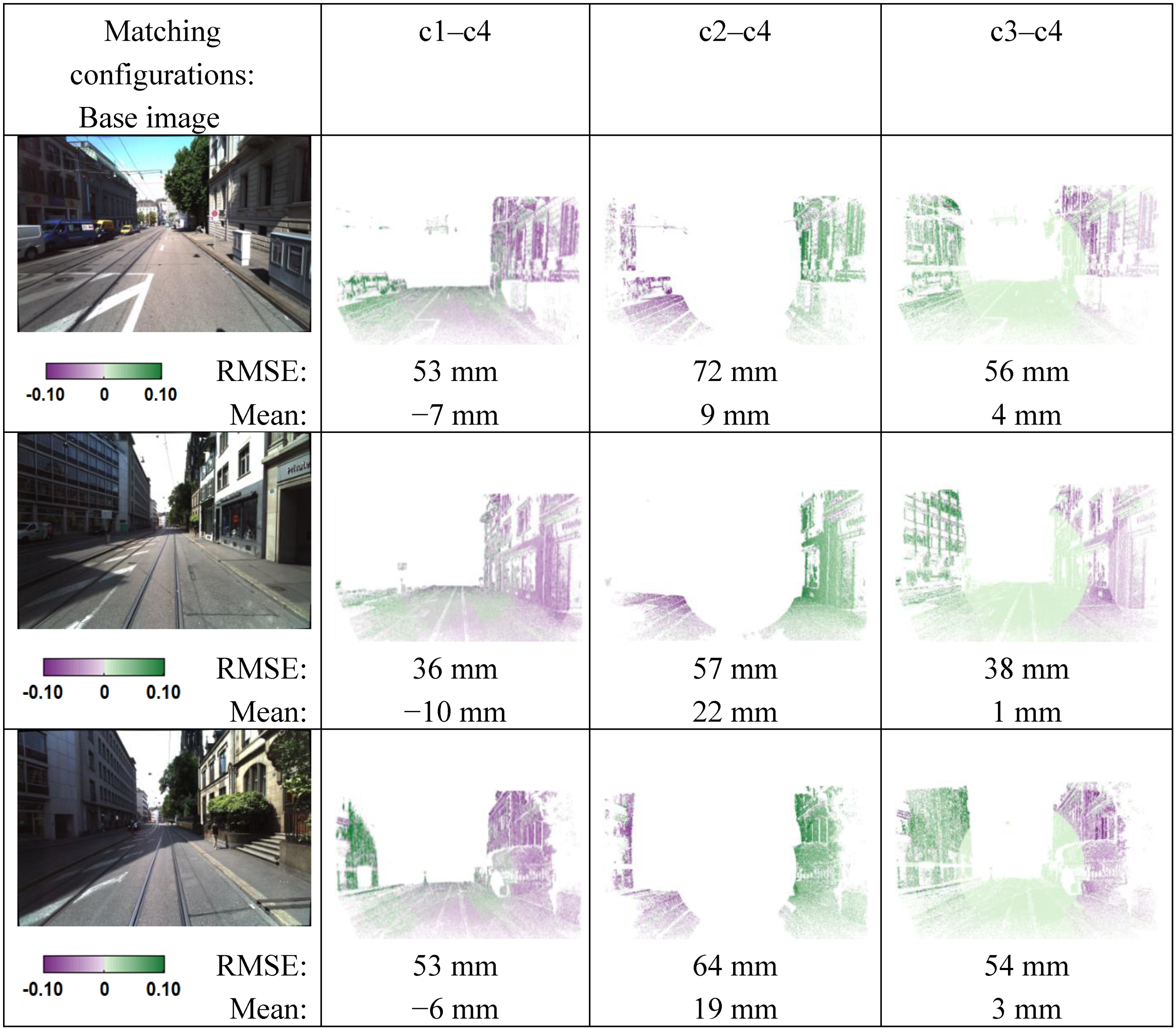

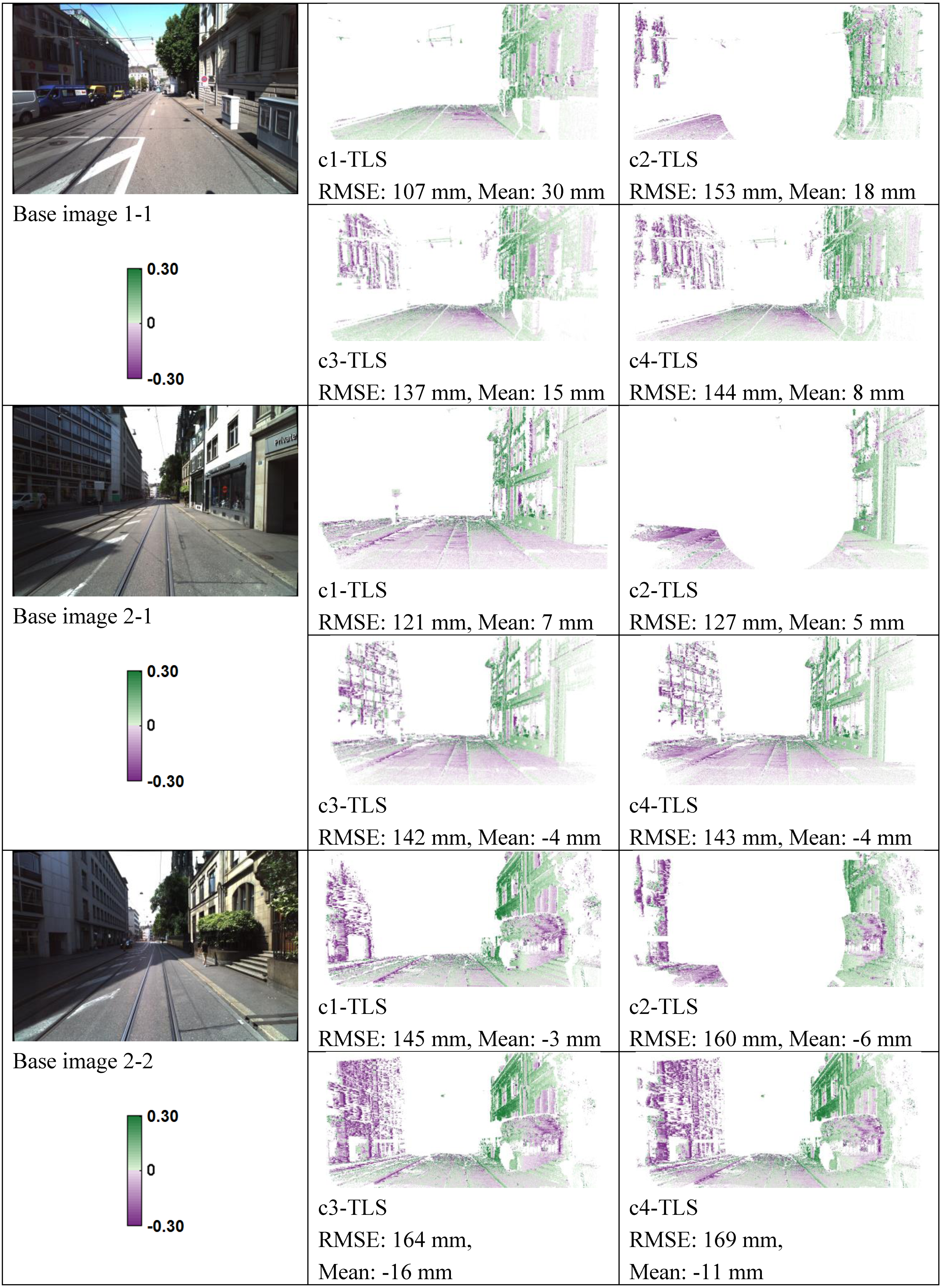

6.2. Experiments and Results

6.3. Discussion

7. Smart Exploitation of Cloud-Based 3D Image Spaces

8. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

© 2015 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/4.0/).

Nebiker, S.; Cavegn, S.; Loesch, B. Cloud-Based Geospatial 3D Image Spaces—A Powerful Urban Model for the Smart City. ISPRS Int. J. Geo-Inf. 2015, 4, 2267-2291. https://doi.org/10.3390/ijgi4042267
