2.1. Design of the Bin-Dog Prototype

To test the proposed concept, a research prototype of the bin-dog robot capable of handling bins in a natural orchard environment is needed. Such a prototype should perform three critical functions: (1) carry a 1.20 m × 1.20 m × 1.00 m fruit bin and travel at a maximum speed of 1.2 m·s−1 within a 2.40 m tree alleyway in typical Pacific Northwest (PNW) high-density tree fruit orchards; (2) carry an empty bin over a full bin lying in the alleyway and place the empty bin at a target location in the harvesting zone; and (3) drive a full bin, weighing up to 400 kg, back from the harvesting zone to the designated bin collecting area outside the alleyway, all in one trip. To do so, the bin-dog prototype uses a “go-over-the-bin” function, which allows it to drive over a full bin between tree rows.

As illustrated in Figure 1, the system manages bins in a five-step process. It can (1) load an empty bin in the collection station and drive it into an alleyway until reaching the full bin, (2) lift the empty bin and drive over the full bin, (3) continue to the target spot and place the empty bin, (4) drive back to load the full bin, and (5) drive the loaded full bin out of the alleyway to the collection station. By combining two trips into one, such a process has the potential to greatly reduce the travel distance and time, significantly improving overall efficiency.

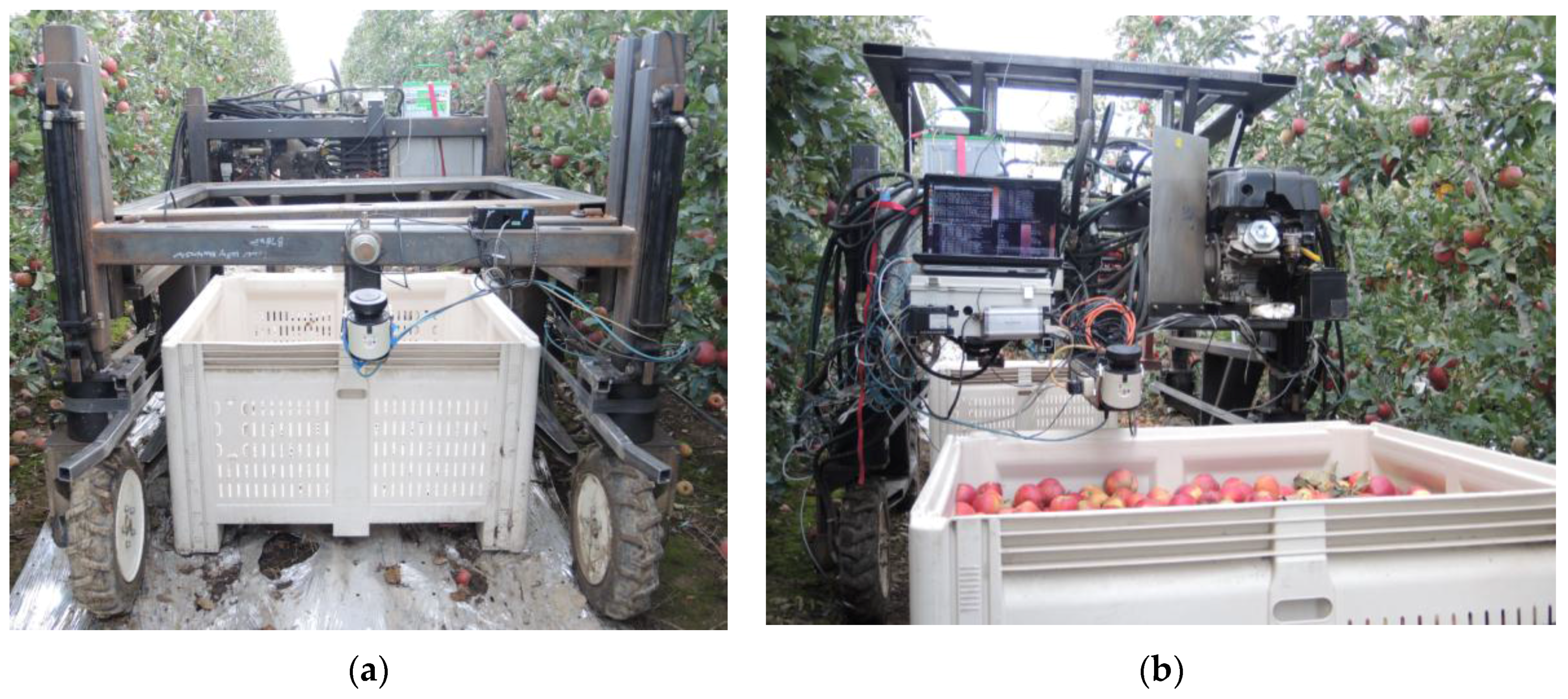

To validate the concept and usability of the proposed bin-dog system in a natural orchard environment, a research prototype (Figure 2) is designed and fabricated [26].

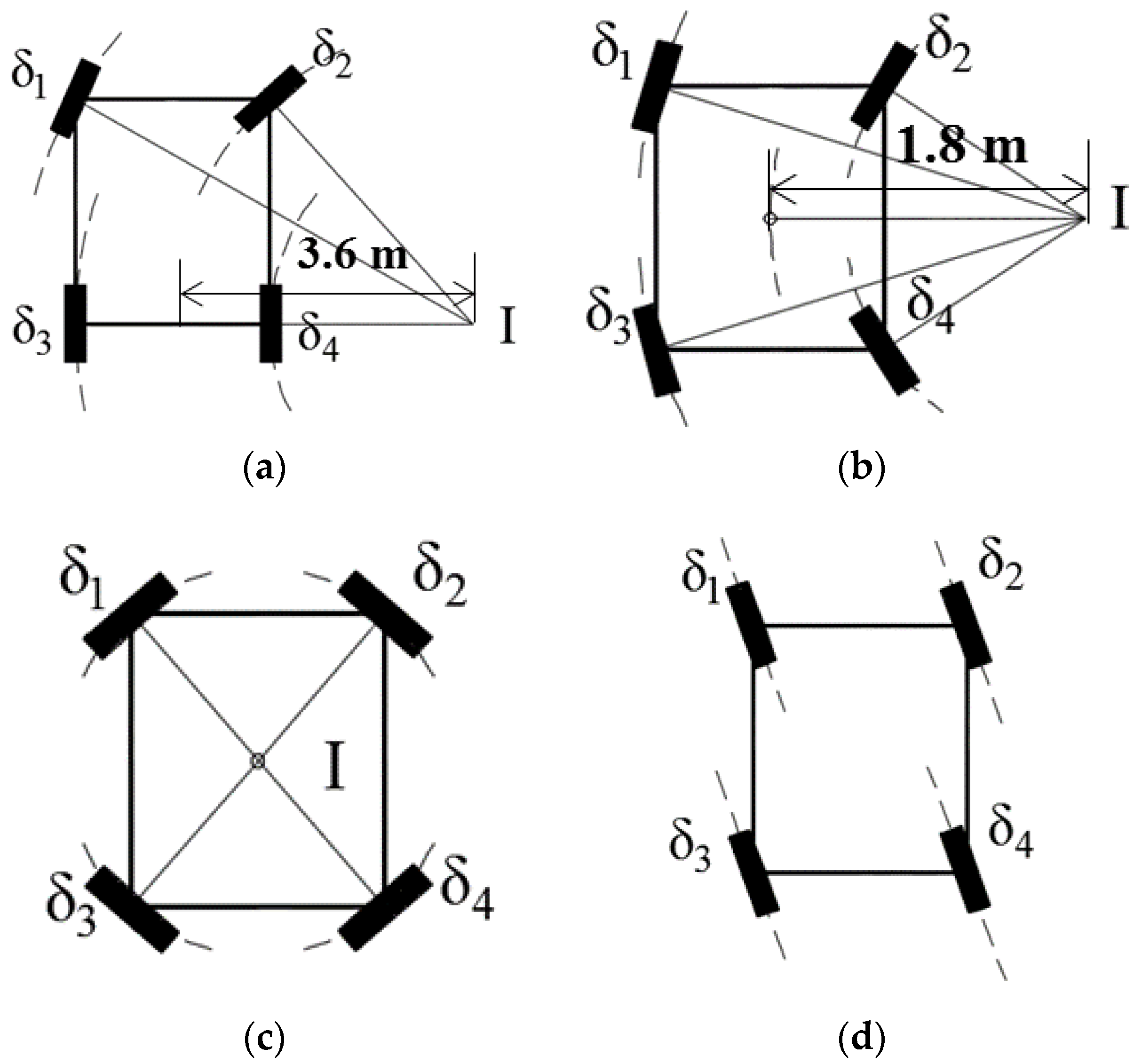

This research prototype is built with two main components: the driving and steering system, and the bin-loading system. A hydraulic system is used to actuate both systems. The hydraulic system is powered using a 9.7 kW gas engine (LF188F-BDQ, LIFAN Power, Chongqing, China) and operated under a pressure of 10.3 MPa. The research prototype uses a four-wheel-independent-steering (4WIS) system to obtain the effective and accurate maneuverability needed to guide the platform in the confined space of a natural orchard environment. It uses four low-speed high-torque hydraulic motors (TL series, Parker Hannifin, Cleveland, OH, USA) and four hydraulic rotary actuators (L10-9.5, HELAC, Enumclaw, WA, USA), which are controlled by eight bidirectional proportional hydraulic valves (Series VPL, Parker Hannifin, Cleveland, OH, USA) to achieve such a steering system. Four optical incremental encoders (TR2 TRU-TRAC, Encoder Products Company, Sagle, ID, USA) are used to measure the rotational speed of each wheel, and the steering angles are measured by four absolute encoders (TRD-NA720NWD, Koyo Electronics Industries Co. LTD, Tokyo, Japan). To obtain the most effective maneuverability within the confined working space of an orchard, the bin-dog uses four common steering modes: Ackermann steering, front-and-rear-steering (FRS), spinning, and crab steering, as illustrated in Figure 3. To avoid excessive mechanical stress caused by large steering angles, the minimum turning radii of the Ackermann steering and the FRS are set at 1.8 m and 3.6 m, respectively. The maximum vehicle speed (both forward and backward) is 1.2 m·s−1, and the maximum steering speed of a wheel is 30°·s−1.

The prototype uses a forklift-type bin loading system, operated by a hydraulically actuated scissors-structured lifting mechanism, to gain effective and reliable operation. Based on this design, two cylinders (HTR 2016, Hercules, Clearwater, FL, USA) are symmetrically installed on both sides of the scissors structure, and a flow divider (YGCBXANAI, Sun Hydraulics, Sarasota, FL, USA) is used to ensure that the two cylinders extend and retract at the same pace for smooth bin-loading motion. The actions of the cylinders are controlled using a solenoid-operated directional control valve (SWH series, Northman Fluid Power, Glendale Heights, IL, USA). A touch switch is also installed on the fork to determine whether a bin is loaded on the fork. A string potentiometer is installed on the hydraulic cylinder to measure the fork lift height. The bin-loading system allows the bin-dog to handle loads up to 500 kg. It takes about 7 s to lift a bin from the ground to the highest position (1.5 m) and 5 s to lower it.

With the aforementioned mechanical subsystems, the prototype achieves its basic functionalities: driving and steering with the 4WIS system, and loading and unloading bins.

2.2. Control and Navigation System of the Bin-Dog

To automatically complete the designated bin-managing process described in Figure 1, the control and navigation system of the bin-dog should be able to safely navigate in a headland and between tree rows, and identify and locate fruit bins in the alleyways. As stated in the introduction, this article focuses on reporting the development of the platform for a novel bin-dog system. The control and navigation algorithms developed for maneuvering the bin-dog are distinct contributions, which are applicable to general autonomous in-orchard platforms, and have been reported in more detail in separate articles [27,28]. As a brief description, the newly-developed control and navigation system has two subsystems: a low-level control system for realizing driving and steering with 4WIS, and a multi-sensor based navigation system for localization, object detection, task scheduling and navigation in orchard. The two subsystems are briefly introduced in the following sections.

2.2.1. Low-Level Driving and Steering Control System

As illustrated by Figure 4, the low-level control system is implemented in a microcontroller unit (MCU) (Arduino Mega, Arduino, Scarmagno, Italy) and is responsible for the control of all the actuators on the bin-dog. The bin-dog has two work modes: automatic and manual. When it is in automatic mode, the low-level control system receives commands from the navigation system, which is implemented on a laptop. The commands include vehicle speed and angle, steering mode, and desired fork height. When it is in manual mode, the same commands are sent from a control pad through radio frequency (RF) modules. A wheel coordination module is used to calculate the desired speed and angle of each wheel based on these commands. Afterward, the generated control signals are sent to a multi-channel valve driver (MC2, Parker Hannifin, Cleveland, OH, USA) through CAN bus to achieve the desired actions.
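To make the role of the wheel coordination module more concrete, the following Python sketch maps a steering mode and a commanded steering angle to four wheel angles by placing an instantaneous center of rotation (ICR) for each mode. It is an illustration only, not the authors' implementation: the wheelbase and track values, the function names, the mode strings, and the sign convention (x forward, y left, positive angles to the left) are all assumptions.

```python
import math

# Hypothetical geometry in metres; the text does not give these values.
WHEELBASE = 1.6
TRACK = 1.4

# Wheel positions (x forward, y left) relative to the geometric center.
WHEELS = {
    "front_left": (WHEELBASE / 2, TRACK / 2),
    "front_right": (WHEELBASE / 2, -TRACK / 2),
    "rear_left": (-WHEELBASE / 2, TRACK / 2),
    "rear_right": (-WHEELBASE / 2, -TRACK / 2),
}


def _angle_about(icr, wheel):
    """Steering angle (rad) that lets a wheel roll tangentially around icr."""
    dx, dy = wheel[0] - icr[0], wheel[1] - icr[1]
    angle = math.atan2(dx, -dy)  # direction perpendicular to the radius
    # Fold into (-pi/2, pi/2], since a wheel can roll in either direction.
    if angle > math.pi / 2:
        angle -= math.pi
    elif angle <= -math.pi / 2:
        angle += math.pi
    return angle


def wheel_angles(mode, steer_angle):
    """Return a dict of wheel name -> steering angle (rad) for one command."""
    if mode == "crab":
        # All wheels point the same way, so the platform translates sideways.
        return {name: steer_angle for name in WHEELS}
    if mode == "spinning":
        icr = (0.0, 0.0)  # rotate about the geometric center
    elif abs(steer_angle) < 1e-9:
        return {name: 0.0 for name in WHEELS}  # straight ahead
    elif mode == "ackermann":
        # ICR lies on the rear-axle line; rear wheels stay straight.
        icr = (-WHEELBASE / 2, WHEELBASE / math.tan(steer_angle))
    elif mode == "frs":
        # ICR lies on the lateral axis through the center; rear mirrors front.
        icr = (0.0, (WHEELBASE / 2) / math.tan(steer_angle))
    else:
        raise ValueError(f"unknown steering mode: {mode}")
    return {name: _angle_about(icr, pos) for name, pos in WHEELS.items()}
```

For example, `wheel_angles("ackermann", math.radians(20))` leaves both rear wheels at zero and gives the inner front wheel a larger angle than the outer one, while `wheel_angles("frs", ...)` returns rear angles that mirror the front ones.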

2.2.2. Navigation System

The navigation system consists of four basic modules: localization, object detection, task schedule, and navigation. The localization module determines the position of the bin-dog in open space (headland) and GPS-denied environments (tree alleyway). The object detection module allows the system to identify and locate objects, including tree rows and fruit bins. The task schedule module arranges the sequences of actions to complete different bin-managing tasks such as headland turning, straight line tracking between tree rows, and “go-over-the-bin.” The Robot Operating System (ROS) [29] is used to run the main navigation program and to collect and process the sensor data. Because the sensors in our system differ in data type and device interface, as shown in Figure 5, we use two sensor topics (data transmission channels in ROS), “/scan” and “/sensor”, to collect perception data. Sensors, including GPS, a bin-loading sensor, and a fork height sensor, are first connected to the MCU. The sensed data are wrapped into a self-defined data format before being sent to the ROS system. The topic “/scan” reads the data from Light Detection and Ranging (LIDAR) sensors, and the topic “/sensor” reads the wrapped data from the MCU. These data are further processed in the “navigation node” to generate driving-control commands. Calculated control commands from the navigation system are then passed (through a serial topic named “/command”) to the MCU used in the low-level control system. These commands are sent at a rate of 20 Hz.

2.2.3. Localization

When operating in open space, global sensors, such as GPS, can provide high-accuracy position coordinates. However, when operating between tree rows, GPS sensors could experience dropouts due to interference from the tree canopy. Therefore, in this study, an RTK GPS (AgGPS 432, Trimble, Sunnyvale, CA, USA), two laser scanners (LMS111, SICK, Waldkirch, Germany), and an inertial measurement unit (IMU) (AHARS-30, Xsens, Enschede, The Netherlands) are used as perception sensors for the bin-dog. As illustrated in Figure 6, the two laser scanners are installed at the front and rear of the bin-dog, respectively. Both laser scanners are mounted at a 20° angle, looking 5.00 m in front of them. These laser scanners allow the bin-dog to perform navigation tasks while driving forward or backward in the alleyways. They have a sample rate of 25 Hz and their data form the point cloud used to achieve real-time perception.

Localization in the headland is achieved by calculating the global position of the bin-dog and its heading angle. The RTK GPS has sub-inch accuracy and a 10 Hz sample frequency, and is used as the global sensor to acquire the global position in the headland. The yaw angle from the IMU is used as the heading angle. A GPS-based navigation system [27] is used to guide the bin-dog to track a predefined path in the headland. The pure pursuit method (Figure 7) is used to calculate steering angles for both Ackermann and FRS steering.

In the figures, $g_x$ and $g_y$ are the coordinates of the navigation target point, $l_d$ is the look-ahead distance, $\delta_c$ is the steering angle, and $e_{l_d}$ is the offset of the target point. The pure pursuit method navigates the control point (located at the middle of two rear wheels for Ackermann steering and the geometry center for FRS) to the target point using a circular arc. The target point is found by searching for a point on a predicted path with a distance of $l_d$ to the control point. The steering angles for Ackermann and FRS steering determined by the pure pursuit method can be calculated using the following equations:
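For reference, the standard pure pursuit geometry can be sketched in Python as follows. This is the generic textbook form, not necessarily the exact equations used for the bin-dog. It assumes that the target point is expressed in the vehicle frame at the control point (x forward, y left), so that the lateral offset equals the y coordinate, and it introduces a `wheelbase` parameter that is not defined in the text. The arc through the control point and the target has curvature 2e/l_d², where e is the lateral offset. The Ackermann angle is then atan(L·κ), and for FRS, where front and rear wheels steer symmetrically about the center, the front angle is atan(L·κ/2).

```python
import math


def pure_pursuit_angle(gx, gy, wheelbase, mode="ackermann"):
    """Steering angle (rad) that drives the control point to (gx, gy) on an arc.

    gx, gy    : target point in the vehicle frame (assumed convention)
    wheelbase : distance between front and rear axles (assumed parameter)
    mode      : "ackermann" (control point at rear-axle midpoint) or
                "frs" (control point at geometric center, rear mirrors front)
    """
    ld_sq = gx * gx + gy * gy  # squared look-ahead distance
    if ld_sq == 0.0:
        raise ValueError("target point coincides with the control point")
    curvature = 2.0 * gy / ld_sq  # curvature of the arc through the target
    if mode == "ackermann":
        return math.atan(wheelbase * curvature)
    if mode == "frs":
        return math.atan(0.5 * wheelbase * curvature)
    raise ValueError(f"unknown steering mode: {mode}")
```

A target straight ahead yields a zero steering angle, and for the same target the FRS front angle is smaller than the Ackermann angle because the rear wheels contribute an equal and opposite angle.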

As the global position of the bin-dog in the alleyway is not available, its localization in the alleyway is achieved by calculating its offset and heading angle relative to the alleyway centerline. In this study, the relative pose of the laser scanner for navigation (front laser scanner if driving forward and rear laser scanner if backward) is used as the pose of the bin-dog. This can be calculated using the equations below:

where $D_l$ and $D_r$ denote the distances from the laser scanner to the left and right tree rows, and $A_l$ and $A_r$ denote the angles between the bin-dog centerline and the tree rows on both sides.
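One plausible way to combine these four quantities into a relative pose is sketched below in Python. The averaging form and the sign convention (positive offset when the scanner is to the right of the centerline) are assumptions made for illustration, and the function and field names are hypothetical. The authors' exact equations may differ.

```python
from collections import namedtuple

# Hypothetical container for the relative pose of the scanner in the alleyway.
AlleyPose = namedtuple("AlleyPose", ["offset", "heading"])


def alleyway_pose(d_left, d_right, a_left, a_right):
    """Estimate offset (m) and heading (rad) relative to the alleyway centerline.

    d_left, d_right : perpendicular distances to the left and right tree rows
    a_left, a_right : angles between the vehicle centerline and each tree row
    """
    # Assumed convention: being closer to the right row gives a positive offset.
    offset = 0.5 * (d_left - d_right)
    # Averaging the two row angles reduces the effect of noise in either row.
    heading = 0.5 * (a_left + a_right)
    return AlleyPose(offset, heading)
```

With equal distances and zero angles on both sides, the sketch returns a zero offset and a zero heading, which corresponds to the scanner sitting on the centerline and pointing along the alleyway.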

To find the accuracy of the pose computation, a perception experiment was conducted. In this experiment, the bin-dog stopped in an alleyway with different poses, and point clouds from the front laser scanner were used to calculate perceived poses. Table 1 shows the perceived poses and corresponding ground truths (manually measured separately by the researchers). The bin-dog was manually controlled to position it at each initial pose and the average value and root mean square errors (RMSEs) of heading angle and offset were calculated over a sequence of 100 readings from the front laser scanner. As can be seen, the differences between the perceived values and the ground truths were small (angle error less than 1.0° and distance error less than 0.05 m). Additionally, the RMSEs were almost identical to the average deviations, showing that the perception was stable and robust despite the noisy environment. Even though this was a static measurement, the results are non-trivial, as the branches and leaves were actually moving with wind. This shows that the localization in the alleyway is stable and capable of eliminating the effects of a noisy environment.
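The two statistics reported for each pose can be computed as in the short Python sketch below. The function name is hypothetical, and any readings passed to it in an example are made up rather than taken from Table 1.

```python
import math


def mean_and_rmse(readings, ground_truth):
    """Return the mean of the readings and their RMSE against the ground truth."""
    n = len(readings)
    if n == 0:
        raise ValueError("at least one reading is required")
    mean = sum(readings) / n
    # RMSE measures the typical deviation of single readings from the truth.
    rmse = math.sqrt(sum((r - ground_truth) ** 2 for r in readings) / n)
    return mean, rmse
```

Because the squared RMSE equals the squared mean deviation plus the variance of the readings, an RMSE that is close to the absolute mean deviation implies that the readings themselves vary little from one scan to the next.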

2.2.4. Object of Interest Detection

During bin management, the bin-dog needs to frequently interact with its surrounding environment to perform corresponding actions. For example, it needs to steer into a targeted tree alleyway from the headland and to align itself with a bin when the bin is detected. To achieve this goal, we have built outline models for the objects. In our experiments at the orchard, there are two types of objects to be identified: the tree rows and the bins. The implementation of this method is supported by the laser scanners installed on the bin-dog. Because the laser scanner senses each object of interest along a planar slice of its 3D shape, detection reduces to identifying linear boundaries.

Figure 8 shows the flow diagram of the object detection algorithm. While identifying target objects, point clouds captured by the laser scanners (dataset) are sampled and streamed to the classification part (structure classification), where local structures are predicted. Local structures are then integrated to fit a model object. For example, if we are identifying a straight line, point clouds are used to first construct smaller line segments during structure classification. Then we integrate the line segments to generate a longer line model. Once the detection is complete, we are able to compute the relative poses of identified objects (such as distance and angle). How the system works is explained below.

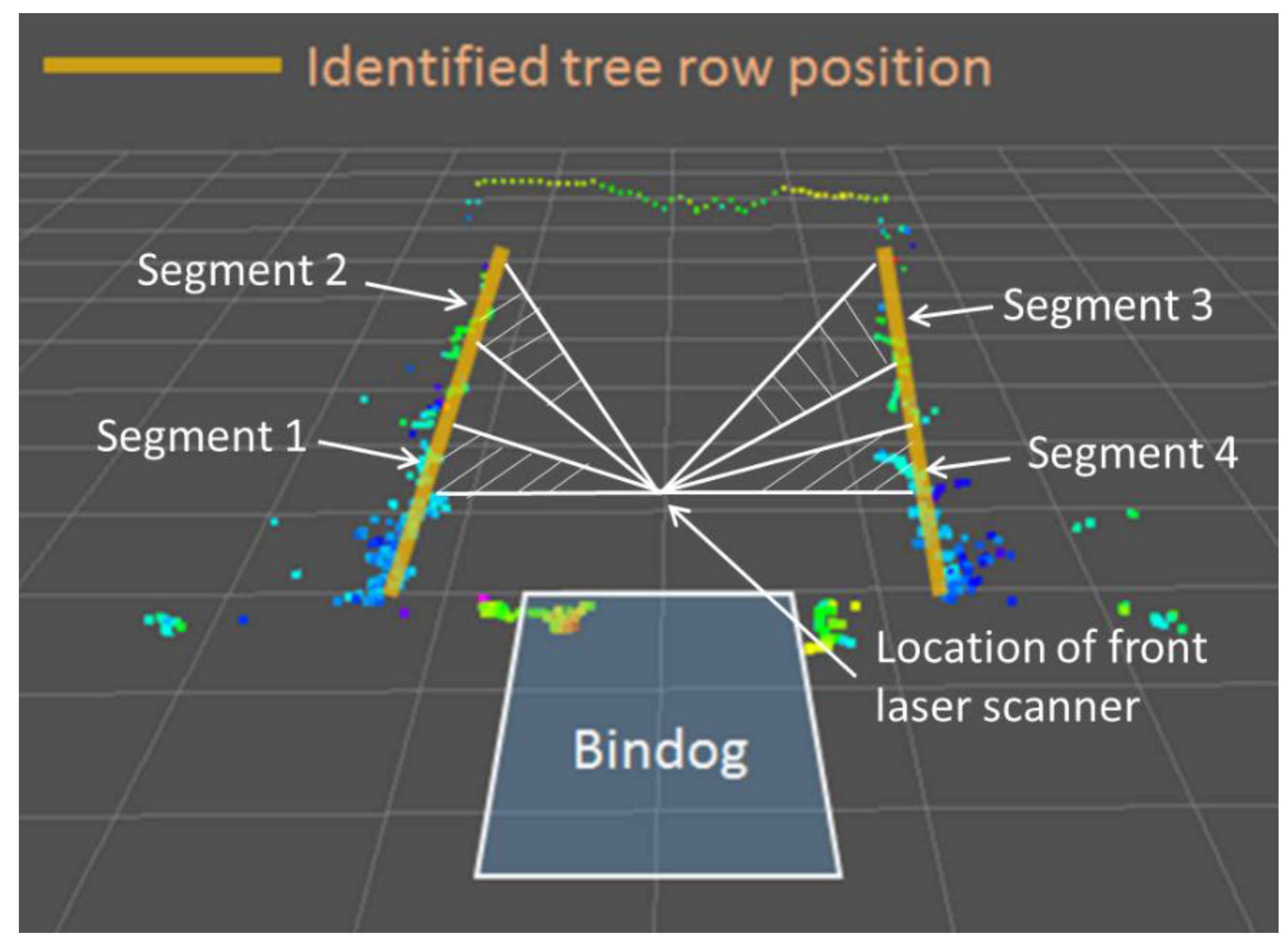

Tree row: Tree rows are important references for navigation when the bin-dog is working between them. As mentioned, in modern apple orchards, trees are planted in straight rows. Therefore, the point clouds of tree rows can be modeled as straight lines. To calculate the tree row model, we first select two sampling segments for a tree row (Figure 9). In each segment, we calculate the angle and distance of the line model formed by a pair of point clouds. The arithmetic means of the angles and distances of the line models in a segment are used to represent the line model of the segment. Then, we calculate the line model of the tree row as the arithmetic mean of the line models of the two segments. Figure 9 illustrates the point clouds captured by a laser scan when the bin-dog is driving between tree rows. Through the model identification algorithm described above, we compute the relative angle (line slope) and distance (line bias) of the tree row, from which we estimate the tree row position.
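The averaging scheme described for tree rows can be sketched in Python as follows. This is a minimal illustration under stated assumptions: points are given in the scanner frame (x forward, y left), every pair of points within a segment is used to form a line, and the distance is the perpendicular distance from the scanner origin to that line. The function names and the pairing strategy are hypothetical and may differ from the authors' implementation.

```python
import math
from itertools import combinations


def pair_line(p, q):
    """Angle (rad) and distance (m) of the line through points p and q."""
    dx, dy = q[0] - p[0], q[1] - p[1]
    angle = math.atan2(dy, dx)  # slope of the line relative to the x axis
    # Perpendicular distance from the origin (scanner) to the line.
    distance = abs(p[0] * q[1] - q[0] * p[1]) / math.hypot(dx, dy)
    return angle, distance


def segment_model(points):
    """Mean angle and mean distance over all point pairs in one segment."""
    pts = sorted(points)  # order by x so each pair points forward
    lines = [pair_line(p, q) for p, q in combinations(pts, 2) if p != q]
    if not lines:
        raise ValueError("a segment needs at least two distinct points")
    n = len(lines)
    return sum(a for a, _ in lines) / n, sum(d for _, d in lines) / n


def tree_row_model(segment_a, segment_b):
    """Line model of a tree row as the mean of two segment models."""
    (a1, d1), (a2, d2) = segment_model(segment_a), segment_model(segment_b)
    return (a1 + a2) / 2, (d1 + d2) / 2
```

For points that lie exactly on a row parallel to the vehicle centerline and 1.2 m to its left, the sketch returns an angle of zero and a distance of 1.2 m. With real canopy returns, the averaging over pairs and segments smooths out the scatter of individual points.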

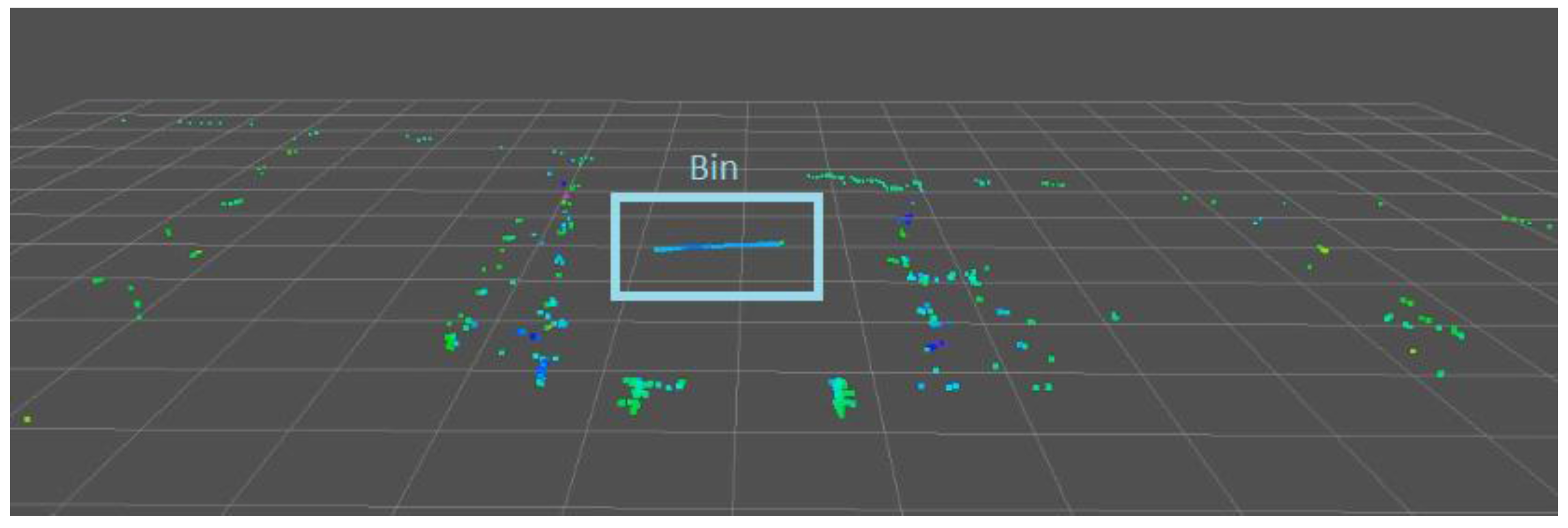

Bin: A typical fruit bin used during apple harvesting has a size of 1.20 m × 1.20 m × 1.00 m (width × length × height). The spacing between the two front wheels of the bin-dog is 1.40 m, which leaves only 0.10 m of clearance on each side of a centered bin. Therefore, to successfully load a bin on the fork, it is important to precisely detect and locate the bin.

Figure 10 shows the point clouds of a bin in an alleyway. As can be seen, the point clouds of the front side of a target bin (highlighted by a rectangle) form a horizontal straight line and contain little noise, which is in stark contrast to that of the tree rows. A straight line model is used to fit the perceived point clouds, which could represent the location of the bin. The RMSE of point clouds relative to the model has an order of magnitude of $10^{-2}$ m, which is unique compared to the tree rows ($10^{-1}$ m). Therefore, the algorithm can obtain highly accurate estimates of the relative position of the bin. Through the model identification algorithm shown above, we compute the relative angle (line slope) and distance (line bias), upon which we estimate bin positions.
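The difference in fit quality between a bin face and a tree row suggests a simple discriminator, sketched below in Python. The sketch uses a total least squares line fit, for which the RMSE of the perpendicular residuals is the square root of the smaller eigenvalue of the 2 × 2 covariance matrix of the points. Total least squares is a choice made here for illustration, and the 0.03 m threshold and the function names are assumptions, not values from the study.

```python
import math


def line_fit_rmse(points):
    """RMSE (m) of perpendicular residuals for a total least squares line fit."""
    n = len(points)
    if n < 2:
        raise ValueError("at least two points are required")
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    # Smaller eigenvalue of [[sxx, sxy], [sxy, syy]] is the residual variance.
    lam_min = 0.5 * (sxx + syy) - math.hypot(0.5 * (sxx - syy), sxy)
    return math.sqrt(max(lam_min, 0.0))  # guard against tiny negative round-off


def looks_like_bin_face(points, rmse_threshold=0.03):
    """True if the points fit a line tightly enough to be a bin face.

    rmse_threshold is a hypothetical value chosen between the reported
    orders of magnitude for bins (1e-2 m) and tree rows (1e-1 m).
    """
    return line_fit_rmse(points) < rmse_threshold
```

A cluster of points that lies on a straight edge passes the check, while a scattered cluster with residuals on the order of 0.1 m, as reported for tree rows, does not.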

2.2.5. Task Schedule

The entire bin-managing process needs to be completed in different environments through performing various actions in the correct order. Therefore, the task schedule module is used to determine the sequence of actions and specify the sensors and control strategy used in a task based on its environment. The tasks that need to be completed in the bin-managing process mainly include headland turning, straight-line tracking between tree rows, and “go-over-the-bin,” which are specified below. While Zhang [28] described the detailed algorithm development and validation results for task scheduling, a brief explanation on how this system works is provided as follows:

Headland turning: Headland turning is primarily performed to transition the bin-dog from the headland into the alleyways. Both the GPS and laser scanner-based navigation systems are used in this task. The GPS-based navigation is used to localize the bin-dog and track a predefined path that leads to the entrance of a targeted alleyway. Once the bin-dog reaches the marked location for the entrance and its heading angle has been adjusted towards the alleyway, the laser-based navigation is then used to guide it to enter the alleyway and stay on the alleyway centerline.

Straight-line tracking between tree rows: The task of straight-line tracking allows the bin-dog to navigate between tree rows without collision with tree rows. Therefore, in this task, the bin-dog needs to stay on the alleyway centerline and maintain a small pose error. The pose errors of the bin-dog in this task include lateral error (Δy) and the orientation error (Δψ) (Figure 11). They are used to calculate the steering angle for the low-level control system.

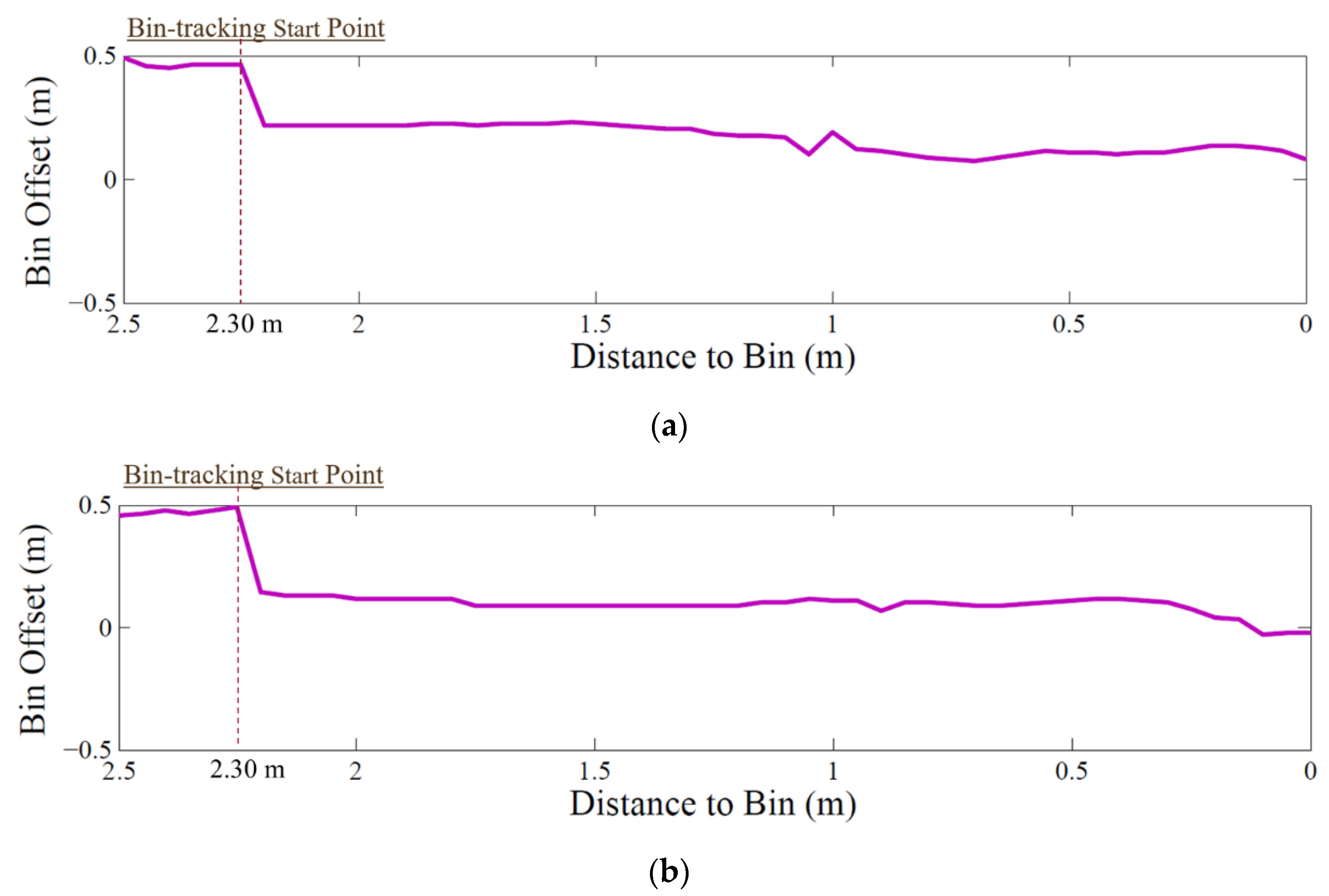

Go-over-the-bin: “Go-over-the-bin” is the core action for the bin-managing process. As mentioned in the previous section, it allows the bin-dog to replace a full bin with an empty bin in one trip. This task breaks down into a six-step process. In this task, (1) the bin-dog first carries an empty bin and uses the alleyway centerline as the reference to approach the full bin in the alleyway. Once its distance to the full bin is smaller than a preset threshold (2.30 m in our test), it lifts up the empty bin to a height of 1.2 m and uses the full bin as the reference for navigation. (2) When the full bin just gets under the front laser scanner, even though the laser scanner loses the point clouds of the front side of the bin, it can still capture point clouds from the body of the bin for reference. (3) As the bin-dog drives further forward, it completely loses the point clouds of the full bin. However, in such a situation, the bin-dog has already aligned with the bin and is partially above it. Therefore, it is set to keep driving straight forward for 20 s and then unload the empty bin. (4) Afterwards, the bin-dog lifts up its fork to 1.2 m and uses its rear laser scanner to drive over the full bin. (5) It then stops and lowers the fork to the ground, drives forward again until the full bin hits the touch switch on the fork, which triggers the fork lifting action. (6) Once the full bin is lifted off the ground (0.1 m), the bin-dog uses its rear laser scanner to drive backward and leave the alleyway.
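The sequence above can be viewed as a small state machine. The Python sketch below is one hypothetical way to encode it, using the numeric values quoted in the text (2.30 m approach threshold, 20 s straight drive, 0.1 m lift-off height). The step names, the `Observation` fields, and in particular the `cleared_full_bin` signal that ends the backward pass are assumptions introduced for illustration, since the text does not specify how that condition is detected.

```python
from dataclasses import dataclass
from enum import Enum, auto

APPROACH_THRESHOLD_M = 2.30  # switch reference from centerline to full bin
STRAIGHT_DRIVE_S = 20.0      # open-loop drive after the bin leaves the scan
LIFT_OFF_HEIGHT_M = 0.1      # fork height at which the full bin is carried


class Step(Enum):
    APPROACH = auto()            # carry empty bin along the centerline
    TRACK_FULL_BIN = auto()      # fork raised, full bin used as reference
    DRIVE_STRAIGHT = auto()      # bin no longer visible, drive straight
    BACK_OVER_FULL_BIN = auto()  # empty bin placed, reverse over full bin
    LOAD_FULL_BIN = auto()       # fork lowered, drive in until switch trips
    EXIT_ALLEYWAY = auto()       # full bin lifted, reverse out of the alley


@dataclass
class Observation:
    distance_to_bin: float = float("inf")
    bin_visible: bool = True
    seconds_in_step: float = 0.0
    cleared_full_bin: bool = False  # hypothetical signal, not from the text
    touch_switch: bool = False
    fork_height: float = 0.0


def next_step(step, obs):
    """Return the step to run next, given the current step and observation."""
    if step is Step.APPROACH and obs.distance_to_bin < APPROACH_THRESHOLD_M:
        return Step.TRACK_FULL_BIN
    if step is Step.TRACK_FULL_BIN and not obs.bin_visible:
        return Step.DRIVE_STRAIGHT
    if step is Step.DRIVE_STRAIGHT and obs.seconds_in_step >= STRAIGHT_DRIVE_S:
        return Step.BACK_OVER_FULL_BIN
    if step is Step.BACK_OVER_FULL_BIN and obs.cleared_full_bin:
        return Step.LOAD_FULL_BIN
    if (step is Step.LOAD_FULL_BIN and obs.touch_switch
            and obs.fork_height >= LIFT_OFF_HEIGHT_M):
        return Step.EXIT_ALLEYWAY
    return step  # no transition condition met
```

Keeping the transition logic in one pure function makes it easy to replay a recorded sequence of observations and check that the steps occur in the intended order.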

2.3. Experimental Validation

To test and validate the performance of the bin-dog system, extensive tests were performed in a commercial orchard in Yakima Valley of Eastern Washington. The prototype of the bin-dog was fabricated in 2015, and a series of field validation experiments were conducted in different seasons of 2015 and 2016 in a V-trellis fruiting wall commercial orchard in PNW (Figure 12a,b). The orchard had an inter-row spacing ranging from 2.30 m to 2.40 m at a height of 1.50 m and the width of its headland was 6.00 m. Note that the experiments conducted in winter were more challenging than normal operation would be, as the defoliation of the trees made it much more difficult for the laser scanner to detect the tree rows. In total, more than 200 testing runs were conducted in these field experiments, with some of them recording performance data and others used for performance observation. These test runs amount to over 40 km of bin management traversals in the orchard. This section highlights a set of experiments sufficient to demonstrate the performance of our prototype and the control methods used.

2.3.1. Headland Turning

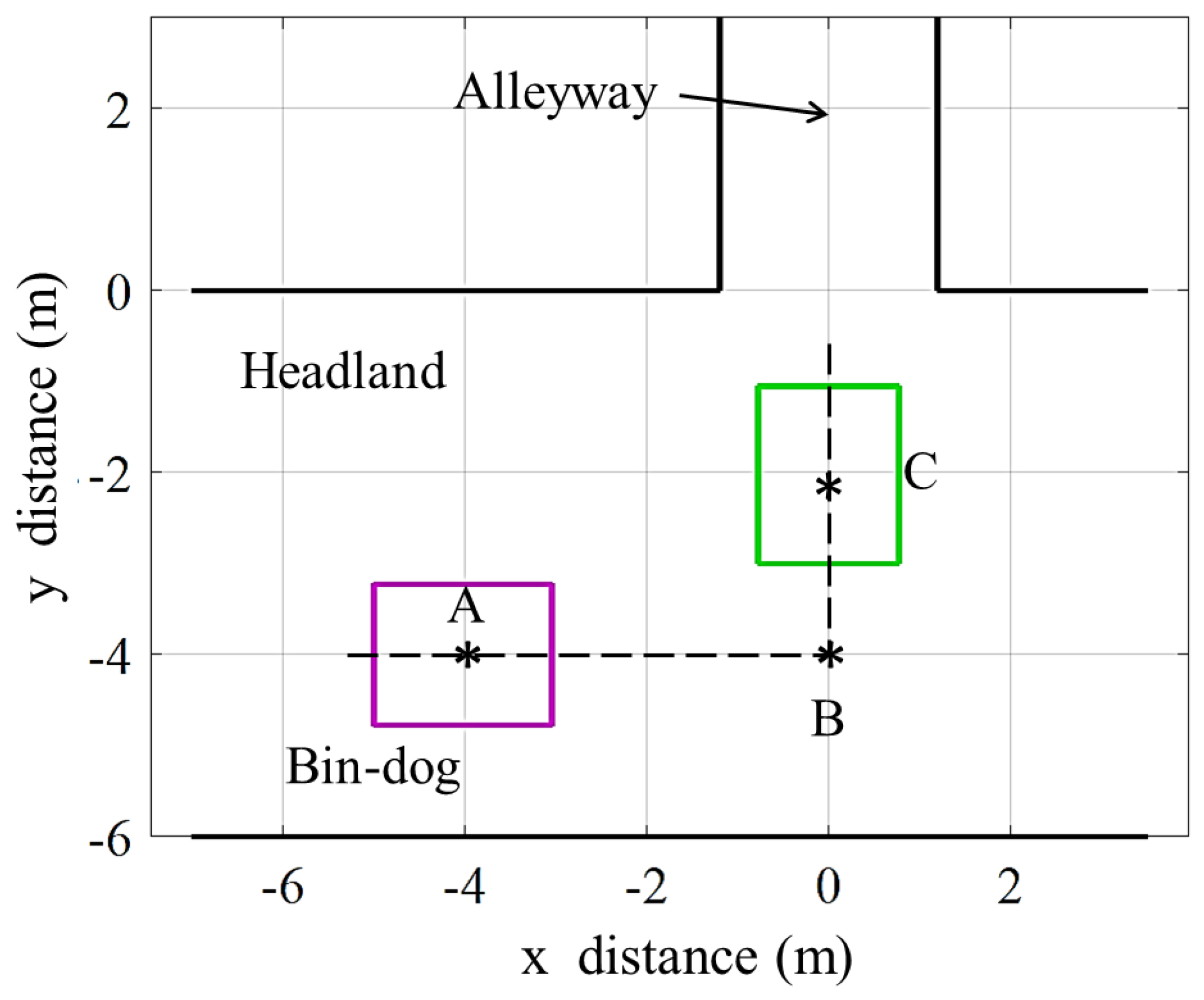

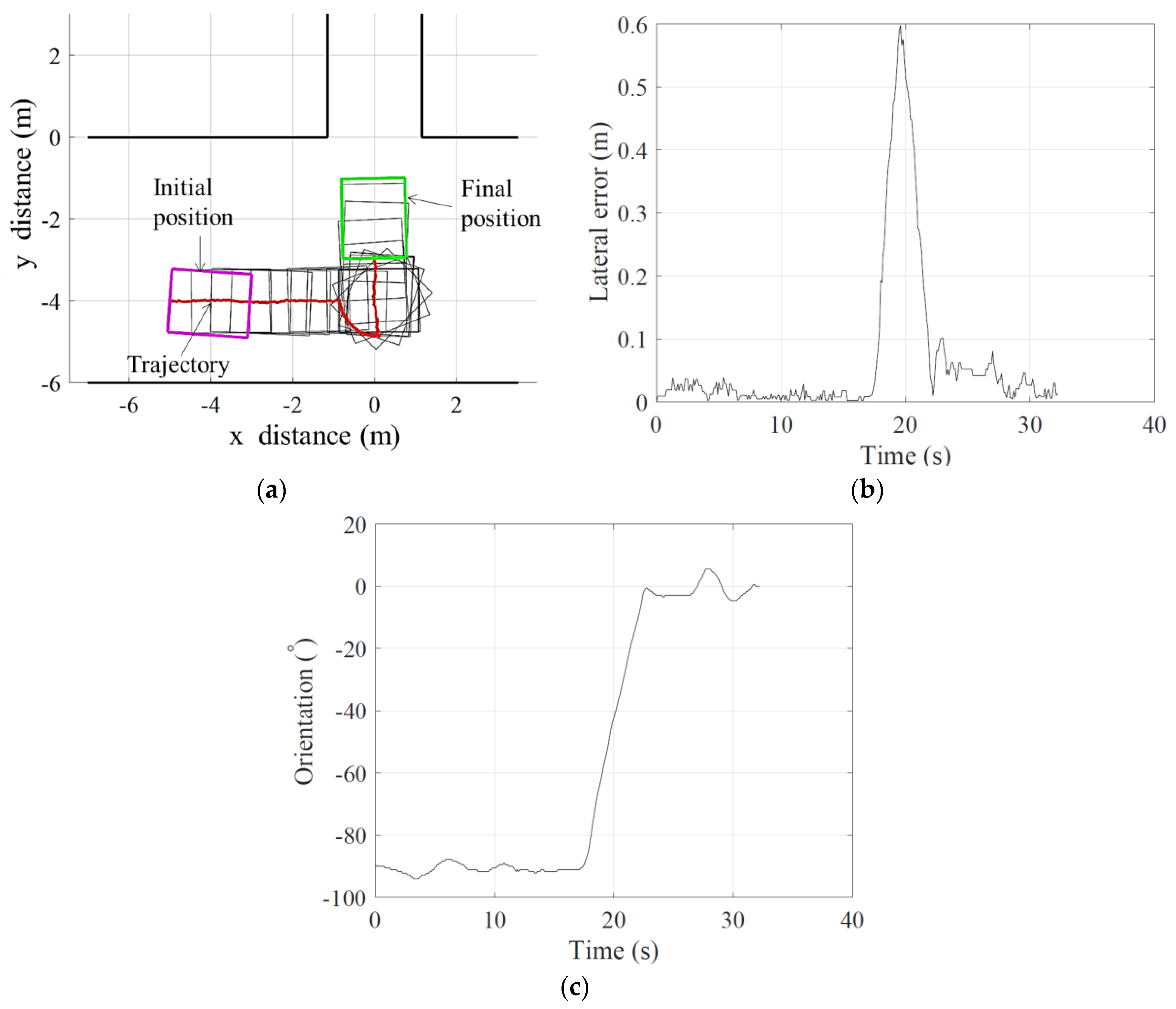

The test of headland turning was conducted to assess the headland turning performance of the bin-dog. As illustrated in Figure 13, in this test, the bin-dog was initially heading east and stopped at point A (−4, −4) m on the headland which was 4 m away from the entrance of an alleyway. When the test started, the bin-dog used Ackermann steering to track a straight line until it reached point B (0, −4) m. The steering mode then switched into spinning mode, and the bin-dog rotated counter-clockwise until its heading vector was parallel to the alleyway. The steering mode switched back to Ackermann steering again and the bin-dog followed a straight line to reach point C (0, −2) m. In this test, the location information from GPS and orientation information from IMU were used to compare with their theoretical values to calculate lateral errors and orientation of the bin-dog. The speed of the bin-dog in this test was fixed at 0.40 m·s−1, and the test had three repetitions.

The GPS location of the middle point of the rear wheels (tracking point) and heading angle of the bin-dog were recorded through the test. In this test, the lateral error of a data point is defined as the shortest distance from it to the path. The orientation error is defined as the angle between the heading vector of the bin-dog and the direction of the alleyway. Since rotation during the spinning mode was achieved by tracking the desired heading angle instead of tracking a path, the calculation of RMSE of lateral error does not include the spinning component of the trajectory. More than 40 repeated tests observed over the two-year testing period showed very similar performance.
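The lateral error definition above (shortest distance from a recorded point to the path) and the exclusion of spinning samples from the RMSE can be sketched in Python as follows. The path is treated as a polyline through the waypoints, the sample format `(x, y, spinning)` is an assumption, and the function names are hypothetical.

```python
import math


def point_to_segment(p, a, b):
    """Shortest distance from point p to the segment from a to b."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    seg_sq = dx * dx + dy * dy
    if seg_sq == 0.0:
        t = 0.0  # degenerate segment: a and b coincide
    else:
        # Projection parameter, clamped so the closest point stays on the segment.
        t = ((p[0] - a[0]) * dx + (p[1] - a[1]) * dy) / seg_sq
        t = max(0.0, min(1.0, t))
    return math.hypot(p[0] - (a[0] + t * dx), p[1] - (a[1] + t * dy))


def lateral_error(p, path):
    """Shortest distance from p to a polyline given as a list of waypoints."""
    return min(point_to_segment(p, a, b) for a, b in zip(path, path[1:]))


def lateral_rmse(samples, path):
    """RMSE of lateral error over (x, y, spinning) samples, skipping spinning."""
    errors = [lateral_error((x, y), path)
              for x, y, spinning in samples if not spinning]
    if not errors:
        raise ValueError("no non-spinning samples to evaluate")
    return math.sqrt(sum(e * e for e in errors) / len(errors))
```

With the waypoints of this test, `path = [(-4, -4), (0, -4), (0, -2)]`, a sample recorded at (−2, −3.9) m has a lateral error of 0.1 m relative to the A to B leg.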

2.3.2. Straight Line Tracking between Tree Rows

Driving speed is a factor that could influence the path tracking performance between tree rows. To achieve both efficient operation and smooth trajectory tracking, the desired speed of the system should be selected to balance these two objectives. To examine this trade-off, three different speed settings were selected in this test: 0.30 m·s−1, 0.60 m·s−1, and 1.00 m·s−1. At each speed setting, the bin-dog navigated along the alleyway for 20 m, and the run was repeated five times. In addition, numerous repeated tracking tests over distances from 20 m to 250 m (the whole length of the alleyway) were observed without recording performance data over the two-year testing period. All observed tests completed the task successfully and showed very similar performance.

2.3.3. “Go-over-the-bin”

The test was set up to validate and evaluate the “go-over-the-bin” function of the bin-dog. In this test, we placed a full bin in an alleyway with a 0° heading angle and 0 m offset relative to the alleyway centerline. The bin-dog initially carried an empty bin to a location in the alleyway that was 5 m away from the full bin. It followed the “go-over-the-bin” work process described in the task schedule section to unload the empty bin and remove the full bin. The test had five repetitions and the driving speed of the bin-dog was fixed at 0.60 m·s−1. Similar to other tasks, more than 20 repeated tests were observed without recording the performance data over the two-year testing period.