An Alternative View of Privacy on Facebook

Abstract

The predominant analysis of privacy on Facebook focuses on the revelation of personal information. This paper is critical of this kind of research and introduces an alternative analytical framework for studying privacy on Facebook, social networking sites, and web 2.0. The framework connects the phenomenon of online privacy to the political economy of capitalism, a focus that has so far been largely neglected in the research literature on Internet and web 2.0 privacy. Liberal privacy philosophy tends to ignore the political economy of privacy in capitalism, in which privacy can mask socio-economic inequality and protect capital and the rich from public accountability. In this paper, Facebook is analyzed with the help of an approach in which privacy for dominant groups, in the sense of the ability to keep wealth and power secret from the public, is seen as problematic, whereas privacy at the bottom of the power pyramid, for consumers and normal citizens, is seen as a protection from dominant interests. Facebook's understanding of privacy stresses self-regulation and is individualistic. The theoretical analysis of the political economy of privacy on Facebook in this paper is based on the political theories of Karl Marx, Hannah Arendt, and Jürgen Habermas. Drawing on the political economist Dallas Smythe's concept of audience commodification, the paper analyzes the process of prosumer commodification on Facebook. The political economy of privacy on Facebook is further analyzed with the help of a theory of drives grounded in Herbert Marcuse's interpretation of Sigmund Freud, which makes it possible to analyze Facebook based on the concept of play labor (the convergence of play and labor).

1. Introduction

Facebook is the most popular social networking site (SNS). SNS are typical applications of what is termed web 2.0: they are web-based platforms that integrate different media, information, and communication technologies and that allow at least the generation of profiles that display information describing the users, the display of connections (a connection list), the establishment of connections between users that are displayed on their connection lists, and communication between users.

Mark Zuckerberg, Eduardo Saverin, Dustin Moskovitz, and Chris Hughes, who were then Harvard students, founded Facebook in 2004. Facebook is the second most often accessed website in the world (data source: alexa.com, accessed on January 19, 2011). In the three-month period from October 19, 2010 to January 19, 2011, 38.4% of all Internet users accessed Facebook (data source: alexa.com, accessed on January 19, 2011). This means that more than 755 million individuals are Facebook users (data source for worldwide Internet users: http://www.Internetworldstats.com/stats.htm, accessed on 01-19-2011). Facebook's revenues were nearly $US 800 million in 2009 (Reuters: Facebook '09 revenue neared $800 million, http://www.reuters.com/article/idUSTRE65H01W20100618, accessed on 09-10-2010). Estimates in 2010 suggested that Facebook was likely to increase its revenues to more than $US 1 billion in 2010 (Mashable/Business: Facebook could surpass $1 billion in revenue this year, http://mashable.com/2010/03/02/facebook-could-surpass-1-billion-in-revenue-this-year, accessed on 09-10-2010). Assessments in early 2011 said that it accumulated advertising revenues of $US 1.86 billion in 2010 (How Facebook earned $1.86 billion ad revenue in 2010, http://www.pcmag.com/article2/0,2817,2375926,00.asp, accessed on 01-19-2011).

The popularity of Facebook makes it an excellent example for studying what privacy can and should mean in the web 2.0 world. The task of this paper is to give a critical political economy view of privacy on Facebook. Such an approach is especially interested in uncovering the role of surplus value, exploitation, and class in the phenomena studied (Dussel 2008 [1], p. 77; Negri 1991 [2], p. 74). To this end, the notion of privacy is first discussed (Section 2). Then the dominant kind of analysis of Facebook privacy is criticized by characterizing it as a form of privacy fetishism (Section 3). Based on this critique, an alternative approach is outlined that situates privacy on Facebook by conducting an ideology analysis of the Facebook privacy policy (Section 4.1). This policy is the legal mechanism that enables and guarantees the exploitation of surplus value. The specific way in which Facebook generates and appropriates surplus value is discussed in Section 4.2. Finally, some conclusions are drawn and strategies for alternative online privacy politics are outlined (Section 5).

2. The Notion of Privacy

Privacy is not only about information and communication. A woman who has been beaten and injured by her husband, and who has obtained a court ruling that he may not come near her, does not only have an interest in his not obtaining information about her; she also has an interest in his not coming physically near her, so that he cannot maltreat her. She will probably change her mobile phone number in order to obtain informational and communicational privacy, and may also consider moving to a new flat. This example shows that information and communication are not the only dimension of privacy, although they are the dimension most relevant to discussions of Internet privacy. Tavani (2008 [3], 2010 [4]) therefore says that informational privacy is one of four kinds of privacy, the other three being physical/accessibility privacy, decisional privacy, and psychological/mental privacy. Solove (2008 [5]) argues that communication is one context of privacy besides the family, the body, sex, and the home. In an information society, accessibility means access not only to physical spaces but also to informational spaces. On the Internet, informational privacy and accessibility privacy are therefore connected: what links information and physicality is that the Internet and other networks are themselves informational spaces. For discussing the role of privacy on Facebook, the concept of informational privacy is important. Tavani (2008 [3]) distinguishes between the restricted access theory, the control theory, and the restricted access/limited control theory of privacy.

The restricted access theory of informational privacy holds that privacy is achieved if one is able to limit and restrict others' access to personal information (Tavani 2008 [3], pp. 142ff). The classical form of this definition is Warren's and Brandeis' notion of privacy: “Now the right to life has come to mean the right to enjoy life—the right to be let alone” (Warren and Brandeis 1890 [6], p. 193). They discussed this right especially in relation to newspapers and spoke of the “evil of invasion of privacy by the newspapers”. Although some scholars argue that Warren's and Brandeis' (1890 [6]) paper is the source of the restricted access theory (for example: Bloustein 1964/1984 [7]; Schoeman 1984 [8]; Rule 2007 [9], p. 22; Solove 2008 [5], pp. 15f), the same concept was already formulated by John Stuart Mill 42 years earlier, in his 1848 book Principles of Political Economy (Mill 1965 [10], p. 938). This shows the inherent connection between the modern concept of privacy and liberal thought. The control theory of privacy sees privacy as control and self-determination over information about oneself (Tavani 2008 [3], pp. 142ff). The most influential control definition of privacy was given by Westin: “Privacy is the claim of individuals, groups or institutions to determine for themselves when, how, and to what extent information about them is communicated to others” (Westin 1967 [11], p. 7). In a control theory of privacy, there is privacy even if one chooses to disclose all personal information about oneself. In an absolute restricted access theory of privacy, in contrast, there is privacy only if one lives in solitary confinement without contact with others. The restricted access/limited control (RALC) theory of privacy tries to combine both concepts. It distinguishes “between the concept of privacy, which it defines in terms of restricted access, and the management of privacy, which is achieved via a system of limited controls for individuals” (Tavani 2008 [3], p. 144; see also: Moor 2000 [12]).

All three kinds of definitions of informational privacy deal with the moral questions of how information about humans should be processed, who should have access to these data, and how this access should be regulated. They also share the normative assumption that some form of data protection is needed.

Etzioni (1999 [13]) stresses that it is a typical American liberal belief that strengthening privacy can cause no harm, and argues that privacy can undermine common goods such as public safety and public health. That privacy is not automatically a positive value is also reflected in a number of general criticisms of the concept. Critics argue that privacy promotes an individual agenda and possessive individualism that can harm the public/common good (Bennett 2008 [14], pp. 9f; Bennett and Raab 2006 [15], p. 14; Etzioni 1999 [13]; Gilliom 2001 [16], pp. 8, 121; Hongladarom 2007 [17], p. 115; Lyon 1994 [18], p. 296; Lyon 2007 [19], pp. 7, 174; Stalder 2002 [20]; Tavani 2008 [3], pp. 157f), that it can be used for legitimizing domestic violence in families (Bennett and Raab 2006 [15], p. 15; Lyon 1994 [18], p. 16; Lyon 2001 [21], p. 20; Quinn 2006 [22], p. 214; Schoeman 1992 [23], pp. 13f; Tavani 2008 [3], pp. 157f; Wacks 2010 [24], p. 36), that it can be used for planning and carrying out illegal or antisocial activities (Quinn 2006 [22], p. 214; Schoeman 1984 [25], p. 8), that it can conceal information in order to mislead and misrepresent the character of individuals and is therefore deceptive (Bennett and Raab 2006 [15], p. 14; Schoeman 1984 [25], p. 403; Posner 1978/1984 [26]; Wasserstrom 1978/1984 [27]), that a separation of public and private life is problematic (Bennett and Raab 2006 [15], p. 15; Lyon 2001 [21], p. 20; Sewell and Barker 2007 [28], pp. 354f), or that it advances a liberal notion of democracy that can be contrasted with a participatory notion of democracy (Bennett and Raab 2006 [15], p. 15). Privacy has also been criticized as a western-centric concept that does not exist in an individualistic form in non-western societies (Burk 2007 [29]; Hongladarom 2007 [17]; Zureik and Harling Stalker 2010 [30], p. 12). There have also been discussions of the concept of privacy based on ideology critique (Stahl 2007 [31]) and intercultural philosophy (see for example: Capurro 2005 [32]; Ess 2005 [33]).

These critiques show that the question is not how privacy can best be protected, but in which cases privacy should be protected and in which cases it should not. As Facebook is a capitalist corporation focused on accumulating capital, this paper is especially interested in the economic questions relating to online privacy.

Countries like Switzerland, Liechtenstein, Monaco, or Austria have a tradition of relative anonymity of bank accounts and transactions. Money as private property is seen as an aspect of privacy about which the public should know nothing. In Switzerland, banking secrecy is defined in the Federal Banking Act (§47). The Swiss Bankers Association sees bank anonymity as a form of “financial privacy” (http://www.swissbanking.org/en/home/qa-090313.htm, accessed on 09-21-2010) that needs to be protected and speaks of “privacy in relation to financial income and assets” (http://www.swissbanking.org/en/home/dossier-bankkundengeheimnis/dossier-bankkundengeheimnis-themen-geheimnis.htm, accessed on 09-21-2010). In most countries, information about income and the profits of companies (except for public companies) is treated as a secret, a form of financial privacy. The privacy-as-secrecy conception is typically part of the limited access concept of privacy (Solove 2008 [5], p. 22). Control theories and restricted access/limited control theories of privacy, in contrast, do not stress absolute secrecy of personal information as desirable; rather, they stress the importance of self-determination in keeping or sharing personal information and the different contexts in which keeping information to oneself or sharing it is considered important. In this vein, Helen Nissenbaum stresses that the “right to privacy is neither a right to secrecy nor a right to control but a right to appropriate flow of personal information” (Nissenbaum 2010 [34], p. 127). In all of these versions of privacy theory, secrecy of information plays a certain role, although its exact role and desirability are assessed differently.

The problem with secret bank accounts and transactions and with the lack of transparency of wealth and company profits is not only that financial privacy can support tax evasion, black money, and money laundering, but also that it hides wealth gaps. Financial privacy reflects the classical liberal account of privacy. John Stuart Mill, for example, formulated a right of the propertied class to economic privacy as “the owner's privacy against invasion” (Mill 1965 [10], p. 232). Economic privacy in capitalism (the right to keep information about income, profits, and bank transactions secret) protects the rich and companies. The anonymity of wealth, high incomes, and profits makes income and wealth gaps between the rich and the poor invisible and thereby ideologically helps to legitimize and uphold these gaps. It can therefore be considered an ideological mechanism that helps to reproduce and deepen inequality.

It would nonetheless be a mistake to abolish privacy rights entirely and to dismiss them as bourgeois values. Liberal privacy discourse is highly individualistic; it always focuses on the individual and his/her freedoms, and it separates the public and the private sphere. Privacy in capitalism can best be characterized as an antagonistic value: on the one hand, it is upheld as a universal value for protecting private property; on the other hand, it is permanently undermined by corporate surveillance of people's lives for profit purposes (and by political surveillance for administrative purposes, defense, and law enforcement). Capitalism protects privacy for the rich and companies, but at the same time legitimates privacy violations of consumers and citizens. It thereby undermines its own positing of privacy as a universal value.

In modern societies, privacy is an ideal rooted in the Enlightenment. Capitalism is grounded in the idea that the private sphere should be separated from the public sphere and should not be accessible to the public, and that autonomy and anonymity of the individual are therefore needed in the private sphere. The rise of the idea of privacy in modern society is connected to the rise of the central ideal of the freedom of private ownership. Private ownership is the idea that humans have the right to own as much wealth as they want, as long as it is inherited or acquired through individual achievement. There is an antagonism between private ownership and social equity in modern society. How much and what exactly a person owns is treated as an aspect of privacy in many contemporary societies. Keeping ownership structures secret is a precaution against public questioning of private ownership and against political and individual attacks on it. Capitalism requires anonymity and privacy in order to function. But full privacy is also not possible in modern society, because strangers enter social relations that require trust or enable exchange. Building trust requires knowing certain data about other persons, so surveillance procedures are used to check whether a stranger can be trusted. Corporations aim at accumulating ever more capital. That is why they have an interest in knowing as much as possible about their workers (in order to control them) and about the interests, tastes, and behaviors of their customers; this results in the surveillance of workers/employees and consumers. The ideals of modernity (such as the freedom of ownership) also produce phenomena such as income and wealth inequality, poverty, unemployment, and precarious living and working conditions. The establishment of trust, socio-economic differences, and corporate interests are three qualities of modernity that necessitate surveillance. Therefore, modernity on the one hand advances the ideal of a right to privacy, but on the other hand must continuously advance surveillance that threatens to undermine privacy rights. An antagonism between privacy ideals and surveillance is therefore constitutive of capitalism.

When discussing privacy on Facebook, we should therefore go beyond a bourgeois notion of privacy and try to advance a socialist notion of privacy that seeks to strengthen the protection of consumers and citizens from corporate surveillance. Economic privacy is therefore posited as undesirable in those cases where it protects the rich and capital from public accountability, but as desirable where it protects citizens from corporate surveillance. Public surveillance of the income of the rich and of companies, and public mechanisms that make their wealth transparent, are desirable for making wealth and income gaps in capitalism visible; at the same time, privacy protection from corporate surveillance remains important. In a socialist privacy concept, the existing privacy values have to be reversed. Whereas today we mainly find surveillance of the poor and of citizens who do not own private property, combined with protection of private property, a socialist privacy concept focuses on surveillance of capital and the rich in order to increase transparency, and on privacy protection for consumers and workers. A socialist privacy concept conceives privacy as the collective right of dominated and exploited groups that need to be protected from corporate domination, which aims at gathering information about workers and consumers in order to accumulate capital, discipline workers and consumers, and increase the productivity of capitalist production and advertising. The liberal conception and reality of privacy as an individual right within capitalism protects the rich and the accumulation of ever more wealth from public knowledge. A socialist privacy concept, understood as a collective right of workers and consumers, can protect humans from the misuse of their data by companies. The question therefore is: Privacy for whom? Privacy for dominant groups, in the sense of the ability to keep wealth and power secret from the public, can be problematic, whereas privacy at the bottom of the power pyramid, for consumers and normal citizens, can be a protection from dominant interests. Privacy rights should therefore be differentiated according to the position that people and groups occupy in the power structure.

3. Facebook and Privacy Fetishism

Liberal privacy theories typically talk about the positive qualities that privacy entails for humans or speak of it as an anthropological constant in all societies, without discussing the particular role of privacy in capitalist society. Solove (2008 [5], p. 98) summarizes the positive values that have been associated with privacy in the existing literature: Autonomy, counterculture, creativity, democracy, eccentricity, dignity, freedom, freedom of thought, friendship, human relationships, imagination, independence, individuality, intimacy, psychological well-being, reputation, self-development. The following values can be added to this list (see the contributions in Schoeman 1984 [23]): emotional release, individual integrity, love, personality, pluralism, self-determination, respect, tolerance, self-evaluation, trust.

Analyses that associate privacy with universal positive values tend not to engage with the actual and possible negative effects of privacy and with the relationship of modern privacy to private property, capital accumulation, and social inequality. They give ahistorical accounts of privacy by arguing that privacy is a universal human principle that brings about positive qualities for individuals and society. They abstract from issues relating to the political economy of capitalism, such as exploitation and income/wealth inequality. But if modern privacy has negative aspects, such as the shielding of income gaps and of corporate crimes, then such accounts are problematic, because they neglect these aspects and present modern values as characteristic of all societies. Karl Marx described as fetishism the situation in which the “definite social relation between men themselves” assumes “the fantastic form of a relation between things” (Marx 1867 [35], p. 167). Fetishism mistakes phenomena that are created by humans and have a social and historical character for natural phenomena that exist always and in all societies. Phenomena such as the commodity are declared to be “everlasting truths” (Marx 1867 [35], p. 175, fn34). Based on Marx, theories of privacy that do not consider privacy as historical, that do not take into account the relation between privacy and capitalism, or that only stress its positive role can be characterized as privacy fetishism. In contrast to privacy fetishism, Barrington Moore (1984 [36]) argues, based on anthropological and historical analyses, that privacy is not an anthropological need “like the need for air, sleep, or nourishment” (Moore 1984 [36], p. 71), but “a socially created need” that varies historically (Moore 1984 [36], p. 73). According to Moore, the desire for privacy develops only in societies that have a public sphere characterized by complex social relationships, which are experienced as a “disagreeable or threatening obligation” (Moore 1984 [36], p. 72). Moore argues that this situation is the result of stratified societies, in which there are winners and losers. The alternative would be “direct participation in decisions affecting daily lives” (Moore 1984 [36], p. 79).

A specific form of privacy fetishism can also be found in research on Facebook and social networking sites in general. The typical study of privacy on Facebook and other social networking sites focuses on the analysis of information disclosures by users (in many cases younger users) (for example: Acquisti and Gross 2006 [37]; Barnes 2006 [38]; Dwyer 2007 [39]; Dwyer, Hiltz and Passerini 2007 [40]; Fogel and Nehmad 2009 [41]; Gross, Acquisti and Heinz 2005 [42]; Hodge 2006 [43]; Lewis, Kaufman and Christakis 2008 [44]; Livingstone 2008 [45]; Stutzman 2006 [46]; Tufekci 2008 [47]). Users' privacy is considered to be under threat because they disclose too much information about themselves and thereby become targets of criminals and surveillance. Privacy is strictly conceived as an individual phenomenon that can be protected if users behave correctly and do not disclose too much information. All issues relating to the political economy of Facebook, such as advertising, capital accumulation, the appropriation of user data for economic ends, and user exploitation, are ignored. Such analyses can therefore be characterized as Facebook privacy fetishism.

Marx stressed that a critical theory of society does “not preach morality at all” (Marx and Engels 1846 [48], p. 264), because human behavior is an expression of the conditions individuals live in. Critical theorists “do not put to people the moral demand: Love one another, do not be egoists, etc.; on the contrary, they are very well aware that egoism, just as much selflessness, is in definite circumstances a necessary form of the self-assertion of individuals” (Marx and Engels 1846 [48], p. 264). Uncritical analyses of social networking sites imply moral condemnation and the simplistic conclusion that it is morally bad to make personal data public. Paraphrasing Marx, critical theorists, in contrast, do not demand of users that they refrain from uploading personal data to public Internet platforms, because they are very well aware that under capitalist circumstances this behavior is a necessary form of the self-assertion of individuals.

Facebook privacy fetishism can also be characterized as a victimization discourse. Such research concludes that social networking sites pose threats that make users potential victims of individual criminals, as in the case of cyberstalking, sexual harassment, threats by mentally ill persons, data theft, data fraud, etc. These studies frequently also advance the view that the problem is a lack of individual responsibility and knowledge, and that users consequently put themselves at risk by putting too much private information online and not making use of privacy mechanisms, for example by making their profiles visible to all other users. One problem of the victimization discourse is that it implies that young people are irresponsible, passive, and ill informed, that older people are more responsible, that the young should take the values of older people as morally superior and as guidelines, and especially that there are technological fixes to societal problems. It advances the view that increasing privacy levels will technologically solve societal problems and ignores that this might create new problems, because decreased visibility might result in less fun for the users, fewer contacts, and therefore less satisfaction, as well as in the deepening of information inequality. Another problem is that such approaches implicitly or explicitly conclude that communication technologies as such have negative effects. These are pessimistic assessments that assume inherent risks in technology. The causality underlying these arguments is one-dimensional: it is assumed that technology as a cause has exactly one negative effect on society. But both technology and society are complex, dynamic systems (Fuchs 2008 [49]). Such systems are to a certain extent unpredictable, and their complexity makes it unlikely that they will have exactly one effect. It is much more likely that there will be multiple, at least two, contradictory effects (Fuchs 2008 [49]). The techno-pessimistic victimization discourse is also individualistic and ideological. It focuses on the analysis of individual usage behavior without seeing and analyzing how this use is conditioned by the societal context of information technologies, such as surveillance, the global war against terror, corporate interests, neoliberalism, and capitalist development.

In contrast to Facebook privacy fetishism, the task of Critical Internet Studies is to analyze Facebook privacy in the context of the political economy of capitalism.

4. Privacy and the Political Economy of Facebook

Gavison (1980 [50]) argues that protection from commercial exploitation should not be grouped under privacy. Her argument is that there are many forms of commercial exploitation that have nothing to do with privacy protection, and vice versa. Applied to Facebook, her argument would mean that the exploitation of user data for economic purposes on Facebook is not a privacy issue. But the argument does not hold: issues that relate to the use of personal information by others, such as companies, are always privacy issues, because they raise the questions of whether consumers/users/prosumers have control over how their information is used, whether they can influence privacy policies, and whether the processing and use of the information are transparent. It would therefore be a mistake not to see Facebook's economic commodification of users, carried out with the help of its terms of use and privacy policy, as an issue of privacy and surveillance.

Karl Marx positioned privacy in relation to private property. According to Marx, the liberal concept of the private individual and privacy sees man as “an isolated monad, withdrawn into himself … The practical application of the right of liberty is the right of private property” (Marx 1843 [51], p. 235). For Marx, modern society's constitution is the “constitution of private property” (Marx 1843 [52], p. 166). For Hannah Arendt, modern privacy is the expression of a sphere of deprivation, in which humans are deprived of social relations and of “the possibility of achieving something more permanent than life itself” (Arendt 1958 [53], p. 58). “The privation of privacy lies in the absence of others” (Arendt 1958 [53], p. 58). Arendt says that the relation between private and public is “manifest in its most elementary level in the question of private property” (Arendt 1958 [53], p. 61). Habermas (1989 [54]) stresses that the modern concept of privacy is connected to the capitalist separation of the private and the public realm. For Habermas, privacy is an illusory ideology, a “pseudo-privacy” (Habermas 1989 [54], p. 157), that in reality functions as a community of consumers (Habermas 1989 [54], p. 156) and enables leisure and consumption as a means for the reproduction of labor power, so that it remains vital, productive, and exploitable (Habermas 1989 [54], p. 159).

The theories of Marx, Arendt, and Habermas have quite different political implications, but the three authors share an emphasis on addressing the notions of privacy, the private sphere, and the public by analyzing their inherent connection to the political economy of capitalism. For a critical analysis, it is therefore important not simply to discuss privacy on Facebook as the revelation of personal data, but to inquire into the political economy and ownership structures of personal data on Facebook. Most contemporary analyses of privacy on web 2.0 and social networking sites neglect this dimension, which Marx, Arendt, and Habermas remind us to focus on when analyzing Facebook.

Facebook is a capitalist company. Its economic goal is therefore to achieve financial profit. It does so with the help of targeted personalized advertising, which means that it tailors advertisements to the consumption interests of the users. Social networking sites are especially suited for targeted advertising because they store and communicate a vast amount of users' personal likes and dislikes, which can be monitored for economic purposes in order to find out which products the users are likely to buy. This explains why targeted advertising is the main source of income and the business model of most profit-oriented social networking sites. Facebook engages in mass surveillance because it stores, compares, assesses, and sells the personal data and usage behavior of several hundred million users. But this mass surveillance is at the same time personalized and individualized, because the detailed analysis of each user's interests and browsing behavior, and its comparison with the online behavior and interests of other users, makes it possible to sort users into consumer interest groups and to provide each individual user with advertisements that, based on algorithmic selection and comparison mechanisms, are believed to reflect the user's consumption interests. For this form of Internet surveillance to work, permanent input and activity of the users are needed; these are guaranteed by the specific characteristics of web 2.0, especially the upload of user-generated content and permanent communicative flows.
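The sorting of users into consumer interest groups and the matching of ads to those groups can be illustrated with a deliberately simplified sketch. It assumes that each user is represented only by a set of declared interests and that each advertisement targets exactly one interest category; the names `USERS`, `ADS`, `group_by_interest`, and `select_ads` are hypothetical. The sketch covers only the matching of declared interests to ad categories, not the comparison of behavior across users, and makes no claim about how Facebook's actual targeting systems work.

```python
from collections import defaultdict

# Hypothetical user profiles: user id -> set of declared interests.
# Illustrative only; not Facebook's data model.
USERS = {
    "u1": {"running", "music"},
    "u2": {"cooking", "travel"},
    "u3": {"running", "travel"},
    "u4": {"music"},
}

# Hypothetical ad inventory: ad id -> the single interest category it targets.
ADS = {
    "ad_shoes": "running",
    "ad_concert": "music",
    "ad_flights": "travel",
    "ad_knives": "cooking",
}


def group_by_interest(users):
    """Sort users into consumer interest groups (a user may be in several)."""
    groups = defaultdict(set)
    for user, interests in users.items():
        for interest in interests:
            groups[interest].add(user)
    return dict(groups)


def select_ads(user, users, ads):
    """Return, in alphabetical order, the ads whose target matches the user's interests."""
    return sorted(ad for ad, interest in ads.items() if interest in users[user])


groups = group_by_interest(USERS)
print(sorted(groups["running"]))      # ['u1', 'u3']
print(select_ads("u3", USERS, ADS))   # ['ad_flights', 'ad_shoes']
```

Even in this toy form, the same set of stored "likes" serves two purposes at once: it places each user in aggregate groups (mass surveillance) and it individualizes the ads each user sees.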

Capitalism is based on the imperative to accumulate ever more capital. To achieve this, capitalists either have to prolong the working day (absolute surplus value production) or increase the productivity of labor (relative surplus value production) (on relative surplus value, see: Marx 1867 [35], Chapter 12). Relative surplus value production means that productivity is increased so that more commodities and more surplus value can be produced in the same time period as before. “For example, suppose a cobbler, with a given set of tools, makes one pair of boots in one working day of 12 hours. If he is to make two pairs in the same time, the productivity of his labour must be doubled; and this cannot be done except by an alteration in his tools or in his mode of working, or both. Hence the conditions of production of his labour, i.e., his mode of production, and the labour process itself, must be revolutionized. By an increase in the productivity of labour, we mean an alteration in the labour process of such a kind as to shorten the labour-time socially necessary for the production of a commodity, and to endow a given quantity of labour with the power of producing a greater quantity of use-value. … I call that surplus-value which is produced by lengthening of the working day, absolute surplus-value. In contrast to this, I call that surplus-value which arises from the curtailment of the necessary labour-time, and from the corresponding alteration in the respective lengths of the two components of the working day, relative surplus-value” (Marx 1867 [35], pp. 431f).
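The difference between absolute and relative surplus value can be made concrete with a small worked calculation. The sketch measures the rate of surplus value as surplus labour time divided by necessary labour time. The 12-hour day and the cobbler's doubling of output come from the quotation above; the 10 hours of necessary labour and the alternative values of 14 hours and 8 hours are assumptions chosen only for illustration, with the shorter necessary labour time standing for productivity increases that cheapen the means of subsistence.

```python
from fractions import Fraction


def surplus_labour(working_day, necessary):
    """Hours of the working day beyond the necessary labour time."""
    if not 0 < necessary <= working_day:
        raise ValueError("necessary labour time must be positive and fit in the day")
    return working_day - necessary


def rate_of_surplus_value(working_day, necessary):
    """Rate of surplus value: surplus labour time / necessary labour time."""
    return Fraction(surplus_labour(working_day, necessary), necessary)


def labour_time_per_unit(working_day, output):
    """Labour time embodied in one unit when `output` units are made per day."""
    return Fraction(working_day, output)


# Assumed starting point: 12-hour day, of which 10 hours are necessary labour.
baseline = rate_of_surplus_value(12, 10)   # 2/10 = 1/5
# Absolute surplus value: lengthen the day to 14 hours (assumed figure).
absolute = rate_of_surplus_value(14, 10)   # 4/10 = 2/5
# Relative surplus value: same 12-hour day, necessary time cut to 8 hours (assumed).
relative = rate_of_surplus_value(12, 8)    # 4/8 = 1/2

# Marx's cobbler: doubling output per 12-hour day halves labour time per pair.
hours_per_pair_before = labour_time_per_unit(12, 1)  # 12
hours_per_pair_after = labour_time_per_unit(12, 2)   # 6
```

Both changes raise surplus labour from 2 to 4 hours, but only the second does so without lengthening the working day, which is what producing more surplus value in the same time period as before means.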

Sut Jhally (1987 [55], p. 78) argues that “reorganizing the watching audience in terms of demographics” is a form of relative surplus value production. Targeted Internet advertising can also be interpreted as a form of relative surplus value production: at any one point in time, advertisers do not show just one advertisement to the audience, as in non-targeted advertising; instead, different advertisements are shown to different user groups depending on the monitoring, assessment, and comparison of their interests and online behavior. On traditional television, all viewers see the same advertisements at the same time. In targeted online advertising, advertising companies can present different ads at the same time. The efficiency of advertising is increased: more advertisements that are likely to fit the interests of consumers are shown in the same time period as before. These advertisements are partly produced by the advertising company's wage laborers and partly by the Internet users, whose user-generated data and transaction data are utilized. The more targeted advertisements there are, the more likely it is that users recognize ads and click on them.

The click-and-buy process by users is the surplus value realization process of the advertising company. Targeted advertising allows Internet companies to present not just one advertisement to users at one point in time, but numerous advertisements, so that in total more advertising time is produced and presented as a commodity by Internet companies to users. Relative surplus value production means that more surplus value is generated in the same time period as earlier. Targeted online advertising is more productive than non-targeted online advertising because it allows presenting more ads in the same time period. These ads contain more surplus value than the non-targeted ads, i.e., more unpaid labor time of the advertising company's paid employees and of users, who generate user-generated content and transaction data.

In order to understand the political economy of Facebook, its legal-political framework (4.1) and its accumulation model need to be analyzed (4.2).

4.1. Facebook's Privacy Policy

The use of targeted advertising and economic surveillance is legally guaranteed by Facebook's privacy policy (Facebook privacy policy, version from December 22, 2010, http://www.facebook.com/policy.php, accessed on January 19, 2011). In this section of the paper, I will conduct a qualitative critical discourse analysis of those parts of the Facebook privacy policy that are focused on advertising. It is an important principle of critical discourse analysis to contextualize texts (van Dijk 1997 [56], p. 29). Therefore the advertising-focused passages of the Facebook privacy policy are put into the context of the political economy of capitalism. Another principle is to ask what meanings and functions sentences and words have (van Dijk 1997 [56], p. 31). Therefore I discuss which meaning and role the advertising-related passages have in light of the political economy context. Critical discourse analysis challenges taken-for-granted assumptions by putting them into the context of power structures in society. “The goals of critical discourse analysis are also therefore ‘denaturalizing’” (Fairclough 1995 [57], p. 36). The task is to denaturalize assumptions about privacy and advertising in the Facebook privacy policy, which appear natural as long as they are not put into the context of power and capitalism. “One crucial presupposition of adequate critical discourse analysis is understanding the nature of social power and dominance. Once we have such an insight, we may begin to formulate ideas about how discourse contributes to their reproduction” (van Dijk 1993 [58], p. 254). By understanding the role of advertising in the Facebook privacy policy, one can understand how self-regulatory legal mechanisms reproduce economic power and dominance.

That Facebook can largely decide for itself how it deals with the data of its users is also due to the fact that it is a legally registered company headquartered in Palo Alto, California, United States. Facebook's privacy policy is a typical expression of a self-regulatory privacy regime, in which businesses largely define for themselves how they process personal user data. The general perception in privacy and surveillance studies is that there is very little privacy protection in the United States and that the United States is far behind the European nations in protecting privacy (Tavani 2010 [4], p. 166; Wacks 2010 [24], p. 124; Zureik and Harling Stalker 2010 [30], p. 15); moreover, U.S. data protection laws only cover government databanks and, due to business considerations, leave commercial surveillance untouched in order to maximize profitability (Ess 2009 [59], p. 56; Lyon 1994 [18], p. 15; Rule 2007 [9], p. 97; Zureik 2010 [60], p. 351). Facebook's terms of use and its privacy policy are characteristic of the liberal U.S. data protection policies that are strongly based on business self-regulation. They also exemplify the problems associated with a business-friendly self-regulatory privacy regime: if privacy regulation is voluntary, the number of organizations engaging in it tends to be very small (Bennett and Raab 2006 [15], p. 171): “Self-regulation will always suffer from the perception that it is more symbolic than real because those who are responsible for implementation are those who have a vested interest in the processing of personal data”. “In the United States, we call government interference domination, and we call market place governance freedom. We should recognize that the marketplace does not automatically ensure diversity, but that (as in the example of the United States) the marketplace can also act as a serious constraint to freedom” (Jhally 2006 [61], p. 60).

Joseph Turow (2006 [62], pp. 83f) argues that privacy policies of commercial Internet websites are often complex and written in turgid legalese, but formulated in a polite way. According to Turow, they first assure users that they care about their privacy and then, spread over a long text, introduce provisions under which personal data is passed on to (mostly unnamed) “affiliates”. In his view, the purpose is to cover up the capturing and selling of marketing data. Turow's analysis can be applied to Facebook.

Facebook wants to assure users that it deals responsibly with their data and that users are in full control of privacy controls. Therefore, as an introduction to the privacy issue, it writes: “Facebook is about sharing. Our privacy controls give you the power to decide what and how much you share” ( http://www.facebook.com/privacy/explanation.php, accessed on 01-19-2011). References to advertising are spread throughout Facebook's privacy policy, which is 35,709 characters long (approximately 11 single-spaced A4 print pages). The complexity and length of the policy make it unlikely that users read it in detail. On the one hand, Facebook says that it uses the users' data to provide a “safe experience”; on the other hand, it uses targeted advertising, in which it sells user data to advertisers: “We allow advertisers to choose the characteristics of users who will see their advertisements and we may use any of the non-personally identifiable attributes we have collected (including information you may have decided not to show to other users, such as your birth year or other sensitive personal information or preferences) to select the appropriate audience for those advertisements. For example, we might use your interest in soccer to show you ads for soccer equipment, but we do not tell the soccer equipment company who you are”. Facebook avoids speaking of selling user-generated data, demographic data, and user behavior. Instead, it always uses the phrase “sharing information” with third parties, which is a euphemism for the commodification of user data.

The section about information sharing in Facebook's privacy policy starts with the following paragraph: “Facebook is about sharing information with others—friends and people in your communities—while providing you with privacy settings that you can use to restrict other users from accessing some of your information. We share your information with third parties when we believe the sharing is permitted by you, reasonably necessary to offer our services, or when legally required to do so”. The section speaks only of sharing between users and ignores the sharing of user data with advertisers. As of January 2011, there are no privacy settings on Facebook that allow users to prevent advertisers from accessing data about them (there are only minor privacy settings relating to “social advertising” in Facebook friend communities). The statement that the privacy settings can be used to restrict access to information is only true with respect to other users, not advertisers. The formulation also reveals that Facebook considers targeted advertising “reasonably necessary” and “permitted by the users”. The problem is that the users are not asked whether they find targeted advertising necessary and agree to it.

Facebook says that it does not “share your information with advertisers without your consent”, but targeted advertising is always activated; there is no opt-in and no opt-out option. Users must agree to the privacy terms in order to be able to use Facebook, and thereby they agree that their self-descriptions, uploaded data, and transaction data are sold to advertising clients. Given the fact that Facebook is the second most used web platform in the world, it is unlikely that many users refuse to use Facebook, because doing so would make them miss the social opportunities to stay in touch with their friends and colleagues and to make important new contacts, and may result in their being treated as outsiders in their communities. Facebook coerces users into agreeing to the use of their personal data and collected user behavior data for economic purposes, because if you do not agree to the privacy terms that make targeted advertising possible, you are unable to use the platform. Users are not asked whether they want to have their data sold to advertisers; therefore one cannot speak of user consent. Facebook utilizes the notion of “user consent” in its privacy policy in order to mask the commodification of user data as consensual. It bases its assumption on a control theory of privacy and assumes that users want to sacrifice consumer privacy in order to be able to use Facebook. A form of fetishism is also at play here, because Facebook advances the idea that only advertising-financed social networking is possible in order to “help improve or promote our service”. The idea that social networking sites can be run on a non-profit and non-commercial basis, as is attempted for example by the alternative social networking project Diaspora, is forestalled by presenting advertising on social networking sites as a purely positive mechanism that helps improve the site. Potential problems posed by advertising for users are never mentioned.

Advertisers not only receive data from Facebook for targeting advertising; they also use small data files stored in the user's browser, so-called cookies, to collect data about user behavior. Facebook provides an opt-out option from this cookie setting that is not visible in the normal privacy settings, but deeply hidden in the privacy policy as a link to a webpage where users can deactivate cookie usage by 66 advertising networks (as of January 19, 2011). The fact that this link is hard to find makes it unlikely that users opt out. This reflects the attitude of many commercial websites that privacy protection mechanisms are bad for business. It also shows that Facebook values profit much more highly than user privacy, which explains the attempt to make opting out of cookie use for targeted advertising as cumbersome as possible.

Facebook reduces the privacy issue to visibility of information to other users. In the privacy settings, users can only select which information to show or not to show to other users, whereas they cannot select which data not to show to advertisers. Advertising is not part of the privacy menu and is therefore not considered as a privacy issue by Facebook. The few available advertising settings (opt-out of social advertising, use of pictures and name of users for advertising) are a submenu of the “account settings”. Targeted advertising is automatically activated and cannot be deactivated in the account- and privacy-settings, which shows that Facebook is an advertising- and economic surveillance-machine that wants to store, assess, and sell as much user data as possible in order to maximize its profits.

Its privacy policy also allows Facebook to collect data about users' behavior on other websites and to commodify this data for advertising purposes: “Information from other websites. We may institute programs with advertising partners and other websites in which they share information with us”. These data can be stored and used by Facebook, according to its privacy policy, for 180 days.

To sum up: Facebook's privacy policy is a manifestation of a self-regulatory privacy policy regime that puts capital interests first. It is long and written in complex language in order to cover up the economic surveillance and commodification of user data for targeted advertising that helps Facebook accumulate capital. Facebook euphemistically describes this commodification process as “sharing”. The privacy policy advances the perception that Facebook always seeks users' consent before selling user data to advertisers, which masks three facts: that Facebook does not ask users whether they truly wish to have their personal data, user-generated data, and user behavior data sold for advertising; that users cannot participate in the formulation of privacy policies; and that they are coerced into accepting Facebook's policy in order to use the platform. Facebook hides an opt-out option from cookie-based advertising deep in its privacy policy and only provides a minimum of advertising privacy options in its settings. It reduces the issue of privacy to controlling the visibility of user information to other users and neglects the issue of control of advertising settings as a fundamental privacy issue. Facebook also collects and commodifies data about user behavior on other websites. The analysis shows that on Facebook “privacy is property” (Lyon 1994 [18], p. 189) and that Facebook tries to manipulate the perception of privacy by Facebook users and the public by complexifying the understanding of targeted advertising in its privacy policy, minimizing advertising control settings, making the few available advertising opt-out options difficult to use, and reducing privacy to an individual and interpersonal issue. But what is the larger purpose of Facebook's manipulative privacy policy and advertising settings?

In order to understand the deeper reasons, it is necessary to analyze Facebook's accumulation strategy and Facebook user exploitation.

4.2. Exploitation on Facebook

Alvin Toffler (1980 [63]) introduced the notion of the prosumer in the early 1980s. It means the “progressive blurring of the line that separates producer from consumer” (Toffler 1980 [63], p. 267). Toffler describes the age of prosumption as the arrival of a new form of economic and political democracy, self-determined work, labor autonomy, local production, and autonomous self-production. But he overlooks that prosumption is used for outsourcing work to users and consumers, who work without payment. Thereby corporations reduce their investment costs and labor costs, jobs are destroyed, and consumers who work for free are extremely exploited. They produce surplus value that is appropriated and turned into profit by corporations without paying wages. Notwithstanding Toffler's uncritical optimism, his notion of the “prosumer” describes important changes in media structures and practices and can therefore also be adopted for critical studies.

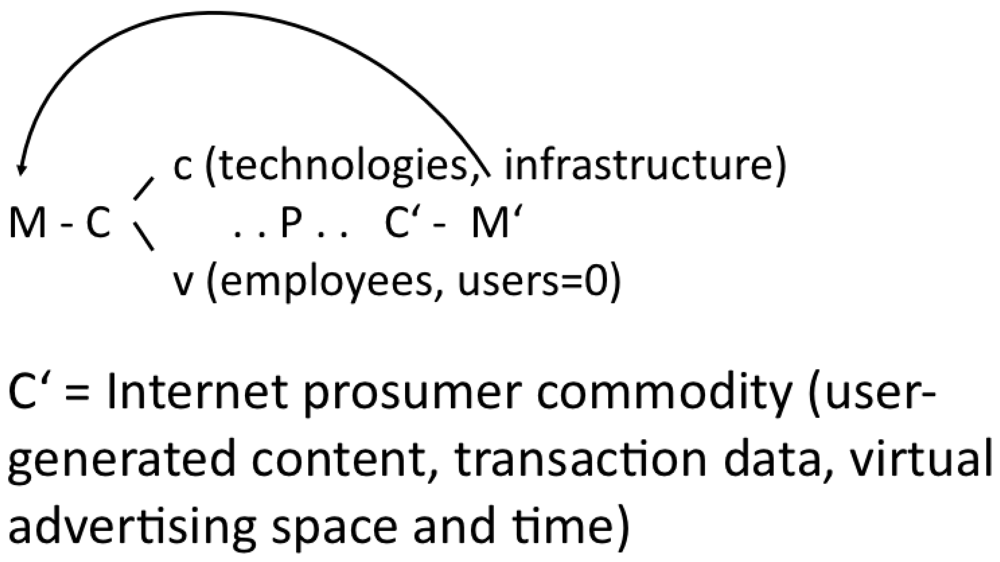

For Marx (1867 [35]), the profit rate is the relation of profit to investment costs: $p = \frac{s}{c + v}$ = surplus value/(constant capital (= fixed costs) + variable capital (= wages)). If Internet users become productive web 2.0 prosumers, then in terms of Marxian class theory this means that they become productive laborers who produce surplus value and are exploited by capital, because for Marx productive labor generates surplus value (Fuchs 2010 [64]). Therefore not merely those who are employed by web 2.0 corporations for programming, updating, and maintaining the software and hardware, performing marketing activities, etc., are exploited surplus value producers, but also the users and prosumers who engage in the production of user-generated content. New media corporations do not (or hardly) pay the users for the production of content. One accumulation strategy is to give them free access to services and platforms, let them produce content, and accumulate a large number of prosumers who are sold as a commodity to third-party advertisers. It is not a product that is sold to the users; rather, the users are sold as a commodity to advertisers. The more users a platform has, the higher the advertising rates can be set. The productive labor time that is exploited by capital involves, on the one hand, the labor time of the paid employees and, on the other hand, all of the time that is spent online by the users. For the first type of knowledge labor, new media corporations pay salaries. The second type of knowledge labor is performed completely for free: there are neither variable nor constant investment costs. The formula for the profit rate therefore needs to be transformed for this accumulation strategy:

$$p = \frac{s}{c + (v_1 + v_2)}$$

$s$: surplus value; $c$: constant capital; $v_1$: wages paid to fixed employees; $v_2$: wages paid to users

The typical situation is that $v_2 \to 0$ and that $v_2$ substitutes $v_1$ ($v_1 \to v_2 = 0$). If the production of content and the time spent online were carried out by paid employees, the variable costs would rise and profits would therefore decrease. This shows that prosumer activity in a capitalist society can be interpreted as the outsourcing of productive labor to users, who work completely for free and help maximize the rate of exploitation ($e = s/v$ = surplus value/variable capital) so that profits can be raised and new media capital may be accumulated. This situation is one of infinite exploitation of the users. Capitalist prosumption is an extreme form of exploitation, in which the prosumers work completely for free.
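The effect of the transformed formula can be checked with a few lines of Python. The figures are invented purely for illustration (they are not Facebook data), and surplus value $s$ is held fixed when $v_2$ is raised, which is a simplification; so is the hypothetical even split of $s$ between employees and users used for the exploitation rates. The sketch only shows that a positive $v_2$ in the denominator lowers $p$, and that $e = s/v$ has no finite value once the relevant wages are zero.

```python
import math


def profit_rate(s, c, v1, v2=0.0):
    """Transformed profit rate p = s / (c + (v1 + v2))."""
    return s / (c + (v1 + v2))


def exploitation_rate(s, v):
    """Rate of exploitation e = s / v; returns infinity when no wages are paid (v == 0)."""
    if v == 0:
        return math.inf
    return s / v


if __name__ == "__main__":
    # Invented illustrative figures in arbitrary money units, not empirical data.
    s, c, v1 = 100.0, 200.0, 100.0

    unpaid_users = profit_rate(s, c, v1, v2=0.0)   # users work for free
    paid_users = profit_rate(s, c, v1, v2=100.0)   # hypothetical: users paid wages, s held fixed
    print(f"p with v2 = 0:   {unpaid_users:.3f}")  # 0.333
    print(f"p with v2 = 100: {paid_users:.3f}")    # 0.250

    # Hypothetical split: 50 units of s attributed to employees, 50 to users.
    print(exploitation_rate(50.0, v1))   # 0.5 (paid employees)
    print(exploitation_rate(50.0, 0.0))  # inf (unpaid users, v2 = 0)
```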

That surplus-value-generating labor is an emergent property of capitalist production means that production and accumulation will break down if this labor is withdrawn: it is an essential part of the capitalist production process. That prosumers perform surplus-generating labor can also be seen by imagining what would happen if they stopped using Facebook: the number of users would drop, advertisers would stop investing because no objects for their advertising messages, and therefore no potential customers for their products, could be found, the profits of the new media corporations would drop, and they would go bankrupt. If such activities were carried out on a large scale, a new economy crisis would arise. This thought experiment shows that users are essential for generating profit in the new media economy. Furthermore, they produce and co-produce parts of the products, and therefore parts of the use value, exchange value, and surplus value that are objectified in these products.

Dallas Smythe (1981/2006 [65]) suggests that in the case of media advertisement models, the audience is sold as a commodity to advertisers: “Because audience power is produced, sold, purchased and consumed, it commands a price and is a commodity. … You audience members contribute your unpaid work time and in exchange you receive the program material and the explicit advertisements” (Smythe 1981/2006 [65], pp. 233, 238). With the rise of user-generated content, free-access social networking platforms, and other free-access platforms that yield profit through online advertising (a development subsumed under categories such as web 2.0, social software, and social networking sites), the web seems to come close to the accumulation strategies employed by capital on traditional mass media like TV or radio. The users who upload photos and images, write wall postings and comments, send mail to their contacts, accumulate friends, or browse other profiles on Facebook constitute an audience commodity that is sold to advertisers. The difference between the audience commodity on traditional mass media and on the Internet is that, in the latter case, the users are also content producers: there is user-generated content, and the users engage in permanent creative activity, communication, community building, and content production. That the users are more active on the Internet than in the reception of TV or radio content is due to the decentralized structure of the Internet, which allows many-to-many communication. Due to the permanent activity of the recipients and their status as prosumers, we can say that in the case of Facebook and the Internet the audience commodity is an Internet prosumer commodity (Fuchs 2010 [64]).

Surveillance on Facebook is surveillance of prosumers, who dynamically and permanently create and share user-generated content, browse profiles and data, interact with others, join, create, and build communities, and co-create information. The corporate web platform operators and their third party advertising clients continuously monitor and record personal data and online activities; they store, merge, and analyze collected data. This allows them to create detailed user profiles and to know about the personal interests and online behaviors of the users. Facebook sells its prosumers as a commodity to advertising clients. Money is exchanged for the access to user data that allows economic surveillance of the users. The exchange value of the Facebook prosumer commodity is the money value that the operators obtain from their clients; its use value is the multitude of personal data and usage behavior that is dominated by the commodity and exchange value form. The surveillance of the prosumers' permanently produced use values, i.e., personal data and interactions, by corporations allows targeted advertising that aims at luring the prosumers into consumption and at manipulating their desires and needs in the interest of corporations and the commodities they offer. Facebook prosumers are first commodified by corporate platform operators, who sell them to advertising clients and, secondly, this results in their intensified exposure to commodity logic. They are double objects of commodification, they are commodities themselves and through this commodification their consciousness becomes, while online, permanently exposed to commodity logic in the form of advertisements. Most online time is advertising time. On Facebook, personal data, interests, interactions with others, information behavior, and also the interactions with other websites are used for targeted advertising. 
So while you are using Facebook, it is not just you interacting with others and browsing profiles, all of these activities are framed by advertisements presented to you. These advertisements come about by permanent surveillance of your online activities. Such advertisements do not necessarily represent consumers' real needs and desires because the ads are based on calculated assumptions, whereas needs are much more complex and spontaneous. The ads mainly reflect marketing decisions and economic power relations—much information about actors who do not have the financial power to buy advertisements, but who may nonetheless be interesting and relate to the same topic, is left out.

Sut Jhally (1987 [55], Chapter 2) argues that Dallas Smythe's notion of the audience commodity is too imprecise. Jhally says that advertisers buy the watching time of the audience as a commodity. His central assumption is that one should see “watching time as the media commodity” (Jhally 1987 [55], p. 73). “When the audience watches commercial television it is working for the media, producing both value and surplus value” (Jhally 1987 [55], p. 83). He says that the networks buy the watching-power of the audience (Jhally 1987 [55], p. 75), but this is a flawed assumption because watchers do not receive money for owning a television or for watching. Their work is unpaid. Jhally argues that the audience watching time is the programme time and that advertising watching time is surplus time (Jhally 1987 [55], p. 76). The audience's wage would be the programming (Jhally 1987 [55], p. 85). Andrejevic (2002 [66], 2004 [67], 2007 [68], 2010 [69]) has applied Jhally's analysis to reality TV, the Internet, social networking sites, and interactive media in general and says that there the accumulation strategy is not based on exploiting the work of watching, but the work of being watched.

The analytical problem that Smythe and Jhally had to cope with in relation to TV, radio, and newspapers is that consuming these media is a passive activity. Therefore they had to find a way to argue that this passive activity also produces surplus value. Jhally's analysis that, in the case of television, watching time is sold as a commodity amounts to saying that the more viewers there are, the higher the advertising profits that are generated. In the case of television this part of his analysis is feasible, but in the world of the Internet the situation is different. Here users are not passive watchers, but active creators of content. Advertisers are not only interested in the time that users spend online, but in the products that are created during this time: user-generated digital content and online behavior. The users' data (information about their uploaded data, their interests, demographic data, and their browsing and interaction behavior) is sold to the advertisers as a commodity. Contrary to the world of television that Jhally analyzes, on the Internet the users' subjective creations are commodified. Therefore, Smythe's original formulation holds here: the audience itself (its subjectivity and the results of its subjective creative activity) is sold as a commodity. But the Internet is an active medium, where consumers of information are producers of information. Therefore, in the case of Facebook and the capitalist parts of web 2.0, it is better to speak of Internet prosumer commodification.

Figure 1 shows the process of capital accumulation on Facebook. Facebook invests money (M) to buy capital: technologies (server space, computers, organizational infrastructure, etc.) and labor power (paid Facebook employees). These are the constant and variable capital outlays. Facebook employees, who create the Facebook online environment that is accessed by Facebook users, produce part of the surplus value. Facebook users make use of the platform to generate content that they upload (user-generated data). The constant and variable capital invested by Facebook that is objectified in the Facebook environment is a prerequisite of their activities. Their products are user-generated data, personal data, and transaction data about their browsing behavior and communication behavior on Facebook. Facebook sells this data commodity to advertising clients at a price that is larger than the invested constant and variable capital. The surplus value contained in this commodity is partly created by the users, partly by the Facebook employees. The difference is that the users are unpaid and therefore infinitely exploited. Once the Internet prosumer commodity that contains the user-generated content, transaction data, and the right to access virtual advertising space and time is sold to advertising clients, the commodity is transformed into money capital, and surplus value is realized in the form of money capital.
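The circuit described above can be summarized with Marx's general formula of capital, M–C…P…C′–M′. The block below is a hedged restatement of the prose, not a reproduction of Figure 1; the labels attached to each term are taken from the paragraph above, and the annotation scheme is an assumption made for readability.

```latex
% Marx's general formula of capital, annotated with the terms of the paragraph above
M \;-\; C\,(c,\ v_1) \;\ldots\; P \;\ldots\; C' \;-\; M'

% c:   technologies (server space, computers, organizational infrastructure)
% v_1: labor power of paid Facebook employees
% P:   production by paid employees and by unpaid users (v_2 = 0)
% C':  Internet prosumer commodity (user-generated content, transaction data,
%      access to virtual advertising space and time), sold to advertising clients
% M':  money realized from the sale, including the surplus value
M' = M + \Delta M, \qquad \Delta M > 0
```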

Daniel Solove (2008 [5], Chapter 5) has worked out a model of different privacy violations that is based on a model of information processing. There is a data subject and a data holder. Privacy violations can occur in relation to the data subject (invasion) or in relation to the data holder (information collection, processing, or dissemination). Based on these four groups of harmful activities, Solove distinguishes 16 forms of privacy violations. Many of these forms can be found when analyzing the economic operations of Facebook: Facebook watches and records usage behavior and personal data uploaded and entered by users (surveillance); it aggregates information about users that is obtained from its own site and other sites (aggregation); based on aggregation, it identifies the consumer interests of users (identification); it is unclear with whom exactly the data is shared for economic purposes (exclusion from knowledge about data use; one can here also speak of the intransparency of data use); and the data are exploited for profit generation and therefore for economic purposes (appropriation, understood as “the use of the data subject's identity to serve another's aims and interests”; Solove 2008 [8], p. 105). The surveillance, aggregation, identification, intransparency, and appropriation of personal data and usage data are essential activities of Facebook that serve economic purposes. They are all part of Facebook's business model, which is based on targeted personalized advertising. Solove defines secondary use as a privacy violation in which data is used for a purpose without the data subject's consent. Commercial social networking sites are primarily used because they allow users to communicate with their friends, colleagues, and others and to establish new relationships (Fuchs 2009 [70], 2010 [71], 2010 [72]). Their privacy policies tend to be complex and long. 
Although users formally agree to the commercial usage of their data, they do not automatically morally agree and express concerns about data appropriation for economic purposes (Fuchs 2009 [70], 2010 [71], 2010 [72]). One can therefore here also speak of a secondary data use in a specific normative sense.

Arendt (1958 [53]) and Habermas (1989 [54]) stress that capitalism has traditionally been based on a separation of the private and the public sphere. Facebook is a typical manifestation of a stage of capitalism, in which the relation of public and private, and labor and play, collapses and in which this collapse is exploited by capital.

“The distinction between the private and the public realms … equals the distinction between things that should be shown and things that should be hidden” (Arendt 1958 [53], p. 72). On Facebook, all private data and user behavior is shown to the corporation, which commodifies both, whereas what exactly happens with users' data and to whom these data are sold for the purpose of targeting advertising is hidden from the users. So the main form of privacy on Facebook is the intransparency of capital's use of personal user data, which is based on the private appropriation of user data by Facebook. The private user dimension of Facebook is that content is generated by individual users. When it is uploaded to Facebook or other social media, parts of it (to a larger or smaller degree, depending on the privacy settings the users choose) become available to many people, whereby the data obtains a more public character. The public availability of data can have both advantages (new social relations, friendships, staying in touch with friends, family, and relatives over distance, etc.) and disadvantages (job-related discrimination, stalking, etc.) for users (Fuchs 2009 [70], 2010 [71], 2010 [72]). The private-public relation has another dimension on Facebook: the privately generated user data and the individual user behavior become commodified. This data is sold to advertising companies so that targeted advertising is presented to users and Facebook accumulates profit that is privately owned by the company. Facebook commodifies private data that is used for public communication in order to accumulate capital that is privately owned. The users are excluded from the ownership of the resulting money capital, i.e., they are exploited by Facebook and are not paid for their creation of surplus value (Fuchs 2010 [64]). Facebook is a huge advertising, capital accumulation, and user exploitation machine. Data surveillance is the means for Facebook's economic ends.

Capitalism connects labor and play in a destructive dialectic. In capitalism, play in the form of enjoyment, sex, and entertainment was traditionally confined to spare time, which was unproductive and temporally separate from labor. Freud (1961 [73]) argued that the structure of drives is characterized by a dialectic of Eros (the drive for life, sexuality, lust) and Thanatos (the drive for death, destruction, aggression). Humans, in Freud's account, strive for the permanent realization of Eros (the pleasure principle), but culture becomes possible only through a temporary negation and suspension of Eros and the transformation of erotic energy into culture and labor. Labor is thus a productive form of desexualization: the repression of sexual drives. Freud speaks in this context of the reality principle or sublimation. The reality principle sublates the pleasure principle; human culture sublates human nature and becomes man's second nature. Marcuse (1955 [74]) connected Freud's theory of drives to Marx's theory of capitalism. He argued that alienated labor, domination, and capital accumulation have turned the reality principle into a repressive reality principle, the performance principle: alienated labor constitutes a surplus-repression of Eros, in which the repression of the pleasure principle exceeds the culturally necessary suppression. Marcuse connected Marx's notions of necessary labor and surplus labor/value to the Freudian drive structure of humans and argued that, on the level of drives, necessary labor corresponds to necessary suppression and surplus labor to surplus-repression. This means that in order to exist, a society needs a certain amount of necessary labor (measured in hours of work) and hence a corresponding amount of suppression of the pleasure principle (also measured in hours). The exploitation of surplus labor (labor that is performed without pay and generates profit) means not only that workers are forced to work for capital without pay to a certain extent, but also that the pleasure principle must be additionally suppressed.

“Behind the reality principle lies the fundamental fact of Ananke or scarcity (Lebensnot), which means that the struggle for existence takes place in a world too poor for the satisfaction of human needs without constant restraint, renunciation, delay. In other words, whatever satisfaction is possible necessitates work, more or less painful arrangements and undertakings for the procurement of the means for satisfying needs. For the duration of work, which occupies practically the entire existence of the mature individual, pleasure is ‘suspended’ and pain prevails” (Marcuse 1955 [74], p. 35). In societies that are based on the principle of domination, the reality principle takes on the form of the performance principle. Domination “is exercised by a particular group or individual in order to sustain and enhance itself in a privileged situation” (Marcuse 1955 [74], p. 36). The performance principle is connected to surplus-repression, a term that describes “the restrictions necessitated by social domination” (Marcuse 1955 [74], p. 35). Domination introduces “additional controls over and above those indispensable for civilized human association” (Marcuse 1955 [74], p. 37).

Marcuse (1955 [74]) argues that the performance principle means that Thanatos governs humans and society and that alienation unleashes aggressive drives within humans (repressive desublimation) that result in an overall violent and aggressive society. Due to the high productivity reached in late-modern society, a historical alternative would be possible: The elimination of the repressive reality principle, the reduction of necessary working time to a minimum and the maximization of free time, an eroticization of society and the body, the shaping of society and humans by Eros, the emergence of libidinous social relations. Such a development would be a historical possibility—but one incompatible with capitalism and patriarchy.

Gilles Deleuze (1995 [75]) has pointed out that in contemporary capitalism, disciplines are transformed in such a way that humans increasingly discipline themselves without direct external violence. He terms this situation the society of (self-)control. It can, for example, be observed in the strategies of participatory management. This method promotes the use of incentives and the integration of play into labor. Its proponents argue that work should be fun and that workers should permanently develop new ideas, realize their creativity, enjoy free time within the factory, etc. The boundaries between work time and spare time, and between labor and play, become fuzzy. Work tends to acquire qualities of play, and entertainment in spare time tends to become labor-like. Working time and spare time become inseparable. At the same time, work-related stress intensifies and property relations remain unchanged. The exploitation of Internet users by Facebook (and other Internet companies) is an aspect of this transformation. It signifies that private Internet usage, which is motivated by play, entertainment, fun, and joy (aspects of Eros), has become subsumed under capital and has become a sphere of the exploitation of labor. It produces surplus value for capital and is exploited by the latter so that Internet corporations accumulate profit. Play and labor are today indistinguishable. Eros has become fully subsumed under the repressive reality principle. Play is largely commodified; there is no longer any free time or space that is not exploited by capital. Play is today productive, surplus-value-generating labor that is exploited by capital. Under contemporary conditions, all human activities, and therefore also all play, tend to become subsumed under and exploited by capital. Play as an expression of Eros is thereby destroyed, and human freedom and human capacities are crippled. On Facebook, play and labor converge into play labor that is exploited for capital accumulation. Facebook therefore stands for the total commodification and exploitation of time: all human time tends to become surplus-value-generating time that is exploited by capital. Table 1 summarizes the application of Marcuse's theory of play, labor, and pleasure to Facebook.

5. Conclusion

Facebook founder and CEO, Mark Zuckerberg, says that Facebook is about the “concept that the world will be better if you share more” (Wired Magazine, August 2010). Zuckerberg has repeatedly said that he does not care about profit, but wants to help people with Facebook's tools and wants to create an open society. Kevin Colleran, Facebook advertising sales executive, argued in a Wired story that “Mark is not motivated by money” (Wired Magazine, August 2010). In a Times story (source: http://business.timesonline.co.uk/tol/business/industry_sectors/technology/article4974197.ece; Times, October 20, 2008), Zuckerberg said: “The goal of the company is to help people to share more in order to make the world more open and to help promote understanding between people. The long-term belief is that if we can succeed in this mission then we will also be able to build a pretty good business and everyone can be financially rewarded. … The Times: Does money motivate you? Zuckerberg: No”.

Zuckerberg's view of Facebook is contradicted by the analysis presented in this paper, which has shown that Facebook's privacy strategy masks the exploitation of users. If Zuckerberg really does not care about profit, why is Facebook not a non-commercial platform, and why does it use targeted advertising? The problems of targeted advertising are that it aims at controlling and manipulating human needs; that users are normally not asked whether they agree to the use of advertising on the Internet, but have to accept advertising if they want to use commercial platforms (lack of democracy); that advertising can increase market concentration; that it is not transparent to most users what kind of information about them is used for advertising purposes; and that users are not paid for the value they create when using commercial web 2.0 platforms and uploading data. Surveillance on Facebook is not only an interpersonal process, in which users view data about other individuals that might benefit or harm the latter; it is primarily economic surveillance, i.e., the collection, storage, assessment, and commodification of personal data, usage behavior, and user-generated data for economic purposes. Facebook and other web 2.0 platforms are large advertising-based capital accumulation machines that achieve their economic aims through economic surveillance.

Facebook's privacy policy is living proof that the platform is primarily about generating profit through advertising. “The world will be better if you share more”? The real question is: better for whom? In economic terms, “sharing” on Facebook primarily means that Facebook “shares” information with advertising clients, and “sharing” is merely a euphemism for selling and commodifying data. Facebook commodifies and trades user data and user behavior data. Facebook does not make the world a better place; it makes the world a more commercialized place, a big shopping mall without an exit. It makes the world a better place only for companies interested in advertising, not for users.

This paper has questioned privacy concepts that protect capitalist interests and keep them, along with socio-economic inequality, secret. This does not mean that the concept of privacy should be abandoned, but rather that it should be challenged by a socialist privacy concept that protects workers and consumers. On Facebook, the “audience” is a worker/consumer, a prosumer. How can socialist privacy protection strategies be structured? The overall goal is to push back the commodification of user data and the exploitation of prosumers by advancing the decommodification of the Internet. Three strategies for achieving this goal are the advancement of opt-in online advertising, civil society surveillance of Internet companies, and the establishment and support of alternative platforms.

Opt-in privacy policies are typically favored by consumer and data protection advocates, whereas companies and marketing associations prefer opt-out and self-regulatory advertising policies in order to maximize profit (Bellman et al. 2004 [76]; Federal Trade Commission 2000 [77]; Gandy 1993 [78], 2003/2007 [79]; Quinn 2006 [22]; Ryker et al. 2002 [80]; Starke-Meyerring and Gurak 2007 [81]). Socialist privacy legislation could require all commercial Internet platforms to use advertising only as an opt-in option, which would strengthen users' collective capacity for self-determination.

Corporate watch platforms monitor the behavior of companies and report corporate misbehavior online. Examples are CorpWatch Reporting (http://www.corpwatch.org), Transnationale Ethical Rating (http://www.transnationale.org), and the Corporate Watch Project (http://www.corporatewatch.org). Such projects are civil society mechanisms that monitor companies in order to make corporate crime and unethical corporate behavior transparent to the public. Similar platforms could be established to document privacy violations by Internet corporations and to explain the platforms' privacy policies and strategies of user exploitation.

A third strategy of socialist privacy politics is to establish and support non-commercial, non-profit Internet platforms. The best-known project is Diaspora, which is trying to develop an open-source alternative to Facebook. Diaspora defines itself as a “privacy-aware, personally controlled, do-it-all, open source social network” (http://www.joindiaspora.com, accessed on November 11th, 2010).

Facebook's understanding of privacy is property-oriented and individualistic. It reflects the dominant capitalist concept of privacy. What is needed is not only a socialist privacy concept and strategy, but also alternatives to Facebook and the corporate Internet.

Table 1. Application of Marcuse's theory of play, labor and pleasure to Facebook.

| Essence of human desires | Reality principle in societies with scarcity | Repressive reality principle in classical capitalism | Repressive reality principle in capitalism in the age of Facebook |
|---|---|---|---|
| Immediate satisfaction | Delayed satisfaction | Delayed satisfaction | Immediate online satisfaction |
| Pleasure | Restraint of pleasure | Leisure time: pleasure; work time: restraint of pleasure, surplus-repression of pleasure | Collapse of leisure time and work time: leisure time becomes work time and work time leisure time; all time becomes exploited; online leisure time becomes surplus-value-generating; wage labor time = surplus-repression of pleasure; play labor time = surplus-value-generating pleasure time |
| Joy (play) | Toil (work) | Leisure time: joy (play); work time: toil (work) | Play labor: joy and play as toil and work, toil and work as joy and play |
| Receptiveness | Productiveness | Leisure time: receptiveness; work time: productiveness | Collapse of the distinction between leisure time/work time and receptiveness/productiveness; total commodification of human time |
| Absence of repression of pleasure | Repression of pleasure | Leisure time: absence of repression of pleasure; work time: repression of pleasure | Play labor time: surplus value generation appears to be pleasure-like, but serves the logic of repression (the lack of ownership of capital) |

Acknowledgments

The research presented in this paper was conducted in the project “Social Networking Sites in the Surveillance Society”, funded by the Austrian Science Fund (FWF): project number P 22445-G17. Project co-ordination: Christian Fuchs.

References

- Dussel, E. The Discovery of the Category of Surplus Value. In Karl Marx's Grundrisse: Foundations of the Critique of the Political Economy 150 Years Later; Musto, M., Ed.; Routledge: New York, NY, USA, 2008; pp. 67–78.

- Negri, A. Marx beyond Marx; Pluto: London, UK, 1991.