Deep-Learning-Based Classification for DTM Extraction from ALS Point Cloud

Abstract

1. Introduction

- (1) Slope-based methods. The core idea of these methods is that two adjacent points are likely to belong to different categories if the height changes abruptly between them [2,3]. Slope-based methods are fast and easy to implement. Their shortcoming is that different terrains require different thresholds.

- (2) Mathematical morphology-based methods. These methods apply a series of 3D morphological operations to the ALS points. Their results rely heavily on the filter window size. Small windows can only filter small non-ground objects, such as telegraph poles or small cars. By contrast, large windows often filter out several ground points as well and over-smooth the filtered result. To overcome this problem, Zhang et al. [4] proposed progressive morphological filters, which vary the filter window size so that large non-ground objects are removed while ground points are preserved.

- (3) Progressive triangular irregular network (TIN)-based method. Axelsson [5] proposed the iterative TIN approach, which has been adopted in some commercial software. The method selects the coarse lowest points as ground points and builds a triangulated surface from them. It then adds new points to the triangulated surface subject to constraints on slope and distance. However, the method is easily affected by negative outliers, which pull the triangulated surface downward.

- (4)
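The behaviour described for the slope-based and morphology-based families can be illustrated on a toy 1D height profile. The following is a minimal sketch under stated assumptions, not the implementation of the cited methods: the function names (`slope_filter`, `opening_filter`), the thresholds, the neighbourhood radius, and the profiles are all hypothetical and chosen only for illustration.

```python
def slope_filter(profile, spacing=1.0, max_slope=0.5, radius=3):
    """Simplified slope-based rule on a 1D profile (illustrative only).

    A point is labelled non-ground (False) when it rises above any neighbour
    within `radius` samples by more than max_slope * horizontal distance.
    """
    labels = []
    for i, z in enumerate(profile):
        lo, hi = max(0, i - radius), min(len(profile), i + radius + 1)
        ground = all(
            z - profile[j] <= max_slope * abs(i - j) * spacing
            for j in range(lo, hi) if j != i
        )
        labels.append(ground)
    return labels


def erode(profile, half_window):
    """Grey-scale erosion: minimum height inside a sliding window."""
    return [min(profile[max(0, i - half_window): i + half_window + 1])
            for i in range(len(profile))]


def dilate(profile, half_window):
    """Grey-scale dilation: maximum height inside a sliding window."""
    return [max(profile[max(0, i - half_window): i + half_window + 1])
            for i in range(len(profile))]


def opening_filter(profile, half_window, max_height_diff=0.5):
    """Morphological opening (erosion, then dilation) with a fixed window.

    A point is labelled ground (True) when it lies at most max_height_diff
    above the opened surface.
    """
    opened = dilate(erode(profile, half_window), half_window)
    return [z - o <= max_height_diff for z, o in zip(profile, opened)]


# Flat terrain with a 1-sample "pole" (height 5) and a 5-sample "building" (height 8).
flat = [0, 0, 5, 0, 0, 0, 8, 8, 8, 8, 8, 0, 0, 0]
small = opening_filter(flat, half_window=1)   # 3-sample window
large = opening_filter(flat, half_window=3)   # 7-sample window

# Uniformly sloping terrain without any objects.
steep = [0, 1, 2, 3]
strict = slope_filter(steep, max_slope=0.5)
relaxed = slope_filter(steep, max_slope=1.5)
```

On this profile the 3-sample window flags only the pole, whereas the 7-sample window also flags the building. On the sloping profile, the slope rule rejects genuine terrain with the stricter threshold and accepts it with the relaxed one, which is the threshold dependency noted in item (1).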

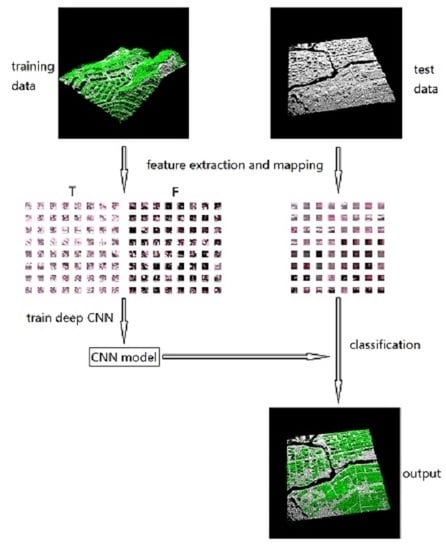

2. Methods

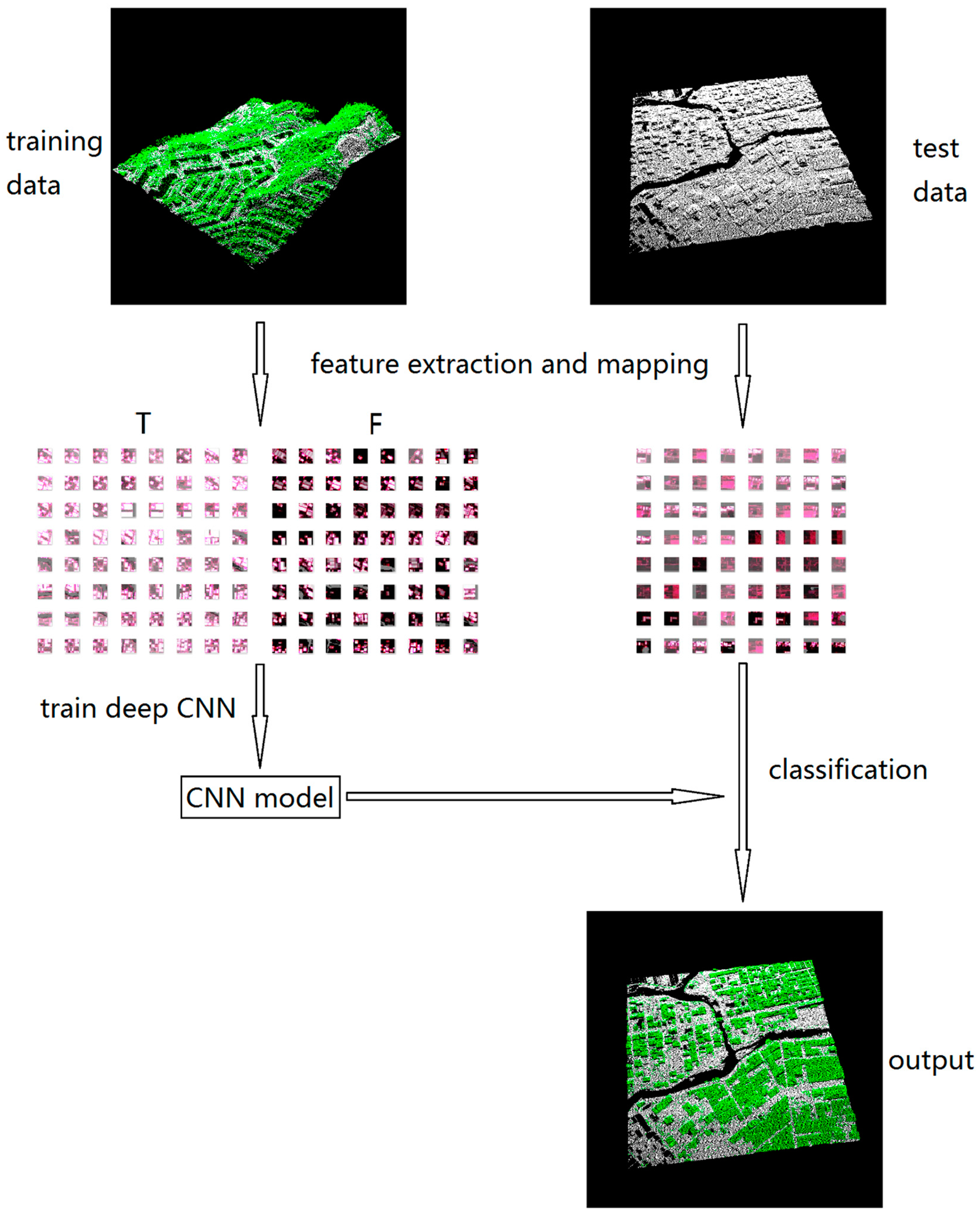

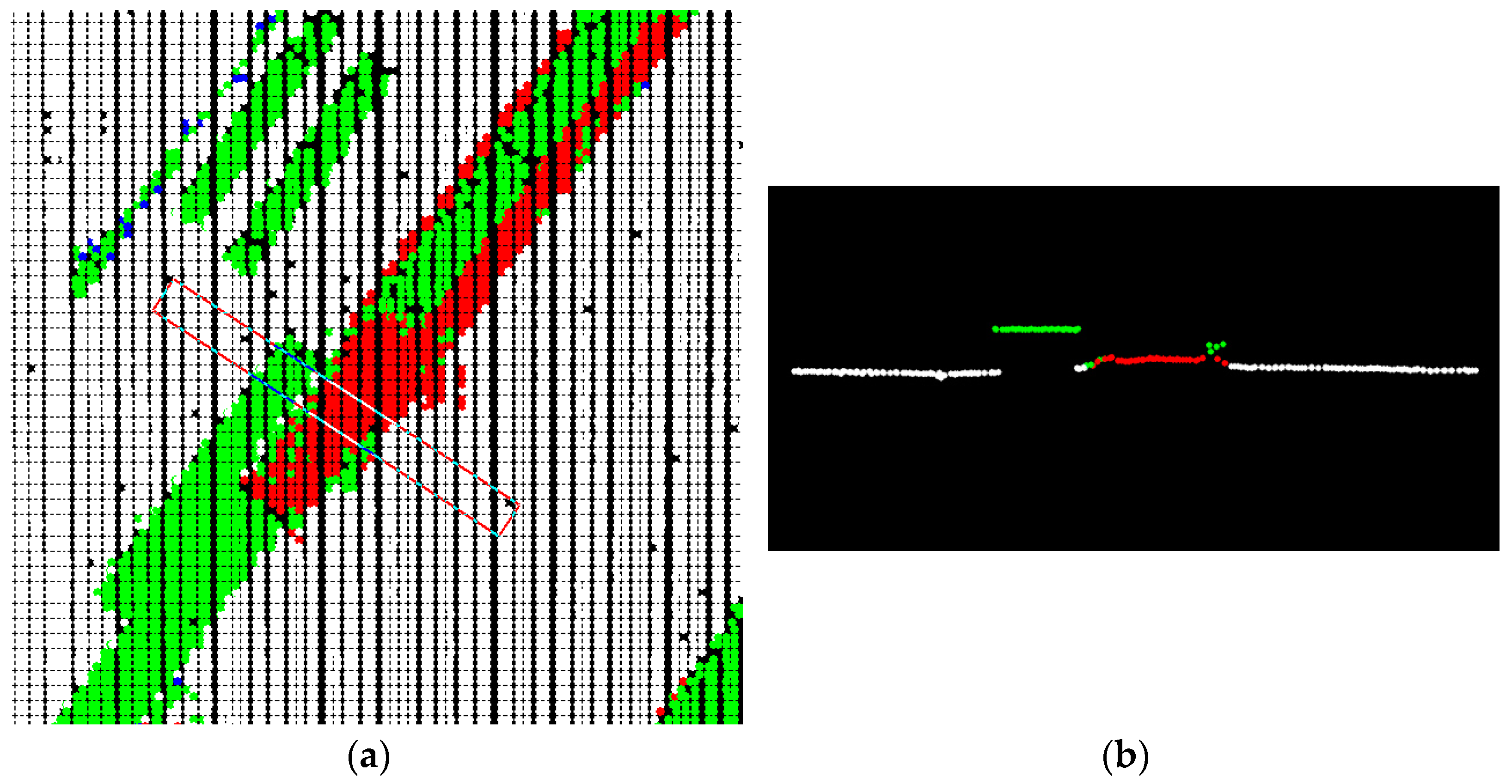

2.1. Information Extraction and Image Generation

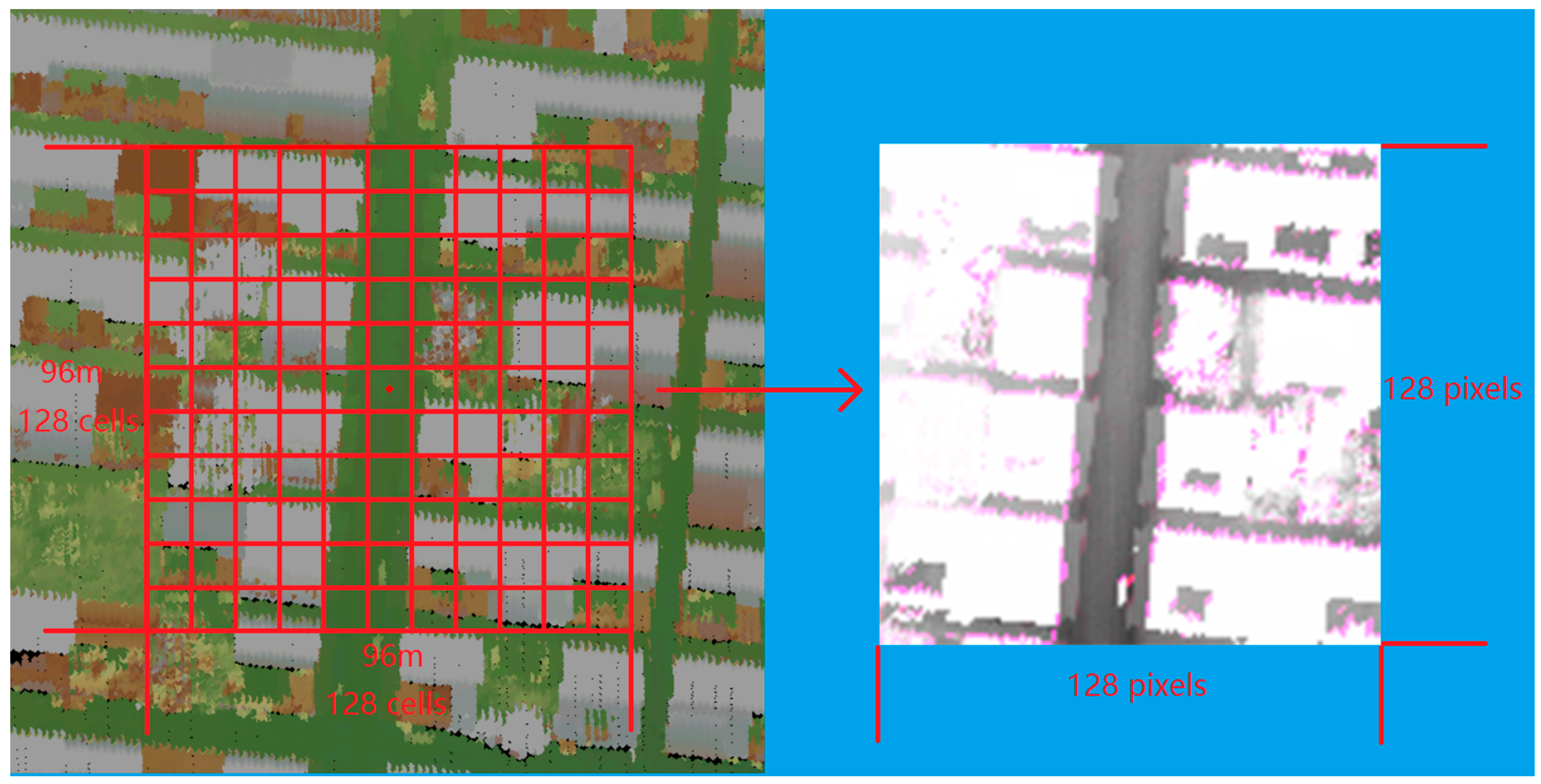

2.2. Convolutional Neural Network

- (1) Input layer. The input layer contains the input data for the network. The input of the deep CNN model is a three-channel (red, green, blue) 128 × 128 image generated from the ALS points.

- (2) Convolution layer. The convolution layer is the core building block of a convolutional network and performs most of the computational heavy lifting. Convolutional layers convolve the input image or feature maps with learnable linear filters, which have a small receptive field (local connections) but extend through the full depth of the input volume. The output feature maps represent the responses of each filter on the input image or feature maps. Each filter is replicated across the entire visual field, and the replicated units share the same weights and bias (shared weights), which allows features to be detected regardless of their position in the visual field. As a result, the network learns filters that activate when they see a specific type of feature at some spatial position in the input. In our model, all of the convolution layers use the same 3 × 3 convolution kernel size.

- (3) Batch normalization layer. Because a deep CNN often has a large number of parameters, care must be taken to prevent overfitting, particularly when the number of training samples is relatively small. Batch normalization [26] normalizes the data in each mini-batch, rather than merely performing normalization once at the beginning, using the following equation:

  $$\hat{x}^{(k)} = \frac{x^{(k)} - \mathrm{E}[x^{(k)}]}{\sqrt{\mathrm{Var}[x^{(k)}]}} \qquad (1)$$

  where $x^{(k)}$ is the $k$-th dimension of the layer input, and the expectation and variance are computed over the mini-batch.

- (4) Rectified linear units layer. Activation layers are neuron layers that apply nonlinear activations to input neurons. They increase the nonlinear properties of the decision function and of the overall network without affecting the receptive fields of the convolution layer. The rectified linear unit (ReLU), proposed by Nair and Hinton in 2010 [27], is the most popular activation function. ReLU can be trained faster than typical smoother nonlinear functions and allows the training of a deep supervised network without unsupervised pretraining. The ReLU function can be written as $f(x) = \max(0, x)$.

- (5) Pooling layer. Pooling layers are nonlinear downsampling layers that take the maximum or average value in each sub-region of the input image or feature maps. The intuition is that once a feature has been found, its exact location is not as important as its rough location relative to other features. Pooling layers increase robustness to translation and reduce the number of network parameters.

- (6) Fully-connected layer. After several convolutional and max pooling layers, high-level reasoning in the neural network is performed via fully-connected layers. A fully-connected layer connects every neuron in the previous layer to each of its own neurons. Fully-connected layers no longer retain spatial location, which makes them suitable for classification rather than localization or semantic segmentation.
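The layer types listed above can be sketched in plain Python on a single-channel toy input. This is a minimal illustration under stated assumptions, not the network used in this work: the function names, the 6 × 6 input, the hand-set edge kernel, and the fully-connected weights are hypothetical, the batch normalization omits the learnable scale and shift of [26], and a real model would use a deep learning framework with many channels and learned weights.

```python
import math


def conv3x3(image, kernel, bias=0.0):
    """Slide one shared 3x3 kernel over a 2D list (no padding, stride 1)."""
    h, w = len(image), len(image[0])
    return [[bias + sum(image[r + i][c + j] * kernel[i][j]
                        for i in range(3) for j in range(3))
             for c in range(w - 2)]
            for r in range(h - 2)]


def batch_norm(batch, eps=1e-5):
    """Normalize one channel of a mini-batch of feature maps to zero mean and
    unit variance (learnable scale and shift are omitted in this sketch)."""
    flat = [v for fmap in batch for row in fmap for v in row]
    mean = sum(flat) / len(flat)
    var = sum((v - mean) ** 2 for v in flat) / len(flat)
    scale = 1.0 / math.sqrt(var + eps)
    return [[[(v - mean) * scale for v in row] for row in fmap] for fmap in batch]


def relu(fmap):
    """Element-wise f(x) = max(0, x)."""
    return [[max(0.0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Keep the maximum of each non-overlapping 2x2 block."""
    h, w = len(fmap), len(fmap[0])
    return [[max(fmap[r][c], fmap[r][c + 1], fmap[r + 1][c], fmap[r + 1][c + 1])
             for c in range(0, w - 1, 2)]
            for r in range(0, h - 1, 2)]


def fully_connected(inputs, weights, biases):
    """Connect every input value to every output neuron."""
    return [sum(x * w for x, w in zip(inputs, row)) + b
            for row, b in zip(weights, biases)]


# Toy 6x6 input with a vertical edge between columns 2 and 3.
image = [[0, 0, 0, 1, 1, 1] for _ in range(6)]
edge_kernel = [[-1, 0, 1]] * 3                      # hand-set, not learned

features = conv3x3(image, edge_kernel)              # 4x4 response map
pooled = max_pool2x2(relu(features))                # 2x2 after pooling
flat = [v for row in pooled for v in row]
scores = fully_connected(flat, [[0.25] * 4, [-0.25] * 4], [0.0, 0.0])

# Batch normalization over a mini-batch of two feature maps.
normalized = batch_norm([features, relu(conv3x3(image, [[1, 0, -1]] * 3))])
```

Because the same kernel is applied at every position, the edge produces the same response in every row of `features`, and the pooled map stays unchanged if the edge shifts by one column within a pooling block.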

3. Experimental Analysis

3.1. Experimental Data

3.2. Training

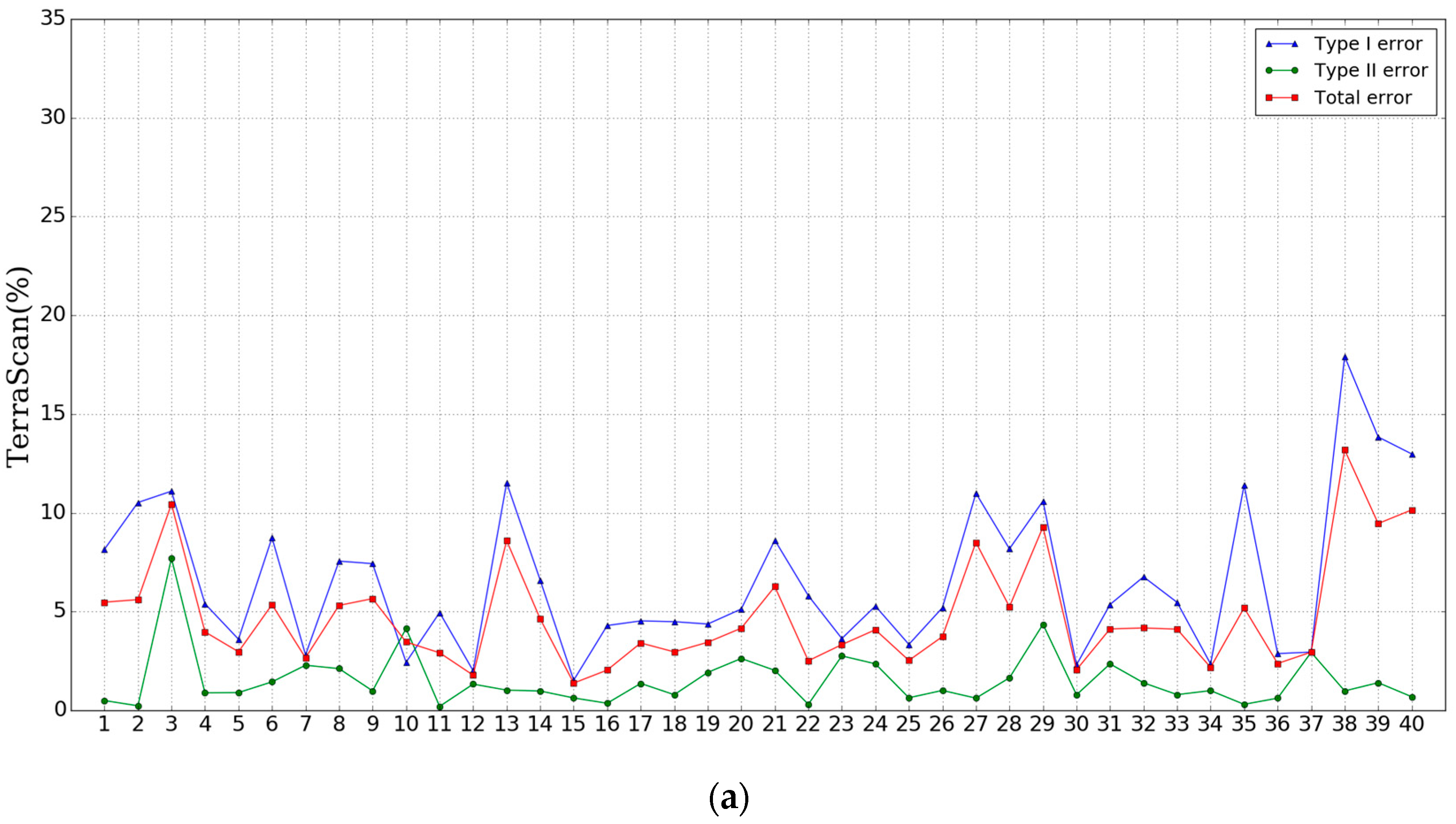

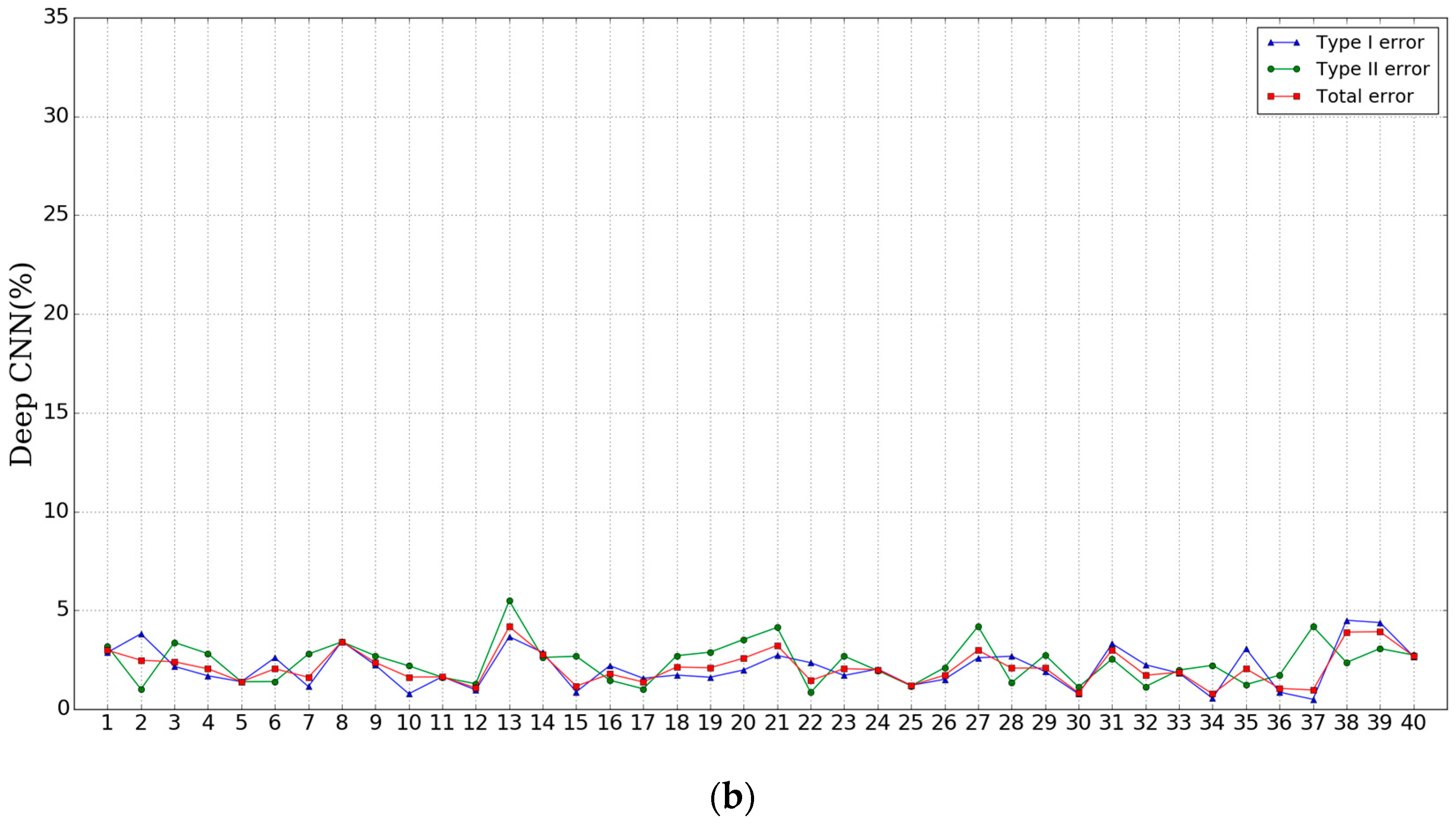

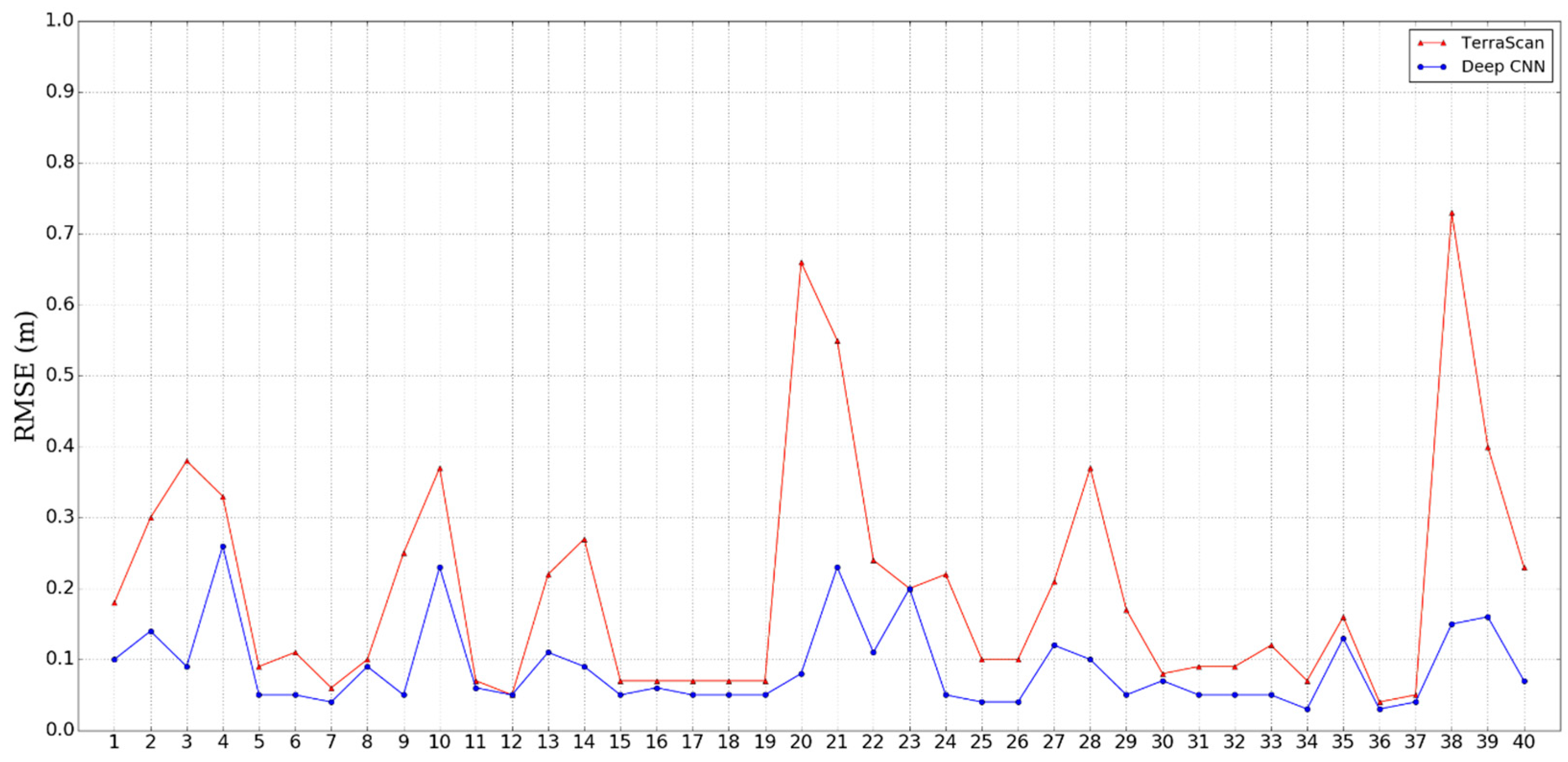

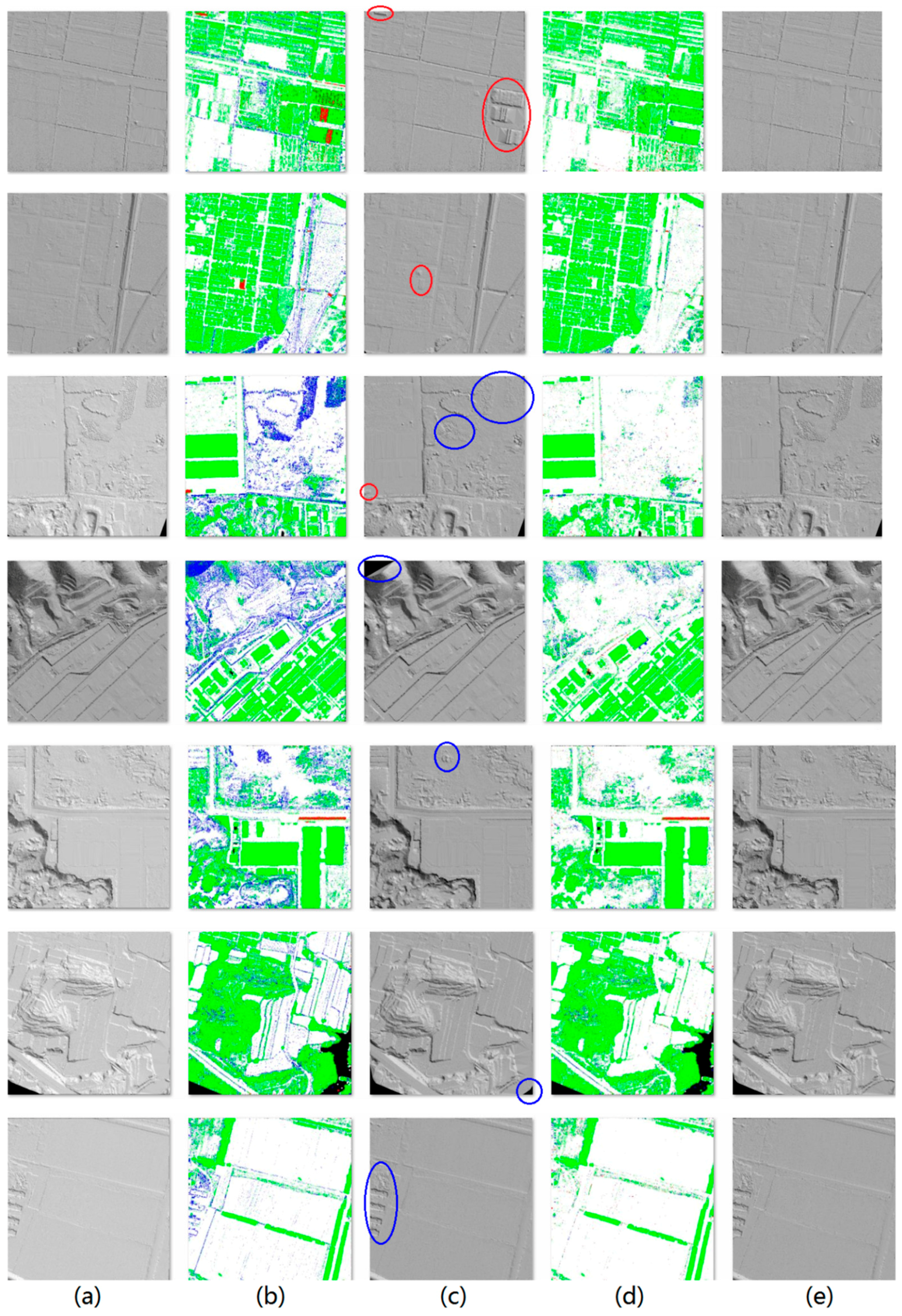

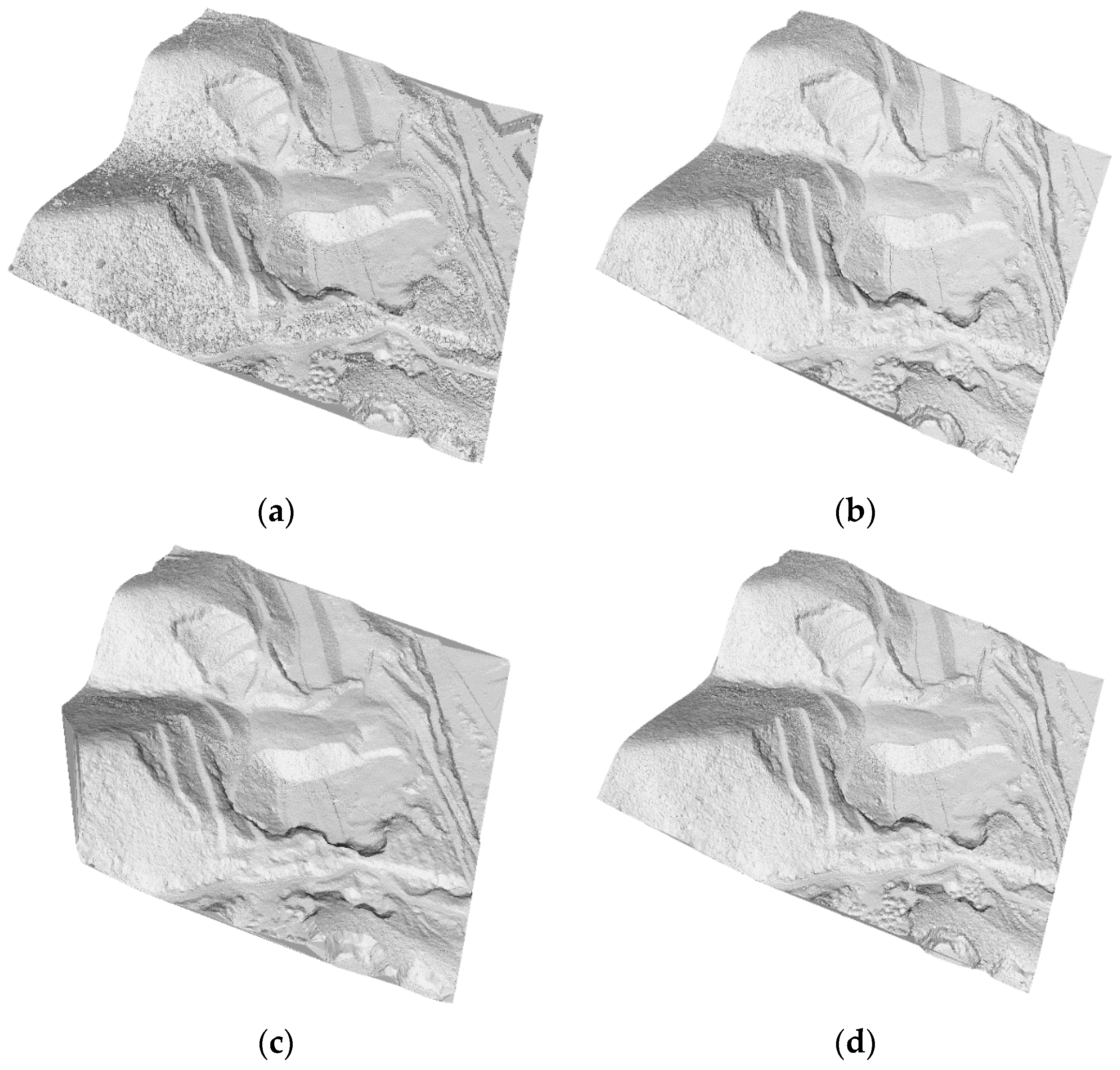

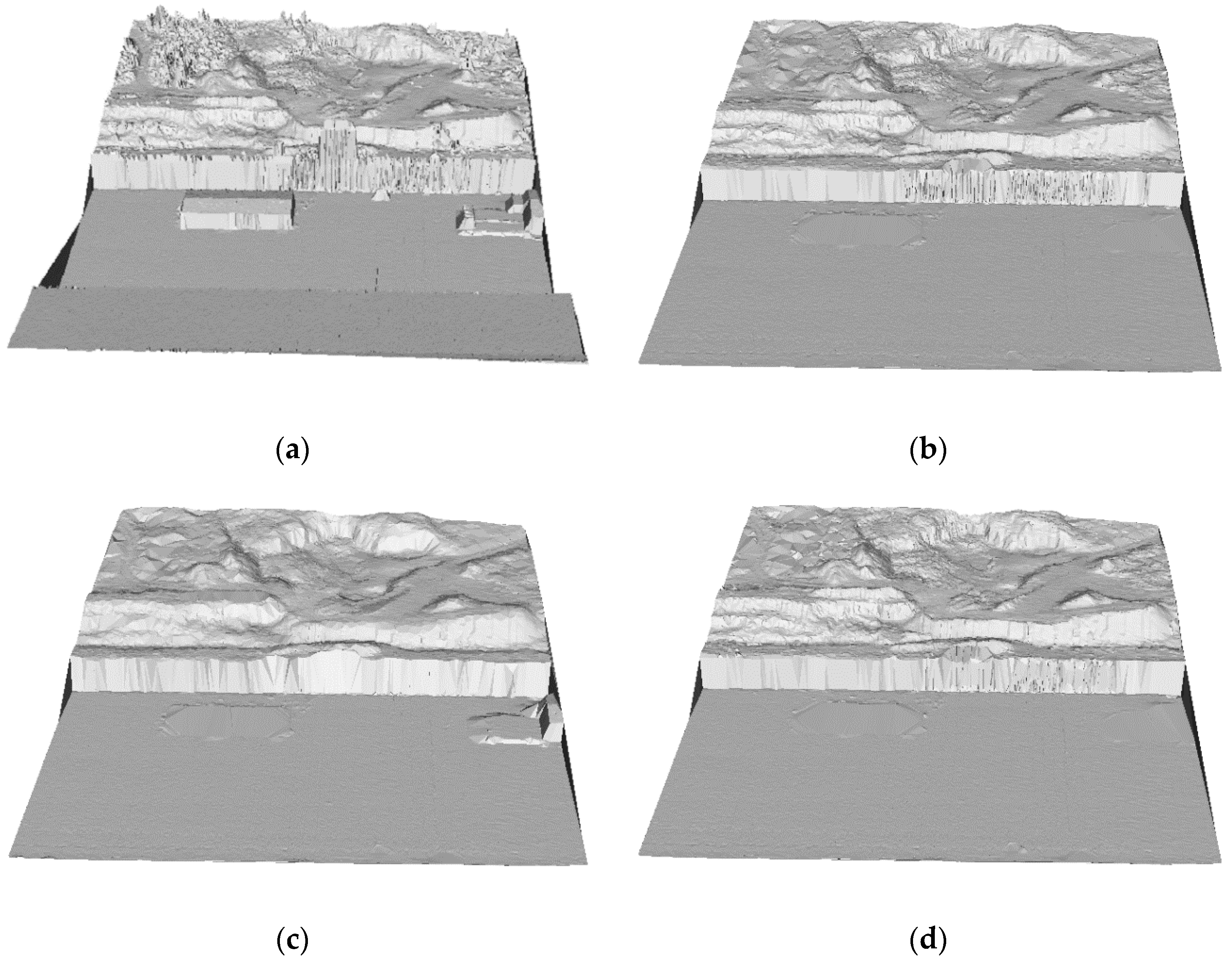

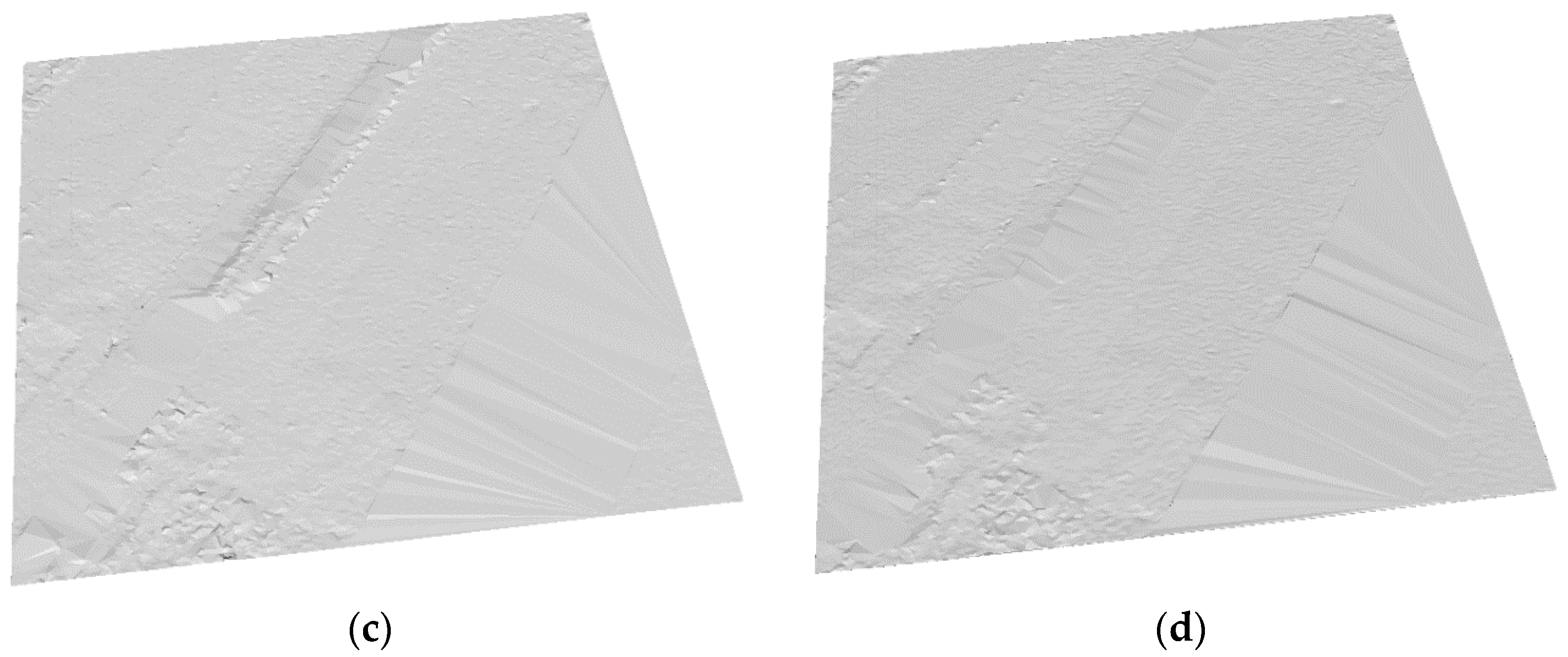

3.3. Results and Comparison with Other Filtering Algorithms

4. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Mongus, D.; Žalik, B. Parameter-free ground filtering of LiDAR data for automatic DTM generation. ISPRS J. Photogramm. Remote Sens. 2012, 67, 1–12.

- Vosselman, G. Slope based filtering of laser altimetry data. Int. Arch. Photogram. Remote Sens. 2000, 33, 935–942.

- Brzank, A.; Heipke, C. Classification of Lidar Data into water and land points in coastal areas. Int. Arch. Photogram. Remote Sens. Spat. Inform. Sci. 2006, 36, 197–202.

- Zhang, K.; Chen, S.-C.; Whitman, D.; Shyu, M.-L.; Yan, J.; Zhang, C. A progressive morphological filter for removing non-ground measurements from airborne LiDAR data. IEEE Trans. Geosci. Remote Sens. 2003, 41, 872–882.

- Axelsson, P. DEM generation from laser scanner data using adaptive TIN models. Proc. Int. Arch. Photogramm. Remote Sens. 2000, 33, 110–117.

- Kraus, K.; Pfeifer, N. Determination of terrain models in wooded areas with airborne laser scanner data. ISPRS J. Photogramm. Remote Sens. 1998, 53, 193–203.

- Kamiński, W. M-Estimation in the ALS cloud point filtration used for DTM creation. In GIS FOR GEOSCIENTISTS; Hrvatski Informatički Zbor-GIS Forum: Zagreb, Croatia; University of Silesia: Katowice, Poland, 2012; p. 50.

- Błaszczak-Bąk, W.; Janowski, A.; Kamiński, W.; Rapiński, J. Application of the Msplit method for filtering airborne laser scanning datasets to estimate digital terrain models. Int. J. Remote Sens. 2015, 36, 2421–2437.

- Błaszczak-Bąk, W.; Janowski, A.; Kamiński, W.; Rapiński, J. ALS Data Filtration with Fuzzy Logic. J. Indian Soc. Remote Sens. 2011, 39, 591–597.

- Hu, X.; Ye, L.; Pang, S.; Shan, J. Semi-Global Filtering of Airborne LiDAR Data for Fast Extraction of Digital Terrain Models. Remote Sens. 2015, 7, 10996–11015.

- Kubik, T.; Paluszynski, W.; Netzel, P. Classification of Raster Images Using Neural Networks and Statistical Classification Methods; University of Wroclaw: Wroclaw, Poland, 2008.

- Meng, L. Application of neural network in cartographic pattern recognition. In Proceedings of the 16th International Cartographic Conference, Cologne, Germany, 3–9 May 1993; Volume 1, pp. 192–202.

- LeCun, Y.; Boser, B.; Denker, J.S.; Henderson, D.; Howard, R.E.; Hubbard, W.; Jackel, L.D. Backpropagation applied to handwritten zip code recognition. Neural Comput. 1989, 1, 541–551.

- Girshick, R.; Donahue, J.; Darrell, T.; Malik, J. Rich feature hierarchies for accurate object detection and semantic segmentation. In Proceedings of the 2014 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Columbus, OH, USA, 24–27 June 2014; pp. 580–587.

- Tompson, J.; Goroshin, R.; Jain, A.; LeCun, Y.; Bregler, C. Efficient object localization using convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 648–656.

- Taigman, Y.; Yang, M.; Ranzato, M.; Wolf, L. Deepface: Closing the gap to human-level performance in face verification. In Proceedings of the Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 24–27 June 2014.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. arXiv Preprint, 2015; arXiv:1512.03385.

- Sermanet, P.; Eigen, D.; Zhang, X.; Mathieu, M.; Fergus, R.; LeCun, Y. Overfeat: Integrated recognition, localization and detection using convolutional networks. In Proceedings of the International Conference on Learning Representations, Banff, AB, Canada, 14–16 April 2014.

- Waibel, A.; Hanazawa, T.; Hinton, G.E.; Shikano, K.; Lang, K. Phoneme recognition using time-delay neural networks. IEEE Trans. Acoust. Speech Signal Process. 1989, 37, 328–339.

- Collobert, R.; Weston, J.; Bottou, L.; Karlen, M.; Kavukcuoglu, K.; Kuksa, P. Natural language processing (almost) from scratch. J. Mach. Learn. Res. 2011, 12, 2493–2537.

- Krizhevsky, A.; Sutskever, I.; Hinton, G. ImageNet classification with deep convolutional neural networks. In Proceedings of the Advances in Neural Information Processing Systems 2012, Lake Tahoe, NV, USA, 3–8 December 2012.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 24–27 June 2014.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Proceedings of the International Conference on Learning Representations, Banff, Canada, 16 April 2014.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the International Conference on Machine Learning (ICML), Lille, France, 6–11 July 2015.

- Nair, V.; Hinton, G.E. Rectified linear units improve restricted boltzmann machines. In Proceedings of the 27th International Conference on Machine Learning, Haifa, Israel, 21–24 June 2010.

- WG III/2: Point Cloud Processing. Available online: http://www.commission3.isprs.org/wg2/ (accessed on 1 September 2016).

- Mongus, D.; Žalik, B. Computationally efficient method for the generation of a digital terrain model from airborne LiDAR data using connected operators. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2014, 7, 340–351.

| Method | Type I Error (%) | Type II Error (%) | Total Error (%) |
|---|---|---|---|
| TerraScan | 11.05 | 4.52 | 7.61 |
| Mongus 2012 | 3.49 | 9.39 | 5.62 |
| SGF | 5.25 | 4.46 | 4.85 |
| Axelsson | 5.55 | 7.46 | 4.82 |
| Mongus 2014 | 2.68 | 12.79 | 4.41 |
| Deep CNN | 0.67 | 2.262 | 1.22 |

| Error | TerraScan | Deep CNN |
|---|---|---|
| type I | 10.5% | 3.6% |
| type II | 1.4% | 2.2% |
| total | 6.3% | 2.9% |
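The three error measures in the tables above can be computed from per-point labels. The sketch below assumes the definitions commonly used in ground-filtering evaluation, which are not spelled out in this text: type I error is the share of ground points rejected as non-ground, type II error is the share of non-ground points accepted as ground, and total error is the share of all points that are misclassified. The function name `filtering_errors` and the toy labels are hypothetical.

```python
def filtering_errors(true_ground, pred_ground):
    """Return (type I, type II, total) error in percent.

    Both arguments are equal-length sequences of booleans, where True means
    "ground". The definitions are an assumption stated in the lead-in.
    """
    if len(true_ground) != len(pred_ground):
        raise ValueError("label sequences must have the same length")

    n_ground = sum(1 for t in true_ground if t)
    n_objects = len(true_ground) - n_ground
    if n_ground == 0 or n_objects == 0:
        raise ValueError("need at least one ground and one non-ground point")

    # Ground points rejected as non-ground (type I).
    rejected_ground = sum(1 for t, p in zip(true_ground, pred_ground) if t and not p)
    # Non-ground points accepted as ground (type II).
    accepted_objects = sum(1 for t, p in zip(true_ground, pred_ground) if p and not t)

    type_1 = 100.0 * rejected_ground / n_ground
    type_2 = 100.0 * accepted_objects / n_objects
    total = 100.0 * (rejected_ground + accepted_objects) / len(true_ground)
    return type_1, type_2, total


# Toy example: 4 ground points and 6 non-ground points, one error of each kind.
truth = [True, True, True, True, False, False, False, False, False, False]
prediction = [True, True, True, False, True, False, False, False, False, False]
type_1, type_2, total = filtering_errors(truth, prediction)
```

Under these definitions the total error is a weighted average of the two class-wise errors, with weights given by the proportions of ground and non-ground points in the reference data.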

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Hu, X.; Yuan, Y. Deep-Learning-Based Classification for DTM Extraction from ALS Point Cloud. Remote Sens. 2016, 8, 730. https://doi.org/10.3390/rs8090730
