1. Introduction

Autonomous-driving technologies have attracted considerable academic and industrial interests in recent years. Navigating an unmanned ground vehicle (UGV) driving through urban areas is a difficult task. The UGV must know exactly where it is in dynamic traffic scenarios. The accuracy of the location information directly impacts the safety and control stability of the UGV.

The localization methods of autonomous driving can be divided into satellite signal-based solutions and map matching solutions. In satellite signal-based solutions, GPS is the most common positioning way. However, because of the multipath effect and the occlusion of satellite signals caused by buildings, trees or clouds, the ordinary GPS equipment with normal accuracy cannot meet the requirement of the positioning system. Therefore, the differential GPS (DGPS) or GPS-inertial navigation system (INS) positioning system comes into use. In the 2007 Defense Advanced Research Projects Agency (DARPA) Urban Challenge, most of the unmanned vehicles were equipped with costly DGPS-based navigation systems [

1,

2]. The expensive equipment will increase the cost of the autonomous driving vehicle. When in a big city, DGPS positioning signals cannot cover everywhere. Moreover, when there are high buildings, big trees, bridges or tunnels, it is impossible for the positioning system to obtain even ordinary GPS satellite signals.

For the map matching-based method, an unmanned vehicle gets its position by comparing the environment map with real-time perception data from mounted sensors, such as laser scanners or cameras [

3–

7]. If the map is large and accurate enough, map matching-based approaches show better stability than the GPS-based method in the urban scenario. Such an environment map can be obtained by various approaches [

8–

10]. However, the tremendous size and the huge cost of a detailed map of a whole city is a big problem. Storage and computational complexity, due to a massive amount of map data, will affect the efficiency of positioning system. In early 2013, the Google self-driving car was reported to be commercially available to customers in five to seven years [

11]. However, how to obtain the accurate position of the self-driving car is still a challenge. In this kind of map matching approach, the determination of the autonomous vehicle relies on very detailed maps of the roads and terrain. Before sending the self-driving car on a road test, a human driver must drive along the route to gather data about the environment. Then, the autonomous vehicle can compare the data acquired from the perception sensors to the previously recorded map data [

12]. Therefore, a very detailed, accurate and high-cost digital map along the driving routes is essential. The high cost refers to not only the map building, but the calculation load of the matching process during autonomous driving.

From the experiences of participation in the Intelligent Vehicle Future Challenge competitions of China since 2009 [

13], we found that a UGV could take advantage of a variety of positioning methods, rather than a particular one in the entire self-driving journey in urban areas. In most cases, the UGV drives on the structured roads with lanes and does not need precise position data. Lanes, road curbs and global path points ahead provide the direction for the UGV that drives itself like water flows in a pipe. Other environment perception technologies, such as traffic sign detection, can offer enough information to constrain the vehicle’s movement. However, when the vehicle enters unstructured areas, such as intersections and other areas without guide lanes, accurate localization information becomes essential to its self-driving. If the detailed map of these unstructured areas is known, the vehicle could locate itself using the map matching method of positioning to follow a pre-planned path. Therefore, for urban self-driving, we only need to build environment maps for unstructured areas and regard structured roads with lanes as channels between the unstructured maps. This approach makes the urban map become a hybrid map integrated with the local environment map and topological map. Compared with an ordinary digital map, the hybrid map is able to provide enough information without substantially increasing data quantity. It will make the urban driving task for a UGV not rely on costly positioning equipment for navigation.

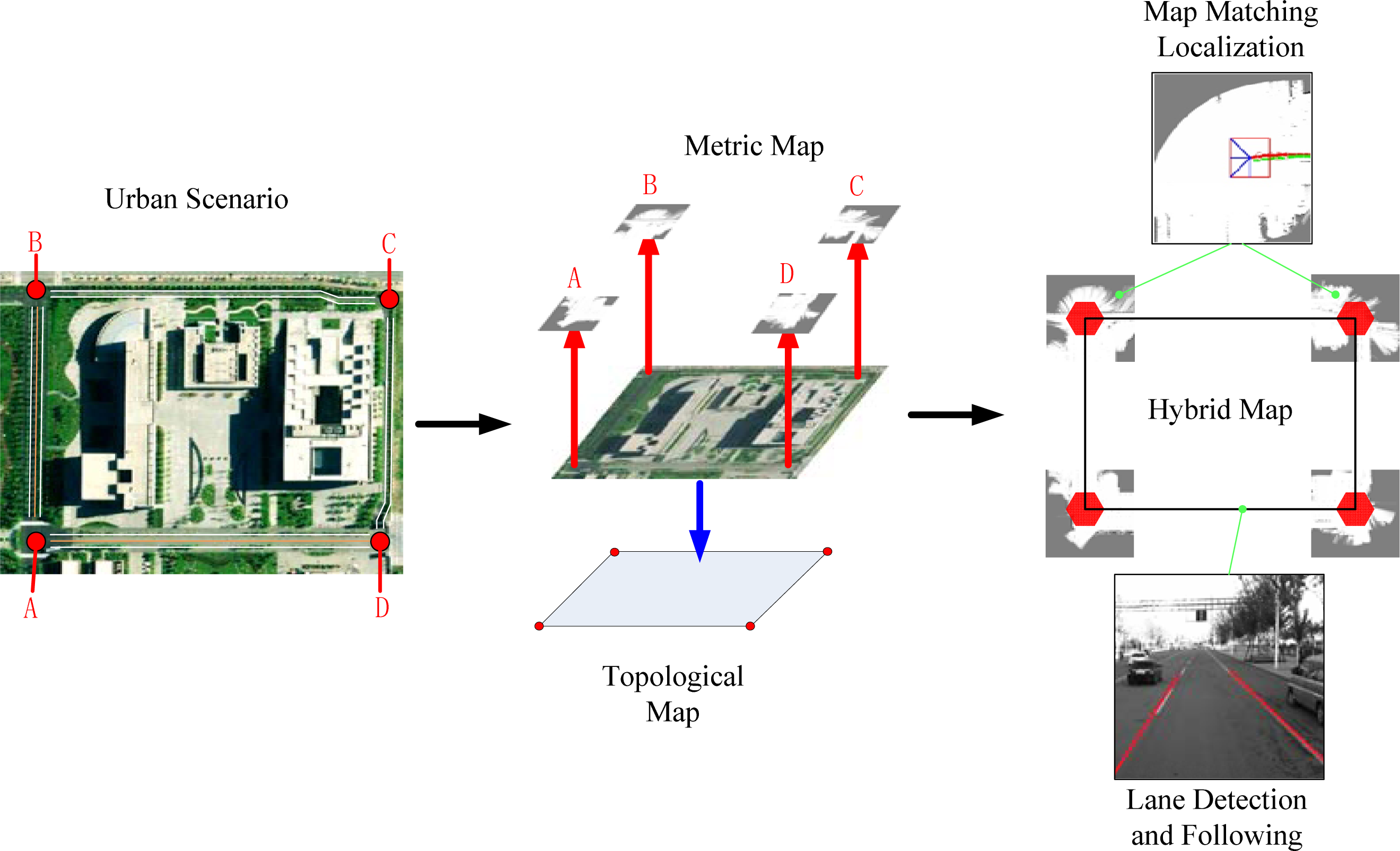

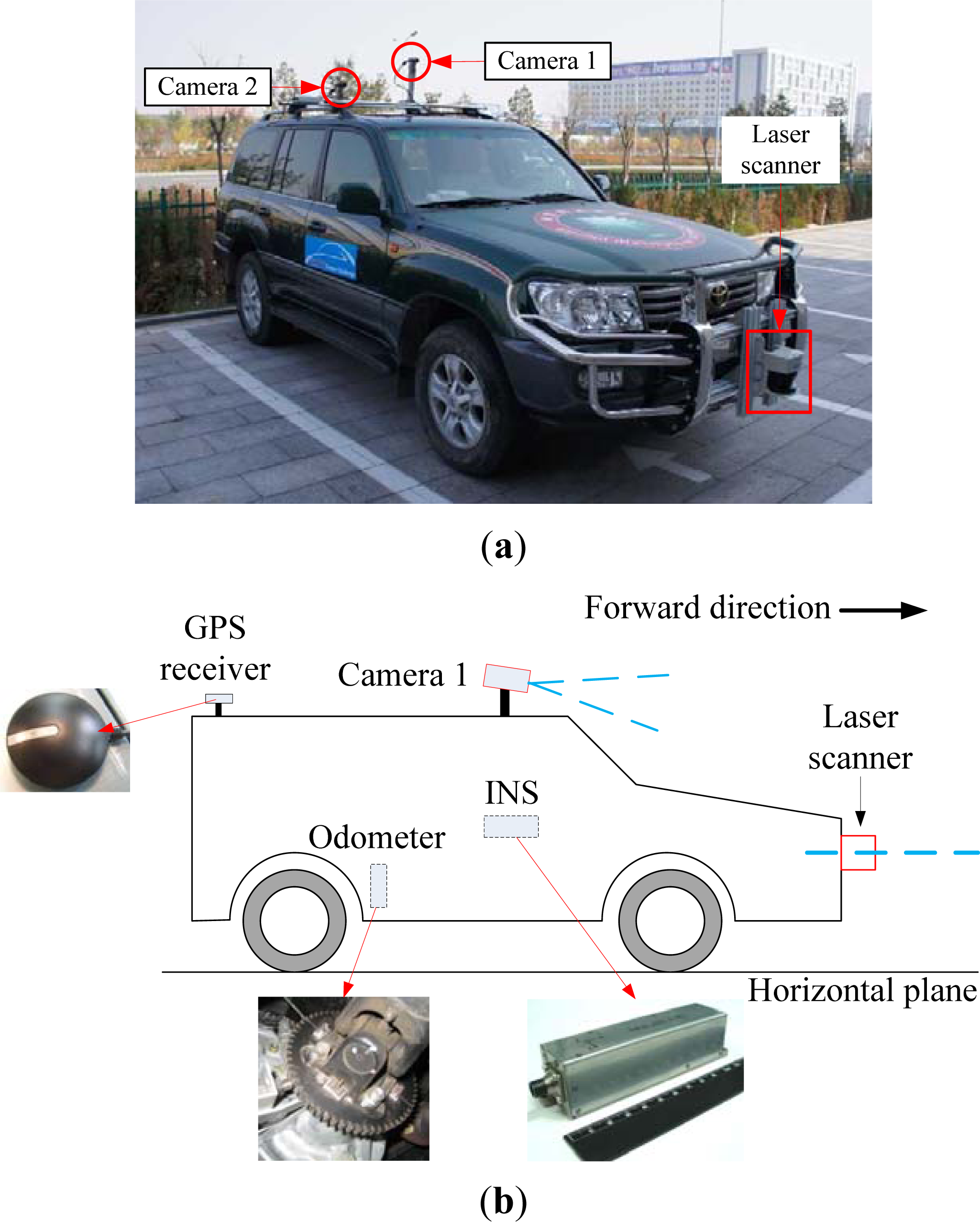

Based on the above analysis, our aim is to build a low-cost hybrid map-based navigation system for a UGV self-driving in urban scenarios, as shown in

Figure 1. The research is focused on the hybrid map building process and localization in the local map. In the following sections, we will describe the process of using OpenStreetMap [

14] for extracting the topological structure of a city map and using a laser scanner to construct a local grid map in detail. Next, an improved Markov localization method is proposed to increase the real-time positioning. Then, we introduce the Beijing Institute of Technology (BIT) unmanned ground vehicle used in this work. Furthermore, the hybrid map mapping and map matching localization in the local map are proposed. At the end, we will discuss the advantages of the hybrid map-based navigation method and future work.

2. Construction of the Hybrid Map

In order to reduce the dependence on a high-precision positioning system and the amount of map data, we divide the whole urban space into two different areas. Because the traffic lanes can provide forward direction for a UGV, the district with visible lanes is defined as a strong constraint (SC) area. On the other hand, the district without lanes is defined as a loose constraint (LC) area. As shown in

Figure 2, the SC areas mainly consist of road regions, and the LC areas may include intersections, parking lots, and so on. Traffic lanes are the primary difference to distinguish an SC area from an LC area. Dividing urban scenarios into these two categories allows us to use a low accurate positioning method in SC areas and precise localization mapping in LC areas.

2.1. Topological Map of Urban Scenario

The global path for a UGV self-driving navigation in either SC areas or LC areas is described in the topological map. Basic elements of the topological map include nodes and edges. Nodes represent significant places in the map, and edges show the relationship between two nodes. In this work, the nodes are defined as GPS positions of LC areas, and the edges represent certain roads between two adjacent nodes.

The common way to get the GPS positions is to drive the vehicle with a GPS receiver and record key waypoints on the routes. In a small area, the GPS data collection work can be done manually. However, when building a whole city map of urban size, the work may be too tedious and time-consuming. Therefore, we extract the urban topological structure from OpenStreetMap (OSM) [

14]. The OSM is a well-known project that provides user-generated street maps. The GPS data downloaded from the OSM website is in XML form, organized in a treelike structure.

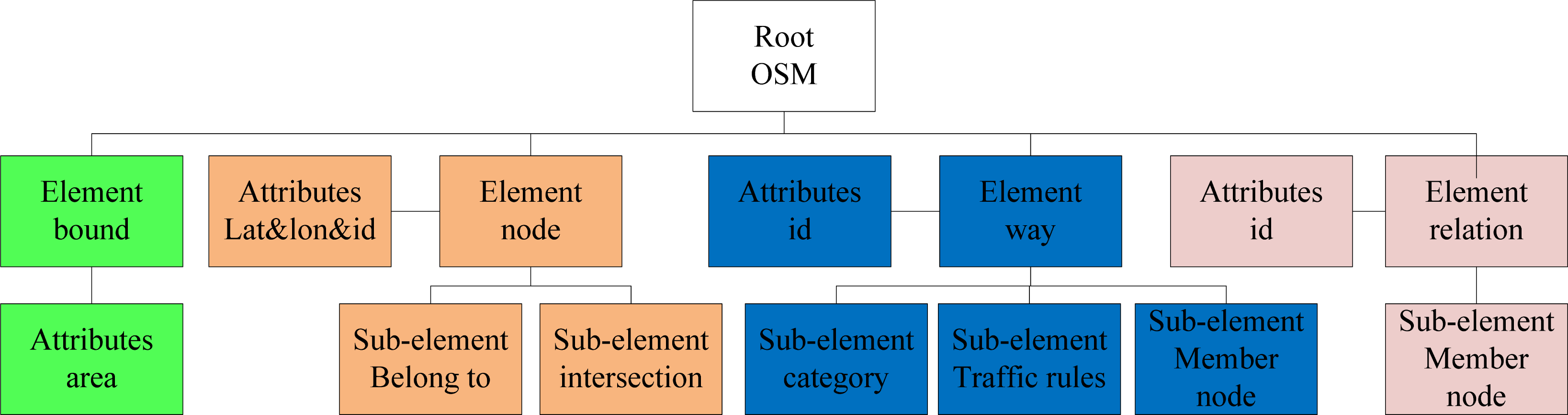

In the XML file structure shown in

Figure 3, the root element OSM illustrates that this is a file downloaded from the OpenStreetMap website. There are four elements under this root, including bound, node, way and relation. Node elements are shown in orange. Attributes from a node provide latitude, longitude and the ID of the node. Some nodes may have sub-elements, which include more knowledge about this node, such as whether it is an intersection or whether it belongs to a building, bus stop, restaurant,

etc. If a node does not have a sub-element, that means it belongs to a road or highway instead of an intersection. The attribute of way element indicates its identification, and the sub-elements of way include its category, traffic rules and member nodes information.

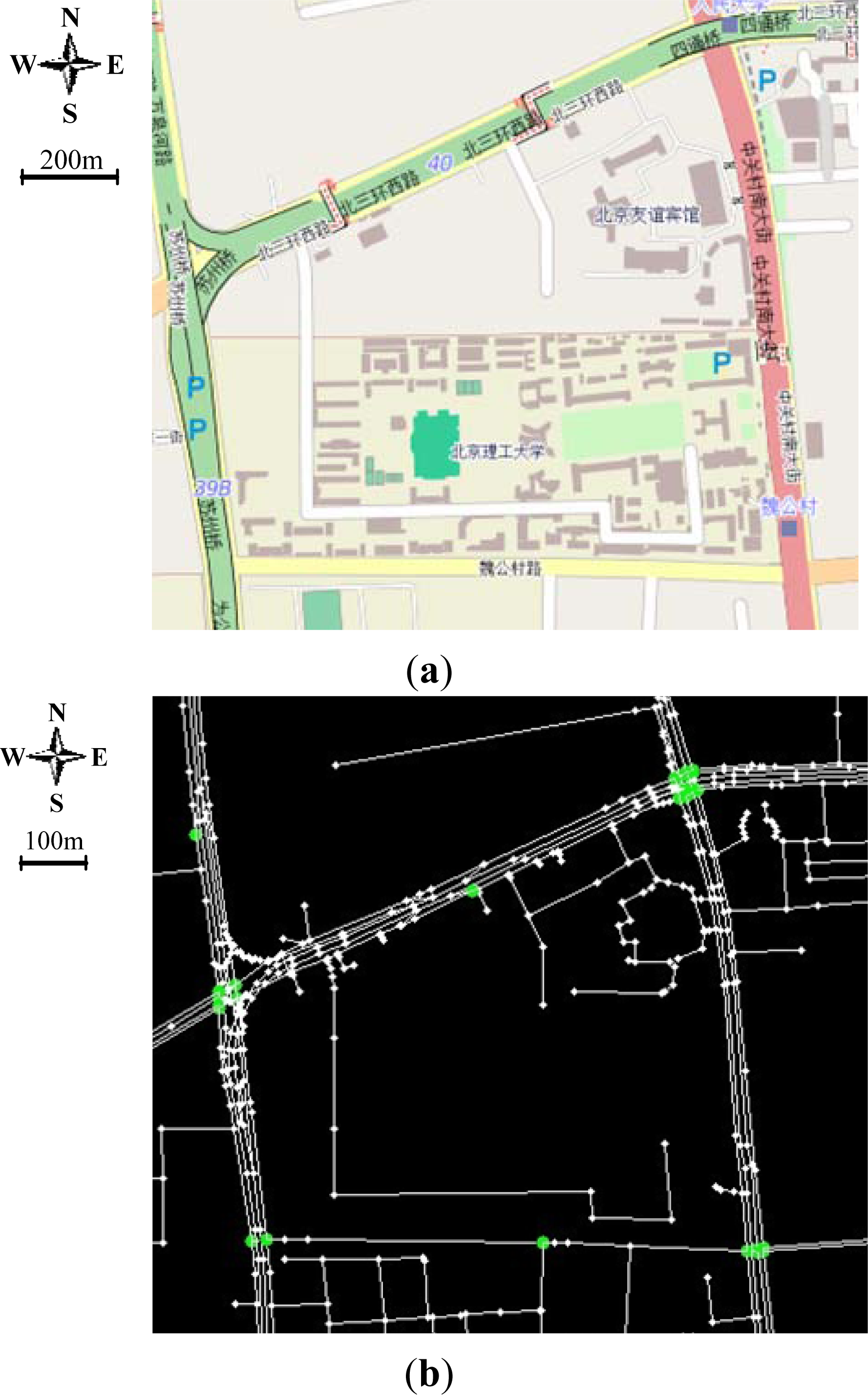

Figure 4a shows urban scenario while

Figure 4b shows the topological map extracted from OSM in the scale of

Figure 4a. We only extract the points from the highway. These points are the nodes of the topological map. Furthermore, based on the sub-element information, the points with the description of “traffic light” are defined as the locations of intersections, as the green points in

Figure 4b. Additionally, these points indicate the position of LC areas where the local metric map should be built for self-driving navigation.

2.2. Metric Map of Loose Constraint Area

For LC areas, there are no lanes to guide the UGV. When the vehicle enters into these areas, either the sparse GPS waypoints in the topological map or the positioning error could cause unstable driving behavior. Therefore, the primary task is to add more environmental constraints to restrict the vehicle movement. The constraints are added in the form of metric map. Additionally, the local metric map can be used for localization, which will be discussed in Section 3.

For environment mapping, there have been many impressive results shown in recent years [

15–

19]. In this work, the occupancy grid mapping method is applied in local metric map building. To build an occupancy grid map, the environment is discretized into grids, and the value of each cell is allocated in accordance with the probability that the cell is occupied by obstacles. The grid map is built according to measurements collected by a laser scanner mounted on the vehicle. Additionally, the simultaneous localization and mapping algorithm is employed in real-time dynamic outdoor environment mapping [

20]. The mapping algorithm includes three basic components: map update, pose estimation and moving object elimination.

2.2.1. Map Update

Given that the observation,

zt, is the measurement of the laser scanner at time

t, and

xt is the vehicle’s corresponding pose, the posterior probability of the occupancy grid map is governed by:

Assume the environment is static. When the map,

m, is known, the measurement,

zt, is independent from the vehicle’s historical state,

x1:t–1, and measurements,

z1:t–1,

Equation (1) can be rewritten as:

Based on reference [

21],

Equation (2) can be further simplified into the form as:

where:

The inverse sensor model,

P(

m|zt,

xt), is determined based on the laser measurements as:

Equation (5) determines the measured occupied probability of each grid. The cell is occupied if it is hit by a laser point. The cell is free if it lies between the endpoint and the vehicle on the laser beam. If the cell is on a laser beam and behind the endpoint, its state is unknown. As the final occupancy probability of a cell is determined by a sequence of measurements, the value of a free cell in the sensor model has a remarkable impact on the generated grid map. Large value for a free cell can make the probability of a previously occupied cell decrease at a lower ratio when it becomes free. This is necessary if we need to preserve small obstacles, such as traffic guardrails, in the generated grid map. Such obstacles are likely to be invisible in sequential frames of detections, as a result of the vehicle motion and the angle resolution of the laser scanner.

2.2.2. Pose Estimation

Calculating a vehicle’s pose is a maximum likelihood estimation problem in the local mapping method, which is finding the most likely pose of the vehicle in the configuration space. Given the posterior likelihood of the vehicle’s pose at the last time step,

x̂t–1, the

a priori likelihood of the pose is predicted based on the vehicle’s control inputs using

P(

xt|

x̂t–1,

ut). After the vehicle makes a new measurement of the environment, its pose can be determined by maximizing the posterior likelihood:

where

m is the grid map and

u is the control inputs of the vehicle.

The pose estimation is resolved using a particle filter. Initially, particles are uniformly distributed in the area without any prior knowledge of the vehicle’s position. A simplified velocity model is employed to predict the particle’s motion:

where the motion of the vehicle between two measurements is assumed to be straight and

θ is the heading angle of the vehicle. The measurement model used in the update procedure of the particle filter is [

20]:

where

is the cell hit by the end-point of laser beam,

k. N is the total number of laser scanner’s data.

The local map method simplifies the map generation by decoupling pose estimation from a map update. This may introduce errors in the generated map, but the errors are acceptable.

2.2.3. Moving Obstacle Elimination

In the map update step, cells occupied with moving obstacles have to be removed in

Equation (4), otherwise the dynamic elements can make the Markov assumption invalid. By comparing the posterior likelihood,

, of an occupied cell,

i, at the direction of a laser beam,

k, with the likelihood of occupied cells in the sensor model, the cell could be classified into static or dynamic [

19]. If the

is smaller than the occupied threshold, the cell will be treated as dynamic and not counted in

Equation (3) for the map update. As only static cells are included in the map update,

Equation (4) can be written as:

2.3. Metric Map Registration

To integrate the local metric map with the urban topological map, we collect some GPS positioning data in the area during a local metric map mapping process. After the local map is established, only one GPS location in each local map is selected as a registration point, which can be used to register the local metric map to a nearest node in the topological map. The registration point’s position is also stored in the corresponding node.

3. Hybrid Map-Based Navigation

In Section 2, we have constructed the topological map of the urban scenario and the local metric maps of the LC areas. In the SC area, our previous works can get enough information, including detections of lane markings [

22], traffic lights [

23] and signs [

24], to provide the direction and the speed limit for the UGV. Because the GPS signal only provides global path information, the GPS receiver used in our UGV can be just an ordinary one with low positioning accuracy.

There are lots of map-matching-based localization methods. In reference [

25], light, humidity and other data are used for radio frequency (RF)-based localization. Furthermore, Markov localization [

26] is widely used. Based on the discretization of a vehicle’s state space, the position is recursively determined by calculating the posterior probability. The original Markov localization method needs to maintain a likelihood matrix of the state space with the same size of the entire metric map. Although the probabilities of most elements in the matrix are negligible, they have to be updated every time when a new measurement is made. In this research, assuming that the vehicle’s true position is close to its GPS indications, we can adopt a small likelihood matrix instead of the whole state space to reduce the computational cost. The likelihood matrix is displayed as a constraint window with its center fixed on the vehicle’s GPS position. Therefore, the window will scroll forward with the vehicle’s movement through the map.

3.1. Navigation in the Hybrid Map

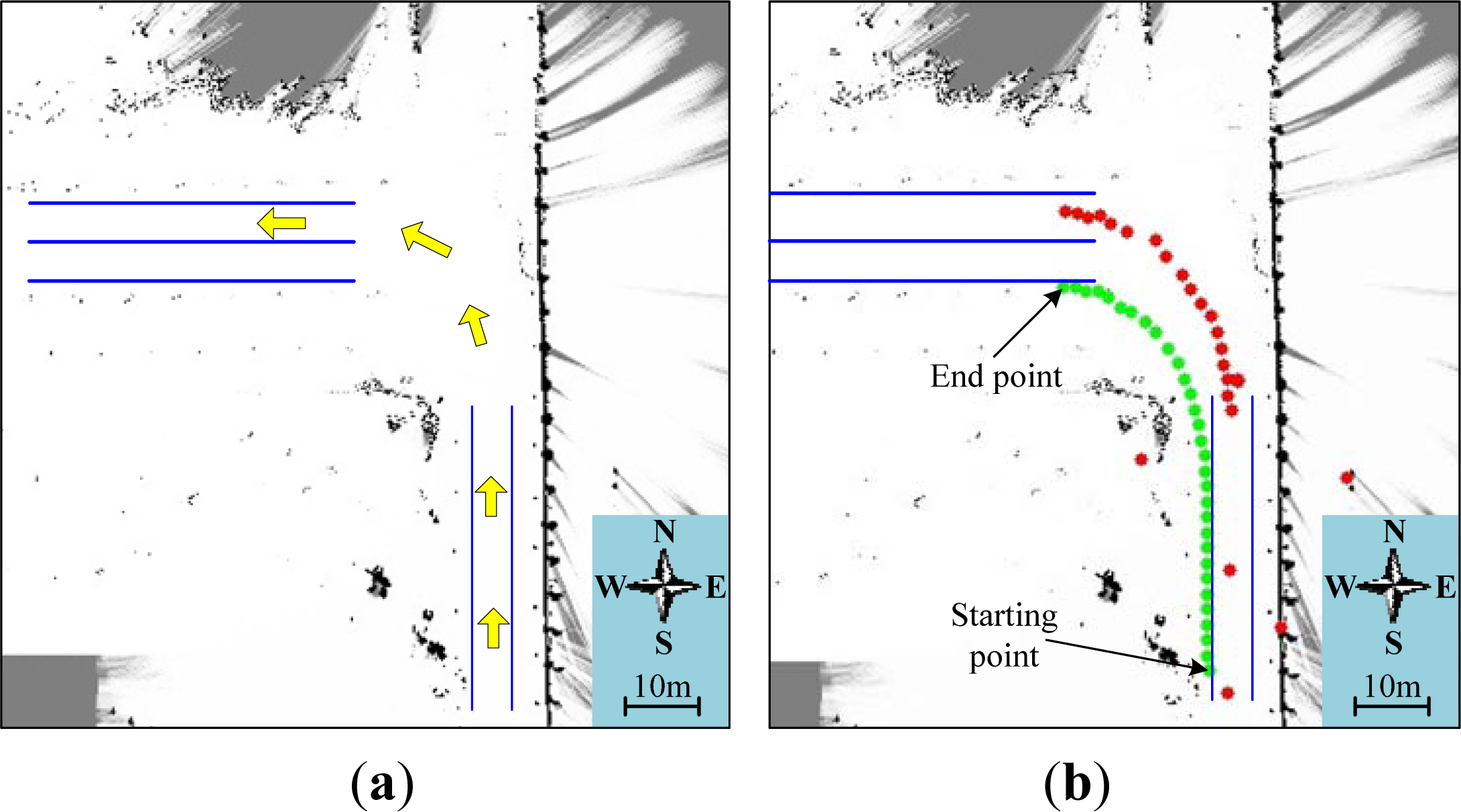

3.1.1. Switch from Strong Constraint (SC) Area to Loose Constraint (LC) Area

The corresponding metric map will be loaded into memory when the vehicle is approaching a node in the digital map. Map matching-based localization will be launched when the vehicle approaches the scope of the metric map and registers the vehicle with the local environment, as location A in

Figure 5. Since the localization in the metric map is independent with the global position of the vehicle and the metric map, the position of the metric map in the global topological map is not required to be very accurate.

3.1.2. Switch from Loose Constraint (LC) Area to Strong Constraint (SC) Area

Following the global path in the topological map, the autonomous vehicle will drive into the expected edge path of the digital map when it leaves the local metric map. The local metric map contains not only the static structure of an area, which is used for localization and obstacle avoidance, but also the connections with edges in the digital map. This information is recorded when the map is generated. The connection with the edge on the global path can be used as the target state in the vehicle’s configuration space for local path planning. Once strong constrained elements are detected, when the vehicle is driving along the local path, the driving mode will be switched to strong constrained mode, for example, as shown in

Figure 5; when the vehicle arrives at location B, the vehicle will detect the road lanes.

3.2. Localization in Loose Constraint (LC) Area

The original Markov localization method needs to maintain a likelihood matrix with the same size of the entire metric map. Except for elements closed to the true position, the probabilities of most elements in the matrix are negligible, but they have to be updated every time after a new measurement is made. This updated probability can be expressed as

Bel(

Lt=

l|s1,…,

st,

a1,…,

at− 1), where

Lt denotes the vehicle state,

l denotes one of the discrete states and

t denotes the time. In this work,

s and

a indicate the data from the odometer and laser scanner, respectively. Based on the Bayesian theorem, this expression can be transformed into:

For the simplification of real-time computation, with the Markov assumption of the vehicle’s movement and the independence of environment perception on the vehicle’s previous position, the expression can be further simplified as:

where

P(

Lt=

l|Lt− 1,

at− 1) and

P(

st|Lt=

l) represent the influence of movement model and perception model on the posterior probability of the discrete state, respectively.

η is a normalized coefficient.

The Markov localization method is generally used for indoor robotics. When it is applied in a large space, the main problem is low positioning frequency caused by increased state space for computation. With the assumption that the vehicle’s true position is close to its GPS indications, we propose a window constraint Markov localization (WCML) method, using a small likelihood matrix to reduce the computational cost in the Markov localization process.

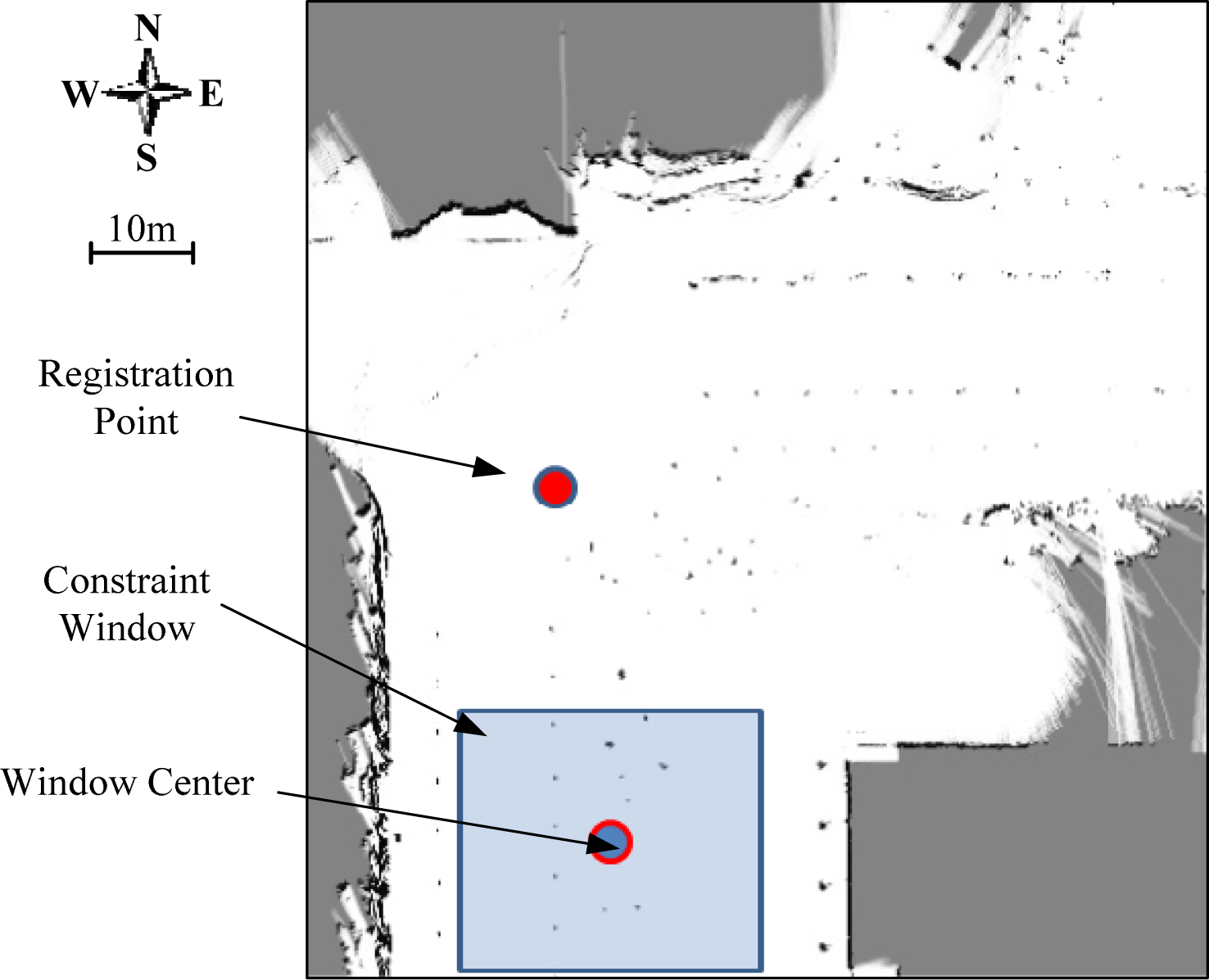

3.2.1. Constraint Window

As shown in

Figure 6, the size of the constraint window (the blue square) is 15 m × 15 m, and its center is fixed on the vehicle’s GPS position. The scope of the constraint window is set to be much larger than normal GPS positioning error. As a result, the true state of the UGV can be covered by the window. Once the corresponding metric map is loaded, the vehicle pose will be transformed into the local coordinate of the map. The relative position of the UGV, which is also the center of the constraint window, can be expressed in the local coordinate in the metric map as:

3.2.2. Window Constraint Markov Localization

In the window constraint Markov localization process, the states needed to be updated in the state space are limited inside the window. Therefore, the movement model of Markov localization can be rewritten as:

where

Wt denotes the state space in the window scale at time

t. We use

Bel(

Lt− 1= l’) instead of

Bel(

Lt− 1= l’|s1,…,st− 1,a1,…,at− 2) for the expression.

Equation (13) describes the state transferring probability matrix. In this work, the matrix is designed as a Gaussian distribution, and its parameters are determined by the error of the odometer mounted on the UGV.

Another part of

Equation (11) is the perception model,

P(

st|Lt=

l), which determines the similarity between the laser data and the occupancy grid map (OGM) at state

l. It is defined as:

where

St is the distances from the laser scanner and

St’ is the desired value measured from the map with the assumption

Lt =

l using the ray tracing method.

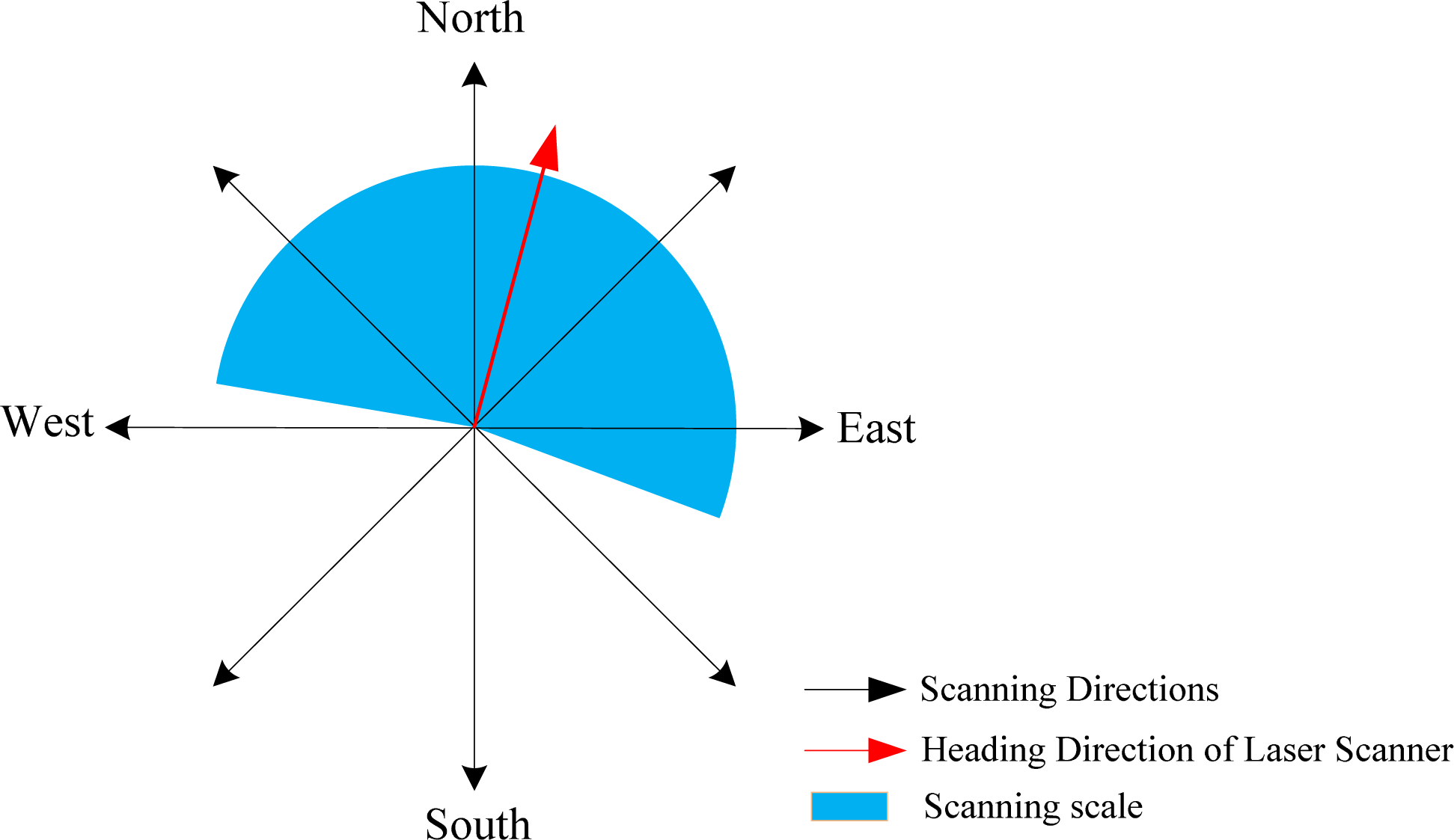

K is the number of scanning directions of the laser scanner. For computational consideration, the scanning directions are previously chosen, and all the values used in the nonlinear computation, such as sine and cosine, are stored for real-time calculation. As shown in

Figure 7, there are eight total directions in the local coordinates of the metric map. Only four or five directions of them are selected to be used in each localization process.

5. Conclusion

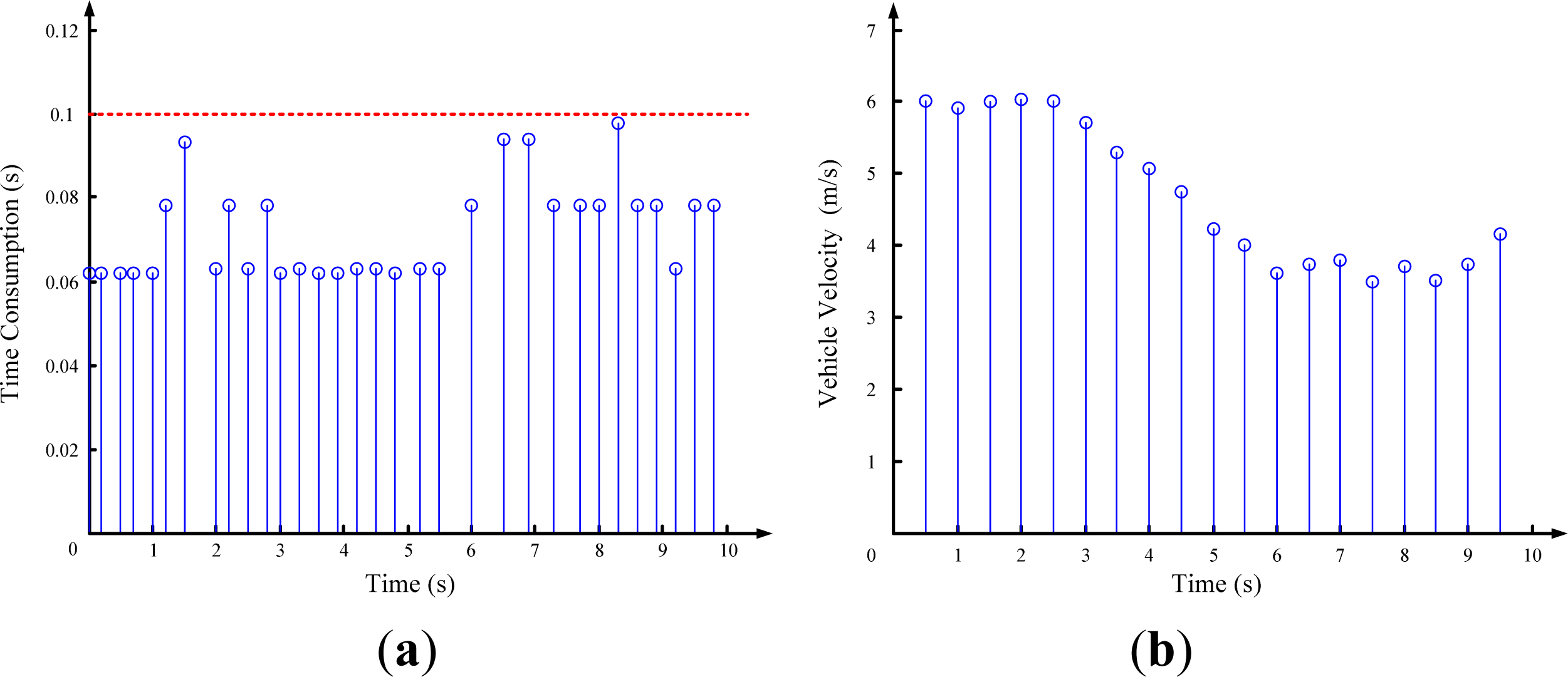

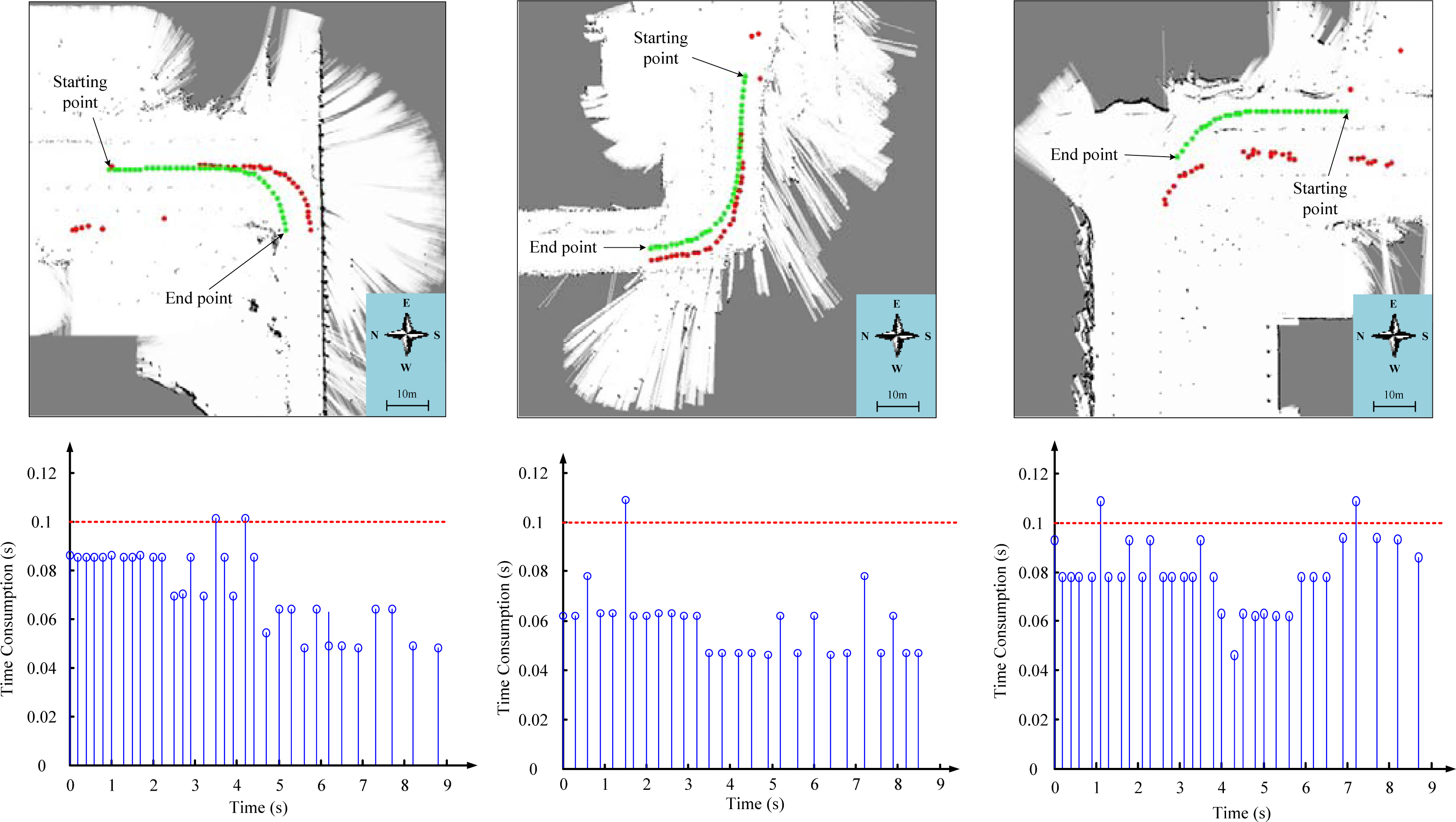

This paper proposes a hybrid map-based navigation method for unmanned ground vehicles in the urban scenario, without using accurate positioning equipment. We divide the urban scenario into strong constraint areas, where the traffic lanes exist, and loose constraint areas, where no lanes can guide the vehicle. In the loose constraint area, the Markov localization method is involved to achieve high positioning accuracy. By combing the local metric maps for loose constraint areas and the global topological map, a hybrid map is established. In our experiment, we have successfully reduced 50% of the map data. If the scale of the scenario is enlarged, the reduction effect could be more obvious.

In order to improve the real-time performance of grid-based Markov localization, we introduce a window constraint Markov localization method for accurate positioning. A constraint window moving with the vehicle can restrict the size of the state space and, thus, reduce the computational cost. According to our experiments, most positioning results can be achieved within 0.1 s. The update frequency and positioning accuracy can meet the navigation requirements for relatively low speed driving in loose constraint areas.

For future research, we will try to involve vision features to improve the performance of the Markov localization process in the environment map building. Furthermore, the vehicle-to-infrastructure and vehicle-to-vehicle [

28,

29] communications should be adopted for the driving safety of unmanned ground vehicles.