Exploring the Use of Google Earth Imagery and Object-Based Methods in Land Use/Cover Mapping

Abstract

1. Introduction

2. Study Area and Data

2.1. Study Area

2.2. Data Collection and Processing

3. Object-Based Classification Method

3.1. Multi-Scale Image Segmentation

3.2. Feature Selection and Classification Rule

3.3. Classification Accuracy Assessment and Comparison

4. Results and Discussions

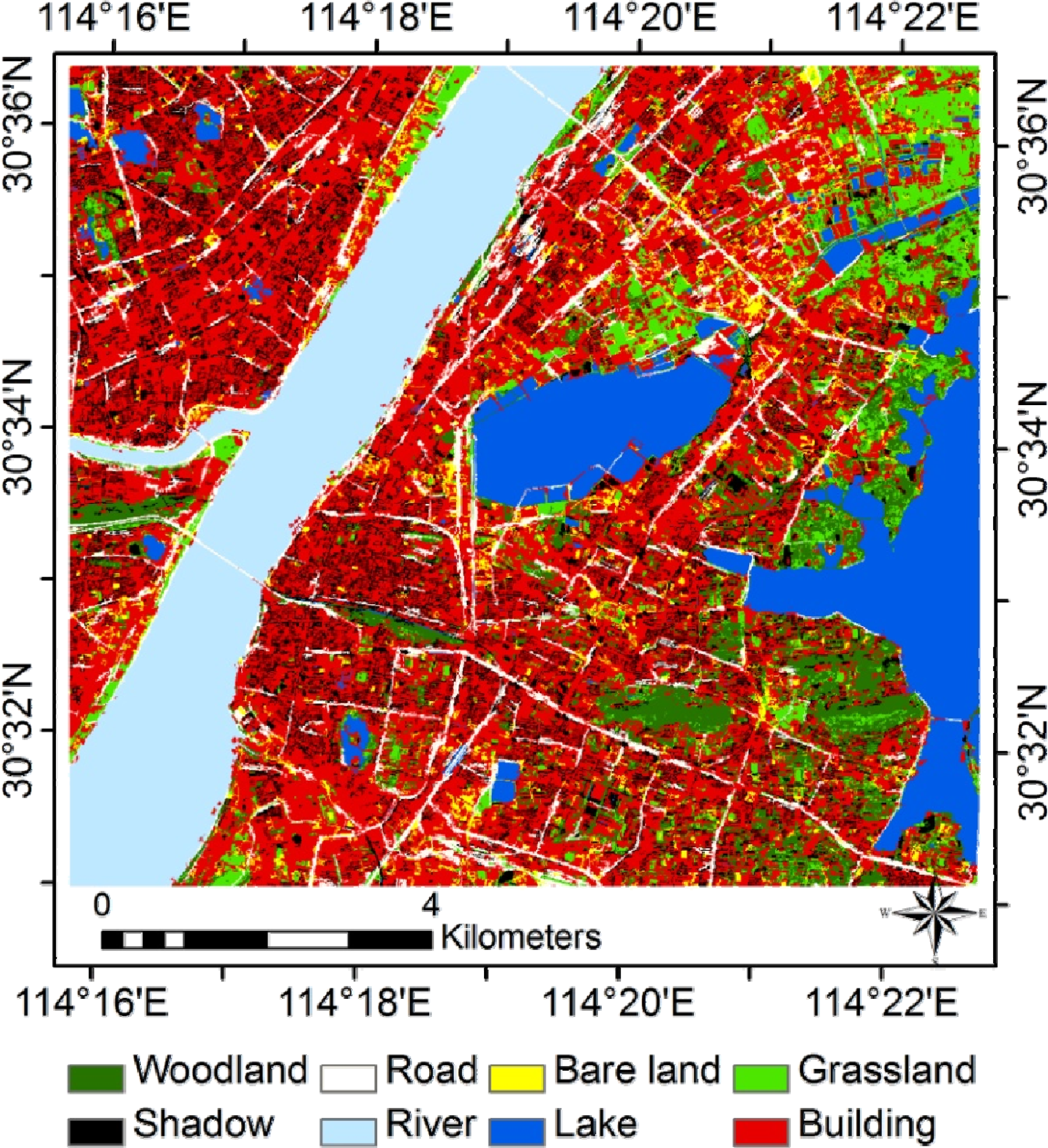

4.1. GE-Based Classification Map

4.2. Assessment of Classification Accuracy

4.3. Potentials Analysis of GE Imagery for Land Use/Cover Mapping

4.4. Uncertainty Analysis of GE Imagery for Land Use/Cover Mapping

5. Conclusion

Acknowledgments

Conflicts of interest

| Level | Land Use/Cover Types | Segmentation Scale | Shape | Compactness |
|---|---|---|---|---|
| 1 | River, Lake | 80 | 0.2 | 0.5 |
| 2 | Bare land, Shadow, Building, Road | 50 | 0.2 | 0.5 |
| 3 | Grassland, Woodland | 30 | 0.2 | 0.5 |
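
The three segmentation levels in the table can be written down as a small configuration, which makes the coarse-to-fine ordering explicit. The sketch below is only illustrative: the `SegmentationLevel` class and its field names are hypothetical, and the derived `color` and `smoothness` weights assume the common multiresolution-segmentation convention in which color = 1 − shape and smoothness = 1 − compactness, which this section does not state.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class SegmentationLevel:
    """One row of the segmentation-parameter table (hypothetical container)."""
    level: int
    classes: Tuple[str, ...]
    scale: int
    shape: float
    compactness: float

    @property
    def color(self) -> float:
        # Assumed convention: the color weight complements the shape weight.
        return 1.0 - self.shape

    @property
    def smoothness(self) -> float:
        # Assumed convention: smoothness complements compactness.
        return 1.0 - self.compactness


# Values copied from the table; only the container is an assumption.
LEVELS = (
    SegmentationLevel(1, ("River", "Lake"), 80, 0.2, 0.5),
    SegmentationLevel(2, ("Bare land", "Shadow", "Building", "Road"), 50, 0.2, 0.5),
    SegmentationLevel(3, ("Grassland", "Woodland"), 30, 0.2, 0.5),
)

# Walk the levels from the coarsest scale to the finest.
for lvl in sorted(LEVELS, key=lambda item: item.scale, reverse=True):
    print(
        f"Level {lvl.level}: scale={lvl.scale}, shape={lvl.shape}, "
        f"color={lvl.color:.1f}, compactness={lvl.compactness}, "
        f"smoothness={lvl.smoothness:.1f} -> {', '.join(lvl.classes)}"
    )
```

Larger scale values produce larger image objects, so the water classes are extracted first at scale 80 and the vegetation classes last at scale 30.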

| Level | Parent Class | Child Class | Rule Sets |
|---|---|---|---|
| 1 | The whole imagery | Land; River; Lake | |
| 2 | Land | Vegetation; Shadow (buildings); Others: Road, Bare land, Buildings1 | |
| 3 | Vegetation; Shadow (buildings) | Grassland; Woodland; Buildings2 | |

| | Road | Bare Land | Shadow | Grassland | Woodland | Building | Lake | River |
|---|---|---|---|---|---|---|---|---|
| Area (km²) | 14.186 | 7.36 | 6.183 | 7.62 | 5.927 | 45.064 | 12.52 | 15.717 |
| Area proportion (%) | 12.38 | 6.42 | 5.40 | 6.65 | 5.17 | 39.33 | 10.93 | 13.72 |
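
The area proportions in the table follow directly from the class areas: each proportion is the class area divided by the summed area of all eight classes. As a quick consistency check, the sketch below recomputes them; the dictionary and function names are illustrative, and the areas are copied from the table.

```python
from typing import Dict

# Class areas in km², copied from the table.
AREAS_KM2: Dict[str, float] = {
    "Road": 14.186,
    "Bare Land": 7.36,
    "Shadow": 6.183,
    "Grassland": 7.62,
    "Woodland": 5.927,
    "Building": 45.064,
    "Lake": 12.52,
    "River": 15.717,
}


def area_proportions(areas: Dict[str, float]) -> Dict[str, float]:
    """Return each class's share of the total mapped area, in percent."""
    total = sum(areas.values())
    return {name: round(100 * area / total, 2) for name, area in areas.items()}


total_area = sum(AREAS_KM2.values())
proportions = area_proportions(AREAS_KM2)

print(f"Total mapped area: {total_area:.3f} km²")
for name, share in proportions.items():
    print(f"{name:<10} {share:5.2f} %")
```

The summed area is 114.577 km², and the recomputed shares agree with the tabulated percentages to two decimal places.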

| Land Use/Cover Type | GE Image: User’s Accuracy (%) | GE Image: Producer’s Accuracy (%) | QB Image: User’s Accuracy (%) | QB Image: Producer’s Accuracy (%) |
|---|---|---|---|---|
| River | 98.72 | 99.35 | 99.46 | 99.25 |
| Lake | 88.89 | 91.18 | 98.39 | 93.06 |
| Buildings | 79.04 | 70.28 | 77.26 | 75.69 |
| Shadow | 80.15 | 79.46 | 75.19 | 88.56 |
| Woodland | 58.98 | 72.02 | 80.55 | 79.96 |
| Road | 66.78 | 79.19 | 77.86 | 72.76 |
| Bare land | 64.21 | 64.34 | 62.50 | 62.64 |
| Grassland | 68.26 | 59.60 | 76.65 | 76.64 |

Overall accuracy (%): QB = 81.27, GE = 78.07

Kappa statistic: QB = 0.78, GE = 0.74
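
User’s accuracy, producer’s accuracy, overall accuracy, and the Kappa statistic are all derived from a confusion matrix of classified versus reference labels. The minimal sketch below shows the standard calculations. The confusion matrix itself is not given here, so the counts and the three class names are hypothetical and chosen only for illustration, and the function name `accuracy_report` is an assumption.

```python
from typing import Dict, Sequence


def accuracy_report(
    matrix: Sequence[Sequence[int]], classes: Sequence[str]
) -> Dict[str, object]:
    """Summarise a square confusion matrix.

    Convention assumed here: rows hold the classified (map) labels and
    columns hold the reference labels.
    """
    n = len(classes)
    total = sum(sum(row) for row in matrix)
    diagonal = [matrix[i][i] for i in range(n)]
    row_totals = [sum(matrix[i]) for i in range(n)]
    col_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]

    # Overall accuracy: share of samples on the diagonal.
    overall = sum(diagonal) / total
    # Chance agreement expected from the row and column marginals.
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    # Cohen's kappa: agreement beyond chance, scaled to at most 1.
    kappa = (overall - expected) / (1 - expected)

    return {
        # User's accuracy: correct samples / samples classified as the class.
        "users": {c: diagonal[i] / row_totals[i] for i, c in enumerate(classes)},
        # Producer's accuracy: correct samples / reference samples of the class.
        "producers": {c: diagonal[i] / col_totals[i] for i, c in enumerate(classes)},
        "overall": overall,
        "kappa": kappa,
    }


# Hypothetical counts for illustration only; not data from the study.
CLASSES = ["Water", "Built-up", "Vegetation"]
MATRIX = [
    [45, 3, 2],
    [4, 40, 6],
    [1, 7, 42],
]

report = accuracy_report(MATRIX, CLASSES)
print(f"Overall accuracy: {report['overall']:.2%}")
print(f"Kappa: {report['kappa']:.2f}")
for name in CLASSES:
    print(
        f"{name:<10} user's={report['users'][name]:.2%} "
        f"producer's={report['producers'][name]:.2%}"
    )
```

Because Kappa discounts agreement expected by chance, it is lower than the overall accuracy, which matches the pattern in the table (for example, 78.07% overall accuracy against a Kappa of 0.74 for the GE image).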

© 2013 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Hu, Q.; Wu, W.; Xia, T.; Yu, Q.; Yang, P.; Li, Z.; Song, Q. Exploring the Use of Google Earth Imagery and Object-Based Methods in Land Use/Cover Mapping. Remote Sens. 2013, 5, 6026-6042. https://doi.org/10.3390/rs5116026
