Responsible Innovation: A Complementary View from Industry with Proposals for Bridging Different Perspectives

Abstract

1. Introduction

2. Background on Responsible Research and Innovation: Views from Industry

2.1. A Critical Review of RRI from a Perspective of Business

2.2. A Purpose for RRI: Society, Resilience and Innovation

2.3. What Makes Research and Innovation Different

- (1).

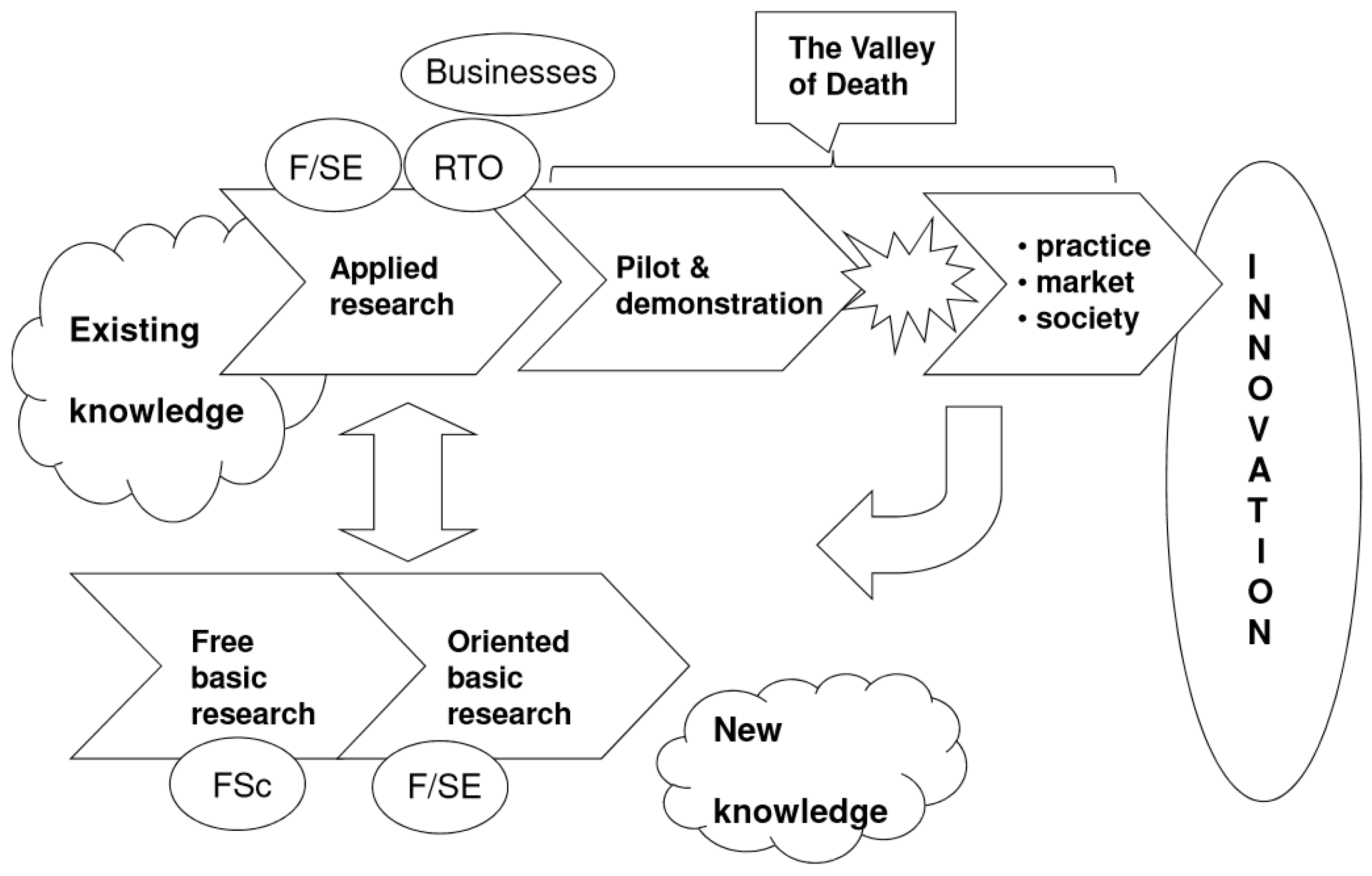

- Exploration, or the task of identifying the issue and exploring potential solutions. It is also called “Applied Research” in technology development, or “Front-End Loading” in New Product Development. This stage is typically chaotic, with a high level of unknowns and uncertainties that must be clarified, and often leads to a project idea being killed. Timelines for this stage are usually difficult to predict and maintain. At this stage, we typically deal with qualitative statements on markets, consumers and benefits; the “reason why”. This stage typically culminates with a pre-feasibility study, including a mapping of risks and opportunities, and cost estimates for the next stage.

- (2).

- Development, sometimes called “Pilot and Demonstration”, with concepts such as fast prototyping, pilot development, and pre-industrial trials. In sectors relying on sophisticated processes and enabling technologies, such as aeronautical, automotive, advanced materials or advanced manufacturing and processing, this is further differentiated by Technology Readiness Levels (TRL) representing the level of maturity of the technology under development [36]. At the end of this process, the benefits for consumers, the risks and the value both for the company and society must be clearly and quantitatively identified. Risk management is central to this stage. The gap between when an opportunity is identified, the knowledge base developed, and the perceived readiness of the market is often called the “Valley of Death”, owning to the high rate of project failures at this stage. Whatever the name given to the development process, the objective is to quantify the value and benefits, identify and mitigate or remove risks and quantify the parameters of the business model such as consumer targets, market share, pricing and costing. Furthermore, because uncertainties are never completely removed and decisions must be taken, this is also the stage where entrepreneurship is most important—where the ability to take risks and have the right business intuition comes to the fore [45,46]. This stage typically culminates in a feasibility study where benefits, risks and investments are quantified as accurately as reasonably possible for the decision to launch. At this stage, the risks must be clearly identified and removed or mitigated, because correcting errors of estimation during or even after implementation can result in crippling liabilities, the more so when malpractices underlie the decisions taken.

- (3).

- Implementation (also “practice, market, society”) is about delivering value not only to consumers, but also to society, while ensuring the compliance of the delivered solution to regulations and standards, as well as scaling up through capital investments, and the necessary investments in marketing, sales and distribution [47]. This stage normally culminates with the product launch.

3. Some Issues with RRI as Viewed from Industry

3.1. Overview

- (1) In research on climate change, doubts were raised about the causal role of human activity. Over 95% of scientists active in this field relate climate change to human activity. Citizens have also accepted this message to a large degree, but it was blurred for a long time by campaigns of denial that resulted in procrastination in taking the necessary actions [64]. The debate is not yet completely closed.

- (2) For many years, research on the health effects of smoking tobacco was not communicated transparently by many key actors, and was accompanied by denials of its carcinogenic effects. This also delayed action, causing additional deaths that could have been prevented [65].

- (3) The deleterious effects of asbestos on the lungs were known many years before its use as an insulating material was banned [66].

- (4) The effects of high sugar consumption on diabetes and obesity were not communicated transparently, or were blurred by deceptive information on fats and lipids, resulting in an increase in such diseases [67].

- (5) The controversy over glyphosate (Roundup herbicides) has delayed any action, mostly because a lack of transparency in the data has led to differing interpretations of the analyses [68].

- (6) The late withdrawal of Paxil (paroxetine), an antidepressant that was launched on the basis of misleadingly reported and interpreted data and was later demonstrated to have acute side effects and questionable efficacy [69].

- (7) The recent “diesel-gate” affair in the car industry, in which diesel engine emissions were misreported through deception and fraud [70], seriously eroded consumer trust in the industry’s ability to self-regulate.

- (1) They discredit science, and therefore weaken the robustness of science-led policy making (Brussels Declaration [71]).

- (2) They tend to reinforce “science myths”, or beliefs that are based on inaccurate information taken to be “facts” [72], and result in inadequate societal decisions, or at least in delaying them, with associated additional cost.

- (3) They waste scarce resources by allocating them to the wrong type of research.

- (1) We tend to put far more weight on negative information.

- (2) We tend to mentally screen for facts and figures that reinforce our set of beliefs.

3.2. A Focus on Issues with Basic Research

- (1) Using inadequate methods or making statistical mistakes, producing results with weak statistical power;

- (2) Using questionable practices such as data selection, inadequate clinical studies, not divulging conflicts of interest, doctoring images, or over-interpreting results;

- (3) Jumping to inadequate conclusions, based on false evidence or inadequate logic; and

- (4) Committing outright fraud, such as falsification of data, fabrication of data or plagiarism (fortunately these are exceptional situations).

- (1) Methods: The phase of designing and conducting research;

- (2) Reporting: The phase of communicating research;

- (3) Evaluation: The phase of evaluating research;

- (4) Reproducibility: The phase of verifying research; and

- (5) Incentives: The phase of rewarding research.

3.3. A Focus on Issues with Innovation

- (1) are based on deceptive research, and will therefore fail to deliver promised benefits;

- (2) are based on misleading scientific reporting;

- (3) fail to communicate valid benefits honestly;

- (4) underestimate or do not communicate side effects;

- (5) abuse the trust of users by benefitting from asymmetry of information, or from claims that users cannot check;

- (6) embed unnecessary built-in obsolescence; and

- (7) generate unacceptable externalities.

- (1) rely on unethical practices (e.g., child labour, disregarding work safety, and discrimination);

- (2) abuse a monopoly, through pricing or not delivering full value;

- (3) fail to comply with rules and regulations (e.g., environment, work safety, and contracts); and

- (4) fail to respect the best practices of CSR integrating sustainable development issues.

3.4. Some Clarifications on Responsibility

- (1) Contractual responsibility, based on clearly defined mutual obligations that are very specific because they are based on an agreement between two or more parties, and are often tied to penalties where a breach occurs. However, as innovation is necessarily linked to uncertainties and ambiguities, or asymmetry of information, contracts may still be a source of litigation.

- (2) Legal responsibility, which is specific, as it is based on laws and a jurisprudence providing a framework of obligations, but which is dependent on the laws applicable within a specific jurisdiction, e.g., a particular country. More specifically, the laws and jurisprudence related to Product Liability address claims such as negligence, manufacturing defect, design defect, and breach of an express or implied warranty. It can extend to strict liability, when the producer is expected to anticipate the negative impacts of its product and take responsibility for them, in view of the asymmetry of information (the producer knowing more about its product than the consumer).

- (3) Moral responsibility, which is value- and culture-sensitive (see below) and may be open to interpretations that are outside the competence area of scientists or engineers and must be elevated to the societal level. The link between moral responsibility and values is often illustrated by the thought experiment of the trolley problem (see below).

3.5. A Focus on Moral Responsibility

4. A Framework for Responsible Research and Innovation for Industry

4.1. Responsible Research and Research Integrity

“Any ethical questions that arise when science is regarded in a wider ethical/social context. Is the subject worthy of investigation? What are the consequences of such research? Could the research result in harm for people, nature or society, or conflict with basic human values? Is the research sufficiently independent of interested parties? Could a university or laboratory become too dependent on sponsored contract research? Could the researcher guard against the improper or selective use and misinterpretation of their findings, or against objectionable applications of their discoveries?” [105]

4.2. A Framework for Responsible Innovation

- (1) reconceiving products and markets that achieve common benefits;

- (2) redefining productivity in the value chain; and

- (3) enabling local cluster development, so that economic activity also has a local beneficial impact.

4.3. Digital Innovation and Responsibility

4.4. Responsible Institutions

4.5. EU and RRI

- (1) Early engagement with all societal actors with the goal of inclusiveness;

- (2) Gender equality, addressing under-representation of women;

- (3) Science education, i.e., enhancing the current education process;

- (4) Ethics, addressing both the mandatory legal aspects and the societal relevance and acceptability of research and innovation outcomes;

- (5) Open Access, i.e., giving free online access to the results of publicly funded research (publications and data); and

- (6) Governance, i.e., preventing harmful or unethical developments in research and innovation.

- (1) It addresses public research, which is not directly linked to the “value chain of innovation” even though it provides its indispensable breeding ground.

- (2) It does not clearly separate integrity of research (see Singapore and Montreal Statements above) from ethics of research institutions.

- (3) On research, it does not include or reflect many concepts that already exist or are well established, such as the Montreal Statement on Research Integrity.

- (4) It is rather superficial on what ethics is, and fails to reflect the cultural element of the values that underlie choices.

- (5) In large part, it covers elements that are more closely related to the good governance of an institution or organisation (e.g., gender balance and science education), which are well described by the UN Global Compact.

- (6) It is not adequate for the innovation process as we have defined it above, which is typically implemented by the private sector, and it does not sufficiently reflect the many practices that have been formalised by the OECD, the UN, or leading business schools and universities and that are now well established and accepted as guidelines. This is detailed in the section above on responsible innovation.

- (7) Finally, it is not sufficiently relevant for innovation in the Digital Economy, one of the major fields of innovation at present.

5. Conclusions, and Proposals for a Way Forward

- (1) It does not properly reflect established business practices on innovation and product development management, market analysis and consumer research, or compliance; and

- (2) It has failed to observe parallel developments such as the debates on CSV and CSR, sustainable finance, or ethical leadership.

- (1) There is a need to disambiguate research (generating knowledge) and innovation (generating economic or societal value). These are very different processes, but are often combined, creating confusion.

- (2) We need to better understand how research can fail (e.g., by lacking integrity), how innovation can fail (e.g., by generating undue externalities and misrepresenting benefits), and what mechanisms we can put in place to minimise the likelihood of these failures occurring.

- (3) We need to understand how cognitive bias can affect research integrity.

- (4) The RRI framework must be better aligned with business and industry practices, embedding elements such as Design Thinking, Business Innovation Canvas, Innovation Project Management, and Risk Management.

- (5) We need to clarify the purpose of the RRI framework to foster better acceptance of it. Why should it be implemented? What would the mutual benefits for the various stakeholders be? It should therefore encompass the discussions on SDG implementation and the development of the circular economy.

- (6) The debate on the role of industry in society linked to CSR and CSV, and the emerging discussions on Sustainable Finance and Investment, must be considered in the RRI framework.

- (7) Innovation in the digital sector is impacting society in a major way, and the Responsible Digital Innovation debate must also be part of the discussion on RRI.

- (8) We need to clarify the concept of responsibility: how decisions are made, how they are affected by situations, what the guiding principles are for acting responsibly, and how people can be distracted from behaving ethically.

- (9) Regarding business governance, the elements of ethical leadership must be part of the discussion: how an organisation or individuals can fail to behave ethically, and what practices of good governance can prevent this.

- (10) For societal governance, we need to clarify the dilemma between the precautionary principle (focus on compliance with regulation) and the innovation principle (focus on risks and opportunities), and better define the role that agile governance can play in facilitating innovation without giving up on the precautionary principle.

Acknowledgments

Author Contributions

Conflicts of Interest

References

- EIRMA Library EIRMA Publications. Available online: http://www.eirma.org/taxonomy/term/3803 (accessed on 15 August 2017).

- Stilgoe, J.; Owen, R.; Macnaghten, P. Developing a Framework for Responsible Innovation. Res. Policy 2013, 42, 1568–1580. Available online: https://tinyurl.com/lu49qsa (accessed on 15 August 2017).

- Von Schomberg, R. “A Vision of Responsible Research and Innovation”. In Responsible Innovation; Owen, R., Heintz, M., Bessant, J., Eds.; John Wiley: London, UK, 2013; Available online: https://tinyurl.com/y6wgrm7h (accessed on 15 August 2017).

- Blok, V.; Lemmens, P. The Emerging Concept of Responsible Innovation. Three Reasons Why It Is Questionable and Calls for a Radical Transformation of the Concept of Innovation. 2015. Available online: https://tinyurl.com/ycdoophc (accessed on 15 August 2017).

- Lubberink, B.; Blok, V.; van Ophem, J.; Omta, O. Lessons for Responsible Innovation in the Business Context: A Systematic Literature Review of Responsible, Social and Sustainable Innovation Practices. Sustainability 2017, 9, 721. Available online: https://tinyurl.com/ycpzhkw3 (accessed on 15 August 2017). [CrossRef]

- What Is RRI—RRI Tools? Available online: https://www.rri-tools.eu/about-rri (accessed on 20 September 2017).

- Osterwalder, A.; Pigneur, Y. Business Model Generation. 2010. Available online: https://tinyurl.com/y8htrysh (accessed on 15 August 2017).

- PMI. PMBOK® Guide—Fifth Edition. Available online: https://tinyurl.com/y7n58yul (accessed on 15 August 2017).

- Cooper, R.G. New Products—What Separates the Winners from the Losers and What Drives Success. Chapter One, PDMA Handbook. 2013. Available online: https://tinyurl.com/y83n9btc (accessed on 15 August 2017).

- Plattner, H. An Introduction to Design Thinking Process Guide. Available online: https://tinyurl.com/y8sgrypm (accessed on 15 August 2017).

- Agile Project Management: Best Practices and Methodologies. Available online: https://tinyurl.com/y8defav4 (accessed on 15 August 2017).

- Kerzner, H. PM 2.0: The Future of Project Management. 2015. Available online: https://tinyurl.com/y98n5lm7 (accessed on 15 August 2017).

- EIRMA Publications. Working Groups Reports. Available online: https://tinyurl.com/y9jvawl5 (accessed on 15 August 2017).

- Lubin, G.; Kasperkevic, J. The 100 Greatest Trends of the Twentieth Century. 2012. Available online: https://tinyurl.com/y6vbtom4 (accessed on 15 August 2017).

- Bardi, U.; Perini, V. Declining Trends of Healthy Life Years Expectancy (HLYE) in Europe. Available online: https://tinyurl.com/y6w2pzdv (accessed on 15 August 2017).

- Zauli, S.S.; Battista, A.; Frova, L.; Lauriola, P. Healthy Life Years: A very Promising Indicator to be Handled with Caution. Epidemiol. Prev. 2014, 38, 394–397. Available online: https://tinyurl.com/yb9yl3zx (accessed on 15 August 2017).

- Obesity and Overweight—Fact Sheet. Available online: https://tinyurl.com/62hyt96 (accessed on 15 August 2017).

- Air Pollution. Crossing Borders. 2016. Available online: https://tinyurl.com/ybxcz4pp (accessed on 15 August 2017).

- Mojtabai, R.; Olfson, M.; Han, B. National Trends in the Prevalence and Treatment of Depression in Adolescents and Young Adults. Am. Acad. Pediatr. 2016. Available online: https://tinyurl.com/yarxzas2 (accessed on 15 August 2017).

- Jones, G. Why Are Cancer Rates Increasing? Cancer Res. 2015. Available online: https://tinyurl.com/gnevhsk (accessed on 15 August 2017).

- Mekonnen, M.; Hoekstra, A. Four Billion People Facing Severe Water Scarcity. Sci. Adv. 2016. Available online: https://tinyurl.com/gwnx7of (accessed on 15 August 2017).

- Searchinger, T.; Craig, H.C.; Ranganathan, J.; Lipinski, B.; Waite, R.; Winterbottom, R.; Dinshaw, A.; Heimlich, R. The Great Balancing Act, Creating a Sustainable Food Future. 2013. Available online: https://tinyurl.com/yb34gsyx (accessed on 15 August 2017).

- Steffen, W.; Broadgate, W.; Deutsch, L.; Gaffney, O.; Ludwig, C. The Trajectory of the Anthropocene: The Great Acceleration. Anthropocene Rev. 2015, 2, 81–98. Available online: https://tinyurl.com/y8kjoogg (accessed on 15 August 2017). [CrossRef]

- Ceballos, G.; Ehrlich, P.; Barnosky, A.; García, A.; Pringle, R.; Palmer, T. Accelerated Modern Human–Induced Species Losses: Entering the Sixth Mass Extinction. Sci. Adv. 2015. Available online: https://tinyurl.com/qc5apmq (accessed on 15 August 2017).

- Cassidy, J. Forces of Divergence; Is Surging Inequality Endemic to Capitalism? 2014. Available online: https://tinyurl.com/qxfuwb4 (accessed on 15 August 2017).

- Schneier, B. Liars and Outliers: Enabling the Trust that Society Needs to Thrive. 2012. Available online: https://tinyurl.com/ybfv6clv (accessed on 15 August 2017).

- NASA. Climate Change: How Do We Know? Available online: https://tinyurl.com/jhqa2nm (accessed on 15 August 2017).

- O’Connor, A. How the Sugar Industry Shifted Blame to Fat. 2016. Available online: https://tinyurl.com/y9lf6kow (accessed on 15 August 2017).

- Bawa, A.; Anilakumar, K. Genetically modified foods: safety, risks and public concerns—A review. J. Food Sci. Technol. 2013, 50, 1035–1046. Available online: https://tinyurl.com/ (accessed on 15 August 2017). [CrossRef] [PubMed]

- Sifferlin, A. How the Sugar Lobby Skewed Health Research. 2016. Available online: https://tinyurl.com/hd37rkk (accessed on 15 August 2017).

- Whaley, P. EFSA, IARC and the Glyphosate Controversy. 2016. Available online: https://tinyurl.com/yasp546h (accessed on 15 August 2017).

- Ehrlich, P.; Ehrlich, A. Can a Collapse of the Global Civilisation Be Avoided? 2017. Available online: https://tinyurl.com/ptauwep (accessed on 15 August 2017).

- Meadows, D.; Randers, J.; Meadows, D. A Synopsis-Limits to Growth, the 30 Year Update. 2004. Available online: https://tinyurl.com/yde4z6z6 (accessed on 15 August 2017).

- Wikipedia. Societal Collapse. Available online: https://tinyurl.com/h44zq6c (accessed on 15 August 2017).

- Saracco, R. The Saga of Research versus Innovation. 2014. Available online: https://tinyurl.com/y7pfzrpa (accessed on 15 August 2017).

- Why Innovate? What Are the Challenges for Europe? 2017. Available online: https://tinyurl.com/ybxpodwm (accessed on 15 August 2017).

- The Entrepreneurial State. 2013. Available online: https://tinyurl.com/psz8gpg (accessed on 15 August 2017).

- About Shared Value. Available online: http://sharedvalue.org/about-shared-value (accessed on 20 September 2017).

- The Measurement of Scientific and Technological Activities. Available online: https://tinyurl.com/y92cyeqv (accessed on 15 August 2017).

- Cooper, R. Formula for Success in New Product Development. 2006. Available online: http://www.stage-gate.net/downloads/wp/wp_23.pdf (accessed on 20 September 2017).

- Defining Innovation Goes Far beyond R&D. Available online: https://tinyurl.com/y82hwuhl (accessed on 15 August 2017).

- Battelle. 2014 Global R&D Funding Forecast. 2013. Available online: https://tinyurl.com/ycw4mydb (accessed on 15 August 2017).

- Innovation Funnel. Available online: https://tinyurl.com/ycuxccfe (accessed on 15 August 2017).

- FDA. The Drug Development Process. Available online: https://tinyurl.com/y7ewmn8l (accessed on 15 August 2017).

- Kahneman, D. Strategic Decisions: When Can You Trust Your Gut? 2010. Available online: https://tinyurl.com/zm3cevv (accessed on 15 August 2017).

- Osterwalder, A.; Pigneur, Y. Business Model Generation. 2009. Available online: https://tinyurl.com/y77h79hv (accessed on 15 August 2017).

- Kerzner, H. Project Management 2.0: Leveraging Tools, Distributed Collaboration, and Metrics for Project Success. 2015. Available online: https://tinyurl.com/ybklseu3 (accessed on 15 August 2017).

- Sutherland, J. SCRUM: The Management System behind the World’s Top Tech Companies. 2014. Available online: https://tinyurl.com/y7qk6oby (accessed on 15 August 2017).

- Zenger, J.; Folkman, J. Research: 10 Traits of Innovative Leaders. 2014. Available online: https://tinyurl.com/owx7r84 (accessed on 15 August 2017).

- Horth, D.M.; Vehar, J. Innovation How Leadership Makes the Difference. 2015. Available online: https://tinyurl.com/y8ad9duc (accessed on 15 August 2017).

- Convention of Oviedo on Human Rights and Biomedicine. Available online: https://tinyurl.com/yaubadj2 (accessed on 15 August 2017).

- Kaplan, J.C. CRISPR-Cas9: Un Scalpel Génomique à Double Tranchant. 2017. Available online: https://tinyurl.com/y9w47rnp (accessed on 15 August 2017).

- Hirsch, F.; Lévy, Y.; Chneiweiss, H. CRISPR-Cas9: A European Position on Genome Editing. Nature 2017, 541, 30. Available online: https://tinyurl.com/y8dvtdan (accessed on 15 August 2017).

- Gupta, N.; Fischer, A.; Frewer, L. Socio-Psychological Determinants of Public Acceptance of Technologies: A Review. 2011. Available online: https://tinyurl.com/y89h4uxr (accessed on 15 August 2017).

- Technology Acceptance Model. 2014. Available online: https://tinyurl.com/6r6nne (accessed on 15 August 2017).

- Lucht, J. Public Acceptance of Plant Biotechnology and GM Crops. Viruses 2015, 7, 4254–4281. Available online: https://tinyurl.com/y9lktlo3 (accessed on 15 August 2017). [CrossRef] [PubMed]

- Kim, Y.; Kim, M. An International Comparative Analysis of Public Acceptance of Nuclear Energy. 2013. Available online: https://tinyurl.com/y8ladjqu (accessed on 15 August 2017).

- Von Schomberg, R. Prospects for Technology Assessment in a Framework of Responsible Research and Innovation. 2011. Available online: https://tinyurl.com/yd2ygmho (accessed on 15 August 2017).

- Von Schomberg, R. A Vision of Responsible Innovation. 2013. Available online: https://tinyurl.com/ycblytot (accessed on 15 August 2017).

- Fiske, S.; Dupree, C. What Do We Know about Public Trust in Science? 2015. Available online: https://tinyurl.com/ya46ghfr (accessed on 15 August 2017).

- Pew Research Center. Public Trust in Government: 1958–2017. 2017. Available online: https://tinyurl.com/mfc69he (accessed on 15 August 2017).

- 2017 Edelman Trust Barometer: Executive Summary. Available online: https://tinyurl.com/y8a58hpm (accessed on 15 August 2017).

- Edelman, R. A Crisis of Trust—A Warning to both Business and Government. 2017. Available online: https://tinyurl.com/yd4qmmgo (accessed on 15 August 2017).

- Nuccitelli, D. The Global Warming Debate Isn’t about Science. 2013. Available online: https://tinyurl.com/ybq6mgb3 (accessed on 15 August 2017).

- Bates, C.; Rowell, A. The Truth about the Tobacco Industry in its Own Words. Available online: https://tinyurl.com/jv3ufmt (accessed on 15 August 2017).

- Baur, X. Asbestos: Social Legal and Scientific Controversies and Unsound Science in the Context with the Worldwide Asbestos Tragedy—Lessons to be Learned. Pneumologie 2015, 69, 654–661. Available online: https://tinyurl.com/y96petcw (accessed on 15 August 2017). [CrossRef] [PubMed]

- Kearns, C.; Schmidt, L.; Glantz, S. Sugar Industry and Coronary Heart Disease Research. JAMA Intern. Med. 2016, 176, 1680–1685. Available online: https://tinyurl.com/hoxgxao (accessed on 20 September 2017). [CrossRef] [PubMed]

- Corporate Europe Observatory. Scientist Writes to Juncker: New Tumor Evidence Found in Confidential Glyphosate Data. 2017. Available online: https://tinyurl.com/y93q2rxs (accessed on 15 August 2017).

- The Economist. Clinical Trials, For My Next Trick. 2016. Available online: https://tinyurl.com/z6gldle (accessed on 15 August 2017).

- The Economist. Difference Engine, the Dieselgate Dilemma. 2016. Available online: https://tinyurl.com/ycsfus68 (accessed on 15 August 2017).

- Curry, J. Brussels Declaration on Principles for Science & Policy Making. 2017. Available online: https://tinyurl.com/ycxsq9h3 (accessed on 15 August 2017).

- Harker, D. Creating Scientific Controversies: Uncertainty and Bias in Science and Society. 2015. Available online: https://tinyurl.com/y8o3t2q3 (accessed on 15 August 2017).

- Lebowitz, S.; Lee, S. 20 Cognitive Biases that Screw up Your Decisions. 2015. Available online: https://tinyurl.com/y9zaph4c (accessed on 15 August 2017).

- Kolbert, E. Why Facts Don’t Change Our Minds. 2017. Available online: https://tinyurl.com/ybv2d5je (accessed on 15 August 2017).

- Cook, J. Inoculation Theory: Using Misinformation to Fight Misinformation. 2017. Available online: https://tinyurl.com/yc4tac3p (accessed on 15 August 2017).

- Hilger, N. Why Don’t People Trust Experts? J. Law Econ. 2016, 59, 2. Available online: https://tinyurl.com/yboyu8fm (accessed on 20 September 2017). [CrossRef]

- Brumfiel, G. Controversial Research: Good Science Bad Science. Nature 2012, 484, 432–434. Available online: https://tinyurl.com/7spzjen (accessed on 15 August 2017).

- A Rough Guide to Spotting Bad Science. 2014. Available online: https://tinyurl.com/msltsm6 (accessed on 15 August 2017).

- Unreliable Research, Trouble at the Lab. 2013. Available online: https://tinyurl.com/ovwctme (accessed on 15 August 2017).

- Center for Genomic Regulation. New Method Addresses Reproducibility in Computational Experiments. 2017. Available online: https://tinyurl.com/lolo7wu (accessed on 15 August 2017).

- Oreskes, N.; Conway, E. Merchants of Doubt: How a Handful of Scientists Obscured the Truth on Issues from Tobacco Smoke to Global Warming. 2010. Available online: https://tinyurl.com/y7mzs5b4 (accessed on 15 August 2017).

- Why Bad Science Persists—Incentive Malus. 2016. Available online: https://tinyurl.com/z6jkulp (accessed on 15 August 2017).

- METRICS. Stanford. Why MetaResearch Matters. Available online: http://metrics.stanford.edu/ (accessed on 15 August 2017).

- Regional Innovation Ecosystems. 2016. Available online: https://tinyurl.com/yas8bvta (accessed on 15 August 2017).

- Almquist, E.; Senior, J.; Bloch, N. The Elements of Value. 2016. Available online: https://hbr.org/2016/09/the-elements-of-value (accessed on 15 August 2017).

- Hobcraft, P. Top Ten Causes of Innovation Failure. Available online: https://tinyurl.com/y86dzdjz (accessed on 15 August 2017).

- The Economist. Schumpeter Social Saints, Fiscal Fiends. Available online: https://tinyurl.com/ybc5gph9 (accessed on 15 August 2017).

- Business and Sustainable Development Commission—Better Business Better World. Available online: https://tinyurl.com/hzo65po (accessed on 15 August 2017).

- Iatridis, K.; Schroeder, D. Responsible Research and Innovation in Industry. Available online: https://tinyurl.com/yb7ucy2w (accessed on 15 August 2017).

- Panahi, O. Could There Be a Solution to the Trolley Problem? Available online: https://tinyurl.com/y9j2p8dj (accessed on 15 August 2017).

- Dean, J. The Trolley Dilemma and How It Related to Communication. Available online: https://tinyurl.com/yc7dpgqs (accessed on 15 August 2017).

- Marshall, A. Lawyers Not Ethicists Will Solve the Robocar Trolley Problem. Available online: https://tinyurl.com/y8fgjhvl (accessed on 15 August 2017).

- Richardson, G.; Penn, J. Value-Centric Analysis and Value-Centric Design. Available online: https://tinyurl.com/y96qjbpv (accessed on 15 August 2017).

- Icelandic Human Rights Centre. Human Rights Definitions and Classification. Available online: https://tinyurl.com/hokwg4z (accessed on 15 August 2017).

- WBCSD. New CEO Guide to the Sustainable Development. Available online: https://tinyurl.com/y829x6sa (accessed on 15 August 2017).

- Illimitable Men. Understanding the Dark Triad—A General Overview. Available online: https://tinyurl.com/y7arsr2q (accessed on 15 August 2017).

- Mauron, A. Les Aspects Éthiques du Diagnostic Pré-Implantatoire. Available online: https://tinyurl.com/ycc5fasd (accessed on 15 August 2017).

- The Nanodiode Project. Enabling Dialogue on Nanotechnologies. Available online: https://tinyurl.com/y8nvfrkj (accessed on 15 August 2017).

- The Telegraph. Worst Tech Predictions of All Time. Available online: https://tinyurl.com/hwrv4t7 (accessed on 15 August 2017).

- Barnett, A. Gates: I’ll Rid the World of Spams. Available online: https://tinyurl.com/y73vt7qo (accessed on 15 August 2017).

- Gupta, N.; Fischer, A.; Frewer, L. Socio-psychological determinants of public acceptance of technologies. Publ. Underst. Sci. 2011, 21, 782–795. Available online: https://tinyurl.com/y95p3exn (accessed on 15 August 2017). [CrossRef] [PubMed]

- Bouter, L.; Tijdink, J.; Axelsen, N.; Riet, G. Ranking Major and Minor Research Misbehaviors. Available online: https://tinyurl.com/yb62w55z (accessed on 15 August 2017).

- World Conference on Research Integrity. Available online: http://www.researchintegrity.org (accessed on 15 August 2017).

- Singapore Statement on Research Integrity. Available online: http://www.singaporestatement.org/ (accessed on 15 August 2017).

- Montreal Statement on Research Integrity. Available online: https://tinyurl.com/y9rosw34 (accessed on 15 August 2017).

- European Code of Conduct for Research Integrity. Available online: https://tinyurl.com/y9qvbkn7 (accessed on 15 August 2017).

- Lemaitre, B. Science, narcissism and the quest for visibility. FEBS J. 2017, 284, 875–882. Available online: https://tinyurl.com/ydbflp5l (accessed on 15 August 2017). [CrossRef] [PubMed]

- Munafò, M.; Nosek, B.; Bishop, D.; Button, K.; Chambers, C.; du Sert, N.; Simonsohn, U.; Wagenmakers, E.; Ware, J.; Ioannidis, J. A Manifesto for Reproducible Science. Available online: https://tinyurl.com/jcya7f7 (accessed on 15 August 2017).

- Center for the Study of Ethics in the Professions. Available online: http://ethics.iit.edu/about (accessed on 15 August 2017).

- UN. Global Compact 2017 Toolbox. Available online: https://tinyurl.com/yckgzaul (accessed on 15 August 2017).

- OECD. Declaration and Decisions on International Investment and Multinational Enterprises. Available online: https://tinyurl.com/y7z5qnyf (accessed on 15 August 2017).

- Financial Times. Definition of Corporate Responsibility. Available online: https://tinyurl.com/yckj9jrl (accessed on 15 August 2017).

- Financial Times. Definition of Creating Shared Value (CSV). Available online: https://tinyurl.com/y6wpew2g (accessed on 15 August 2017).

- UN. Sustainable Development Goals (SDG)—17 Goals to Transform Our World. Available online: https://tinyurl.com/q4ddfbf (accessed on 15 August 2017).

- Investopedia. Corporate Social Responsibility. Available online: https://tinyurl.com/kwyjd74 (accessed on 15 August 2017).

- ISO 26000:2010, Guidance on Social Responsibility. Available online: https://tinyurl.com/y8j9mgxl (accessed on 15 August 2017).

- Porter, M.; Kramer, M. Creating Shared Value. Available online: https://tinyurl.com/pb6eo6w (accessed on 15 August 2017).

- Crane, A.; Palazzo, G.; Spence, L.J.; Matten, D. Contesting the Value of “Creating Shared Value”. Available online: https://tinyurl.com/z363kml (accessed on 15 August 2017).

- The Economist. Corporate Social Responsibility, the Ethics of Business. Available online: http://www.economist.com/node/3555286 (accessed on 15 August 2017).

- Can a Different Framework of Value Enable Greater Trust in Business? Available online: https://tinyurl.com/y9fgnqqc (accessed on 15 August 2017).

- Guide de l’Investissement Durable. Swiss Sustainable Finance. Available online: https://tinyurl.com/y8tubxb6 (accessed on 15 August 2017).

- PRI. What Is Responsible Investment? Principles for Responsible Investment (PRI). Available online: https://tinyurl.com/ybr5pybf (accessed on 15 August 2017).

- The Economist. Businesses Can and Will Adapt to the Age of Populism. Available online: https://tinyurl.com/ybw4bx32 (accessed on 15 August 2017).

- European Commission. Responsible Innovation in ICT. Available online: https://tinyurl.com/yb8m7guy (accessed on 15 August 2017).

- Wikipedia. Digital Rights. Available online: https://tinyurl.com/yd662ntg (accessed on 15 August 2017).

- Lessig, L. Code and Other Laws of Cyberspace. Available online: https://tinyurl.com/ycpnb3d8 (accessed on 15 August 2017).

- Hsu, J. Tech Leaders Are Just Now Getting Serious about the Threats of AI. Backchannel. Available online: https://tinyurl.com/ydal2jl9 (accessed on 15 August 2017).

- Future of Life Institute. Asilomar AI Principles. Available online: https://futureoflife.org/ai-principles/ (accessed on 15 August 2017).

- WEF. The Future of Jobs. Available online: https://tinyurl.com/ht73pp3 (accessed on 15 August 2017).

- OECD. Key Issues for Digital Transformation in G20. Available online: https://tinyurl.com/yaa7w6uw (accessed on 15 August 2017).

- European Commission. Summary Report of the Public Consultation on Building a European Data Economy. Available online: https://tinyurl.com/ybnl2rz9 (accessed on 15 August 2017).

- UNESCO. Harnessing Science to Society. World Conference on Science. Available online: https://tinyurl.com/yasvu3g9 (accessed on 15 August 2017).

- Nichols, T. How America Lost Faith in Expertise and Why That’s a Giant Problem. Available online: https://tinyurl.com/y7eas5vx (accessed on 15 August 2017).

- Hodak, M.; Buchanan, B. Central Ethical Issues of Corporate Governance. Available online: https://tinyurl.com/ycp9a7wx (accessed on 15 August 2017).

- Jennings, M. Seven Signs of Ethical Collapse. Available online: https://tinyurl.com/ya4x2baq (accessed on 15 August 2017).

- European Commission. Responsible Research and Innovation: Europe’s Ability to Respond to Societal Challenges. 2012. Available online: https://tinyurl.com/js738h9 (accessed on 15 August 2017).

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Dreyer, M.; Chefneux, L.; Goldberg, A.; Von Heimburg, J.; Patrignani, N.; Schofield, M.; Shilling, C. Responsible Innovation: A Complementary View from Industry with Proposals for Bridging Different Perspectives. Sustainability 2017, 9, 1719. https://doi.org/10.3390/su9101719
