1. Introduction

The influence of long-term forest management practices on soil CO₂ efflux (Rs) rates plays a key role in determining total ecosystem carbon budgets [1,2,3]. It has been estimated that soil CO₂ efflux represents one of the largest global terrestrial fluxes of carbon to the atmosphere, with the total annual Rs flux (75 Pg C year⁻¹) an order of magnitude greater than current annual anthropogenic carbon (C) emissions from fossil fuel combustion (6 Pg C year⁻¹) [4]. Soil CO₂ efflux is a function of various interrelated biogeochemical factors that govern the production of autotrophic soil CO₂ efflux by plant roots and associated mycorrhizal fungi (Ra) and heterotrophic soil CO₂ efflux by soil micro and macro biota (Rh) [3,4,5,6].

Fire can influence Rs rates by differentially impacting the Rh and Ra sources of CO₂ [4,7]. For example, short-term autotrophic production of CO₂ can be reduced by fire due to both aboveground and belowground plant mortality and injury. While the long-term impacts of fire on Ra are variable, Ra has been shown in some cases to increase with time since fire as vegetation recovers following disturbance [8]. In many cases, the burning of vegetation and surface fuels reallocates nutrient resources via incomplete combustion and subsequent deposition of ash, char, and other residues [8,9]. In the short-term period following the deposition of those residues, both plants and soil microbes may respond positively to the availability of such resources, subsequently increasing Rs rates. Long-term prescribed fire management regimes may also impact Rh sources of CO₂ by influencing vegetation composition, structure, and associated litter and duff quality, production, and accumulation rates [10], which in turn have been shown to influence soil microbial populations and metabolic activity [11]. Fire can also reduce Rh by killing soil microbes through heating of litter and duff layers and upper soil horizons [4]. Previous studies in multiple ecosystems have shown that both fire and forest management can influence Rs rates, soil carbon pools, and various coupled biogeochemical processes [12,13,14,15,16]. For example, in a study of a mixed conifer forest in California, USA, Ryu et al. [17] found that prescribed fire reduced Rs rates while simultaneously altering soil conditions that would otherwise be associated with increased Rs rates. However, two studies that investigated the influence of prescribed fire in a different mixed conifer forest in California and an upland oak (Quercus spp. L.) forest in Missouri, USA, found that while prescribed burning significantly altered forest floor conditions, there was no clear effect on Rs rates [18]. The contrasting results reported by these studies demonstrate how difficult it is to predict the influence of prescribed fire on Rs. While many factors influencing Rs rates have been identified, much remains to be determined regarding the effects of specific forest and land management practices on overall Rs rates [2,3,4], especially where management has been long-term rather than experimental.

Frequent prescribed fire is one of the dominant tools for forest management in the pine-grassland forests of the southeastern USA [19,20]. Many of the current pine-grassland forests in the region are old-field forest assemblages that exist on former agricultural lands estimated to cover up to 21 million ha across the southeastern USA [21]. Across many of these forests, frequent (1–3 year return interval) prescribed fire is used to perpetuate native species assemblages, reduce the risk of destructive wildfires, and promote and restore wildlife habitat [22]. Although less frequently cited, the impacts of prescribed fire regimes on soil carbon sequestration are likely to grow in importance as major commodity trade partners (e.g., Canada) adopt carbon credit exchanges. The long-term consequences of varying fire return intervals for carbon cycling in pine-grasslands can inform fire management decisions and projections of carbon sequestration capacity.

This study sought to investigate the impacts of fire regime, specifically annual burning, biennial burning, and prolonged fire exclusion, on Rs rates in old-field pine-grassland forests. In addition, this study sought to interpret the potential response of Rs to biotic and abiotic factors, including soil temperature, soil moisture, forest stand characteristics, and soil physical properties and chemistry. The intent of this research is to build upon our understanding of the effects of frequent fire on carbon dynamics and sequestration in pine-grasslands and similar woodland and savanna communities worldwide. Studies such as this also provide insight into the response of ecosystem carbon dynamics to forecasted changes in temperature and moisture regimes due to global climate change.

4. Discussion

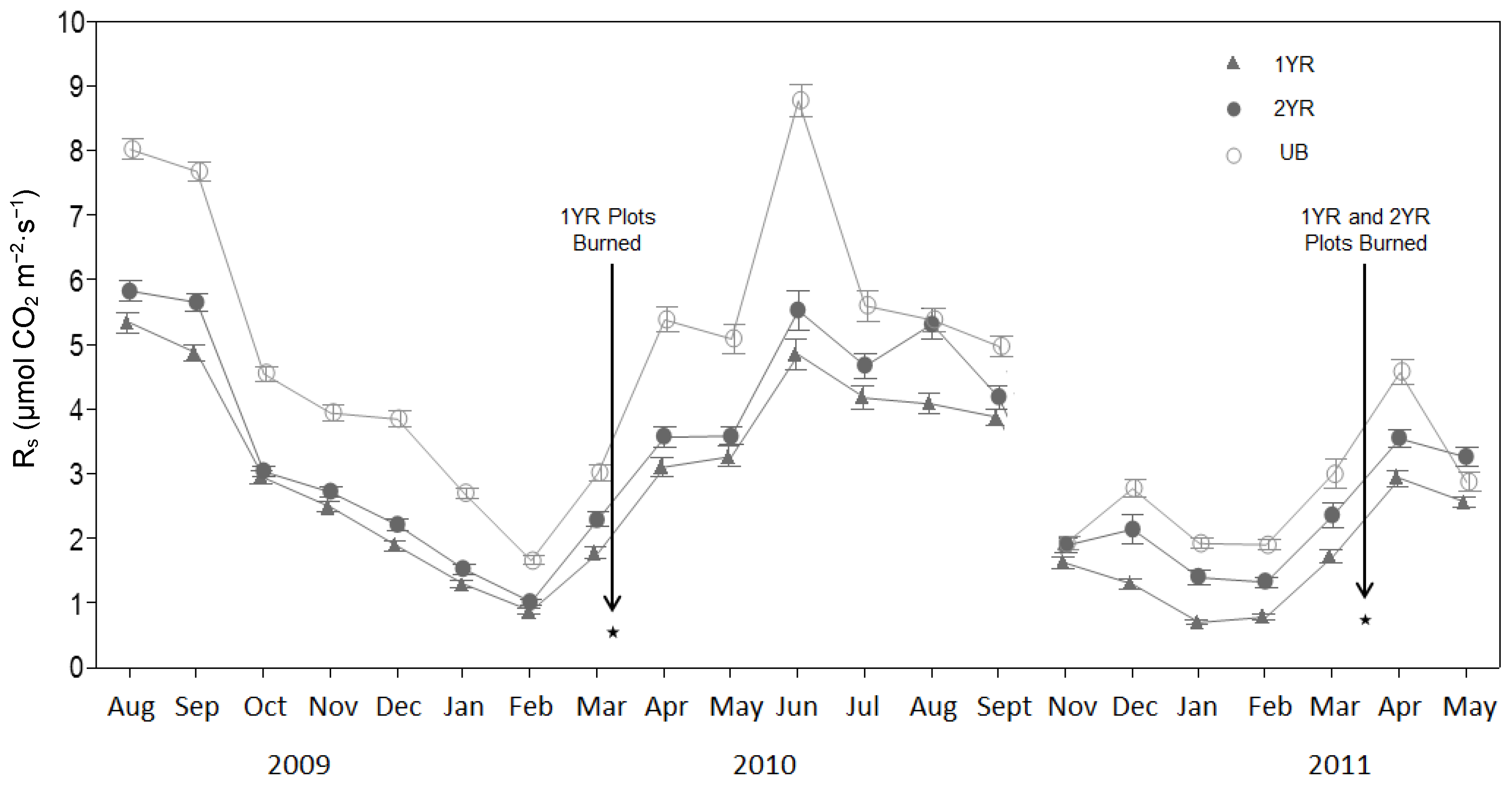

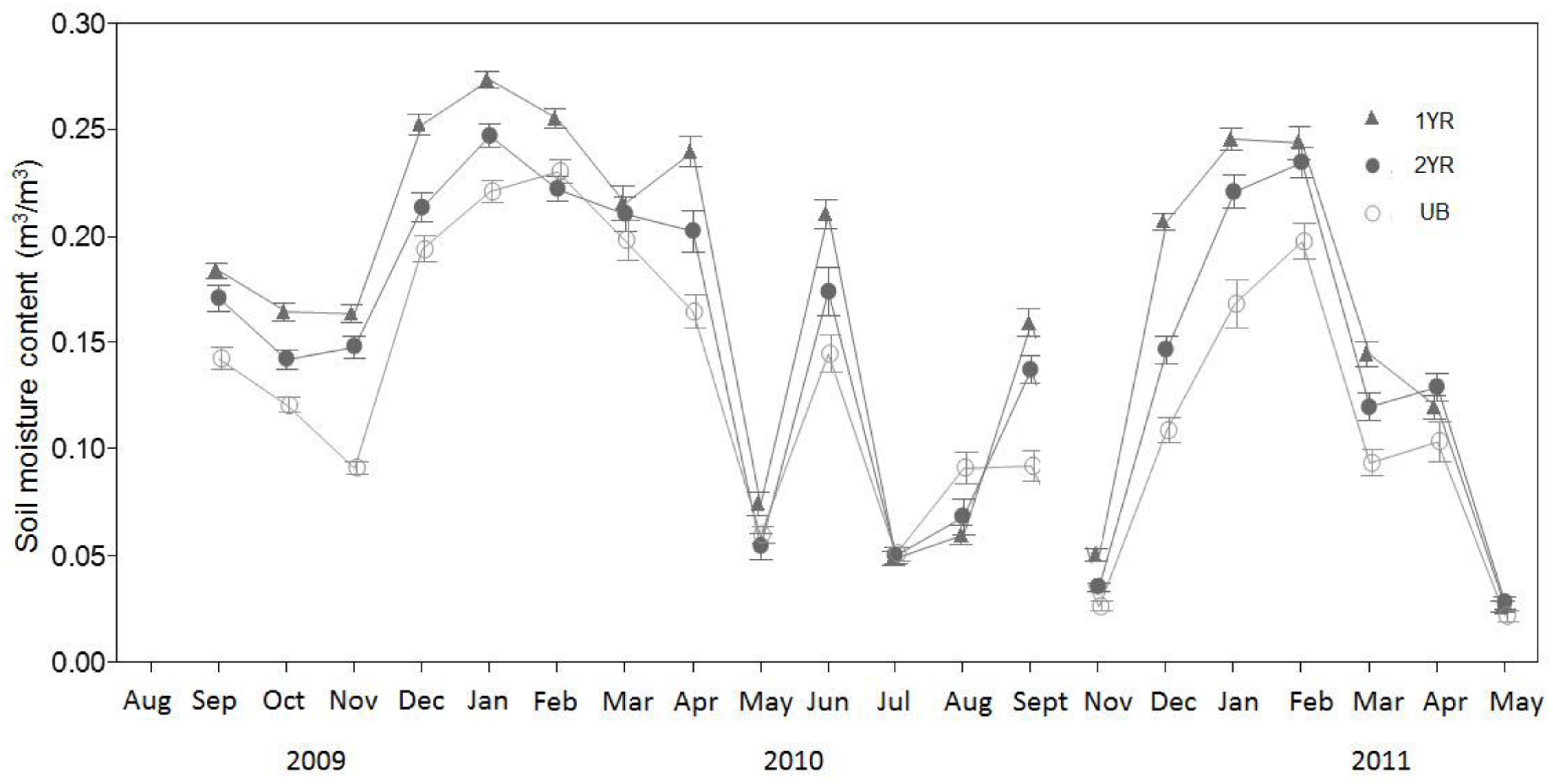

Results of our study show that Rs was consistently higher in long fire-excluded pine-grasslands than in frequently burned pine-grasslands, while there was little difference between annually and biennially burned communities. Although determining specific mechanisms for the observed pattern was beyond the scope of this study, there is evidence that Rs responded to environmental conditions influenced by fire regime effects on forest structure.

In the UB sites, aboveground living tree biomass (≈150 Mg ha⁻¹) was much greater than in the 1YR and 2YR sites (≈50 Mg ha⁻¹ and ≈80 Mg ha⁻¹, respectively), suggesting that the presumably corresponding higher tree root biomass in the unburned plots may have influenced Rs. Other studies investigating Rs rates across stand age and biomass gradients have found mean Rs rates to be higher in older stands with greater aboveground biomass [30,31]. In a trenching and exclusion experiment along a chronosequence of temperate forests in China, Luan et al. [32] found that Rs rates were significantly correlated with site basal area (R² = 0.59, p < 0.05).

Higher soil respiration rates in the unburned sites may have also been influenced by tree species composition and associated litter quality, specifically dominance by deciduous broadleaf trees, which were essentially absent in the burned plots. Soil CO₂ efflux rates have been shown in other studies to be lower in coniferous forests than in broad-leafed forests of the same soil type [5]. In a study of Rs rates in a mixed conifer–deciduous forest in Belgium, Yuste et al. [33] found that mean Rs rates were lower under conifer tree canopies than under deciduous canopies, with total estimated annual carbon flux approximately 50% greater in the deciduous sites (8.8 ± 2.2 Mg C ha⁻¹·year⁻¹) than in the coniferous sites (4.8 ± 0.7 Mg C ha⁻¹·year⁻¹). In their review, Raich and Tufekciogul [5] suggested that the observed differences in Rs between coniferous and deciduous forests may have been driven by forest litter production and quality, carbon allocation, and autotrophic contributions to total Rs.

In our study, litter and duff depths were highest in the UB sites, reflecting higher tree stocking and lack of fire, in turn possibly contributing to higher Rs rates in the UB sites. Other studies have reported that frequent fire greatly reduces the accumulation of litter and duff [34]. The lower soil carbon levels and lowest Rs in the 1YR plots compared to the 2YR plots likely reflect limited inputs of organic matter because of annual burning. The 2YR plots in turn are assumed to have lower organic matter inputs to the soil than the UB plots because of frequent fire, yet total carbon was higher in the 2YR plots, suggesting lower rates of decomposition than in the UB plots, consistent with the lower Rs measured. It appears that some characteristic of the frequently burned environment suppressed soil respiration, whether through its effect on Ra or Rh. Increased bulk density associated with burning, found in this and other studies [35,36,37], has been attributed to infiltration of micropore space by ash and char, which might negatively influence microbial activity. Similar patterns of higher total carbon in the mineral soil of burned than unburned areas have been seen in many long-term fire experiments in the southeastern USA [36,38,39,40,41].

The Rs rates observed in this study resulted in estimated total annual soil carbon emissions that were 37% and 25% lower in the 1YR and 2YR sites, respectively, than in the UB sites. The estimated monthly carbon emissions for all sites were similar to those reported by Samuelson et al. [28] for an unburned loblolly pine plantation in southwestern Georgia, USA, while the estimated total annual soil carbon emissions reported in our study were similar to those (14.10 Mg ha⁻¹·year⁻¹) reported by Maier and Kress [42] for an unburned loblolly pine plantation, but greater than those (7.78–9.66 Mg ha⁻¹·year⁻¹) reported by Samuelson et al. [28].

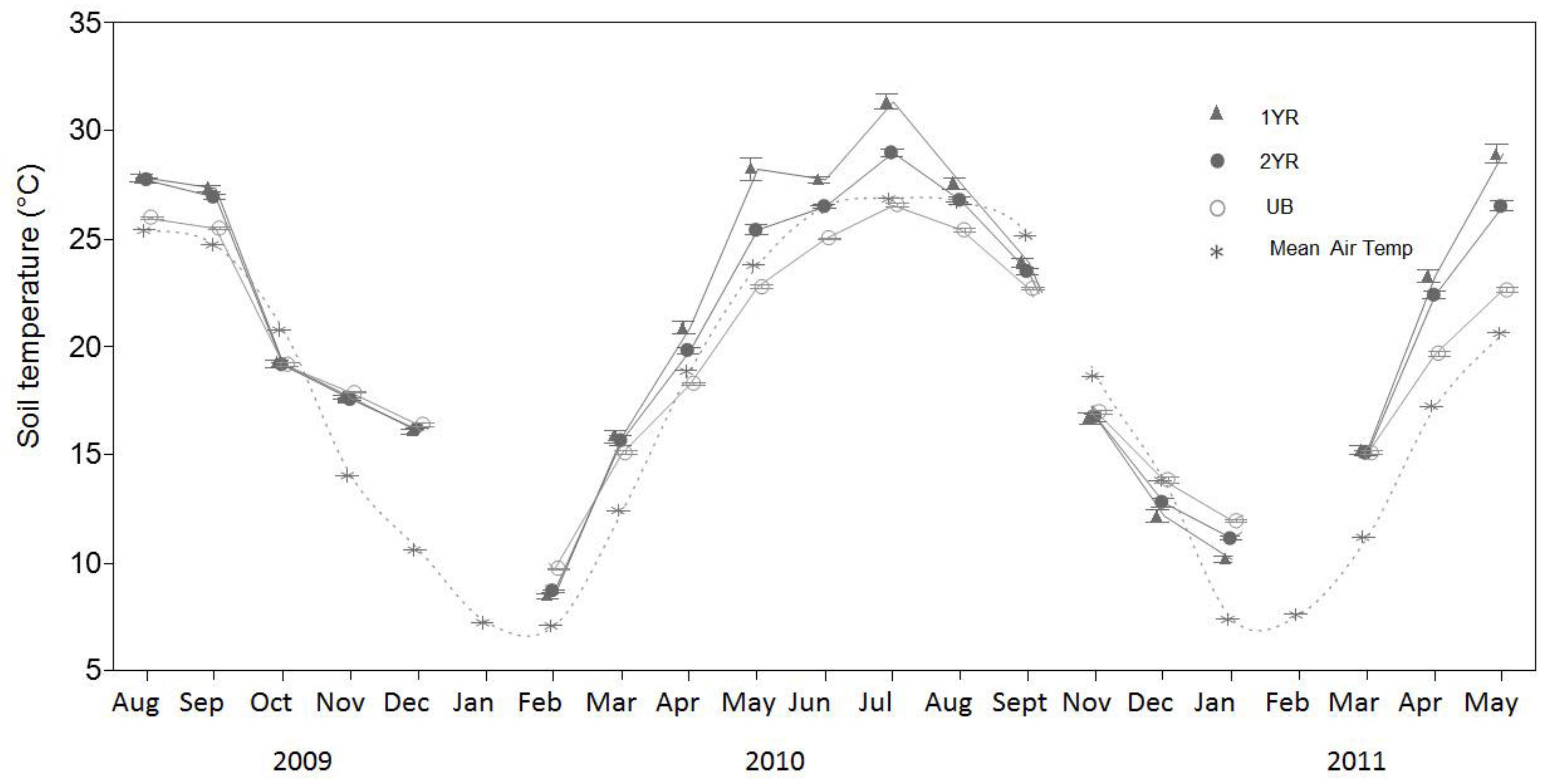

Temporal variability in Rs rates was explained more by soil temperature than by any other recorded parameter. These results were consistent with other studies in southeastern USA forest systems in which Rs rates were found to have strong correlations with soil temperature [28,42,43]. The correlations between Rs and Ts reported in this study (R² = 0.62–0.78 in linear models and R² = 0.60–0.80 in nonlinear models) were higher than those reported by Samuelson et al. [28] for a Georgia, USA loblolly pine plantation (R² = 0.38–0.56), much higher than those reported by Templeton et al. [43] across a range of loblolly pine plantations in 11 southeastern USA states (R² = 0.45), and similar to those reported for a loblolly pine plantation in North Carolina, USA (R² = 0.70) [42]. Caution must be used in comparing correlations among studies, as variations in the modeled relationship between Rs and Ts may be influenced by statistical modeling techniques regardless of the biogeochemical couplings observed in the field. While Ts explained the majority of the temporal variability in Rs rates, a lack of significant differences in Ts among treatments suggests that temperature was not the key factor contributing to the differences in Rs rates among the treatments.
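The caution about modeling technique can be illustrated with a minimal sketch. The Python below fits a linear model and an exponential model (via a log-linear regression) to the same soil temperature and efflux pairs, then reports R² for each on the original scale. The data are synthetic and hypothetical, the function names are illustrative, and the exponential form is an assumption (a common choice for the relationship between Rs and Ts), not necessarily the nonlinear model used in this study.

```python
import math

def least_squares(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r_squared(observed, predicted):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def fit_linear(ts, rs):
    """Fit Rs = intercept + slope * Ts and return its R^2."""
    slope, intercept = least_squares(ts, rs)
    predicted = [intercept + slope * t for t in ts]
    return r_squared(rs, predicted)

def fit_exponential(ts, rs):
    """Fit Rs = a * exp(b * Ts) by regressing ln(Rs) on Ts.

    R^2 is computed on the original (untransformed) scale so that it is
    comparable with the linear model's R^2.
    """
    b, ln_a = least_squares(ts, [math.log(r) for r in rs])
    a = math.exp(ln_a)
    predicted = [a * math.exp(b * t) for t in ts]
    return a, b, r_squared(rs, predicted)

# Synthetic, noise-free data (hypothetical, not from the study):
# Ts in degrees C, Rs in arbitrary flux units generated as exp(0.07 * Ts).
soil_temps = [8.0, 12.0, 16.0, 20.0, 24.0, 28.0]
efflux = [1.0 * math.exp(0.07 * t) for t in soil_temps]

r2_linear = fit_linear(soil_temps, efflux)
a, b, r2_exp = fit_exponential(soil_temps, efflux)
print(f"linear R^2 = {r2_linear:.3f}, exponential R^2 = {r2_exp:.3f}")
```

The same observations yield different R² values depending on the model form and on the scale at which R² is computed, which is one reason correlations reported by different studies are not directly comparable.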

Over the course of this study’s sampling period, relationships between Rs and Ts varied seasonally, with stronger relationships during the winter seasons. This pattern was evident in the R² values of the seasonal linear and nonlinear models as well as in the seasonal Q10 values. Distinct seasonal variations in Q10 values have been noted by others [44,45], and such variations have potential implications for modeling Rs values based on temperature measurements. Yuste et al. [45] suggested that while annual Q10 values may sufficiently model annual Rs rates, seasonal or shorter-term estimates of Rs should be based on season-specific Q10 functions that capture the seasonal variation in Rs temperature response, as observed in our study.
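As a rough illustration of season-specific Q10 estimation, the sketch below assumes the common exponential temperature response Rs = a·exp(b·Ts), under which Q10 = exp(10·b), the factor by which Rs changes for a 10 °C rise in Ts. The function names, the seasonal grouping, and all numeric values are hypothetical placeholders chosen for illustration; they are not results or methods from this study.

```python
import math

def q10_from_observations(ts, rs):
    """Estimate Q10 by regressing ln(Rs) on Ts; Q10 = exp(10 * slope)."""
    n = len(ts)
    log_rs = [math.log(r) for r in rs]
    mean_t = sum(ts) / n
    mean_l = sum(log_rs) / n
    slope = (
        sum((t - mean_t) * (l - mean_l) for t, l in zip(ts, log_rs))
        / sum((t - mean_t) ** 2 for t in ts)
    )
    return math.exp(10.0 * slope)

def synthetic_efflux(q10, base_rate, temps):
    """Generate noise-free Rs values with a known Q10 (illustration only)."""
    return [base_rate * q10 ** (t / 10.0) for t in temps]

# Hypothetical seasonal data: soil temperatures (deg C) paired with
# synthetic efflux values built from arbitrary Q10 values (2.5 and 1.5).
winter_temps = [6.0, 9.0, 12.0, 15.0]
summer_temps = [22.0, 24.0, 26.0, 28.0]
observations = {
    "winter": (winter_temps, synthetic_efflux(2.5, 0.5, winter_temps)),
    "summer": (summer_temps, synthetic_efflux(1.5, 1.0, summer_temps)),
}

# One Q10 per season rather than a single annual value.
seasonal_q10 = {
    season: q10_from_observations(ts, rs)
    for season, (ts, rs) in observations.items()
}
for season, q10 in seasonal_q10.items():
    print(f"{season}: Q10 = {q10:.2f}")
```

Fitting each season separately, instead of pooling all observations into one annual fit, is what allows a shorter-term Rs estimate to use a temperature sensitivity appropriate to that season.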

The seasonal variation in the relationship between Rs and Ts may be the result of phenology-related shifts in the relative contributions of Ra and Rh to Rs. Previous research partitioning the sources of Rs has shown that during periods of aboveground vegetative growth, Ra contributions to Rs can increase relative to Rh, as plants allocate recent C photosynthate belowground, driving higher root maintenance, root growth, and mycorrhizal fungal respiration rates [6,46]. In addition, other studies have shown that during periods of aboveground vegetative growth, the relationship between Ts and Rs weakens as other variables such as soil moisture and available photosynthetically active radiation (PAR) become important in governing belowground C allocation by plants [47,48,49]. Similar to our findings, Fenn et al. [50] found that soil temperature explained less of the variation in Rs during the summer than during the spring in a multi-season study of Rs rates in a woodland in Oxfordshire, UK.