1. Introduction

Laser scanner sensors and stereo vision systems provide fast and accurate three-dimensional (3D) information on objects, buildings, and landscapes without direct contact with the measured objects. This information is useful in several remote sensing applications, such as digital terrain model generation [1], 3D city modeling [2], and feature extraction [3]. Laser scanner sensors can be placed on aerial platforms (aerial laser scanners, ALS) or terrestrial platforms (terrestrial laser scanners, TLS). TLS can be categorized into two types: static and dynamic. In static TLS, data are collected from base stations: a sensor is fixed at a base station, from which the point cloud is acquired. Dynamic TLS or mobile laser scanner (MLS) sensors are installed on a mobile platform and have a navigation system based on global navigation satellite systems (GNSS) and inertial measurement units (IMU). This work focuses on segmenting 3D point clouds produced by MLS sensors to locate curbs in urban environments. An accurate method for determining the location of curbs, road boundaries, and urban furniture is crucial for several applications, including 3D urban modeling and the development of autonomous navigation systems [4,5]. Moreover, accurate and automatic detection of cartographic entities saves a great deal of time and money when creating and updating cartographic databases [6].

The current trend in remote sensing feature extraction is to develop methods that are as automatic as possible, that is, algorithms that obtain accurate results with the least possible human intervention. A fully automatic method for locating and extracting every piece of urban furniture in a city is difficult to create because urban furniture has a heterogeneous typology; every city, and almost every street, has its own typical furniture. Most works on feature extraction have proposed semi-automatic methods in which the user must control a few settings for accurate detection. In this work, we have developed a semi-automatic method to detect street curbs by segmenting the measured point cloud. Furthermore, a method to extract the upper and lower curb edges is proposed. We attempted to minimize the number of thresholds: in the current method, the user must control three settings that depend directly on features of the studied area, namely the height of the curb, the point density in the curb’s vertical wall, and the roughness.

The paper is organized as follows: Section 2 summarizes previous studies related to ours; Section 3 presents the proposed method to segment the point cloud; and Section 4 details the results obtained in two case studies performed with different MLS sensors. Finally, our conclusions and future lines of work are described in Section 5.

2. Related Work

Many applications for TLS have been reported since the appearance of these systems. The 3D modeling of buildings and indoor areas [7,8], the geometry verification of tunnels [9], the detection of urban furniture and pole-like objects [10], and the modeling and reconstruction of 3D trees [11] are some of the applications for which TLS sensors have been used. Additionally, several applications for point clouds acquired with MLS sensors exist in the current literature, such as vertical wall extraction [12], façade modeling [13], building footprint detection [14], and the extraction of pole-like objects, such as traffic signs, lamp posts, or tree trunks [15,16].

The amount of point cloud data provided by laser scanning systems is extremely large: for millions of points, it comprises (x, y, z) coordinates and additional information such as intensity, Global Positioning System (GPS) time, or the scanning angle. The analysis and processing of these data are computationally complex. Hence, to reduce processing times and the complexity of the datasets, the point clouds are often simplified before an algorithm is used for feature extraction, mapping, or decision-making. In some cases, the point clouds are segmented into several clusters that are individually analyzed and classified [17]. In [18], a segmentation method is proposed that is based on the difference of normals of a point and its neighborhood, applied in a multi-scale approach. The difference of normals algorithm is efficient for segmenting a large 3D point cloud into various objects of interest at different scales, such as cars, road curbs, trees, or buildings. In other works, the 3D point cloud is divided into smaller clouds formed by slices of the original to reduce the amount of data. In [19], as a preliminary step in the detection of street curbs, the measured point cloud is divided into several road cross sections using the GPS time data. Another option to make the point cloud more manageable is to decompose the measured data into a 3D voxel grid. In [20], a method is presented that performs a 3D scene analysis from streaming data. This procedure consists of a hierarchical segmentation formed by multiple consecutive segmentations of the point cloud. The original point cloud is sparsely quantized into infinitely tall pillars; each pillar is quantized into coarse blocks, and each block contains a linked list of its occupied voxels. In that work, voxels are considered the atomic unit that object categories are assigned to. The original point cloud is projected into a 2D raster image that represents the XY surface. Thus, the 3D information is reduced to a 2D raster in which image processing techniques can be applied to determine the location of the target features.
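The projection of a point cloud onto a 2D raster can be illustrated with a minimal sketch. It is not taken from any of the cited works: the function name `rasterize`, the cell size, and the choice of stored attributes (minimum height, maximum height, and point count per cell) are assumptions made for illustration only.

```python
def rasterize(points, cell_size):
    """Project (x, y, z) points onto a regular XY grid.

    Returns a dict mapping (row, col) -> (z_min, z_max, count).
    The stored attributes are an illustrative choice, not a fixed convention.
    """
    # Use the lower-left corner of the cloud as the grid origin.
    x0 = min(p[0] for p in points)
    y0 = min(p[1] for p in points)
    cells = {}
    for x, y, z in points:
        col = int((x - x0) // cell_size)
        row = int((y - y0) // cell_size)
        z_min, z_max, n = cells.get((row, col), (z, z, 0))
        cells[(row, col)] = (min(z_min, z), max(z_max, z), n + 1)
    return cells


# Four hypothetical points (metres) falling into two 0.5 m cells.
points = [(0.0, 0.0, 10.00), (0.1, 0.1, 10.02),
          (0.6, 0.1, 10.15), (0.7, 0.2, 10.01)]
grid = rasterize(points, cell_size=0.5)
for cell, (z_min, z_max, n) in sorted(grid.items()):
    print(cell, "height range:", round(z_max - z_min, 3), "points:", n)
```

Once each cell carries a few scalar attributes, the grid can be treated as an image, which is what makes standard image processing operators applicable.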

The work presented in this paper is devoted to curb detection from MLS point clouds. In the current literature, there are many studies related to this issue. Some of them use point clouds obtained from stereo vision as input data; recently, several authors have focused on the detection of road markings, lines, and roadsides in straight and curved areas based on data obtained by stereo cameras [21,22]. There are also some methods in the current literature used to detect curbs and roadsides based on point clouds measured with TLS and MLS sensors. In [23], a method to detect curbs using 3D scanner sensor data was presented. The detection process starts with the voxelization of the point cloud and the separation of those points that represent the ground. Later, candidate points for curbs are selected based on three spatial variables: height difference, gradient value, and normal orientation. The gradient value, that is, the local elevation rate of change, is obtained by applying a 3 × 3 Sobel operator in both the horizontal and vertical directions to a 2D elevation map. Using a short-term memory technique, every point located in a voxel whose vertical projection is in the road is considered a false positive and is deleted. Finally, the curb is detected by fitting a parabolic model to the candidate points and applying a RANSAC algorithm to remove false positives. The performance of the method depends on the correct selection of the thresholds for each of the three variables used. This method provided a detection rate of about 98% in two studied datasets.
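The gradient step can be sketched as follows. This is a generic, unnormalized 3 × 3 Sobel operator applied to a small elevation map, not the implementation used in [23]; the function name `sobel_gradient`, the example elevations, and the decision to leave border cells at zero are assumptions.

```python
import math

# Standard 3 x 3 Sobel kernels (horizontal and vertical derivatives).
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_gradient(elev):
    """Return the gradient magnitude of a 2D elevation map.

    Border cells are left at 0.0 because the kernel does not fit there.
    """
    rows, cols = len(elev), len(elev[0])
    grad = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = gy = 0.0
            for i in range(3):
                for j in range(3):
                    v = elev[r + i - 1][c + j - 1]
                    gx += SOBEL_X[i][j] * v
                    gy += SOBEL_Y[i][j] * v
            grad[r][c] = math.hypot(gx, gy)
    return grad


# Hypothetical elevation map (metres): flat road, then a 0.15 m step.
elev = [[0.0, 0.0, 0.0, 0.15, 0.15] for _ in range(4)]
grad = sobel_gradient(elev)
print([round(g, 2) for g in grad[1]])
```

Cells on the flat road produce a zero response, while cells next to the step produce a large one, so a threshold on the magnitude separates curb candidates from the road surface.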

Weiss and Dietmayer [24] automatically determined the position of lane markings, sidewalks, reflection posts, and guardrails using data from vertically and horizontally scanning automotive laser scanners. For curb detection, a third-order Gaussian filter is applied to sharpen the vertical distance profile, which defines the shape of the curb. This profile is divided into sections of a certain width, forming an accumulative histogram. Candidate curbs are found through a histogram-based algorithm that searches for those slots of the histogram that are candidates to represent curbs and guardrails. Because not every candidate is a valid curb, the locations of real curbs are determined by analyzing the heights, slopes, and interruptions of every polygon.

Belton and Bae [25] proposed a method to automate the identification of curbs and signals in a few steps. The rasterization of the 3D point cloud into a 2D grid structure allows each cell to be examined separately. First, the road is extracted; then, cells that are adjacent to the road are considered likely to contain curbs. Points in these cells are used to determine the vertical plane of the curb, from which a 2D transversal section is calculated. The top and bottom of the curb are determined as the points that are furthest above and below the line defined by the two furthermost points in the 2D section. This procedure has several limitations. The proposed method would not provide good results for concave and non-horizontal roads; furthermore, it could provide poor results for shorter or curved curbs because the edges may be confused with other points of the studied profile.
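The edge selection rule described above can be illustrated with a short sketch. It is one possible reading of that rule rather than the code of [25]: "the two furthermost points" is interpreted here as the pair of profile points that are farthest apart, and the function name `curb_edges` and the sample profile are hypothetical.

```python
import math
from itertools import combinations


def curb_edges(profile):
    """Return (top, bottom) of a curb in a 2D cross section.

    profile: list of (distance, height) points.
    The reference line joins the two points that are farthest apart
    (an assumed interpretation of "the two furthermost points").
    """
    a, b = max(combinations(profile, 2),
               key=lambda pair: math.dist(pair[0], pair[1]))
    if a[0] > b[0]:
        a, b = b, a  # orient the line left to right so "above" is positive

    def signed_offset(p):
        # Cross product; proportional to the signed distance from the line.
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

    top = max(profile, key=signed_offset)
    bottom = min(profile, key=signed_offset)
    return top, bottom


# Hypothetical profile (metres): road, 0.15 m vertical face, sidewalk.
profile = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0),
           (1.0, 0.15), (1.5, 0.15), (2.0, 0.15)]
top, bottom = curb_edges(profile)
print("top:", top, "bottom:", bottom)
```

On a concave or sloped profile the reference line no longer brackets the curb face cleanly, which is consistent with the limitations noted for this procedure.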

Yang et al. [19] carried out edge detection by dividing the measured point cloud into 2D sections using the GPS time at which every point was registered. They applied a moving window to these 2D sections to detect the roads and road boundaries. Curbs were detected by analyzing the elevation and shape change in the moving windows studied. They also presented a method to detect curbs in occluded parts of the cloud, but some problems in areas with irregular shapes were detected. The value of the parameters and the length of the moving window are critical to the performance of the proposed method.

A recent work by Hervieu and Soheilian [26] describes a method to extract curbs and ramps, as well as to reconstruct lost data in areas hidden by obstacles in the street. A system for the reconstruction of road and sidewalk surfaces is also proposed. They fit a plane to a group of points from the cloud and compute the angular distance between the normal vector and the z vector. After that, a prediction/estimation model is applied to detect road edges. The procedure requires the user to manually select the curb direction, which is not always easy. This method could fail in curved or occluded sections. To solve this problem, they propose a semi-automatic solution in which the user must choose some points of the non-detected curb to reconstruct these sections.
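The angular distance between a plane normal and the z vector can be sketched as follows. As a simplification, the plane is defined by exactly three points instead of being fitted to a group of points, so this is not the procedure of [26]; the function names and sample coordinates are hypothetical.

```python
import math


def plane_normal(p0, p1, p2):
    """Normal of the plane through three points (cross product of two edges)."""
    ux, uy, uz = (p1[i] - p0[i] for i in range(3))
    vx, vy, vz = (p2[i] - p0[i] for i in range(3))
    return (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)


def angle_to_z(normal):
    """Angle in degrees between a normal and the z axis, ignoring orientation."""
    nx, ny, nz = normal
    norm = math.sqrt(nx * nx + ny * ny + nz * nz)
    return math.degrees(math.acos(min(1.0, abs(nz) / norm)))


# Hypothetical patches: a horizontal road patch and a vertical curb face.
road = plane_normal((0, 0, 0), (1, 0, 0), (0, 1, 0))
face = plane_normal((0, 0, 0), (1, 0, 0), (0, 0, 1))
print(angle_to_z(road), angle_to_z(face))
```

Angles near 0° indicate road or sidewalk surfaces, while angles near 90° indicate near-vertical surfaces such as a curb face.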

In [27], Kumar et al. developed a method to detect road boundaries in both urban and rural roads, where the non-road surface consists of grass and soil and the edges are not as easily defined by slope changes alone. A 2D rasterization of the slope, reflectance, and pulse width of the detected point cloud is carried out. Gradient vector flow and a balloon model are combined to create a parametric active contour model, which allows the road boundaries to be determined. Roadside detection is carried out using a snake curve, which is initialized based on the navigation track of a mobile van along the road section. The snake curve moves in an iterative process until it converges on the roadsides, where the minimum energy state is located. This method has been tested in straight sections and provided good results, but its performance in curved sections is unknown. The procedure is computationally complex, which could make the detection process too slow.

Serna and Marcotegui [28] propose a method to create obstacle maps from MLS point clouds. They construct range images by projecting 3D points and use morphological filters to remove isolated and non-elongated structures. Our approach is somewhat similar, although there are significant differences: we do not use the minimal range image to detect the lowest points, and we apply morphological operators in a different way. We also add density and roughness as parameters to detect the curbs.

Apart from the methods described in the current literature, there are other solutions for curb detection in commercial software packages [29], but their technical details could not be found in the literature. These solutions are not fully automatic; users must provide some initial information to the software.

5. Conclusions

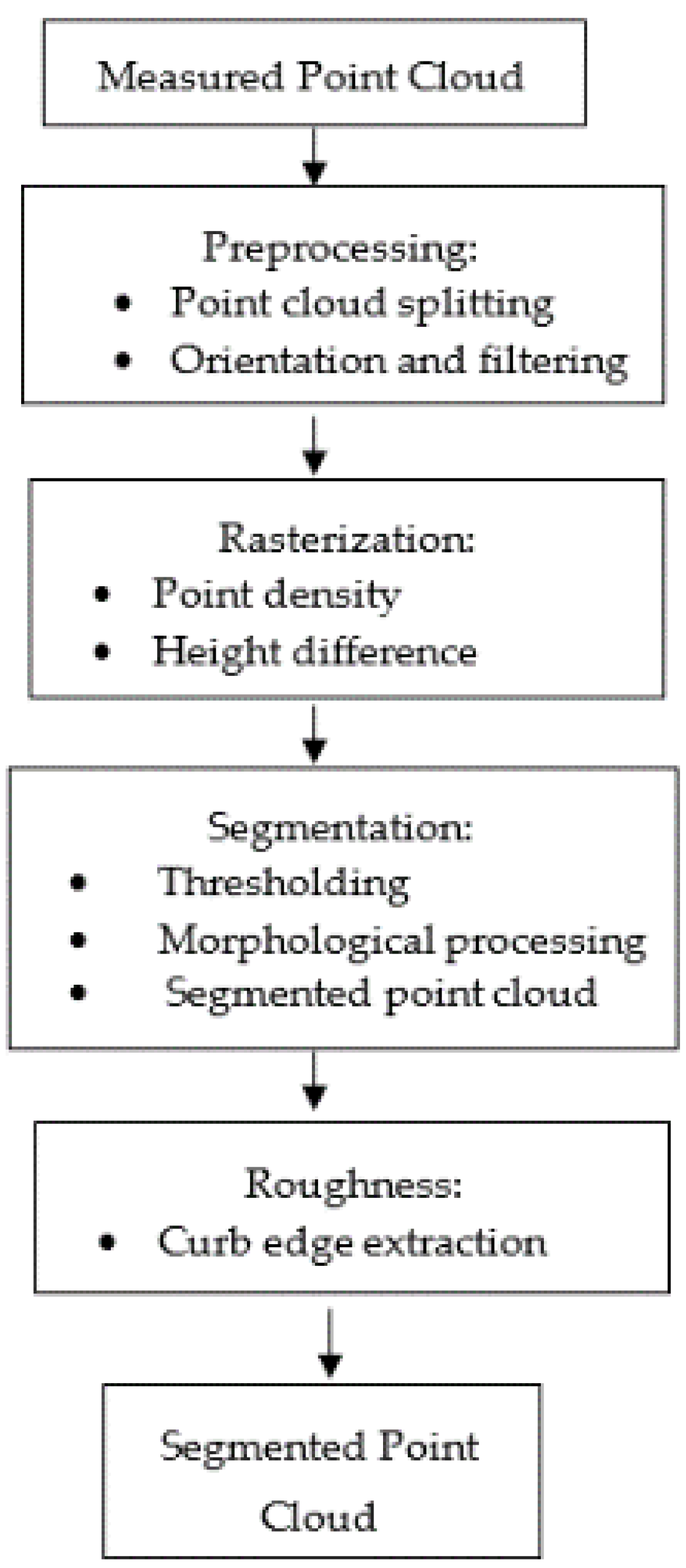

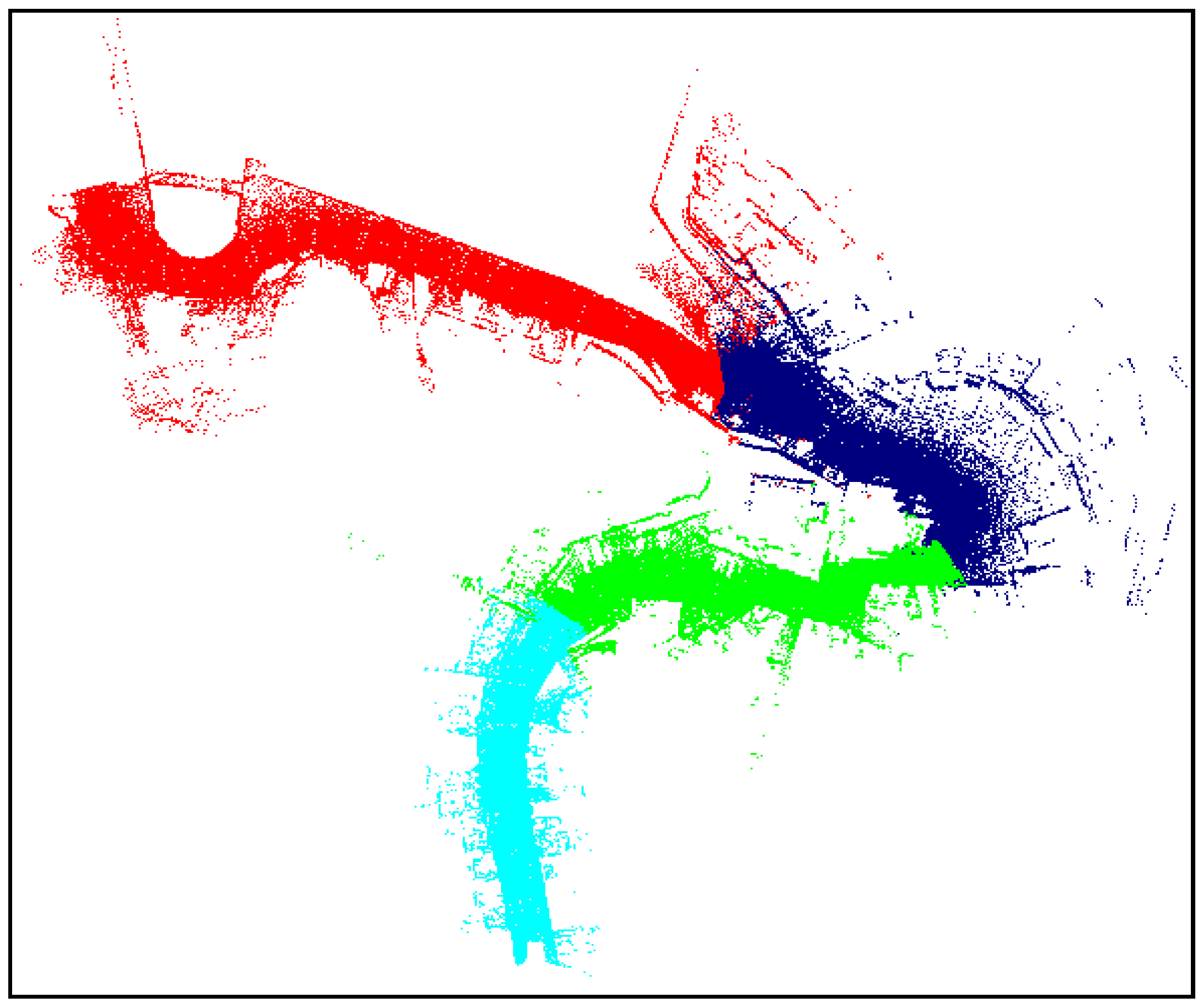

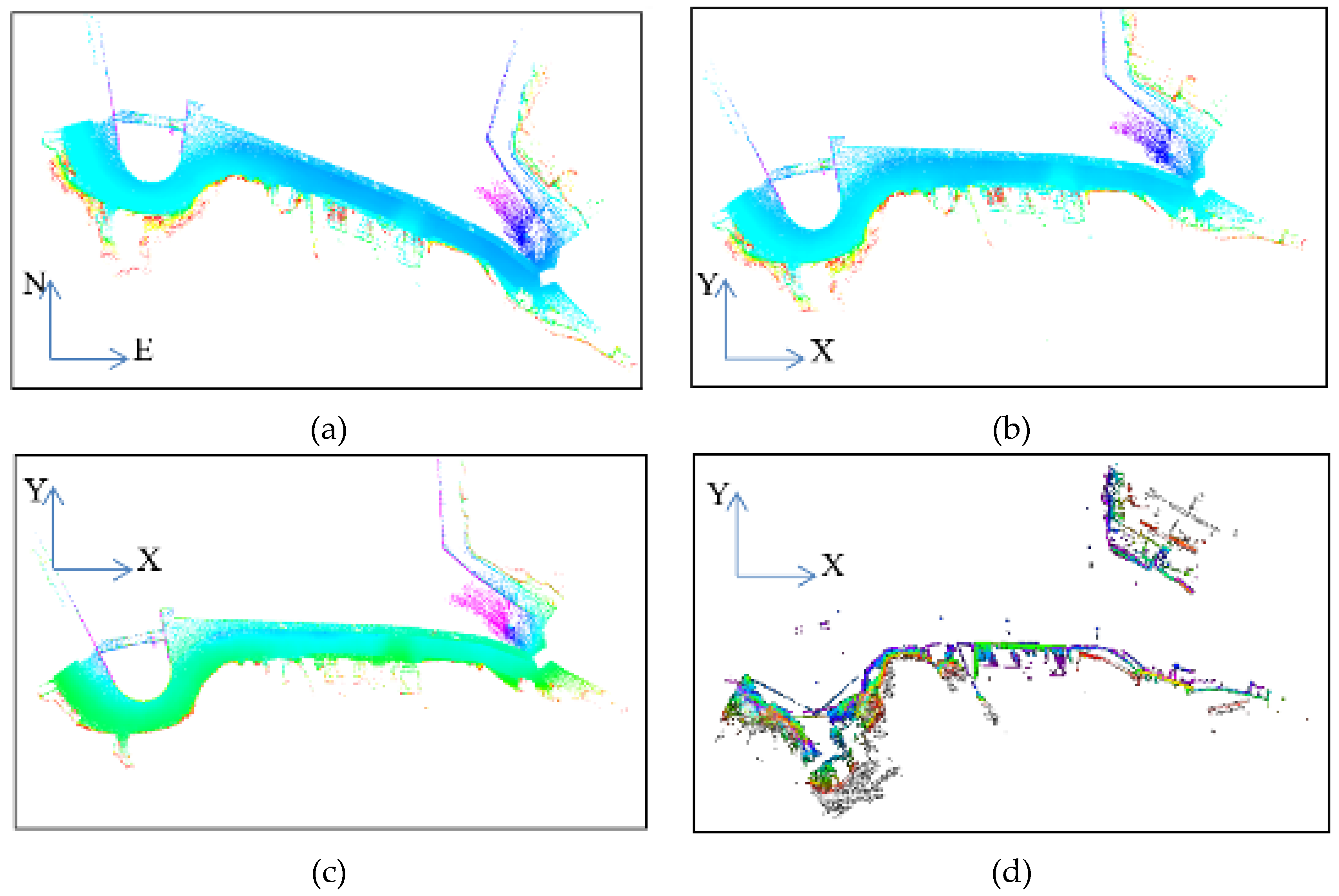

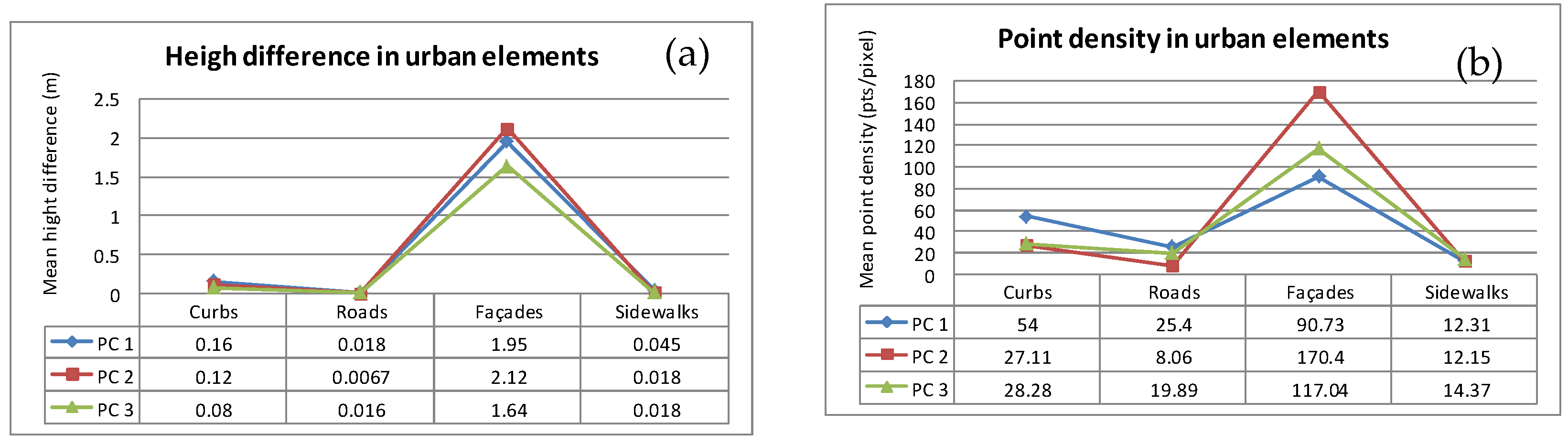

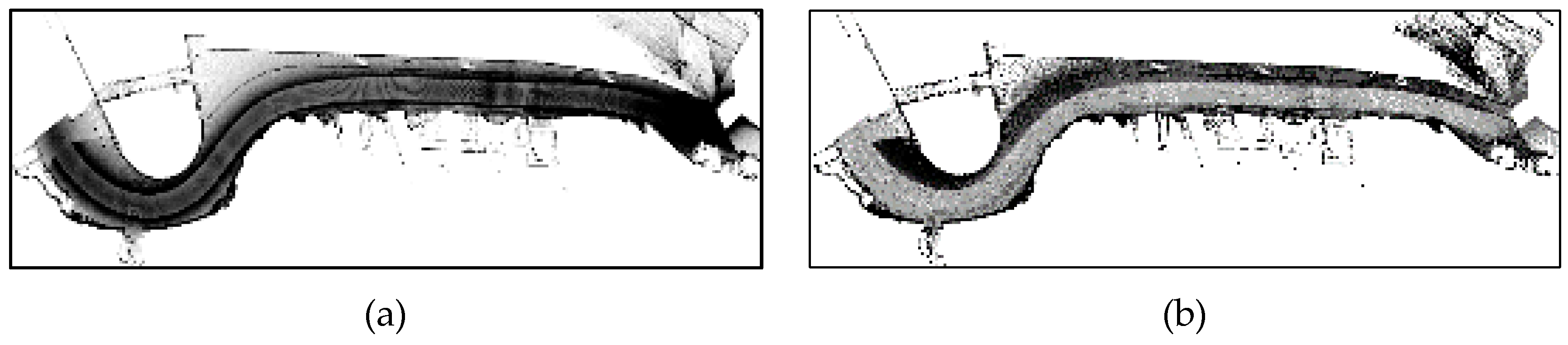

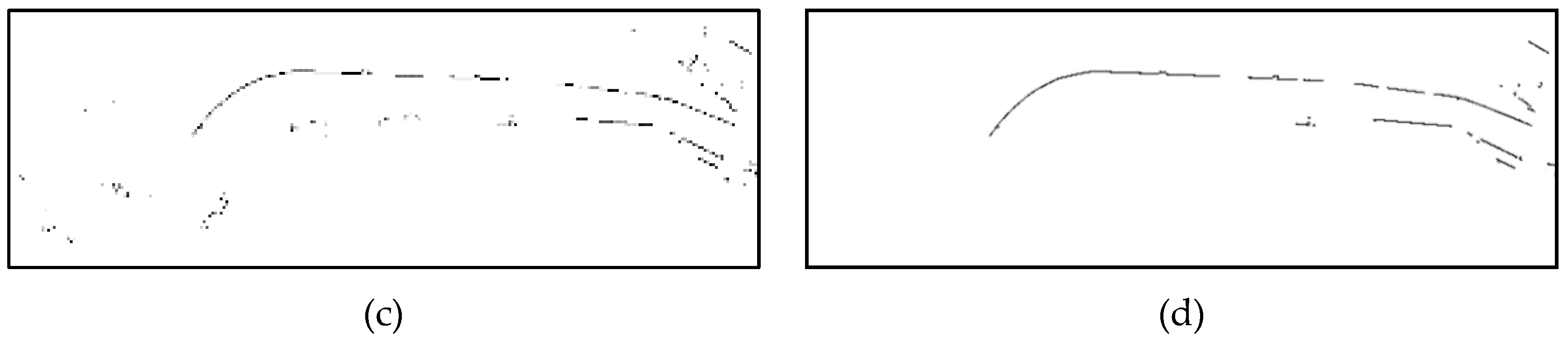

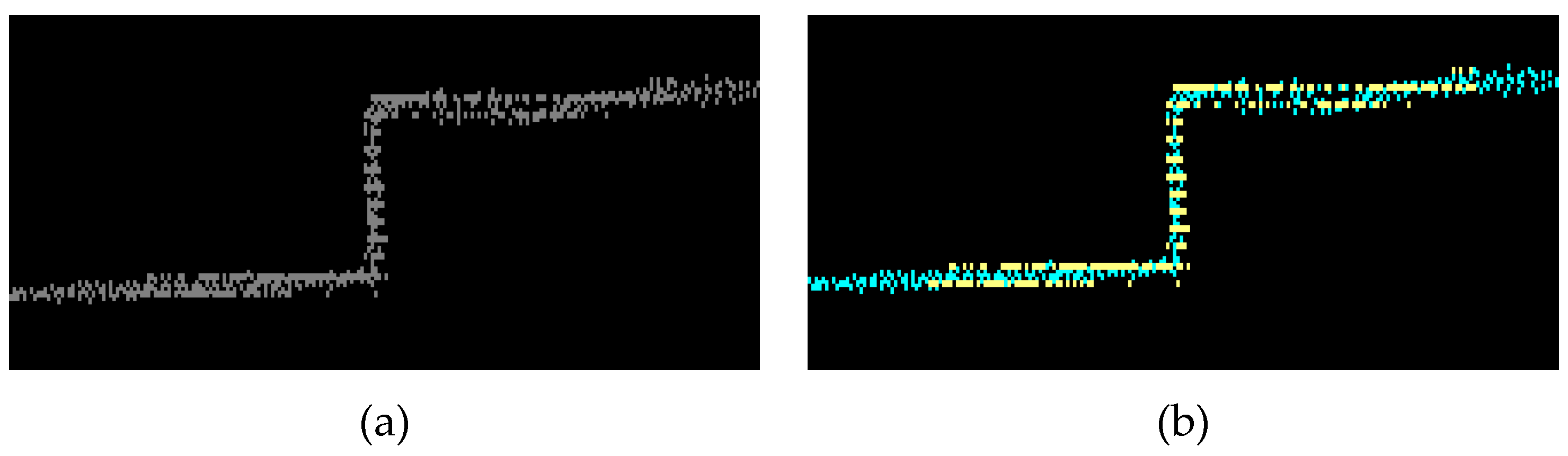

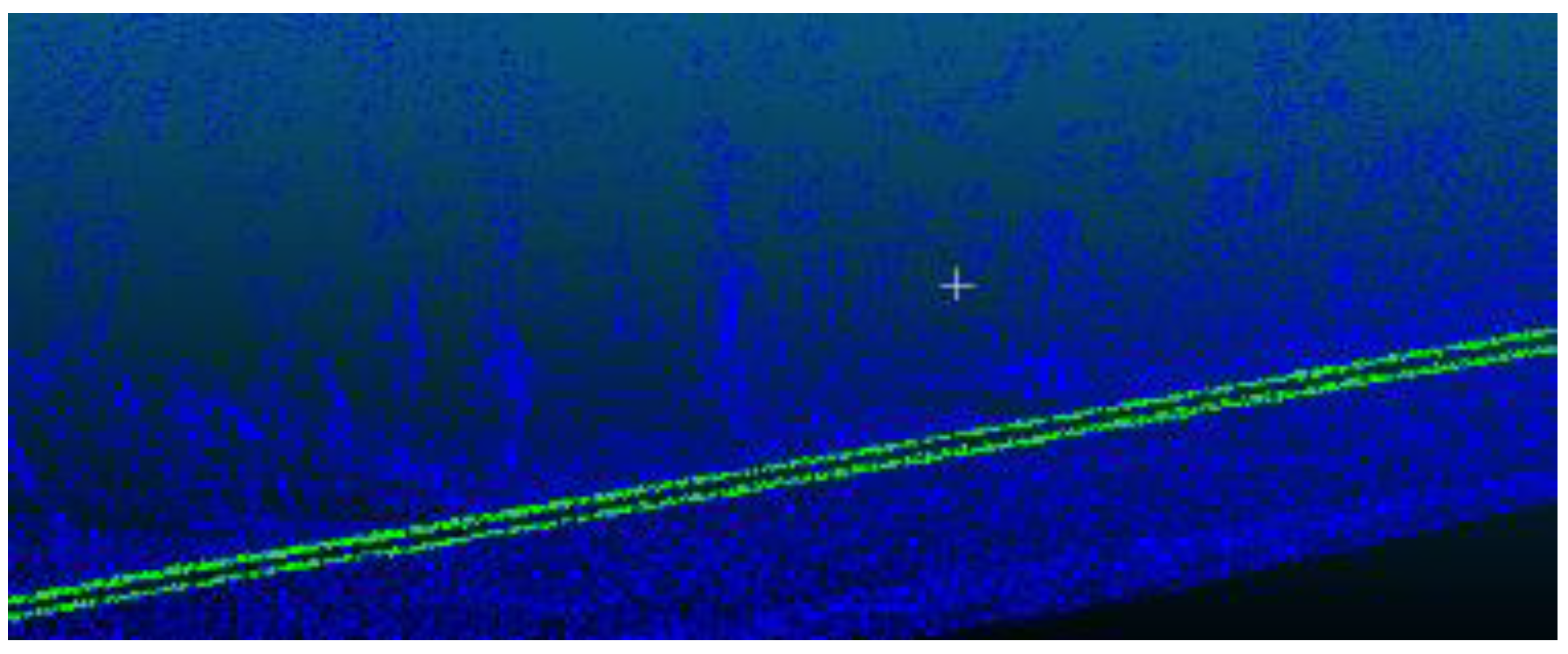

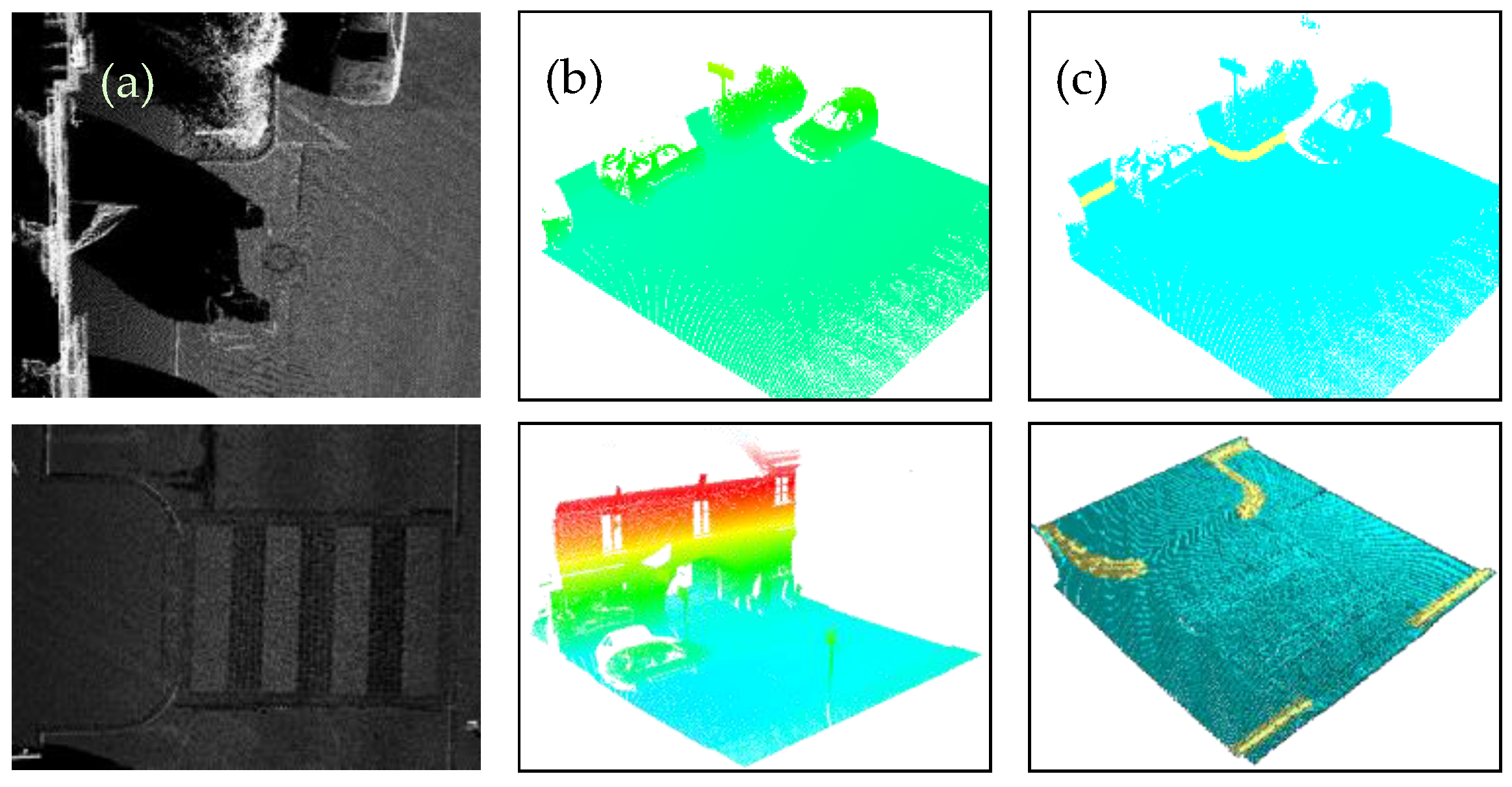

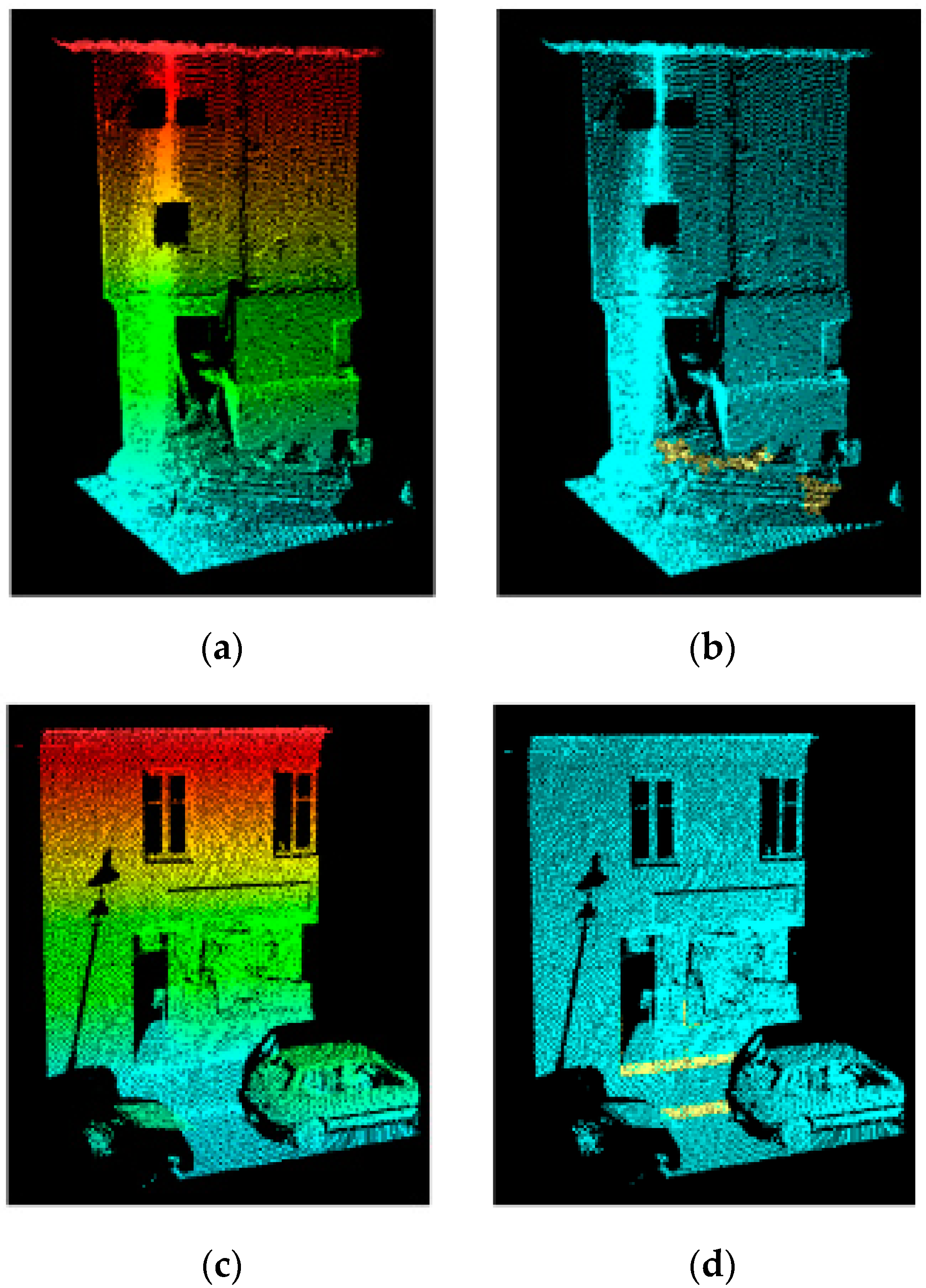

In this paper, a method to detect curbs from MLS point cloud data was presented. This method involves the rasterization of the point cloud as a preliminary step before applying image processing techniques, such as thresholding and a morphological opening operation, to determine the location of curbs in the image.
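Thresholding followed by a morphological opening can be illustrated with a minimal sketch on a small raster. It is a generic example, not the implementation evaluated in this paper: the height-difference values, the 0.10 m to 0.20 m threshold range, and the horizontal 1 × 3 structuring element are all assumptions chosen for illustration.

```python
def threshold(img, lo, hi):
    """Binary mask of cells whose value lies in [lo, hi]."""
    return [[1 if lo <= v <= hi else 0 for v in row] for row in img]


def erode(mask, offsets):
    """Binary erosion; cells outside the image count as background."""
    rows, cols = len(mask), len(mask[0])

    def at(r, c):
        return mask[r][c] if 0 <= r < rows and 0 <= c < cols else 0

    return [[int(all(at(r + dr, c + dc) for dr, dc in offsets))
             for c in range(cols)] for r in range(rows)]


def dilate(mask, offsets):
    """Binary dilation with the same structuring element."""
    rows, cols = len(mask), len(mask[0])

    def at(r, c):
        return mask[r][c] if 0 <= r < rows and 0 <= c < cols else 0

    return [[int(any(at(r - dr, c - dc) for dr, dc in offsets))
             for c in range(cols)] for r in range(rows)]


def opening(mask, offsets):
    """Opening = erosion followed by dilation."""
    return dilate(erode(mask, offsets), offsets)


# Hypothetical per-cell height differences (metres).
height_diff = [
    [0.02, 0.01, 0.03, 0.02, 0.01],
    [0.12, 0.14, 0.13, 0.15, 0.12],  # elongated curb-like row
    [0.01, 0.02, 0.01, 0.03, 0.02],
    [0.02, 0.13, 0.01, 0.02, 0.01],  # isolated cell of similar height
]
ELEMENT = [(0, -1), (0, 0), (0, 1)]  # horizontal 1 x 3 structuring element
mask = threshold(height_diff, 0.10, 0.20)
curbs = opening(mask, ELEMENT)
for row in curbs:
    print(row)
```

The opening keeps the elongated row and removes the isolated cell, even though both pass the height threshold. A real raster would need structuring elements matched to the expected curb orientation and cell size.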

The method was tested on two datasets measured by different MLS sensors, both corresponding to urban environments. The results show completeness and correctness indices higher than 90% and a quality value around 85% in both test sites. From these results, it is possible to conclude that the proposed method can be useful (1) for curb detection in straight and curved road sections and (2) for both MLS data and stereo vision point clouds, because it is independent of the scanning geometry. However, it is still difficult to deal with occluded curbs in shadowed areas and with false positives caused by elements with properties similar to those of curbs. In the near future, other features will be incorporated to enhance this method by decreasing the false positive rate, improving curb edge detection, and estimating the location of road boundaries when they are occluded in the point cloud.
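For readers unfamiliar with these indices, the sketch below uses the definitions commonly adopted in feature extraction evaluation, based on true positives (TP), false positives (FP), and false negatives (FN). Whether these match the exact formulation used in this paper is an assumption, and the counts are invented for illustration; they are not results from the two test sites.

```python
def completeness(tp, fn):
    """Share of the reference curb that was detected: TP / (TP + FN)."""
    return tp / (tp + fn)


def correctness(tp, fp):
    """Share of the detections that are real curb: TP / (TP + FP)."""
    return tp / (tp + fp)


def quality(tp, fp, fn):
    """Combined index penalizing both error types: TP / (TP + FP + FN)."""
    return tp / (tp + fp + fn)


# Hypothetical counts (for example, metres of curb), for illustration only.
tp, fp, fn = 92, 8, 8
print(f"completeness = {completeness(tp, fn):.3f}")
print(f"correctness  = {correctness(tp, fp):.3f}")
print(f"quality      = {quality(tp, fp, fn):.3f}")
```

Because quality counts both false positives and false negatives in its denominator, it is never higher than either of the other two indices.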