Private and Secure Distribution of Targeted Advertisements to Mobile Phones

Abstract

1. Introduction

1.1. Problem Statement

1.2. Contribution and Paper Layout

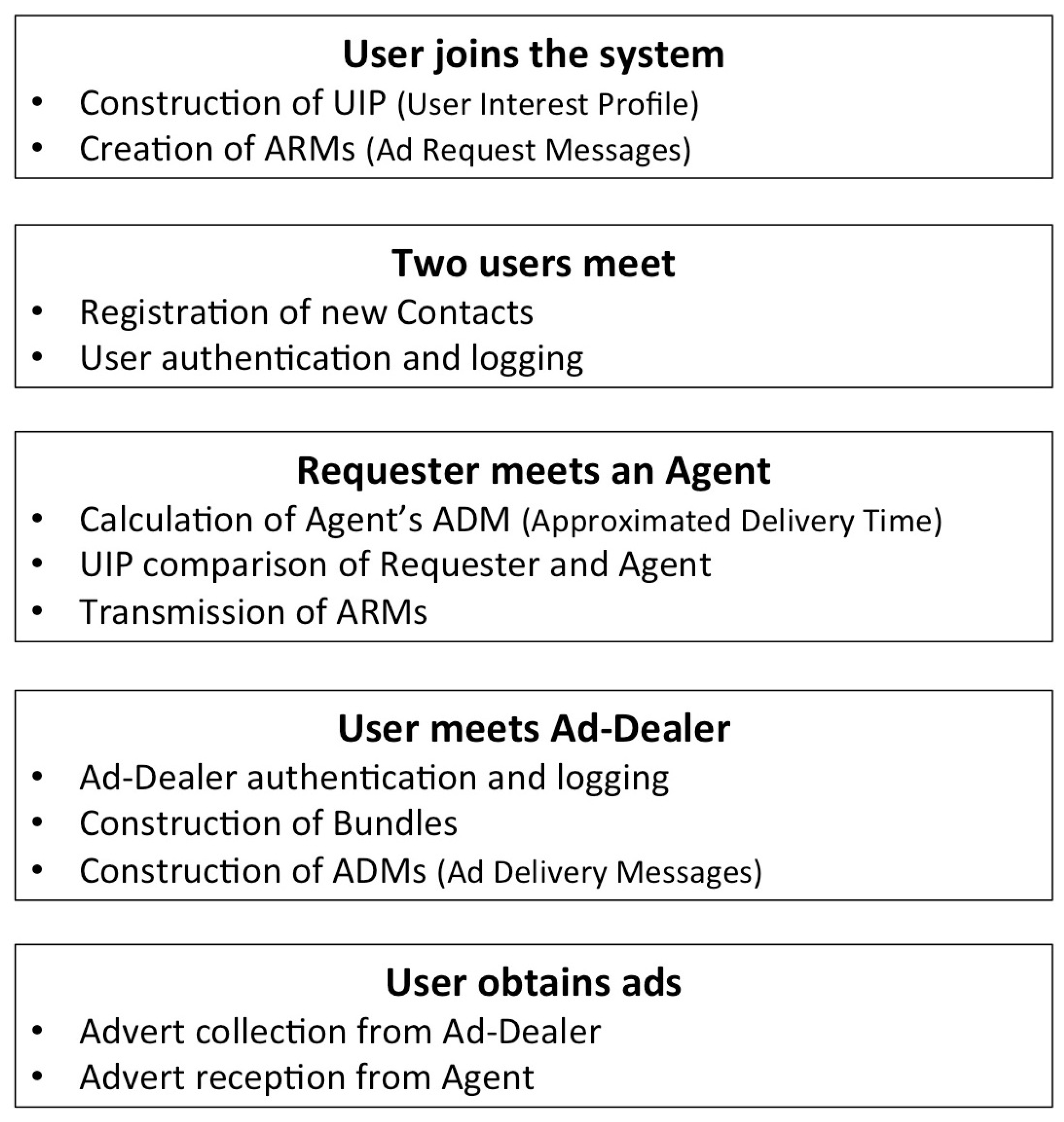

2. The OBA Model

3. Related Work

3.1. Classification of Available Systems

- Trusted proxy-based anonymity.

- Selection from pool of adverts.

- Anonymous direct download.

3.1.1. Trusted Proxy-Based Anonymity

3.1.2. Selection from Pool of Adverts

3.1.3. Anonymous Direct Download

3.2. Literature Review

3.3. Classification Assessment

4. Proposed System Overview

4.1. System Stakeholders

4.2. Trust and Threat Model

4.3. Evaluation Criteria

- User privacy against the Broker and Ad-Dealers: Neither the Broker nor his/her Ad-Dealers should be able to obtain any information that could be used to link a user’s true identity to his/her advertising interests.

- User privacy against other users: Users should not have precise knowledge of the advertising interests of other users within their social circle.

- Protection from fake or harmful content: Attackers should not be able to infect the system with adverts that have not been distributed from a valid Ad-Dealer.

- Protection against impersonation attacks: Attackers should not be able to impersonate the identity of a user or an Ad-Dealer.

- Resource conservation: As mobile devices offer limited resources, the system needs to be conservative in the consumption of memory and battery power.
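The criterion on fake or harmful content can be illustrated with a minimal sketch, under stated assumptions. The listing above does not say which mechanism the system uses, so the code below swaps in an HMAC tag from the Python standard library as a stand-in for whatever authentication the Ad-Dealer applies; a real design would more plausibly rely on public-key signatures so that users never hold a dealer secret. The names `DEALER_KEY`, `tag_advert`, and `accept_advert` are hypothetical and exist only for this illustration.

```python
import hashlib
import hmac

# Hypothetical key for illustration only; the actual key management
# of the system is not described in this listing.
DEALER_KEY = b"demo-key-held-by-a-valid-ad-dealer"


def tag_advert(advert: bytes, key: bytes = DEALER_KEY) -> bytes:
    """Return the authentication tag a valid Ad-Dealer attaches to an advert."""
    return hmac.new(key, advert, hashlib.sha256).digest()


def accept_advert(advert: bytes, tag: bytes, key: bytes = DEALER_KEY) -> bool:
    """Accept an advert only if its tag verifies (constant-time comparison)."""
    return hmac.compare_digest(tag_advert(advert, key), tag)


genuine = b"20% off coffee"
tag = tag_advert(genuine)

print(accept_advert(genuine, tag))               # True: tag matches the advert
print(accept_advert(b"malicious payload", tag))  # False: content was altered
```

An advert tagged under any other key is rejected in the same way, which is the property the criterion asks for: content that did not originate from a valid Ad-Dealer does not enter the system.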

5. Detailed System Description

5.1. User Joins the System

5.1.1. Construction of UIP

5.1.2. Creation of ARMs

5.2. Two Users Meet

5.2.1. Registration of New Contacts

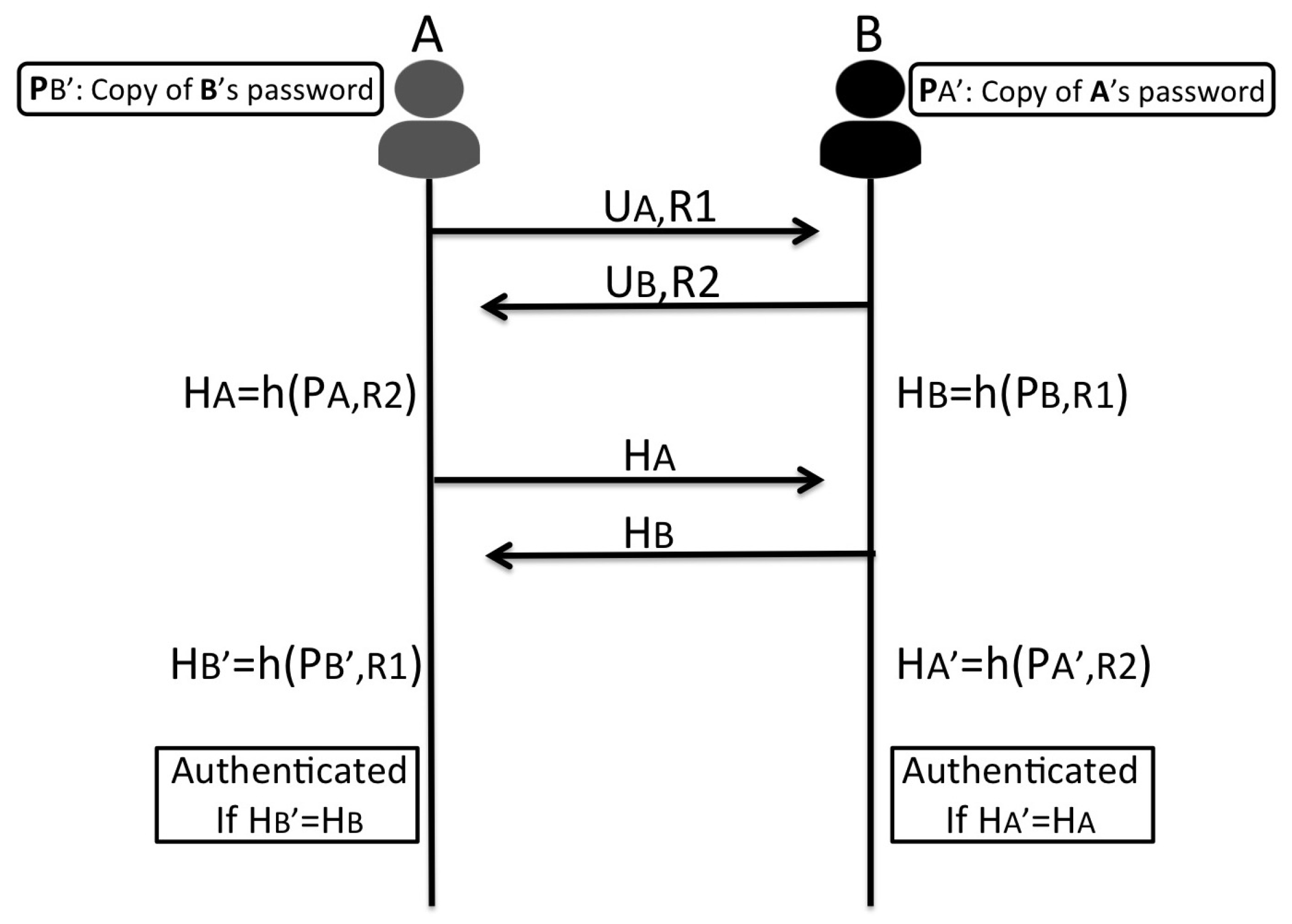

5.2.2. User Authentication and Logging

5.3. Requester Meets an Agent

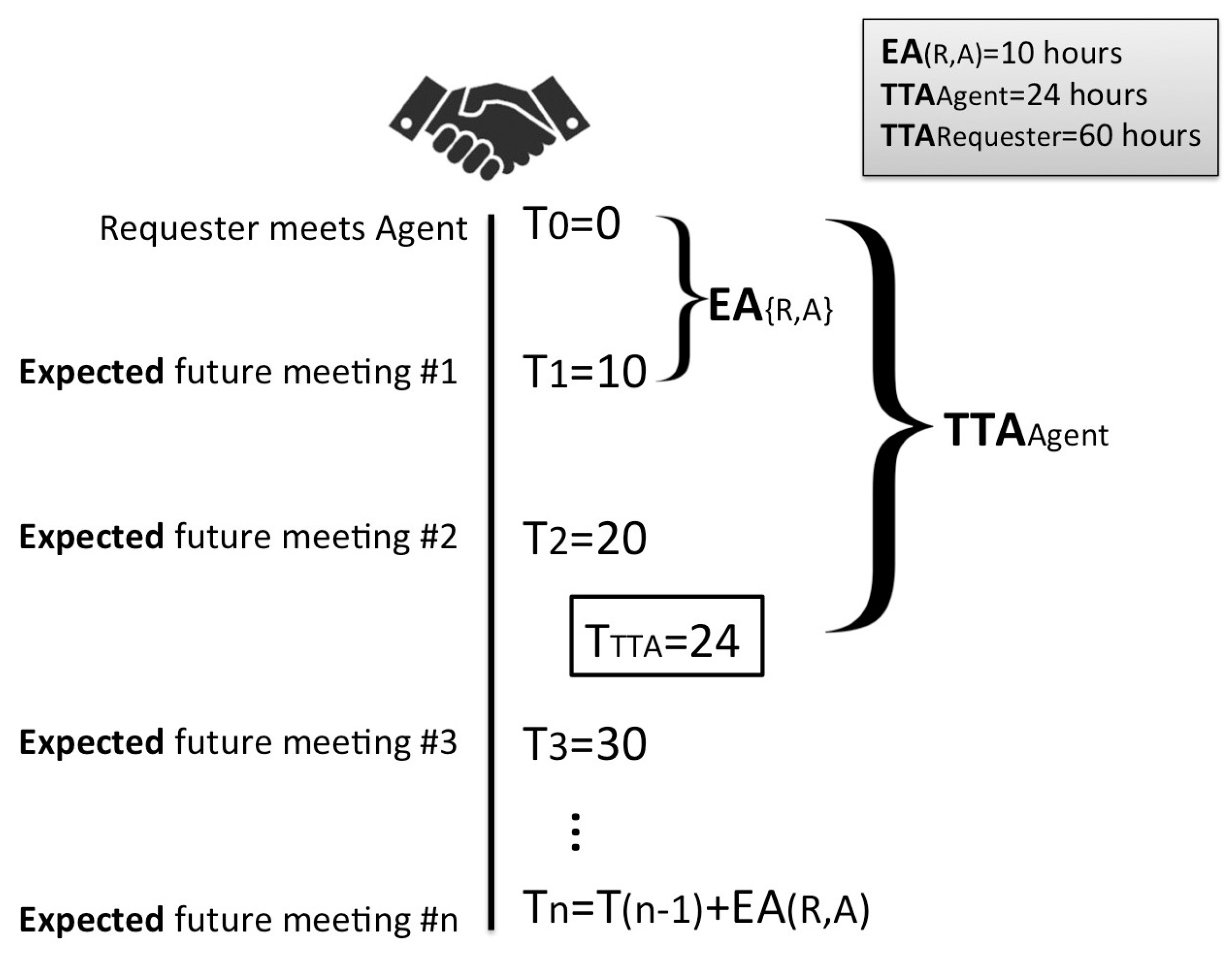

5.3.1. Calculation of Agent’s DT

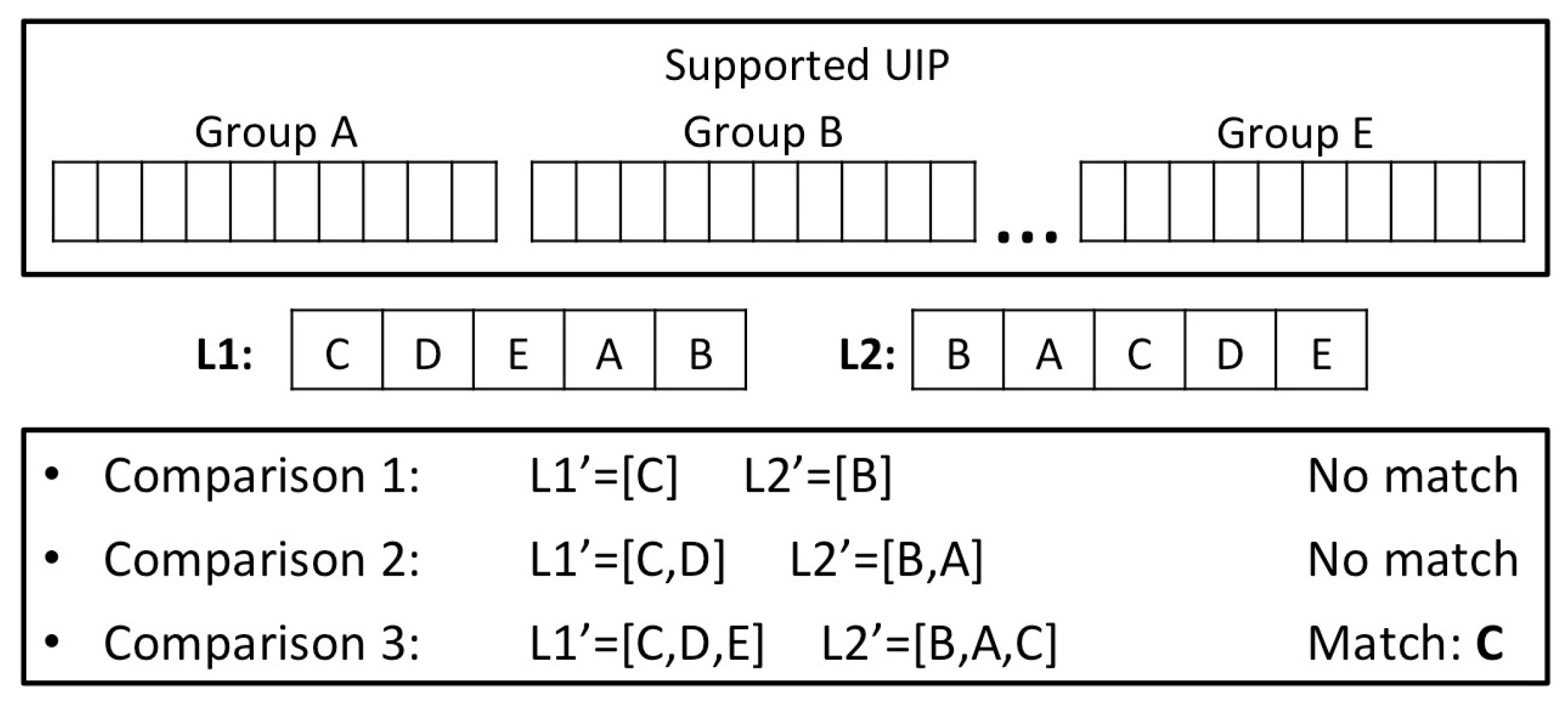

5.3.2. UIP Comparison of Requester and Agent

5.3.3. Transmission of ARMs

5.4. User Meets Ad-Dealer

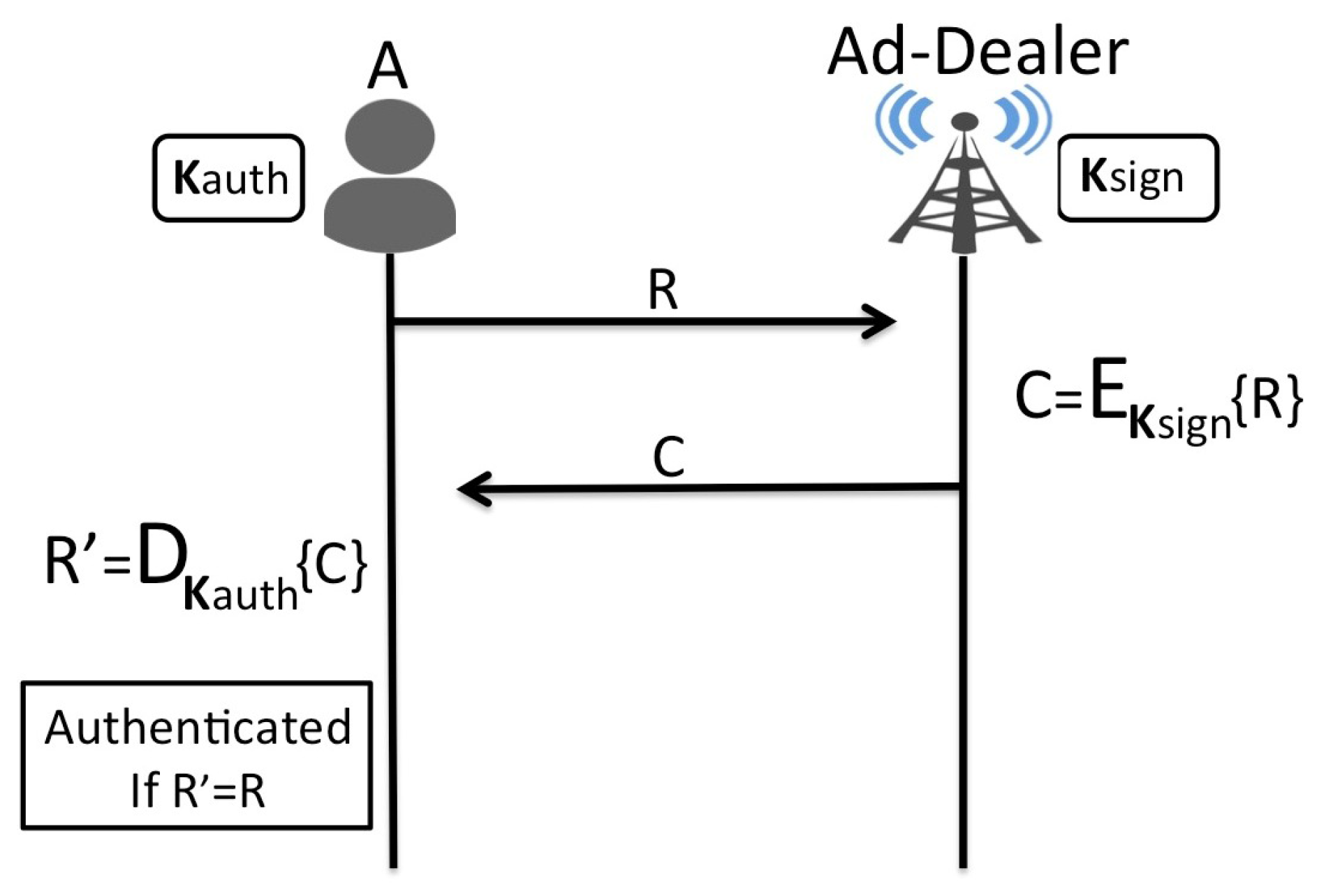

5.4.1. Ad-Dealer Authentication and Logging

5.4.2. Construction of Bundles and ADMs

5.5. User Obtains Ads

5.5.1. Advert Collection from Ad-Dealer

5.5.2. Advert Reception through an Agent

6. Evaluation

6.1. User Privacy against Other Parties (Broker and Ad-Dealers)

6.2. User Privacy against Other Users

6.2.1. Requester and Agent Compare UIPs

6.2.2. Agent Receives ARMs from Requester

6.2.3. Agent Collects Ads

6.2.4. Agent Delivers Ads

6.3. Protection from Malicious Content

6.4. Protection from Impersonation Attacks

- Victim is a user and the Attacker impersonates one of his/her Contacts (that is not an Agent or a Requester).

- Victim is a Requester and the Attacker impersonates his/her Agent.

- Victim is an Agent and the Attacker impersonates his/her Requester.

- Victim is an Agent and the Attacker impersonates the Ad-Dealer.

6.5. Resource Conservation

7. Conclusions and Future Work

Author Contributions

Conflicts of Interest

| | Trusted Proxy | Pool of Ads | Direct Download |
|---|---|---|---|
| Privacy vs. Ad-Network | × | × | + |
| Privacy vs. other users | − | | |
| Security vs. attacker | + | + | − |
| Targeting effectiveness | + | − | + |
| Practicality/usability | + | − | − |
| Resource conservation | + | − | × |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Mamais, S.S.; Theodorakopoulos, G. Private and Secure Distribution of Targeted Advertisements to Mobile Phones. Future Internet 2017, 9, 16. https://doi.org/10.3390/fi9020016